WordCount Source Code Analysis

Environment:

- Hadoop 3.1.3

Workflow:

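- The input files are divided into splits. Because the file here is small, the whole file becomes a single split at run time; the figure below simulates two splits. Each split is then read line by line into <key, value> pairs. The MapReduce framework does this automatically (through the default TextInputFormat): the key is the offset of the line's start within the file, counted with the line-break characters included, and the value is the text of the line.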

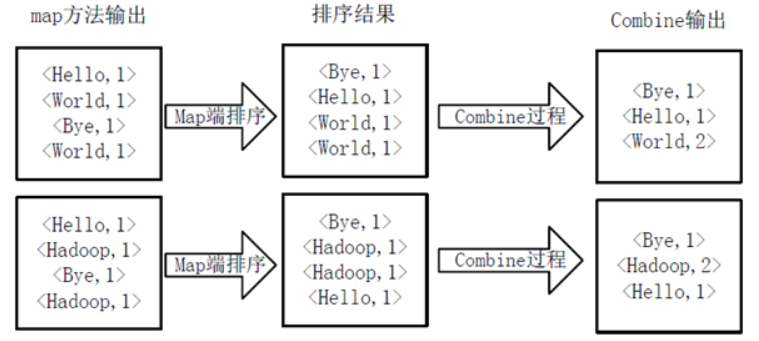

- After the split is read, the pairs are handed to the user-defined map method, which produces new key-value pairs.

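- Once the map method has emitted its key-value pairs, the map side sorts them by key and runs the combine step, which sums the values that share a key. The result is the final output of that map task.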

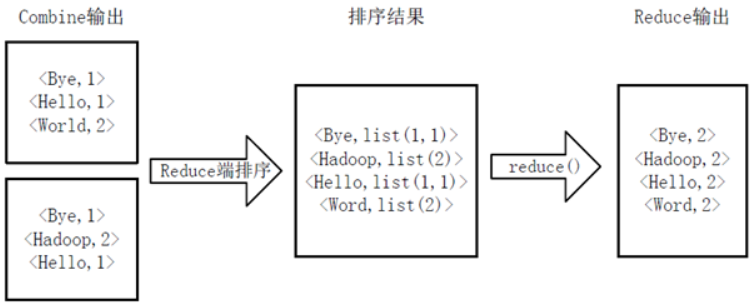

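- The Reducer first sorts the data it receives from the Mappers, then passes it to the user-defined reduce method, which produces new key-value pairs as the final output of the program.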
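The four steps above can be sketched in plain Java without a cluster. This is only an illustrative simulation, not Hadoop code: the class name `WordCountFlowSketch`, its methods, and the two sample splits are all assumptions made for this example, and a `TreeMap` stands in for the framework's sort.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical, Hadoop-free simulation of the WordCount data flow.
public class WordCountFlowSketch {

    // Step 1: turn a split's text into <byte offset, line> records.
    static Map<Long, String> readRecords(String splitText) {
        Map<Long, String> records = new TreeMap<>();
        long offset = 0;
        for (String line : splitText.split("\n")) {
            records.put(offset, line);
            // Advance past the line's bytes plus the one-byte '\n' terminator.
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return records;
    }

    // Steps 2 and 3: map each line to <word, 1>, then sort by key and sum per split.
    static Map<String, Integer> mapAndCombine(Map<Long, String> records) {
        Map<String, Integer> combined = new TreeMap<>(); // TreeMap keeps keys sorted
        for (String line : records.values()) {
            StringTokenizer itr = new StringTokenizer(line);
            while (itr.hasMoreTokens()) {
                combined.merge(itr.nextToken(), 1, Integer::sum);
            }
        }
        return combined;
    }

    // Step 4: merge the outputs of all map tasks and sum the values per key.
    static Map<String, Integer> reduce(List<Map<String, Integer>> mapOutputs) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map<String, Integer> output : mapOutputs) {
            output.forEach((word, count) -> result.merge(word, count, Integer::sum));
        }
        return result;
    }

    public static void main(String[] args) {
        // Assumed sample input: two splits of two lines each.
        String split1 = "Hello World\nBye World\n";
        String split2 = "Hello Hadoop\nBye Hadoop\n";

        System.out.println(readRecords(split1)); // {0=Hello World, 12=Bye World}

        Map<String, Integer> out1 = mapAndCombine(readRecords(split1));
        Map<String, Integer> out2 = mapAndCombine(readRecords(split2));
        System.out.println(out1); // {Bye=1, Hello=1, World=2}
        System.out.println(out2); // {Bye=1, Hadoop=2, Hello=1}

        System.out.println(reduce(Arrays.asList(out1, out2)));
        // {Bye=2, Hadoop=2, Hello=2, World=2}
    }
}
```

The second record's key is 12 rather than 11 because the offset also counts the newline that ends the first line.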

package org.apache.hadoop.examples;

import org.apache.hadoop.conf.*;

import org.apache.hadoop.util.*;

import org.apache.hadoop.io.*;

import org.apache.hadoop.fs.*;

import org.apache.hadoop.mapreduce.lib.input.*;

import org.apache.hadoop.mapreduce.lib.output.*;

import java.io.*;

import org.apache.hadoop.mapreduce.*;

import java.util.*;

public class WordCount

{

public static void main(final String[] args) throws Exception {

// 1. Load the configuration

final Configuration conf = new Configuration();

final String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

// 2. Check the remaining arguments; with fewer than two, print the usage message and exit

if (otherArgs.length < 2) {

System.err.println("Usage: wordcount <in> [<in>...] <out>");

System.exit(2);

}

// 3. Create a job and give it a name

final Job job = Job.getInstance(conf, "word count");

// 4. Set the class used to locate the jar that contains the job

job.setJarByClass(WordCount.class);

// 5. Set the mapper, combiner, and reducer classes

job.setMapperClass(TokenizerMapper.class);

job.setCombinerClass(IntSumReducer.class);

job.setReducerClass(IntSumReducer.class);

// 6. Set the key and value classes of the job output

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(IntWritable.class);

// 7. Read the arguments: every argument except the last is an input path

for (int i = 0; i < otherArgs.length - 1; ++i) {

FileInputFormat.addInputPath(job, new Path(otherArgs[i]));

}

// 8. Read the arguments: the last argument is the output path

FileOutputFormat.setOutputPath(job, new Path(otherArgs[otherArgs.length - 1]));

// 9. Submit the job, wait for it to finish, and exit with 0 on success or 1 on failure

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

/**

* TokenizerMapper extends the Mapper class

* Mapper<Object (input key type), Text (input value type), Text (output key type), IntWritable (output value type)>

*/

public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable>

{

// Output value

private static final IntWritable one;

// Output key

private Text word;

// The constructor initializes the output key

public TokenizerMapper() {

this.word = new Text();

}

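// Override map: key is the offset of the line, value is the text of the line

public void map(final Object key, final Text value, final Context context) throws IOException, InterruptedException {

// One map task handles one split; the framework calls map() once for every line of that split

// value is the text of one line; StringTokenizer cuts it into words on its default delimiters " \t\n\r\f": space, tab, newline, carriage return, and form feed

final StringTokenizer itr = new StringTokenizer(value.toString());

// Loop over the tokens

while (itr.hasMoreTokens()) {

// Use each word as the output key

this.word.set(itr.nextToken());

// Emit the key together with the value, which is always 1

context.write(this.word, one);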

}

}

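// Every occurrence of a word counts as 1; a word that repeats within the map phase is still emitted with 1, so <word, 1> goes straight into the map output, which the framework buffers and spills to local disk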

static {

one = new IntWritable(1);

}

}

/**

* IntSumReducer extends the Reducer class

* The output types of map are the input types of reduce

* Reducer<Text (input key type), IntWritable (input value type), Text (output key type), IntWritable (output value type)>

*/

public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable>

{

// Output result

private IntWritable result;

// The constructor initializes the final output value

public IntSumReducer() {

this.result = new IntWritable();

}

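// key is a word; values is the list<V> of <key, list<V>>, built after the reducer collects data from multiple mappers, sorts it, and groups the values that share a key

// In other words, sorting and grouping are done by the framework on the map and reduce sides; we only need to handle the sorted, grouped result

public void reduce(final Text key, final Iterable<IntWritable> values, final Context context) throws IOException, InterruptedException {

// In the mapper for a split, the combiner merges the output so that repeated words collapse into one pair, and the pairs are assigned to reducers by a hash of the key

// The reducer sorts what it receives, groups it into lists, and then calls this user-defined method

// Running total

int sum = 0;

// Iterate over values

for (final IntWritable val : values) {

// Accumulate

sum += val.get();

}

// Set the output value

this.result.set(sum);

// Emit the key together with result

context.write(key, this.result);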

}

}

}
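The comments in reduce mention that key-value pairs are assigned to reducers by a hash function. A minimal sketch of that idea follows, assuming the formula of Hadoop's default `HashPartitioner`, `(key.hashCode() & Integer.MAX_VALUE) % numReduceTasks`. The class `HashPartitionSketch` and its methods are hypothetical names for this illustration, and `String.hashCode()` is used in place of `Text.hashCode()`, so the partition numbers printed here need not match what a real job computes.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, Hadoop-free illustration of hash partitioning.
public class HashPartitionSketch {

    // Clear the sign bit so the result is never negative, then take the remainder.
    static int getPartition(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // Group <word, count> pairs by the reducer index they would be sent to.
    static Map<Integer, List<String>> assign(Map<String, Integer> pairs, int numReduceTasks) {
        Map<Integer, List<String>> byReducer = new TreeMap<>();
        for (Map.Entry<String, Integer> e : pairs.entrySet()) {
            int partition = getPartition(e.getKey(), numReduceTasks);
            byReducer.computeIfAbsent(partition, k -> new ArrayList<>())
                     .add(e.getKey() + "=" + e.getValue());
        }
        return byReducer;
    }

    public static void main(String[] args) {
        // Assumed sample map output after the combine step.
        Map<String, Integer> pairs = new TreeMap<>();
        pairs.put("Bye", 1);
        pairs.put("Hello", 1);
        pairs.put("World", 2);

        // With two reducers, each word lands in partition 0 or 1.
        System.out.println(assign(pairs, 2));
    }
}
```

Because the partition depends only on the key, every pair for the same word reaches the same reducer no matter which mapper produced it, and that is what lets reduce compute a complete total per word.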
