Choosing initial weights with √(2/n) when a CNN uses the ReLU activation function

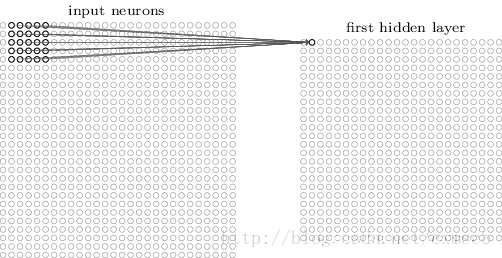

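While studying 《深度学习入门(基于Python的理论与实现)》 (Introduction to Deep Learning: Theory and Implementation with Python), I built DeepConvNet, which uses the ReLU activation function. With ReLU, the recommended initial weights are Gaussian with standard deviation √(2/n), where n is the average number of previous-layer neurons that each neuron in a layer is connected to.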
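
To see why the √(2/n) scale matters, here is a minimal sketch that pushes random data through a stack of fully connected ReLU layers and compares the final activation spread under √(2/n) against a small fixed scale. The function name `final_activation_std`, the layer width, the depth, and the comparison value 0.01 are illustrative assumptions, not taken from the book's code.

```python
import numpy as np


def final_activation_std(scale_fn, n=256, depth=10, batch=1000, seed=0):
    """Return the std of activations after `depth` ReLU layers of width n.

    scale_fn(n) gives the standard deviation used to draw each weight matrix.
    All sizes here are arbitrary illustration values.
    """
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((batch, n))
    for _ in range(depth):
        w = scale_fn(n) * rng.standard_normal((n, n))
        x = np.maximum(0, x @ w)  # affine transform followed by ReLU
    return float(x.std())


he_std = final_activation_std(lambda n: np.sqrt(2.0 / n))  # sqrt(2/n) scale
small_std = final_activation_std(lambda n: 0.01)           # fixed small scale

print("sqrt(2/n):", he_std)
print("0.01     :", small_std)
```

With √(2/n) the activations should stay at roughly the same order of magnitude from layer to layer, while with the small fixed scale they shrink toward zero as depth grows.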

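Basis: the connectivity implied by the filter's convolution operation.

Because weights (filters) are shared, each output value is connected, through the filter (convolution kernel), to only part of the input neurons. The number of connections is the number of input channels (the previous layer's filter_num) * filter_size * filter_size.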
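
As a rough sketch, the connection count n for each convolution layer can be computed from the filter settings, reading filter_num as the previous layer's filter count (its output channels) and applying filter_size to both height and width. The helper `conv_fan_in` is a hypothetical name introduced here; the channel counts and the 3x3 filter size come from the DeepConvNet parameters used in this post.

```python
import numpy as np


def conv_fan_in(in_channels, filter_size):
    """Inputs feeding one output value of a conv layer (hypothetical helper)."""
    return in_channels * filter_size * filter_size


input_channels = 1                      # input_dim[0] for 1 x 28 x 28 images
filter_nums = [16, 16, 32, 32, 64, 64]  # filter_num of the six conv layers
filter_size = 3

fan_ins = []
pre_channel_num = input_channels
for filter_num in filter_nums:
    fan_ins.append(conv_fan_in(pre_channel_num, filter_size))
    pre_channel_num = filter_num  # this layer's filters become the next layer's channels

scales = np.sqrt(2.0 / np.array(fan_ins))  # sqrt(2/n) for each conv layer

print(fan_ins)  # [9, 144, 144, 288, 288, 576]
print(scales)
```

These six values match the first six entries of pre_node_nums in the initialization code.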

    import numpy as np


    class DeepConvNet:
        """
        Network structure:
            conv - relu - conv - relu - pool -
            conv - relu - conv - relu - pool -
            conv - relu - conv - relu - pool -
            affine - relu - dropout - affine - dropout - softmax
        """

        def __init__(self, input_dim=(1, 28, 28),
                     conv_param_1={'filter_num': 16, 'filter_size': 3, 'pad': 1, 'stride': 1},
                     conv_param_2={'filter_num': 16, 'filter_size': 3, 'pad': 1, 'stride': 1},
                     conv_param_3={'filter_num': 32, 'filter_size': 3, 'pad': 1, 'stride': 1},
                     conv_param_4={'filter_num': 32, 'filter_size': 3, 'pad': 2, 'stride': 1},
                     conv_param_5={'filter_num': 64, 'filter_size': 3, 'pad': 1, 'stride': 1},
                     conv_param_6={'filter_num': 64, 'filter_size': 3, 'pad': 1, 'stride': 1},
                     hidden_size=50, output_size=10):
            # Initialize the weights ===========
            # n: the average number of previous-layer neurons that each neuron
            # in a layer is connected to.
            # Conv layers: because filters are shared, each output value sees only
            # the inputs under one filter window, so
            # n = input channels * filter_size * filter_size.
            # Affine layers: n is the size of the layer's input.
            pre_node_nums = np.array(
                [1 * 3 * 3, 16 * 3 * 3, 16 * 3 * 3, 32 * 3 * 3, 32 * 3 * 3, 64 * 3 * 3, 64 * 4 * 4, hidden_size])
            weight_init_scales = np.sqrt(2.0 / pre_node_nums)  # sqrt(2/n), the scale recommended with ReLU

            self.params = {}
            pre_channel_num = input_dim[0]  # start from the channel count of the input data
            for idx, conv_param in enumerate(
                    [conv_param_1, conv_param_2, conv_param_3, conv_param_4, conv_param_5, conv_param_6]):
                self.params['W' + str(idx + 1)] = weight_init_scales[idx] * \
                    np.random.randn(conv_param['filter_num'], pre_channel_num,
                                    conv_param['filter_size'], conv_param['filter_size'])
                self.params['b' + str(idx + 1)] = np.zeros(conv_param['filter_num'])
                pre_channel_num = conv_param['filter_num']  # filter count becomes the next layer's input channel count
            self.params['W7'] = weight_init_scales[6] * np.random.randn(64 * 4 * 4, hidden_size)  # Affine1 weights
            self.params['b7'] = np.zeros(hidden_size)
            self.params['W8'] = weight_init_scales[7] * np.random.randn(hidden_size, output_size)  # Affine2 weights
            self.params['b8'] = np.zeros(output_size)
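
The value 64 * 4 * 4 used for the first affine layer can be checked by tracing the feature-map size through the network. The sketch below assumes the standard output-size formula (size + 2 * pad - filter_size) / stride + 1 and assumes each pool layer is a 2x2 pooling with stride 2, which this post does not state explicitly; `conv_output_size` is a hypothetical helper.

```python
def conv_output_size(size, filter_size, pad, stride):
    """Spatial size after a convolution (hypothetical helper)."""
    return (size + 2 * pad - filter_size) // stride + 1


size = 28                  # input images are 28 x 28
pads = [1, 1, 1, 2, 1, 1]  # 'pad' of conv_param_1 .. conv_param_6
for layer_idx, pad in enumerate(pads, start=1):
    size = conv_output_size(size, filter_size=3, pad=pad, stride=1)
    if layer_idx % 2 == 0:
        size //= 2         # assumed 2x2 pooling with stride 2 after every second conv

affine_fan_in = 64 * size * size  # 64 channels leave the last conv layer

print(size)           # 4
print(affine_fan_in)  # 1024
```

Under these assumptions the size goes 28, 28, 14, 14, 16, 8, 8, 8, 4, so the affine layer receives 64 * 4 * 4 = 1024 inputs. The pad of 2 in conv_param_4 is what turns 14 into 16 and keeps the sizes even for pooling.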
