Problem details

While developing and testing a recommender system with Spark MLlib, the following error occurred:

Exception in thread "main" java.lang.NoSuchMethodError: com.google.common.base.Preconditions.checkArgument(ZLjava/lang/String;Ljava/lang/Object;)V
    at org.apache.hadoop.conf.Configuration.set(Configuration.java:1357)
    at org.apache.hadoop.conf.Configuration.set(Configuration.java:1338)
    at org.apache.spark.deploy.SparkHadoopUtil$.org$apache$spark$deploy$SparkHadoopUtil$$appendS3AndSparkHadoopHiveConfigurations(SparkHadoopUtil.scala:456)
    at org.apache.spark.deploy.SparkHadoopUtil$.newConfiguration(SparkHadoopUtil.scala:427)
    at org.apache.spark.deploy.SparkHadoopUtil.newConfiguration(SparkHadoopUtil.scala:122)
    at org.apache.spark.deploy.SparkHadoopUtil.<init>(SparkHadoopUtil.scala:49)
    at org.apache.spark.deploy.SparkHadoopUtil$.instance$lzycompute(SparkHadoopUtil.scala:397)
    at org.apache.spark.deploy.SparkHadoopUtil$.instance(SparkHadoopUtil.scala:397)
    at org.apache.spark.deploy.SparkHadoopUtil$.get(SparkHadoopUtil.scala:418)
    at org.apache.spark.SecurityManager.<init>(SecurityManager.scala:95)
    at org.apache.spark.SparkEnv$.create(SparkEnv.scala:252)
    at org.apache.spark.SparkEnv$.createDriverEnv(SparkEnv.scala:189)
    at org.apache.spark.SparkContext.createSparkEnv(SparkContext.scala:267)
    at org.apache.spark.SparkContext.<init>(SparkContext.scala:442)
    at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2555)
    at cn.itcast.tags.ml.rs.rdd.SparkAlsRmdMovie$.main(SparkAlsRmdMovie.scala:26)
    at cn.itcast.tags.ml.rs.rdd.SparkAlsRmdMovie.main(SparkAlsRmdMovie.scala)
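The part after the method name in the error, `(ZLjava/lang/String;Ljava/lang/Object;)V`, is a JVM method descriptor (JVM Specification, section 4.3): `Z` is `boolean`, `Ljava/lang/String;` is `String`, `Ljava/lang/Object;` is `Object`, and the trailing `V` is a `void` return type. So the method the JVM could not find is `checkArgument(boolean, String, Object)`. The minimal Java sketch below decodes such descriptors; `DescriptorDecoder`, `decodeType` and `decodeMethod` are hypothetical helpers written for illustration (not part of Spark, Hadoop or Guava), and it assumes a well-formed method descriptor.

```java
import java.util.ArrayList;
import java.util.List;

public class DescriptorDecoder {

    // Decodes one field descriptor starting at index i of d (e.g. "Z", "[I",
    // "Ljava/lang/String;"), appends the readable type to out, and returns the
    // index just past the consumed descriptor.
    static int decodeType(String d, int i, StringBuilder out) {
        int dims = 0;
        while (d.charAt(i) == '[') { // each '[' adds one array dimension
            dims++;
            i++;
        }
        String name;
        switch (d.charAt(i)) {
            case 'Z': name = "boolean"; i++; break;
            case 'B': name = "byte"; i++; break;
            case 'C': name = "char"; i++; break;
            case 'S': name = "short"; i++; break;
            case 'I': name = "int"; i++; break;
            case 'J': name = "long"; i++; break;
            case 'F': name = "float"; i++; break;
            case 'D': name = "double"; i++; break;
            case 'V': name = "void"; i++; break; // only legal as a return type; not enforced here
            case 'L': { // object type: L<binary/class/name>;
                int end = d.indexOf(';', i);
                if (end < 0) throw new IllegalArgumentException("unterminated class name: " + d);
                name = d.substring(i + 1, end).replace('/', '.');
                i = end + 1;
                break;
            }
            default:
                throw new IllegalArgumentException("unexpected '" + d.charAt(i) + "' in " + d);
        }
        out.append(name);
        for (int k = 0; k < dims; k++) out.append("[]");
        return i;
    }

    // Turns a method name plus descriptor into a Java-like signature, e.g.
    // ("checkArgument", "(ZLjava/lang/String;Ljava/lang/Object;)V")
    // -> "void checkArgument(boolean, java.lang.String, java.lang.Object)".
    public static String decodeMethod(String name, String desc) {
        if (desc.isEmpty() || desc.charAt(0) != '(') {
            throw new IllegalArgumentException("not a method descriptor: " + desc);
        }
        List<String> params = new ArrayList<>();
        int i = 1;
        while (desc.charAt(i) != ')') {
            StringBuilder p = new StringBuilder();
            i = decodeType(desc, i, p);
            params.add(p.toString());
        }
        StringBuilder ret = new StringBuilder();
        decodeType(desc, i + 1, ret); // the return type follows ')'
        return ret + " " + name + "(" + String.join(", ", params) + ")";
    }

    public static void main(String[] args) {
        // Descriptor copied from the NoSuchMethodError above.
        System.out.println(decodeMethod("checkArgument", "(ZLjava/lang/String;Ljava/lang/Object;)V"));
    }
}
```

Running `main` prints `void checkArgument(boolean, java.lang.String, java.lang.Object)`, which is the `Preconditions` overload that `org.apache.hadoop.conf.Configuration.set` expects to find on the classpath.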

The project's Maven dependencies are:

<properties>
    <scala.version>2.12</scala.version>
    <scala.binary.version>2.12</scala.binary.version>
    <spark.version>3.0.0</spark.version>
    <hadoop.version>3.1.3</hadoop.version>
    <hbase.version>2.1.10</hbase.version>
    <netlib.java.version>1.1.2</netlib.java.version>
</properties>

<dependencies>
    <dependency>
        <groupId>org.scala-lang</groupId>
        <artifactId>scala-library</artifactId>
        <version>2.12.10</version>
    </dependency>
    <!-- Spark Core dependency -->
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
    </dependency>
    <!-- Spark SQL dependency -->
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-sql_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
    </dependency>
    <!-- Linear algebra library (netlib-java) used by Spark -->
    <dependency>
        <groupId>com.github.fommil.netlib</groupId>
        <artifactId>all</artifactId>
        <version>${netlib.java.version}</version>
        <type>pom</type>
    </dependency>
    <!-- https://mvnrepository.com/artifact/org.jblas/jblas -->
    <dependency>
        <groupId>org.jblas</groupId>
        <artifactId>jblas</artifactId>
        <version>1.2.3</version>
    </dependency>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-mllib_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
    </dependency>
    <!-- Hadoop Client dependency -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>${hadoop.version}</version>
    </dependency>
</dependencies>

Solution

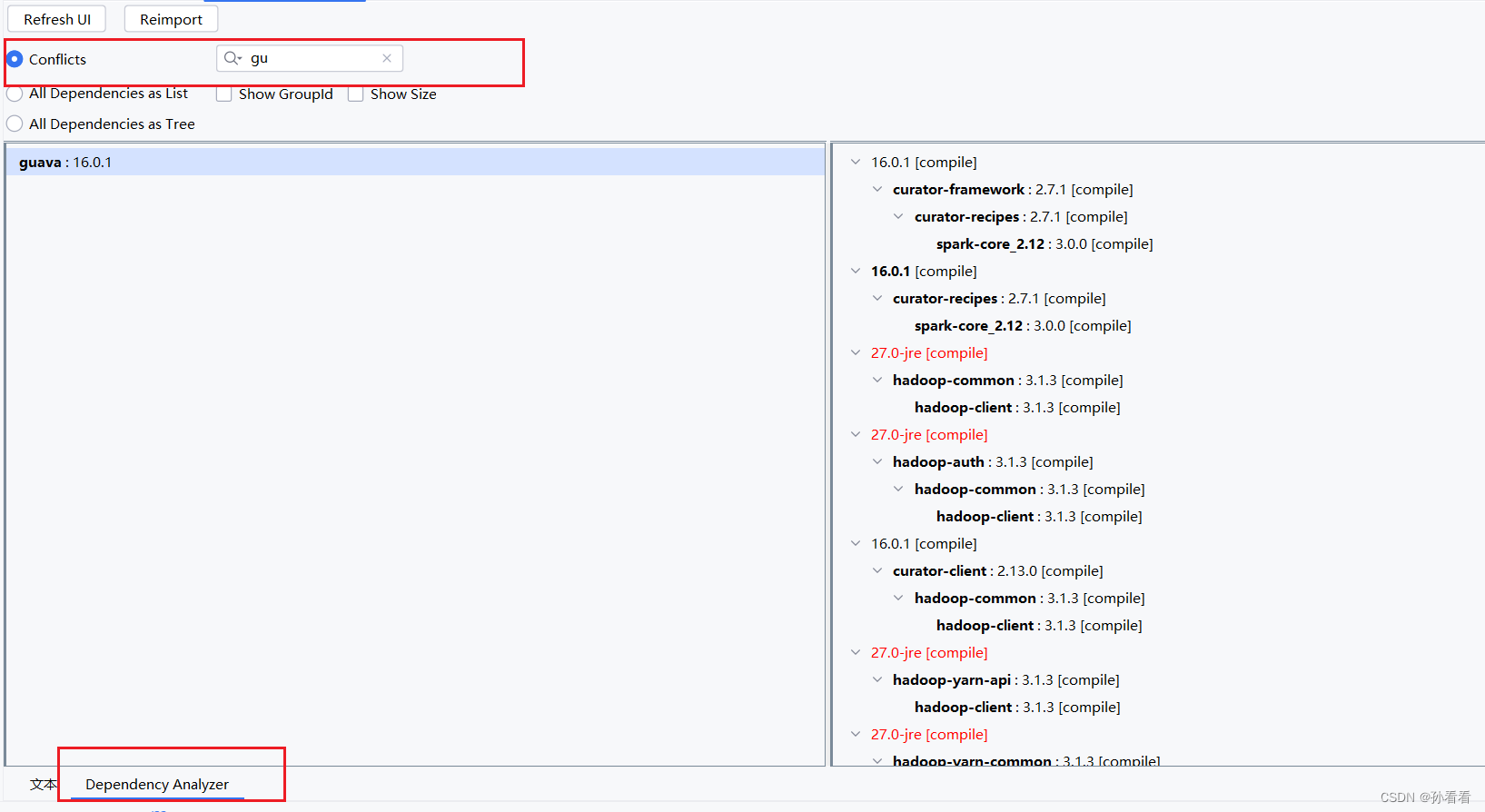

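Analyzing the dependencies with a Maven dependency-analysis tool shows a version conflict on Guava (com.google.guava:guava). spark-core pulls in an old Guava (Spark 3.0.0 uses Guava 14.0.1), and Maven resolves the project to that version instead of the newer Guava that Hadoop 3.1.3 needs. Hadoop's Configuration.set calls the Preconditions.checkArgument(boolean, String, Object) overload, which Guava 14.0.1 does not have, hence the NoSuchMethodError. Excluding the conflicting Guava artifact in the Maven dependencies fixes the problem.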

The modified Maven configuration, which excludes the Guava dependency that spark-core brings in transitively:

<properties>
    <scala.version>2.12</scala.version>
    <scala.binary.version>2.12</scala.binary.version>
    <spark.version>3.0.0</spark.version>
    <hadoop.version>3.1.3</hadoop.version>
    <hbase.version>2.1.10</hbase.version>
    <netlib.java.version>1.1.2</netlib.java.version>
</properties>

<dependencies>
    <dependency>
        <groupId>org.scala-lang</groupId>
        <artifactId>scala-library</artifactId>
        <version>2.12.10</version>
    </dependency>
    <!-- Spark Core dependency -->
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
        <exclusions>
            <!-- Exclude the old Guava pulled in by spark-core so Hadoop's newer Guava is used -->
            <exclusion>
                <groupId>com.google.guava</groupId>
                <artifactId>guava</artifactId>
            </exclusion>
        </exclusions>
    </dependency>
    <!-- Spark SQL dependency -->
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-sql_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
    </dependency>
    <!-- Linear algebra library (netlib-java) used by Spark -->
    <dependency>
        <groupId>com.github.fommil.netlib</groupId>
        <artifactId>all</artifactId>
        <version>${netlib.java.version}</version>
        <type>pom</type>
    </dependency>
    <!-- https://mvnrepository.com/artifact/org.jblas/jblas -->
    <dependency>
        <groupId>org.jblas</groupId>
        <artifactId>jblas</artifactId>
        <version>1.2.3</version>
    </dependency>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-mllib_${scala.binary.version}</artifactId>
        <version>${spark.version}</version>
    </dependency>
    <!-- Hadoop Client dependency -->
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>${hadoop.version}</version>
    </dependency>
</dependencies>
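To check which Guava jar the driver actually loads, before or after the exclusion, a small reflection-based check like the one below can be run with the same classpath as the Spark job (for example, from the same Maven module in the IDE). This is a minimal sketch: `GuavaCheck`, `hasMethod` and `locationOf` are hypothetical names, and it relies only on standard JDK reflection (`Class.forName`, `Class.getMethod`, `ProtectionDomain.getCodeSource`). It looks for the exact overload named in the NoSuchMethodError.

```java
import java.net.URL;
import java.security.CodeSource;

public class GuavaCheck {

    // Loads a class without running its static initializers, using this class's loader.
    private static Class<?> load(String className) throws ClassNotFoundException {
        return Class.forName(className, false, GuavaCheck.class.getClassLoader());
    }

    // True if the class is on the classpath and has a public method with exactly these parameter types.
    public static boolean hasMethod(String className, String methodName, Class<?>... paramTypes) {
        try {
            load(className).getMethod(methodName, paramTypes);
            return true;
        } catch (ClassNotFoundException | NoSuchMethodException e) {
            return false;
        }
    }

    // Jar or directory the class was loaded from, which reveals which Guava version won.
    public static String locationOf(String className) {
        try {
            CodeSource src = load(className).getProtectionDomain().getCodeSource();
            URL loc = (src == null) ? null : src.getLocation();
            return (loc == null) ? "(unknown location)" : loc.toString();
        } catch (ClassNotFoundException e) {
            return "(not on classpath)";
        }
    }

    public static void main(String[] args) {
        String preconditions = "com.google.common.base.Preconditions";
        System.out.println("Preconditions loaded from: " + locationOf(preconditions));
        // The overload named in the NoSuchMethodError: checkArgument(boolean, String, Object)
        boolean present = hasMethod(preconditions, "checkArgument",
                boolean.class, String.class, Object.class);
        System.out.println("checkArgument(boolean, String, Object) present: " + present);
    }
}
```

With the conflict in place, the printed path is expected to point at the older Guava jar and the overload to be reported as missing; after the exclusion, the path should point at the Guava version that hadoop-client brings in and the overload should be present.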
