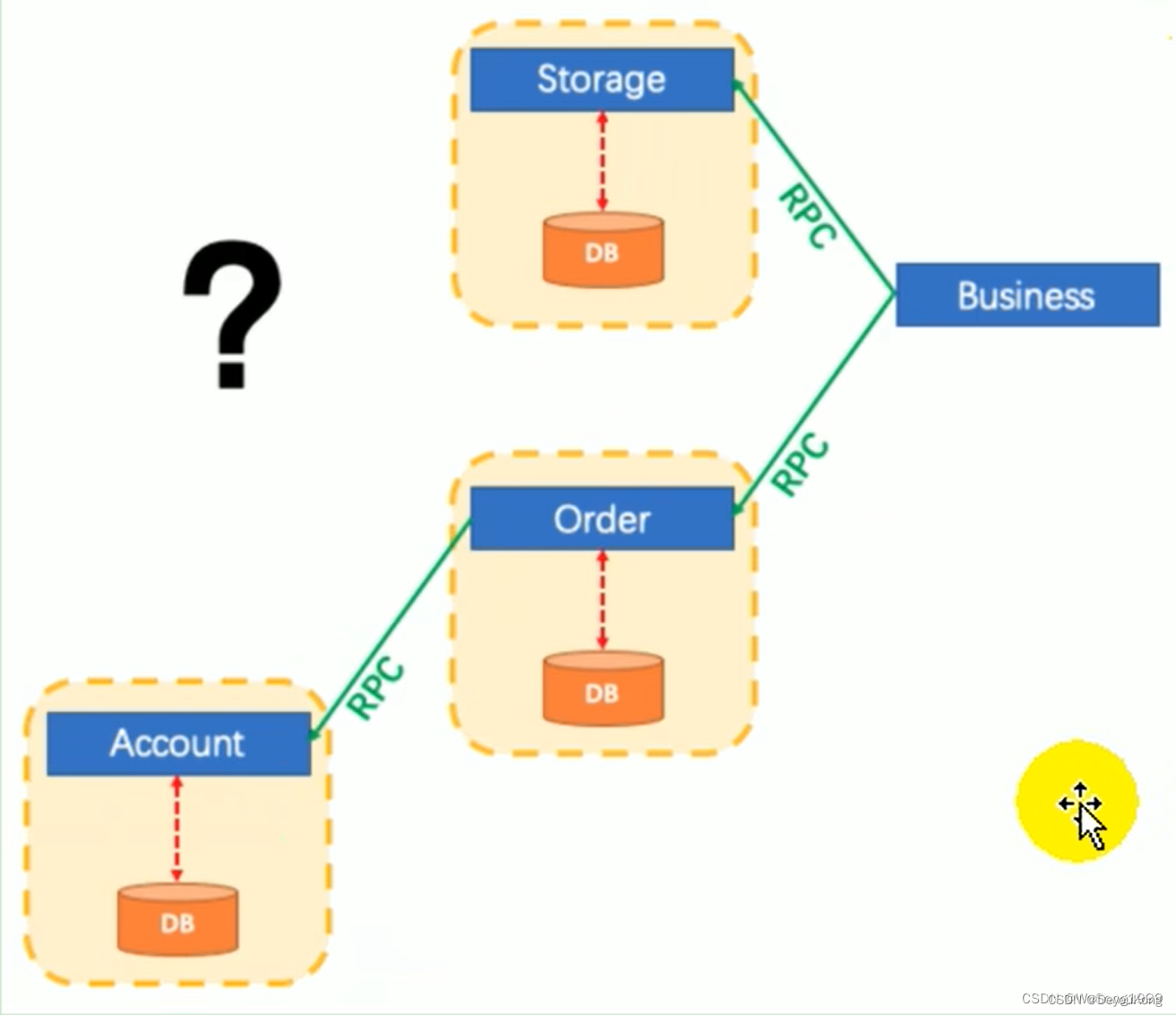

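When one request passes through several services and any one of them throws an exception, every service's database changes must be rolled back; otherwise the data is left inconsistent.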

Spring Cloud Alibaba Seata distributed transactions

Controlling distributed transactions with Seata

Steps for using Seata

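| Step | Action |
|---|---|
| Step 1 | Edit the registry.conf file under the seata directory, then start seata-server |
| Step 2 | Add an undo_log table to each database that holds the business tables involved |
| Step 3 | Add the Seata dependency |
| Step 4 | Add and edit the registry.conf and file.conf configuration files |
| Step 5 | Add a DataSourceConfig configuration class; DataSourceProxy must be set as the primary data source, otherwise transactions cannot be rolled back |
| Step 6 | Annotate the main business method with @GlobalTransactional |
| Step 7 | Annotate the other, ordinary business methods with @Transactional |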

Preparation: the database of every microservice must first have an undo_log table

-- Note: versions 0.3.0+ add the unique index ux_undo_log

CREATE TABLE `undo_log` (

`id` BIGINT(20) NOT NULL AUTO_INCREMENT,

`branch_id` BIGINT(20) NOT NULL,

`xid` VARCHAR(100) NOT NULL,

`context` VARCHAR(128) NOT NULL,

`rollback_info` LONGBLOB NOT NULL,

`log_status` INT(11) NOT NULL,

`log_created` DATETIME NOT NULL,

`log_modified` DATETIME NOT NULL,

`ext` VARCHAR(100) DEFAULT NULL,

PRIMARY KEY (`id`),

UNIQUE KEY `ux_undo_log` (`xid`,`branch_id`)

) ENGINE=INNODB AUTO_INCREMENT=1 DEFAULT CHARSET=utf8;
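To make the role of undo_log more concrete, here is a minimal in-memory sketch of the idea behind AT-mode rollback: before a row is changed, its previous value is saved under the (xid, branch_id) pair, and a rollback replays those saved values. The class `UndoLogSketch`, its map-based "table", and all method names are hypothetical illustrations, not Seata's implementation.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashMap;
import java.util.Map;

/** Toy model of an undo log: NOT Seata's real code, only the idea behind it. */
class UndoLogSketch {
    /** One saved "before image": the key touched and its previous value (null = row did not exist). */
    static final class UndoEntry {
        final String key;
        final Integer before;
        UndoEntry(String key, Integer before) { this.key = key; this.before = before; }
    }

    private final Map<String, Integer> table = new HashMap<>();             // stands in for a business table
    private final Map<String, Deque<UndoEntry>> undoLog = new HashMap<>();  // keyed by "xid:branchId"

    /** Writes a value and records the previous one under the given transaction branch. */
    void write(String xid, long branchId, String key, int value) {
        undoLog.computeIfAbsent(xid + ":" + branchId, k -> new ArrayDeque<>())
               .push(new UndoEntry(key, table.get(key)));
        table.put(key, value);
    }

    /** Restores every before image of the branch, newest first, then drops the log. */
    void rollback(String xid, long branchId) {
        Deque<UndoEntry> entries = undoLog.remove(xid + ":" + branchId);
        if (entries == null) return;
        while (!entries.isEmpty()) {
            UndoEntry e = entries.pop();
            if (e.before == null) table.remove(e.key);
            else table.put(e.key, e.before);
        }
    }

    /** Commit only needs to discard the log; the data is already written. */
    void commit(String xid, long branchId) { undoLog.remove(xid + ":" + branchId); }

    Integer read(String key) { return table.get(key); }

    public static void main(String[] args) {
        UndoLogSketch db = new UndoLogSketch();
        db.write("xid-0", 1L, "stock", 10);
        db.commit("xid-0", 1L);
        db.write("xid-1", 2L, "stock", 9);   // before image: 10
        db.write("xid-1", 2L, "order", 1);   // before image: row absent
        db.rollback("xid-1", 2L);
        System.out.println("stock=" + db.read("stock") + ", order=" + db.read("order"));
    }
}
```

The unique index on (xid, branch_id) in the real table plays the same role as the map key here: one undo record per branch of a global transaction.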

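I. Introduction

An earlier post simulated 2PC transaction commits with multiple threads. Solving distributed transactions by hand with threads is painful, so this post introduces a popular distributed transaction framework: Alibaba's Seata.

- 1. This post covers basic use of the distributed transaction framework Seata (1.4.2) in project development.

- 2. Seata has four modes: AT, TCC, Saga, and XA. This post uses AT mode; readers interested in the other modes can explore them on their own.

- 3. Project stack for this post: springboot 2.3.9.RELEASE + nacos 2.0.3 + seata 1.3.0 + mysql 5.7.x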

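II. Installation and configuration

1. Downloading and installing nacos

Installing nacos is not covered here. The download link for the nacos version I used is below.

nacos download: https://github.com/alibaba/nacos/releases/tag/2.0.3

2. Installing and configuring the seata server

2.1 Installation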

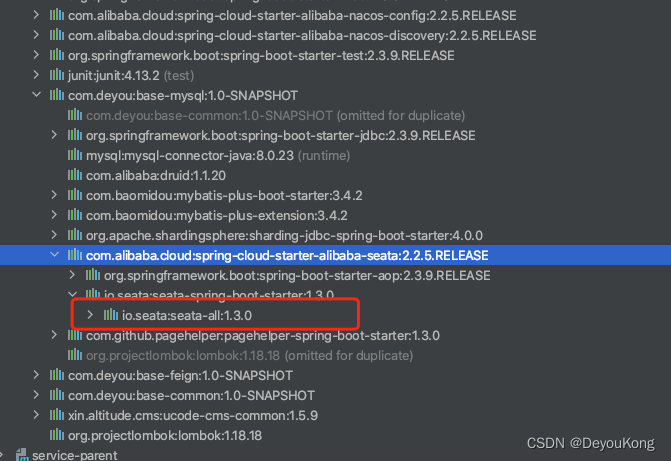

First, add the maven dependency to the project

<dependency>

<groupId>com.alibaba.cloud</groupId>

<artifactId>spring-cloud-starter-alibaba-seata</artifactId>

</dependency>

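Then check which version of seata the dependency pulls in

Download seata from https://github.com/seata/seata/releases : find version 1.3.0 and download the zip package

After extraction the directory looks like this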

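2.2 Configuration

2.2.1 Configuring with nacos + mysql

1. Create the seata database

2. Create the tables; the table schema can be obtained here

3. Create the config.txt file; its contents need to be obtained here. Put config.txt in the root directory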

#For details about configuration items, see https://seata.io/zh-cn/docs/user/configurations.html

#Transport configuration, for client and server

transport.type=TCP

transport.server=NIO

transport.heartbeat=true

transport.enableTmClientBatchSendRequest=false

transport.enableRmClientBatchSendRequest=true

transport.enableTcServerBatchSendResponse=false

transport.rpcRmRequestTimeout=30000

transport.rpcTmRequestTimeout=30000

transport.rpcTcRequestTimeout=30000

transport.threadFactory.bossThreadPrefix=NettyBoss

transport.threadFactory.workerThreadPrefix=NettyServerNIOWorker

transport.threadFactory.serverExecutorThreadPrefix=NettyServerBizHandler

transport.threadFactory.shareBossWorker=false

transport.threadFactory.clientSelectorThreadPrefix=NettyClientSelector

transport.threadFactory.clientSelectorThreadSize=1

transport.threadFactory.clientWorkerThreadPrefix=NettyClientWorkerThread

transport.threadFactory.bossThreadSize=1

transport.threadFactory.workerThreadSize=default

transport.shutdown.wait=3

transport.serialization=seata

transport.compressor=none

#Transaction routing rules configuration, only for the client

service.vgroupMapping.default_tx_group=default

#If you use a registry, you can ignore it

service.default.grouplist=127.0.0.1:8091

service.enableDegrade=false

service.disableGlobalTransaction=false

#Transaction rule configuration, only for the client

client.rm.asyncCommitBufferLimit=10000

client.rm.lock.retryInterval=10

client.rm.lock.retryTimes=30

client.rm.lock.retryPolicyBranchRollbackOnConflict=true

client.rm.reportRetryCount=5

client.rm.tableMetaCheckEnable=true

client.rm.tableMetaCheckerInterval=60000

client.rm.sqlParserType=druid

client.rm.reportSuccessEnable=false

client.rm.sagaBranchRegisterEnable=false

client.rm.sagaJsonParser=fastjson

client.rm.tccActionInterceptorOrder=-2147482648

client.tm.commitRetryCount=5

client.tm.rollbackRetryCount=5

client.tm.defaultGlobalTransactionTimeout=60000

client.tm.degradeCheck=false

client.tm.degradeCheckAllowTimes=10

client.tm.degradeCheckPeriod=2000

client.tm.interceptorOrder=-2147482648

client.undo.dataValidation=true

client.undo.logSerialization=jackson

client.undo.onlyCareUpdateColumns=true

server.undo.logSaveDays=7

server.undo.logDeletePeriod=86400000

client.undo.logTable=undo_log

client.undo.compress.enable=true

client.undo.compress.type=zip

client.undo.compress.threshold=64k

#For TCC transaction mode

tcc.fence.logTableName=tcc_fence_log

tcc.fence.cleanPeriod=1h

#Log rule configuration, for client and server

log.exceptionRate=100

#Transaction storage configuration, only for the server. The file, db, and redis configuration values are optional.

store.mode=db

store.lock.mode=file

store.session.mode=file

#Used for password encryption

#store.publicKey=

#If `store.mode,store.lock.mode,store.session.mode` are not equal to `file`, you can remove the configuration block.

#store.file.dir=file_store/data

#store.file.maxBranchSessionSize=16384

#store.file.maxGlobalSessionSize=512

#store.file.fileWriteBufferCacheSize=16384

#store.file.flushDiskMode=async

#store.file.sessionReloadReadSize=100

#These configurations are required if the `store mode` is `db`. If `store.mode,store.lock.mode,store.session.mode` are not equal to `db`, you can remove the configuration block.

store.db.datasource=druid

store.db.dbType=mysql

store.db.driverClassName=com.mysql.jdbc.Driver

store.db.url=jdbc:mysql://175.178.154.39:3306/seata?useUnicode=true&rewriteBatchedStatements=true

store.db.user=root

store.db.password=admin1234

store.db.minConn=5

store.db.maxConn=30

store.db.globalTable=global_table

store.db.branchTable=branch_table

store.db.distributedLockTable=distributed_lock

store.db.queryLimit=100

store.db.lockTable=lock_table

store.db.maxWait=5000

#These configurations are required if the `store mode` is `redis`. If `store.mode,store.lock.mode,store.session.mode` are not equal to `redis`, you can remove the configuration block.

#store.redis.mode=single

#store.redis.single.host=127.0.0.1

#store.redis.single.port=6379

#store.redis.sentinel.masterName=

#store.redis.sentinel.sentinelHosts=

#store.redis.maxConn=10

#store.redis.minConn=1

#store.redis.maxTotal=100

#store.redis.database=0

#store.redis.password=

#store.redis.queryLimit=100

#Transaction rule configuration, only for the server

server.recovery.committingRetryPeriod=1000

server.recovery.asynCommittingRetryPeriod=1000

server.recovery.rollbackingRetryPeriod=1000

server.recovery.timeoutRetryPeriod=1000

server.maxCommitRetryTimeout=-1

server.maxRollbackRetryTimeout=-1

server.rollbackRetryTimeoutUnlockEnable=false

server.distributedLockExpireTime=10000

server.xaerNotaRetryTimeout=60000

server.session.branchAsyncQueueSize=5000

server.session.enableBranchAsyncRemove=false

server.enableParallelRequestHandle=false

#Metrics configuration, only for the server

metrics.enabled=false

metrics.registryType=compact

metrics.exporterList=prometheus

metrics.exporterPrometheusPort=9898

Edit the store.db entries, and comment out the store.redis and store.file entries
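Because config.txt is a plain key=value file, a quick sanity check before pushing it is easy to script. The sketch below uses only java.util.Properties to read the text and report which store.db.* keys are missing when store.mode=db. The class name and the list of "required" keys are illustrative choices drawn from the file above, not an official validation rule.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

class ConfigTxtCheck {
    // Keys taken from the store.db block above; treating them as required is an assumption.
    static final String[] DB_KEYS = {
        "store.db.datasource", "store.db.dbType", "store.db.driverClassName",
        "store.db.url", "store.db.user", "store.db.password"
    };

    /** Returns the store.db.* keys that are absent or empty; empty list if store.mode is not "db". */
    static List<String> missingDbKeys(String configText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(configText)); // '#' lines are treated as comments
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        List<String> missing = new ArrayList<>();
        if (!"db".equals(props.getProperty("store.mode"))) return missing;
        for (String key : DB_KEYS) {
            String value = props.getProperty(key);
            if (value == null || value.trim().isEmpty()) missing.add(key);
        }
        return missing;
    }

    public static void main(String[] args) {
        String sample = String.join("\n",
            "store.mode=db",
            "#store.file.dir=file_store/data",
            "store.db.datasource=druid",
            "store.db.dbType=mysql",
            "store.db.url=jdbc:mysql://127.0.0.1:3306/seata?useUnicode=true",
            "store.db.user=root");
        System.out.println("Missing keys: " + missingDbKeys(sample));
    }
}
```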

4. Edit registry.conf

registry {

# file 、nacos 、eureka、redis、zk、consul、etcd3、sofa

type = "nacos" # registry center

nacos {

application = "seata-server"

serverAddr = "localhost:8848"

group = "DEFAULT_GROUP"

namespace = "dev"

cluster = "default"

username = "nacos"

password = "nacos"

}

eureka {

serviceUrl = "http://localhost:8761/eureka"

application = "default"

weight = "1"

}

redis {

serverAddr = "localhost:6379"

db = 0

password = ""

cluster = "default"

timeout = 0

}

zk {

cluster = "default"

serverAddr = "127.0.0.1:2181"

sessionTimeout = 6000

connectTimeout = 2000

username = ""

password = ""

}

consul {

cluster = "default"

serverAddr = "127.0.0.1:8500"

}

etcd3 {

cluster = "default"

serverAddr = "http://localhost:2379"

}

sofa {

serverAddr = "127.0.0.1:9603"

application = "default"

region = "DEFAULT_ZONE"

datacenter = "DefaultDataCenter"

cluster = "default"

group = "SEATA_GROUP"

addressWaitTime = "3000"

}

file {

name = "file.conf"

}

}

config {

# file、nacos 、apollo、zk、consul、etcd3

type = "nacos" # configuration source

nacos {

serverAddr = "localhost:8848"

namespace = "dev"

group = "DEFAULT_GROUP"

username = "nacos"

password = "nacos"

}

consul {

serverAddr = "127.0.0.1:8500"

}

apollo {

appId = "seata-server"

apolloMeta = "http://192.168.1.204:8801"

namespace = "application"

}

zk {

serverAddr = "127.0.0.1:2181"

sessionTimeout = 6000

connectTimeout = 2000

username = ""

password = ""

}

etcd3 {

serverAddr = "http://localhost:2379"

}

file {

name = "file.conf"

}

}

5. Edit file.conf

transport {

# tcp udt unix-domain-socket

type = "TCP"

#NIO NATIVE

server = "NIO"

#enable heartbeat

heartbeat = true

#thread factory for netty

thread-factory {

boss-thread-prefix = "NettyBoss"

worker-thread-prefix = "NettyServerNIOWorker"

server-executor-thread-prefix = "NettyServerBizHandler"

share-boss-worker = false

client-selector-thread-prefix = "NettyClientSelector"

client-selector-thread-size = 1

client-worker-thread-prefix = "NettyClientWorkerThread"

# netty boss thread size,will not be used for UDT

boss-thread-size = 1

#auto default pin or 8

worker-thread-size = 8

}

shutdown {

# when destroy server, wait seconds

wait = 3

}

serialization = "seata"

compressor = "none"

}

service {

#vgroup->rgroup

vgroupMapping.my_test_tx_group = "default"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

disableGlobalTransaction = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

client {

rm{

async.commit.buffer.limit = 10000

lock {

retry.internal = 10

retry.times = 30

retryPolicyBranchRollbackOnConflict = true

}

report.retry.count = 5

tableMetaCheckEnable = false

reportSuccessEnable = false

sqlParserType = druid

}

tm {

commitRetryCount = 5

rollbackRetryCount = 5

}

undo {

dataValidation = true

logSerialization = "jackson"

logTable = "undo_log"

}

log {

exceptionRate = 100

}

}

## transaction log store, only used in seata-server

store {

## store mode: file、db、redis

mode = "db"

## file store property

file {

## store location dir

dir = "sessionStore"

# branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions

maxBranchSessionSize = 16384

# globe session size , if exceeded throws exceptions

maxGlobalSessionSize = 512

# file buffer size , if exceeded allocate new buffer

fileWriteBufferCacheSize = 16384

# when recover batch read size

sessionReloadReadSize = 100

# async, sync

flushDiskMode = async

}

## database store property

db {

## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp)/HikariDataSource(hikari) etc.

datasource = "druid"

## mysql/oracle/postgresql/h2/oceanbase etc.

dbType = "mysql"

driverClassName = "com.mysql.jdbc.Driver"

url = "jdbc:mysql://localhost:3306/seata"

user = "root"

password = "admin1234"

minConn = 5

maxConn = 30

globalTable = "global_table"

branchTable = "branch_table"

lockTable = "lock_table"

queryLimit = 100

maxWait = 5000

}

## redis store property

redis {

host = "127.0.0.1"

port = "6379"

password = ""

database = "0"

minConn = 1

maxConn = 10

queryLimit = 100

}

}

lock {

## the lock store mode: local、remote

mode = "remote"

local {

## store locks in user's database

}

remote {

## store locks in the seata's server

}

}

recovery {

committing-retry-delay = 30

asyn-committing-retry-delay = 30

rollbacking-retry-delay = 30

timeout-retry-delay = 30

}

transaction {

undo.data.validation = true

undo.log.serialization = "jackson"

}

## metrics settings

metrics {

enabled = false

registry-type = "compact"

# multi exporters use comma divided

exporter-list = "prometheus"

exporter-prometheus-port = 9898

}

## server configuration, only used in server side

server {

recovery {

#schedule committing retry period in milliseconds

committingRetryPeriod = 1000

#schedule asyn committing retry period in milliseconds

asynCommittingRetryPeriod = 1000

#schedule rollbacking retry period in milliseconds

rollbackingRetryPeriod = 1000

#schedule timeout retry period in milliseconds

timeoutRetryPeriod = 1000

}

undo {

logSaveDays = 7

#schedule delete expired undo_log in milliseconds

logDeletePeriod = 86400000

}

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

maxCommitRetryTimeout = "-1"

maxRollbackRetryTimeout = "-1"

rollbackRetryTimeoutUnlockEnable = false

}

6. Push the configuration to nacos

Download nacos-config.sh into the conf folder, right-click an empty area and open Git Bash, then run sh nacos-config.sh -h nacosIp -p 8848 -g DEFAULT_GROUP -t dev -u nacos -w nacos
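As far as I can tell, the script walks through config.txt line by line and publishes each key=value pair as its own configuration item (the key becomes the dataId) through the Nacos config publishing endpoint. The sketch below only reproduces that splitting and request-building step in Java without sending anything; `NacosPushSketch` and its methods are hypothetical, and the endpoint path printed in `main` is my understanding of the Nacos v1 Open API rather than something stated in this post.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;

class NacosPushSketch {
    /** Splits config.txt text into dataId -> content, skipping blank lines and '#' comments. */
    static Map<String, String> parseEntries(String configText) {
        Map<String, String> entries = new LinkedHashMap<>();
        for (String raw : configText.split("\\R")) {
            String line = raw.trim();
            if (line.isEmpty() || line.startsWith("#")) continue;
            int eq = line.indexOf('=');
            if (eq <= 0) continue;                       // not a key=value line
            entries.put(line.substring(0, eq), line.substring(eq + 1)); // split at the FIRST '='
        }
        return entries;
    }

    /** Builds the form body for one entry; group and tenant correspond to the -g and -t flags. */
    static String formBody(String dataId, String content, String group, String tenant) {
        return "dataId=" + enc(dataId) + "&group=" + enc(group)
             + "&content=" + enc(content) + "&tenant=" + enc(tenant);
    }

    private static String enc(String s) {
        return URLEncoder.encode(s, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String sample = "#comment\nstore.mode=db\n"
                      + "store.db.url=jdbc:mysql://127.0.0.1:3306/seata?useUnicode=true\n";
        // Assumed endpoint: POST http://<nacosIp>:8848/nacos/v1/cs/configs
        parseEntries(sample).forEach((k, v) ->
            System.out.println(formBody(k, v, "DEFAULT_GROUP", "dev")));
    }
}
```

Splitting at the first `=` matters because values such as the JDBC URL contain `=` themselves.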

2.2.2 Standalone file-based configuration (testing seemed to run into problems)

After the service started, it kept reporting this error:

no available service 'null' found, please make sure registry config correct
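One possible cause of this message, as far as I understand the client, is that it cannot resolve its transaction group to a cluster name: the lookup of the vgroup mapping key for that group returns nothing, so the cluster name printed in the error is null. The sketch below mimics that lookup with a plain Properties object. The class and method names are hypothetical, and the exact key spelling depends on the Seata version in use.

```java
import java.util.Properties;

class VgroupLookupSketch {
    /** Looks up the cluster mapped to a transaction group; returns null when the key is absent. */
    static String resolveCluster(Properties config, String txServiceGroup) {
        return config.getProperty("service.vgroupMapping." + txServiceGroup);
    }

    /** Produces a message shaped like the error above when the mapping is missing. */
    static String describe(Properties config, String txServiceGroup) {
        String cluster = resolveCluster(config, txServiceGroup);
        if (cluster == null) {
            return "no available service '" + cluster + "' found for group " + txServiceGroup;
        }
        return "group " + txServiceGroup + " -> cluster " + cluster;
    }

    public static void main(String[] args) {
        Properties config = new Properties();
        config.setProperty("service.vgroupMapping.my_test_tx_group", "default");

        System.out.println(describe(config, "my_test_tx_group"));
        // A group name that does not match any configured key resolves to null.
        System.out.println(describe(config, "some_other_group"));
    }
}
```

If this is indeed the cause, the fix is to make the group name configured in the application match a mapping key exactly, character for character.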

Here I configure things using files

1. registry.conf: registry-related configuration

registry {

# file 、nacos 、eureka、redis、zk、consul、etcd3、sofa

type = "nacos" # 1. Choose which registry to use, then edit the matching block below

nacos {

application = "seata-server"

serverAddr = "localhost:8848" # change the address

group = "DEFAULT_GROUP" # change the group

namespace = "dev" # namespace

cluster = "default"

username = "nacos"

password = "nacos"

}

eureka {

serviceUrl = "http://localhost:8761/eureka"

application = "default"

weight = "1"

}

redis {

serverAddr = "localhost:6379"

db = 0

password = ""

cluster = "default"

timeout = 0

}

zk {

cluster = "default"

serverAddr = "127.0.0.1:2181"

sessionTimeout = 6000

connectTimeout = 2000

username = ""

password = ""

}

consul {

cluster = "default"

serverAddr = "127.0.0.1:8500"

}

etcd3 {

cluster = "default"

serverAddr = "http://localhost:2379"

}

sofa {

serverAddr = "127.0.0.1:9603"

application = "default"

region = "DEFAULT_ZONE"

datacenter = "DefaultDataCenter"

cluster = "default"

group = "SEATA_GROUP"

addressWaitTime = "3000"

}

file {

name = "file.conf"

}

}

config {

# file、nacos 、apollo、zk、consul、etcd3

type = "file" # choose the configuration source, then configure it; file is used here

nacos {

serverAddr = "localhost:8848"

namespace = ""

group = "DEFAULT_GROUP"

username = "nacos"

password = "nacos"

}

consul {

serverAddr = "127.0.0.1:8500"

}

apollo {

appId = "seata-server"

apolloMeta = "http://192.168.1.204:8801"

namespace = "application"

}

zk {

serverAddr = "127.0.0.1:2181"

sessionTimeout = 6000

connectTimeout = 2000

username = ""

password = ""

}

etcd3 {

serverAddr = "http://localhost:2379"

}

file {

name = "file.conf"

}

}

2. file.conf: where the transaction log is stored

transport {

# tcp udt unix-domain-socket

type = "TCP"

#NIO NATIVE

server = "NIO"

#enable heartbeat

heartbeat = true

#thread factory for netty

thread-factory {

boss-thread-prefix = "NettyBoss"

worker-thread-prefix = "NettyServerNIOWorker"

server-executor-thread-prefix = "NettyServerBizHandler"

share-boss-worker = false

client-selector-thread-prefix = "NettyClientSelector"

client-selector-thread-size = 1

client-worker-thread-prefix = "NettyClientWorkerThread"

# netty boss thread size,will not be used for UDT

boss-thread-size = 1

#auto default pin or 8

worker-thread-size = 8

}

shutdown {

# when destroy server, wait seconds

wait = 3

}

serialization = "seata"

compressor = "none"

}

service {

#vgroup->rgroup

vgroup_mapping.my_test_tx_group = "default"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

client {

async.commit.buffer.limit = 10000

lock {

retry.internal = 10

retry.times = 30

}

report.retry.count = 5

}

## transaction log store, only used in seata-server

store {

## store mode: file、db、redis

mode = "file"

## file store property

file {

## store location dir

dir = "sessionStore"

# branch session size , if exceeded first try compress lockkey, still exceeded throws exceptions

maxBranchSessionSize = 16384

# globe session size , if exceeded throws exceptions

maxGlobalSessionSize = 512

# file buffer size , if exceeded allocate new buffer

fileWriteBufferCacheSize = 16384

# when recover batch read size

sessionReloadReadSize = 100

# async, sync

flushDiskMode = async

}

## database store property

db {

## the implement of javax.sql.DataSource, such as DruidDataSource(druid)/BasicDataSource(dbcp)/HikariDataSource(hikari) etc.

datasource = "druid"

## mysql/oracle/postgresql/h2/oceanbase etc.

dbType = "mysql"

driverClassName = "com.mysql.jdbc.Driver"

url = "jdbc:mysql://127.0.0.1:3306/seata"

user = "mysql"

password = "mysql"

minConn = 5

maxConn = 30

globalTable = "global_table"

branchTable = "branch_table"

lockTable = "lock_table"

queryLimit = 100

maxWait = 5000

}

## redis store property

redis {

host = "127.0.0.1"

port = "6379"

password = ""

database = "0"

minConn = 1

maxConn = 10

queryLimit = 100

}

}

lock {

## the lock store mode: local、remote

mode = "remote"

local {

## store locks in user's database

}

remote {

## store locks in the seata's server

}

}

recovery {

committing-retry-delay = 30

asyn-committing-retry-delay = 30

rollbacking-retry-delay = 30

timeout-retry-delay = 30

}

transaction {

undo.data.validation = true

undo.log.serialization = "jackson"

}

## metrics settings

metrics {

enabled = false

registry-type = "compact"

# multi exporters use comma divided

exporter-list = "prometheus"

exporter-prometheus-port = 9898

}

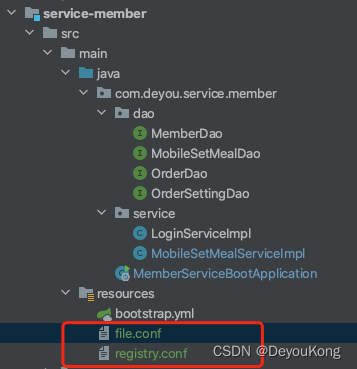

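3. Copy file.conf and registry.conf into the resources directory of each service

4. In each service's file.conf, change the application name

For example, given this configuration in application.yml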

spring:
  environment: dev
  application:
    name: 'health-service-member' # application name

then file.conf should contain

service {

#vgroup->rgroup

vgroup_mapping.health-service-member-fescar-service-group = "default"

#only support single node

default.grouplist = "127.0.0.1:8091"

#degrade current not support

enableDegrade = false

#disable

disable = false

#unit ms,s,m,h,d represents milliseconds, seconds, minutes, hours, days, default permanent

max.commit.retry.timeout = "-1"

max.rollback.retry.timeout = "-1"

}

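2.3 Starting Seata

- Run seata

On Windows, double-click seata-server.bat

On Linux, run sh seata-server.sh

Seeing the following screen means startup succeeded

The seata service appearing online in nacos also confirms a successful start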

3. Project integration

3.1 Project dependencies

<!-- Seata dependency -->

<dependency>

<groupId>com.alibaba.cloud</groupId>

<artifactId>spring-cloud-starter-alibaba-seata</artifactId>

</dependency>

<!-- Seata dependency -->

3.2 Configuration class

package com.deyou.mysql.config;

import com.zaxxer.hikari.HikariDataSource;

import io.seata.rm.datasource.DataSourceProxy;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.autoconfigure.jdbc.DataSourceProperties;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.Configuration;

import org.springframework.util.StringUtils;

import javax.annotation.Resource;

import javax.sql.DataSource;

/**

* @author Deyou Kong

* @description Seata configuration class

* @date 2023/2/16 9:05 AM

*/

@Configuration

public class SeataConfig {

@Resource

DataSourceProperties dataSourceProperties;

@Bean

public DataSource dataSource(DataSourceProperties dataSourceProperties){

HikariDataSource dataSource = dataSourceProperties.initializeDataSourceBuilder().type(HikariDataSource.class).build();

if(StringUtils.hasText(dataSourceProperties.getName())){

dataSource.setPoolName(dataSourceProperties.getName());

}

return new DataSourceProxy(dataSource);

}

}
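Why does the proxy have to be the data source the application actually uses? Interception only happens for calls that pass through the wrapper. The stand-alone sketch below uses java.lang.reflect.Proxy on a made-up `Store` interface to show this: writes through the proxy are recorded, while writes that go straight to the raw object are invisible to it. `Store`, `MapStore`, and `recording` are hypothetical names; Seata's DataSourceProxy wraps javax.sql.DataSource and does far more than logging.

```java
import java.lang.reflect.Proxy;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class ProxySketch {
    /** Hypothetical stand-in for a data source. */
    public interface Store {
        void put(String key, String value);
    }

    static class MapStore implements Store {
        final Map<String, String> data = new HashMap<>();
        @Override
        public void put(String key, String value) { data.put(key, value); }
    }

    /** Wraps a Store so every call is noted in the journal before being delegated. */
    static Store recording(Store target, List<String> journal) {
        return (Store) Proxy.newProxyInstance(
            Store.class.getClassLoader(),
            new Class<?>[] { Store.class },
            (proxy, method, args) -> {
                String firstArg = (args == null || args.length == 0) ? "" : String.valueOf(args[0]);
                journal.add(method.getName() + "(" + firstArg + ")");
                return method.invoke(target, args);
            });
    }

    public static void main(String[] args) {
        List<String> journal = new ArrayList<>();
        MapStore raw = new MapStore();
        Store proxied = recording(raw, journal);

        proxied.put("order", "1");   // goes through the proxy: recorded
        raw.put("checkItem", "2");   // bypasses the proxy: not recorded

        System.out.println("journal=" + journal + ", rows=" + raw.data.size());
    }
}
```

The same reasoning applies to the configuration class above: if the ORM were handed the raw HikariDataSource instead of the returned proxy, its SQL would bypass Seata entirely.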

3.3 Code integration

Annotate the entry point of the distributed transaction with the @GlobalTransactional global transaction annotation

@GlobalTransactional

@Override

@Transactional

public Result<String> saveSetMealOrder(JSONObject jsonObject) throws InterruptedException {

String validateCode = (String) jsonObject.get("validateCode");

String smsCodeKey = RedisConstant.MOBILE_SET_MEAL_ORDER_SMS .replaceFirst("\\{phone}", jsonObject.getString("telephone"));

String redisValidateCode = redisUtil.get(smsCodeKey);

if (redisValidateCode == null){

return Result.error(MessageConstant.VALIDATECODE_INVALID);

}

if (!validateCode.equals(redisValidateCode)){

return Result.error(MessageConstant.VALIDATECODE_ERROR);

}

Date date = new Date();

try {

SimpleDateFormat dateFormat = new SimpleDateFormat("yyyy-MM-dd");

date = dateFormat.parse(jsonObject.getString("orderDate"));

} catch (Exception e) {

e.printStackTrace();

}

LambdaQueryWrapper<OrderSetting> lambdaQueryWrapper = new LambdaQueryWrapper<>();

lambdaQueryWrapper.eq(OrderSetting::getOrderDate, date);

OrderSetting orderSetting = orderSettingDao.selectOne(lambdaQueryWrapper);

if (orderSetting != null && orderSetting.getNumber() > orderSetting.getReservations()){

Order order = new Order();

Integer userId = JwtUtil.getUserId();

order.setMemberId(userId);

order.setOrderDate(date);

order.setOrderType("微信预约"); // "WeChat booking"

order.setOrderStatus("未到诊"); // "not yet attended"

order.setSetmealId(jsonObject.getInteger("setmealId"));

orderDao.insert(order);

orderSetting.setReservations(orderSetting.getReservations()+1);

orderSettingDao.updateById(orderSetting);

CheckItem checkItem = new CheckItem();

checkItem.setCode("CCC223");

checkItem.setName("测试Seata1"); // "Seata test 1"

checkItem.setSex("1");

checkItem.setAge("10-50");

checkItem.setPrice(50f);

checkItem.setType("1");

checkItem.setAttention("测试Seata1"); // "Seata test 1"

checkItemService.addCheckItem(checkItem);

return Result.success(order.getId().toString(), MessageConstant.ORDER_SUCCESS);

}else {

if (orderSetting == null){

return Result.error(MessageConstant.ORDER_SETTING_IS_NULL); // no booking capacity found for that date

}else {

return Result.error(MessageConstant.ORDER_SETTING_FULL); // fully booked

}

}

}

The code of CheckItemService.addCheckItem() is as follows:

@Override

public void addCheckItem(CheckItem checkItem) throws InterruptedException {

checkItemDao.insert(checkItem);

}

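In the code above, creating an order calls the addCheckItem interface of the CheckItemService service; the two methods live in different services

3.4 Testing

- 1. Normal call: the data is stored correctly

- 2. Remove the @GlobalTransactional annotation from OrderService.saveSetMealOrder() and test again: the order is rolled back, but CheckItemService.addCheckItem is not

- 3. Failing call

Put the @GlobalTransactional annotation back on OrderService.saveSetMealOrder()

Change CheckItemService.addCheckItem() as follows: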

@Override

public void addCheckItem(CheckItem checkItem) throws InterruptedException {

checkItemDao.insert(checkItem);

Thread.sleep(6000L);

int i = 10/0;

}

Test result: the checkItemService service throws an error, and this time the data of both CheckItemService and OrderService is rolled back
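The outcome of test 3 can be mimicked without any infrastructure. In the sketch below, a made-up coordinator hands out an XID, each "service" registers a compensation step under it, and a failure in the second service triggers a rollback of both. Everything here (`GlobalTxSketch`, `MiniCoordinator`, the two lists standing in for tables) is hypothetical; in Seata the coordinator and the services talk over the network and rollback relies on undo_log rather than in-memory callbacks.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;
import java.util.UUID;

class GlobalTxSketch {
    /** Hypothetical coordinator: keeps one undo action per branch of a global transaction. */
    static class MiniCoordinator {
        final String xid = UUID.randomUUID().toString();
        private final Deque<Runnable> undoActions = new ArrayDeque<>();

        void registerBranch(Runnable undo) { undoActions.push(undo); }

        /** Runs the undo actions in reverse order of registration. */
        void rollback() { while (!undoActions.isEmpty()) undoActions.pop().run(); }

        void commit() { undoActions.clear(); }
    }

    final List<String> orders = new ArrayList<>();      // stands in for the order service's table
    final List<String> checkItems = new ArrayList<>();  // stands in for the check item service's table

    /** The "remote" service: inserts, registers its branch, then optionally fails. */
    void addCheckItem(MiniCoordinator tx, String item, boolean fail) {
        checkItems.add(item);
        tx.registerBranch(() -> checkItems.remove(item));
        if (fail) throw new ArithmeticException("simulated failure in callee");
    }

    /** The entry point: plays the role of the method carrying the global transaction annotation. */
    boolean saveOrder(String order, String item, boolean failInCallee) {
        MiniCoordinator tx = new MiniCoordinator();
        try {
            orders.add(order);
            tx.registerBranch(() -> orders.remove(order));
            addCheckItem(tx, item, failInCallee);
            tx.commit();
            return true;
        } catch (RuntimeException e) {
            tx.rollback();   // undoes the check item insert and the order insert
            return false;
        }
    }

    public static void main(String[] args) {
        GlobalTxSketch app = new GlobalTxSketch();
        app.saveOrder("order-1", "item-1", false);
        app.saveOrder("order-2", "item-2", true);
        System.out.println("orders=" + app.orders + ", checkItems=" + app.checkItems);
    }
}
```

Test 2 corresponds to dropping the coordinator: each service would then undo only its own work, which is why the callee's insert survived there.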

Reference: https://blog.csdn.net/qq_41625866/article/details/125925588
