I. Introduction

Sqoop (SQL to Hadoop + Hadoop to SQL) is a tool for transferring data between relational databases and Hadoop. Official site: sqoop.apache.org

II. Installing Sqoop 1.4.7

(1) Extract the archive

tar -zxvf /opt/software/sqoop-1.4.7.bin.tar.gz -C /opt/module

(2) Rename the directory

mv /opt/module/sqoop-1.4.7.bin__hadoop-2.6.0/ /opt/module/sqoop

(3) Configure

Copy the sqoop-env-template.sh template and name the copy sqoop-env.sh.

cp /opt/module/sqoop/conf/sqoop-env-template.sh /opt/module/sqoop/conf/sqoop-env.sh

vim /opt/module/sqoop/conf/sqoop-env.sh

Add the paths of Hadoop, Hive, ZooKeeper, HBase (if installed), and any other components:

export HADOOP_COMMON_HOME=/opt/module/hadoop-2.7.6

export HADOOP_MAPRED_HOME=/opt/module/hadoop-2.7.6

export HIVE_HOME=/opt/module/hive
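A wrong path in sqoop-env.sh usually only shows up later as a confusing runtime error, so it can help to confirm the directories exist first. The following is a minimal sketch; check_dirs is a hypothetical helper name, not part of Sqoop.

```shell
# check_dirs DIR...
# Print the status of each directory; return 0 only if all of them exist.
# (check_dirs is a hypothetical helper, not a Sqoop command.)
check_dirs() {
    missing=0
    for dir in "$@"; do
        if [ -d "$dir" ]; then
            echo "ok       $dir"
        else
            echo "missing  $dir"
            missing=1
        fi
    done
    return "$missing"
}

# Example, using the paths from the settings above:
# check_dirs /opt/module/hadoop-2.7.6 /opt/module/hive
```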

(4) Set environment variables

sudo vi /etc/profile

Append the following:

# SQOOP

export SQOOP_HOME=/opt/module/sqoop

export PATH=$PATH:$SQOOP_HOME/bin

export CLASSPATH=$CLASSPATH:$SQOOP_HOME/lib

Apply the environment variables:

source /etc/profile

(5) Connect to MySQL

Copy the MySQL Java Connector driver into Sqoop's lib directory.

cp /opt/software/mysql-connector-java-5.1.49.jar /opt/module/sqoop/lib/

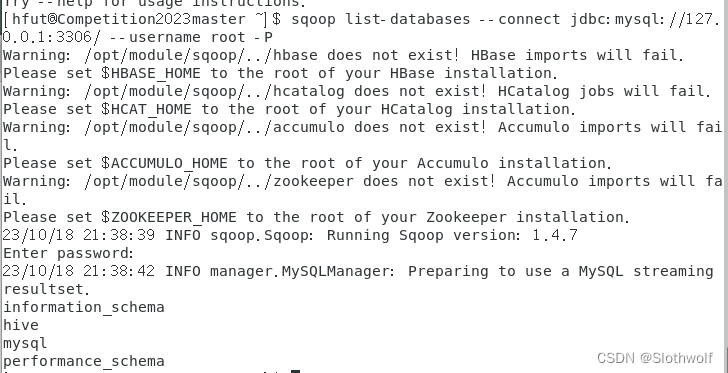
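If the driver jar is missing from the lib directory, Sqoop cannot load the MySQL JDBC driver class when it tries to connect. A quick check along these lines can catch that early; has_jar is a hypothetical helper name, and the glob pattern is an assumption based on the jar name used above.

```shell
# has_jar LIB_DIR PATTERN
# Succeed if at least one regular file in LIB_DIR matches the glob PATTERN.
# (has_jar is a hypothetical helper, not a Sqoop command.)
has_jar() {
    lib_dir=$1
    pattern=$2
    # $pattern is left unquoted on purpose so the shell expands the glob.
    for f in "$lib_dir"/$pattern; do
        [ -f "$f" ] && return 0
    done
    return 1
}

# Example:
# has_jar /opt/module/sqoop/lib 'mysql-connector-java-*.jar' \
#     || echo "MySQL JDBC driver not found in Sqoop lib" >&2
```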

(6) Test

sqoop help

With the Hadoop cluster and MySQL both running, test whether Sqoop can connect to MySQL:

sqoop list-databases --connect jdbc:mysql://127.0.0.1:3306/ --username root -P

Sqoop prompts for the password and then lists the MySQL databases.

(7) Connect to Hive

Also copy hive-common-1.2.1.jar, hive-shims-common-1.2.1.jar, and hive-shims-0.23-1.2.1.jar from the Hive directory /opt/module/hive/lib/ into Sqoop's lib directory.

cp /opt/module/hive/lib/hive-common-1.2.1.jar /opt/module/sqoop/lib/

cp /opt/module/hive/lib/hive-shims-common-1.2.1.jar /opt/module/sqoop/lib/

cp /opt/module/hive/lib/hive-shims-0.23-1.2.1.jar /opt/module/sqoop/lib/

III. Basic Sqoop usage

(1) Importing data

1) Create a table in MySQL and insert some data

mysql -uroot -p000000

create database sqoop;

create table sqoop.staff(id int(4) primary key not null auto_increment, name varchar(255), sex varchar(255));

insert into sqoop.staff(name,sex) values('Thomas','Male');

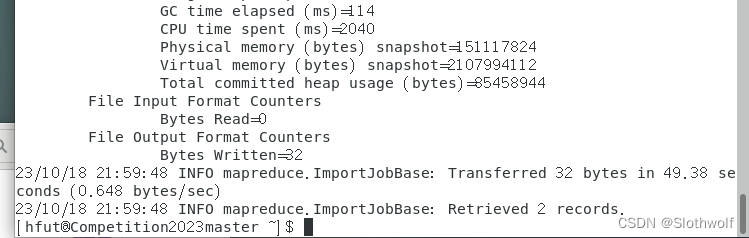

insert into sqoop.staff(name,sex) values('Catalina','Female');

2) Import the whole table

Remember to change the host name (hadoop102 below) to your own.

sqoop import \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--table staff \

--target-dir /user/company \

--delete-target-dir \

--num-mappers 1 \

--fields-terminated-by "\t"

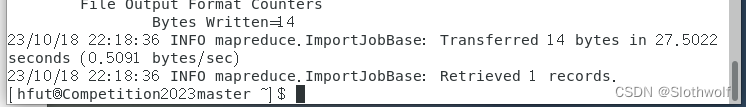
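The commands in this section pass --password 000000 on the command line, where it can end up in shell history and process listings. Sqoop also accepts a --password-file option. The sketch below writes such a file; write_password_file is a hypothetical helper name and the file location is an assumption. Sqoop reads the entire file as the password, so the file must not end with a newline.

```shell
# write_password_file PATH PASSWORD
# Store PASSWORD in PATH with no trailing newline, readable only by the owner.
# (write_password_file is a hypothetical helper, not a Sqoop command.)
write_password_file() {
    pw_file=$1
    pw=$2
    rm -f "$pw_file"
    # printf '%s' writes the password without a trailing newline;
    # the umask keeps the file private from the moment it is created.
    ( umask 077 && printf '%s' "$pw" > "$pw_file" )
    chmod 400 "$pw_file"
}

# Example (the path is an assumption; file:// points Sqoop at the local
# filesystem instead of the default one, which is normally HDFS):
# write_password_file "$HOME/.sqoop-mysql.pw" '000000'
# sqoop import ... --username root --password-file "file://$HOME/.sqoop-mysql.pw" ...
```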

3) Import from a query

sqoop import \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--target-dir /user/company \

--delete-target-dir \

--num-mappers 1 \

--fields-terminated-by "\t" \

--query 'select name,sex from staff where id <=1 and $CONDITIONS;'
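The query above is wrapped in single quotes so that the shell passes the literal text $CONDITIONS through to Sqoop, which replaces it with its own conditions at run time. If double quotes are needed, the dollar sign has to be escaped. A small sketch of the two equivalent forms (the variable names q_single and q_double are only for illustration):

```shell
# Single quotes: the shell leaves $CONDITIONS untouched.
q_single='select name,sex from staff where id <=1 and $CONDITIONS;'

# Double quotes: escape the dollar sign. Without the backslash the shell
# would substitute its own (normally empty) CONDITIONS variable before
# Sqoop ever sees the query.
q_double="select name,sex from staff where id <=1 and \$CONDITIONS;"

# Both lines printed here are identical.
printf '%s\n' "$q_single" "$q_double"
```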

4) Import specific columns

sqoop import \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--table staff \

--target-dir /user/company \

--delete-target-dir \

--num-mappers 1 \

--fields-terminated-by "\t" \

--columns id,sex

5) Import with a filter condition (using Sqoop's --where option to filter the imported rows)

sqoop import \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--table staff \

--target-dir /user/company \

--delete-target-dir \

--num-mappers 1 \

--fields-terminated-by "\t" \

--where "id=1"

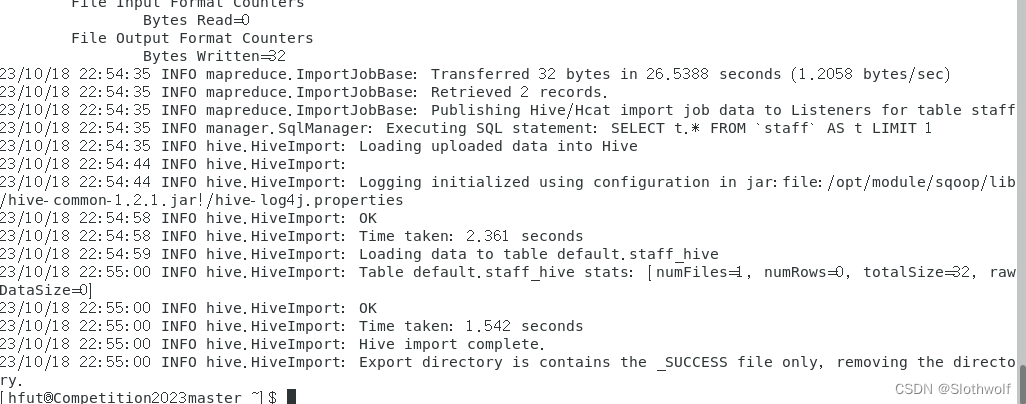
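The table-based imports above differ only in their last option. One way to avoid repeating the shared arguments is a small shell function that appends whatever extra options it is given. This is a sketch, not part of the original workflow; import_staff is a hypothetical name.

```shell
# import_staff [EXTRA SQOOP OPTIONS...]
# Run the staff table import with the shared arguments used above,
# followed by any extra options passed to the function.
# (import_staff is a hypothetical wrapper, not a Sqoop command.)
import_staff() {
    sqoop import \
        --connect jdbc:mysql://hadoop102:3306/sqoop \
        --username root \
        --password 000000 \
        --table staff \
        --target-dir /user/company \
        --delete-target-dir \
        --num-mappers 1 \
        --fields-terminated-by "\t" \
        "$@"
}

# Examples:
# import_staff                     # whole table
# import_staff --columns id,sex    # selected columns
# import_staff --where "id=1"      # filtered rows
```

The query-based import is left out on purpose, because it uses --query in place of --table.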

6) Import into Hive

export HADOOP_CLASSPATH=$HADOOP_CLASSPATH:/opt/module/hive/lib/*

sqoop import \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--table staff \

--num-mappers 1 \

--hive-import \

--fields-terminated-by "\t" \

--hive-overwrite \

--hive-table staff_hive

(2) Exporting data

Note: export is supported only from Hive/HDFS; exporting from HBase to a SQL database is not supported.

sqoop export \

--connect jdbc:mysql://hadoop102:3306/sqoop \

--username root \

--password 000000 \

--table staff \

--num-mappers 1 \

--export-dir /user/hive/warehouse/staff_hive \

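--input-fields-terminated-by "\t"

(3) Running from an options file (exam topic)

Package the sqoop command in a .opt file, then execute it.

1. Create a .opt file

mkdir opt

touch opt/job_HDFS2RDBMS.opt

2. Write the Sqoop script

vim opt/job_HDFS2RDBMS.opt

Put the following in the file, one option or value per line:

export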

--connect

jdbc:mysql://hadoop102:3306/sqoop

--username

root

--password

000000

--table

staff

--num-mappers

1

--export-dir

/user/hive/warehouse/staff_hive

--input-fields-terminated-by

"\t"

3. Run the script

sqoop --options-file opt/job_HDFS2RDBMS.opt
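Steps 1 and 2 can also be scripted so the options file is generated rather than typed into an editor. The sketch below uses a quoted here-document, which keeps "\t" exactly as written; write_export_opts is a hypothetical helper name. Sqoop's options files allow comment lines that start with #.

```shell
# write_export_opts FILE
# Create FILE (and its parent directory) with the export options shown above.
# (write_export_opts is a hypothetical helper, not a Sqoop command.)
write_export_opts() {
    opt_file=$1
    mkdir -p "$(dirname "$opt_file")"
    # The quoted 'EOF' stops the shell from expanding anything in the body.
    cat > "$opt_file" <<'EOF'
# Export the staff_hive warehouse directory back to MySQL
export
--connect
jdbc:mysql://hadoop102:3306/sqoop
--username
root
--password
000000
--table
staff
--num-mappers
1
--export-dir
/user/hive/warehouse/staff_hive
--input-fields-terminated-by
"\t"
EOF
}

# Example:
# write_export_opts opt/job_HDFS2RDBMS.opt
# sqoop --options-file opt/job_HDFS2RDBMS.opt
```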
