Global configuration

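```go
package utils

import (
	"encoding/json"
	"os"

	"zinx/ziface" // import path assumed; adjust to your module layout
)

// GlobalObj holds the global parameters of the Zinx framework.
type GlobalObj struct {
	// Server
	TcpServer ziface.IServer // global Zinx Server object
	Host      string         // IP address the server listens on
	TcpPort   int            // port the server listens on
	Name      string         // server name

	// Zinx
	Version          string // current server version
	MaxConn          int    // maximum number of connections the server accepts
	MaxPackageSize   uint32 // maximum size of a Zinx data packet
	WorkerPoolSize   uint32 // number of goroutines in the business worker pool
	MaxWorkerTaskLen uint32 // maximum number of tasks buffered in each worker's queue
}

// GlobalObject is the single global configuration instance.
var GlobalObject *GlobalObj

// Reload overlays the values from conf/zinx.json onto the receiver.
// Note: it panics if the file is missing or is not valid JSON.
func (g *GlobalObj) Reload() {
	data, err := os.ReadFile("conf/zinx.json")
	if err != nil {
		panic(err)
	}
	// Decode into the receiver rather than the package-level variable.
	if err := json.Unmarshal(data, g); err != nil {
		panic(err)
	}
}

func init() {
	// Default values.
	GlobalObject = &GlobalObj{
		Name:             "ZinxServerApp",
		Version:          "V0.6",
		TcpPort:          8999,
		Host:             "0.0.0.0",
		MaxConn:          1000,
		MaxPackageSize:   4096,
		WorkerPoolSize:   10,
		MaxWorkerTaskLen: 1024,
	}

	// To override the defaults, put custom values in conf/zinx.json.
	GlobalObject.Reload()
}
```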

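We declare a global variable, GlobalObject, that holds the user-configurable parameters, and initialize it with default values in init(). The GlobalObj type also has a Reload method that loads the values from the JSON file the user creates.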
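The "defaults first, JSON on top" idea can be tried in isolation. The following is a minimal sketch, not the framework's code: `Config` and `loadConfig` are hypothetical names, the struct carries only a few of the fields, and the JSON is passed in as bytes instead of being read from conf/zinx.json. It relies on the fact that `json.Unmarshal` leaves fields untouched when the JSON does not mention them.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// Config mirrors a few fields of GlobalObj, for illustration only.
type Config struct {
	Name    string
	Host    string
	TcpPort int
	MaxConn int
}

// loadConfig starts from the defaults and overlays whatever keys the JSON provides.
func loadConfig(data []byte) (Config, error) {
	cfg := Config{Name: "ZinxServerApp", Host: "0.0.0.0", TcpPort: 8999, MaxConn: 1000}
	if err := json.Unmarshal(data, &cfg); err != nil {
		return Config{}, err
	}
	return cfg, nil
}

func main() {
	// Hypothetical file content: only two fields are overridden.
	cfg, err := loadConfig([]byte(`{"Name": "demo", "TcpPort": 7777}`))
	if err != nil {
		panic(err)
	}
	fmt.Printf("%+v\n", cfg) // {Name:demo Host:0.0.0.0 TcpPort:7777 MaxConn:1000}
}
```

Fields missing from the JSON keep their default values, so a configuration file only needs to list what it changes.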

Message

Abstraction layer

```go
// IMessage abstracts a message: an ID, a payload length, and the payload.
type IMessage interface {
	GetMsgId() uint32
	GetMsgLen() uint32
	GetMsg() []byte

	SetMsgId(uint32)
	SetMsgLen(uint32)
	SetMsg([]byte)
}
```

Implementation layer

```go
type Message struct {
	Id      uint32 // message ID
	DataLen uint32 // length of Data in bytes
	Data    []byte // message payload
}

// NewMsgPackage creates a message package.
func NewMsgPackage(id uint32, data []byte) *Message {
	return &Message{
		Id:      id,
		DataLen: uint32(len(data)),
		Data:    data,
	}
}

func (m *Message) GetMsgId() uint32 {
	return m.Id
}

func (m *Message) GetMsgLen() uint32 {
	return m.DataLen
}

func (m *Message) GetMsg() []byte {
	return m.Data
}

func (m *Message) SetMsgId(id uint32) {
	m.Id = id
}

func (m *Message) SetMsgLen(dataLen uint32) {
	m.DataLen = dataLen
}

func (m *Message) SetMsg(data []byte) {
	m.Data = data
}
```

DataPack

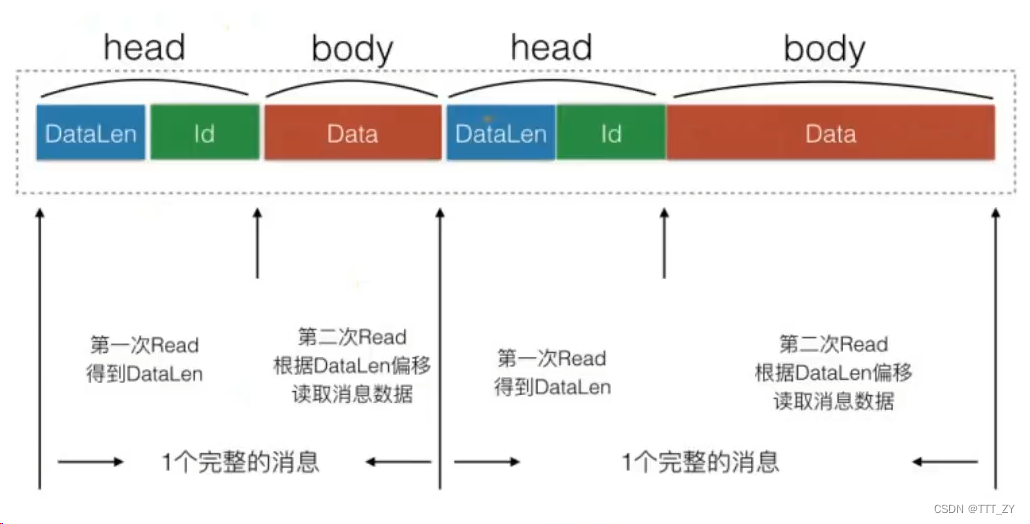

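Data travels over the network as a binary stream, so consecutive messages can run together with no boundary between them. This is the sticky-packet problem. There are two common ways to solve it: TLV (Type, Length, Value) encoding, or a terminator that marks the end of each message.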
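To see how a length field removes the ambiguity, here is a minimal, self-contained sketch. It assumes the same 8-byte header layout used in this section (length, then ID, both little-endian uint32); the names `frame`, `readFrame`, and `splitStream` are made up for the example and are not part of Zinx.

```go
package main

import (
	"bytes"
	"encoding/binary"
	"fmt"
	"io"
)

// frame encodes one message as len(uint32) | id(uint32) | payload, little-endian.
func frame(id uint32, payload []byte) []byte {
	buf := make([]byte, 8+len(payload))
	binary.LittleEndian.PutUint32(buf[0:4], uint32(len(payload)))
	binary.LittleEndian.PutUint32(buf[4:8], id)
	copy(buf[8:], payload)
	return buf
}

// readFrame reads exactly one message: the fixed 8-byte header first, then the body.
func readFrame(r io.Reader) (uint32, []byte, error) {
	head := make([]byte, 8)
	if _, err := io.ReadFull(r, head); err != nil {
		return 0, nil, err
	}
	n := binary.LittleEndian.Uint32(head[0:4])
	id := binary.LittleEndian.Uint32(head[4:8])
	body := make([]byte, n)
	if _, err := io.ReadFull(r, body); err != nil {
		return 0, nil, err
	}
	return id, body, nil
}

// splitStream reads frames until the stream ends and returns the payloads as strings.
func splitStream(stream []byte) ([]string, error) {
	r := bytes.NewReader(stream)
	var out []string
	for {
		_, body, err := readFrame(r)
		if err == io.EOF {
			return out, nil // clean end: no partial header left
		}
		if err != nil {
			return nil, err
		}
		out = append(out, string(body))
	}
}

func main() {
	// Two messages arrive back to back, as they might in a single TCP read.
	stream := append(frame(1, []byte("hello")), frame(2, []byte("zinx"))...)
	msgs, err := splitStream(stream)
	fmt.Println(msgs, err) // [hello zinx] <nil>
}
```

Because the reader always knows how many bytes the next payload occupies, it never needs to guess where one message ends and the next begins.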

Abstraction layer

```go
// IDataPack packs and unpacks messages to solve the sticky-packet problem.
type IDataPack interface {
	GetHeadLen() uint32
	Pack(msg IMessage) ([]byte, error)
	Unpack([]byte) (IMessage, error)
}
```

Implementation layer

```go
// DataPack encodes messages in the layout dataLen|dataId|data.
type DataPack struct{}

func NewDataPack() *DataPack {
	return &DataPack{}
}

// GetHeadLen returns the header length:
// DataLen uint32 + Id uint32 = 4 + 4 = 8 bytes.
func (d *DataPack) GetHeadLen() uint32 {
	return 8
}

// Pack serializes msg as dataLen|dataId|data in little-endian order.
func (d *DataPack) Pack(msg ziface.IMessage) ([]byte, error) {
	dataBuff := bytes.NewBuffer([]byte{})

	// Write the payload length.
	if err := binary.Write(dataBuff, binary.LittleEndian, msg.GetMsgLen()); err != nil {
		return nil, err
	}
	// Write the message ID.
	if err := binary.Write(dataBuff, binary.LittleEndian, msg.GetMsgId()); err != nil {
		return nil, err
	}
	// Write the payload.
	if err := binary.Write(dataBuff, binary.LittleEndian, msg.GetMsg()); err != nil {
		return nil, err
	}

	return dataBuff.Bytes(), nil
}

// Unpack parses only the header (DataLen and Id); msg.Data is not filled in here.
func (d *DataPack) Unpack(binaryData []byte) (ziface.IMessage, error) {
	dataBuff := bytes.NewReader(binaryData)
	msg := &Message{}

	// Read the payload length.
	if err := binary.Read(dataBuff, binary.LittleEndian, &msg.DataLen); err != nil {
		return nil, err
	}
	// Read the message ID.
	if err := binary.Read(dataBuff, binary.LittleEndian, &msg.Id); err != nil {
		return nil, err
	}

	// Reject packets whose declared length exceeds the configured maximum.
	if utils.GlobalObject.MaxPackageSize > 0 && msg.DataLen > utils.GlobalObject.MaxPackageSize {
		return nil, errors.New("too large msg data recv")
	}

	return msg, nil
}
```

MsgHandler

Abstraction layer

```go
// IMsgHandle is the abstraction layer for message management.
type IMsgHandle interface {
	DoMsgHandler(request IRequest)          // run the router registered for the request's message ID
	AddRouter(msgID uint32, router IRouter) // register a router for a message ID
	StartWorkerPool()                       // start the worker pool
	SendMsgToTaskQueue(request IRequest)    // hand a request to a worker's task queue
}
```

Implementation layer

```go
type MsgHandle struct {
	// Router registered for each MsgID.
	Apis map[uint32]ziface.IRouter
	// Message queues the workers take their tasks from, one per worker.
	TaskQueue []chan ziface.IRequest
	// Number of workers in the business worker pool.
	WorkerPoolSize uint32
}

func NewMsgHandle() *MsgHandle {
	return &MsgHandle{
		Apis:           make(map[uint32]ziface.IRouter),
		WorkerPoolSize: utils.GlobalObject.WorkerPoolSize,
		TaskQueue:      make([]chan ziface.IRequest, utils.GlobalObject.WorkerPoolSize),
	}
}

func (m *MsgHandle) DoMsgHandler(request ziface.IRequest) {
	handler, ok := m.Apis[request.GetMsgId()]
	if !ok {
		fmt.Println("api msgID = ", request.GetMsgId(), " is not found!")
		// Return here: calling methods on a missing router would panic.
		return
	}

	handler.PreHandle(request)
	handler.Handle(request)
	handler.PostHandle(request)
}

func (m *MsgHandle) AddRouter(msgID uint32, router ziface.IRouter) {
	// Registering the same message ID twice is a programming error.
	if _, ok := m.Apis[msgID]; ok {
		panic("repeat api , msgid = " + strconv.Itoa(int(msgID)))
	}
	m.Apis[msgID] = router
	fmt.Println("add api router succ ", msgID)
}

// StartWorkerPool starts the worker pool: one buffered queue and one goroutine per worker.
func (m *MsgHandle) StartWorkerPool() {
	for i := 0; i < int(m.WorkerPoolSize); i++ {
		m.TaskQueue[i] = make(chan ziface.IRequest, utils.GlobalObject.MaxWorkerTaskLen)
		go m.StartOneWorker(i, m.TaskQueue[i])
	}
}

// StartOneWorker runs the loop of a single worker.
func (m *MsgHandle) StartOneWorker(workerID int, taskQueue chan ziface.IRequest) {
	fmt.Println("Worker ID = ", workerID, " is started...")
	// Block until a request arrives, then handle it.
	for request := range taskQueue {
		m.DoMsgHandler(request)
	}
}

func (m *MsgHandle) SendMsgToTaskQueue(request ziface.IRequest) {
	// Pick the worker by connection ID.
	workerID := request.GetConnection().GetConnID() % m.WorkerPoolSize
	fmt.Println("add connID = ", request.GetConnection().GetConnID(), " request MsgID = ", request.GetMsgId(),
		" to WorkerID = ", workerID)
	m.TaskQueue[workerID] <- request
}
```

The MsgHandler module manages routing in one place: multiple routers can be registered in its map, keyed by message ID.
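The map-based routing can be illustrated without the rest of the framework. In this sketch, `Handler`, `Registry`, `Add`, and `Dispatch` are hypothetical stand-ins: a plain function replaces the router interface, and errors are returned instead of printed or raised as panics.

```go
package main

import "fmt"

// Handler stands in for a router; a plain function keeps the sketch short.
type Handler func(data string) string

// Registry maps a message ID to its handler, in the spirit of MsgHandle.Apis.
type Registry struct {
	apis map[uint32]Handler
}

func NewRegistry() *Registry {
	return &Registry{apis: make(map[uint32]Handler)}
}

// Add registers a handler and rejects duplicate IDs.
func (r *Registry) Add(id uint32, h Handler) error {
	if _, ok := r.apis[id]; ok {
		return fmt.Errorf("duplicate msgID %d", id)
	}
	r.apis[id] = h
	return nil
}

// Dispatch looks up the handler for id; an unknown ID is an error, not a crash.
func (r *Registry) Dispatch(id uint32, data string) (string, error) {
	h, ok := r.apis[id]
	if !ok {
		return "", fmt.Errorf("msgID %d not found", id)
	}
	return h(data), nil
}

func main() {
	r := NewRegistry()
	_ = r.Add(0, func(d string) string { return "ping: " + d })
	_ = r.Add(1, func(d string) string { return "hello: " + d })

	fmt.Println(r.Dispatch(1, "zinx")) // hello: zinx <nil>
	fmt.Println(r.Dispatch(9, "zinx")) //  msgID 9 not found
}
```

Adding a new message type then means registering one more entry; the dispatch code does not change.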

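Separating the read and write goroutines

Client sends data → the server's Reader unpacks and reads the data → DoMsgHandler processes the data → DoMsgHandler passes the reply to the Writer through a channel → the Writer sends the data back to the client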
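The flow above can be sketched with two goroutines joined by a channel. This is an illustration under simplifying assumptions, not the framework's connection code: messages are newline-terminated lines rather than packed frames, upper-casing stands in for the business handler, `net.Pipe` provides an in-memory connection, and `serve` and `roundTrip` are hypothetical names.

```go
package main

import (
	"bufio"
	"fmt"
	"net"
	"strings"
)

// serve splits one connection into a reader and a writer goroutine joined by msgChan.
func serve(conn net.Conn) {
	msgChan := make(chan string)

	// Writer: the only goroutine that writes to the connection.
	go func() {
		defer conn.Close()
		for msg := range msgChan {
			if _, err := conn.Write([]byte(msg + "\n")); err != nil {
				conn.Close() // unblock the reader; keep draining until it closes msgChan
			}
		}
	}()

	// Reader: reads requests, runs the "handler", and hands replies to the writer.
	go func() {
		defer close(msgChan)
		scanner := bufio.NewScanner(conn)
		for scanner.Scan() {
			msgChan <- strings.ToUpper(scanner.Text()) // stand-in for the business handler
		}
	}()
}

// roundTrip sends one line through an in-memory connection and returns the reply.
func roundTrip(line string) (string, error) {
	client, server := net.Pipe()
	defer client.Close()
	serve(server)

	if _, err := client.Write([]byte(line + "\n")); err != nil {
		return "", err
	}
	reply, err := bufio.NewReader(client).ReadString('\n')
	return strings.TrimSpace(reply), err
}

func main() {
	fmt.Println(roundTrip("ping")) // PING <nil>
}
```

Keeping all writes in one goroutine means handlers never touch the socket directly, so replies from different handlers cannot interleave on the wire.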

Message queue and worker pool

```go
// StartWorkerPool starts the worker pool: one buffered queue and one goroutine per worker.
func (m *MsgHandle) StartWorkerPool() {
	for i := 0; i < int(m.WorkerPoolSize); i++ {
		m.TaskQueue[i] = make(chan ziface.IRequest, utils.GlobalObject.MaxWorkerTaskLen)
		go m.StartOneWorker(i, m.TaskQueue[i])
	}
}

// StartOneWorker runs the loop of a single worker.
func (m *MsgHandle) StartOneWorker(workerID int, taskQueue chan ziface.IRequest) {
	fmt.Println("Worker ID = ", workerID, " is started...")
	// Block until a request arrives, then handle it.
	for request := range taskQueue {
		m.DoMsgHandler(request)
	}
}

func (m *MsgHandle) SendMsgToTaskQueue(request ziface.IRequest) {
	// Spread requests across the workers by connection ID;
	// every request from one connection goes to the same worker.
	workerID := request.GetConnection().GetConnID() % m.WorkerPoolSize
	fmt.Println("add connID = ", request.GetConnection().GetConnID(), " request MsgID = ", request.GetMsgId(),
		" to WorkerID = ", workerID)
	m.TaskQueue[workerID] <- request
}
```
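One consequence of choosing the queue with `connID % poolSize` is that all requests from one connection land on the same worker and are therefore handled in arrival order. The sketch below demonstrates this with simplified, hypothetical types (`task`, `runPool`); it records what each worker received instead of calling a real handler.

```go
package main

import (
	"fmt"
	"sync"
)

// task is a simplified request: which connection sent it and its sequence number.
type task struct {
	connID uint32
	seq    int
}

// runPool routes each task to queue connID % poolSize and returns
// what each worker received, in the order it received it.
func runPool(poolSize uint32, tasks []task) [][]task {
	queues := make([]chan task, poolSize)
	seen := make([][]task, poolSize)

	var wg sync.WaitGroup
	for i := range queues {
		queues[i] = make(chan task, 8)
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			for t := range queues[id] {
				seen[id] = append(seen[id], t) // each worker writes only its own slot
			}
		}(i)
	}

	for _, t := range tasks {
		queues[t.connID%poolSize] <- t
	}
	for _, q := range queues {
		close(q) // no more tasks: let the workers exit
	}
	wg.Wait()
	return seen
}

func main() {
	tasks := []task{{1, 0}, {2, 0}, {1, 1}, {3, 0}, {1, 2}}
	for id, ts := range runPool(2, tasks) {
		fmt.Println("worker", id, ts)
	}
	// worker 0 [{2 0}]
	// worker 1 [{1 0} {1 1} {3 0} {1 2}]
}
```

The trade-off is that a busy connection cannot use more than one worker, so load is balanced across connections rather than across individual requests.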

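Zinx also limits the number of connections: once the number of clients exceeds a set threshold, Zinx rejects new connection requests so that the backend can keep responding promptly.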
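A minimal sketch of such a limit follows. It is an assumption about how the check could look, not the server's actual accept loop: `limiter`, `tryAdd`, and `remove` are hypothetical names, connections are reduced to their IDs, and the threshold plays the role of the MaxConn setting.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// limiter is a hypothetical stand-in for a connection manager that only tracks IDs.
type limiter struct {
	mu      sync.Mutex
	conns   map[uint32]struct{}
	maxConn int
}

func newLimiter(maxConn int) *limiter {
	return &limiter{conns: make(map[uint32]struct{}), maxConn: maxConn}
}

// tryAdd admits the connection only while the count is below maxConn.
// Checking and inserting under one lock keeps two goroutines from both taking the last slot.
func (l *limiter) tryAdd(connID uint32) error {
	l.mu.Lock()
	defer l.mu.Unlock()
	if len(l.conns) >= l.maxConn {
		return errors.New("too many connections")
	}
	l.conns[connID] = struct{}{}
	return nil
}

// remove frees a slot when a connection closes.
func (l *limiter) remove(connID uint32) {
	l.mu.Lock()
	defer l.mu.Unlock()
	delete(l.conns, connID)
}

func main() {
	l := newLimiter(2) // the threshold, e.g. taken from the MaxConn setting
	for id := uint32(0); id < 3; id++ {
		fmt.Println(id, l.tryAdd(id))
	}
	l.remove(0)
	fmt.Println(3, l.tryAdd(3))
	// 0 <nil>
	// 1 <nil>
	// 2 too many connections
	// 3 <nil>
}
```

In a server, the accept loop would close the new socket when the check fails and continue accepting, so existing clients are unaffected.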

**Abstraction layer**

```go
// IConnManager is the abstraction layer for connection management.
type IConnManager interface {
	Add(conn IConnection)                   // add a connection
	Remove(conn IConnection)                // remove a connection
	Get(connID uint32) (IConnection, error) // look up a connection by ID
	Len() int                               // current number of connections
	ClearConn()                             // stop and remove all connections
}
```

**Implementation layer**

```go
type ConnManager struct {
	connections map[uint32]ziface.IConnection // managed connections, keyed by connection ID
	connLock    sync.RWMutex                  // guards connections
}

func NewConnManager() *ConnManager {
	return &ConnManager{
		connections: make(map[uint32]ziface.IConnection),
	}
}

func (cm *ConnManager) Add(conn ziface.IConnection) {
	cm.connLock.Lock()
	defer cm.connLock.Unlock()

	cm.connections[conn.GetConnID()] = conn
	// Use len directly: Len() would try to take the lock already held here.
	fmt.Println("connID = ", conn.GetConnID(), " add to ConnManager success. conn num = ", len(cm.connections))
}

func (cm *ConnManager) Remove(conn ziface.IConnection) {
	cm.connLock.Lock()
	defer cm.connLock.Unlock()

	delete(cm.connections, conn.GetConnID())
	fmt.Println("connID = ", conn.GetConnID(), " removed from ConnManager. conn num = ", len(cm.connections))
}

func (cm *ConnManager) Get(connID uint32) (ziface.IConnection, error) {
	cm.connLock.RLock()
	defer cm.connLock.RUnlock()

	if conn, ok := cm.connections[connID]; ok {
		return conn, nil
	}
	return nil, errors.New("conn not found")
}

func (cm *ConnManager) Len() int {
	// Take the read lock so the count is safe to read concurrently with Add and Remove.
	cm.connLock.RLock()
	defer cm.connLock.RUnlock()

	return len(cm.connections)
}

func (cm *ConnManager) ClearConn() {
	cm.connLock.Lock()
	defer cm.connLock.Unlock()

	// Stop and delete every connection.
	for connID, conn := range cm.connections {
		conn.Stop()
		delete(cm.connections, connID)
	}
	fmt.Println("Clear All conn success! conn num = ", len(cm.connections))
}
```

Content from @刘丹冰Aceld
