Problem

Spark (deployed on YARN): the web UI shows 0 Workers.

Details

Check the log files under <Spark installation directory>/spark/logs/:

22/11/01 18:26:08 WARN Worker: Failed to connect to master master:7077

org.apache.spark.SparkException: Exception thrown in awaitResult:

at org.apache.spark.util.ThreadUtils$.awaitResult(ThreadUtils.scala:226)

at org.apache.spark.rpc.RpcTimeout.awaitResult(RpcTimeout.scala:75)

at org.apache.spark.rpc.RpcEnv.setupEndpointRefByURI(RpcEnv.scala:101)

at org.apache.spark.rpc.RpcEnv.setupEndpointRef(RpcEnv.scala:109)

at org.apache.spark.deploy.worker.Worker$$anonfun$org$apache$spark$deploy$worker$Worker$$tryRegisterAllMasters$1$$anon$1.run(Worker.scala:253)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:750)

Caused by: java.io.IOException: Failed to connect to master/192.168.174.129:7077

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:245)

at org.apache.spark.network.client.TransportClientFactory.createClient(TransportClientFactory.java:187)

at org.apache.spark.rpc.netty.NettyRpcEnv.createClient(NettyRpcEnv.scala:198)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:194)

at org.apache.spark.rpc.netty.Outbox$$anon$1.call(Outbox.scala:190)

... 4 more

Caused by: io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: master/192.168.174.129:7077

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:715)

at io.netty.channel.socket.nio.NioSocketChannel.doFinishConnect(NioSocketChannel.java:323)

at io.netty.channel.nio.AbstractNioChannel$AbstractNioUnsafe.finishConnect(AbstractNioChannel.java:340)

at io.netty.channel.nio.NioEventLoop.processSelectedKey(NioEventLoop.java:633)

at io.netty.channel.nio.NioEventLoop.processSelectedKeysOptimized(NioEventLoop.java:580)

at io.netty.channel.nio.NioEventLoop.processSelectedKeys(NioEventLoop.java:497)

at io.netty.channel.nio.NioEventLoop.run(NioEventLoop.java:459)

at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:858)

at io.netty.util.concurrent.DefaultThreadFactory$DefaultRunnableDecorator.run(DefaultThreadFactory.java:138)

... 1 more

Caused by: java.net.ConnectException: Connection refused

... 11 more
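The innermost cause in the trace, "Connection refused", means the Worker resolved master to 192.168.174.129 but nothing accepted the connection on port 7077. As a rough sketch, the helper below pulls only those lines out of a log directory. The function name summarize_worker_errors and the use of SPARK_HOME in the usage comment are assumptions for illustration, not part of Spark.

```shell
#!/usr/bin/env bash
# Print the lines that matter from Spark daemon logs: the failed master
# registration and the underlying "Connection refused" cause.
# summarize_worker_errors is a hypothetical helper, not a Spark command.
summarize_worker_errors() {
  local log_dir="$1"
  # -h omits file names, -E enables extended regex; sort -u drops exact duplicates.
  grep -hE 'Failed to connect to master|Connection refused' "$log_dir"/* 2>/dev/null | sort -u
}

# Usage (assumes SPARK_HOME points at the Spark installation directory):
# summarize_worker_errors "$SPARK_HOME/logs"
```

If the output shows repeated "Failed to connect to master" lines, the Worker is retrying against an address where the Master is not listening, which is what the solutions below address.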

Solution 1

Applies to Spark 2.0 and later

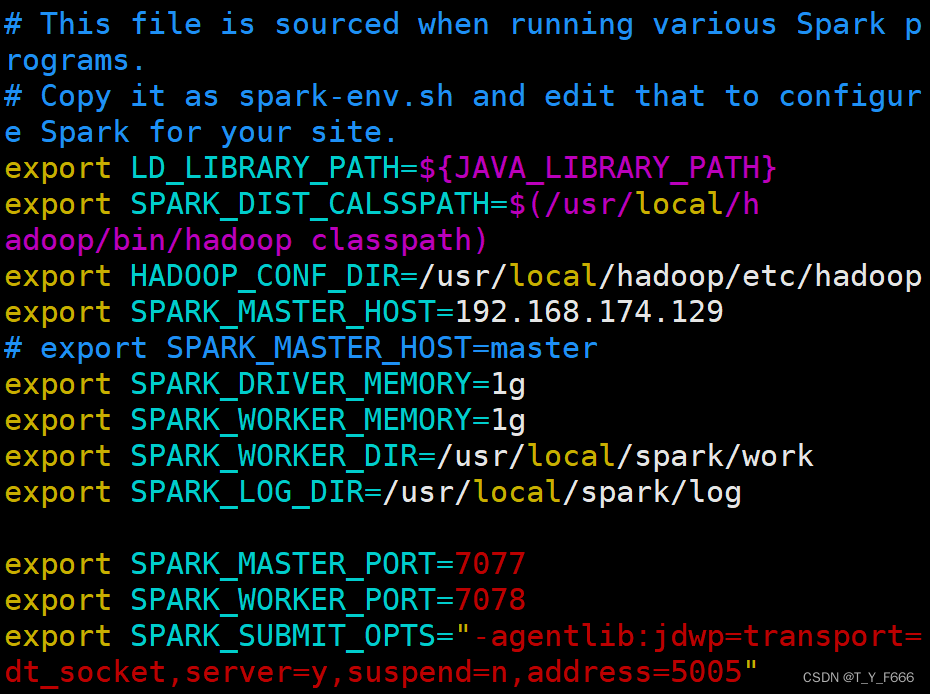

1 Edit spark-env.sh under <Spark installation directory>/spark/conf/

That is:

vim /<Spark installation directory>/spark/conf/spark-env.sh

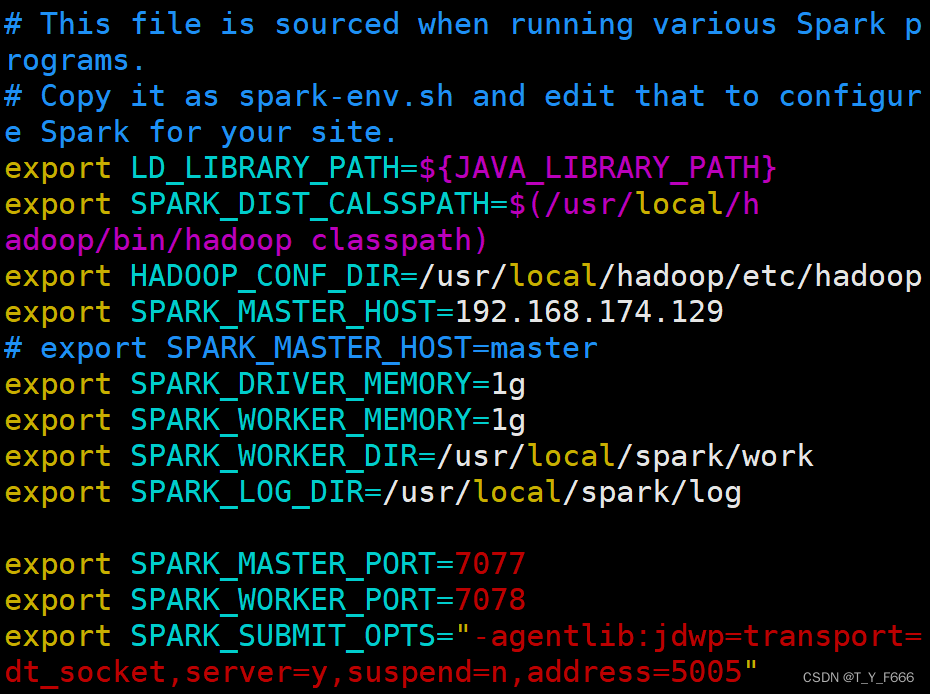

2 In spark-env.sh, change SPARK_MASTER_IP=<IP address> to

SPARK_MASTER_HOST=<IP address>

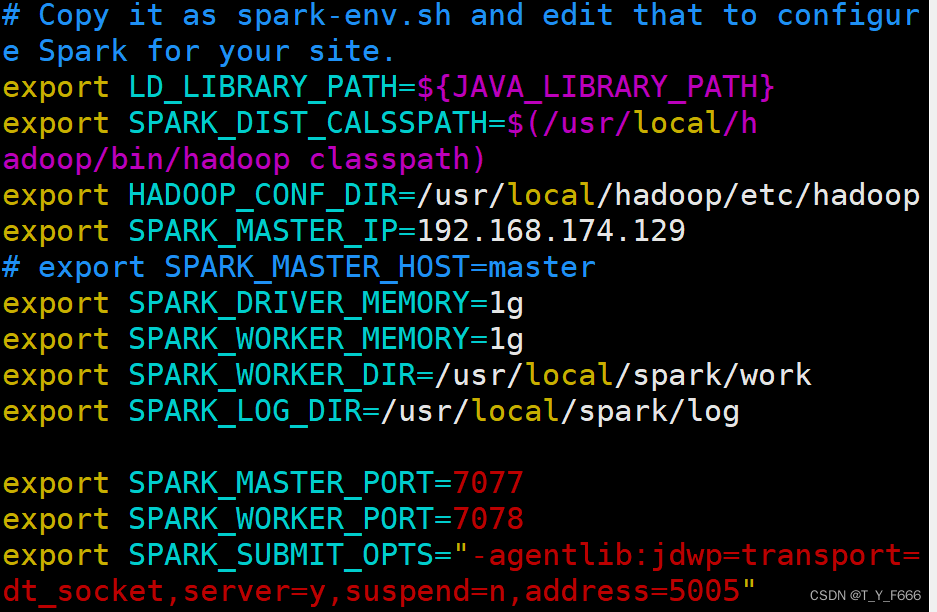

That is, change the SPARK_MASTER_IP line to a SPARK_MASTER_HOST line:

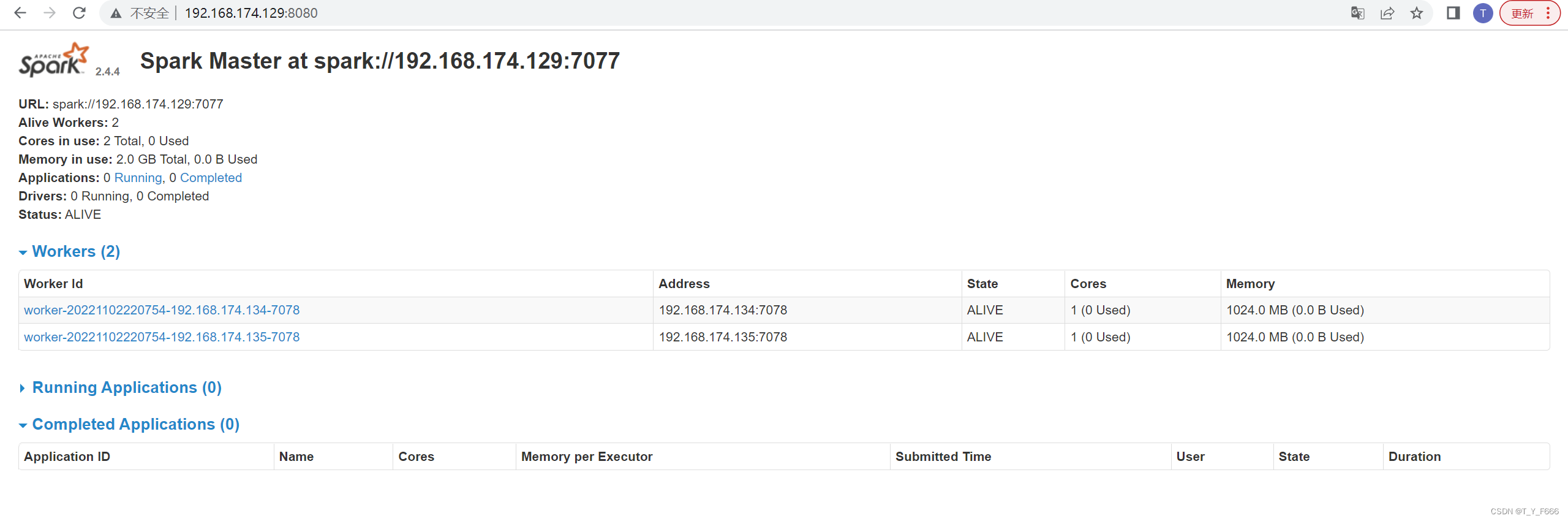

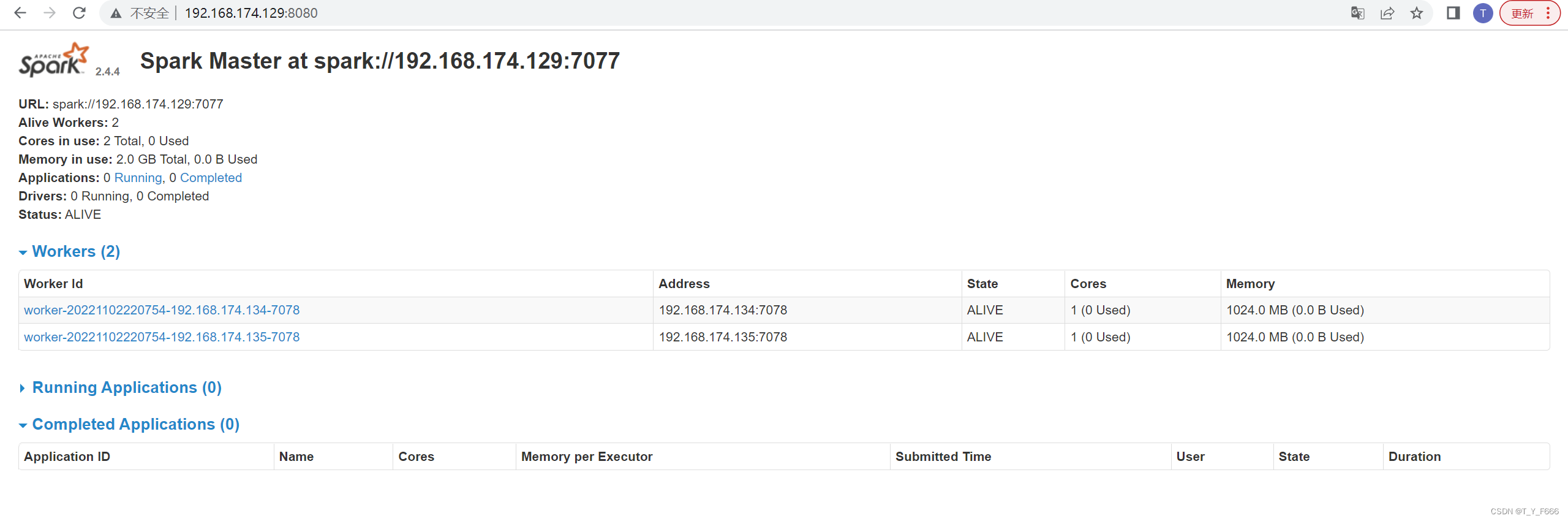

3 Restart Spark and check the UI again
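Steps 1 and 2 can also be done without opening an editor. This is a minimal sketch, assuming a sed that accepts -E and -i.bak (both GNU and BSD sed do). rename_master_var is a hypothetical helper name, and the restart commands in the comments assume a cluster managed with the sbin scripts shipped with Spark.

```shell
#!/usr/bin/env bash
# Rename one variable in a spark-env.sh-style file, keeping its value.
# rename_master_var is a hypothetical helper, not part of Spark.
rename_master_var() {
  local file="$1" old="$2" new="$3"
  # Match the name at the start of a line (optionally after "export ") and
  # only when followed by "=", so other variables and comments are left alone.
  # -i.bak edits in place and keeps a backup copy next to the file.
  sed -E -i.bak "s/^([[:space:]]*(export[[:space:]]+)?)${old}=/\1${new}=/" "$file"
}

# Usage for Spark 2.0 and later (the path is an assumption; adjust to your install):
# rename_master_var "$SPARK_HOME/conf/spark-env.sh" SPARK_MASTER_IP SPARK_MASTER_HOST
# "$SPARK_HOME/sbin/stop-all.sh" && "$SPARK_HOME/sbin/start-all.sh"
```

Swapping the last two arguments gives the reverse rename for Spark 1.6 and earlier.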

Solution 2

Applies to Spark 1.6 and earlier

1 Edit spark-env.sh under <Spark installation directory>/spark/conf/

That is:

vim /<Spark installation directory>/spark/conf/spark-env.sh

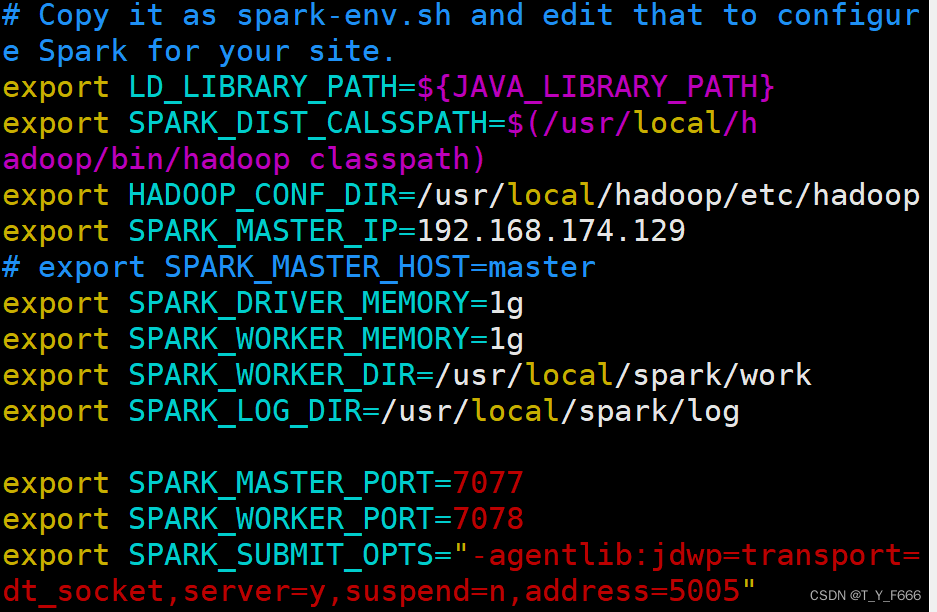

2 In spark-env.sh, change SPARK_MASTER_HOST=<IP address> to

SPARK_MASTER_IP=<IP address>

3 Restart Spark and check the UI again

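Cause

For Spark 1.6 and earlier, the official documentation uses

SPARK_MASTER_IP=<your host>

For Spark 2.0 and later, the official documentation uses

SPARK_MASTER_HOST=<your host>

SPARK_LOCAL_IP=<your host IP>

In practice, Spark still appears to run normally even if the SPARK_LOCAL_IP=<your host IP> line is omitted.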
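The version rule above is simple enough to encode. A sketch, assuming the version string has the usual major.minor.patch form; master_var_for_version is a hypothetical name used only for illustration.

```shell
#!/usr/bin/env bash
# Print the spark-env.sh variable name for the master address that matches
# a given Spark version, following the rule described above.
# master_var_for_version is a hypothetical helper, not part of Spark.
master_var_for_version() {
  local version="$1"
  local major="${version%%.*}"   # text before the first dot, e.g. "2" from "2.4.8"
  if [ "$major" -ge 2 ]; then
    echo "SPARK_MASTER_HOST"
  else
    echo "SPARK_MASTER_IP"
  fi
}

# Usage:
# master_var_for_version 1.6.3
# master_var_for_version 2.4.8
```

Only the major version is inspected, since the naming change falls on the 1.x / 2.x boundary.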

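Summary

Mixing up the variable names for Spark 1.6 and earlier with those for Spark 2.0 and later usually causes no problem (of the people the author knows, fewer than roughly 10% ran into a similar issue). The names given in the official documentation are nonetheless the canonical ones, so using them is recommended.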

Original content takes effort to write.

Please credit the source when reposting.

If this helped you, a like would be appreciated.
