how-to-install-ganglia-from-prebuild-rpm-on-centos6.6-x86_64

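Motivation: I ran some tests with Ganglia monitoring a while ago and found it to be a very good monitoring tool that is simple to install and use. These are my notes, tidied up and shared for anyone interested.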

1 prepare test vms(using docker)

VM plan

| hostname | role |
|---|---|
| ssh1 | DNS server |
| spark1/2/3 | nodes of the Hadoop cluster, gmond |
| monitor1 | gmetad, httpd, PHP |

1) create dns server for docker environment(optional)

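When a test environment is deployed with Docker, networking is the main pain point: by default each container gets a dynamic IP at startup (assigned sequentially and not reused until Docker is restarted), and /etc/hosts is re-initialized every time the container starts. Assigning fixed IPs is fairly involved (I did not get it working; if you have, please share how). To keep the network setup simple, I use the following approach:

Deploy a DNS server (dnsmasq). Each time the Docker environment is brought up, start the DNS server first so that its IP stays the same, and do not restart it while the environment is in use.

Configure /etc/resolv.conf in the other containers to point to this DNS server.

Containers can then reach each other directly by host name.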

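(1) Install Docker

Not written up yet; it is straightforward, see the official documentation.

After installation, pull the CentOS 6 image:

docker pull centos:6.6

NOTE:

For the valid tags of other CentOS image versions, see the image description published by CentOS.

(2) Deploy the DNS server for the Docker environment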

docker run -i -t -d -P -h ssh1 --name ssh1 --volume=/Users/Users_datadir_docker:/docker_vol01 centos:6.6 /bin/bash

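dockerIn.sh ssh1

cd /docker_vol01/script_docker/datadir_yum

sh update-yum-repo.sh  # script that updates the yum repos; optional. To speed up package installs, a local or LAN yum repository built from the OS ISO image is recommended

dockerIn.sh ssh1  # dockerIn.sh is a helper script for attaching to a Docker container; more convenient than ssh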

yum install -y which.x86_64

yum install -y dnsmasq.x86_64

cd /etc/

cp dnsmasq.conf dnsmasq.conf.org

vi dnsmasq.conf

resolv-file=/etc/dnsmasq.resolv.conf

strict-order

user=root

listen-address=127.0.0.1,0.0.0.0

addn-hosts=/etc/dnsmasq.hosts

cp /etc/resolv.conf /etc/resolv.conf.org

vi /etc/resolv.conf

#search lan

#nameserver 192.168.199.1

nameserver 127.0.0.1

vi /etc/dnsmasq.resolv.conf

#nameserver 127.0.0.1 (should not be added here)

nameserver 202.96.209.5

nameserver 202.96.209.133

nameserver 223.5.5.5

nameserver 223.6.6.6

nameserver 114.114.114.114

nameserver 8.8.4.4

#nameserver 8.8.8.8

vi /etc/dnsmasq.hosts

172.17.0.2 ssh1

172.17.0.3 spark1

172.17.0.4 spark2

172.17.0.5 spark3

Start dnsmasq:

/etc/init.d/dnsmasq restart

(3) Configure the Docker containers to resolve names through the DNS server

dockerIn.sh spark1/2/3

vi /etc/resolv.conf

#search lan

#nameserver 192.168.199.1

nameserver 172.17.0.2

2) Create the VMs to be monitored during the test (gmond has to be deployed on every monitored node)

docker run -i -t -d -P -h spark1 --name spark1 --volume=/Users/Users_datadir_docker:/docker_vol01 centos:6.6 /bin/bash

docker run -i -t -d -P -h spark2 --name spark2 --volume=/Users/Users_datadir_docker:/docker_vol01 centos:6.6 /bin/bash

docker run -i -t -d -P -h spark3 --name spark3 --volume=/Users/Users_datadir_docker:/docker_vol01 centos:6.6 /bin/bash

3) Create the VM that hosts the Ganglia server side

docker run -i -t -d -P -h monitor1 --name monitor1 --volume=/Users/Users_datadir_docker:/docker_vol01 centos:6.6 /bin/bash
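The docker run commands in this section differ only in the host name, so they could be generated in a loop. The following is a minimal sketch, not part of the original setup: the function name docker_run_cmd and the DATA_DIR variable are made up for illustration, and the script only prints the commands so they can be reviewed before being executed.

```shell
#!/bin/sh
# Hypothetical helper: print (not run) the docker run command for one container.
# DATA_DIR is an assumed variable; it defaults to the host directory used in this post.
DATA_DIR="${DATA_DIR:-/Users/Users_datadir_docker}"

docker_run_cmd() {
    name="$1"
    printf 'docker run -i -t -d -P -h %s --name %s --volume=%s:/docker_vol01 centos:6.6 /bin/bash\n' \
        "$name" "$name" "$DATA_DIR"
}

# DNS server first, then the monitored nodes, then the monitor.
for name in ssh1 spark1 spark2 spark3 monitor1; do
    docker_run_cmd "$name"
done
```

Printing instead of executing keeps the sketch harmless; once the output looks right it can be piped to sh.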

dockerIn.sh monitor1

cd /docker_vol01/script_docker/datadir_yum

sh update-yum-repo.sh

2 build rpm from source

1) prepare

(1)download ganglia source tarball

(2) install rpm-build

build tool: rpmbuild

yum search rpm-build

yum install -y rpm-build.x86_64

(3) get build dependencies

rpmbuild -tb ganglia-3.6.0.tar.gz

[hadoop@host1 ganglia]$ rpmbuild -tb ganglia-3.6.0.tar.gz

error: Failed build dependencies:

libpng-devel is needed by ganglia-3.6.0-1.x86_64

libart_lgpl-devel is needed by ganglia-3.6.0-1.x86_64

gcc-c++ is needed by ganglia-3.6.0-1.x86_64

python-devel is needed by ganglia-3.6.0-1.x86_64

libconfuse-devel is needed by ganglia-3.6.0-1.x86_64

pcre-devel is needed by ganglia-3.6.0-1.x86_64

autoconf is needed by ganglia-3.6.0-1.x86_64

automake is needed by ganglia-3.6.0-1.x86_64

libtool is needed by ganglia-3.6.0-1.x86_64

expat-devel is needed by ganglia-3.6.0-1.x86_64

rrdtool-devel is needed by ganglia-3.6.0-1.x86_64

freetype-devel is needed by ganglia-3.6.0-1.x86_64

apr-devel > 1 is needed by ganglia-3.6.0-1.x86_64

[hadoop@host1 ganglia]$
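The package names can be pulled out of this error output instead of being copied by hand. A minimal sketch, assuming each missing dependency is reported on its own line as "<package> is needed by <target>"; missing_build_deps is a name invented here, not an rpmbuild feature.

```shell
#!/bin/sh
# Hypothetical helper: read rpmbuild output on stdin and print the name of
# each missing build dependency (the first word of every "is needed by" line).
missing_build_deps() {
    awk '/ is needed by / { print $1 }'
}

# Demo on three hand-typed lines in the rpmbuild error format.
printf '%s\n' \
    'error: Failed build dependencies:' \
    '  libpng-devel is needed by ganglia-3.6.0-1.x86_64' \
    '  apr-devel > 1 is needed by ganglia-3.6.0-1.x86_64' |
    missing_build_deps
```

On the build host the function would be fed from rpmbuild -tb ganglia-3.6.0.tar.gz 2>&1, and the resulting list is what gets passed to yum in step (4).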

(4) install dependencies

yum list libpng-devel libart_lgpl-devel gcc-c++ python-devel libconfuse-devel pcre-devel autoconf automake libtool expat-devel rrdtool-devel freetype-devel apr-devel

yum install -y libpng-devel libart_lgpl-devel gcc-c++ python-devel libconfuse-devel pcre-devel autoconf automake libtool expat-devel rrdtool-devel freetype-devel apr-devel

Note: libconfuse-devel is not on the CentOS 6.6 ISO and has to be downloaded and installed separately.

scp libconfuse-2.7-4.el6.x86_64.rpm hadoop@192.168.99.102:~/soft/ganglia/

scp libconfuse-devel-2.7-4.el6.x86_64.rpm hadoop@192.168.99.102:~/soft/ganglia/

(5) build ganglia rpm packages from source tarball

rpmbuild -tb ganglia-3.6.0.tar.gz

Wrote: /home/hadoop/rpmbuild/RPMS/x86_64/ganglia-gmetad-3.6.0-1.x86_64.rpm

Wrote: /home/hadoop/rpmbuild/RPMS/x86_64/ganglia-gmond-3.6.0-1.x86_64.rpm

Wrote: /home/hadoop/rpmbuild/RPMS/x86_64/ganglia-gmond-modules-python-3.6.0-1.x86_64.rpm

Wrote: /home/hadoop/rpmbuild/RPMS/x86_64/ganglia-devel-3.6.0-1.x86_64.rpm

Wrote: /home/hadoop/rpmbuild/RPMS/x86_64/libganglia-3.6.0-1.x86_64.rpm

Executing(%clean): /bin/sh -e /var/tmp/rpm-tmp.3ZcM0U

+ umask 022

+ cd /home/hadoop/rpmbuild/BUILD

+ cd ganglia-3.6.0

+ /bin/rm -rf /home/hadoop/rpmbuild/BUILDROOT/ganglia-3.6.0-1.x86_64

+ exit 0

[hadoop@host1 ganglia]$ ls -l /home/hadoop/rpmbuild/RPMS/x86_64/

total 688

-rw-rw-r-- 1 hadoop hadoop 55033 Mar 22 23:42 ganglia-devel-3.6.0-1.x86_64.rpm

-rw-rw-r-- 1 hadoop hadoop 48222 Mar 22 23:42 ganglia-gmetad-3.6.0-1.x86_64.rpm

-rw-rw-r-- 1 hadoop hadoop 347647 Mar 22 23:42 ganglia-gmond-3.6.0-1.x86_64.rpm

-rw-rw-r-- 1 hadoop hadoop 145676 Mar 22 23:42 ganglia-gmond-modules-python-3.6.0-1.x86_64.rpm

-rw-rw-r-- 1 hadoop hadoop 101579 Mar 22 23:42 libganglia-3.6.0-1.x86_64.rpm

[hadoop@host1 ganglia]$
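To confirm that the build produced all five packages, the output directory can be checked with a short script. This is only a sketch: missing_rpms is a hypothetical helper, and the version string 3.6.0-1.x86_64 is taken from the file names reported by rpmbuild.

```shell
#!/bin/sh
# Hypothetical helper: print the base name of every expected Ganglia RPM
# that is NOT present in the given directory (no output means all were built).
missing_rpms() {
    dir="$1"
    for pkg in ganglia-devel ganglia-gmetad ganglia-gmond \
               ganglia-gmond-modules-python libganglia; do
        [ -f "$dir/$pkg-3.6.0-1.x86_64.rpm" ] || echo "$pkg"
    done
}

# Output directory reported by rpmbuild in this post.
missing_rpms /home/hadoop/rpmbuild/RPMS/x86_64
```

Empty output means every package is in place; any name printed points at a package that has to be rebuilt.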

(6) optional, configure ganglia yum repository

Option 1: build a local yum repository

a)

yum search createrepo

yum install -y createrepo.noarch

b)

cd /home/hadoop/rpmbuild/RPMS/x86_64/

cp ~/soft/ganglia/libconfuse-2.7-4.el6.x86_64.rpm .

cp ~/soft/ganglia/libconfuse-devel-2.7-4.el6.x86_64.rpm .

createrepo .

c) configure and update ganglia yum repository

vi ganglia3.6-centos6.6-x86_64.repo

[ganglia3.6-centos6.6-x64]

name=ganglia3.6_repo CentOS-$releasever - Media

#baseurl=http://192.168.101.1:60080/ganglia3.5-centos6.6-x64/

baseurl=file:///home/hadoop/rpmbuild/RPMS/x86_64/

gpgcheck=0

cp ganglia3.6-centos6.6-x86_64.repo /etc/yum.repos.d/

yum clean all

yum makecache

d) test

yum search ganglia

3 install and configure ganglia

1) install gmond on spark1/2/3

dockerIn.sh spark1/2/3

yum search ganglia

yum install -y ganglia-gmond.x86_64

cd /etc/ganglia/

cp gmond.conf gmond.conf.org

vi gmond.conf

cluster {

name = "MyCluster1"

owner = "unspecified"

latlong = "unspecified"

url = "unspecified"

}

(1) configure gmond in multicast mode (the default configuration)

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

mcast_join = 239.2.11.71

port = 9649

ttl = 1

}

/* You can specify as many udp_recv_channels as you like as well. */

udp_recv_channel {

mcast_join = 239.2.11.71

port = 9649

bind = 239.2.11.71

retry_bind = true

}

/* You can specify as many tcp_accept_channels as you like to share

an xml description of the state of the cluster */

tcp_accept_channel {

port = 9649

}

Distribute to spark2/3:

scp gmond.conf spark2:/etc/ganglia/

scp gmond.conf spark3:/etc/ganglia/

(2) configure gmond in unicast mode

cd /etc/ganglia

cp gmond.conf gmond.conf.multicast.good

on spark1

vi gmond.conf

a) in the globals section

send_metadata_interval = 30 /*secs */

b)

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark1

port = 9649

ttl = 1

}

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark2

port = 9649

ttl = 1

}

c)

udp_recv_channel {

mcast_join = 239.2.11.71

port = 9649

bind = 239.2.11.71

retry_bind = true

}

udp_recv_channel {

#mcast_join = 239.2.11.71

host = spark1

port = 9649

#bind = 239.2.11.71

#retry_bind = true

}

d)

tcp_accept_channel {

port = 9649

}
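The udp_send_channel blocks in the unicast setup are the same on every node: one block per collector host. As a rough sketch (udp_send_channels and the PORT variable are names made up here; gmond itself ships no such generator), they could be printed from a list of collectors and pasted into gmond.conf:

```shell
#!/bin/sh
# Hypothetical helper: print one unicast udp_send_channel block per collector.
# PORT is an assumed variable; it defaults to 9649, the port used in this post.
udp_send_channels() {
    port="${PORT:-9649}"
    for collector in "$@"; do
        printf 'udp_send_channel {\n  host = %s\n  port = %s\n  ttl = 1\n}\n' \
            "$collector" "$port"
    done
}

# The two collectors used in this post.
udp_send_channels spark1 spark2
```

Generating the blocks from one list keeps the nodes consistent when a collector is added or renamed.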

on spark2

vi gmond.conf

a) in the globals section

send_metadata_interval = 30 /*secs */

b)

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark1

port = 9649

ttl = 1

}

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark2

port = 9649

ttl = 1

}

c)

udp_recv_channel {

mcast_join = 239.2.11.71

port = 9649

bind = 239.2.11.71

retry_bind = true

}

udp_recv_channel {

#mcast_join = 239.2.11.71

host = spark2

port = 9649

#bind = 239.2.11.71

#retry_bind = true

}

d)

tcp_accept_channel {

port = 9649

}

on spark3

vi gmond.conf

a) in the globals section

send_metadata_interval = 30 /*secs */

b)

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark1

port = 9649

ttl = 1

}

udp_send_channel {

#bind_hostname = yes # Highly recommended, soon to be default.

# This option tells gmond to use a source address

# that resolves to the machine's hostname. Without

# this, the metrics may appear to come from any

# interface and the DNS names associated with

# those IPs will be used to create the RRDs.

#mcast_join = 239.2.11.71

host = spark2

port = 9649

ttl = 1

}

c)

#udp_recv_channel {

# mcast_join = 239.2.11.71

# port = 9649

# bind = 239.2.11.71

# retry_bind = true

#}

d)

#tcp_accept_channel {

# port = 9649

#}

2) install gmetad on monitor1

(1)

yum install -y ganglia-gmetad.x86_64

(2) configure

cd /etc/ganglia/

cp gmetad.conf gmetad.conf.org

vi gmetad.conf

data_source "MyCluster1" spark1:9649 spark2:9649

3) install gweb + httpd + php on monitor1

(1) install and configure gweb

a)

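It is recommended to install ganglia-web-3.6.2 in a location that non-root users can access, and to avoid an ntfs remote disk (httpd may lack permission to read it, in which case the web page shows nothing).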

mkdir -p /data/

cd /data/

tar zxf ganglia-web-3.6.2.tar.gz

ln -s ganglia-web-3.6.2/ ganglia

chown -R nobody:apache ganglia*

cd ganglia

mkdir dwoo

mkdir cache/ compiled/

chmod -R 777 cache/ compiled/

# other directories

mkdir -p /var/log/httpd/ganglia

chown -R nobody /var/log/httpd/ganglia

mkdir -p /var/lib/ganglia/

chown -R nobody:apache /var/lib/ganglia/

b)

cd ganglia

cp conf_default.php conf_default.php.org

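Some documentation says that settings can be placed in conf.php, which inherits and overrides conf_default.php. In my tests, however, settings in conf.php had no effect when accessing the web UI, so I recommend editing conf_default.php directly.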

vi conf_default.php

#$conf['gweb_confdir'] = "/var/lib/ganglia-web";

#$conf['gweb_confdir'] = "/var/www/html/ganglia";

$conf['gweb_confdir'] = "/data/ganglia";

#$conf['auth_system'] = 'readonly';

$conf['auth_system'] = 'disabled';

#$conf['metric_groups_initially_collapsed'] = false;

$conf['metric_groups_initially_collapsed'] = true;

c)

vi conf/httpd.conf

#User apache

User nobody

Group apache

vi /etc/httpd/conf.d/ganglia-vhost.conf

Example configuration:

<VirtualHost *:60080>

#ServerAdmin admin@localhost.com

DocumentRoot /data/ganglia

#ServerName my.ganglia.com

ErrorLog /var/log/httpd/ganglia/error.log

CustomLog /var/log/httpd/ganglia/access.log common

<Directory /data/ganglia>

Order deny,allow

Allow from all

</Directory>

</VirtualHost>

(2) install httpd + php

yum install -y httpd.x86_64

yum install -y php.x86_64

(3) configure httpd

cd /etc/httpd

cp conf/httpd.conf conf/httpd.conf.org

vi conf/httpd.conf

a) add

Listen 60080

b) make sure the following line is present

Include conf.d/*.conf

c) add

LoadModule php5_module modules/libphp5.so

#For PHP

<IfModule php5_module>

<FilesMatch \.php$>

SetHandler application/x-httpd-php

</FilesMatch>

<FilesMatch "\.ph(p[2-6]?|tml)$">

SetHandler application/x-httpd-php

</FilesMatch>

<FilesMatch "\.phps$">

SetHandler application/x-httpd-php-source

</FilesMatch>

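</IfModule>

In the corresponding section (the rpm build of httpd.conf does not have this section, so just search for the line directly),

find AddType application/x-gzip .gz .tgz and add the following below it: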

#AddType application/x-httpd-php .php

AddType application/x-httpd-php .php .phtml .php3 .inc

AddType application/x-httpd-php-source .phps

Note: there is a space before each "."

d) modify

<IfModule dir_module>

DirectoryIndex index.html

</IfModule>

change to:

<IfModule dir_module>

DirectoryIndex index.php index.html

</IfModule>

vi conf.d/ganglia-vhost.conf

<VirtualHost *:60080>

#ServerAdmin admin@localhost.com

DocumentRoot /docker_vol01/data/ganglia

#ServerName my.ganglia.com

<Directory /docker_vol01/data/ganglia>

Order deny,allow

Allow from all

</Directory>

</VirtualHost>

(4) configure php

cp php.ini php.ini.org

vi php.ini

a)

;register_globals = Off

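register_globals = On

Other changes:

Change 1: increase memory_limit as needed. With many Ganglia metrics PHP needs more memory and may report that the allowed memory is exhausted; pick a size that fits your case.

;memory_limit = 128M

memory_limit = 512M

Change 2: set date.timezone; otherwise the following warning is logged: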

[Sat Jan 10 22:03:11.375281 2015] [:error] [pid 2609:tid 140024146241280] [client 127.0.0.1:45395] PHP Warning: date(): It is not safe to rely on the system’s timezone settings. You are required to use the date.timezone setting or the date_default_timezone_set() function. In case you used any of those methods and you are still getting this warning, you most likely misspelled the timezone identifier. We selected the timezone ‘UTC’ for now, but please set date.timezone to select your timezone. in /home/hadoop/app/ganglia/ganglia-web-3.6.2/header.php on line 5, referer: http://localhost:60080/ganglia/?r=hour&cs=&ce=&c=hadoop_cluster1&h=&tab=v&vn=load_all_report&hide-hf=false&m=load_one&sh=1&z=small&hc=4&host_regex=&max_graphs=0&s=by+name

;date.timezone =

date.timezone = PRC

4 test

1) start gmond on spark1/2/3

service gmond status

service gmond start

2) start gmetad on monitor1

service gmetad status

service gmetad start

3) start apache httpd on monitor1

service httpd status

service httpd start

4) access ganglia web in browser

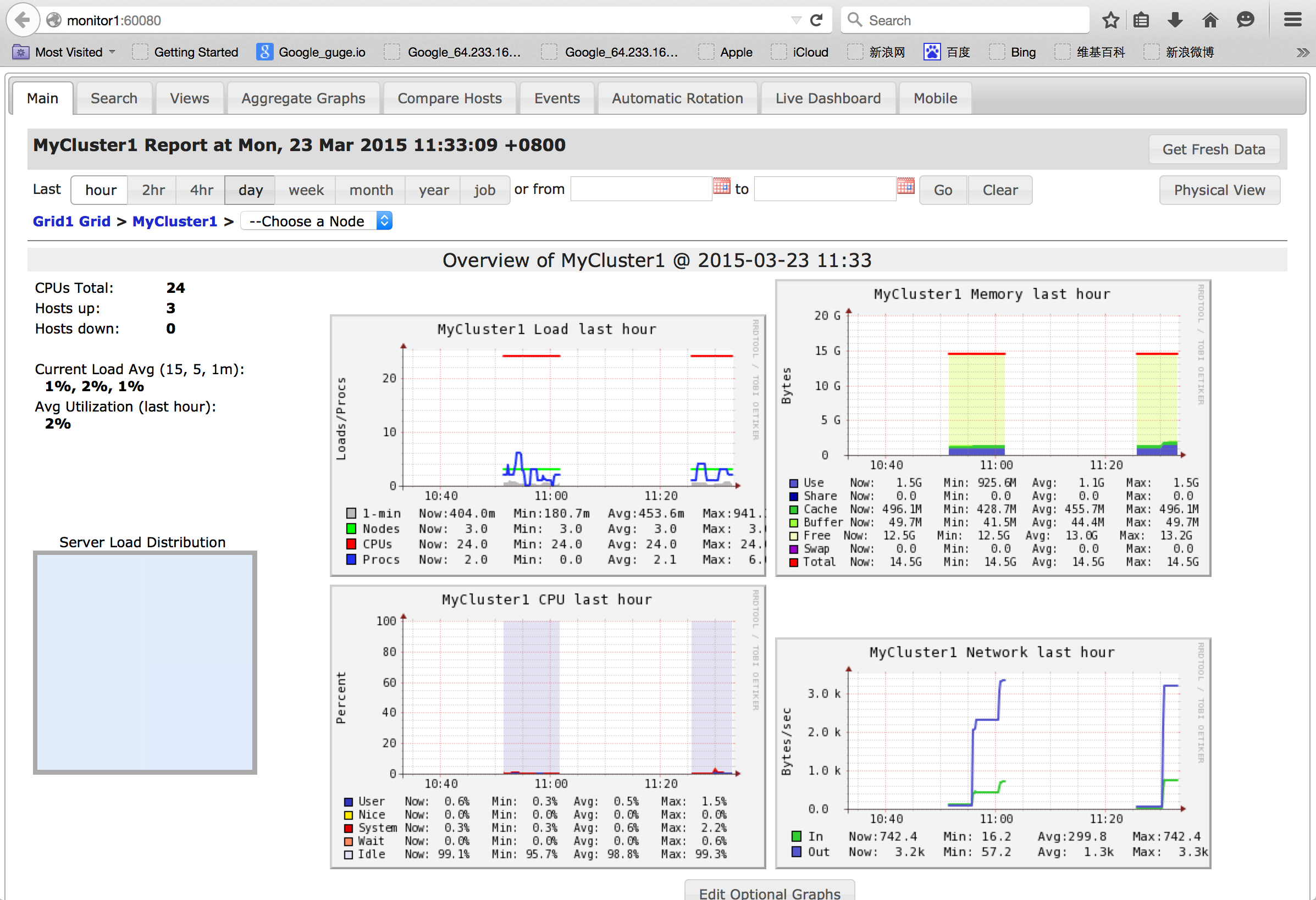

http://monitor1:60080

Ganglia web home page
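If the page comes up but shows no hosts, one thing to check is what gmond reports on its tcp_accept_channel, which serves the cluster state as XML. A minimal sketch, assuming the XML contains elements of the form <HOST NAME="..." ...> and that nc is available on monitor1 (neither is covered in this post); reporting_hosts is a made-up helper name.

```shell
#!/bin/sh
# Hypothetical helper: read a gmond XML dump on stdin and print the value of
# every HOST NAME attribute, one per line.
reporting_hosts() {
    grep -o '<HOST NAME="[^"]*"' | sed 's/^<HOST NAME="//; s/"$//'
}

# Demo on a hand-written fragment (not real gmond output).
printf '%s\n' \
    '<CLUSTER NAME="MyCluster1">' \
    '<HOST NAME="spark1" IP="172.17.0.3">' \
    '</HOST>' \
    '</CLUSTER>' |
    reporting_hosts

# Against a live node (assumes nc is installed):
#   nc spark1 9649 | reporting_hosts
```

A node that is missing from this list is not reaching the collector, which narrows the problem down to gmond rather than gmetad or the web front end.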
