How to configure and use the Spark history server

References

Spark Monitoring: Viewing After the Fact

Background

How to configure Spark

Locations where Spark can be configured:

- Spark properties, set in application code using val sparkConf=new SparkConf().set…

- Spark properties loaded dynamically using spark-submit options:

      ./bin/spark-submit --name "My app" --master local[4] --conf spark.shuffle.spill=false --conf "spark.executor.extraJavaOptions=-XX:+PrintGCDetails -XX:+PrintGCTimeStamps" myApp.jar

- Viewing Spark properties in the application web UI at http://<driver>:4040, in the "Environment" tab

- Environment variables, set in conf/spark-env.sh

- Logging, configured in conf/log4j.properties

Available properties related to the history server

| Property Name | Default | Meaning |
|---|---|---|
| spark.eventLog.compress | false | Whether to compress logged events, if spark.eventLog.enabled is true. |
| spark.eventLog.dir | file:///tmp/spark-events | Base directory in which Spark events are logged, if spark.eventLog.enabled is true. Within this base directory, Spark creates a sub-directory for each application, and logs the events specific to the application in this directory. Users may want to set this to a unified location like an HDFS directory so history files can be read by the history server. |
| spark.eventLog.enabled | false | Whether to log Spark events, useful for reconstructing the Web UI after the application has finished. |

* Environment Variables

Omitted.

Enable the Spark event log

Method 1:

    bin/spark-submit --conf spark.eventLog.enabled=true --conf spark.eventLog.dir=file:/data01/data_tmp/spark-events ...

Method 2:

    vi conf/spark-env.sh

    SPARK_DAEMON_JAVA_OPTS=
    SPARK_MASTER_OPTS=
    SPARK_WORKER_OPTS=
    SPARK_JAVA_OPTS="-Dspark.eventLog.enabled=true -Dspark.eventLog.dir=file:/data01/data_tmp/spark-events"

NOTE:

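a. spark.eventLog.dir=file:/data01/data_tmp/spark-events

- The event log is written by the driver. That is not much of a problem for local, standalone, or yarn-client mode, but cluster mode, where the driver runs on a cluster node, needs special care: it is best to set an HDFS path.

- The directory named by spark.eventLog.dir is not created automatically. Create it by hand and make sure the user running the application has the required permissions.

b. These environment variables are used by bin/spark-class.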
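Because the directory named by spark.eventLog.dir is not created automatically, a small pre-flight check before submitting can help. The following is a minimal sketch, not part of Spark: the function name ensure_event_log_dir and the EVENT_LOG_DIR variable are hypothetical, and the default path under /tmp is only a stand-in for a real location such as /data01/data_tmp/spark-events. It handles local file: paths only; an HDFS directory would need hdfs dfs -mkdir -p instead.

```shell
#!/bin/sh
# Hypothetical pre-flight helper (not part of Spark): create the local
# event log directory if needed and confirm the current user can write to it.
ensure_event_log_dir() {
  dir="$1"
  mkdir -p "$dir" || return 1
  if [ ! -w "$dir" ]; then
    echo "event log directory is not writable: $dir" >&2
    return 1
  fi
  # Print the value in the file: form used for spark.eventLog.dir.
  echo "file:$dir"
}

# Assumed location for this sketch; override EVENT_LOG_DIR to use a real path.
EVENT_LOG_DIR="${EVENT_LOG_DIR:-/tmp/spark-events-demo}"
EVENT_LOG_URI="$(ensure_event_log_dir "$EVENT_LOG_DIR")" || exit 1
echo "spark.eventLog.dir=$EVENT_LOG_URI"
```

The printed value can then be passed to bin/spark-submit as --conf spark.eventLog.dir=... alongside --conf spark.eventLog.enabled=true.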

Test a Spark app after enabling the event log

test case:

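    import org.apache.spark.{SparkConf, SparkContext}
    // On Spark versions before 1.3, also import org.apache.spark.SparkContext._ for reduceByKey.

    object WordCount {
      // Define main() rather than extending scala.App, as the Spark docs recommend.
      def main(args: Array[String]): Unit = {
        val sparkConf = new SparkConf().setAppName("WordCount")
        val sc = new SparkContext(sparkConf)
        val lines = sc.textFile("file:/data01/data/datadir_github/spark/README.md")
        // Split each line on whitespace, then count the occurrences of each word.
        val words = lines.flatMap(_.split("\\s+"))
        val wordsCount = words.map(word => (word, 1)).reduceByKey(_ + _)
        wordsCount.foreach(println)
        sc.stop()
      }
    }

Problem 1: running Spark in local mode from IDEA does not generate an event log. The bundled examples.SparkPi behaves the same way.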

Approach 1: test from the CLI

    bin/run-example SparkPi

Result: it fails with an error:

    Exception in thread "main" java.lang.IllegalArgumentException: Log directory file:/data01/data_tmp/spark-events does not exist.

Approach 2:

    mkdir -p /data01/data_tmp/spark-events
    bin/run-example SparkPi

Result: the test confirms that an event log is generated under /data01/data_tmp/spark-events.

Running Spark in local mode from IDEA again still generates no event log.

Cause: although the SPARK_CONF_DIR path was added to the project dependencies, a run inside IDEA does not read and parse the configuration in conf/spark-env.sh the way submitting the app from the CLI with bin/spark-submit does.

Approach 3: set the VM options in the IDEA run configuration

    -Dspark.master="local[2]" -Dspark.eventLog.enabled=true -Dspark.eventLog.dir=file:/data01/data_tmp/spark-events

Result: local mode works as expected.

Problem 2: testing spark-on-yarn from IDEA fails (not yet resolved)

With the VM options in the IDEA run configuration set to

    -Dspark.master="yarn-client" -Dspark.eventLog.enabled=true -Dspark.eventLog.dir=file:/data01/data_tmp/spark-events

the run reports an error.
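Each test above comes down to checking whether anything was written to the event log directory. A minimal sketch of that check for a local directory follows; the helper name has_event_log and the LOG_DIR variable are hypothetical, and the default path under /tmp is a stand-in for the real spark.eventLog.dir location.

```shell
#!/bin/sh
# Hypothetical helper (not part of Spark): succeed if the directory contains
# at least one entry, and print the most recently modified one.
has_event_log() {
  dir="$1"
  [ -d "$dir" ] || { echo "missing directory: $dir" >&2; return 1; }
  newest="$(ls -t "$dir" | head -n 1)"
  [ -n "$newest" ] || { echo "no event log under $dir" >&2; return 1; }
  echo "$newest"
}

# Assumed location for this sketch; override LOG_DIR to check a real path.
LOG_DIR="${LOG_DIR:-/tmp/spark-events-demo}"
if has_event_log "$LOG_DIR"; then
  echo "event log found in $LOG_DIR"
else
  echo "no event log yet in $LOG_DIR"
fi
```

Running it once before and once after a test run shows whether that run actually wrote a log, whichever way the application was launched.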

Configure, start and use the Spark history server

Configure

    vi conf/spark-env.sh

    SPARK_HISTORY_OPTS="-Dspark.history.fs.logDirectory=file:/data01/data_tmp/spark-events" # set when you use spark-history-server

NOTE

- spark.history.fs.logDirectory and spark.eventLog.dir may differ, which means event log files can be moved elsewhere to help with diagnosis.

- spark.history.ui.port sets the port of the history server web UI (default 18080).
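Since spark.history.fs.logDirectory may differ from spark.eventLog.dir, event logs can be copied to another directory and read from there. A minimal sketch with local directories follows; the function name copy_event_logs and the SRC_DIR and DEST_DIR variables are hypothetical, and the defaults under /tmp are stand-ins for the application's event log directory and the directory the history server reads.

```shell
#!/bin/sh
# Hypothetical helper (not part of Spark): copy event logs from the directory
# an application wrote to (spark.eventLog.dir) into a separate directory for
# a history server to read (spark.history.fs.logDirectory).
copy_event_logs() {
  src="$1"
  dest="$2"
  [ -d "$src" ] || { echo "missing source directory: $src" >&2; return 1; }
  mkdir -p "$dest" || return 1
  # -R also copies per-application sub-directories; -p keeps modification times.
  # "$src"/. copies the contents of the directory rather than the directory itself.
  cp -Rp "$src"/. "$dest"/
}

# Assumed locations for this sketch; override both to use real paths.
SRC_DIR="${SRC_DIR:-/tmp/spark-events-demo}"
DEST_DIR="${DEST_DIR:-/tmp/spark-history-demo}"
if copy_event_logs "$SRC_DIR" "$DEST_DIR"; then
  # The setting that would point a history server at the copied logs.
  echo "SPARK_HISTORY_OPTS=\"-Dspark.history.fs.logDirectory=file:$DEST_DIR\""
fi
```

The printed line has the same form as the conf/spark-env.sh entry shown above, with the copied directory substituted.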

Start

    sbin/start-history-server.sh

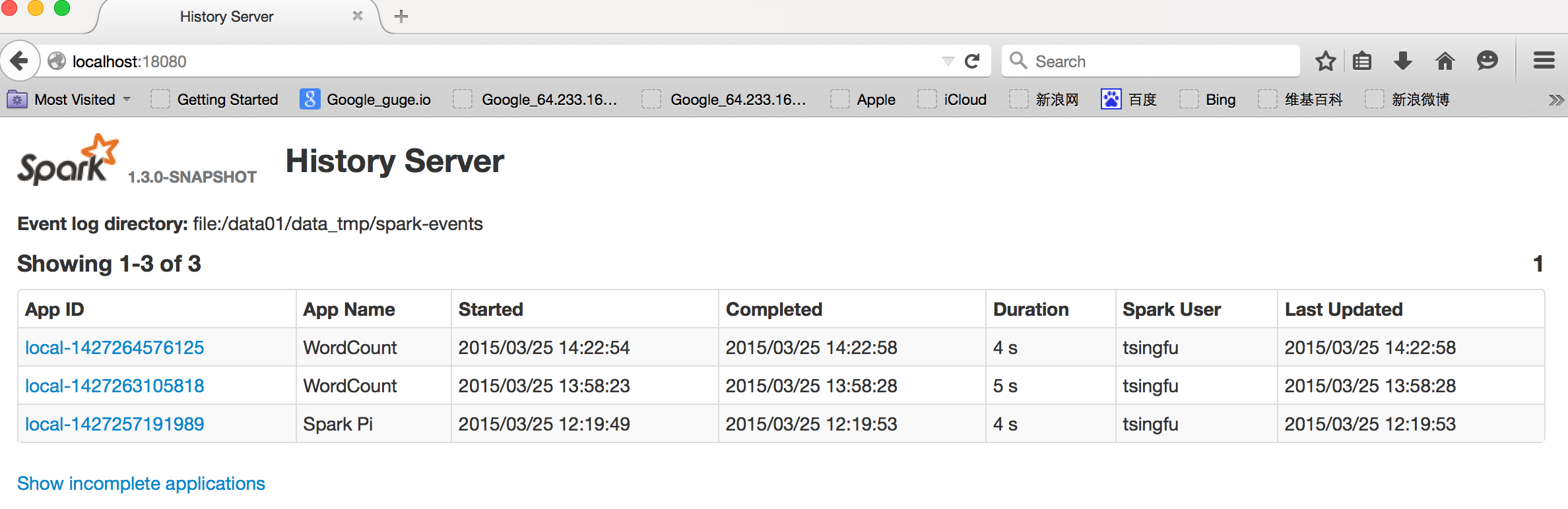

access spark history WebUI at http://<server-url>:18080

spark history server WebUI applications

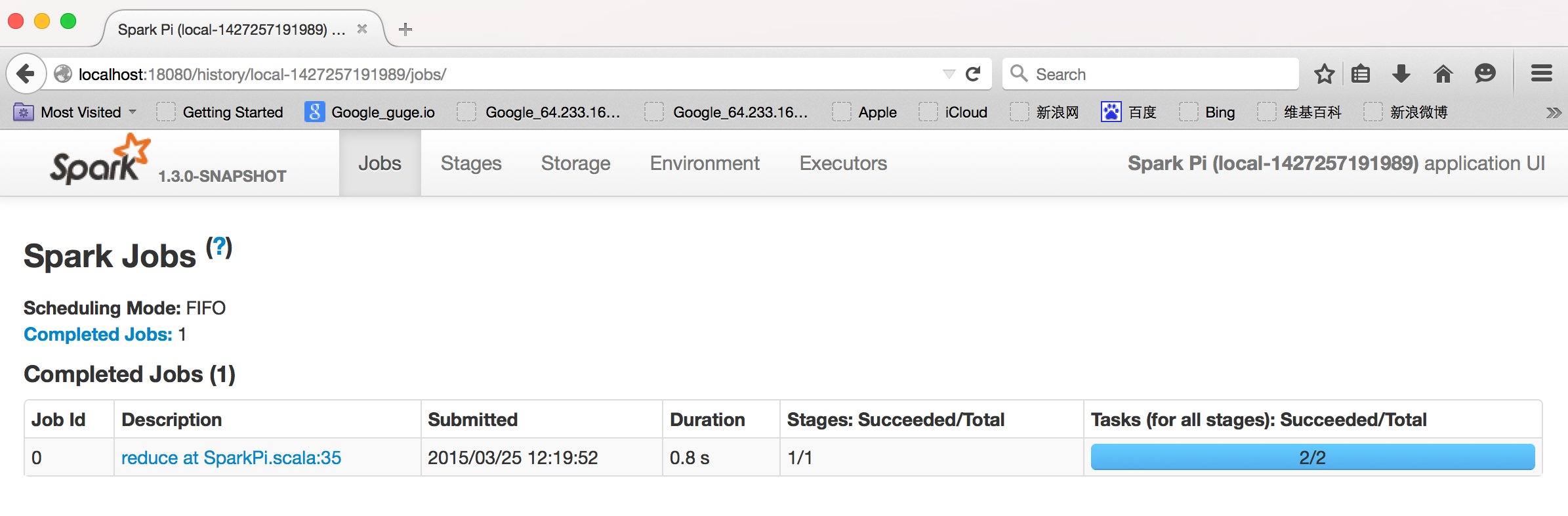

spark history server WebUI specific app
