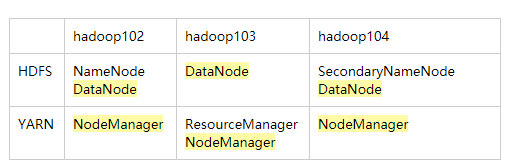

HDFS: NN (NameNode) ×1, DN (DataNode) ×3, 2NN (SecondaryNameNode) ×1

DN and NM run together on the same nodes: the DN manages that node's storage, and the NM manages that node's CPU and memory.

YARN: RM (ResourceManager) ×1, NM (NodeManager) ×3

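For HDFS, the master is the NN on hadoop102; hadoop102, hadoop103, and hadoop104 are all workers (DataNodes).

For YARN, the master is the RM on hadoop103; hadoop102, hadoop103, and hadoop104 are all workers (NodeManagers).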
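
The role layout above can be kept as a small lookup, which helps when deciding which daemon to start on which host. This is a minimal POSIX sh sketch; the hostnames come from the text, the SecondaryNameNode placement on hadoop104 comes from hdfs-site.xml below, and the helper names roles_for and hosts_with are hypothetical.

```shell
# Role plan for the three-node cluster.
# NN = NameNode, DN = DataNode, 2NN = SecondaryNameNode,
# RM = ResourceManager, NM = NodeManager.
roles_for() {
  case $1 in
    hadoop102) echo "NN DN NM" ;;
    hadoop103) echo "DN RM NM" ;;
    hadoop104) echo "DN 2NN NM" ;;
  esac
}

# Print every host that carries the given role.
hosts_with() {
  local host
  for host in hadoop102 hadoop103 hadoop104; do
    # Pad with spaces so that "NN" does not match inside "2NN".
    case " $(roles_for "$host") " in
      *" $1 "*) echo "$host" ;;
    esac
  done
}
```

For example, hosts_with DN lists all three nodes, while hosts_with RM lists only hadoop103.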

Do all configuration on hadoop102, then distribute it to hadoop103 and hadoop104.

Set JAVA_HOME in the env scripts (for example hadoop-env.sh).
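
A commonly cited reason for hard-coding JAVA_HOME here is that when daemons are launched over ssh, the login-shell environment where JAVA_HOME is usually exported may not be loaded. The edit can be scripted; this is a hypothetical helper (set_java_home), and the JDK path in the usage line is an assumption.

```shell
# Hypothetical helper: point the JAVA_HOME line of an env script at a JDK.
# Usage: set_java_home <env-script> <jdk-dir>
set_java_home() {
  local file=$1 jdk=$2
  if grep -q '^export JAVA_HOME=' "$file"; then
    # Replace the existing line (e.g. "export JAVA_HOME=${JAVA_HOME}");
    # -i.bak works with both GNU and BSD sed and leaves a .bak copy.
    sed -i.bak "s|^export JAVA_HOME=.*|export JAVA_HOME=$jdk|" "$file"
  else
    # No JAVA_HOME line yet: append one.
    echo "export JAVA_HOME=$jdk" >> "$file"
  fi
}
```

Usage, assuming the JDK is unpacked at /opt/module/jdk1.8.0_144: set_java_home etc/hadoop/hadoop-env.sh /opt/module/jdk1.8.0_144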

vim core-site.xml

    <configuration>
      <!-- Address of the HDFS NameNode -->
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://hadoop102:9000</value>
      </property>
      <!-- Directory for files Hadoop generates at runtime -->
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/opt/module/hadoop-2.7.2/data/tmp</value>
      </property>
    </configuration>
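
To double-check a value before distributing the files, a rough extractor is enough for files laid out like the one above. This is a line-oriented awk sketch, not a real XML parser: it assumes each name tag is followed by its value tag and ignores comments and other edge cases. get_prop is a hypothetical name.

```shell
# Hypothetical helper: print the <value> that follows <name>KEY</name>.
# Usage: get_prop <site-xml-file> <key>
get_prop() {
  awk -v key="$2" '
    # index() does a literal match, so dots in the key are not regex wildcards.
    index($0, "<name>" key "</name>") { found = 1 }
    found && /<value>/ {
      sub(/.*<value>/, ""); sub(/<\/value>.*/, ""); print; exit
    }
  ' "$1"
}
```

For example, get_prop etc/hadoop/core-site.xml fs.defaultFS should print hdfs://hadoop102:9000. On a node with Hadoop installed, hdfs getconf -confKey fs.defaultFS reports the effective value instead of the raw file contents.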

hdfs-site.xml

    <configuration>
      <!-- Number of replicas kept for each block -->
      <property>
        <name>dfs.replication</name>
        <value>3</value>
      </property>
      <!-- Host and HTTP port of the SecondaryNameNode -->
      <property>
        <name>dfs.namenode.secondary.http-address</name>
        <value>hadoop104:50090</value>
      </property>
    </configuration>
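
With dfs.replication set to 3 and exactly three DataNodes, every node ends up holding a copy of every block. The value cannot usefully go higher: HDFS does not place two replicas of one block on the same DataNode, so extra replicas would simply be reported as under-replicated. A hypothetical sanity check (check_replication) for that rule:

```shell
# Hypothetical check: warn when dfs.replication exceeds the DataNode count.
# Usage: check_replication <replication> <datanode-count>
check_replication() {
  if [ "$1" -gt "$2" ]; then
    echo "WARN: replication $1 > $2 DataNodes; blocks will stay under-replicated"
    return 1
  fi
  echo "OK: $1 replicas across $2 DataNodes"
}
```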

yarn-site.xml

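    <configuration>
      <!-- Auxiliary service the NodeManagers run so reducers can fetch map output (shuffle) -->
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <!-- Host of the YARN ResourceManager -->
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>hadoop103</value>
      </property>
    </configuration>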
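
Setting only yarn.resourcemanager.hostname is enough because, in Hadoop 2.x, the ResourceManager's individual addresses default to that hostname plus a fixed port. The sketch below just prints those derived defaults for a given host; rm_addresses is a hypothetical helper, and the ports are the stock yarn-default.xml values, which a site file can override.

```shell
# Hypothetical helper: print the ResourceManager addresses that Hadoop 2.x
# derives from yarn.resourcemanager.hostname when they are not set explicitly.
rm_addresses() {
  printf 'yarn.resourcemanager.address=%s:8032\n' "$1"
  printf 'yarn.resourcemanager.scheduler.address=%s:8030\n' "$1"
  printf 'yarn.resourcemanager.resource-tracker.address=%s:8031\n' "$1"
  printf 'yarn.resourcemanager.admin.address=%s:8033\n' "$1"
  printf 'yarn.resourcemanager.webapp.address=%s:8088\n' "$1"
}
```

So with the value above, the ResourceManager web UI would be expected at http://hadoop103:8088 once YARN is running.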

From the hadoop-2.7.2 directory, distribute the config directory to the other nodes: xsync etc
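
xsync is not a Hadoop command but a custom helper script, usually a thin wrapper around rsync. If it is not available, a minimal stand-in might look like the following. The function name xsync_sketch, the host list, and the use of rsync over passwordless ssh are all assumptions; setting RUN=echo prints the commands instead of running them.

```shell
# Hypothetical stand-in for xsync: copy each path to the same absolute
# directory on the other nodes with rsync (assumes passwordless ssh).
XSYNC_HOSTS=${XSYNC_HOSTS:-"hadoop103 hadoop104"}
RUN=${RUN:-}   # set RUN=echo to print the commands instead of running them

xsync_sketch() {
  local target dir name host
  if [ $# -eq 0 ]; then
    echo "usage: xsync_sketch <path>..." >&2
    return 1
  fi
  for target in "$@"; do
    # Absolute, symlink-resolved parent directory of the path.
    dir=$(cd -P "$(dirname "$target")" && pwd) || return 1
    name=$(basename "$target")
    for host in $XSYNC_HOSTS; do
      # -a preserves permissions, times and symlinks; -v lists copied files.
      # Copying into "$dir/" recreates "$name" under the same parent remotely.
      $RUN rsync -av "$dir/$name" "$host:$dir/"
    done
  done
}
```

Run from /opt/module/hadoop-2.7.2 on hadoop102, xsync_sketch etc would push etc/ to the same path on hadoop103 and hadoop104.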

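Format the NameNode (once, on hadoop102 only; reformatting creates a new clusterID, which causes the mismatch described below): hdfs namenode -format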
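
Because each format writes a fresh clusterID into the NameNode's metadata directory, a small guard can refuse to format when metadata already exists. This is a hypothetical wrapper (safe_format); the path assumes the hadoop.tmp.dir from core-site.xml and the default dfs.namenode.name.dir of ${hadoop.tmp.dir}/dfs/name.

```shell
# Hypothetical guard around "hdfs namenode -format".
# Assumes NameNode metadata lives in $HADOOP_TMP/dfs/name (the default
# dfs.namenode.name.dir under hadoop.tmp.dir).
HADOOP_TMP=${HADOOP_TMP:-/opt/module/hadoop-2.7.2/data/tmp}
RUN=${RUN:-}   # set RUN=echo for a dry run

safe_format() {
  local version="$HADOOP_TMP/dfs/name/current/VERSION"
  if [ -f "$version" ]; then
    echo "refusing to format: $version already exists" >&2
    return 1
  fi
  $RUN hdfs namenode -format
}
```

If a reformat really is intended, the DataNodes' data directories have to be cleared as well, as in the fix below.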

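Start the Hadoop cluster (HDFS daemons):

hadoop-daemon.sh start namenode (on hadoop102)

hadoop-daemon.sh start datanode (on hadoop102, hadoop103, and hadoop104)

hadoop-daemon.sh start secondarynamenode (on hadoop104)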
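
These commands have to run on different machines: the NameNode on hadoop102 (fs.defaultFS), a DataNode on each node, and the SecondaryNameNode on hadoop104 (dfs.namenode.secondary.http-address). A sketch that issues them over ssh, assuming passwordless ssh and Hadoop's sbin directory on each host's PATH; start_cluster is hypothetical. The two yarn-daemon.sh steps go beyond the original steps: they start the ResourceManager on hadoop103 and a NodeManager on every node, matching yarn-site.xml.

```shell
# Hypothetical launcher: start each daemon on its host over ssh.
# Assumes passwordless ssh and $HADOOP_HOME/sbin on PATH on every node.
RUN=${RUN:-}   # set RUN=echo to print the commands instead of running them

start_cluster() {
  local host
  $RUN ssh hadoop102 hadoop-daemon.sh start namenode
  for host in hadoop102 hadoop103 hadoop104; do
    $RUN ssh "$host" hadoop-daemon.sh start datanode
  done
  $RUN ssh hadoop104 hadoop-daemon.sh start secondarynamenode
  # YARN (not in the original steps): RM on hadoop103, NM on every node.
  $RUN ssh hadoop103 yarn-daemon.sh start resourcemanager
  for host in hadoop102 hadoop103 hadoop104; do
    $RUN ssh "$host" yarn-daemon.sh start nodemanager
  done
}
```

Running jps on each node afterwards should list the daemons planned for that host.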

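If the DataNodes do not start, compare the clusterID recorded in the VERSION file under each of these directories (NameNode first, then DataNode):

/opt/module/hadoop-2.7.2/data/tmp/dfs/name/current

/opt/module/hadoop-2.7.2/data/tmp/dfs/data/current

clusterID=CID-85a9e8de-decd-4a12-93f6-22ff54a94fea

clusterID=CID-156192a5-0f88-4202-bd97-5dd6d1e71daf (the two IDs differ)

The mismatch comes from formatting the NameNode again after the DataNode had already been initialized: each format generates a new clusterID, and the DataNode then fails with an "Incompatible clusterIDs" error.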

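To fix it, go to /opt/module/hadoop-2.7.2/data/tmp/dfs on the affected node and delete the data directory (this discards the block data stored on that DataNode):

[atguigu@hadoop103 dfs]$ rm data -rf

Then restart Hadoop; the DataNode re-initializes its storage with the NameNode's current clusterID.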
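
Comparing the two clusterID values by eye is error-prone, and both come from plain key=value VERSION files in the two current directories, so the check can be scripted. A sketch, assuming the default layout under hadoop.tmp.dir and that both directories exist on the same host (true on hadoop102, which runs both a NameNode and a DataNode); check_cluster_id is hypothetical.

```shell
# Hypothetical check: compare the clusterID stored by the NameNode with the
# one stored by the DataNode on this host. VERSION files are key=value text.
HADOOP_TMP=${HADOOP_TMP:-/opt/module/hadoop-2.7.2/data/tmp}

# Print the clusterID value from one VERSION file.
cluster_id() {
  sed -n 's/^clusterID=//p' "$1"
}

check_cluster_id() {
  local nn dn
  nn=$(cluster_id "$HADOOP_TMP/dfs/name/current/VERSION")
  dn=$(cluster_id "$HADOOP_TMP/dfs/data/current/VERSION")
  if [ -z "$nn" ] || [ -z "$dn" ]; then
    echo "clusterID not found in one of the VERSION files" >&2
    return 2
  fi
  if [ "$nn" = "$dn" ]; then
    echo "OK: $nn"
  else
    echo "MISMATCH: NameNode=$nn DataNode=$dn"
    return 1
  fi
}
```

A MISMATCH on a DataNode is the case fixed above by removing its data directory.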

Open the NameNode web UI at http://hadoop102:50070 to confirm the cluster is running.
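
Besides the web page, the NameNode exposes its metrics as JSON through the /jmx servlet, which allows a scripted check. A sketch, assuming the Hadoop 2.x FSNamesystemState bean and its NumLiveDataNodes attribute; live_datanodes is a hypothetical helper. With all three DataNodes up, it should print 3. hdfs dfsadmin -report gives similar information from the command line.

```shell
# Hypothetical helper: read NameNode JMX JSON on stdin and print the
# NumLiveDataNodes value (empty output if the attribute is missing).
live_datanodes() {
  sed -n 's/.*"NumLiveDataNodes" *: *\([0-9][0-9]*\).*/\1/p'
}

# Example (needs a running cluster):
# curl -s 'http://hadoop102:50070/jmx?qry=Hadoop:service=NameNode,name=FSNamesystemState' | live_datanodes
```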
