1 Prepare the environment

192.168.0.251 shulaibao1

192.168.0.252 shulaibao2
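The two address and hostname pairs above presumably need to resolve on both machines. A minimal sketch, assuming they belong in /etc/hosts (the original does not name the file); the function name `add_cluster_hosts` is hypothetical:

```shell
#!/bin/sh
# Append the two cluster entries to a hosts file, skipping any hostname
# that is already present so the function can be re-run safely.
add_cluster_hosts() {
    hosts_file=$1
    while read -r ip name; do
        # Only append when no existing line mentions this hostname.
        if ! grep -qw "$name" "$hosts_file" 2>/dev/null; then
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
        fi
    done <<EOF
192.168.0.251 shulaibao1
192.168.0.252 shulaibao2
EOF
}
```

Run it as root on each node, for example `add_cluster_hosts /etc/hosts`.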

hadoop-2.8.0-bin

spark-2.1.1-bin-hadoop2.7

Disable SELinux:

In /etc/selinux/config, set SELINUX=disabled
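The edit can be scripted rather than done by hand. The following is a sketch only; the function name `disable_selinux_in` and the `.bak` backup are illustrative choices, not part of the original steps:

```shell
#!/bin/sh
# Set SELINUX=disabled in the given config file, keeping a .bak backup.
# The pattern ends at "=", so the SELINUXTYPE= line is left untouched.
disable_selinux_in() {
    config_file=$1
    cp "$config_file" "$config_file.bak"
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$config_file.bak" > "$config_file"
}
```

Run it as root with `disable_selinux_in /etc/selinux/config`. The file is read at boot, so the change takes effect after a reboot; `setenforce 0` switches the running system to permissive mode in the meantime.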

Add the hadoop group and user:

$groupadd -g 1000 hadoop

$useradd -u 2000 -g hadoop hadoop

$mkdir -p /home/data/app/hadoop

$chown -R hadoop:hadoop /home/data/app/hadoop

$passwd hadoop

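Configure passwordless SSH login. Generate a key pair on every node and copy the public key to a per-node file (shown here for hadoop1; substitute your own hostnames, e.g. shulaibao1 and shulaibao2):

$ssh-keygen -t rsa

$cd /home/hadoop/.ssh

$cp id_rsa.pub authorized_keys_hadoop1

Send the per-node files from the other nodes to hadoop1:

$scp authorized_keys_hadoop2 hadoop@hadoop1:/home/hadoop/.ssh

$scp authorized_keys_hadoop3 hadoop@hadoop1:/home/hadoop/.ssh

On hadoop1, append each collected file to authorized_keys with $cat authorized_keys_hadoop1 >> authorized_keys (repeat for each node's file), then distribute the merged key file with $scp authorized_keys hadoop@hadoop2:/home/hadoop/.ssh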
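The merge step on hadoop1 can be sketched as one function. This is an illustration under assumptions: the name `merge_authorized_keys` is hypothetical, and the permission fix-ups are added because sshd, with its default StrictModes setting, rejects key files that are group- or world-writable.

```shell
#!/bin/sh
# Merge every collected authorized_keys_* file in an .ssh directory into
# authorized_keys, keeping any keys that are already there.
merge_authorized_keys() {
    ssh_dir=$1
    # sort -u drops duplicate keys, so re-running the function is harmless.
    cat "$ssh_dir"/authorized_keys "$ssh_dir"/authorized_keys_* 2>/dev/null |
        sort -u > "$ssh_dir/authorized_keys.tmp"
    mv "$ssh_dir/authorized_keys.tmp" "$ssh_dir/authorized_keys"
    # Tighten permissions so sshd accepts the file.
    chmod 700 "$ssh_dir"
    chmod 600 "$ssh_dir/authorized_keys"
}
```

After `merge_authorized_keys /home/hadoop/.ssh`, the resulting authorized_keys file is the one to copy to the other nodes; `ssh hadoop@hadoop2 hostname` should then run without a password prompt.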

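- 1.1 Install the JDK

JDK 1.8 is recommended.

- 1.2 Install and set up protobuf

Note: this package must be installed after gcc; otherwise the build fails with an error that the gcc compiler cannot be found.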

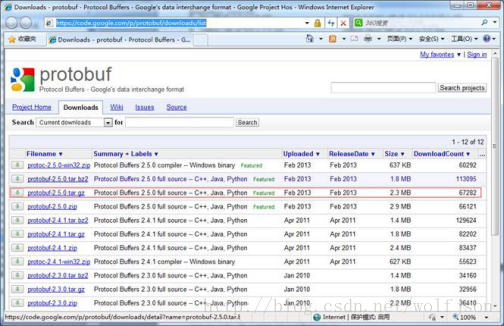

- 1.2.1 Download the protobuf package

Version 2.5 or later is recommended.

Download link: https://code.google.com/p/protobuf/downloads/list

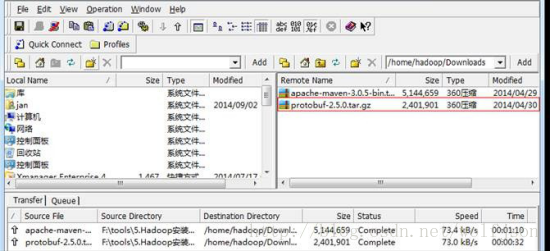

- 1.2.2 Use an SSH tool to upload protobuf-2.5.0.tar.gz to the /home/data/software directory

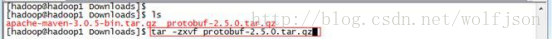

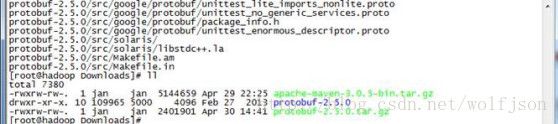

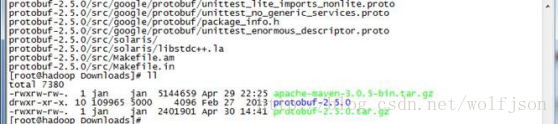

- 1.2.3 Extract the package

$tar -zxvf protobuf-2.5.0.tar.gz

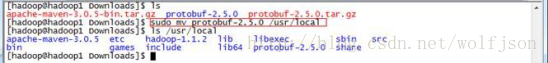

- 1.2.4 Move the protobuf-2.5.0 directory to /usr/local

$sudo mv protobuf-2.5.0 /usr/local

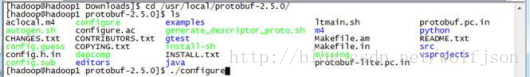

- 1.2.5 进行目录运行命令

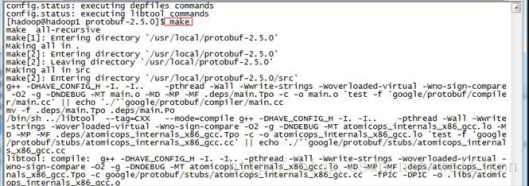

进入目录以root用户运行如下命令:

#./configure

#make

#make check

#make install

- 1.2.6 Verify the installation

Once the commands above succeed, verify the installation as follows:

#protoc

2 Install Hadoop

- 2.1 Upload, extract, and create directories

tar -zxvf

mkdir tmp

mkdir name

mkdir data

- 2.2 Hadoop core configuration

Configuration path: /home/data/app/hadoop/hadoop-2.8.0/etc/hadoop

core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://shulaibao1:9010</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://shulaibao1:9010</value>
  </property>
  <property>
    <name>io.file.buffer.size</name>
    <value>131072</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/home/data/app/hadoop/hadoop-2.8.0/tmp</value>
    <description>Abase for other temporary directories.</description>
  </property>
  <property>
    <name>hadoop.proxyuser.hduser.hosts</name>
    <value>*</value>
  </property>
  <property>
    <name>hadoop.proxyuser.hduser.groups</name>
    <value>*</value>
  </property>
</configuration>

hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>shulaibao1:9011</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/home/data/app/hadoop/hadoop-2.8.0/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/home/data/app/hadoop/hadoop-2.8.0/data</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.webhdfs.enabled</name>
    <value>true</value>
  </property>
</configuration>

mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>shulaibao1:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>shulaibao1:19888</value>
  </property>
</configuration>

yarn-site.xml

<?xml version="1.0"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Site specific YARN configuration properties -->

<configuration>
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
  <property>
    <name>yarn.resourcemanager.address</name>
    <value>shulaibao1:8032</value>
  </property>
  <property>
    <name>yarn.resourcemanager.scheduler.address</name>
    <value>shulaibao1:8030</value>
  </property>
  <property>
    <name>yarn.resourcemanager.resource-tracker.address</name>
    <value>shulaibao1:8031</value>
  </property>
  <property>
    <name>yarn.resourcemanager.admin.address</name>
    <value>shulaibao1:8033</value>
  </property>
  <property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>shulaibao1:8088</value>
  </property>
</configuration>
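With four site files edited by hand, it helps to read a value back before starting any daemon. The helper below is a rough sketch: `get_site_property` is a hypothetical name, and the sed-based matching only handles the simple `<name>`/`<value>` layout used in the files above, not arbitrary XML.

```shell
#!/bin/sh
# Print the <value> of one property from a Hadoop *-site.xml file.
get_site_property() {
    xml_file=$1
    # Escape the dots in the key so sed matches them literally.
    key=$(printf '%s' "$2" | sed 's/\./\\./g')
    # Join all lines first so single-line and pretty-printed files
    # are handled the same way.
    { tr '\n' ' ' < "$xml_file"; echo; } |
        sed -n "s/.*<name>$key<\/name>[[:space:]]*<value>\([^<]*\)<\/value>.*/\1/p"
}
```

For example, `get_site_property core-site.xml fs.defaultFS` should print `hdfs://shulaibao1:9010` for the file shown earlier. On a machine where Hadoop is already on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves.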

slaves

shulaibao1

shulaibao2
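Because the slaves file lists every worker, it can also drive copying the configured Hadoop directory to the other nodes. The sketch below only prints the scp commands so they can be reviewed first; the function name `print_distribute_commands` and the choice to skip the local host are assumptions, and the target parent directory is assumed to exist on each node already.

```shell
#!/bin/sh
# Print one scp command per host listed in a slaves file, skipping the
# machine the copy starts from. Pipe the output to `sh` to run the copies.
print_distribute_commands() {
    slaves_file=$1
    src_dir=$2
    local_host=$3
    while read -r host; do
        # Ignore blank lines, comments, and the local machine.
        case $host in
            ''|'#'*|"$local_host") continue ;;
        esac
        printf 'scp -r -p %s hadoop@%s:%s\n' \
            "$src_dir" "$host" "$(dirname "$src_dir")"
    done < "$slaves_file"
}
```

Called on shulaibao1 as `print_distribute_commands slaves /home/data/app/hadoop/hadoop-2.8.0 shulaibao1`, it prints a single command that targets shulaibao2.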

- 2.2 hadoop-env.sh and yarn-env.sh environment configuration

Add the following environment variables to /home/hadoop/.bash_profile:

export JAVA_HOME=/home/data/software/jdk1.8.0_121

export HADOOP_HOME=/home/data/app/hadoop/hadoop-2.8.0

export PATH=$PATH:/home/data/app/hadoop/hadoop-2.8.0/bin

In hadoop-env.sh, change the HADOOP_CONF_DIR export to:

export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-"$HADOOP_HOME/etc/hadoop"}

- 2.3 Distribute to the other nodes

$scp -r -p source target

- 2.4 Verify HDFS

Path: /home/data/app/hadoop/hadoop-2.8.0

- Format the NameNode (first-time initialization only)

$./bin/hdfs namenode -format

- Start HDFS

$./sbin/start-dfs.sh

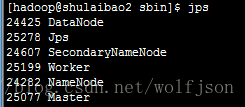

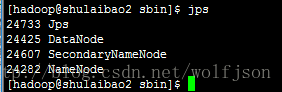

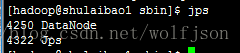

- Check the running processes with jps

Master:

Slave:
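The jps check can be automated with a small filter. This is a sketch: `check_daemons` is a hypothetical helper, and the expected daemon names follow from the configuration above (shulaibao1 hosts the NameNode and SecondaryNameNode and is also listed in slaves, so it runs a DataNode too).

```shell
#!/bin/sh
# Read `jps` output on stdin and report any expected daemon that is missing.
# Returns 0 only when every daemon named in the arguments is present.
check_daemons() {
    jps_output=$(cat)
    missing=0
    for daemon in "$@"; do
        # jps prints "<pid> <main class>", so compare the second field.
        if ! printf '%s\n' "$jps_output" |
            awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "missing: $daemon"
            missing=1
        fi
    done
    return $missing
}
```

On the master, run `jps | check_daemons NameNode SecondaryNameNode DataNode`; on a worker, run `jps | check_daemons DataNode`.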

3 Install Spark

- 3.1 Download, upload, and extract

- 3.2 Basic environment configuration

/etc/profile

export SPARK_HOME=/home/data/app/hadoop/spark-2.1.1-bin-hadoop2.7

export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

- 3.3 Spark core configuration

/home/data/app/hadoop/spark-2.1.1-bin-hadoop2.7/conf/spark-env.sh

export SPARK_MASTER_IP=shulaibao2

export SPARK_MASTER_PORT=7077

export SPARK_WORKER_CORES=1

export SPARK_WORKER_INSTANCES=1

export SPARK_WORKER_MEMORY=512M

export SPARK_LOCAL_IP=192.168.0.251

export PYTHONH

vim /home/data/app/hadoop/spark-2.1.1-bin-hadoop2.7/conf/slaves

shulaibao1

shulaibao2

- 3.4 Distribute to the other machines

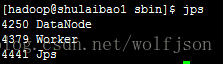

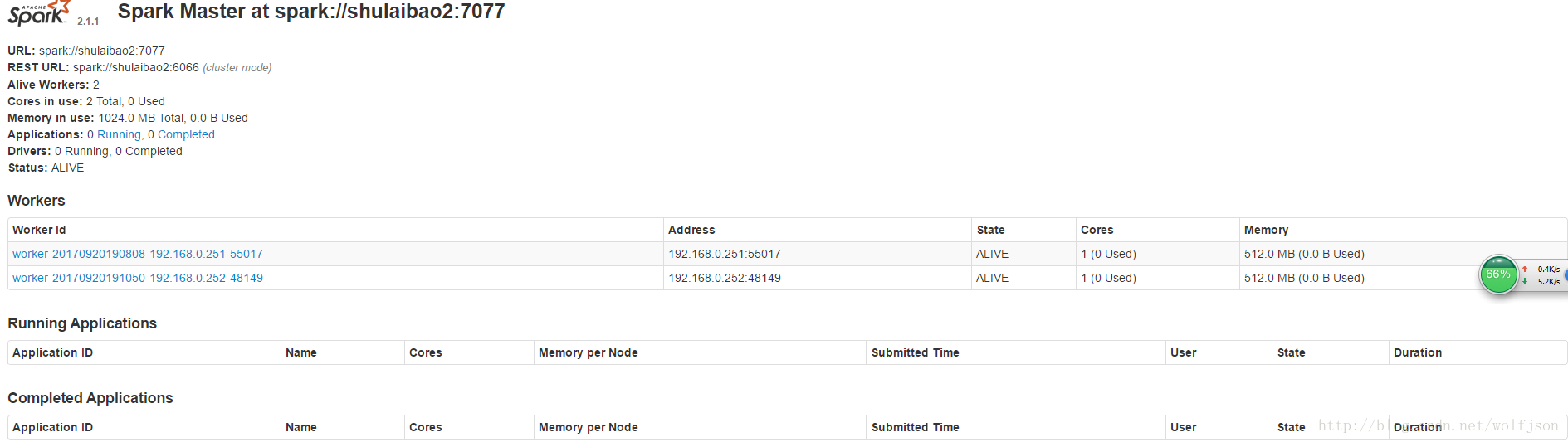

- 3.5 Start Spark and verify

Path: /home/data/app/hadoop/spark-2.1.1-bin-hadoop2.7/sbin

$./start-all.sh

Master:

Slave:

Spark web UI: http://192.168.0.252:8082/
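Beyond opening the web UI, submitting a small job confirms that the workers accept tasks. The helper below is a sketch that derives the master URL from spark-env.sh; `spark_master_url` is a hypothetical name, and it falls back to Spark's default port 7077 when SPARK_MASTER_PORT is unset.

```shell
#!/bin/sh
# Derive the spark:// master URL from a spark-env.sh file.
# Pass a path containing a slash so the shell does not search PATH for it.
spark_master_url() {
    env_file=$1
    # Source in a subshell so the exports do not leak into the caller.
    (
        . "$env_file"
        printf 'spark://%s:%s\n' "$SPARK_MASTER_IP" "${SPARK_MASTER_PORT:-7077}"
    )
}
```

A possible smoke test, assuming the bundled examples jar sits under `$SPARK_HOME/examples/jars` as it does in the Spark 2.x binary distributions: `spark-submit --master "$(spark_master_url "$SPARK_HOME/conf/spark-env.sh")" --class org.apache.spark.examples.SparkPi "$SPARK_HOME"/examples/jars/spark-examples_*.jar 10`.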
