hadoop-hdfs-ha setup

P.S. Basic environment setup is omitted here (passwordless SSH, hostnames, and the JDK are not covered).


ZooKeeper configuration

In ZooKeeper's conf directory, run cp zoo_sample.cfg zoo.cfg, then edit zoo.cfg:

    # The number of milliseconds of each tick
    tickTime=2000
    # The number of ticks that the initial
    # synchronization phase can take
    initLimit=10
    # The number of ticks that can pass between
    # sending a request and getting an acknowledgement
    syncLimit=5
    # the directory where the snapshot is stored.
    # do not use /tmp for storage, /tmp here is just
    # example sakes.
    dataDir=/var/zookeeper
    # the port at which the clients will connect
    clientPort=2181
    # the maximum number of client connections.
    # increase this if you need to handle more clients
    #maxClientCnxns=60
    #
    # Be sure to read the maintenance section of the
    # administrator guide before turning on autopurge.
    #
    # http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
    #
    # The number of snapshots to retain in dataDir
    #autopurge.snapRetainCount=3
    # Purge task interval in hours
    # Set to "0" to disable auto purge feature
    #autopurge.purgeInterval=1
    # The second port (3888) is used for leader election.
    # Keep comments on their own lines: zoo.cfg is read as a Java properties
    # file, so text after a value on the same line becomes part of the value.
    server.1=192.168.41.11:2888:3888
    server.2=192.168.41.12:2888:3888
    server.3=192.168.41.13:2888:3888

Note that I have left out the distribution step here; it is covered in step 4 (distributing the configuration files).

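    mkdir -p /var/zookeeper          # create the directory, including missing parents
    echo 1 > /var/zookeeper/myid     # the number must match this server's server.N entry

Each myid corresponds one-to-one to a ZooKeeper server: the first machine gets 1, the second gets 2, and so on.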
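The myid value can also be looked up from zoo.cfg instead of being typed by hand on each machine. The following is a minimal sketch, assuming the `server.N=host:port:port` layout used above with no trailing text on those lines; `myid_for_host` is a hypothetical helper name, not a ZooKeeper tool.

```shell
# myid_for_host CFG HOST
# Prints the N of the "server.N=HOST:..." line in CFG.
# Exits non-zero when no server entry matches HOST.
# Hypothetical helper for illustration only.
myid_for_host() {
  awk -v h="$2" '
    /^server\.[0-9]+=/ {
      split($0, kv, "=")         # kv[1] = "server.N", kv[2] = "host:port:port"
      id = substr(kv[1], 8)      # strip the 7-character "server." prefix
      split(kv[2], addr, ":")    # addr[1] = host
      if (addr[1] == h) { print id; found = 1; exit }
    }
    END { exit (found ? 0 : 1) }
  ' "$1"
}
```

On the second machine this could be used as `myid_for_host zoo.cfg 192.168.41.12 > /var/zookeeper/myid`, which writes 2 for the configuration shown above.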

hdfs-site.xml configuration

    <configuration>
      <property>
        <name>dfs.replication</name><!-- keep two replicas of each block -->
        <value>2</value>
      </property>
      <property>
        <name>dfs.nameservices</name><!-- logical name for the NameNode pair -->
        <value>mycluster</value>
      </property>
      <property>
        <name>dfs.ha.namenodes.mycluster</name>
        <value>nn1,nn2</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn1</name>
        <value>master:8020</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn2</name>
        <value>slave1:8020</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn1</name>
        <value>master:50070</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn2</name>
        <value>slave1:50070</value>
      </property>
      <property>
        <name>dfs.namenode.shared.edits.dir</name><!-- JournalNode addresses -->
        <value>qjournal://master:8485;slave1:8485;slave2:8485/mycluster</value>
      </property>
      <property>
        <name>dfs.journalnode.edits.dir</name>
        <value>/var/hadoop/journalnode</value>
      </property>
      <property>
        <name>dfs.client.failover.proxy.provider.mycluster</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
      </property>
      <property>
        <name>dfs.ha.fencing.methods</name><!-- fence the old active NameNode over SSH -->
        <value>sshfence</value>
      </property>
      <property>
        <name>dfs.ha.fencing.ssh.private-key-files</name><!-- key used for passwordless SSH -->
        <value>/root/.ssh/id_rsa</value>
      </property>
      <property>
        <name>dfs.ha.automatic-failover.enabled</name><!-- automatic failover -->
        <value>true</value>
      </property>
    </configuration>
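The `dfs.namenode.shared.edits.dir` value packs the JournalNode list and the journal ID into one URI. A quorum of N JournalNodes tolerates at most (N - 1) / 2 failures, which is why an odd count such as three is used. The sketch below splits the URI and computes that number; `journal_hosts` and `tolerated_failures` are hypothetical helper names, not Hadoop commands.

```shell
# journal_hosts URI: print one JournalNode "host:port" per line.
# Hypothetical helper for illustration; not part of Hadoop.
journal_hosts() (
  uri=${1#qjournal://}   # drop the scheme
  uri=${uri%%/*}         # drop the trailing "/mycluster" journal ID
  printf '%s\n' "$uri" | tr ';' '\n'
)

# tolerated_failures N: how many of N JournalNodes may be down
# while a majority is still available.
tolerated_failures() {
  echo $(( ($1 - 1) / 2 ))
}

uri='qjournal://master:8485;slave1:8485;slave2:8485/mycluster'
n=$(journal_hosts "$uri" | grep -c .)
echo "JournalNodes: $n, tolerated failures: $(tolerated_failures "$n")"
# prints: JournalNodes: 3, tolerated failures: 1
```

On a node where Hadoop is installed, `hdfs getconf -confKey dfs.namenode.shared.edits.dir` should print the configured value, which can be passed to `journal_hosts` instead of the literal string.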

core-site.xml configuration

    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://mycluster</value>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/var/hadoop-2.6/ha</value>
      </property>
      <property>
        <name>ha.zookeeper.quorum</name><!-- ZooKeeper addresses -->
        <value>slave1:2181,slave2:2181,slave3:2181</value>
      </property>
    </configuration>

slaves file configuration

Add the hostnames of the DataNodes to this file, one per line.

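Distributing the configuration files

Change to the directory that holds the installations. For example, my hadoop and zookeeper are stored under /opt, so the commands below use my location as the example (replace target-host with the destination machine):

    scp -r ./zk root@target-host:`pwd`/zk
    scp -r ./hadoop root@target-host:`pwd`/hadoop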
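With several target machines, the two scp commands can be generated in a loop. This is a rough sketch that only prints the commands so they can be reviewed first. The target hosts `slave1` and `slave2` and the helper name `distribute_cmds` are assumptions for illustration; substitute the machines that actually need the files.

```shell
# distribute_cmds DIR HOST...
# Prints (does not run) one scp command per directory and target host.
# Hypothetical helper; DIR is the install location, /opt in this post.
distribute_cmds() (
  dir=$1
  shift
  for host in "$@"; do
    printf 'scp -r ./zk root@%s:%s/zk\n' "$host" "$dir"
    printf 'scp -r ./hadoop root@%s:%s/hadoop\n' "$host" "$dir"
  done
)

# Assumed target hosts; adjust to your cluster.
distribute_cmds /opt slave1 slave2
```

Once the printed commands look right, they can be executed from /opt by piping the output to `sh`.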

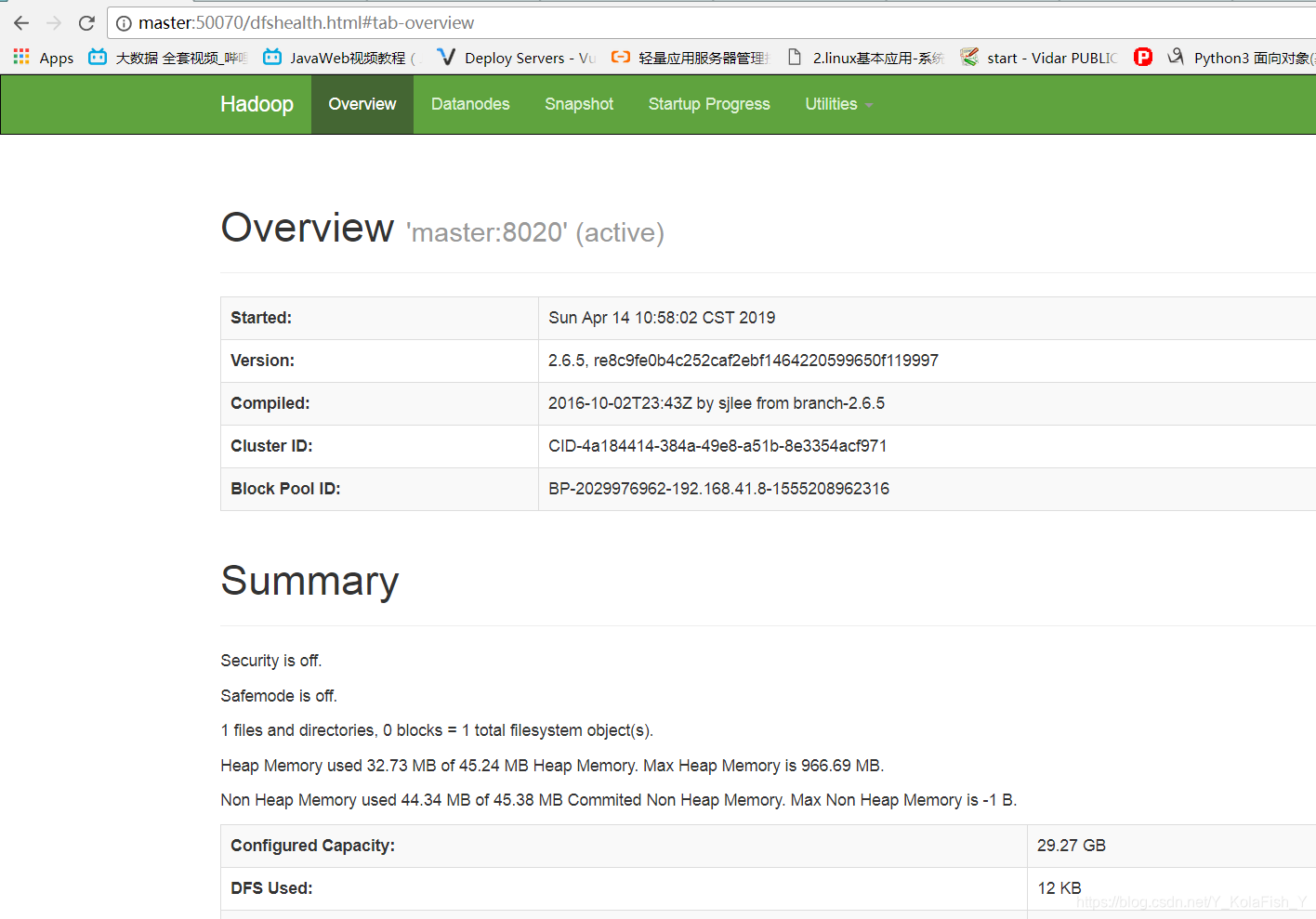

Starting the cluster

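Start the JournalNodes first:

    hadoop-daemon.sh start journalnode

Format the NameNode on master and start it:

    hdfs namenode -format
    hadoop-daemon.sh start namenode

Bring up the NameNode on slave1 (be careful: do NOT format it):

    hdfs namenode -bootstrapStandby

ZooKeeper

Start ZooKeeper on each ZooKeeper server:

    zkServer.sh start

On master, format ZooKeeper (this initializes the HA state kept in ZooKeeper):

    hdfs zkfc -formatZK

Start the roles:

    start-dfs.sh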

master

slave1
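Once everything is up, one way to confirm that HA is working is to ask each NameNode for its state with `hdfs haadmin -getServiceState`, using the `nn1` and `nn2` IDs from hdfs-site.xml. A healthy pair has exactly one active and one standby NameNode. The sketch below wraps that rule in a small function; `ha_pair_ok` is a hypothetical name, and the cluster is queried only when the `hdfs` command is available.

```shell
# ha_pair_ok STATE1 STATE2
# Succeeds only when one NameNode is active and the other is standby.
# Hypothetical helper for illustration only.
ha_pair_ok() {
  case "$1/$2" in
    active/standby|standby/active) return 0 ;;
    *) return 1 ;;
  esac
}

# Query the cluster only when the hdfs CLI exists on this machine.
if command -v hdfs >/dev/null 2>&1; then
  s1=$(hdfs haadmin -getServiceState nn1 2>/dev/null || echo unknown)
  s2=$(hdfs haadmin -getServiceState nn2 2>/dev/null || echo unknown)
  if ha_pair_ok "$s1" "$s2"; then
    echo "HA looks healthy: nn1=$s1 nn2=$s2"
  else
    echo "HA problem: nn1=$s1 nn2=$s2"
  fi
fi
```

To exercise automatic failover, stop the active NameNode with `hadoop-daemon.sh stop namenode` and run the check again; the former standby should report active.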
