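A few days ago we finished installing Oozie, so now let's upload and run an example. Oozie ships with several sample applications; this post uses the map-reduce one to get a first feel for how Oozie is used.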

1. Extract the oozie-examples.tar file:

tar -zxvf oozie-examples.tar

This unpacks an examples folder. Inside it (under apps), find the map-reduce folder.

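2. That folder contains the following:

workflow.xml, job.properties and lib. Here is what each of them is for.

job.properties supplies the parameters that workflow.xml needs. When Oozie runs the job, it first reads job.properties to load those parameters. The default contents are: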

    nameNode=hdfs://localhost:8020
    jobTracker=localhost:8021
    queueName=default
    examplesRoot=examples

    oozie.wf.application.path=${nameNode}/user/${user.name}/${examplesRoot}/apps/map-reduce
    outputDir=map-reduce

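These values do not match the environment we actually set up, so they need to be changed:

Set nameNode to the address of your Hadoop NameNode, and jobTracker to the address of your JobTracker. Note the parameter "oozie.wf.application.path": it specifies an HDFS path, and the application you submit to Oozie must exist under that path. Here is my modified version: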

    nameNode=hdfs://192.168.132.2:9000
    jobTracker=192.168.132.2:9001
    queueName=default
    examplesRoot=examples

    oozie.wf.application.path=${nameNode}/user/${user.name}/${examplesRoot}/apps/map-reduce
    outputDir=map-reduce
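To see which HDFS directory the modified oozie.wf.application.path points at, the placeholders can be expanded by hand. The following is only a sketch that mimics the substitution with sed; it is not how Oozie itself resolves properties. The function name `resolve_app_path` is made up for illustration, and it assumes the job is submitted as the OS user `hadoop` (the user whose HDFS home directory is used in the upload step).

```shell
#!/usr/bin/env bash
# Sketch: expand the ${...} placeholders of oozie.wf.application.path by hand.
# resolve_app_path is a hypothetical helper, not part of Oozie.
resolve_app_path() {
    local name_node="$1" user_name="$2" examples_root="$3"
    # Template copied from job.properties; single quotes keep ${...} literal.
    local template='${nameNode}/user/${user.name}/${examplesRoot}/apps/map-reduce'
    printf '%s\n' "$template" \
        | sed -e "s|\${nameNode}|${name_node}|" \
              -e "s|\${user.name}|${user_name}|" \
              -e "s|\${examplesRoot}|${examples_root}|"
}

# Assumption: the submitting user is "hadoop".
resolve_app_path "hdfs://192.168.132.2:9000" "hadoop" "examples"
```

Under those assumptions the path comes out as hdfs://192.168.132.2:9000/user/hadoop/examples/apps/map-reduce, so that is where the map-reduce application has to be placed on HDFS.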

The contents of workflow.xml are:

    <!--
      Copyright (c) 2010 Yahoo! Inc. All rights reserved.
      Licensed under the Apache License, Version 2.0 (the "License");
      you may not use this file except in compliance with the License.
      You may obtain a copy of the License at

        http://www.apache.org/licenses/LICENSE-2.0

      Unless required by applicable law or agreed to in writing, software
      distributed under the License is distributed on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
      See the License for the specific language governing permissions and
      limitations under the License. See accompanying LICENSE file.
    -->
    <workflow-app xmlns="uri:oozie:workflow:0.1" name="map-reduce-wf">
        <start to="mr-node"/>
        <action name="mr-node">
            <map-reduce>
                <job-tracker>${jobTracker}</job-tracker>
                <name-node>${nameNode}</name-node>
                <prepare>
                    <delete path="${nameNode}/user/${wf:user()}/${examplesRoot}/output-data/${outputDir}"/>
                </prepare>
                <configuration>
                    <property>
                        <name>mapred.job.queue.name</name>
                        <value>${queueName}</value>
                    </property>
                    <property>
                        <name>mapred.mapper.class</name>
                        <value>org.apache.oozie.example.SampleMapper</value>
                    </property>
                    <property>
                        <name>mapred.reducer.class</name>
                        <value>org.apache.oozie.example.SampleReducer</value>
                    </property>
                    <property>
                        <name>mapred.map.tasks</name>
                        <value>1</value>
                    </property>
                    <property>
                        <name>mapred.input.dir</name>
                        <value>/user/${wf:user()}/${examplesRoot}/input-data/text</value>
                    </property>
                    <property>
                        <name>mapred.output.dir</name>
                        <value>/user/${wf:user()}/${examplesRoot}/output-data/${outputDir}</value>
                    </property>
                </configuration>
            </map-reduce>
            <ok to="end"/>
            <error to="fail"/>
        </action>
        <kill name="fail">
            <message>Map/Reduce failed, error message[${wf:errorMessage(wf:lastErrorNode())}]</message>
        </kill>
        <end name="end"/>
    </workflow-app>

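For your own workflows you would write this file to suit your needs. Since this is the bundled example, nothing in it has to be changed.

The lib folder holds the jar files that the map-reduce job needs.

Now we can upload these files to Hadoop (HDFS):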

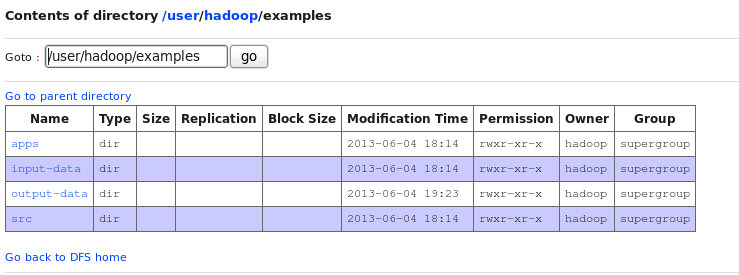

$ hadoop fs -mkdir /user/hadoop

$ hadoop fs -put /usr/oozie/examples /user/hadoop/
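Before submitting, it can be worth confirming that the upload landed where oozie.wf.application.path expects it. The sketch below uses `hadoop fs -test -e` to check for the three entries listed in step 2. The helper `app_paths` is a made-up name, and the directory assumes the job runs as user `hadoop`, matching the upload above.

```shell
#!/usr/bin/env bash
# Assumption: the application directory follows from the upload above (user "hadoop").
APP_DIR=/user/hadoop/examples/apps/map-reduce

# app_paths is a hypothetical helper: it lists the entries that should exist after the upload.
app_paths() {
    local dir="$1"
    printf '%s\n' "$dir/workflow.xml" "$dir/job.properties" "$dir/lib"
}

if command -v hadoop >/dev/null 2>&1; then
    # hadoop fs -test -e returns 0 when the path exists on HDFS.
    app_paths "$APP_DIR" | while read -r p; do
        if hadoop fs -test -e "$p"; then
            echo "ok       $p"
        else
            echo "MISSING  $p"
        fi
    done
else
    echo "hadoop not found on PATH; skipping the HDFS check"
fi
```

A MISSING line usually means the examples folder was uploaded to a different HDFS directory than the one the properties file resolves to.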

With that, the whole example has been uploaded. Now we can run it with Oozie:

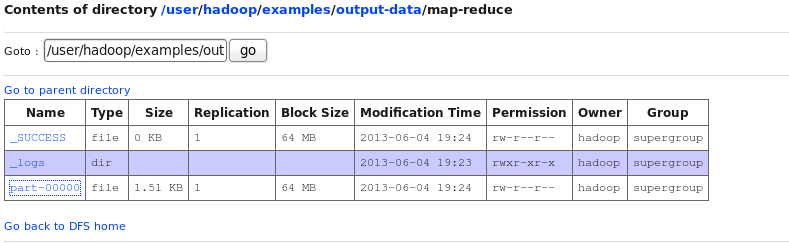

oozie job -oozie http://192.168.132.2:11000/oozie -config /usr/oozie/examples/apps/map-reduce/job.properties -run

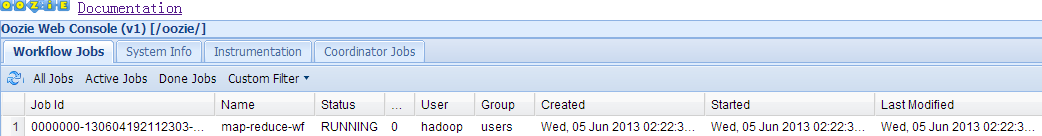

The command prints an ID, which is the ID of the job. Then open 192.168.132.2:11000/oozie in a browser to see how the job is progressing.
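The same status can also be read from the command line with the `-info` option of the Oozie client, which takes the job ID. The sketch below assumes the submission output has the form `job: <id>`; the helper names `extract_job_id` and `show_job_info` are made up, and the ID in the demo line is a placeholder rather than real output.

```shell
#!/usr/bin/env bash
OOZIE_URL=http://192.168.132.2:11000/oozie

# Hypothetical helper: pull the ID out of a "job: <id>" line read from stdin.
extract_job_id() {
    sed -n 's/^job:[[:space:]]*//p'
}

# Hypothetical helper: print the status report for one job ID.
show_job_info() {
    oozie job -oozie "$OOZIE_URL" -info "$1"
}

# Demo with a placeholder submission line (not real Oozie output).
job_id=$(printf 'job: 0000000-EXAMPLE-oozie-W\n' | extract_job_id)
echo "parsed job id: $job_id"

# Query the server only when the oozie client exists and an ID was passed as $1.
if command -v oozie >/dev/null 2>&1 && [ -n "${1:-}" ]; then
    show_job_info "$1"
fi
```

Saved under a name of your choosing and called with the ID printed at submission time, the script shows the same job state that the web console displays.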

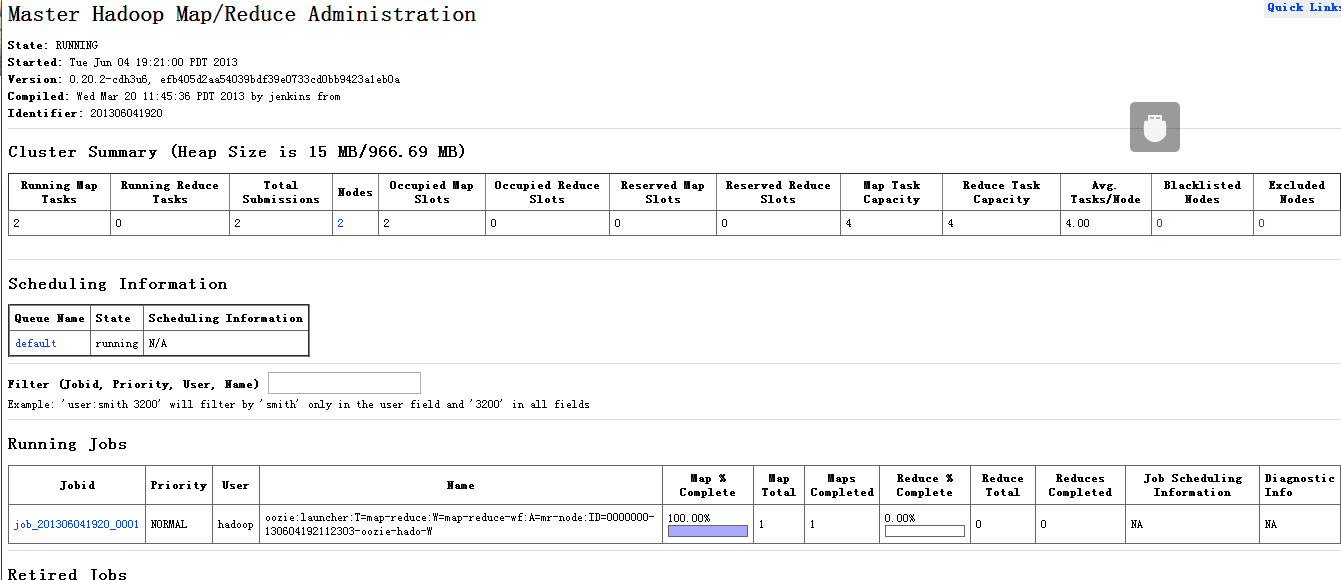

Screenshots of the job in progress:

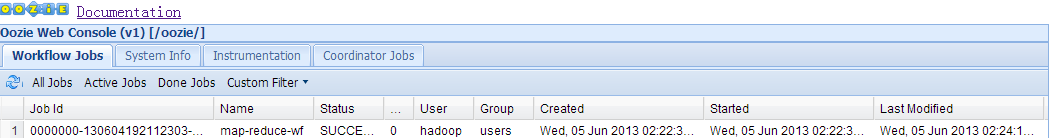

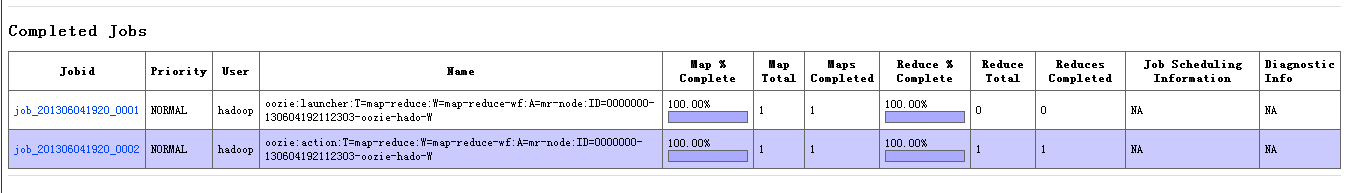

Job finished:
