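Recent Kafka releases all ship with a bundled ZooKeeper, but the bundled one is best kept for testing. For production, download a current stable ZooKeeper release yourself and set up a proper cluster. For the procedure, see my other article on building a ZooKeeper cluster: "[ZooKeeper] In Practice: Installation and Cluster Setup".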

I. Download Kafka

The latest Kafka version at the time of writing is 2.3.0, available from the official website.

The download is a file named kafka_2.12-2.3.0.tgz.
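The archive name encodes two versions: the Scala version Kafka was built with (2.12) and the Kafka release itself (2.3.0). Below is a minimal sketch that splits them apart with shell parameter expansion. The function name `kafka_versions` is made up for illustration and is not part of Kafka.

```shell
# Split a Kafka binary archive name of the form kafka_<scala>-<kafka>.tgz
# into its Scala and Kafka versions. `kafka_versions` is a hypothetical helper.
kafka_versions() (
  name=${1##*/}          # drop any leading directory
  name=${name%.tgz}      # kafka_2.12-2.3.0
  name=${name#kafka_}    # 2.12-2.3.0
  scala=${name%%-*}      # text before the first '-'
  kafka=${name#*-}       # text after the first '-'
  echo "scala=${scala} kafka=${kafka}"
)

kafka_versions kafka_2.12-2.3.0.tgz
```

For the file above this prints `scala=2.12 kafka=2.3.0`.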

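The source on GitHub has already moved on to 2.3.1.

However, the GitHub source cannot be used directly after downloading and unpacking: it is not a binary distribution and has to be built with Gradle first.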

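II. Installation

Upload the file to the /app/soft/ directory on your virtual machine.

1. Configure environment variables

This mainly means setting up the Java environment variables.

Most machines come with a JDK installed by default, and it is usually an old version: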

[root@test13 kafka]# java -version

java version "1.7.0_45"

OpenJDK Runtime Environment (rhel-2.4.3.3.el6-x86_64 u45-b15)

OpenJDK 64-Bit Server VM (build 24.45-b08, mixed mode)

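With this default JDK (Java 7), Kafka fails with an error on startup.

You can keep the old JDK and configure a new one alongside it, but here it is better to uninstall the old one first.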

[root@test13 kafka]# rpm -qa | grep jdk

java-1.7.0-openjdk-1.7.0.45-2.4.3.3.el6.x86_64

java-1.6.0-openjdk-1.6.0.0-1.66.1.13.0.el6.x86_64

[root@test13 kafka]# rpm -e java-1.7.0-openjdk-1.7.0.45-2.4.3.3.el6.x86_64

[root@test13 kafka]# rpm -e java-1.6.0-openjdk-1.6.0.0-1.66.1.13.0.el6.x86_64

I use the app user, for example, so I configure it in that user's home directory:

[app@test13 ~]$ vi ~/.bashrc

# .bashrc

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

# User specific aliases and functions

JAVA_HOME=/app/soft/jdk1.8.0_131

PATH=$JAVA_HOME/bin:$PATH

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export JAVA_HOME

export PATH

export CLASSPATH

Source the file and check the Java version:

[app@test13 ~]$ source .bashrc

[app@test13 ~]$ java -version

java version "1.8.0_131"
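The version check can also be scripted. The sketch below parses the first line of a `java -version` banner and tests whether it reports Java 8 or newer, which is what this Kafka release needs according to the troubleshooting notes in this article. The helper names `java_major` and `java_ok_for_kafka` are hypothetical, and the parsing assumes the usual `version "..."` banner format.

```shell
# Print the major Java version found in a `java -version` banner line.
# `java_major` and `java_ok_for_kafka` are hypothetical helper names.
java_major() (
  # Extract the quoted version string, e.g. 1.8.0_131 or 11.0.2
  v=$(printf '%s\n' "$1" | sed -n 's/.*version "\([^"]*\)".*/\1/p')
  case "$v" in
    1.*) v=${v#1.}; echo "${v%%.*}" ;;   # legacy scheme: 1.8.0_131 -> 8
    *)   echo "${v%%.*}" ;;              # newer scheme: 11.0.2 -> 11
  esac
)

# Succeed only if the banner reports Java 8 or newer.
java_ok_for_kafka() {
  [ "$(java_major "$1")" -ge 8 ] 2>/dev/null
}

# Usage against the installed JVM (java -version writes to stderr):
# java_ok_for_kafka "$(java -version 2>&1 | head -n 1)" && echo "JDK is new enough"
```

With the two banners shown in this section, the check fails for `1.7.0_45` and passes for `1.8.0_131`.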

2. Extract the archive

[app@node1 soft]$ tar -xf kafka_2.12-2.3.0.tgz

[app@node1 soft]$ ll

total 64896

-rw-r--r-- 1 root root 9230052 Nov 4 14:36 apache-zookeeper-3.5.6-bin.tar.gz

drwx--x--x. 15 app app 200 Jul 24 14:54 docker

drwxr-xr-x 3 app app 140 Jul 22 09:35 harbor

drwxr-xr-x 8 app app 255 Mar 15 2017 jdk1.8.0_131

drwxr-xr-x 6 app app 89 Jun 20 04:44 kafka_2.12-2.3.0

-rw-rw-r-- 1 app app 57215197 Nov 11 09:52 kafka_2.12-2.3.0.tgz

drwxr-xr-x. 7 app app 67 Jun 13 14:52 keepalived

drwxr-xr-x. 6 app app 104 Nov 4 09:44 predixy-1.0.5

drwxrwxr-x. 7 app app 4096 Jun 17 16:27 redis-5.0.5

drwxr-xr-x 8 app app 158 Nov 4 15:50 zookeeper

drwxrwxr-x 8 app app 158 Sep 6 17:00 zookeeper_old

[app@node1 soft]$ mv kafka_2.12-2.3.0 kafka

[app@node1 soft]$ ll

total 64896

-rw-r--r-- 1 root root 9230052 Nov 4 14:36 apache-zookeeper-3.5.6-bin.tar.gz

drwx--x--x. 15 app app 200 Jul 24 14:54 docker

drwxr-xr-x 3 app app 140 Jul 22 09:35 harbor

drwxr-xr-x 8 app app 255 Mar 15 2017 jdk1.8.0_131

drwxr-xr-x 6 app app 89 Jun 20 04:44 kafka

-rw-rw-r-- 1 app app 57215197 Nov 11 09:52 kafka_2.12-2.3.0.tgz

drwxr-xr-x. 7 app app 67 Jun 13 14:52 keepalived

drwxr-xr-x. 6 app app 104 Nov 4 09:44 predixy-1.0.5

drwxrwxr-x. 7 app app 4096 Jun 17 16:27 redis-5.0.5

drwxr-xr-x 8 app app 158 Nov 4 15:50 zookeeper

drwxrwxr-x 8 app app 158 Sep 6 17:00 zookeeper_old

3. Start the broker

[app@test13 kafka]$ bin/kafka-server-start.sh config/server.properties

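Startup has succeeded when the log reports that the server has started, with a line such as [KafkaServer id=0] started (kafka.server.KafkaServer).

Problems you may run into during startup:

(1) JDK problems

Solution: check the JDK version and use JDK 1.8 or later.

(2) Hostname resolution problems

Startup fails with the following error: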

[2019-11-11 09:02:54,155] ERROR [KafkaServer id=0] Fatal error during KafkaServer startup. Prepare to shutdown (kafka.server.KafkaServer)

java.net.UnknownHostException: test13.localdomain: test13.localdomain: Name or service not known

at java.net.InetAddress.getLocalHost(InetAddress.java:1505)

at kafka.server.KafkaServer.$anonfun$createBrokerInfo$7(KafkaServer.scala:412)

at scala.collection.TraversableLike.$anonfun$map$1(TraversableLike.scala:237)

at scala.collection.mutable.ResizableArray.foreach(ResizableArray.scala:62)

at scala.collection.mutable.ResizableArray.foreach$(ResizableArray.scala:55)

at scala.collection.mutable.ArrayBuffer.foreach(ArrayBuffer.scala:49)

at scala.collection.TraversableLike.map(TraversableLike.scala:237)

at scala.collection.TraversableLike.map$(TraversableLike.scala:230)

at scala.collection.AbstractTraversable.map(Traversable.scala:108)

at kafka.server.KafkaServer.createBrokerInfo(KafkaServer.scala:410)

at kafka.server.KafkaServer.startup(KafkaServer.scala:261)

at kafka.server.KafkaServerStartable.startup(KafkaServerStartable.scala:38)

at kafka.Kafka$.main(Kafka.scala:84)

at kafka.Kafka.main(Kafka.scala)

Caused by: java.net.UnknownHostException: test13.localdomain: Name or service not known

at java.net.Inet6AddressImpl.lookupAllHostAddr(Native Method)

at java.net.InetAddress$2.lookupAllHostAddr(InetAddress.java:928)

at java.net.InetAddress.getAddressesFromNameService(InetAddress.java:1323)

at java.net.InetAddress.getLocalHost(InetAddress.java:1500)

... 13 more

[2019-11-11 09:02:54,175] INFO [KafkaServer id=0] shutting down (kafka.server.KafkaServer)

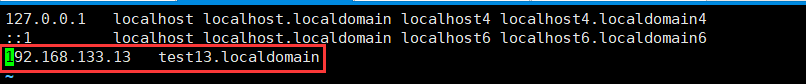

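The cause is that the hostname cannot be resolved to an address: the hostname is set in /etc/sysconfig/network, but /etc/hosts has no matching entry. The fix is to add a line to /etc/hosts that maps the hostname to the machine's IP address.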
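As a rough illustration of that fix, the sketch below checks a hosts file for the hostname and, if it is missing, prints the line that would need to be added. The helper name `hosts_entry_needed` is hypothetical, and the IP address 192.168.133.13 used in the usage comment is an assumption; substitute the real address of the machine.

```shell
# Print the hosts-file line that maps a hostname to an IP address, unless the
# given hosts file already lists that hostname. Prints nothing if it is present.
# `hosts_entry_needed` is a hypothetical helper, not a system tool.
hosts_entry_needed() (
  hosts_file=$1; ip=$2; fqdn=$3
  short=${fqdn%%.*}      # test13.localdomain -> test13
  awk -v h="$fqdn" '
    $1 !~ /^#/ {                       # skip comment lines
      for (i = 2; i <= NF; i++) {
        if ($i ~ /^#/) break           # stop at a trailing comment
        if ($i == h) found = 1
      }
    }
    END { exit !found }
  ' "$hosts_file" || echo "$ip $fqdn $short"
)

# Usage (the IP is an assumed example; append the printed line as root):
# hosts_entry_needed /etc/hosts 192.168.133.13 test13.localdomain
```

The function only prints the suggested line; it does not modify /etc/hosts, so the result can be reviewed before it is appended.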

Kafka uses ZooKeeper to manage the cluster. Now that Kafka is running, let's see what has been added to ZooKeeper:

[zk: localhost:2181(CONNECTED) 0] ls /

[admin, brokers, cluster, config, consumers, controller, controller_epoch, isr_change_notification, latest_producer_id_block, log_dir_event_notification, zookeeper]

Without digging into what is actually stored, the relationship between the two stays hazy, so for now let's try a few commands and look at the contents:

[zk: localhost:2181(CONNECTED) 2] get /admin

null

[zk: localhost:2181(CONNECTED) 3] get config

Path must start with / character

[zk: localhost:2181(CONNECTED) 4] get /config

null

[zk: localhost:2181(CONNECTED) 5] ls /config

[brokers, changes, clients, topics, users]

[zk: localhost:2181(CONNECTED) 6] get /config/brokers

null

[zk: localhost:2181(CONNECTED) 7] ls /config/brokers

[]

[zk: localhost:2181(CONNECTED) 8] ls /

[admin, brokers, cluster, config, consumers, controller, controller_epoch, foo, isr_change_notification, latest_producer_id_block, log_dir_event_notification, node_test, node_test_ciphertext, zookeeper]

[zk: localhost:2181(CONNECTED) 9] ls /isr_change_notification

[]

[zk: localhost:2181(CONNECTED) 10] get /isr_change_notification

null

[zk: localhost:2181(CONNECTED) 11] get /con

config consumers controller controller_epoch

[zk: localhost:2181(CONNECTED) 11] get /controller

controller controller_epoch

[zk: localhost:2181(CONNECTED) 11] get /controller

{"version":1,"brokerid":0,"timestamp":"1573438482621"}

[zk: localhost:2181(CONNECTED) 12] get /cluster

null

[zk: localhost:2181(CONNECTED) 13] ls /cluster

[id]

[zk: localhost:2181(CONNECTED) 14] ls /cluster/id

[]

[zk: localhost:2181(CONNECTED) 15] get /cluster/id

{"version":"1","id":"2dBkXzIaSMSSxKrVxajKFA"}

[zk: localhost:2181(CONNECTED) 16] ls /

[admin, brokers, cluster, config, consumers, controller, controller_epoch, foo, isr_change_notification, latest_producer_id_block, log_dir_event_notification, node_test, node_test_ciphertext, zookeeper]

[zk: localhost:2181(CONNECTED) 17] ls /brokers

[ids, seqid, topics]

[zk: localhost:2181(CONNECTED) 18] ls /brokers/ids

[0]

[zk: localhost:2181(CONNECTED) 19] ls /brokers/ids/0

[]

[zk: localhost:2181(CONNECTED) 20] get /brokers/ids/0

{"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://test13.localdomain:9092"],"jmx_port":-1,"host":"test13.localdomain","timestamp":"1573438482605","port":9092,"version":4}

[zk: localhost:2181(CONNECTED) 21] ls /admin/delete_topics

[]

[zk: localhost:2181(CONNECTED) 22] get /admin/delete_topics

null

[zk: localhost:2181(CONNECTED) 23] ls /admin/delete_topics/

Path must not end with / character

[zk: localhost:2181(CONNECTED) 24] quit

WATCHER::

WatchedEvent state:Closed type:None path:null

2019-11-11 11:00:02,498 [myid:] - INFO [main:ZooKeeper@1422] - Session: 0x1000007c9660000 closed

2019-11-11 11:00:02,499 [myid:] - INFO [main-EventThread:ClientCnxn$EventThread@524] - EventThread shut down for session: 0x1000007c9660000

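This is roughly what one would guess: ZooKeeper holds Kafka's cluster metadata, such as which brokers exist, consumer information, ISR information, and controller information. For details, see my other article: "[Kafka] Basic Concepts".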
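The broker registration at /brokers/ids/0 is the znode clients ultimately depend on, since it carries the endpoints the broker advertises. A minimal sketch of pulling those endpoints out of the JSON shown in the session above follows. It uses plain sed text matching rather than a JSON parser, so treat it as an illustration only; `broker_endpoints` is a hypothetical name.

```shell
# Print the advertised endpoints from the JSON stored at /brokers/ids/<id>,
# one per line. Plain-text matching only; a real JSON parser is more robust.
# `broker_endpoints` is a hypothetical helper.
broker_endpoints() {
  printf '%s\n' "$1" |
    sed -n 's/.*"endpoints":\[\([^]]*\)].*/\1/p' |   # keep the array contents
    tr ',' '\n' |                                    # one endpoint per line
    tr -d '"'                                        # strip the JSON quotes
}

broker_endpoints '{"listener_security_protocol_map":{"PLAINTEXT":"PLAINTEXT"},"endpoints":["PLAINTEXT://test13.localdomain:9092"],"jmx_port":-1,"host":"test13.localdomain","timestamp":"1573438482605","port":9092,"version":4}'
```

For the registration above this prints `PLAINTEXT://test13.localdomain:9092`, which shows that the broker advertises its hostname, so clients must be able to resolve that name as well.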

III. Configuration file

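| Name | Description | Type | Default | Valid values | Importance |
|---|---|---|---|---|---|
| zookeeper.connect | ZooKeeper connection string in the form hostname:port. For a cluster, separate the members with commas: hostname1:port1,hostname2:port2,hostname3:port3 | string | | | high |
| advertised.listeners | Listeners published for clients to use, e.g. advertised.listeners=PLAINTEXT://your.host.name:9092 | string | null | | high |
| listeners | Address and port that Kafka binds to, in the form listeners=listener_name://host_name:port | string | null | | high |
| broker.id | ID of this broker; must be unique within a cluster | int | -1 | | high |
| broker.id.generation.enable | Whether the broker ID is generated automatically | boolean | true | | medium |
| log.dir | Directory where log data is kept (used only when log.dirs is not set) | string | /tmp/kafka-logs | | high |
| log.dirs | Directories where log data is kept; falls back to log.dir when not set | string | null | | high |
| message.max.bytes | Largest record batch size Kafka allows. Can be overridden per topic with the topic-level max.message.bytes setting | int | 1000012 | [0,…] | high |
| num.partitions | Default number of log partitions per topic, used when none is specified at creation | int | 1 | [1,…] | medium |
| log.segment.bytes | Maximum size of a single log segment file | int | 1073741824 | [14,…] | high |
| log.retention.hours | Maximum time a log file is kept before deletion, in hours | int | 168 | | high |
| log.retention.bytes | Maximum size the log of each partition may reach before old segments are deleted | long | -1 | | high |
| zookeeper.session.timeout.ms | ZooKeeper session timeout | int | 6000 | | high |
| zookeeper.connection.timeout.ms | Maximum time the client waits to establish a connection to ZooKeeper | int | null (falls back to zookeeper.session.timeout.ms; the shipped config/server.properties sets 6000) | | high |
| zookeeper.sync.time.ms | How far a ZK follower may lag behind the ZK leader | int | 2000 | | low |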

These are the most commonly used settings; for the full list, see the official documentation.
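Before changing any of these settings it helps to see what a given server.properties currently contains. The sketch below reads one key from a properties file. `get_prop` is a hypothetical helper that handles only simple `key=value` lines (no line continuations and no `:` separators), which is an assumption about how the file is written.

```shell
# Print the value of one key from a Java-style .properties file.
# `get_prop` is a hypothetical helper; it handles simple key=value lines only.
# Exits non-zero if the key is not defined.
get_prop() (
  file=$1; key=$2
  awk -v k="$key" '
    $0 !~ /^[ \t]*#/ {                      # skip comment lines
      i = index($0, "=")                    # split on the first "="
      if (i > 0) {
        name = substr($0, 1, i - 1)
        gsub(/^[ \t]+|[ \t]+$/, "", name)   # trim the key
        if (name == k) { val = substr($0, i + 1); hit = 1 }   # last one wins
      }
    }
    END { if (hit) print val; exit !hit }
  ' "$file"
)

# Usage, assuming the install path used in this article:
# get_prop /app/soft/kafka/config/server.properties zookeeper.connect
```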

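IV. Setting up a cluster

This assumes you already have a ZooKeeper cluster running.

Server list

| IP | OS release |
|---|---|
| 192.168.133.13 | CentOS 6.5 |
| 192.168.133.14 | CentOS 7.6 |
| 192.168.133.15 | CentOS 7.6 |

First, change the following settings in config/server.properties on each node: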

192.168.133.13

broker.id=0

listeners=PLAINTEXT://192.168.133.13:9092

log.dirs=/app/kafka-logs

zookeeper.connect=192.168.133.13:2181,192.168.133.14:2181,192.168.133.15:2181

192.168.133.14

broker.id=1

listeners=PLAINTEXT://192.168.133.14:9092

log.dirs=/app/kafka-logs

zookeeper.connect=192.168.133.13:2181,192.168.133.14:2181,192.168.133.15:2181

192.168.133.15

broker.id=2

listeners=PLAINTEXT://192.168.133.15:9092

log.dirs=/app/kafka-logs

zookeeper.connect=192.168.133.13:2181,192.168.133.14:2181,192.168.133.15:2181
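The three blocks differ only in broker.id and the listener address, so they can be produced from a small template. The sketch below prints the four settings for one node; `broker_overrides` is a hypothetical helper, and the port 9092 and the /app/kafka-logs directory are taken from the values above.

```shell
# Print the per-broker settings for one node of the cluster described above.
# `broker_overrides` is a hypothetical helper. Arguments: broker id, this
# node's IP address, and the comma-separated ZooKeeper connect string.
broker_overrides() {
  cat <<EOF
broker.id=$1
listeners=PLAINTEXT://$2:9092
log.dirs=/app/kafka-logs
zookeeper.connect=$3
EOF
}

ZK=192.168.133.13:2181,192.168.133.14:2181,192.168.133.15:2181
broker_overrides 1 192.168.133.14 "$ZK"
```

The output is meant to be compared against, or copied into, the existing keys in config/server.properties. Appending it blindly would leave duplicate keys in the file.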

Start the Kafka cluster by running the following on each node:

/app/soft/kafka/bin/kafka-server-start.sh /app/soft/kafka/config/server.properties
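Once all three nodes are started, each broker should appear under /brokers/ids in ZooKeeper, as seen earlier for the single broker. As a rough sketch, the helper below takes the bracketed list printed by `ls /brokers/ids` in the ZooKeeper shell and reports which expected broker IDs are missing. `missing_brokers` is a hypothetical name, and the `[0, 2]` input is an invented example, not real output from this cluster.

```shell
# Given the list printed by `ls /brokers/ids` (e.g. "[0, 1, 2]") followed by
# the broker ids you expect, print every expected id that has not registered.
# `missing_brokers` is a hypothetical helper.
missing_brokers() (
  # "[0, 2]" -> "0 2"
  registered=$(printf '%s' "$1" | sed 's/[][ ]//g' | tr ',' ' ')
  shift
  for id in "$@"; do
    case " $registered " in
      *" $id "*) ;;          # this broker is registered
      *) echo "$id" ;;       # expected but absent
    esac
  done
)

# Invented example: broker 1 has not registered yet
missing_brokers "[0, 2]" 0 1 2
```

Here the function prints `1`. With `[0, 1, 2]` it prints nothing, meaning all three brokers from the configuration above are registered. The start script also accepts a `-daemon` option before the properties file if the broker should keep running in the background.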
