hadoop fs

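hadoop fs -ls /  # list a directory
hadoop fs -lsr  # list recursively
hadoop fs -mkdir /user/hadoop  # create a directory
hadoop fs -put a.txt /user/hadoop/  # upload a local file
hadoop fs -get /user/hadoop/a.txt /  # download to the local file system
hadoop fs -cp src dst  # copy within HDFS
hadoop fs -mv src dst  # move or rename within HDFS
hadoop fs -cat /user/hadoop/a.txt  # print file contents
hadoop fs -rm /user/hadoop/a.txt  # delete a file
hadoop fs -rmr /user/hadoop/a.txt  # delete recursively
hadoop fs -text /user/hadoop/a.txt  # output the file in text format
hadoop fs -copyFromLocal localsrc dst  # similar to hadoop fs -put
hadoop fs -moveFromLocal localsrc dst  # upload a local file to HDFS and delete the local copy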

hadoop dfsadmin

# Report basic file system information and statistics

hadoop dfsadmin -report

hadoop dfsadmin -safemode enter | leave | get | wait

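# Safe mode maintenance command. Safe mode is a Namenode state in which the Namenode:

# 1. does not accept changes to the namespace (read-only);

# 2. does not replicate or delete blocks.

# The Namenode enters safe mode automatically at startup and leaves it automatically once the configured minimum percentage of blocks satisfies the minimum replication requirement. Safe mode can also be entered manually, but in that case it must also be left manually.

hadoop fsck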

Runs the HDFS file system checking utility.

hadoop fsck [GENERIC_OPTIONS] <path> [-move | -delete | -openforwrite] [-files [-blocks [-locations | -racks]]]

start-balancer.sh

Create the code directories

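Configure the host name hadoop: open the hosts file (enter your password when sudo asks for it) and append hadoop to the end of the last line.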

sudo vim /etc/hosts

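# Append hadoop to the end of the last line; afterwards it should look similar to the following (use the Tab key to insert the separating whitespace):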

# 172.17.2.98 f738b9456777 hadoop

ping hadoop

Start Hadoop

cd /app/hadoop-1.1.2/bin

./start-all.sh

jps  # check the running processes to make sure the Hadoop daemons have started

Create the myclass and input directories

cd /app/hadoop-1.1.2/

rm -rf myclass input

mkdir -p myclass input

Create a sample file and upload it to HDFS

Go to the /app/hadoop-1.1.2/input directory and create the quangle.txt file there.

cd /app/hadoop-1.1.2/input

touch quangle.txt

vi quangle.txt

The file content is:

On the top of the Crumpetty Tree

The Quangle Wangle sat,

But his face you could not see,

On account of his Beaver Hat.

Create the /class4 directory in HDFS with the following command:

hadoop fs -mkdir /class4

hadoop fs -ls /

Upload the sample file to the /class4 directory in HDFS:

cd /app/hadoop-1.1.2/input

hadoop fs -copyFromLocal quangle.txt /class4/quangle.txt

hadoop fs -ls /class4

Configure the local environment

Configure hadoop-env.sh in the /app/hadoop-1.1.2/conf directory as follows:

cd /app/hadoop-1.1.2/conf

sudo vi hadoop-env.sh

Add a HADOOP_CLASSPATH variable with the value /app/hadoop-1.1.2/myclass, then source the file so that the setting takes effect:

export HADOOP_CLASSPATH=/app/hadoop-1.1.2/myclass

Write the code

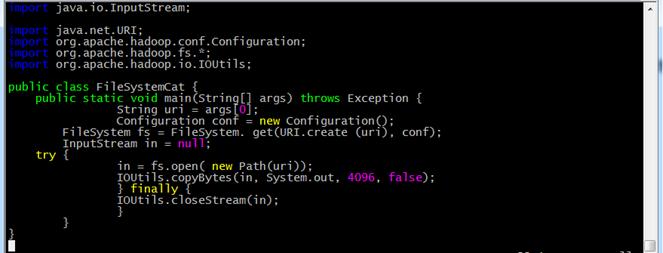

Go to the /app/hadoop-1.1.2/myclass directory and create the FileSystemCat.java source file there:

cd /app/hadoop-1.1.2/myclass/

vi FileSystemCat.java

Enter the code:

Compile the code

In the /app/hadoop-1.1.2/myclass directory, compile the code with the following command:

javac -classpath ../hadoop-core-1.1.2.jar FileSystemCat.java
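The FileSystemCat source is not listed in the text. A common version of this program (for example, the one in *Hadoop: The Definitive Guide*) opens the file with `FileSystem.get(URI.create(uri), conf).open(new Path(uri))` and copies it to standard output with `IOUtils.copyBytes(in, System.out, 4096, false)`. The sketch below reproduces essentially that buffered copy loop with plain `java.io` so that it compiles and runs without the Hadoop jar; `CopyLoopSketch` and its `copy` method are hypothetical names, not part of Hadoop, and the `main` method reads a local file instead of an HDFS one.

```java
import java.io.FileInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

// Hypothetical helper (not part of Hadoop): a plain java.io version of the
// buffered copy loop that IOUtils.copyBytes(in, out, 4096, false) performs.
final class CopyLoopSketch {

    // Copies every byte from in to out through a fixed-size buffer and returns
    // the number of bytes copied. Neither stream is closed (like close=false).
    static long copy(InputStream in, OutputStream out, int bufferSize) throws IOException {
        byte[] buffer = new byte[bufferSize];
        long total = 0;
        int n;
        // read() returns -1 at end of stream; otherwise write exactly what was read
        while ((n = in.read(buffer)) != -1) {
            out.write(buffer, 0, n);
            total += n;
        }
        out.flush();
        return total;
    }

    // Local stand-in for FileSystemCat: the stream comes from a local file here,
    // whereas FileSystemCat would obtain it from FileSystem.open(new Path(uri)).
    public static void main(String[] args) throws IOException {
        try (InputStream in = new FileInputStream(args[0])) {
            copy(in, System.out, 4096);
        }
    }
}
```

For example, `java CopyLoopSketch /app/hadoop-1.1.2/input/quangle.txt` prints the local copy of the poem, which is what `hadoop FileSystemCat /class4/quangle.txt` prints for the HDFS copy.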

Use the compiled code to read an HDFS file

Read the contents of /class4/quangle.txt in HDFS with the following command:

hadoop FileSystemCat /class4/quangle.txt

Create a text file of more than 120 bytes on the local file system, then write a program that reads it and writes bytes 101-120 into a new file in HDFS.

//注意:在编译前请先删除中文注释!

import java.io.File;

import java.io.FileInputStream;

import java.io.FileOutputStream;

import java.io.OutputStream;

import java.net.URI;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FSDataInputStream;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IOUtils;

import org.apache.hadoop.util.Progressable;

public class LocalFile2Hdfs {

public static void main(String[] args) throws Exception {

// 获取读取源文件和目标文件位置参数

String local = args[0];

String uri = args[1];

FileInputStream in = null;

OutputStream out = null;

Configuration conf = new Configuration();

try {

// 获取读入文件数据

in = new FileInputStream(new File(local));

// 获取目标文件信息

FileSystem fs = FileSystem.get(URI.create(uri), conf);

out = fs.create(new Path(uri), new Progressable() {

@Override

public void progress() {

System.out.println("*");

}

});

// 跳过前100个字符

in.skip(100);

byte[] buffer = new byte[20];

// 从101的位置读取20个字符到buffer中

int bytesRead = in.read(buffer);

if (bytesRead >= 0) {

out.write(buffer, 0, bytesRead);

}

} finally {

IOUtils.closeStream(in);

IOUtils.closeStream(out);

}

}

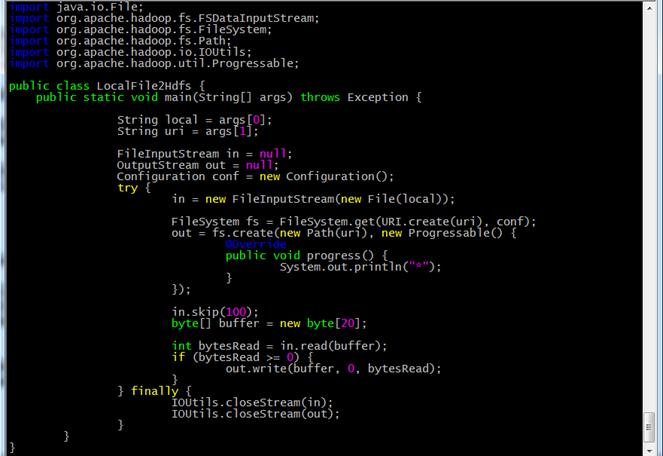

}编写代码

Go to the /app/hadoop-1.1.2/myclass directory and create the LocalFile2Hdfs.java source file there:

cd /app/hadoop-1.1.2/myclass/

vi LocalFile2Hdfs.java

Enter the code shown above.

Compile the code

In the /app/hadoop-1.1.2/myclass directory, compile the code with the following command:

javac -classpath ../hadoop-core-1.1.2.jar LocalFile2Hdfs.java

Create a test file

Go to the /app/hadoop-1.1.2/input directory and create the local2hdfs.txt file there.

cd /app/hadoop-1.1.2/input/

vi local2hdfs.txt

The file content is:

Washington (CNN) -- Twitter is suing the U.S. government in an effort to loosen restrictions on what the social media giant can say publicly about the national security-related requests it receives for user data.

The company filed a lawsuit against the Justice Department on Monday in a federal court in northern California, arguing that its First Amendment rights are being violated by restrictions that forbid the disclosure of how many national security letters and Foreign Intelligence Surveillance Act court orders it receives -- even if that number is zero.

Twitter vice president Ben Lee wrote in a blog post that it's suing in an effort to publish the full version of a "transparency report" prepared this year that includes those details.

The San Francisco-based firm was unsatisfied with the Justice Department's move in January to allow technological firms to disclose the number of national security-related requests they receive in broad ranges.

Use the compiled code to upload part of the file to HDFS

Use the following command to write bytes 101-120 of local2hdfs.txt into a new HDFS file:

cd /app/hadoop-1.1.2/input

hadoop LocalFile2Hdfs local2hdfs.txt /class4/local2hdfs_part.txt
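Both `InputStream.skip()` and `InputStream.read()` are allowed to process fewer bytes than requested, so a robust range read loops until it has skipped `offset` bytes and filled `length` bytes (or reached end of file). The JDK-only sketch below shows that loop and applies it to the beginning of local2hdfs.txt, which lets you predict what `hadoop fs -cat /class4/local2hdfs_part.txt` should print. `ByteRangeSketch` and `readRange` are hypothetical names, and the sketch assumes the file starts exactly with the ASCII text shown above.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

// Hypothetical helper: reads bytes [offset, offset + length) from a stream,
// looping because skip() and read() may both return short counts.
final class ByteRangeSketch {

    static byte[] readRange(InputStream in, long offset, int length) throws IOException {
        // Skip forward; if skip() makes no progress, read one byte to detect EOF
        long remaining = offset;
        while (remaining > 0) {
            long skipped = in.skip(remaining);
            if (skipped > 0) {
                remaining -= skipped;
            } else if (in.read() == -1) {
                return new byte[0]; // stream is shorter than offset
            } else {
                remaining--;
            }
        }
        // Fill the buffer, stopping early at end of stream
        byte[] buffer = new byte[length];
        int filled = 0;
        while (filled < length) {
            int n = in.read(buffer, filled, length - filled);
            if (n == -1) {
                break;
            }
            filled += n;
        }
        return Arrays.copyOf(buffer, filled);
    }

    public static void main(String[] args) throws IOException {
        String firstLine = "Washington (CNN) -- Twitter is suing the U.S. government in an effort"
                + " to loosen restrictions on what the social media giant can say publicly";
        InputStream in = new ByteArrayInputStream(firstLine.getBytes(StandardCharsets.US_ASCII));
        // Bytes 101-120 (1-based) are offsets 100..119 (0-based)
        byte[] part = readRange(in, 100, 20);
        System.out.println("[" + new String(part, StandardCharsets.US_ASCII) + "]");
    }
}
```

Running `java ByteRangeSketch` prints `[ the social media gi]`; the brackets only make the leading space visible, so the new HDFS file should contain those 20 bytes without them.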

hadoop fs -ls /class4

Verify the result

Read the contents of local2hdfs_part.txt with the following command:

hadoop fs -cat /class4/local2hdfs_part.txt

The reverse of lab case 3: create a text file of more than 120 bytes in HDFS, then write a program that reads it and writes bytes 101-120 into a new file on the local file system.

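import java.io.FileOutputStream;
import java.io.OutputStream;
import java.net.URI;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;

public class Hdfs2LocalFile {

    public static void main(String[] args) throws Exception {
        if (args.length != 2) {
            System.err.println("Usage: Hdfs2LocalFile <hdfs-source> <local-destination>");
            System.exit(1);
        }
        // Source (HDFS URI or path) and destination (local file) from the command line
        String uri = args[0];
        String local = args[1];

        FSDataInputStream in = null;
        OutputStream out = null;
        Configuration conf = new Configuration();
        try {
            // Open the HDFS source file and create the local destination file
            FileSystem fs = FileSystem.get(URI.create(uri), conf);
            in = fs.open(new Path(uri));
            out = new FileOutputStream(local);

            // FSDataInputStream is seekable: jump straight to offset 100 (the 101st byte)
            in.seek(100);

            // Read bytes 101-120 (up to 20 bytes); read() may return short counts, so loop
            byte[] buffer = new byte[20];
            int filled = 0;
            while (filled < buffer.length) {
                int n = in.read(buffer, filled, buffer.length - filled);
                if (n == -1) {
                    break;
                }
                filled += n;
            }
            out.write(buffer, 0, filled);
        } finally {
            IOUtils.closeStream(in);
            IOUtils.closeStream(out);
        }
    }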

}
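`FSDataInputStream` implements Hadoop's `Seekable` interface, so a program like Hdfs2LocalFile can position the stream at offset 100 with `seek(100)` instead of reading past the first 100 bytes. For comparison, the sketch below applies the same seek-then-read pattern to a local file with `java.io.RandomAccessFile`; it is a JDK-only illustration under the hypothetical name `SeekRangeSketch` and does not access HDFS.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.util.Arrays;

// Hypothetical local-file analogue of a seek-then-read range copy.
final class SeekRangeSketch {

    static byte[] readRange(String path, long offset, int length) throws IOException {
        try (RandomAccessFile file = new RandomAccessFile(path, "r")) {
            // Jump directly to the offset; seeking past EOF is allowed and
            // simply makes the next read() return -1
            file.seek(offset);
            byte[] buffer = new byte[length];
            int filled = 0;
            while (filled < length) {
                int n = file.read(buffer, filled, length - filled);
                if (n == -1) {
                    break; // end of file before length bytes were read
                }
                filled += n;
            }
            return Arrays.copyOf(buffer, filled);
        }
    }

    // Usage: java SeekRangeSketch <local-file>  (prints bytes 101-120)
    public static void main(String[] args) throws IOException {
        byte[] part = readRange(args[0], 100, 20);
        System.out.write(part, 0, part.length);
        System.out.flush();
    }
}
```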
