I. Prerequisites

1.1 Hadoop

Version: Hadoop 2.6.5

1.2 MySQL

Version: 5.6.33 MySQL Community Server (GPL)

1.3 MySQL JDBC driver

Version: mysql-connector-java-5.1.40-bin.jar

1.4 Hive installation package

Downloaded from the official Apache Hive site: apache-hive-2.1.1-bin.tar.gz
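Before starting, a small shell check can confirm these prerequisites are in place. This is a minimal sketch: the function name check_prereqs is hypothetical, java is included because both Hadoop and Hive run on a JDK, and it assumes both downloaded files (the Hive tarball and the driver jar) sit in /opt/software, which this guide only states for the tarball.

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for the prerequisites in section I.
# $1 = directory holding the downloads (assumed: /opt/software)
check_prereqs() {
  local sw_dir="${1:-/opt/software}" status=0 cmd f
  # The Hadoop, MySQL and Java command-line tools should be on PATH.
  for cmd in hadoop mysql java; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "not on PATH: $cmd" >&2
      status=1
    fi
  done
  # Both downloaded files should be present (their location is an assumption).
  for f in apache-hive-2.1.1-bin.tar.gz mysql-connector-java-5.1.40-bin.jar; do
    if [ ! -f "$sw_dir/$f" ]; then
      echo "missing: $sw_dir/$f" >&2
      status=1
    fi
  done
  return "$status"
}
```

Once it passes, hadoop version and mysql --version show whether the installed versions match the ones listed above.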

II. Installing Hive

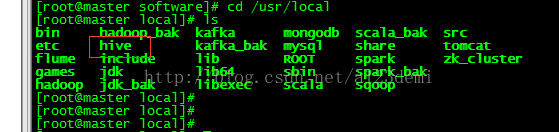

2.1 Deploying Hive

#Change to the download directory

cd /opt/software

#Extract

tar -zxvf apache-hive-2.1.1-bin.tar.gz

#Copy the extracted directory to /usr/local/hive

cp -r apache-hive-2.1.1-bin /usr/local/hive
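The same three steps can be wrapped in a re-runnable shell function. This is a minimal sketch assuming the tarball is in /opt/software and unpacks to apache-hive-2.1.1-bin; the name deploy_hive and its parameters are hypothetical. It stops if the target already exists, because cp -r into an existing /usr/local/hive would nest the copy as /usr/local/hive/apache-hive-2.1.1-bin instead of replacing it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: unpack the Hive tarball and copy it to the install dir.
# $1 = directory holding the tarball (e.g. /opt/software)
# $2 = install target (e.g. /usr/local/hive)
deploy_hive() {
  local src_dir="$1" target="$2"
  local tarball="apache-hive-2.1.1-bin.tar.gz"
  local unpacked="apache-hive-2.1.1-bin"

  if [ ! -f "$src_dir/$tarball" ]; then
    echo "missing $src_dir/$tarball" >&2
    return 1
  fi
  # cp -r into an existing directory would nest the copy one level deeper,
  # so refuse instead of producing $target/apache-hive-2.1.1-bin.
  if [ -e "$target" ]; then
    echo "$target already exists; remove it first" >&2
    return 1
  fi
  # Extract inside the source dir, then copy the unpacked tree to the target.
  tar -zxf "$src_dir/$tarball" -C "$src_dir" || return 1
  cp -r "$src_dir/$unpacked" "$target"
}
```

Usage: deploy_hive /opt/software /usr/local/hive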

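2.2 Adding the MySQL driver

Copy the MySQL driver jar from 1.3 into the $HIVE_HOME/lib directory (/usr/local/hive/lib; HIVE_HOME itself is set in 4.1).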
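A minimal sketch of that copy with basic checks. The function name install_mysql_driver is hypothetical, and the jar path is wherever the driver was downloaded to.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the MySQL JDBC driver into Hive's lib directory.
# $1 = path to mysql-connector-java-5.1.40-bin.jar
# $2 = Hive install dir (defaults to $HIVE_HOME, then /usr/local/hive)
install_mysql_driver() {
  local jar="$1"
  local hive_home="${2:-${HIVE_HOME:-/usr/local/hive}}"
  if [ ! -f "$jar" ]; then
    echo "driver jar not found: $jar" >&2
    return 1
  fi
  if [ ! -d "$hive_home/lib" ]; then
    echo "no lib directory under $hive_home" >&2
    return 1
  fi
  cp "$jar" "$hive_home/lib/"
  # Succeed only if the jar is now in the directory Hive loads jars from.
  [ -f "$hive_home/lib/$(basename "$jar")" ]
}
```

Example (the jar location is illustrative): install_mysql_driver /opt/software/mysql-connector-java-5.1.40-bin.jar /usr/local/hive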

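III. Hadoop configuration

3.1 Start HDFS and YARN

#Change to the Hadoop installation directory

cd /usr/local/hadoop

#Start HDFS and YARN (start-all.sh is deprecated in Hadoop 2.x but still calls start-dfs.sh and start-yarn.sh)

sbin/start-all.sh

3.2 Create the HDFS directories and grant permissions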
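start-all.sh returns once the daemons are launched, and the NameNode can stay in safe mode for a while afterwards; hdfs dfs -mkdir fails until it leaves. A minimal sketch that polls hdfs dfsadmin -safemode get before running the commands below. The function name wait_for_hdfs and the retry defaults (30 attempts, 2 seconds apart) are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll until the NameNode reports that safe mode is off.
# $1 = max attempts (default 30), $2 = seconds between attempts (default 2)
wait_for_hdfs() {
  local attempts="${1:-30}" delay="${2:-2}" i
  for ((i = 1; i <= attempts; i++)); do
    # "hdfs dfsadmin -safemode get" prints a line such as "Safe mode is OFF"
    if hdfs dfsadmin -safemode get 2>/dev/null | grep -q "Safe mode is OFF"; then
      return 0
    fi
    sleep "$delay"
  done
  echo "HDFS still in safe mode (or unreachable) after $attempts attempts" >&2
  return 1
}
```

Without a time limit, hdfs dfsadmin -safemode wait blocks until safe mode is off and does the same job.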

hdfs dfs -mkdir -p /usr/hive/warehouse

hdfs dfs -mkdir -p /usr/hive/tmp

hdfs dfs -mkdir -p /usr/hive/log

hdfs dfs -chmod g+w /usr/hive/warehouse

hdfs dfs -chmod g+w /usr/hive/tmp

hdfs dfs -chmod g+w /usr/hive/log

IV. Hive configuration
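Before configuring Hive itself, the three HDFS directories from 3.2 can be verified in one pass. A minimal sketch: check_hive_hdfs_dirs is a hypothetical name, it defaults to the /usr/hive paths used above, and it relies on hdfs dfs -test -d, which exits 0 only for an existing directory. It checks existence only, not the g+w permission.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report any Hive HDFS directory that does not exist.
# Defaults to the three paths created in 3.2; other paths may be passed instead.
check_hive_hdfs_dirs() {
  local dirs=("$@") missing=0 d
  if [ "${#dirs[@]}" -eq 0 ]; then
    dirs=(/usr/hive/warehouse /usr/hive/tmp /usr/hive/log)
  fi
  for d in "${dirs[@]}"; do
    # -test -d exits 0 only when the path exists and is a directory
    if ! hdfs dfs -test -d "$d"; then
      echo "missing HDFS directory: $d" >&2
      missing=1
    fi
  done
  return "$missing"
}
```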

4.1 Configure environment variables

vi /etc/profile

--------------------------------------------

#hive

export HIVE_HOME=/usr/local/hive

#path

export PATH=$PATH:$HIVE_HOME/bin

--------------------------------------------
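Instead of editing /etc/profile by hand, the two exports can be appended only if they are not already there, so repeating the step does not duplicate them. A minimal sketch; the function name add_hive_env is hypothetical, and it treats any existing "export HIVE_HOME=" line as meaning the block is already present.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append the Hive exports to a profile file exactly once.
# $1 = profile file (this guide uses /etc/profile), $2 = Hive home
add_hive_env() {
  local profile="$1" hive_home="${2:-/usr/local/hive}"
  # Skip if a HIVE_HOME export is already present, so re-running is harmless.
  if grep -q '^export HIVE_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi
  {
    echo '#hive'
    echo "export HIVE_HOME=$hive_home"
    echo '#path'
    # Single quotes keep $PATH and $HIVE_HOME literal in the profile file.
    echo 'export PATH=$PATH:$HIVE_HOME/bin'
  } >> "$profile"
}
```

Run it as root with add_hive_env /etc/profile, then reload the profile as in the next step.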

Reload the profile so the new variables take effect in the current shell

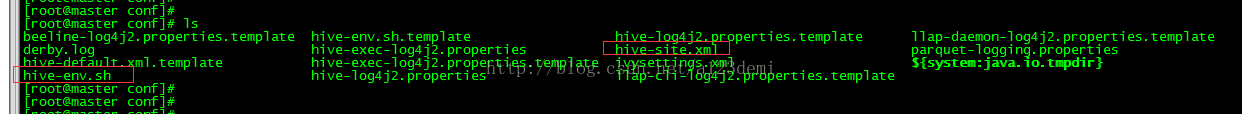

source /etc/profile

4.2 Configure hive-env.sh

#Change to Hive's configuration directory

cd /usr/local/hive/conf

#Create hive-env.sh from the template

cp -r hive-env.sh.template hive-env.sh

#Edit

vi hive-env.sh

--------------------------------------------

# Folder containing extra libraries required for hive compilation/execution can be controlled by:

# export HIVE_AUX_JARS_PATH=

export JAVA_HOME=/usr/local/jdk

export HADOOP_HOME=/usr/local/hadoop

export HIVE_HOME=/usr/local/hive

# HADOOP_HOME=${bin}/../../hadoop

# Hive Configuration Directory can be controlled by:

export HIVE_CONF_DIR=$HIVE_HOME/conf

# Folder containing extra libraries required for hive compilation/execution can be controlled by:

export HIVE_AUX_JARS_PATH=/usr/local/hive/lib/*

--------------------------------------------

4.3 Configure hive-site.xml
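Before moving on to hive-site.xml, it is worth confirming that every directory exported in hive-env.sh exists, since a wrong JAVA_HOME or HADOOP_HOME otherwise only surfaces when hive starts. A minimal sketch; the function name check_hive_env is hypothetical. It sources the file in a subshell so the current shell's variables are left untouched.

```shell
#!/usr/bin/env bash
# Hypothetical helper: load a hive-env.sh in a subshell and confirm that the
# directories it exports exist. $1 = path to hive-env.sh
check_hive_env() {
  local env_file="$1"
  (
    . "$env_file" || exit 1
    status=0
    for var in JAVA_HOME HADOOP_HOME HIVE_HOME HIVE_CONF_DIR; do
      dir="${!var}"   # indirect expansion: value of the variable named $var
      if [ -z "$dir" ] || [ ! -d "$dir" ]; then
        echo "$var is unset or not a directory: '$dir'" >&2
        status=1
      fi
    done
    exit "$status"
  )
}
```

Usage: check_hive_env /usr/local/hive/conf/hive-env.sh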

#Create hive-site.xml from the template

cp -r hive-default.xml.template hive-site.xml

#Edit

vi hive-site.xml

--------------------------------------------

<configuration>

<!-- WARNING!!! This file is auto generated for documentation purposes ONLY! -->

<!-- WARNING!!! Any changes you make to this file will be ignored by Hive. -->

<!-- WARNING!!! You must make your changes in hive-site.xml instead. -->

<!-- Hive Execution Parameters -->

<property>

<name>hive.exec.scratchdir</name>

<value>/usr/hive/tmp</value>

<description>HDFS root scratch dir for Hive jobs which gets created with write all (733) permission. For each connecting user, an HDFS scratch dir: ${hive.exec.scratchdir}/&lt;username&gt; is created, with ${hive.scratch.dir.permission}.</description>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/usr/hive/warehouse</value>

<description>location of default database for the warehouse</description>

</property>

<property>

<name>hive.querylog.location</name>

<value>/usr/hive/log</value>

<description>Location of Hive run time structured log file</description>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://192.168.32.128:3306/hive?createDatabaseIfNotExist=true&amp;characterEncoding=UTF-8&amp;useSSL=false</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>root</value>

</property>

</configuration>

--------------------------------------------
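Rather than editing the large template by hand, another option is a hive-site.xml that holds only the properties above. A minimal sketch of that alternative, assuming the same paths and JDBC settings; the function name write_hive_site is hypothetical, and the root/root defaults only mirror this guide. Note the &amp; separators in the JDBC URL: a bare & is not allowed inside an XML value, and Hive cannot parse a hive-site.xml that contains one.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a minimal hive-site.xml containing only the
# properties set in 4.3. Arguments: $1 output file, $2 MySQL host,
# $3 MySQL user, $4 MySQL password (defaults mirror this guide).
# Values are inserted verbatim, so a password containing <, > or & would
# itself need XML escaping.
write_hive_site() {
  local out="$1" host="${2:-192.168.32.128}" user="${3:-root}" pass="${4:-root}"
  # Unquoted heredoc: $host, $user and $pass expand; "&amp;" is written
  # literally, which is how "&" must appear inside an XML text value.
  cat > "$out" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>hive.exec.scratchdir</name>
    <value>/usr/hive/tmp</value>
  </property>
  <property>
    <name>hive.metastore.warehouse.dir</name>
    <value>/usr/hive/warehouse</value>
  </property>
  <property>
    <name>hive.querylog.location</name>
    <value>/usr/hive/log</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionURL</name>
    <value>jdbc:mysql://${host}:3306/hive?createDatabaseIfNotExist=true&amp;characterEncoding=UTF-8&amp;useSSL=false</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionDriverName</name>
    <value>com.mysql.jdbc.Driver</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionUserName</name>
    <value>${user}</value>
  </property>
  <property>
    <name>javax.jdo.option.ConnectionPassword</name>
    <value>${pass}</value>
  </property>
</configuration>
EOF
}
```

Example: write_hive_site /usr/local/hive/conf/hive-site.xml 192.168.32.128 root root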

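4.4 Note

This guide connects to MySQL as the root user. In a production environment, create a dedicated MySQL user for the Hive metastore instead and put its credentials in javax.jdo.option.ConnectionUserName and javax.jdo.option.ConnectionPassword.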

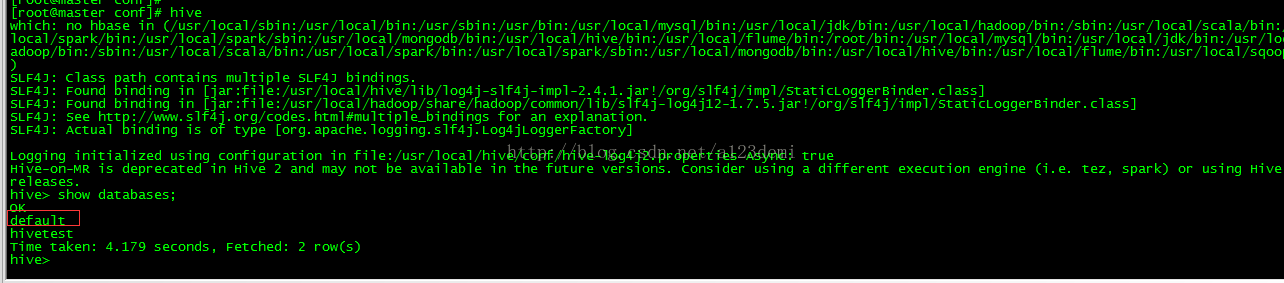
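A minimal sketch of such an account, assuming MySQL 5.6 syntax. The user name, password, and '%' host pattern are placeholders, and the function name metastore_user_sql is hypothetical. It only prints the SQL, so the statements can be reviewed before being piped into the mysql client as root; access is limited to the hive database named in the JDBC URL.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print SQL that creates a dedicated metastore account
# limited to the "hive" database (MySQL 5.6 syntax).
# $1 = user name, $2 = password, $3 = host pattern (default '%')
metastore_user_sql() {
  local user="$1" pass="$2" host="${3:-%}"
  cat <<EOF
CREATE USER '${user}'@'${host}' IDENTIFIED BY '${pass}';
GRANT ALL PRIVILEGES ON hive.* TO '${user}'@'${host}';
FLUSH PRIVILEGES;
EOF
}
```

For example: metastore_user_sql hive 'change-me' | mysql -u root -p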

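V. Testing

5.1 Initialize the metastore schema with schematool

Starting with Hive 2.1, the metastore schema must be initialized with the schematool command before Hive is used for the first time:

schematool -dbType mysql -initSchema

5.2 Start the Hive client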
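Before launching the client, it can help to confirm that the schema from 5.1 is in place. schematool -dbType mysql -info prints the schema version recorded in the metastore database and is expected to exit with an error when no schema has been initialized, so it can also keep initSchema from being run a second time. A minimal sketch; the function name ensure_metastore_schema is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: run "schematool -initSchema" only when
# "schematool -info" cannot read an existing metastore schema.
# $1 = database type passed to -dbType (default mysql)
ensure_metastore_schema() {
  local db_type="${1:-mysql}"
  if schematool -dbType "$db_type" -info >/dev/null 2>&1; then
    echo "metastore schema already present"
    return 0
  fi
  schematool -dbType "$db_type" -initSchema
}
```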

#Start the Hive CLI

hive

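Most everyday HiveQL commands (SHOW DATABASES, CREATE TABLE, SELECT, and so on) work much like their MySQL counterparts.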
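For a quick non-interactive check that the client reaches the metastore, hive -e runs the quoted HiveQL and exits. A minimal sketch; the function name hive_smoke_test and the default table name smoke_test are placeholders, and the statements themselves are ordinary HiveQL.

```shell
#!/usr/bin/env bash
# Hypothetical smoke test: create, list, and drop a throwaway table through
# the Hive CLI. "hive -e" executes the quoted HiveQL and then exits.
hive_smoke_test() {
  local table="${1:-smoke_test}"
  hive -e "
    CREATE TABLE IF NOT EXISTS ${table} (id INT, name STRING);
    SHOW TABLES;
    DROP TABLE ${table};
  "
}
```

If the three statements run without errors and SHOW TABLES lists the table, Hive is reading and writing its metadata through the MySQL metastore.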
