I have recently been studying Spark and set up a local development environment: Windows 7 + Eclipse + JDK 1.7.

I. Why the logs slow us down

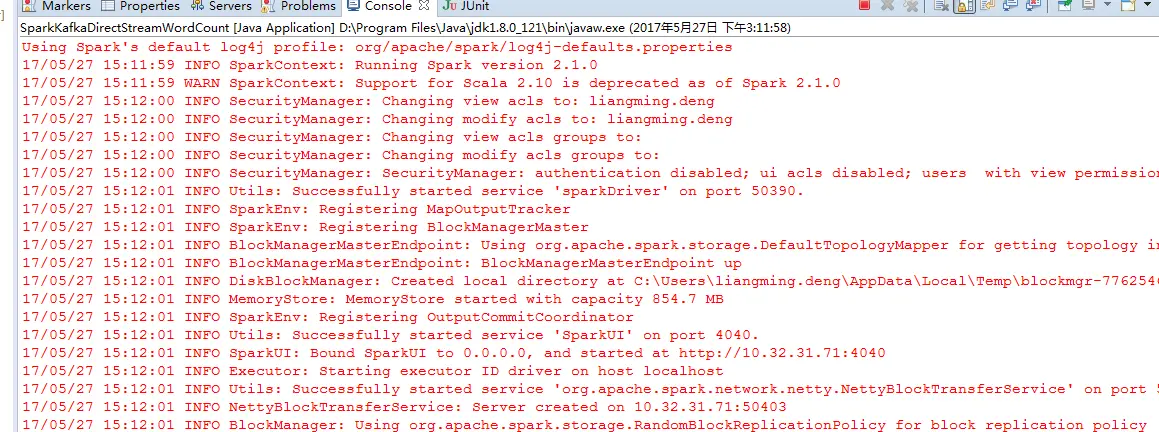

During development the console is flooded with log messages, which makes it hard to find the output that matters.

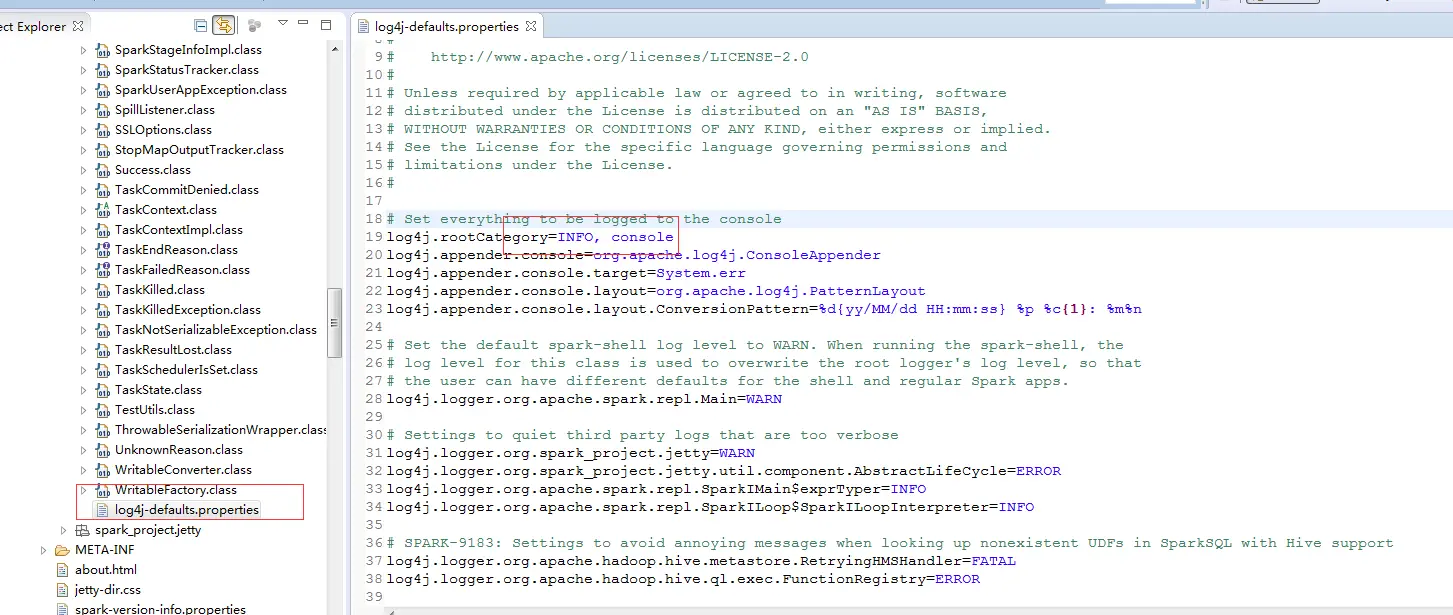

From the console output we can see that the application loads, by default, the log4j-defaults.properties logging configuration bundled in the spark-core package. Let's take a look at that file.

As the log4j-defaults.properties file shows, spark-core sets the default log level to INFO, which is why we see so many log messages.

So how do we deal with this during development?

II. Solution: setting the log level

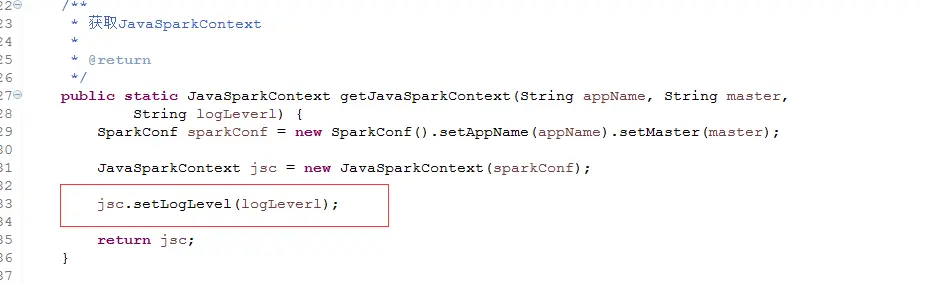

Method 1. Per-application setting

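For applications built on SparkContext, Spark provides a dedicated API for setting the log level: call setLogLevel on the context, for example sc.setLogLevel("WARN").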

This method only applies to applications built on SparkContext; it has no effect on others such as Spark Streaming.

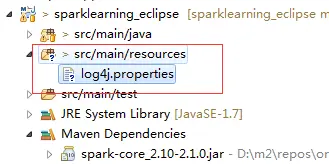

Method 2. Global setting

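To cover all Spark applications, create a /src/main/resources directory in the Java project and place a log4j.properties file in it. Once that directory is on the classpath, log4j picks up this file instead of falling back to Spark's bundled defaults.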

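There are two ways to create log4j.properties:

1. The conf directory of the Spark distribution ships a default configuration file named log4j.properties.template; copy it and rename the copy to log4j.properties;

2. Copy the contents of log4j-defaults.properties from the spark-core package into a log4j.properties file.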
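The first option can also be scripted. The following is a minimal sketch, not part of Spark or log4j: the class name Log4jTemplateCopy and the method withRootLevel are hypothetical, and the sketch assumes the template contains a line starting with log4j.rootCategory= as in the listing in this post. It copies the template to a target path and switches the root level to WARN while keeping the appender names.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper for illustration only; not part of Spark or log4j.
final class Log4jTemplateCopy {

    // Returns a copy of the given lines in which the level of the
    // log4j.rootCategory entry is replaced; the appender names are kept.
    static List<String> withRootLevel(List<String> lines, String level) {
        String key = "log4j.rootCategory=";
        List<String> out = new ArrayList<String>();
        for (String line : lines) {
            if (line.startsWith(key)) {
                String value = line.substring(key.length());
                int comma = value.indexOf(',');
                // Everything from the first comma on is the appender list.
                String appenders = comma >= 0 ? value.substring(comma) : "";
                out.add(key + level + appenders);
            } else {
                out.add(line);
            }
        }
        return out;
    }

    // Usage: java Log4jTemplateCopy <template file> <target file>
    public static void main(String[] args) throws IOException {
        Path template = Paths.get(args[0]);
        Path target = Paths.get(args[1]);
        List<String> lines = Files.readAllLines(template, StandardCharsets.UTF_8);
        if (target.getParent() != null) {
            Files.createDirectories(target.getParent());
        }
        Files.write(target, withRootLevel(lines, "WARN"), StandardCharsets.UTF_8);
    }
}
```

The first argument would be the path of log4j.properties.template in the Spark conf directory and the second something like src/main/resources/log4j.properties; both paths depend on the local setup.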

The contents of log4j.properties are as follows:

```properties
#
# Licensed to the Apache Software Foundation (ASF) under one or more
# contributor license agreements. See the NOTICE file distributed with
# this work for additional information regarding copyright ownership.
# The ASF licenses this file to You under the Apache License, Version 2.0
# (the "License"); you may not use this file except in compliance with
# the License. You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
#

# Set everything to be logged to the console
log4j.rootCategory=WARN, console
log4j.appender.console=org.apache.log4j.ConsoleAppender
log4j.appender.console.target=System.err
log4j.appender.console.layout=org.apache.log4j.PatternLayout
log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Settings to quiet third party logs that are too verbose
log4j.logger.org.spark-project.jetty=WARN
log4j.logger.org.spark-project.jetty.util.component.AbstractLifeCycle=ERROR
log4j.logger.org.apache.spark.repl.SparkIMain$exprTyper=INFO
log4j.logger.org.apache.spark.repl.SparkILoop$SparkILoopInterpreter=INFO
log4j.logger.org.apache.parquet=ERROR
log4j.logger.parquet=ERROR

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent UDFs in SparkSQL with Hive support
log4j.logger.org.apache.hadoop.hive.metastore.RetryingHMSHandler=FATAL
log4j.logger.org.apache.hadoop.hive.ql.exec.FunctionRegistry=ERROR
```

In a development project we can set the log level to WARN, that is:

log4j.rootCategory=WARN, console
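To confirm which root level the log4j.properties on the classpath actually configures, a small check like the one below may help. This is only a sketch: the class RootLevelCheck and its method rootLevel are hypothetical names, and it reads the file with java.util.Properties rather than through log4j itself. It looks at log4j.rootLogger first and then at log4j.rootCategory, since log4j 1.x accepts either key for the root logger.

```java
import java.io.IOException;
import java.io.InputStream;
import java.util.Properties;

// Hypothetical helper for illustration only; not part of Spark or log4j.
final class RootLevelCheck {

    // Returns the level part of the root logger entry, e.g. "WARN" for
    // "WARN, console", or null if no root logger entry is present.
    static String rootLevel(Properties props) {
        String value = props.getProperty("log4j.rootLogger");
        if (value == null) {
            value = props.getProperty("log4j.rootCategory");
        }
        if (value == null) {
            return null;
        }
        // The value has the form "<level>, <appender>, <appender>, ...".
        return value.split(",")[0].trim();
    }

    public static void main(String[] args) throws IOException {
        // Looks for log4j.properties at the root of the classpath.
        try (InputStream in =
                RootLevelCheck.class.getResourceAsStream("/log4j.properties")) {
            if (in == null) {
                System.out.println("No log4j.properties found on the classpath");
                return;
            }
            Properties props = new Properties();
            props.load(in);
            System.out.println("Root level: " + rootLevel(props));
        }
    }
}
```

If the check reports that no file was found, the resources directory is probably not registered as a source folder in the IDE, so the bundled defaults would still apply.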

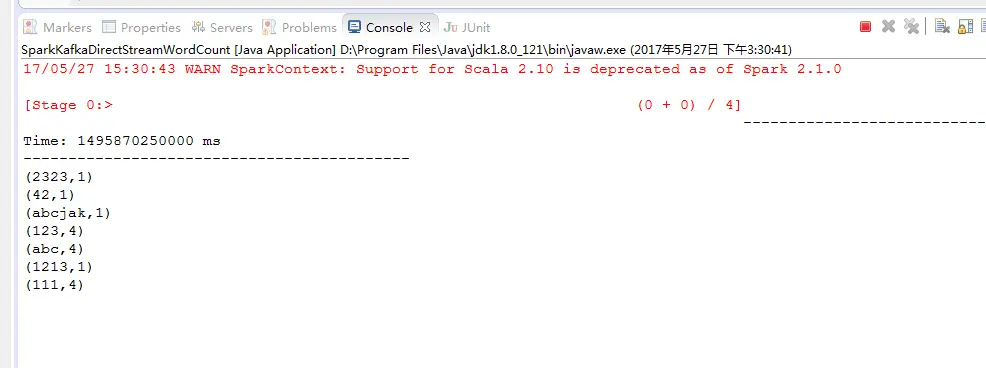

III. Result of setting the log level
