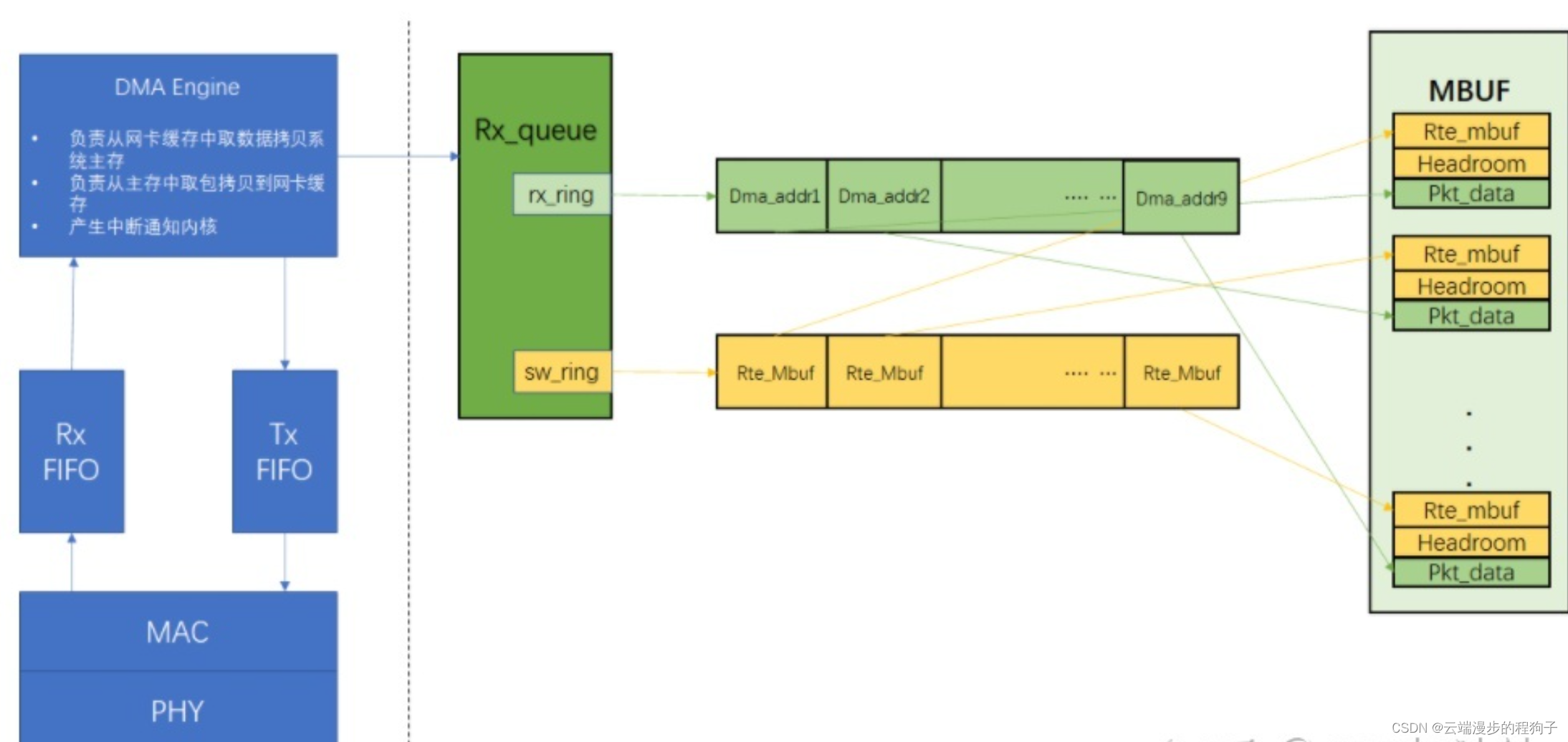

The detailed steps are as follows:

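(1) The CPU fills the receive descriptors with buffer addresses (the data area of each mbuf). This is first done during DPDK initialization, which is why, in the figure above, rx_ring points to a subset of the mbufs in the mbuf pool; those mbufs are used to receive packets. The CPU also programs the NIC's base and size (length) registers to tell the DMA engine the start address and size of the rx_ring. Once the physical address of the descriptor ring has been written to the register, the DMA engine knows where the ring is by reading that register, so when a packet arrives it stores the packet in the mbuf that the current descriptor points to.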
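To make step (1) concrete, here is a minimal C sketch of ring initialization under a simplified model. All names (`sim_mbuf`, `sim_rx_desc`, `sim_nic_regs`, `sim_rx_ring_init`) and the constants are hypothetical: the register struct only stands in for the NIC's base, length, head and tail registers, while the real driver uses `rte_mbuf` and actual register writes. Setting tail to size - 1 keeps tail from equalling head, the same rule the driver's RDT comment relies on.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical simplified model, not DPDK or e1000 definitions. */
#define SIM_RING_SIZE 8
#define SIM_HEADROOM  128   /* stand-in for RTE_PKTMBUF_HEADROOM */

struct sim_mbuf {
	uint64_t buf_iova;      /* physical/IO address of the buffer */
};

struct sim_rx_desc {            /* like the descriptor "read" format */
	uint64_t pkt_addr;      /* where the NIC should DMA the packet */
	uint64_t hdr_addr;      /* 0: no header split */
};

struct sim_nic_regs {           /* stand-ins for the ring registers */
	uint64_t rdba;          /* ring base (physical) address */
	uint32_t rdlen;         /* ring length in bytes */
	uint16_t rdh;           /* head: advanced by the NIC */
	uint16_t rdt;           /* tail: advanced by the CPU */
};

/* Step (1): point every descriptor at a buffer, then tell the NIC where the ring is. */
static void sim_rx_ring_init(struct sim_rx_desc *rx_ring, struct sim_mbuf **sw_ring,
			     struct sim_mbuf *pool, uint64_t ring_iova,
			     struct sim_nic_regs *regs)
{
	for (int i = 0; i < SIM_RING_SIZE; i++) {
		assert(pool[i].buf_iova != 0);    /* every buffer needs an IO address */
		sw_ring[i] = &pool[i];            /* CPU side: virtual pointer */
		rx_ring[i].pkt_addr = pool[i].buf_iova + SIM_HEADROOM; /* NIC side */
		rx_ring[i].hdr_addr = 0;
	}
	regs->rdba = ring_iova;
	regs->rdlen = SIM_RING_SIZE * sizeof(struct sim_rx_desc);
	regs->rdh = 0;
	regs->rdt = SIM_RING_SIZE - 1;        /* tail never equals head */
}
```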

(2) The NIC reads a receive descriptor from the rx_ring to obtain the address of a system buffer.
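Which descriptors the NIC may fetch in step (2) is governed by the head (RDH) and tail (RDT) registers that appear in the driver code. In this simplified model the NIC owns the descriptors from head up to, but not including, tail, so head == tail means it has none available. The helper names below are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical model: the NIC owns descriptors in [rdh, rdt), modulo ring size. */
static uint16_t nic_avail_desc(uint16_t rdh, uint16_t rdt, uint16_t ring_size)
{
	assert(rdh < ring_size && rdt < ring_size);
	return (uint16_t)((rdt + ring_size - rdh) % ring_size);
}

/* Returns the index of the descriptor the NIC would fetch next and advances
 * head, or returns -1 if it owns none (in this model the packet stays in the
 * RX FIFO). */
static int nic_fetch_desc(uint16_t *rdh, uint16_t rdt, uint16_t ring_size)
{
	if (nic_avail_desc(*rdh, rdt, ring_size) == 0)
		return -1;
	int idx = *rdh;
	*rdh = (uint16_t)((*rdh + 1) % ring_size);
	return idx;
}
```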

(3) A packet arriving at the NIC from outside is first stored in the NIC's local RX FIFO buffer.

(4) The DMA engine writes the packet data over the PCIe bus to the system buffer address.

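(5) The NIC writes back the rx_ring receive descriptor with updated status, setting the DD (Descriptor Done) bit to 1 to indicate that reception is complete.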
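Steps (3) to (5) can be modelled as follows. This is a sketch with hypothetical names (`sim_adv_rx_desc`, `nic_receive`, `SIM_STAT_DD`), a byte array standing in for system memory, and no endianness handling. The union only mimics how the igb advanced descriptor overlays its read format (buffer addresses written by the CPU) with its write-back format (status, length and VLAN written by the NIC); the test shows the consequence the driver relies on later: zeroing `read.hdr_addr` also clears DD.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define SIM_STAT_DD 0x1u        /* hypothetical "descriptor done" bit */

/* Hypothetical 16-byte descriptor with a read / write-back overlay. */
union sim_adv_rx_desc {
	struct {
		uint64_t pkt_addr;      /* CPU writes: buffer IO address */
		uint64_t hdr_addr;      /* CPU writes: 0 */
	} read;
	struct {
		uint32_t lo_dword;      /* packet type / header info */
		uint32_t rss;           /* RSS hash */
		uint32_t status_error;  /* overlaps read.hdr_addr */
		uint16_t length;
		uint16_t vlan;
	} wb;
};

/* Copy a frame from the NIC FIFO into the buffer the descriptor points to
 * (the DMA write), then overwrite the descriptor with length and DD.
 * host_mem stands in for system memory indexed by IO address. */
static void nic_receive(union sim_adv_rx_desc *d, uint8_t *host_mem,
			const uint8_t *fifo_frame, uint16_t len)
{
	assert((d->wb.status_error & SIM_STAT_DD) == 0); /* only reset descriptors */
	uint64_t addr = d->read.pkt_addr;                /* save before overwrite */
	memcpy(host_mem + addr, fifo_frame, len);        /* DMA over PCIe */
	d->wb.lo_dword = 0;
	d->wb.rss = 0;
	d->wb.length = len;
	d->wb.vlan = 0;
	d->wb.status_error = SIM_STAT_DD;                /* write-back: done */
}
```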

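(6) The CPU polls the DD bit of the rx_ring descriptor at the current position (the sw_ring entry at the same index holds the corresponding mbuf). If DD is 1, the NIC has finished receiving into that buffer and the application can read the packet.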
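The polling in step (6) boils down to scanning descriptors from the current position until the first one whose DD bit is clear, as the `break` at the top of the driver loop does. A minimal sketch with a hypothetical status-only descriptor type:

```c
#include <assert.h>
#include <stdint.h>

#define SIM_STAT_DD 0x1u        /* hypothetical "descriptor done" bit */

struct sim_wb_desc {            /* hypothetical: only the write-back status */
	volatile uint32_t status_error;
};

/* Count descriptors the NIC has completed, starting at rx_tail and
 * stopping at the first one without DD or after max descriptors. */
static uint16_t sim_poll_done(const struct sim_wb_desc *ring, uint16_t ring_size,
			      uint16_t rx_tail, uint16_t max)
{
	uint16_t n = 0;
	uint16_t id = rx_tail;

	assert(rx_tail < ring_size);
	while (n < max && (ring[id].status_error & SIM_STAT_DD)) {
		n++;
		id = (uint16_t)(id + 1 == ring_size ? 0 : id + 1); /* wrap around */
	}
	return n;
}
```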

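(7) After the CPU takes a packet out of sw_ring, it performs a "swap the prince for a civet cat" trick: it allocates a new mbuf from the mbuf pool and puts it into that sw_ring entry, writes the physical (IOVA) address of the new mbuf's data area into the DMA address field of the rx_ring entry at the same position, and clears the DD bit of that rx_ring descriptor to 0. This way the NIC can keep writing data into the rx_ring buffers.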
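The swap in step (7) is sketched below. `sim_pool`, `sim_alloc` and `sim_swap_refill` are hypothetical stand-ins for the mbuf pool and `rte_mbuf_raw_alloc`. As in the driver code, the new mbuf is allocated first, so if the pool is empty the filled buffer stays in the ring instead of leaving an empty slot.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SIM_HEADROOM 128        /* stand-in for RTE_PKTMBUF_HEADROOM */

struct sim_mbuf { uint64_t buf_iova; };
struct sim_rx_desc { uint64_t pkt_addr, hdr_addr; };

struct sim_pool {               /* hypothetical LIFO free list */
	struct sim_mbuf *free[16];
	int n;
};

static struct sim_mbuf *sim_alloc(struct sim_pool *p)
{
	return p->n > 0 ? p->free[--p->n] : NULL;
}

/* Return the filled mbuf at slot id and put a fresh one in its place.
 * Returns NULL and leaves the slot untouched if the pool is empty. */
static struct sim_mbuf *sim_swap_refill(struct sim_rx_desc *rx_ring,
					struct sim_mbuf **sw_ring,
					struct sim_pool *pool, uint16_t id)
{
	assert(sw_ring[id] != NULL);
	struct sim_mbuf *nmb = sim_alloc(pool);
	if (nmb == NULL)
		return NULL;                     /* keep the old buffer, retry later */
	struct sim_mbuf *rxm = sw_ring[id];      /* the packet just received */
	sw_ring[id] = nmb;                       /* CPU side: new mbuf pointer */
	rx_ring[id].hdr_addr = 0;                /* in the real overlay, clears DD */
	rx_ring[id].pkt_addr = nmb->buf_iova + SIM_HEADROOM; /* NIC side */
	return rxm;
}
```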

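(8) The CPU does not update the tail register every time it refills a descriptor with an mbuf. Instead, it updates the tail register only when the number of refilled descriptors not yet handed back to the NIC exceeds the configured threshold (rx_free_thresh), because frequent register writes by the CPU are a performance killer.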
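The batching in step (8) can be isolated into a small function. It follows the `nb_hold`/`rx_free_thresh` logic of `eth_igb_recv_pkts`, but the struct, the `reg_writes` counter (standing in for costly register writes) and the values in the test are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

struct sim_rxq {                /* hypothetical queue state */
	uint16_t nb_desc;
	uint16_t rx_free_thresh;
	uint16_t nb_rx_hold;    /* refilled but not yet returned to the NIC */
	uint32_t rdt;           /* stand-in for the RDT register */
	unsigned reg_writes;    /* counts register writes */
};

/* Called after a burst that refilled `refilled` descriptors and stopped at rx_id. */
static void sim_maybe_update_tail(struct sim_rxq *q, uint16_t rx_id, uint16_t refilled)
{
	uint16_t nb_hold = (uint16_t)(q->nb_rx_hold + refilled);

	assert(rx_id < q->nb_desc);
	if (nb_hold > q->rx_free_thresh) {
		/* rx_id - 1 with wrap-around, as eth_igb_recv_pkts writes to RDT */
		q->rdt = (rx_id == 0) ? (uint16_t)(q->nb_desc - 1) : (uint16_t)(rx_id - 1);
		q->reg_writes++;
		nb_hold = 0;
	}
	q->nb_rx_hold = nb_hold;
}
```

With a threshold of 32, two bursts of 20 packets cost one register write instead of forty.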

(9) At this point, the application has finished receiving the packets.

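Note: two queues are critical here, rx_ring and sw_ring, and both are indexed by the same rx_id. Each rx_ring descriptor holds the physical start address of an mbuf's data area, to which the DMA engine writes an incoming packet (hardware DMA works on physical addresses directly, without CPU involvement). Each sw_ring entry holds the virtual start address of the mbuf, which the application uses to read the packet.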

The corresponding receive function of the DPDK igb driver, `eth_igb_recv_pkts`, annotated step by step:

```c
uint16_t
eth_igb_recv_pkts(void *rx_queue, struct rte_mbuf **rx_pkts,
		  uint16_t nb_pkts)
{
	struct igb_rx_queue *rxq;
	volatile union e1000_adv_rx_desc *rx_ring;
	volatile union e1000_adv_rx_desc *rxdp;
	struct igb_rx_entry *sw_ring;
	struct igb_rx_entry *rxe;
	struct rte_mbuf *rxm;
	struct rte_mbuf *nmb;
	union e1000_adv_rx_desc rxd;
	uint64_t dma_addr;
	uint32_t staterr;
	uint32_t hlen_type_rss;
	uint16_t pkt_len;
	uint16_t rx_id;
	uint16_t nb_rx;
	uint16_t nb_hold;
	uint64_t pkt_flags;

	nb_rx = 0;
	nb_hold = 0;
	rxq = rx_queue;
	rx_id = rxq->rx_tail;
	rx_ring = rxq->rx_ring;
	sw_ring = rxq->sw_ring;
	while (nb_rx < nb_pkts) {
		/*
		 * Step 1: locate the next descriptor to process, i.e. the
		 * position where the application last stopped receiving.
		 */
		rxdp = &rx_ring[rx_id];
		staterr = rxdp->wb.upper.status_error;
		/*
		 * Step 2: check the DD bit. If it is set, the NIC has written a
		 * packet into this descriptor's buffer; otherwise stop.
		 */
		if (! (staterr & rte_cpu_to_le_32(E1000_RXD_STAT_DD)))
			break;
		rxd = *rxdp;

		PMD_RX_LOG(DEBUG, "port_id=%u queue_id=%u rx_id=%u "
			   "staterr=0x%x pkt_len=%u",
			   (unsigned) rxq->port_id, (unsigned) rxq->queue_id,
			   (unsigned) rx_id, (unsigned) staterr,
			   (unsigned) rte_le_to_cpu_16(rxd.wb.upper.length));

		/* Step 3: allocate a new mbuf from the pool to refill this slot. */
		nmb = rte_mbuf_raw_alloc(rxq->mb_pool);
		if (nmb == NULL) {
			PMD_RX_LOG(DEBUG, "RX mbuf alloc failed port_id=%u "
				   "queue_id=%u", (unsigned) rxq->port_id,
				   (unsigned) rxq->queue_id);
			rte_eth_devices[rxq->port_id].data->rx_mbuf_alloc_failed++;
			break;
		}

		nb_hold++;
		/*
		 * Step 4: the descriptor index also locates the sw_ring entry
		 * holding the mbuf to be returned.
		 */
		rxe = &sw_ring[rx_id];
		rx_id++;
		if (rx_id == rxq->nb_rx_desc)
			rx_id = 0;

		/* Prefetch next mbuf while processing current one. */
		rte_igb_prefetch(sw_ring[rx_id].mbuf);

		/*
		 * When next RX descriptor is on a cache-line boundary,
		 * prefetch the next 4 RX descriptors and the next 8 pointers
		 * to mbufs.
		 */
		if ((rx_id & 0x3) == 0) {
			rte_igb_prefetch(&rx_ring[rx_id]);
			rte_igb_prefetch(&sw_ring[rx_id]);
		}

		rxm = rxe->mbuf;
		/* Step 5: put the newly allocated mbuf into the sw_ring entry. */
		rxe->mbuf = nmb;
		/*
		 * Step 6: get the physical (IOVA) address of the new mbuf's
		 * data area, to be written into the rx_ring descriptor.
		 */
		dma_addr =
			rte_cpu_to_le_64(rte_mbuf_data_iova_default(nmb));
		/*
		 * Step 7: clear the DD bit of this rx_ring entry. In the
		 * descriptor union, read.hdr_addr overlaps the write-back
		 * status word, so writing 0 to it also clears DD.
		 */
		rxdp->read.hdr_addr = 0;
		/* Step 8: refill the DMA address of this rx_ring entry. */
		rxdp->read.pkt_addr = dma_addr;

		/*
		 * Initialize the returned mbuf.
		 * 1) setup generic mbuf fields:
		 *    - number of segments,
		 *    - next segment,
		 *    - packet length,
		 *    - RX port identifier.
		 * 2) integrate hardware offload data, if any:
		 *    - RSS flag & hash,
		 *    - IP checksum flag,
		 *    - VLAN TCI, if any,
		 *    - error flags.
		 */
		/*
		 * Step 9: fill in metadata of the received mbuf, such as
		 * packet type and length.
		 */
		pkt_len = (uint16_t) (rte_le_to_cpu_16(rxd.wb.upper.length) -
				      rxq->crc_len);
		rxm->data_off = RTE_PKTMBUF_HEADROOM;
		rte_packet_prefetch((char *)rxm->buf_addr + rxm->data_off);
		rxm->nb_segs = 1;
		rxm->next = NULL;
		rxm->pkt_len = pkt_len;
		rxm->data_len = pkt_len;
		rxm->port = rxq->port_id;

		rxm->hash.rss = rxd.wb.lower.hi_dword.rss;
		hlen_type_rss = rte_le_to_cpu_32(rxd.wb.lower.lo_dword.data);

		/*
		 * The vlan_tci field is only valid when PKT_RX_VLAN is
		 * set in the pkt_flags field and must be in CPU byte order.
		 */
		if ((staterr & rte_cpu_to_le_32(E1000_RXDEXT_STATERR_LB)) &&
		    (rxq->flags & IGB_RXQ_FLAG_LB_BSWAP_VLAN)) {
			rxm->vlan_tci = rte_be_to_cpu_16(rxd.wb.upper.vlan);
		} else {
			rxm->vlan_tci = rte_le_to_cpu_16(rxd.wb.upper.vlan);
		}
		pkt_flags = rx_desc_hlen_type_rss_to_pkt_flags(rxq, hlen_type_rss);
		pkt_flags = pkt_flags | rx_desc_status_to_pkt_flags(staterr);
		pkt_flags = pkt_flags | rx_desc_error_to_pkt_flags(staterr);
		rxm->ol_flags = pkt_flags;
		rxm->packet_type = igb_rxd_pkt_info_to_pkt_type(rxd.wb.lower.
						lo_dword.hs_rss.pkt_info);

		/*
		 * Store the mbuf address into the next entry of the array
		 * of returned packets.
		 */
		/* Step 10: add the packet to the array returned to the caller. */
		rx_pkts[nb_rx++] = rxm;
	}
	rxq->rx_tail = rx_id;

	/*
	 * If the number of free RX descriptors is greater than the RX free
	 * threshold of the queue, advance the Receive Descriptor Tail (RDT)
	 * register.
	 * Update the RDT with the value of the last processed RX descriptor
	 * minus 1, to guarantee that the RDT register is never equal to the
	 * RDH register, which creates a "full" ring situation from the
	 * hardware point of view...
	 */
	/*
	 * Step 11: once the number of refilled descriptors not yet returned
	 * to the NIC (nb_hold) exceeds rx_free_thresh, update the tail
	 * register (RDT) so the DMA engine can use them again.
	 */
	nb_hold = (uint16_t) (nb_hold + rxq->nb_rx_hold);
	if (nb_hold > rxq->rx_free_thresh) {
		PMD_RX_LOG(DEBUG, "port_id=%u queue_id=%u rx_tail=%u "
			   "nb_hold=%u nb_rx=%u",
			   (unsigned) rxq->port_id, (unsigned) rxq->queue_id,
			   (unsigned) rx_id, (unsigned) nb_hold,
			   (unsigned) nb_rx);
		rx_id = (uint16_t) ((rx_id == 0) ?
				    (rxq->nb_rx_desc - 1) : (rx_id - 1));
		E1000_PCI_REG_WRITE(rxq->rdt_reg_addr, rx_id);
		nb_hold = 0;
	}
	rxq->nb_rx_hold = nb_hold;
	return nb_rx;
}
```
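The `(rx_id & 0x3) == 0` test in the prefetch block is cache-line arithmetic: an advanced RX descriptor is 16 bytes, so four of them share a 64-byte line, while an `igb_rx_entry` holds a single mbuf pointer, so a line holds eight of them on a 64-bit build. The sketch below checks that equivalence with hypothetical stand-in types, assuming 64-byte cache lines and a cache-line-aligned ring.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SIM_CACHE_LINE 64       /* assumption: 64-byte cache lines */

/* Hypothetical stand-ins with the same sizes as the driver's types (64-bit). */
struct sim_adv_rx_desc { uint64_t qw0, qw1; };  /* 16 bytes */
struct sim_rx_entry { void *mbuf; };            /* one pointer */

/* Does descriptor rx_id start a new cache line of the (aligned) ring?
 * With 16-byte descriptors this equals rx_id % 4 == 0. */
static int sim_desc_starts_line(uint16_t rx_id)
{
	size_t offset = (size_t)rx_id * sizeof(struct sim_adv_rx_desc);

	assert(SIM_CACHE_LINE % sizeof(struct sim_adv_rx_desc) == 0);
	return offset % SIM_CACHE_LINE == 0;
}
```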
