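Following the earlier experiment on pruning BERT's hidden dimensions, I tried brute-force pruning of BERT's layers: simply cutting out a number of encoder layers.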

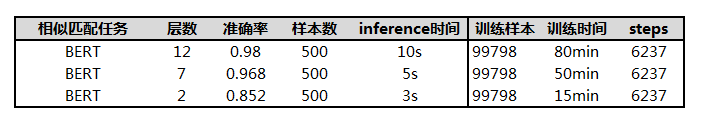

Experimental results:

Conclusion: training is about 40% faster, task performance drops by 1.2%, and inference is 50% faster.

Code reference: "最简单的模型轻量化方法:20行代码为BERT剪枝" ("The simplest way to slim down a model: pruning BERT in 20 lines of code", Tencent Cloud Developer Community), with some adjustments.
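The core of layer pruning is a renaming plan: decide which encoder layers survive and which slot each one occupies in the smaller model. The sketch below works on variable names only, so it runs without TensorFlow. The function name `plan_layer_pruning` and the sample variable names are hypothetical, chosen for illustration; keeping layers 0 and 11 matches the choice made in this post.

```python
import re

# Matches the encoder-layer prefix used by BERT variable names.
LAYER_RE = re.compile(r"^bert/encoder/layer_(\d+)/")


def plan_layer_pruning(var_names, keep_layers):
    """Return {name in pruned model: source name in the original model}.

    Hypothetical helper: layers listed in keep_layers are renumbered
    0, 1, 2, ... in the given order; all other encoder layers are dropped.
    """
    new_index = {old: new for new, old in enumerate(keep_layers)}
    plan = {}
    for name in var_names:
        match = LAYER_RE.match(name)
        if match is None:
            plan[name] = name  # embeddings, pooler, etc. are kept unchanged
            continue
        old = int(match.group(1))
        if old in new_index:
            prefix = "bert/encoder/layer_%d/" % new_index[old]
            plan[LAYER_RE.sub(prefix, name, count=1)] = name
    return plan


# Illustrative names only: one variable per layer plus two non-encoder ones.
names = ["bert/embeddings/word_embeddings:0"]
names += ["bert/encoder/layer_%d/output/dense/kernel:0" % i for i in range(12)]
names += ["bert/pooler/dense/kernel:0"]

plan = plan_layer_pruning(names, keep_layers=[0, 11])
for target, source in plan.items():
    print(target, "<-", source)
```

Matching on the full `layer_N/` prefix, including the trailing slash, avoids confusing `layer_1` with `layer_10` or `layer_11`.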

1) First, prune Google's pretrained model directly and save the result as a new checkpoint:

    # ===== L-2: keep encoder layers 0 and 11 (TensorFlow 1.x API) =====
    import os

    import tensorflow as tf

    model_path = './'
    last_name = 'bert_model.ckpt'
    out_dir = '../chinese_L-2_H-768_A-12/'

    sess = tf.Session()
    # Rebuild the graph from the .meta file, then load the pretrained weights.
    imported_meta = tf.train.import_meta_graph(os.path.join(model_path, last_name + '.meta'))
    imported_meta.restore(sess, os.path.join(model_path, last_name))
    sess.run(tf.local_variables_initializer())

    # Snapshot the values still needed: non-encoder variables plus layers 0 and 11.
    bert_dict = {}
    for var in tf.global_variables():
        if (var.name.startswith('bert/encoder/layer_')
                and not var.name.startswith('bert/encoder/layer_0/')
                and not var.name.startswith('bert/encoder/layer_11/')):
            continue
        bert_dict[var.name] = sess.run(var)

    # Keep layer_0 as is, overwrite layer_1 with layer_11's weights, drop layers 2-11.
    need_vars = []
    for var in tf.global_variables():
        if var.name.startswith('bert/encoder/layer_1/'):
            src_name = var.name.replace('bert/encoder/layer_1/', 'bert/encoder/layer_11/')
            sess.run(tf.assign(var, bert_dict[src_name]))
            need_vars.append(var)
        elif (var.name.startswith('bert/encoder/layer_')
                and not var.name.startswith('bert/encoder/layer_0/')):
            continue  # pruned layers are not saved
        else:
            need_vars.append(var)

    # Save only the kept variables; make sure the target directory exists first.
    os.makedirs(out_dir, exist_ok=True)
    saver = tf.train.Saver(need_vars)
    saver.save(sess, os.path.join(out_dir, 'bert_model.ckpt'))

2) Next, update BERT's configuration for pretraining so that it matches the pruned checkpoint (here `num_hidden_layers` is set to 2):

    {
      "attention_probs_dropout_prob": 0.1,
      "directionality": "bidi",
      "hidden_act": "gelu",
      "hidden_dropout_prob": 0.1,
      "hidden_size": 768,
      "initializer_range": 0.02,
      "intermediate_size": 3072,
      "max_position_embeddings": 512,
      "num_attention_heads": 12,
      "num_hidden_layers": 2,
      "pooler_fc_size": 768,
      "pooler_num_attention_heads": 12,
      "pooler_num_fc_layers": 3,
      "pooler_size_per_head": 128,
      "pooler_type": "first_token_transform",
      "type_vocab_size": 2,
      "vocab_size": 21128
    }
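To get a feel for how much of the model this removes, the following sketch derives a pruned config from the original one and estimates the encoder parameters that disappear. It assumes the original model has 12 encoder layers (the pruning script refers to `layer_11`) and the standard BERT encoder layout: four attention dense layers, two feed-forward dense layers, and two LayerNorms per layer. The helper names `pruned_config` and `encoder_layer_params` are hypothetical.

```python
import copy

# Only the fields needed for the estimate; values taken from the config in this post,
# except num_hidden_layers=12, which is the assumed size of the original model.
BASE_CONFIG = {
    "hidden_size": 768,
    "intermediate_size": 3072,
    "num_hidden_layers": 12,
}


def pruned_config(config, num_layers):
    """Return a copy of the config with a smaller num_hidden_layers."""
    new_config = copy.deepcopy(config)
    new_config["num_hidden_layers"] = num_layers
    return new_config


def encoder_layer_params(config):
    """Estimate weights + biases in one encoder layer (standard BERT layout assumed)."""
    h = config["hidden_size"]
    i = config["intermediate_size"]
    attention = 4 * (h * h + h)               # query, key, value, attention output
    feed_forward = (h * i + i) + (i * h + h)  # intermediate dense + output dense
    layer_norms = 2 * (2 * h)                 # gamma and beta for two LayerNorms
    return attention + feed_forward + layer_norms


small = pruned_config(BASE_CONFIG, 2)
per_layer = encoder_layer_params(BASE_CONFIG)
removed = per_layer * (BASE_CONFIG["num_hidden_layers"] - small["num_hidden_layers"])
print("parameters per encoder layer:", per_layer)
print("encoder parameters removed:", removed)
```

By this count each encoder layer holds about 7.09M parameters, so dropping 10 of the 12 layers removes roughly 70.9M encoder parameters, while the embedding and pooler weights are unaffected.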
