简介

MTT S4000 是基于摩尔线程曲院 GPU 架构打造的全功能元计算卡,为千亿规模大语言模型的训练、微调和推理进行了定制优化,结合先进的图形渲染能力、视频编解码能力和超高清 8K HDR 显示能力,助力人工智能、图形渲染、多媒体、科学计算与物理仿真等复合应用场景的计算加速。

MTT S4000 全面支持大语言模型的预训练、微调和推理服务,MUSA 软件栈专门针对大规模集群的分布式计算性能进行了优化,适配主流分布式计算加速框架, 包括 DeepSpeed, Colossal AI,Megatron 等,支持千亿参数大语言模型的稳定预训练。

官方参数如下

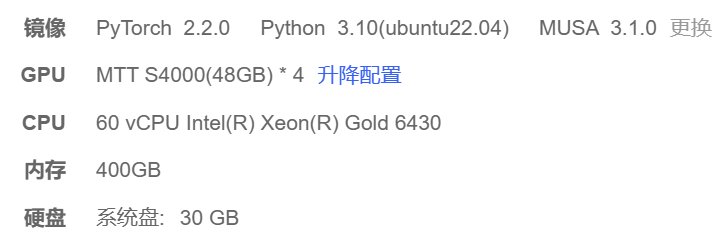

运行环境

本次运行环境为AutoDL云中的镜像环境,系统环境如下

常用命令

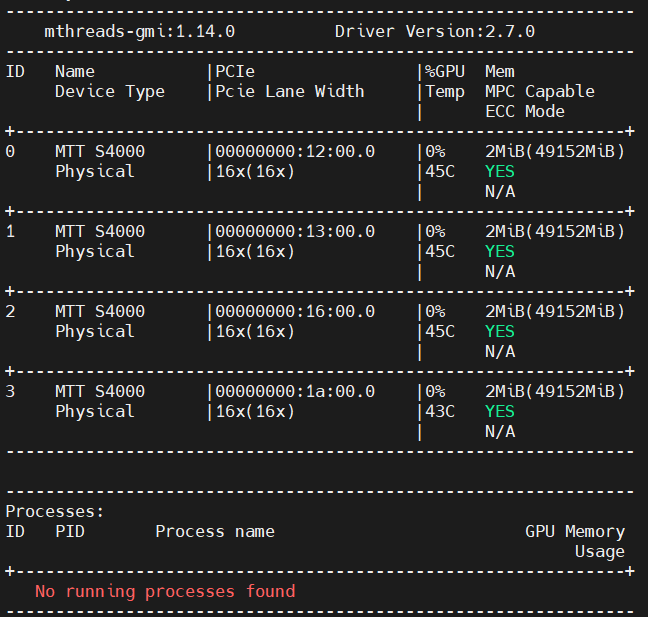

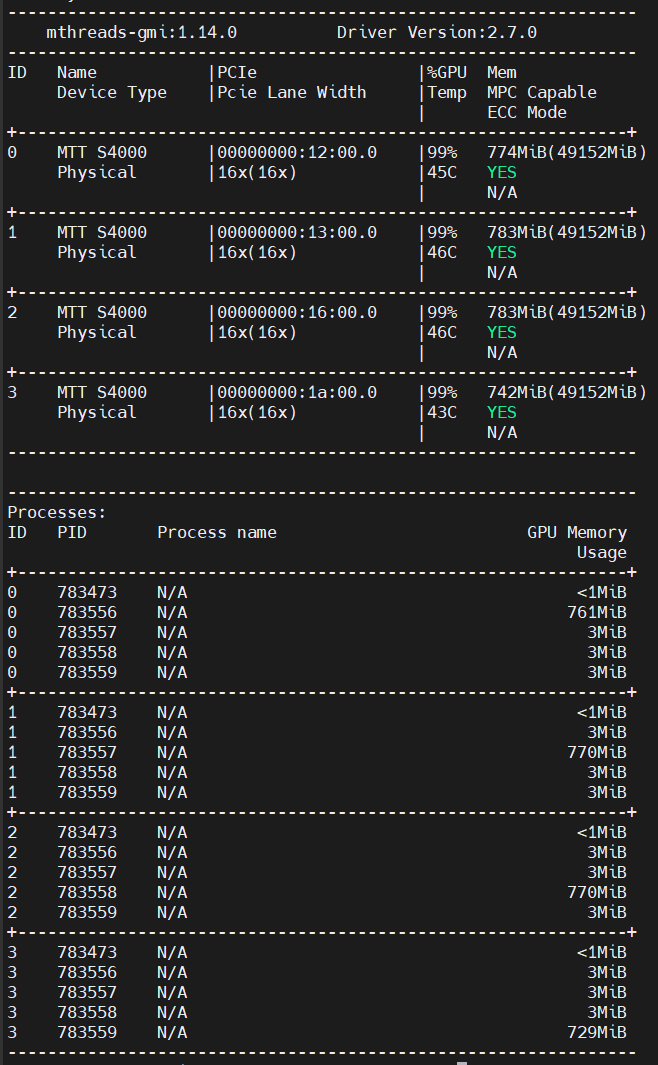

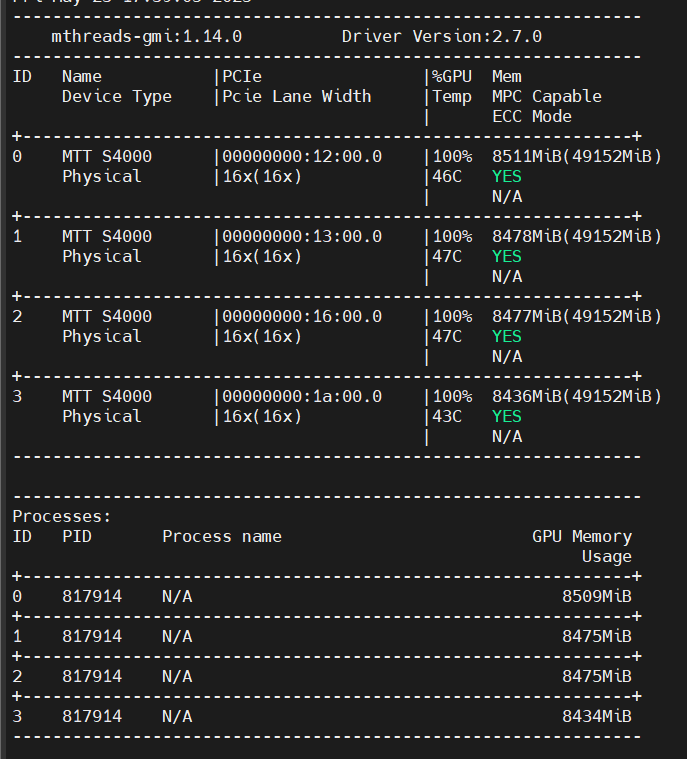

显卡运行状态

输入如下命令

mthreads-gmi

即可查看当前显卡运行状态

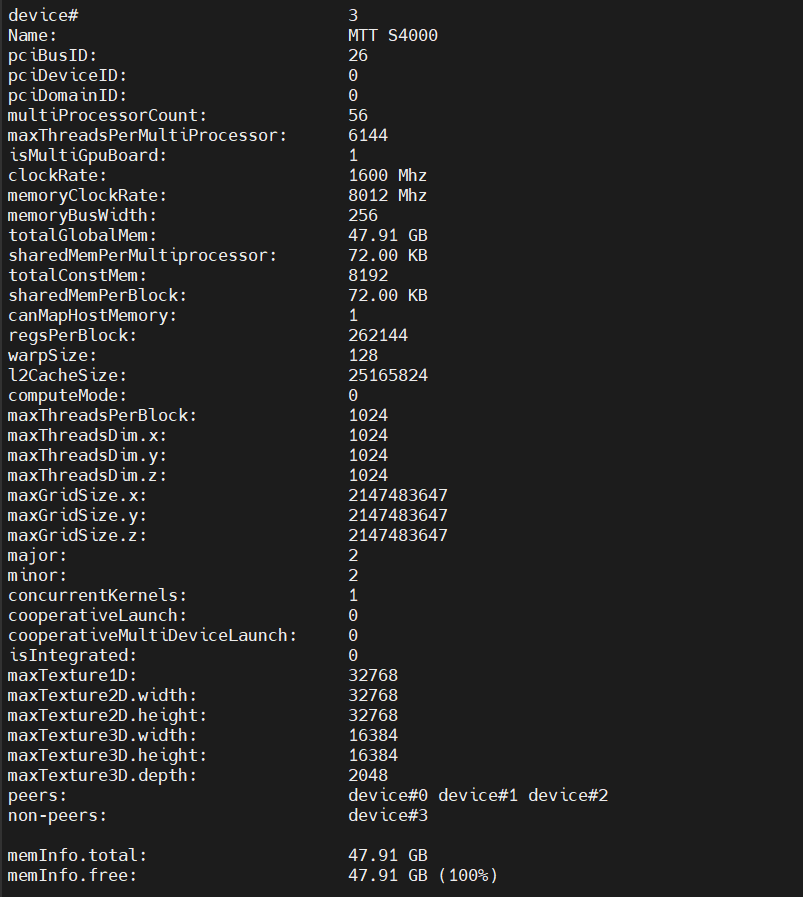

查看当前GPU详细信息

输入

musaInfo即可

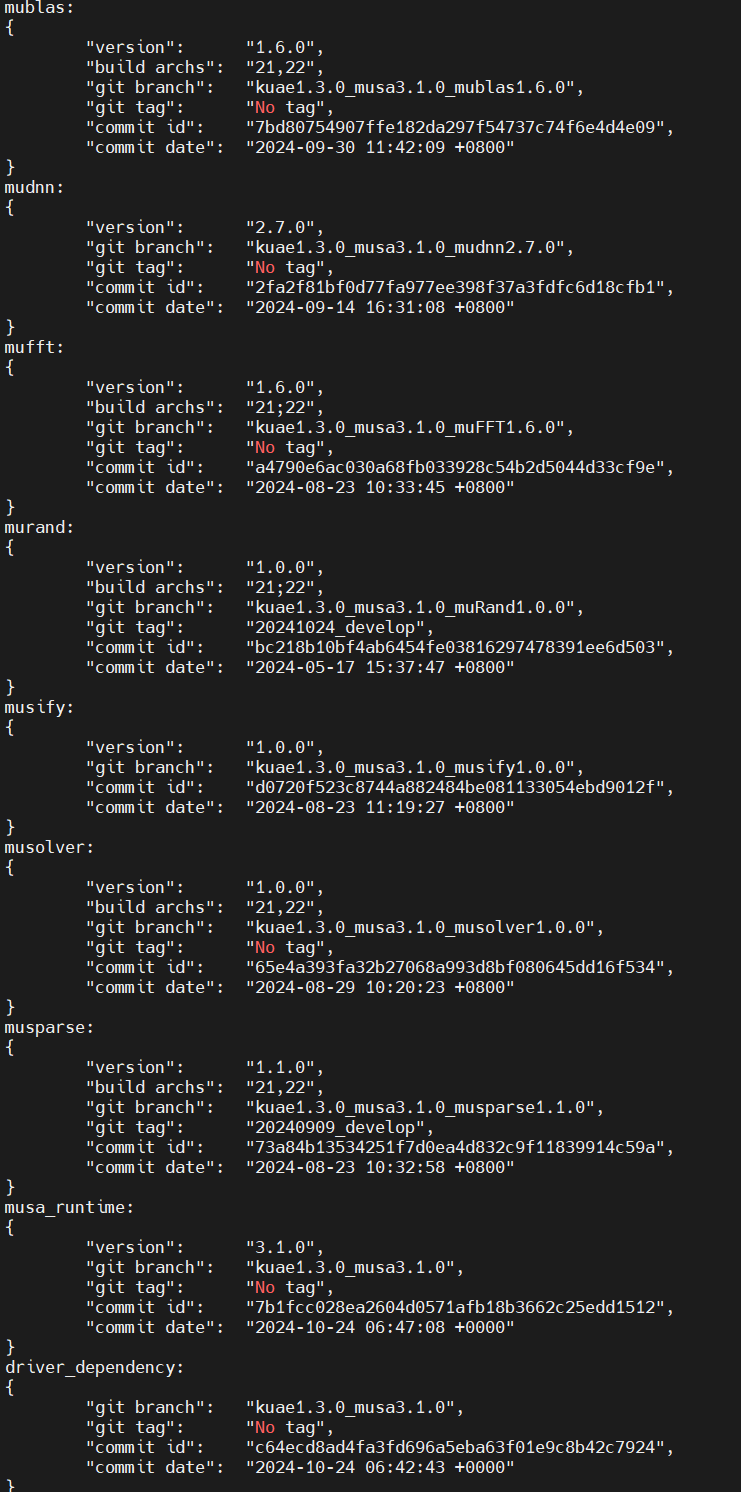

查看当前运行环境版本

输入

musa_version_query

即可查看当前运行环境版本

Pytorch部分

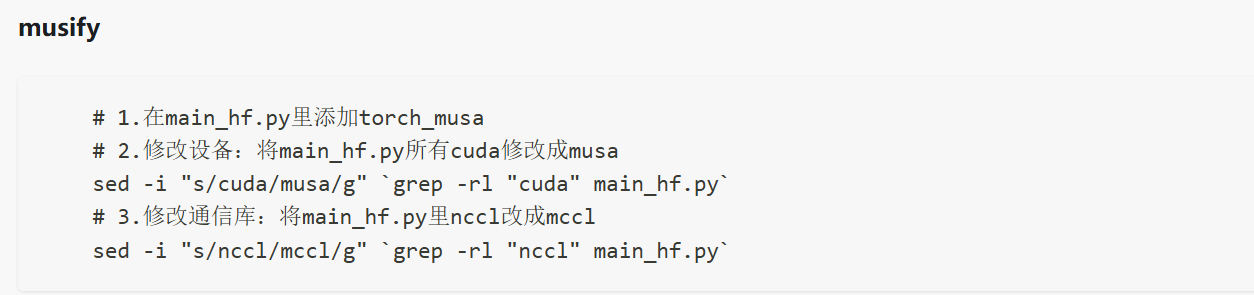

转义

根据官网介绍,对于pytorch代码,只需要正确import torch_musa的拓展插件,并且将代码中的所有cuda->musa,将所有的nccl->mccl即可。

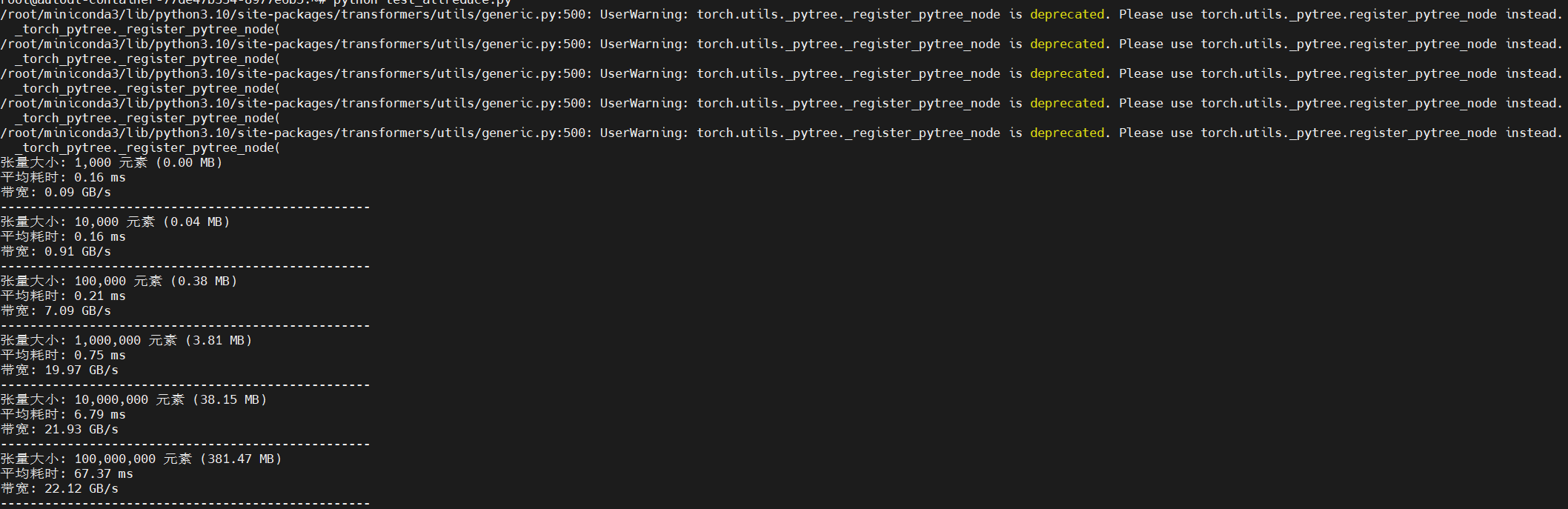

实测

作者使用豆包随机生成了一个测试allreduce的pytorch代码,代码如下,在经过上述转译后能正常运行

import os

import time

import argparse

import torch

import torch_musa

import torch.distributed as dist

from torch.nn.parallel import DistributedDataParallel as DDP

def setup(rank, world_size):

os.environ['MASTER_ADDR'] = 'localhost'

os.environ['MASTER_PORT'] = '12355'

# 初始化MUSA分布式环境

dist.init_process_group("mccl", rank=rank, world_size=world_size)

torch.musa.set_device(rank)

def cleanup():

dist.destroy_process_group()

def run_benchmark(rank, world_size, sizes, num_iters=100, warmup=20):

setup(rank, world_size)

for size in sizes:

# 创建随机张量(使用MUSA设备)

tensor = torch.rand(size, device=f'musa:{rank}')

# 预热

for _ in range(warmup):

dist.all_reduce(tensor)

torch.musa.synchronize()

# 测量时间

start_time = time.time()

for _ in range(num_iters):

dist.all_reduce(tensor)

torch.musa.synchronize()

end_time = time.time()

# 计算统计信息

total_time = end_time - start_time

avg_time = total_time / num_iters

size_mb = size * 4 / (1024 * 1024) # float32是4字节

bandwidth = (size_mb * world_size) / avg_time # MB/s

if rank == 0:

print(f"张量大小: {size:,} 元素 ({size_mb:.2f} MB)")

print(f"平均耗时: {avg_time * 1000:.2f} ms")

print(f"带宽: {bandwidth / 1024:.2f} GB/s")

print("-" * 50)

cleanup()

def main():

parser = argparse.ArgumentParser()

parser.add_argument('--sizes', type=int, nargs='+',

default=[1000, 10000, 100000, 1000000, 10000000, 100000000],

metavar='N',

help='测试的张量大小列表')

parser.add_argument('--num-iters', type=int, default=100,

help='每个大小的迭代次数')

parser.add_argument('--warmup', type=int, default=20,

help='预热迭代次数')

args = parser.parse_args()

world_size = torch.musa.device_count()

if world_size != 4:

raise ValueError("此脚本需要4个MUSA GPU,但发现 {} 个GPU".format(world_size))

import torch.multiprocessing as mp

mp.spawn(run_benchmark,

args=(world_size, args.sizes, args.num_iters, args.warmup),

nprocs=world_size,

join=True)

if __name__ == "__main__":

main()

MUSA编程

p2p通信部分

代码参考

笔者按照英伟达cudasamples仓库中的p2pbandwidthtest 代码,cuda-samples/Samples/5_Domain_Specific/p2pBandwidthLatencyTest at master · NVIDIA/cuda-samples · GitHub

并且参考相应的musa event api与mublasapi

https://docs.mthreads.com/musa-sdk/musa-sdk-doc-online/api/mcc_um.zh-CN

编写了一个适用于摩尔线程的p2p通信检测验证程序

代码部分

#include <cstdio>

#include <vector>

#include <musa_runtime.h> // 假设 MUSA 头文件

using namespace std;

const char *sSampleName = "P2P (Peer-to-Peer) GPU Bandwidth Latency Test";

typedef enum {

P2P_WRITE = 0,

P2P_READ = 1,

} P2PDataTransfer;

typedef enum {

CE = 0,

SM = 1,

} P2PEngine;

P2PEngine p2p_mechanism = CE; // 默认使用 Copy Engine

// 错误检查宏

#define musaCheckError() \

{ \

musaError_t e = musaGetLastError(); \

if (e != musaSuccess) { \

printf("MUSA failure %s:%d: '%s'\n", __FILE__, __LINE__, musaGetErrorString(e)); \

exit(EXIT_FAILURE); \

} \

}

// 延迟内核

__global__ void delay(volatile int *flag, unsigned long long timeout_clocks = 10000000)

{

// 等待应用程序通知我们它已经完成了实验的排队,或者超时并退出,允许应用程序继续执行

long long int start_clock, sample_clock;

start_clock = clock64();

while (!*flag) {

sample_clock = clock64();

if (sample_clock - start_clock > timeout_clocks) {

break;

}

}

}

// P2P 复制内核

__global__ void copyp2p(int4 *__restrict__ dest, const int4 *__restrict__ src, size_t num_elems) {

size_t globalId = blockIdx.x * blockDim.x + threadIdx.x;

size_t gridSize = blockDim.x * gridDim.x;

#pragma unroll 5 // 移除括号

for (size_t i = globalId; i < num_elems; i += gridSize) {

dest[i] = src[i];

}

}

// 打印帮助信息

void printHelp(void) {

printf("Usage: p2pBandwidthLatencyTest [OPTION]...\n");

printf("Tests bandwidth/latency of GPU pairs using P2P and without P2P\n");

printf("\n");

printf("Options:\n");

printf("--help\t\tDisplay this help menu\n");

printf("--p2p_read\tUse P2P reads for data transfers between GPU pairs\n");

printf("--sm_copy\tUse SM intiated p2p transfers instead of Copy Engine\n");

printf("--numElems=<NUM_OF_INT_ELEMS> Number of integer elements for p2p copy\n");

}

// 检查P2P访问

void checkP2Paccess(int numGPUs) {

for (int i = 0; i < numGPUs; i++) {

musaSetDevice(i);

musaCheckError();

for (int j = 0; j < numGPUs; j++) {

if (i != j) {

int access;

musaDeviceCanAccessPeer(&access, i, j);

musaCheckError();

printf("Device=%d %s Access Peer Device=%d\n", i, access ? "CAN" : "CANNOT", j);

}

}

}

printf("\n***NOTE: Devices without P2P access fall back to normal memcpy.\n");

}

// 执行P2P复制

void performP2PCopy(int *dest, int destDevice, int *src, int srcDevice,

size_t num_elems, int repeat, bool p2paccess,

musaStream_t streamToRun) {

int blockSize, numBlocks;

musaOccupancyMaxPotentialBlockSize(&numBlocks, &blockSize, copyp2p);

musaCheckError();

if (p2p_mechanism == SM && p2paccess) {

for (int r = 0; r < repeat; r++) {

copyp2p<<<numBlocks, blockSize, 0, streamToRun>>>((int4*)dest, (int4*)src, num_elems/4);

}

} else {

for (int r = 0; r < repeat; r++) {

musaMemcpyPeerAsync(dest, destDevice, src, srcDevice,

sizeof(int)*num_elems, streamToRun);

musaCheckError();

}

}

}

// 输出带宽矩阵

void outputBandwidthMatrix(int numElems, int numGPUs, bool p2p, P2PDataTransfer p2p_method) {

int repeat = 5;

volatile int *flag = NULL;

vector<int *> buffers(numGPUs);

vector<int *> buffersD2D(numGPUs);

vector<musaEvent_t> start(numGPUs);

vector<musaEvent_t> stop(numGPUs);

vector<musaStream_t> stream(numGPUs);

musaHostAlloc((void **)&flag, sizeof(*flag), musaHostAllocPortable);

musaCheckError();

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaStreamCreateWithFlags(&stream[d], musaStreamNonBlocking);

musaMalloc(&buffers[d], numElems * sizeof(int));

musaMemset(buffers[d], 0, numElems * sizeof(int));

musaMalloc(&buffersD2D[d], numElems * sizeof(int));

musaMemset(buffersD2D[d], 0, numElems * sizeof(int));

musaCheckError();

musaEventCreate(&start[d]);

musaCheckError();

musaEventCreate(&stop[d]);

musaCheckError();

}

vector<double> bandwidthMatrix(numGPUs * numGPUs);

for (int i = 0; i < numGPUs; i++) {

musaSetDevice(i);

for (int j = 0; j < numGPUs; j++) {

int access = 0;

if (p2p) {

musaDeviceCanAccessPeer(&access, i, j);

if (access) {

musaDeviceEnablePeerAccess(j, 0);

musaCheckError();

musaSetDevice(j);

musaDeviceEnablePeerAccess(i, 0);

musaCheckError();

musaSetDevice(i);

musaCheckError();

}

}

musaStreamSynchronize(stream[i]);

musaCheckError();

// 阻塞流,直到所有工作排队完成

*flag = 0;

delay<<<1, 1, 0, stream[i]>>>(flag);

musaCheckError();

musaEventRecord(start[i], stream[i]);

musaCheckError();

if (i == j) {

performP2PCopy(buffers[i], i, buffersD2D[i], i, numElems, repeat, access, stream[i]);

}

else {

if (p2p_method == P2P_WRITE) {

performP2PCopy(buffers[j], j, buffers[i], i, numElems, repeat, access, stream[i]);

}

else {

performP2PCopy(buffers[i], i, buffers[j], j, numElems, repeat, access, stream[i]);

}

}

musaEventRecord(stop[i], stream[i]);

musaCheckError();

// 释放排队的事件

*flag = 1;

musaStreamSynchronize(stream[i]);

musaCheckError();

float time_ms;

musaEventElapsedTime(&time_ms, start[i], stop[i]);

double time_s = time_ms / 1e3;

double gb = numElems * sizeof(int) * repeat / (double)1e9;

if (i == j) {

gb *= 2;

}

bandwidthMatrix[i * numGPUs + j] = gb / time_s;

if (p2p && access) {

musaDeviceDisablePeerAccess(j);

musaSetDevice(j);

musaDeviceDisablePeerAccess(i);

musaSetDevice(i);

musaCheckError();

}

}

}

printf(" D\\D");

for (int j = 0; j < numGPUs; j++) {

printf("%6d ", j);

}

printf("\n");

for (int i = 0; i < numGPUs; i++) {

printf("%6d ", i);

for (int j = 0; j < numGPUs; j++) {

printf("%6.02f ", bandwidthMatrix[i * numGPUs + j]);

}

printf("\n");

}

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaFree(buffers[d]);

musaFree(buffersD2D[d]);

musaCheckError();

musaEventDestroy(start[d]);

musaCheckError();

musaEventDestroy(stop[d]);

musaCheckError();

musaStreamDestroy(stream[d]);

musaCheckError();

}

musaFreeHost((void *)flag);

musaCheckError();

}

// 输出双向带宽矩阵

void outputBidirectionalBandwidthMatrix(int numElems, int numGPUs, bool p2p) {

int repeat = 5;

volatile int *flag = NULL;

vector<int *> buffers(numGPUs);

vector<int *> buffersD2D(numGPUs);

vector<musaEvent_t> start(numGPUs);

vector<musaEvent_t> stop(numGPUs);

vector<musaStream_t> stream0(numGPUs);

vector<musaStream_t> stream1(numGPUs);

musaHostAlloc((void **)&flag, sizeof(*flag), musaHostAllocPortable);

musaCheckError();

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaMalloc(&buffers[d], numElems * sizeof(int));

musaMemset(buffers[d], 0, numElems * sizeof(int));

musaMalloc(&buffersD2D[d], numElems * sizeof(int));

musaMemset(buffersD2D[d], 0, numElems * sizeof(int));

musaCheckError();

musaEventCreate(&start[d]);

musaCheckError();

musaEventCreate(&stop[d]);

musaCheckError();

musaStreamCreateWithFlags(&stream0[d], musaStreamNonBlocking);

musaCheckError();

musaStreamCreateWithFlags(&stream1[d], musaStreamNonBlocking);

musaCheckError();

}

vector<double> bandwidthMatrix(numGPUs * numGPUs);

for (int i = 0; i < numGPUs; i++) {

musaSetDevice(i);

for (int j = 0; j < numGPUs; j++) {

int access = 0;

if (p2p) {

musaDeviceCanAccessPeer(&access, i, j);

if (access) {

musaSetDevice(i);

musaDeviceEnablePeerAccess(j, 0);

musaCheckError();

musaSetDevice(j);

musaDeviceEnablePeerAccess(i, 0);

musaCheckError();

}

}

musaSetDevice(i);

musaStreamSynchronize(stream0[i]);

musaStreamSynchronize(stream1[j]);

musaCheckError();

// 阻塞流,直到所有工作排队完成

*flag = 0;

musaSetDevice(i);

// 无需阻塞 stream1,因为它会在 stream0 的事件上阻塞

delay<<<1, 1, 0, stream0[i]>>>(flag);

musaCheckError();

// 强制 stream1 在 stream0 开始之前不启动,以确保 stream0 上的事件完全涵盖所有操作所需的时间

musaEventRecord(start[i], stream0[i]);

musaStreamWaitEvent(stream1[j], start[i], 0);

if (i == j) {

// 对于 GPU 内操作,执行 2 次内存复制 buffersD2D <-> buffers

performP2PCopy(buffers[i], i, buffersD2D[i], i, numElems, repeat, access, stream0[i]);

performP2PCopy(buffersD2D[i], i, buffers[i], i, numElems, repeat, access, stream1[i]);

}

else {

if (access && p2p_mechanism == SM) {

musaSetDevice(j);

}

performP2PCopy(buffers[i], i, buffers[j], j, numElems, repeat, access, stream1[j]);

if (access && p2p_mechanism == SM) {

musaSetDevice(i);

}

performP2PCopy(buffers[j], j, buffers[i], i, numElems, repeat, access, stream0[i]);

}

// 通知 stream0 stream1 已完成,并记录总事务的时间

musaEventRecord(stop[j], stream1[j]);

musaStreamWaitEvent(stream0[i], stop[j], 0);

musaEventRecord(stop[i], stream0[i]);

// 释放排队的操作

*flag = 1;

musaStreamSynchronize(stream0[i]);

musaStreamSynchronize(stream1[j]);

musaCheckError();

float time_ms;

musaEventElapsedTime(&time_ms, start[i], stop[i]);

double time_s = time_ms / 1e3;

double gb = 2.0 * numElems * sizeof(int) * repeat / (double)1e9;

if (i == j) {

gb *= 2;

}

bandwidthMatrix[i * numGPUs + j] = gb / time_s;

if (p2p && access) {

musaSetDevice(i);

musaDeviceDisablePeerAccess(j);

musaSetDevice(j);

musaDeviceDisablePeerAccess(i);

}

}

}

printf(" D\\D");

for (int j = 0; j < numGPUs; j++) {

printf("%6d ", j);

}

printf("\n");

for (int i = 0; i < numGPUs; i++) {

printf("%6d ", i);

for (int j = 0; j < numGPUs; j++) {

printf("%6.02f ", bandwidthMatrix[i * numGPUs + j]);

}

printf("\n");

}

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaFree(buffers[d]);

musaFree(buffersD2D[d]);

musaCheckError();

musaEventDestroy(start[d]);

musaCheckError();

musaEventDestroy(stop[d]);

musaCheckError();

musaStreamDestroy(stream0[d]);

musaCheckError();

musaStreamDestroy(stream1[d]);

musaCheckError();

}

musaFreeHost((void *)flag);

musaCheckError();

}

// 输出延迟矩阵

void outputLatencyMatrix(int numGPUs, bool p2p, P2PDataTransfer p2p_method) {

int repeat = 100;

int numElems = 4; // 执行 1 个 int4 传输

volatile int *flag = NULL;

vector<int *> buffers(numGPUs);

vector<int *> buffersD2D(numGPUs); // 用于 D2D(即 GPU 内复制)的缓冲区

vector<musaStream_t> stream(numGPUs);

vector<musaEvent_t> start(numGPUs);

vector<musaEvent_t> stop(numGPUs);

musaHostAlloc((void **)&flag, sizeof(*flag), musaHostAllocPortable);

musaCheckError();

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaStreamCreateWithFlags(&stream[d], musaStreamNonBlocking);

musaMalloc(&buffers[d], sizeof(int) * numElems);

musaMemset(buffers[d], 0, sizeof(int) * numElems);

musaMalloc(&buffersD2D[d], sizeof(int) * numElems);

musaMemset(buffersD2D[d], 0, sizeof(int) * numElems);

musaCheckError();

musaEventCreate(&start[d]);

musaCheckError();

musaEventCreate(&stop[d]);

musaCheckError();

}

vector<double> gpuLatencyMatrix(numGPUs * numGPUs);

vector<double> cpuLatencyMatrix(numGPUs * numGPUs);

for (int i = 0; i < numGPUs; i++) {

musaSetDevice(i);

for (int j = 0; j < numGPUs; j++) {

int access = 0;

if (p2p) {

musaDeviceCanAccessPeer(&access, i, j);

if (access) {

musaDeviceEnablePeerAccess(j, 0);

musaCheckError();

musaSetDevice(j);

musaDeviceEnablePeerAccess(i, 0);

musaSetDevice(i);

musaCheckError();

}

}

musaStreamSynchronize(stream[i]);

musaCheckError();

// 阻塞流,直到所有工作排队完成

*flag = 0;

delay<<<1, 1, 0, stream[i]>>>(flag);

musaCheckError();

musaEventRecord(start[i], stream[i]);

if (i == j) {

// 执行 GPU 内的 D2D 复制

performP2PCopy(buffers[i], i, buffersD2D[i], i, numElems, repeat, access, stream[i]);

}

else {

if (p2p_method == P2P_WRITE) {

performP2PCopy(buffers[j], j, buffers[i], i, numElems, repeat, access, stream[i]);

}

else {

performP2PCopy(buffers[i], i, buffers[j], j, numElems, repeat, access, stream[i]);

}

}

musaEventRecord(stop[i], stream[i]);

// 现在工作已经排队完成,释放流

*flag = 1;

musaStreamSynchronize(stream[i]);

musaCheckError();

float gpu_time_ms;

musaEventElapsedTime(&gpu_time_ms, start[i], stop[i]);

gpuLatencyMatrix[i * numGPUs + j] = gpu_time_ms * 1e3 / repeat;

if (p2p && access) {

musaDeviceDisablePeerAccess(j);

musaSetDevice(j);

musaDeviceDisablePeerAccess(i);

musaSetDevice(i);

musaCheckError();

}

}

}

printf(" GPU");

for (int j = 0; j < numGPUs; j++) {

printf("%6d ", j);

}

printf("\n");

for (int i = 0; i < numGPUs; i++) {

printf("%6d ", i);

for (int j = 0; j < numGPUs; j++) {

printf("%6.02f ", gpuLatencyMatrix[i * numGPUs + j]);

}

printf("\n");

}

for (int d = 0; d < numGPUs; d++) {

musaSetDevice(d);

musaFree(buffers[d]);

musaFree(buffersD2D[d]);

musaCheckError();

musaEventDestroy(start[d]);

musaCheckError();

musaEventDestroy(stop[d]);

musaCheckError();

musaStreamDestroy(stream[d]);

musaCheckError();

}

musaFreeHost((void *)flag);

musaCheckError();

}

// 主函数

int main(int argc, char **argv) {

int numGPUs, numElems = 40000000;

P2PDataTransfer p2p_method = P2P_WRITE;

musaGetDeviceCount(&numGPUs);

musaCheckError();

// 处理命令行参数

for (int i = 1; i < argc; i++) {

if (strcmp(argv[i], "--help") == 0) {

printHelp();

return 0;

} else if (strcmp(argv[i], "--p2p_read") == 0) {

p2p_method = P2P_READ;

} else if (strcmp(argv[i], "--sm_copy") == 0) {

p2p_mechanism = SM;

} else if (strncmp(argv[i], "--numElems=", 11) == 0) {

numElems = atoi(argv[i] + 11);

}

}

printf("[%s]\n", sSampleName);

// 输出设备信息

for (int i = 0; i < numGPUs; i++) {

musaDeviceProp prop;

musaGetDeviceProperties(&prop, i);

printf("Device: %d, %s, pciBusID: %x, pciDeviceID: %x, pciDomainID:%x\n",

i, prop.name, prop.pciBusID, prop.pciDeviceID, prop.pciDomainID);

}

checkP2Paccess(numGPUs);

// 输出P2P连接矩阵

printf("P2P Connectivity Matrix\n");

printf(" D\\D");

for (int j = 0; j < numGPUs; j++) {

printf("%6d", j);

}

printf("\n");

for (int i = 0; i < numGPUs; i++) {

printf("%6d\t", i);

for (int j = 0; j < numGPUs; j++) {

if (i != j) {

int access;

musaDeviceCanAccessPeer(&access, i, j);

printf("%6d", (access) ? 1 : 0);

} else {

printf("%6d", 1);

}

}

printf("\n");

}

// 输出各种测试结果

printf("Unidirectional P2P=Disabled Bandwidth Matrix (GB/s)\n");

outputBandwidthMatrix(numElems, numGPUs, false, P2P_WRITE);

printf("Unidirectional P2P=Enabled Bandwidth (P2P Writes) Matrix (GB/s)\n");

outputBandwidthMatrix(numElems, numGPUs, true, P2P_WRITE);

if (p2p_method == P2P_READ) {

printf("Unidirectional P2P=Enabled Bandwidth (P2P Reads) Matrix (GB/s)\n");

outputBandwidthMatrix(numElems, numGPUs, true, p2p_method);

}

printf("Bidirectional P2P=Disabled Bandwidth Matrix (GB/s)\n");

outputBidirectionalBandwidthMatrix(numElems, numGPUs, false);

printf("Bidirectional P2P=Enabled Bandwidth Matrix (GB/s)\n");

outputBidirectionalBandwidthMatrix(numElems, numGPUs, true);

printf("P2P=Disabled Latency Matrix (us)\n");

outputLatencyMatrix(numGPUs, false, P2P_WRITE);

printf("P2P=Enabled Latency (P2P Writes) Matrix (us)\n");

outputLatencyMatrix(numGPUs, true, P2P_WRITE);

if (p2p_method == P2P_READ) {

printf("P2P=Enabled Latency (P2P Reads) Matrix (us)\n");

outputLatencyMatrix(numGPUs, true, p2p_method);

}

printf("\nNOTE: Results may vary when GPU Boost is enabled.\n");

return 0;

}

编译

参考mcc编译手册,此时代码中引用的库为musa_runtime,则编译是-l参数后跟musart

mcc p2p.mu -o p2p -lmusart

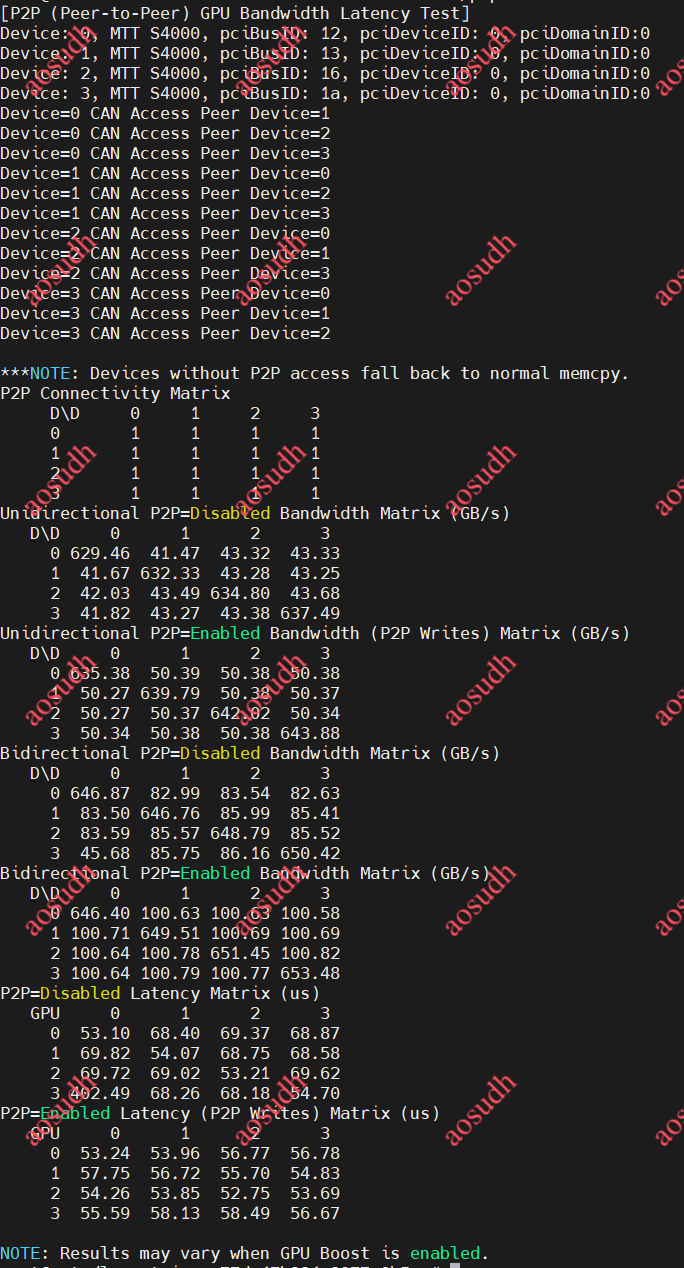

结果

可以看到p2p已经正确开启,但是延迟测试有问题,后续改进

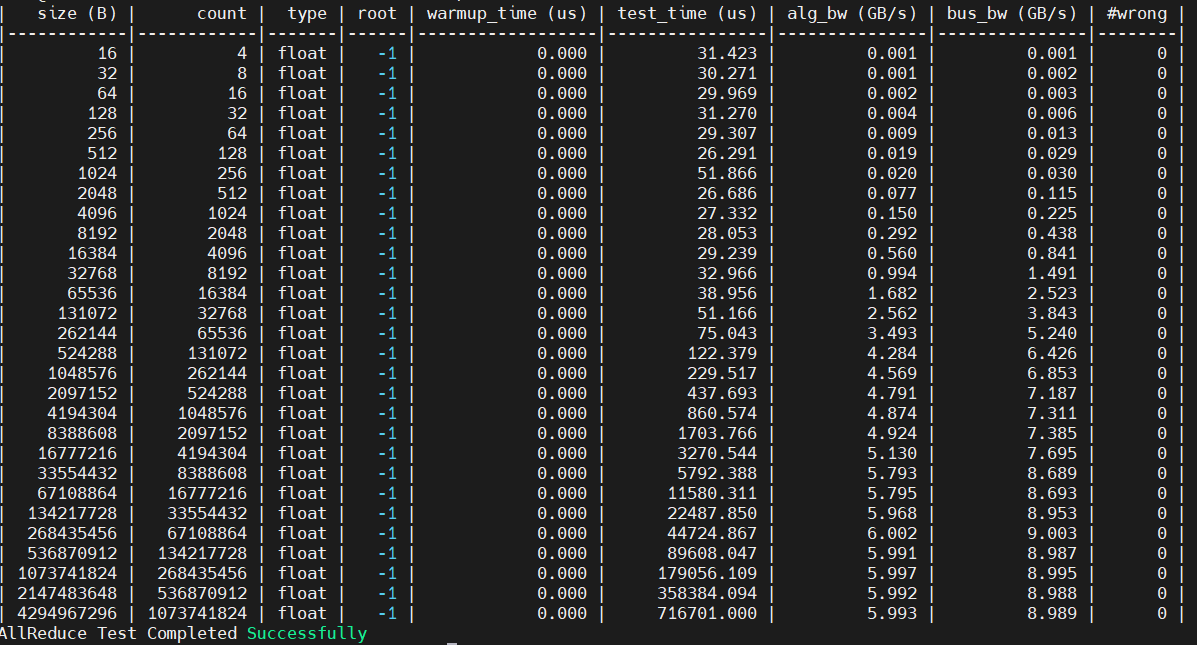

基于musa编程的allreduce测试

代码参考

主要参考了NCCLtest中的allreduce部分逻辑

GitHub - NVIDIA/nccl-tests: NCCL Tests

并且参考了mublas api设计

https://docs.mthreads.com/musa-sdk/musa-sdk-doc-online/api/mublas_api

代码部分

#include <stdio.h>

#include <stdlib.h>

#include <math.h>

#include "musa_runtime.h"

#include "mccl.h"

#include <inttypes.h> // 必须包含此头文件

// 宏定义(所有标识符在此处声明)

#define MIN_SIZE_B 16ULL // 最小测试尺寸(16字节)

#define MAX_SIZE_B (4096ULL * 1024ULL * 1024ULL) // 最大测试尺寸(4096MB)

#define STEP_FACTOR 2ULL // 尺寸增长因子(每次翻倍)

#define WARMUP_ITERS 5 // 热身迭代次数

#define TEST_ITERS 20 // 测试迭代次数

#define ROOT_RANK -1 // 根节点(-1表示全归约)

#define DATA_TYPE mcclFloat // 数据类型

#define REDUCTION_OP mcclSum // 归约操作

#define FLOAT_SIZE sizeof(float) // float类型字节数(4字节)

// 错误检查宏

#define MUSACHECK(cmd) do { \

musaError_t err = cmd; \

if (err != musaSuccess) { \

printf("MUSA Error at %s:%d: %s\n", __FILE__, __LINE__, musaGetErrorString(err)); \

exit(EXIT_FAILURE); \

} \

} while(0)

#define MCCLCHECK(cmd) do { \

mcclResult_t res = cmd; \

if (res != mcclSuccess) { \

printf("MCCL Error at %s:%d: %s\n", __FILE__, __LINE__, mcclGetErrorString(res)); \

exit(EXIT_FAILURE); \

} \

} while(0)

// 带宽计算函数

void calculate_bandwidth(size_t count, int type_size, double time_sec, double* alg_bw, double* bus_bw, int nranks) {

if (time_sec <= 0 || count == 0) {

*alg_bw = 0.0;

*bus_bw = 0.0;

return;

}

double data_size_gb = (double)(count * type_size) / 1e9;

*alg_bw = data_size_gb / time_sec;

double factor = (nranks > 1) ? (2.0 * (nranks - 1)) / nranks : 1.0;

*bus_bw = *alg_bw * factor;

}

int main(int argc, char* argv[]) {

int nDev = 4; // 设备数量

int devs[4] = {0, 1, 2, 3}; // 设备ID列表

mcclComm_t comms[4]; // MCCL通信器

musaStream_t streams[4]; // 流数组

float** sendbuff = NULL; // 发送缓冲区

float** recvbuff = NULL; // 接收缓冲区

size_t current_size_b = MIN_SIZE_B; // 当前测试尺寸(字节)

double alg_bw, bus_bw; // 算法带宽和总线带宽

int test_wrong = 0; // 错误计数

// 初始化MCCL通信器

MCCLCHECK(mcclCommInitAll(comms, nDev, devs));

// 分配设备内存并创建流

sendbuff = (float**)malloc(nDev * sizeof(float*));

recvbuff = (float**)malloc(nDev * sizeof(float*));

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

MUSACHECK(musaMalloc(&sendbuff[i], MAX_SIZE_B)); // 分配最大尺寸内存

MUSACHECK(musaMalloc(&recvbuff[i], MAX_SIZE_B));

MUSACHECK(musaStreamCreate(&streams[i])); // 创建独立流

}

// 打印结果表头

printf("| %10s | %10s | %5s | %4s | %14s | %13s | %13s | %13s | %5s |\n",

"size (B)", "count", "type", "root", "warmup_time (us)", "test_time (us)", "alg_bw (GB/s)", "bus_bw (GB/s)", "#wrong");

printf("|------------|------------|-------|------|------------------|----------------|---------------|---------------|--------|\n");

// 尺寸循环测试

while (current_size_b <= MAX_SIZE_B) {

size_t element_count = current_size_b / FLOAT_SIZE; // 元素数量

// 跳过非对齐尺寸

if (current_size_b % FLOAT_SIZE != 0) {

current_size_b *= STEP_FACTOR;

continue;

}

// 初始化设备数据(通过主机内存正确赋值为1.0f)

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

float* host_buf = (float*)malloc(current_size_b);

for (size_t j = 0; j < element_count; ++j) host_buf[j] = 1.0f;

MUSACHECK(musaMemcpy(sendbuff[i], host_buf, current_size_b, musaMemcpyHostToDevice));

free(host_buf);

MUSACHECK(musaMemset(recvbuff[i], 0, current_size_b));

}

// 热身迭代(包含流同步)

for (int warmup = 0; warmup < WARMUP_ITERS; ++warmup) {

MCCLCHECK(mcclGroupStart());

for (int i = 0; i < nDev; ++i) {

MCCLCHECK(mcclAllReduce(

sendbuff[i], recvbuff[i],

element_count, DATA_TYPE, REDUCTION_OP,

comms[i], streams[i]

));

}

MCCLCHECK(mcclGroupEnd());

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

MUSACHECK(musaStreamSynchronize(streams[i]));

}

}

// 事件计时(仅在主设备0操作)

musaEvent_t start, stop;

MUSACHECK(musaSetDevice(0));

MUSACHECK(musaEventCreate(&start));

MUSACHECK(musaEventCreate(&stop));

MUSACHECK(musaEventRecord(start, streams[0]));

// 测试迭代(包含完整Group操作)

MCCLCHECK(mcclGroupStart());

for (int iter = 0; iter < TEST_ITERS; ++iter) {

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

MCCLCHECK(mcclAllReduce(

sendbuff[i], recvbuff[i],

element_count, DATA_TYPE, REDUCTION_OP,

comms[i], streams[i]

));

}

}

MCCLCHECK(mcclGroupEnd());

MUSACHECK(musaEventRecord(stop, streams[0]));

MUSACHECK(musaEventSynchronize(stop));

// 计算平均时间

float total_time_ms;

MUSACHECK(musaEventElapsedTime(&total_time_ms, start, stop));

double avg_time_us = (total_time_ms / TEST_ITERS) * 1000;

// 计算带宽

calculate_bandwidth(element_count, FLOAT_SIZE, avg_time_us / 1e6, &alg_bw, &bus_bw, nDev);

// 验证结果(允许浮点精度误差)

test_wrong = 0;

float expected = (float)nDev;

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

float* h_recv = (float*)malloc(current_size_b);

MUSACHECK(musaMemcpy(h_recv, recvbuff[i], current_size_b, musaMemcpyDeviceToHost));

for (size_t j = 0; j < element_count; ++j) {

if (fabs(h_recv[j] - expected) > 1e-6) test_wrong++;

}

free(h_recv);

}

// 打印结果

printf("| %10" PRIu64 " | %10" PRIu64 " | %4s | %4d | %16.3f | %14.3f | %13.3f | %13.3f | %6d |\n",

(uint64_t)current_size_b, (uint64_t)element_count, "float", ROOT_RANK, 0.0, avg_time_us, alg_bw, bus_bw, test_wrong);

// 销毁事件

MUSACHECK(musaSetDevice(0));

MUSACHECK(musaEventDestroy(start));

MUSACHECK(musaEventDestroy(stop));

// 增大测试尺寸

current_size_b *= STEP_FACTOR;

}

// 释放资源

for (int i = 0; i < nDev; ++i) {

MUSACHECK(musaSetDevice(i));

MUSACHECK(musaFree(sendbuff[i]));

MUSACHECK(musaFree(recvbuff[i]));

MUSACHECK(musaStreamDestroy(streams[i]));

mcclCommDestroy(comms[i]);

}

free(sendbuff);

free(recvbuff);

printf("AllReduce Test Completed Successfully\n");

return 0;

}编译

因为代码用了musa_runtime与mccl两个库,因此编译选项也会有所改变

mcc allreduce.mu -o allreduce -lmusart -lmccl

结果

不知道为什么结果测出来和用pytorch测出来结果相差不小,目测是因为musa event打点计时函数没使用正确(在p2p测试的自交中也有体现,不管什么情况都是50us左右),这个需要后续再看下

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?