step1: Download apache-hive-1.2.1-bin.tar.gz

step2: Upload it with rz to the target directory on the Linux host (/opt/software/)

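step3: Extract it: tar -zxvf apache-hive-1.2.1-bin.tar.gz -C /opt/module/ (the later steps use the path /opt/module/hive, so the extracted apache-hive-1.2.1-bin directory is assumed to have been renamed to hive)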

step4: Configure the Hive environment variable: vi /etc/profile

HIVE_HOME
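A minimal sketch of the lines step4 would add to /etc/profile, assuming Hive ends up in /opt/module/hive (the path used by the configuration files in the later steps). Appending the bin directory to PATH is an assumption made for convenience; the original only names HIVE_HOME.

```shell
# Assumed install location; adjust if Hive lives elsewhere.
export HIVE_HOME=/opt/module/hive
# Optional convenience (not in the original): put Hive's scripts on PATH.
export PATH="$PATH:$HIVE_HOME/bin"
```

After saving the file, running source /etc/profile makes the variables visible in the current shell.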

step5: Go to Hive's conf directory, rename hive-env.sh.template and hive-log4j.properties.template to hive-env.sh and hive-log4j.properties, and add a hive-site.xml file. Make sure the .xml extension is correct.
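The file preparation in step5 could be scripted roughly as below. This is only a sketch: prepare_hive_conf is a hypothetical helper name, the default directory /opt/module/hive/conf is taken from the paths used later in this post, and it copies the templates instead of renaming them so the originals stay available for reference.

```shell
# Hypothetical helper: prepare Hive's conf directory.
# $1 = conf directory (defaults to the path assumed in this post).
prepare_hive_conf() {
  conf_dir="${1:-/opt/module/hive/conf}"
  # Copy (rather than rename) the two templates to their active names.
  cp "$conf_dir/hive-env.sh.template" "$conf_dir/hive-env.sh" || return 1
  cp "$conf_dir/hive-log4j.properties.template" "$conf_dir/hive-log4j.properties" || return 1
  # Create an empty but well-formed hive-site.xml only if none exists yet.
  if [ ! -e "$conf_dir/hive-site.xml" ]; then
    printf '%s\n' '<?xml version="1.0"?>' '<configuration>' '</configuration>' \
      > "$conf_dir/hive-site.xml"
  fi
}
```

Calling prepare_hive_conf with no argument works on the default directory; the property entries still have to be filled in by hand.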

Configure hive-env.sh as follows:

# Set HADOOP_HOME to point to a specific hadoop install directory

HADOOP_HOME=/opt/module/hadoop-2.8.4

# Hive Configuration Directory can be controlled by:

export HIVE_CONF_DIR=/opt/module/hive/conf

Configure hive-log4j.properties as follows:

hive.log.dir=/opt/module/hive/logs

Configure hive-site.xml as follows:

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://bigdata121:3306/metastore121?createDatabaseIfNotExist=true</value>

<description>JDBC connect string for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>Driver class name for a JDBC metastore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>username to use against metastore database</description>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password to use against metastore database</description>

</property>

</configuration>

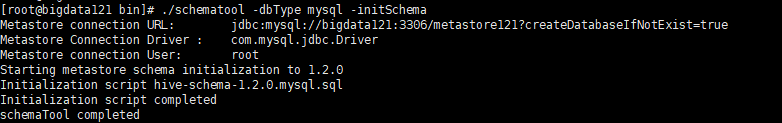

step6: Go to the bin directory and run:

./schematool -dbType mysql -initSchema

This may fail because the JDBC driver cannot be found. If so, copy the mysql-connector-java-5.1.27.jar package into Hive's lib directory.
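Copying the driver could look like the sketch below. install_jdbc_driver is a hypothetical helper name, and the default lib directory /opt/module/hive/lib is an assumption based on the paths used elsewhere in this post; where the jar was downloaded to is up to you.

```shell
# Hypothetical helper: copy a JDBC driver jar into Hive's lib directory.
# $1 = path to the jar, $2 = lib directory (assumed default below).
install_jdbc_driver() {
  jar="$1"
  lib_dir="${2:-/opt/module/hive/lib}"
  if [ ! -f "$jar" ]; then
    echo "driver jar not found: $jar" >&2
    return 1
  fi
  cp "$jar" "$lib_dir/"
}
```

For example, install_jdbc_driver /opt/software/mysql-connector-java-5.1.27.jar would work if the jar had been uploaded to /opt/software/ (an assumed location), after which schematool can be run again.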

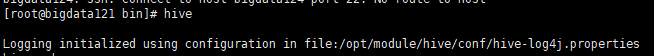

step7: Starting Hive produced the following error:

Logging initialized using configuration in file:/opt/module/hive/conf/hive-log4j.properties

Exception in thread "main" java.lang.RuntimeException: java.net.ConnectException: Call From bigdata121/192.168.1.121 to bigdata121:9000 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:522)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:677)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:621)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:234)

at org.apache.hadoop.util.RunJar.main(RunJar.java:148)

Caused by: java.net.ConnectException: Call From bigdata121/192.168.1.121 to bigdata121:9000 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:801)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:732)

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1493)

at org.apache.hadoop.ipc.Client.call(Client.java:1435)

at org.apache.hadoop.ipc.Client.call(Client.java:1345)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:227)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

at com.sun.proxy.$Proxy16.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:796)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:409)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:163)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:155)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:346)

at com.sun.proxy.$Proxy17.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1649)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1440)

at org.apache.hadoop.hdfs.DistributedFileSystem$27.doCall(DistributedFileSystem.java:1437)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1437)

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1437)

at org.apache.hadoop.hive.ql.session.SessionState.createRootHDFSDir(SessionState.java:596)

at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:554)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:508)

... 8 more

Caused by: java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:685)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:788)

at org.apache.hadoop.ipc.Client$Connection.access$3500(Client.java:410)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1550)

at org.apache.hadoop.ipc.Client.call(Client.java:1381)

... 32 more

The connection to bigdata121:9000 was refused because HDFS was not running, so start Hadoop with start-dfs.sh and start-yarn.sh.
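One way to confirm that the NameNode is up before launching Hive is to look for it in the output of jps, the JDK's Java process lister. The sketch below assumes jps prints one "pid ClassName" pair per line; namenode_running is a hypothetical helper name.

```shell
# Hypothetical helper: read jps output on stdin and succeed only if a
# process whose class name is exactly "NameNode" is listed.
# (SecondaryNameNode alone does not count.)
namenode_running() {
  awk '$2 == "NameNode" { found = 1 } END { exit !found }'
}
```

A possible use is jps | namenode_running || start-dfs.sh, which starts HDFS only when no NameNode process is found.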

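Because I had given hive-site.xml the wrong file extension, Hive did not pick it up and fell back to the default embedded Derby metastore, so schematool kept reporting: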

./schematool -dbType mysql -initSchema

Metastore connection URL: jdbc:derby:;databaseName=metastore_db;create=true

Metastore Connection Driver : org.apache.derby.jdbc.EmbeddedDriver

Metastore connection User: APP

org.apache.hadoop.hive.metastore.HiveMetaException: Failed to get schema version.

*** schemaTool failed ***
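The Derby URL in this output is the hint that hive-site.xml was not read. A quick check of the file name could look like the sketch below; check_hive_site is a hypothetical helper name and the default directory is the conf path assumed throughout this post.

```shell
# Hypothetical helper: verify that hive-site.xml exists under its exact name.
# $1 = conf directory (assumed default below).
check_hive_site() {
  conf_dir="${1:-/opt/module/hive/conf}"
  if [ -f "$conf_dir/hive-site.xml" ]; then
    echo "ok: $conf_dir/hive-site.xml"
    return 0
  fi
  echo "missing: $conf_dir/hive-site.xml" >&2
  # Show near-miss names such as hive-site.xml.txt, if there are any.
  ls "$conf_dir" 2>/dev/null | grep '^hive-site' >&2 || true
  return 1
}
```

If the helper reports a near-miss name, renaming that file to hive-site.xml and rerunning schematool should make the MySQL URL appear in place of the Derby one.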

step8: Start Hive again; the result is as follows

step9: Run schematool again

./schematool -dbType mysql -initSchema

step10: Log in to MySQL

step11: View the result in Navicat
