Reference blog attached.

The three concepts covered here are:

· The basic convolution operation

· receptive field

· feature maps

The basic convolution operation

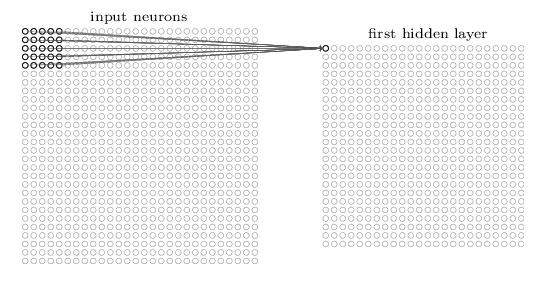

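Here the mapping from the input layer to the hidden layer is called a feature map. The weights w shared by the convolution kernel are called the shared weights, and b is called the shared bias. Together, the shared weights and shared bias define a kernel or filter. Every hidden-layer neuron uses the same weights w (25 of them in the figure) and the same bias b; in other words, the hidden-layer neurons share their weights.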
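To make weight sharing concrete, here is a minimal pure-Python sketch. The function name `conv2d_shared` and the 8x8 image and 5x5 kernel sizes are assumptions chosen for illustration; only the idea of 25 shared weights plus one shared bias comes from the text above.

```python
def conv2d_shared(image, kernel, bias):
    """Stride-1, no-padding convolution with ONE kernel and ONE bias."""
    f = len(kernel)
    out_h = len(image) - f + 1
    out_w = len(image[0]) - f + 1
    hidden = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            # Every hidden neuron reuses the same f*f weights and the same bias;
            # only the image neighborhood it looks at changes.
            total = bias
            for dy in range(f):
                for dx in range(f):
                    total += kernel[dy][dx] * image[y + dy][x + dx]
            row.append(total)
        hidden.append(row)
    return hidden


# Hypothetical sizes: an 8x8 image of ones and a 5x5 kernel (25 shared weights).
image = [[1.0] * 8 for _ in range(8)]
kernel = [[0.5] * 5 for _ in range(5)]
hidden = conv2d_shared(image, kernel, bias=0.5)
print(len(hidden), len(hidden[0]))  # 4 4
print(hidden[0][0])                 # 13.0 (25 * 0.5 + 0.5)
```

The whole hidden layer is parameterized by 26 numbers regardless of how large the image is, which is the point of sharing.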

For a $W_1 \times H_1$ image convolved with an $F \times F$ kernel at stride $S$, the output size is:

$$W_2 = (W_1 - F)/S + 1$$

$$H_2 = (H_1 - F)/S + 1$$

With zero padding of $P$ pixels on each side, the output size becomes:

$$W_2 = (W_1 - F + 2P)/S + 1$$

$$H_2 = (H_1 - F + 2P)/S + 1$$
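The output-size formula can be checked with a few lines of Python. This is a sketch, not a library API: the name `conv_output_size` is hypothetical, and rounding down when the stride does not divide evenly is an assumption (the formula above does not say what happens in that case).

```python
def conv_output_size(w1, f, s=1, p=0):
    """Output width for input width w1, kernel f, stride s, padding p.

    Assumption: when (w1 - f + 2p) is not a multiple of s, round down.
    """
    if w1 - f + 2 * p < 0:
        raise ValueError("kernel is larger than the padded input")
    return (w1 - f + 2 * p) // s + 1


print(conv_output_size(28, 5))          # 24
print(conv_output_size(224, 3, 1, 1))   # 224 (3x3 kernel, padding 1 keeps the size)
print(conv_output_size(224, 11, 4, 0))  # 54
```

The same function applies to the height by passing $H_1$ instead of $W_1$.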

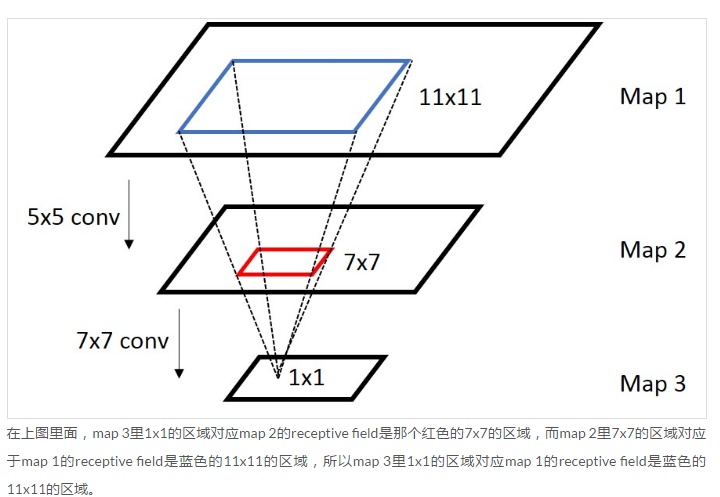

Receptive Field

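Put plainly, each pixel in convolutional layer i+1 is obtained by multiplying a pixel neighborhood of the layer-i feature map with the convolution kernel. That pixel neighborhood is the receptive field; informally, it is the matrix that gets multiplied with the kernel matrix.

Put another way: in a convolutional neural network (CNN), the size of the input-layer region that corresponds to one element of a layer's output is called the receptive field. The size of the receptive field is determined jointly by the kernel size, stride, padding, and output size.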

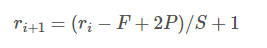

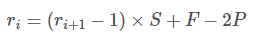

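Formalizing the convolution computation above (without padding): let $r_i$ be the size of the layer-$i$ feature map and $r_{i+1}$ the size of the layer-$(i+1)$ feature map, so that

$$r_{i+1} = (r_i - F)/S + 1$$

We can invert this to obtain the size $r_i$ from $r_{i+1}$:

$$r_i = (r_{i+1} - 1) \cdot S + F$$

When running the computation backward, if $r_{i+1}$ is set to some $n$ (with $n$ no larger than the feature map size of its layer), the resulting $r_i$ is the size of the corresponding receptive field in the preceding layer. This is the recurrence used by the `inFromOut` function in the code later in this post, starting from $r = 1$.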

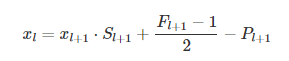

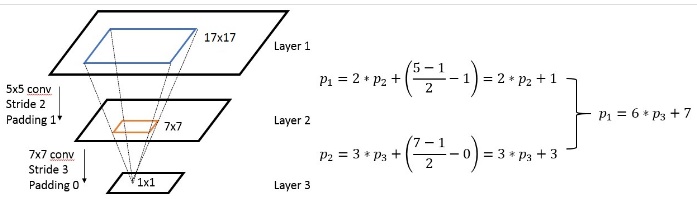

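The above computes the receptive field between different layers. Sometimes what we need instead is a coordinate mapping, namely the coordinates of the center of the receptive field. This mapping satisfies the following relation:

This gives the input-output coordinate mapping for any single layer. To obtain the mapping between arbitrary feature maps, simply compose the per-layer mappings. The figure below shows a simple example: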

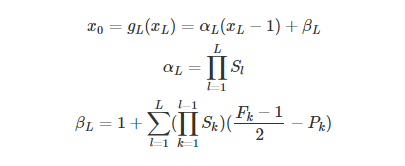
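As an illustration of composing per-layer coordinate mappings, here is a minimal sketch. It assumes zero-based coordinates and the per-layer relation `p_in = stride * p_out + (fsize - 1) / 2 - pad`, which follows from the fact that output index `j` covers input indices `j*stride - pad` through `j*stride - pad + fsize - 1`. The function name `rf_center` is hypothetical, and this form is not necessarily the exact one the references use.

```python
def rf_center(p_out, layers):
    """Center (zero-based, in input coordinates) of the receptive field of
    output index p_out, for a stack of [fsize, stride, pad] layers."""
    p = float(p_out)
    # Walk from the last layer back to the input, applying one mapping per layer.
    for fsize, stride, pad in reversed(layers):
        p = stride * p + (fsize - 1) / 2 - pad
    return p


alexnet_head = [[11, 4, 0], [3, 2, 0]]  # conv1, pool1
print(rf_center(0, alexnet_head))  # 9.0  (pool1[0] sees input pixels 0..18)
print(rf_center(1, alexnet_head))  # 17.0 (pool1[1] sees input pixels 8..26)
print(rf_center(0, [[3, 1, 1]]))   # 0.0  (a padded 3x3 conv is centered on its pixel)
```

Consecutive outputs are 8 input pixels apart here, which is the product of the two strides.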

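Summary formula:

I am not entirely clear on this myself yet, so here are a few references:

· One with code

· A Zhihu column

The code below is taken from the reference above.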

```python
# Each layer is described as [filter size, stride, padding].
net_struct = {
    'alexnet': {'net': [[11,4,0],[3,2,0],[5,1,2],[3,2,0],[3,1,1],[3,1,1],[3,1,1],[3,2,0]],
                'name': ['conv1','pool1','conv2','pool2','conv3','conv4','conv5','pool5']},
    'vgg16': {'net': [[3,1,1],[3,1,1],[2,2,0],[3,1,1],[3,1,1],[2,2,0],[3,1,1],[3,1,1],[3,1,1],
                      [2,2,0],[3,1,1],[3,1,1],[3,1,1],[2,2,0],[3,1,1],[3,1,1],[3,1,1],[2,2,0]],
              'name': ['conv1_1','conv1_2','pool1','conv2_1','conv2_2','pool2','conv3_1','conv3_2',
                       'conv3_3','pool3','conv4_1','conv4_2','conv4_3','pool4','conv5_1','conv5_2','conv5_3','pool5']},
    'zf-5': {'net': [[7,2,3],[3,2,1],[5,2,2],[3,2,1],[3,1,1],[3,1,1],[3,1,1]],
             'name': ['conv1','pool1','conv2','pool2','conv3','conv4','conv5']}}

imsize = 224

def outFromIn(isz, net, layernum):
    """Return (output size, total stride) after the first `layernum` layers."""
    totstride = 1
    insize = isz
    for layer in range(layernum):
        fsize, stride, pad = net[layer]
        # Floor division keeps sizes integral; Python 3's `/` would produce floats.
        outsize = (insize - fsize + 2*pad) // stride + 1
        insize = outsize
        totstride = totstride * stride
    return outsize, totstride

def inFromOut(net, layernum):
    """Return the receptive field size (in input pixels) of one output element."""
    RF = 1
    # Walk backward from layer `layernum` to the input.
    for layer in reversed(range(layernum)):
        fsize, stride, pad = net[layer]
        RF = ((RF - 1) * stride) + fsize
    return RF

if __name__ == '__main__':
    print("layer output sizes given image = %dx%d" % (imsize, imsize))
    for net in net_struct.keys():
        print('************net structure name is %s**************' % net)
        for i in range(len(net_struct[net]['net'])):
            p = outFromIn(imsize, net_struct[net]['net'], i+1)
            rf = inFromOut(net_struct[net]['net'], i+1)
            print("Layer Name = %s, Output size = %3d, Stride = % 3d, RF size = %3d" % (net_struct[net]['name'][i], p[0], p[1], rf))
```

```
layer output sizes given image = 224x224
************net structure name is alexnet**************
Layer Name = conv1, Output size = 54, Stride = 4, RF size = 11
Layer Name = pool1, Output size = 26, Stride = 8, RF size = 19
Layer Name = conv2, Output size = 26, Stride = 8, RF size = 51
Layer Name = pool2, Output size = 12, Stride = 16, RF size = 67
Layer Name = conv3, Output size = 12, Stride = 16, RF size = 99
Layer Name = conv4, Output size = 12, Stride = 16, RF size = 131
Layer Name = conv5, Output size = 12, Stride = 16, RF size = 163
Layer Name = pool5, Output size = 5, Stride = 32, RF size = 195
************net structure name is vgg16**************
Layer Name = conv1_1, Output size = 224, Stride = 1, RF size = 3
Layer Name = conv1_2, Output size = 224, Stride = 1, RF size = 5
Layer Name = pool1, Output size = 112, Stride = 2, RF size = 6
Layer Name = conv2_1, Output size = 112, Stride = 2, RF size = 10
Layer Name = conv2_2, Output size = 112, Stride = 2, RF size = 14
Layer Name = pool2, Output size = 56, Stride = 4, RF size = 16
Layer Name = conv3_1, Output size = 56, Stride = 4, RF size = 24
Layer Name = conv3_2, Output size = 56, Stride = 4, RF size = 32
Layer Name = conv3_3, Output size = 56, Stride = 4, RF size = 40
Layer Name = pool3, Output size = 28, Stride = 8, RF size = 44
Layer Name = conv4_1, Output size = 28, Stride = 8, RF size = 60
Layer Name = conv4_2, Output size = 28, Stride = 8, RF size = 76
Layer Name = conv4_3, Output size = 28, Stride = 8, RF size = 92
Layer Name = pool4, Output size = 14, Stride = 16, RF size = 100
Layer Name = conv5_1, Output size = 14, Stride = 16, RF size = 132
Layer Name = conv5_2, Output size = 14, Stride = 16, RF size = 164
Layer Name = conv5_3, Output size = 14, Stride = 16, RF size = 196
Layer Name = pool5, Output size = 7, Stride = 32, RF size = 212
************net structure name is zf-5**************
Layer Name = conv1, Output size = 112, Stride = 2, RF size = 7
Layer Name = pool1, Output size = 56, Stride = 4, RF size = 11
Layer Name = conv2, Output size = 28, Stride = 8, RF size = 27
Layer Name = pool2, Output size = 14, Stride = 16, RF size = 43
Layer Name = conv3, Output size = 14, Stride = 16, RF size = 75
Layer Name = conv4, Output size = 14, Stride = 16, RF size = 107
Layer Name = conv5, Output size = 14, Stride = 16, RF size = 139
```

Feature map

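Neurons in a feature map share their weight coefficients. Each neuron in a feature map corresponds to a neighborhood in the previous layer's feature map and reflects the response produced by convolving that neighborhood with the kernel. Because the response is local, a feature map records both the feature and its location, that is, where the response occurred.

How feature maps and receptive fields relate

In one sentence: the region of the input image that a feature map node's response corresponds to is that node's receptive field.

For example, if the first layer is a 3*3 convolution kernel, every node of the resulting feature map comes from convolving that 3*3 kernel with a 3*3 region of the original image, so we say the receptive field of each node in this feature map is 3*3.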
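The link between a feature map node and its receptive field can also be measured empirically: perturb one input position at a time and record which perturbations change a chosen output node. The sketch below does this in 1-D with all-ones kernels (so responses never cancel); the helper names `conv1d`, `forward`, and `measured_rf`, and the signal length of 32, are assumptions for illustration.

```python
def conv1d(signal, fsize, stride, pad):
    """1-D convolution with an all-ones kernel and zero padding."""
    padded = [0.0] * pad + list(signal) + [0.0] * pad
    n_out = (len(padded) - fsize) // stride + 1
    return [sum(padded[i * stride:i * stride + fsize]) for i in range(n_out)]


def forward(signal, layers):
    """Apply a stack of [fsize, stride, pad] layers in order."""
    for fsize, stride, pad in layers:
        signal = conv1d(signal, fsize, stride, pad)
    return signal


def measured_rf(layers, length, out_index):
    """Width of the input span whose perturbation changes one output node."""
    base = forward([0.0] * length, layers)[out_index]
    touched = []
    for i in range(length):
        probe = [0.0] * length
        probe[i] = 1.0  # perturb a single input position
        if forward(probe, layers)[out_index] != base:
            touched.append(i)
    return touched[-1] - touched[0] + 1


layers = [[3, 1, 1], [3, 1, 1], [2, 2, 0]]  # like VGG conv1_1, conv1_2, pool1
print(measured_rf(layers[:1], 32, 16))  # 3
print(measured_rf(layers[:2], 32, 16))  # 5
print(measured_rf(layers, 32, 8))       # 6
```

The measured widths 3, 5, and 6 agree with the receptive field sizes obtained from the recurrence RF = (RF - 1) * stride + fsize for these three layers.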
