1、查询至少连续三天下单的用户

1.1、表结构

create table order_info(

order_id string COMMENT '订单id',

user_id string COMMENT '用户id',

create_date string COMMENT '下单日期',

total_amount decimal(16, 2) COMMENT '订单总金额'

) COMMENT '订单表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

1.2、数据

insert overwrite table order_info

values

('1', '101', '2021-09-27', 29000.00),

('2', '101', '2021-09-28', 70500.00),

('3', '101', '2021-09-29', 43300.00),

('4', '101', '2021-09-30', 860.00),

('5', '102', '2021-10-01', 46180.00),

('6', '102', '2021-10-01', 50000.00),

('7', '102', '2021-10-01', 75500.00),

('8', '102', '2021-10-02', 6170.00),

('9', '103', '2021-10-02', 18580.00),

('10', '103', '2021-10-02', 28000.00),

('11', '103', '2021-10-02', 23400.00),

('12', '103', '2021-10-03', 5910.00),

('13', '104', '2021-10-03', 13000.00),

('14', '104', '2021-10-03', 69500.00),

('15', '104', '2021-10-03', 2000.00),

('16', '104', '2021-10-03', 5380.00),

('17', '105', '2021-10-04', 6210.00),

('18', '105', '2021-10-04', 68000.00),

('19', '105', '2021-10-04', 43100.00),

('20', '105', '2021-10-04', 2790.00),

('21', '106', '2021-10-04', 9390.00),

('22', '106', '2021-10-05', 58000.00),

('23', '106', '2021-10-05', 46600.00),

('24', '106', '2021-10-05', 5160.00),

('25', '107', '2021-10-05', 55350.00),

('26', '107', '2021-10-05', 14500.00),

('27', '107', '2021-10-06', 47400.00),

('28', '107', '2021-10-06', 6900.00),

('29', '108', '2021-10-06', 56570.00),

('30', '108', '2021-10-06', 44500.00),

('31', '108', '2021-10-07', 50800.00),

('32', '108', '2021-10-07', 3900.00),

('33', '109', '2021-10-07', 41480.00),

('34', '109', '2021-10-07', 88000.00),

('35', '109', '2020-10-08', 15000.00),

('36', '109', '2020-10-08', 9020.00),

('37', '1010', '2020-10-08', 9260.00),

('38', '1010', '2020-10-08', 12000.00),

('39', '1010', '2020-10-08', 23900.00),

('40', '1010', '2020-10-08', 6790.00);

1.3.1、解法1

(1)先根据下单日期、用户号进行去重(去重可用distinct或group by,建议少用distinct)

(2)根据用户进行分组、根据下单日期进行升序排序

(3)下单日期与排序序号的差值日期一样,则表示连续下单,此时根据用户号和 差值日期分组查询,继续数大于等于3的为【至少连续三天下单的用户】

select user_id,date_sub(create_date,rn) as st,count(1) as cnt

from

(

select

user_id

,create_date

,row_number() over (partition by user_id order by create_date ) as rn

from (select distinct user_id,create_date from order_info) a

) t

group by user_id

,date_sub(create_date,rn)

having count(1)>=3;

1.3.2、解法2

(1) 先根据下单日期、用户号进行去重

(2) 根据用户分区,下单日期排序,用lag函数取当前行往前两行的下单日期,如果当前日期与此日期相差2,则为三日连续下单。

select

distinct user_id

from

(

select

t.user_id

, t.create_date

, lag(t.create_date,2) over (partition by t.user_id order by t.create_date) as lg

from

(

select

user_id

, create_date

from order_info

group by user_id

, create_date

)t

)t1

where datediff(t1.lg,t1.create_date) = -2

;

2、查询首次下单后第二天连续下单的用户比率

解法一:

(1)按照用户号和下单日期去重,使得一个用户一天只有一条数据

(2)按照用户号分区、下单日期排序,使用lead取出当前行后一行的下单日期

(3)若当前用户的当前行日期减去后一行的下单日期,相差为1,则当前用户为次日连续下单的用户,对该用户id进行去重,再比上去重后的当前所有用户即为【首次下单后第二天连续下单的用户比率】

select

count(distinct case when (datediff(t1.create_date,ld) = -1) then t1.user_id end) / count(distinct user_id)

from

(

select

t.user_id

, t.create_date

, lead(t.create_date,1) over (partition by t.user_id order by t.create_date asc) as ld

from

(

select

user_id

, create_date

from order_info

group by user_id

, create_date

)t

)t1;

解法二:

(1)按照用户号和下单日期去重,使得一个用户一天只有一条数据

(2)按照用户号分区、下单日期升序排序,使用lead取出当前行后一行的下单日期;

(3)取出用户首日消费数据,count数据量;并且计算当前日期是否比lead的下一日期早一天,若是,count数据量,再去比上用户首日消费数据总量

select sum(if(datediff(tt.create_date,ld) = -1,1,0))/count(1)

from

(

select

t.user_id

, t.create_date

, row_number() over(partition by user_id order by create_date asc ) as rn

, lead(t.create_date,1) over (partition by t.user_id order by t.create_date asc) as ld

from

(

select

user_id

, create_date

from order_info

group by user_id

, create_date

) t

)tt

where tt.rn = 1 -- 取出用户消费的首日数据

;

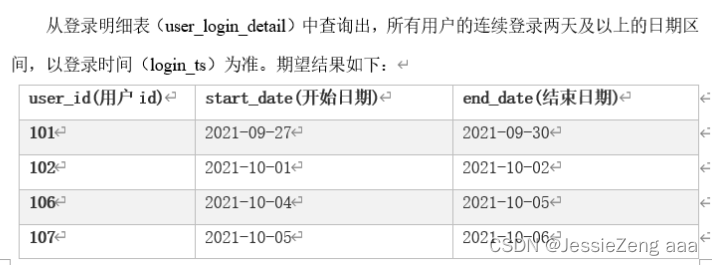

3、查询所有用户的连续登录两天及以上的日期区间

3.1、表结构

-- 登录明细表

CREATE TABLE user_login_detail

(

user_id string comment '用户id',

ip_address string comment 'ip地址',

login_ts string comment '登录时间',

logout_ts string comment '登出时间'

) COMMENT '用户登录明细表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

3.2、数据准备

INSERT overwrite table user_login_detail

VALUES ('101', '180.149.130.161', '2021-09-21 08:00:00', '2021-09-27 08:30:00'),

('101', '180.149.130.161', '2021-09-27 08:00:00', '2021-09-27 08:30:00'),

('101', '180.149.130.161', '2021-09-28 09:00:00', '2021-09-28 09:10:00'),

('101', '180.149.130.161', '2021-09-29 13:30:00', '2021-09-29 13:50:00'),

('101', '180.149.130.161', '2021-09-30 20:00:00', '2021-09-30 20:10:00'),

('102', '120.245.11.2', '2021-09-22 09:00:00', '2021-09-27 09:30:00'),

('102', '120.245.11.2', '2021-10-01 08:00:00', '2021-10-01 08:30:00'),

('102', '180.149.130.174', '2021-10-01 07:50:00', '2021-10-01 08:20:00'),

('102', '120.245.11.2', '2021-10-02 08:00:00', '2021-10-02 08:30:00'),

('103', '27.184.97.3', '2021-09-23 10:00:00', '2021-09-27 10:30:00'),

('103', '27.184.97.3', '2021-10-03 07:50:00', '2021-10-03 09:20:00'),

('104', '27.184.97.34', '2021-09-24 11:00:00', '2021-09-27 11:30:00'),

('104', '27.184.97.34', '2021-10-03 07:50:00', '2021-10-03 08:20:00'),

('104', '27.184.97.34', '2021-10-03 08:50:00', '2021-10-03 10:20:00'),

('104', '120.245.11.89', '2021-10-03 08:40:00', '2021-10-03 10:30:00'),

('105', '119.180.192.212', '2021-10-04 09:10:00', '2021-10-04 09:30:00'),

('106', '119.180.192.66', '2021-10-04 08:40:00', '2021-10-04 10:30:00'),

('106', '119.180.192.66', '2021-10-05 21:50:00', '2021-10-05 22:40:00'),

('107', '219.134.104.7', '2021-09-25 12:00:00', '2021-09-27 12:30:00'),

('107', '219.134.104.7', '2021-10-05 22:00:00', '2021-10-05 23:00:00'),

('107', '219.134.104.7', '2021-10-06 09:10:00', '2021-10-06 10:20:00'),

('107', '27.184.97.46', '2021-10-06 09:00:00', '2021-10-06 10:00:00'),

('108', '101.227.131.22', '2021-10-06 09:00:00', '2021-10-06 10:00:00'),

('108', '101.227.131.22', '2021-10-06 22:00:00', '2021-10-06 23:00:00'),

('109', '101.227.131.29', '2021-09-26 13:00:00', '2021-09-27 13:30:00'),

('109', '101.227.131.29', '2021-10-06 08:50:00', '2021-10-06 10:20:00'),

('109', '101.227.131.29', '2021-10-08 09:00:00', '2021-10-08 09:10:00'),

('1010', '119.180.192.10', '2021-09-27 14:00:00', '2021-09-27 14:30:00'),

('1010', '119.180.192.10', '2021-10-09 08:50:00', '2021-10-09 10:20:00');

3.3、解题思路

(1)根据用户号、登录日期去重

(2)根据用户号分区、登录日期升序排序

(3)若登录日期减去排序序号后时间一致,则为连续的日期,因此根据用户号、登陆日期减排序序号后的日期分组,大于等于2的用户记录为连续登录两天以上的用户;并且使用collect_set列转行函数将用户连续登录的一组日期存放在列表中

(4)取出用户id和连续登录两天及以上的日期区间

select user_id,st[0] as start_date,st[size(st)-1] as end_date

from

(

select user_id,date_sub(login_dt,rn) as dt,sort_array(collect_set(login_dt)) as st

from

(

select user_id

,login_dt,row_number() over(partition by user_id order by login_dt asc) as rn

from

(

select user_id

,substr(login_ts,1,10) as login_dt

from user_login_detail

group by user_id

,substr(login_ts,1,10)

) t

) tt

group by user_id,date_sub(login_dt,rn)

having count(1)>=2 --连续登录两天以上

) ttt

;

4、1 号商品销售额>21000或者2号商品销售额>=1000 ,从 order_detail 中查找出这两个商品连续2个月满足条件的商品

4.1、表结构

CREATE TABLE order_detail

(

order_detail_id string COMMENT '订单明细id',

order_id string COMMENT '订单id',

sku_id string COMMENT '商品id',

create_date string COMMENT '下单日期',

price decimal(16, 2) COMMENT '下单时的商品单价',

sku_num int COMMENT '下单商品件数'

) COMMENT '订单明细表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

4.1、数据准备

INSERT overwrite table order_detail

values ('1', '1', '1', '2021-09-27', 2000.00, 2),

('2', '1', '3', '2021-09-27', 5000.00, 5),

('3', '2', '4', '2021-09-28', 6000.00, 9),

('4', '2', '5', '2021-09-28', 500.00, 33),

('5', '3', '7', '2021-09-29', 100.00, 37),

('6', '3', '8', '2021-09-29', 600.00, 46),

('7', '3', '9', '2021-09-29', 1000.00, 12),

('8', '4', '12', '2021-09-30', 20.00, 43),

('9', '5', '1', '2021-10-01', 2000.00, 8),

('10', '5', '2', '2021-10-01', 10.00, 18),

('11', '5', '3', '2021-10-01', 5000.00, 6),

('12', '6', '4', '2021-10-01', 6000.00, 8),

('13', '6', '6', '2021-10-01', 2000.00, 1),

('14', '7', '7', '2021-10-01', 100.00, 17),

('15', '7', '8', '2021-10-01', 600.00, 48),

('16', '7', '9', '2021-10-01', 1000.00, 45),

('17', '8', '10', '2021-10-02', 100.00, 48),

('18', '8', '11', '2021-10-02', 50.00, 15),

('19', '8', '12', '2021-10-02', 20.00, 31),

('20', '9', '1', '2021-09-30', 2000.00, 9),

('21', '9', '2', '2021-10-02', 10.00, 5800),

('22', '10', '4', '2021-10-02', 6000.00, 1),

('23', '10', '5', '2021-10-02', 500.00, 24),

('24', '10', '6', '2021-10-02', 2000.00, 5),

('25', '11', '8', '2021-10-02', 600.00, 39),

('26', '12', '10', '2021-10-03', 100.00, 47),

('27', '12', '11', '2021-10-03', 50.00, 19),

('28', '12', '12', '2021-10-03', 20.00, 13000),

('29', '13', '1', '2021-10-03', 2000.00, 4),

('30', '13', '3', '2021-10-03', 5000.00, 1),

('31', '14', '4', '2021-10-03', 6000.00, 5),

('32', '14', '5', '2021-10-03', 500.00, 47),

('33', '14', '6', '2021-10-03', 2000.00, 8),

('34', '15', '7', '2021-10-03', 100.00, 20),

('35', '16', '10', '2021-10-03', 100.00, 22),

('36', '16', '11', '2021-10-03', 50.00, 42),

('37', '16', '12', '2021-10-03', 20.00, 7400),

('38', '17', '1', '2021-10-04', 2000.00, 3),

('39', '17', '2', '2021-10-04', 10.00, 21),

('40', '18', '4', '2021-10-04', 6000.00, 8),

('41', '18', '5', '2021-10-04', 500.00, 28),

('42', '18', '6', '2021-10-04', 2000.00, 3),

('43', '19', '7', '2021-10-04', 100.00, 55),

('44', '19', '8', '2021-10-04', 600.00, 11),

('45', '19', '9', '2021-10-04', 1000.00, 31),

('46', '20', '11', '2021-10-04', 50.00, 45),

('47', '20', '12', '2021-10-04', 20.00, 27),

('48', '21', '1', '2021-10-04', 2000.00, 2),

('49', '21', '2', '2021-10-04', 10.00, 39),

('50', '21', '3', '2021-10-04', 5000.00, 1),

('51', '22', '4', '2021-10-05', 6000.00, 8),

('52', '22', '5', '2021-10-05', 500.00, 20),

('53', '23', '7', '2021-10-05', 100.00, 58),

('54', '23', '8', '2021-10-05', 600.00, 18),

('55', '23', '9', '2021-10-05', 1000.00, 30),

('56', '24', '10', '2021-10-05', 100.00, 27),

('57', '24', '11', '2021-10-05', 50.00, 28),

('58', '24', '12', '2021-10-05', 20.00, 53),

('59', '25', '1', '2021-10-05', 2000.00, 5),

('60', '25', '2', '2021-10-05', 10.00, 35),

('61', '25', '3', '2021-10-05', 5000.00, 9),

('62', '26', '4', '2021-10-05', 6000.00, 1),

('63', '26', '5', '2021-10-05', 500.00, 13),

('64', '26', '6', '2021-10-05', 2000.00, 1),

('65', '27', '7', '2021-10-06', 100.00, 30),

('66', '27', '8', '2021-10-06', 600.00, 19),

('67', '27', '9', '2021-10-06', 1000.00, 33),

('68', '28', '10', '2021-10-06', 100.00, 37),

('69', '28', '11', '2021-10-06', 50.00, 46),

('70', '28', '12', '2021-10-06', 20.00, 45),

('71', '29', '1', '2021-10-06', 2000.00, 8),

('72', '29', '2', '2021-10-06', 10.00, 57),

('73', '29', '3', '2021-10-06', 5000.00, 8),

('74', '30', '4', '2021-10-06', 6000.00, 3),

('75', '30', '5', '2021-10-06', 500.00, 33),

('76', '30', '6', '2021-10-06', 2000.00, 5),

('77', '31', '8', '2021-10-07', 600.00, 13),

('78', '31', '9', '2021-10-07', 1000.00, 43),

('79', '32', '10', '2021-10-07', 100.00, 24),

('80', '32', '11', '2021-10-07', 50.00, 30),

('81', '33', '1', '2021-10-07', 2000.00, 8),

('82', '33', '2', '2021-10-07', 10.00, 48),

('83', '33', '3', '2021-10-07', 5000.00, 5),

('84', '34', '4', '2021-10-07', 6000.00, 10),

('85', '34', '5', '2021-10-07', 500.00, 44),

('86', '34', '6', '2021-10-07', 2000.00, 3),

('87', '35', '8', '2020-10-08', 600.00, 25),

('88', '36', '10', '2020-10-08', 100.00, 57),

('89', '36', '11', '2020-10-08', 50.00, 44),

('90', '36', '12', '2020-10-08', 20.00, 56),

('91', '37', '1', '2020-10-08', 2000.00, 2),

('92', '37', '2', '2020-10-08', 10.00, 26),

('93', '37', '3', '2020-10-08', 5000.00, 1),

('94', '38', '6', '2020-10-08', 2000.00, 6),

('95', '39', '7', '2020-10-08', 100.00, 35),

('96', '39', '8', '2020-10-08', 600.00, 34),

('97', '40', '10', '2020-10-08', 100.00, 37),

('98', '40', '11', '2020-10-08', 50.00, 51),

('99', '40', '12', '2020-10-08', 20.00, 27);

5、找出销售额连续3天超过100的商品

解题思路

(1)每个单品按日汇总销售额

(2)按单品、按日排序

(3)单品销售日期减排序序号后的日期相同则为连续排序,找出连续超过3条的数据的商品

(4)对商品进行去重

select distinct sku_id

from

(

select sku_id,date_sub(create_date,rn),count(1)

from

(

select sku_id

,create_date,row_number() over(partition by sku_id order by create_date asc) as rn

from

(

select

sku_id

,create_date

,sum(price * sku_num) as tot_sale_amt

from order_detail

group by sku_id

,create_date

having sum(price * sku_num)>100

) t1

) t2

group by sku_id

,date_sub(create_date,rn)

having count(1)>=3

) t3

;

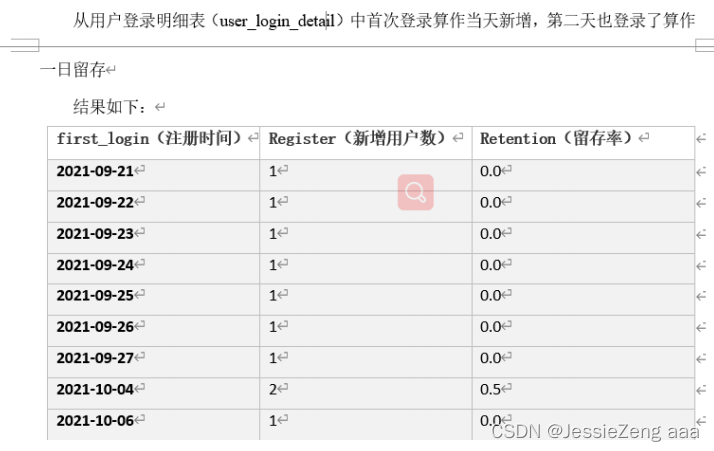

6、查询有新注册用户的当天的新用户数量、新用户的首日留存率(注册第二日也登录叫首日留存)

6.1、表结构

-- 登录明细表

CREATE TABLE user_login_detail

(

user_id string comment '用户id',

ip_address string comment 'ip地址',

login_ts string comment '登录时间',

logout_ts string comment '登出时间'

) COMMENT '用户登录明细表'

ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t';

6.2、数据准备

INSERT overwrite table user_login_detail

VALUES ('101', '180.149.130.161', '2021-09-21 08:00:00', '2021-09-27 08:30:00'),

('101', '180.149.130.161', '2021-09-27 08:00:00', '2021-09-27 08:30:00'),

('101', '180.149.130.161', '2021-09-28 09:00:00', '2021-09-28 09:10:00'),

('101', '180.149.130.161', '2021-09-29 13:30:00', '2021-09-29 13:50:00'),

('101', '180.149.130.161', '2021-09-30 20:00:00', '2021-09-30 20:10:00'),

('102', '120.245.11.2', '2021-09-22 09:00:00', '2021-09-27 09:30:00'),

('102', '120.245.11.2', '2021-10-01 08:00:00', '2021-10-01 08:30:00'),

('102', '180.149.130.174', '2021-10-01 07:50:00', '2021-10-01 08:20:00'),

('102', '120.245.11.2', '2021-10-02 08:00:00', '2021-10-02 08:30:00'),

('103', '27.184.97.3', '2021-09-23 10:00:00', '2021-09-27 10:30:00'),

('103', '27.184.97.3', '2021-10-03 07:50:00', '2021-10-03 09:20:00'),

('104', '27.184.97.34', '2021-09-24 11:00:00', '2021-09-27 11:30:00'),

('104', '27.184.97.34', '2021-10-03 07:50:00', '2021-10-03 08:20:00'),

('104', '27.184.97.34', '2021-10-03 08:50:00', '2021-10-03 10:20:00'),

('104', '120.245.11.89', '2021-10-03 08:40:00', '2021-10-03 10:30:00'),

('105', '119.180.192.212', '2021-10-04 09:10:00', '2021-10-04 09:30:00'),

('106', '119.180.192.66', '2021-10-04 08:40:00', '2021-10-04 10:30:00'),

('106', '119.180.192.66', '2021-10-05 21:50:00', '2021-10-05 22:40:00'),

('107', '219.134.104.7', '2021-09-25 12:00:00', '2021-09-27 12:30:00'),

('107', '219.134.104.7', '2021-10-05 22:00:00', '2021-10-05 23:00:00'),

('107', '219.134.104.7', '2021-10-06 09:10:00', '2021-10-06 10:20:00'),

('107', '27.184.97.46', '2021-10-06 09:00:00', '2021-10-06 10:00:00'),

('108', '101.227.131.22', '2021-10-06 09:00:00', '2021-10-06 10:00:00'),

('108', '101.227.131.22', '2021-10-06 22:00:00', '2021-10-06 23:00:00'),

('109', '101.227.131.29', '2021-09-26 13:00:00', '2021-09-27 13:30:00'),

('109', '101.227.131.29', '2021-10-06 08:50:00', '2021-10-06 10:20:00'),

('109', '101.227.131.29', '2021-10-08 09:00:00', '2021-10-08 09:10:00'),

('1010', '119.180.192.10', '2021-09-27 14:00:00', '2021-09-27 14:30:00'),

('1010', '119.180.192.10', '2021-10-09 08:50:00', '2021-10-09 10:20:00');

6.3、解题思路

(1)先根据用户号和日期去重

(2)根据用户号分区,根据日期升序排序

(3)取出当前记录行下一条数据的登录日期

(4)取排序序号为1也就是用户首次登录的记录,再看这条记录的登陆日期与下一个日期是否相差为1,差1为连续,即首日留存

select login_dt

,count(distinct user_id) as regs_user_cnt

,count(distinct case when datediff(login_dt,ld)= -1 then user_id end) as reten_user_cnt

,count(distinct case when datediff(login_dt,ld)= -1 then user_id end)/count(distinct user_id) as reten -- 首日留存率

from

(

select user_id,login_dt,rn,lead(login_dt,1) over (partition by user_id order by login_dt asc) as ld

from

( select user_id

,login_dt

,row_number() over (partition by user_id order by login_dt asc) as rn

from (select user_id,substr(login_ts,1,10) as login_dt from user_login_detail group by user_id,substr(login_ts,1,10)) t1

) t2

) t3

where t3.rn =1

group by login_dt

;

313

313

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?