from keras.layers import Bidirectional, Concatenate, Permute, Dot, Input, LSTM, Multiply

from keras.layers import RepeatVector, Dense, Activation, Lambda

from keras.optimizers import Adam

from keras.utils import to_categorical

from keras.models import load_model, Model

import keras.backend as K

import numpy as np

from faker import Faker

import random

from tqdm import tqdm

from babel.dates import format_date

from nmt_utils import *

import matplotlib.pyplot as plt

%matplotlib inline

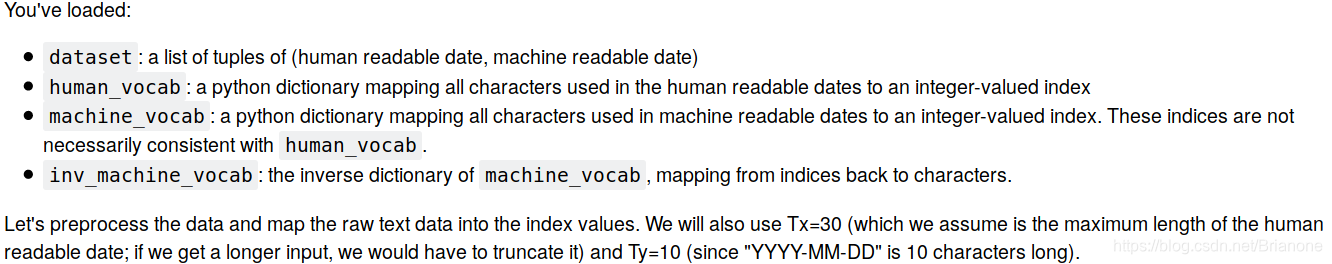

m = 10000

dataset, human_vocab, machine_vocab, inv_machine_vocab = load_dataset(m)

dataset[:10]

[('9 may 1998', '1998-05-09'),
 ('10.09.70', '1970-09-10'),
 ('4/28/90', '1990-04-28'),
 ('thursday january 26 1995', '1995-01-26'),
 ('monday march 7 1983', '1983-03-07'),
 ('sunday may 22 1988', '1988-05-22'),
 ('tuesday july 8 2008', '2008-07-08'),
 ('08 sep 1999', '1999-09-08'),
 ('1 jan 1981', '1981-01-01'),
 ('monday may 22 1995', '1995-05-22')]

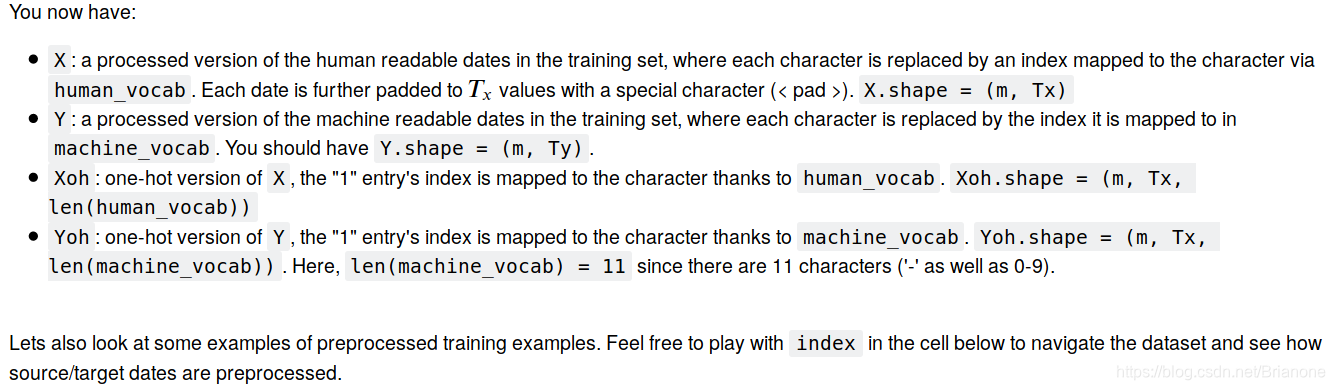

Tx = 30

Ty = 10

X, Y, Xoh, Yoh = preprocess_data(dataset, human_vocab, machine_vocab, Tx, Ty)

print("X.shape:", X.shape)

print("Y.shape:", Y.shape)

print("Xoh.shape:", Xoh.shape)

print("Yoh.shape:", Yoh.shape)

X.shape: (10000, 30)
Y.shape: (10000, 10)
Xoh.shape: (10000, 30, 37)
Yoh.shape: (10000, 10, 11)
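The shapes above follow from every source string being mapped to Tx = 30 indices and every target to Ty = 10. `preprocess_data` comes from `nmt_utils` and is not shown here, so the sketch below is only a guess at the idea: `encode`, the toy vocabulary, and the `<unk>` / `<pad>` entries are assumptions made for illustration, not the real `human_vocab`.

```python
def encode(text, length, vocab):
    """Map a string to a fixed-length list of vocabulary indices (hypothetical helper)."""
    text = text.lower().replace(",", "")
    text = text[:length]  # truncate strings longer than `length`
    # unknown characters fall back to the assumed '<unk>' entry
    indices = [vocab.get(ch, vocab["<unk>"]) for ch in text]
    # right-pad with the assumed '<pad>' entry up to `length`
    indices += [vocab["<pad>"]] * (length - len(indices))
    return indices

# toy vocabulary for illustration only, NOT the real human_vocab
chars = " ./0123456789abcdefghijklmnopqrstuvwxyz"
toy_vocab = {ch: i for i, ch in enumerate(chars)}
toy_vocab["<unk>"] = len(toy_vocab)
toy_vocab["<pad>"] = len(toy_vocab)

encoded = encode("9 may 1998", 30, toy_vocab)
print(len(encoded))   # 30
print(encoded[:10])   # indices of the ten real characters
```

Everything after the tenth position is the padding index, which is why all rows of `X` have the same length regardless of how long the original date string was.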

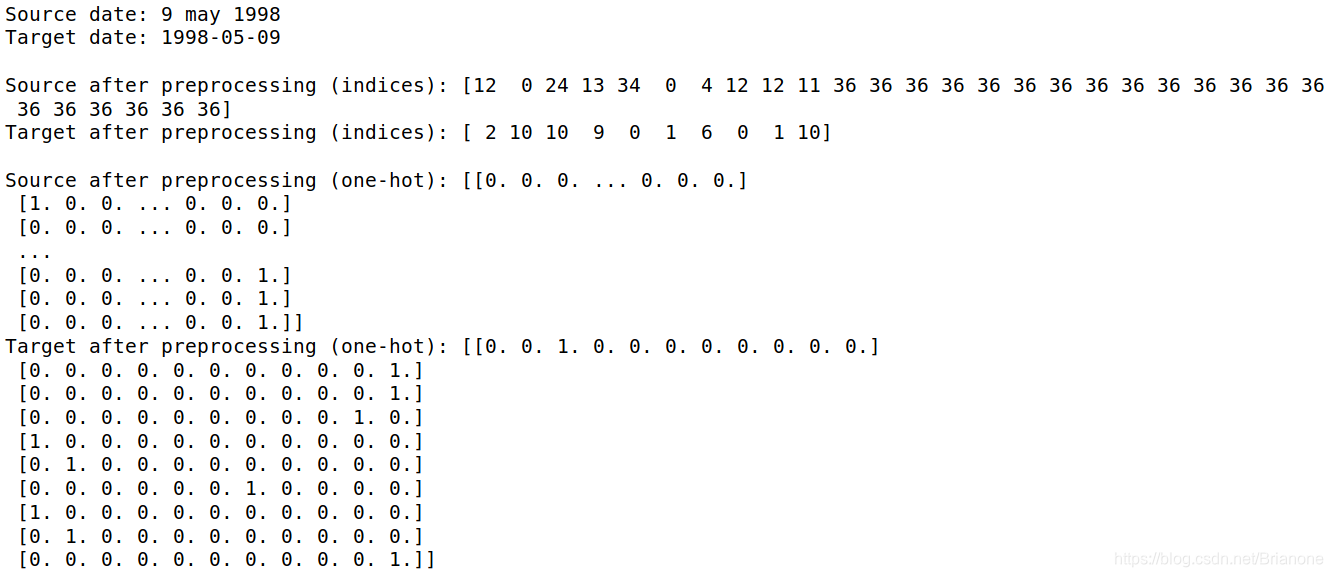

index = 0

print("Source date:", dataset[index][0])

print("Target date:", dataset[index][1])

print()

print("Source after preprocessing (indices):", X[index])

print("Target after preprocessing (indices):", Y[index])

print()

print("Source after preprocessing (one-hot):", Xoh[index])

print("Target after preprocessing (one-hot):", Yoh[index])
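The one-hot arrays printed above have one row per character position and one column per vocabulary entry. A minimal NumPy sketch of that conversion, using a made-up index sequence and an arbitrary toy vocabulary size (`one_hot` is a hypothetical helper, not a function from `nmt_utils`):

```python
import numpy as np

def one_hot(indices, num_classes):
    """Turn a 1-D sequence of integer indices into a (len, num_classes) one-hot matrix."""
    indices = np.asarray(indices)
    out = np.zeros((indices.size, num_classes), dtype=np.float32)
    out[np.arange(indices.size), indices] = 1.0  # set exactly one entry per row
    return out

toy_indices = [2, 0, 3, 3]         # made-up index sequence
toy_oh = one_hot(toy_indices, 5)   # 5 is an arbitrary toy vocabulary size
print(toy_oh.shape)                # (4, 5)
print(toy_oh.argmax(axis=-1))      # recovers the original indices
```

Applied to a row of `X` with the real vocabulary size, the same idea would give the (30, 37) slice seen in `Xoh[index]`.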

# Define shared layers as global variables so the same weights are reused at every decoder step
repeator = RepeatVector(Tx)
concatenator = Concatenate(axis=-1)
densor1 = Dense(10, activation = "tanh")
densor2 = Dense(1, activation = "relu")
activator = Activation(softmax, name='attention_weights') # We are using a custom softmax(axis = 1) loaded in this notebook; it normalizes over the Tx axis
dotor = Dot(axes = 1)

# GRADED FUNCTION: one_step_attention

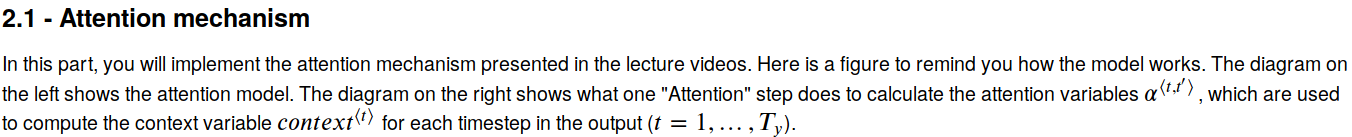

def one_step_attention(a, s_prev):
    """
    Performs one step of attention: outputs a context vector computed as a dot product of the attention weights
    "alphas" and the hidden states "a" of the Bi-LSTM.

    Arguments:
    a -- hidden state output of the Bi-LSTM, tensor of shape (m, Tx, 2*n_a)
    s_prev -- previous hidden state of the (post-attention) LSTM, tensor of shape (m, n_s)

    Returns:
    context -- context vector, input of the next (post-attention) LSTM cell
    """

    ### START CODE HERE ###
    # Use repeator to repeat s_prev to be of shape (m, Tx, n_s) so that you can concatenate it with all hidden states "a" (≈ 1 line)
    s_prev = repeator(s_prev)
    # Use concatenator to concatenate a and s_prev on the last axis (≈ 1 line)
    concat = concatenator([a, s_prev])
    # Use densor1 to propagate concat through a small fully-connected neural network to compute the "intermediate energies" variable e. (≈ 1 line)
    e = densor1(concat)
    # Use densor2 to propagate e through a small fully-connected neural network to compute the "energies" variable energies. (≈ 1 line)
    energies = densor2(e)
    # Use "activator" on "energies" to compute the attention weights "alphas" (≈ 1 line)
    alphas = activator(energies)
    # Use dotor together with "alphas" and "a" to compute the context vector to be given to the next (post-attention) LSTM cell (≈ 1 line)
    context = dotor([alphas, a])
    ### END CODE HERE ###

    return context

n_a = 32

n_s = 64

post_activation_LSTM_cell = LSTM(n_s, return_state = True)

output_layer = Dense(len(machine_vocab), activation=softmax)
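To make the tensor shapes inside `one_step_attention` concrete, here is a NumPy analogue of one attention step. It is a sketch under assumptions: `W1`, `b1`, `W2`, `b2` are random stand-ins for the weights of `densor1` and `densor2`, and `one_step_attention_np` and `softmax_over_time` are illustrative names, not part of the notebook or of Keras.

```python
import numpy as np

def softmax_over_time(x):
    """Softmax along axis=1 (the Tx axis), so the weights of each example sum to 1."""
    e = np.exp(x - x.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def one_step_attention_np(a, s_prev, W1, b1, W2, b2):
    """NumPy analogue of one attention step; W1, b1, W2, b2 stand in for the Dense layers."""
    Tx = a.shape[1]
    s_rep = np.repeat(s_prev[:, None, :], Tx, axis=1)    # (m, Tx, n_s), like RepeatVector(Tx)
    concat = np.concatenate([a, s_rep], axis=-1)         # (m, Tx, 2*n_a + n_s)
    e = np.tanh(concat @ W1 + b1)                        # (m, Tx, 10), tanh Dense
    energies = np.maximum(e @ W2 + b2, 0.0)              # (m, Tx, 1), relu Dense
    alphas = softmax_over_time(energies)                 # (m, Tx, 1), attention weights
    context = np.sum(alphas * a, axis=1, keepdims=True)  # (m, 1, 2*n_a), like Dot(axes=1)
    return context, alphas

rng = np.random.default_rng(0)
m_toy, Tx_toy, n_a_toy, n_s_toy = 2, 30, 32, 64
a = rng.normal(size=(m_toy, Tx_toy, 2 * n_a_toy))
s_prev = rng.normal(size=(m_toy, n_s_toy))
W1 = rng.normal(size=(2 * n_a_toy + n_s_toy, 10)) * 0.1  # random stand-in weights
b1 = np.zeros(10)
W2 = rng.normal(size=(10, 1)) * 0.1
b2 = np.zeros(1)

context, alphas = one_step_attention_np(a, s_prev, W1, b1, W2, b2)
print(context.shape)  # (2, 1, 64)
print(alphas.shape)   # (2, 30, 1)
```

Because the softmax runs over axis 1, the 30 weights of each example sum to 1, and the context vector is a weighted average of the Bi-LSTM hidden states.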

# GRADED FUNCTION: model

def model(Tx, Ty, n_a, n_s, human_vocab_size, machine_vocab_size):
    """
    Arguments:
    Tx -- length of the input sequence
    Ty -- length of the output sequence
    n_a -- hidden state size of the Bi-LSTM
    n_s -- hidden state size of the post-attention LSTM
    human_vocab_size -- size of the python dictionary "human_vocab"
    machine_vocab_size -- size of the python dictionary "machine_vocab"

    Returns:
    model -- Keras model instance
    """

    # Define the inputs of your model with a shape (Tx, human_vocab_size)
    # Define s0 and c0, the initial hidden state and cell state of the decoder LSTM, each of shape (n_s,)
    X = Input(shape=(Tx, human_vocab_size))
    s0 = Input(shape=(n_s,), name='s0')
    c0 = Input(shape=(n_s,), name='c0')
    s = s0
    c = c0

    # Initialize empty list of outputs
    outputs = []

    ### START CODE HERE ###
    # Step 1: Define your pre-attention Bi-LSTM. Remember to use return_sequences=True. (≈ 1 line)
    a = Bidirectional(LSTM(n_a, return_sequences=True))(X)

    # Step 2: Iterate for Ty steps
    for t in range(Ty):
        # Step 2.A: Perform one step of the attention mechanism to get back the context vector at step t (≈ 1 line)
        context = one_step_attention(a, s)
        # Step 2.B: Apply the post-attention LSTM cell to the "context" vector.
        # Don't forget to pass: initial_state = [hidden state, cell state] (≈ 1 line)
        s, _, c = post_activation_LSTM_cell(context, initial_state=[s, c])
        # Step 2.C: Apply Dense layer to the hidden state output of the post-attention LSTM (≈ 1 line)
        out = output_layer(s)
        # Step 2.D: Append "out" to the "outputs" list (≈ 1 line)
        outputs.append(out)

    # Step 3: Create model instance taking three inputs and returning the list of outputs. (≈ 1 line)
    model = Model(inputs=[X, s0, c0], outputs=outputs)
    ### END CODE HERE ###

    return model

model = model(Tx, Ty, n_a, n_s, len(human_vocab), len(machine_vocab))

model.summary()

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) (None, 30, 37) 0

__________________________________________________________________________________________________

s0 (InputLayer) (None, 64) 0

__________________________________________________________________________________________________

bidirectional_1 (Bidirectional) (None, 30, 64) 17920 input_1[0][0]

__________________________________________________________________________________________________

repeat_vector_1 (RepeatVector) (None, 30, 64) 0 s0[0][0]

lstm_1[0][0]

lstm_1[1][0]

lstm_1[2][0]

lstm_1[3][0]

lstm_1[4][0]

lstm_1[5][0]

lstm_1[6][0]

lstm_1[7][0]

lstm_1[8][0]

__________________________________________________________________________________________________

concatenate_1 (Concatenate) (None, 30, 128) 0 bidirectional_1[0][0]

repeat_vector_1[0][0]

bidirectional_1[0][0]

repeat_vector_1[1][0]

bidirectional_1[0][0]

repeat_vector_1[2][0]

bidirectional_1[0][0]

repeat_vector_1[3][0]

bidirectional_1[0][0]

repeat_vector_1[4][0]

bidirectional_1[0][0]

repeat_vector_1[5][0]

bidirectional_1[0][0]

repeat_vector_1[6][0]

bidirectional_1[0][0]

repeat_vector_1[7][0]

bidirectional_1[0][0]

repeat_vector_1[8][0]

bidirectional_1[0][0]

repeat_vector_1[9][0]

__________________________________________________________________________________________________

dense_1 (Dense) (None, 30, 10) 1290 concatenate_1[0][0]

concatenate_1[1][0]

concatenate_1[2][0]

concatenate_1[3][0]

concatenate_1[4][0]

concatenate_1[5][0]

concatenate_1[6][0]

concatenate_1[7][0]

concatenate_1[8][0]

concatenate_1[9][0]

__________________________________________________________________________________________________

dense_2 (Dense) (None, 30, 1) 11 dense_1[0][0]

dense_1[1][0]

dense_1[2][0]

dense_1[3][0]

dense_1[4][0]

dense_1[5][0]

dense_1[6][0]

dense_1[7][0]

dense_1[8][0]

dense_1[9][0]

__________________________________________________________________________________________________

attention_weights (Activation) (None, 30, 1) 0 dense_2[0][0]

dense_2[1][0]

dense_2[2][0]

dense_2[3][0]

dense_2[4][0]

dense_2[5][0]

dense_2[6][0]

dense_2[7][0]

dense_2[8][0]

dense_2[9][0]

__________________________________________________________________________________________________

dot_1 (Dot) (None, 1, 64) 0 attention_weights[0][0]

bidirectional_1[0][0]

attention_weights[1][0]

bidirectional_1[0][0]

attention_weights[2][0]

bidirectional_1[0][0]

attention_weights[3][0]

bidirectional_1[0][0]

attention_weights[4][0]

bidirectional_1[0][0]

attention_weights[5][0]

bidirectional_1[0][0]

attention_weights[6][0]

bidirectional_1[0][0]

attention_weights[7][0]

bidirectional_1[0][0]

attention_weights[8][0]

bidirectional_1[0][0]

attention_weights[9][0]

bidirectional_1[0][0]

__________________________________________________________________________________________________

c0 (InputLayer) (None, 64) 0

__________________________________________________________________________________________________

lstm_1 (LSTM) [(None, 64), (None, 33024 dot_1[0][0]

s0[0][0]

c0[0][0]

dot_1[1][0]

lstm_1[0][0]

lstm_1[0][2]

dot_1[2][0]

lstm_1[1][0]

lstm_1[1][2]

dot_1[3][0]

lstm_1[2][0]

lstm_1[2][2]

dot_1[4][0]

lstm_1[3][0]

lstm_1[3][2]

dot_1[5][0]

lstm_1[4][0]

lstm_1[4][2]

dot_1[6][0]

lstm_1[5][0]

lstm_1[5][2]

dot_1[7][0]

lstm_1[6][0]

lstm_1[6][2]

dot_1[8][0]

lstm_1[7][0]

lstm_1[7][2]

dot_1[9][0]

lstm_1[8][0]

lstm_1[8][2]

__________________________________________________________________________________________________

dense_3 (Dense) (None, 11) 715 lstm_1[0][0]

lstm_1[1][0]

lstm_1[2][0]

lstm_1[3][0]

lstm_1[4][0]

lstm_1[5][0]

lstm_1[6][0]

lstm_1[7][0]

lstm_1[8][0]

lstm_1[9][0]

==================================================================================================

Total params: 52,960

Trainable params: 52,960

Non-trainable params: 0
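The parameter counts in the summary can be checked by hand. The sketch below uses the standard formulas for LSTM and Dense layers with biases; `lstm_params` and `dense_params` are hypothetical helper names introduced only for this check.

```python
def lstm_params(input_dim, units):
    """An LSTM has 4 gates, each with input weights, recurrent weights and a bias."""
    return 4 * (units * (input_dim + units) + units)

def dense_params(input_dim, units):
    """A Dense layer has a weight matrix plus one bias per unit."""
    return input_dim * units + units

n_a, n_s = 32, 64
human_vocab_size, machine_vocab_size = 37, 11

counts = {
    "bidirectional_1": 2 * lstm_params(human_vocab_size, n_a),  # forward + backward LSTM
    "dense_1": dense_params(2 * n_a + n_s, 10),                 # input is [a, s_prev] concatenated
    "dense_2": dense_params(10, 1),
    "lstm_1": lstm_params(2 * n_a, n_s),                        # input is the context vector
    "dense_3": dense_params(n_s, machine_vocab_size),
}
for name, n in counts.items():
    print(name, n)
print("total", sum(counts.values()))  # total 52960
```

Each layer is counted once even though it is applied Ty = 10 times, because the attention layers, the post-attention LSTM, and the output layer are shared across decoder steps.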

### START CODE HERE ### (≈2 lines)
opt = Adam(lr=0.005, decay=0.01)
model.compile(optimizer=opt,
              loss='categorical_crossentropy',
              metrics=['accuracy'])
### END CODE HERE ###
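As far as I know, the `decay` argument of the older standalone Keras optimizers applies time-based decay per update step, lr / (1 + decay * iterations); treat that formula as an assumption and check it against the Keras version in use. Under that assumption, the sketch below shows how quickly the effective learning rate shrinks for `lr=0.005, decay=0.01` (`decayed_lr` is a hypothetical helper, not a Keras function).

```python
def decayed_lr(base_lr, decay, iteration):
    """Assumed time-based decay: base_lr / (1 + decay * iteration)."""
    return base_lr / (1.0 + decay * iteration)

base_lr, decay = 0.005, 0.01
steps_per_epoch = 10000 // 100  # m / batch_size used for training in this notebook

for it in (0, 1, steps_per_epoch, 10 * steps_per_epoch):
    print(it, round(decayed_lr(base_lr, decay, it), 6))
```

With these settings the learning rate would already be halved after one epoch of 100 updates.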

s0 = np.zeros((m, n_s))

c0 = np.zeros((m, n_s))

outputs = list(Yoh.swapaxes(0,1))

model.fit([Xoh, s0, c0], outputs, epochs=1, batch_size=100)

Epoch 1/1
10000/10000 [==============================] - 11s 1ms/step - loss: 16.3522 - dense_3_loss: 2.5974 - dense_3_acc: 0.5065 - dense_3_acc_1: 0.6418 - dense_3_acc_2: 0.2782 - dense_3_acc_3: 0.0636 - dense_3_acc_4: 0.9898 - dense_3_acc_5: 0.3664 - dense_3_acc_6: 0.0582 - dense_3_acc_7: 0.9735 - dense_3_acc_8: 0.2067 - dense_3_acc_9: 0.0928
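The model has Ty separate outputs, one per target character, so the labels must be a list of Ty arrays, each of shape (m, 11). That is what `list(Yoh.swapaxes(0,1))` produces. A small NumPy sketch with a toy batch size (the `_toy` names are illustrative only):

```python
import numpy as np

m_toy, Ty_toy, vocab_toy = 4, 10, 11           # small m for illustration
Yoh_toy = np.zeros((m_toy, Ty_toy, vocab_toy))
Yoh_toy[2, 5, 7] = 1.0                         # example 2, output step 5, class 7

outputs_toy = list(Yoh_toy.swapaxes(0, 1))     # (Ty, m, vocab) split along the first axis
print(len(outputs_toy))                        # 10
print(outputs_toy[0].shape)                    # (4, 11)
print(outputs_toy[5][2, 7])                    # 1.0
```

After the swap, the first axis indexes the output step, so element `t` of the list holds the labels of all examples for the t-th output character.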

model.load_weights('models/model.h5')

EXAMPLES = ['3 May 1979', '5 April 09', '21th of August 2016', 'Tue 10 Jul 2007', 'Saturday May 9 2018', 'March 3 2001', 'March 3rd 2001', '1 March 2001']
for example in EXAMPLES:
    source = string_to_int(example, Tx, human_vocab)
    # source = np.array(list(map(lambda x: to_categorical(x, num_classes=len(human_vocab)), source))).swapaxes(0,1)
    # prediction = model.predict([source, s0, c0])
    source = np.array(list(map(lambda x: to_categorical(x, num_classes=len(human_vocab)), source)))  # do not swap the axes here
    ttt = np.expand_dims(source, axis=0)  # add a dimension at axis=0 so the shape matches the model's expected input
    prediction = model.predict([ttt, s0, c0])
    prediction = np.argmax(prediction, axis = -1)
    output = [inv_machine_vocab[int(i)] for i in prediction]
    print("source:", example)
    print("output:", ''.join(output))

source: 3 May 1979
output: 1979-05-03
source: 5 April 09
output: 2009-05-05
source: 21th of August 2016
output: 2016-08-21
source: Tue 10 Jul 2007
output: 2007-07-10
source: Saturday May 9 2018
output: 2018-05-09
source: March 3 2001
output: 2001-03-03
source: March 3rd 2001
output: 2001-03-03
source: 1 March 2001
output: 2001-03-01
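The decoding step in the loop above turns a list of Ty probability arrays into a string. The sketch below reproduces just that step on hand-made probabilities. The inverse vocabulary (`'-'` followed by the ten digits) is an assumption about what `inv_machine_vocab` looks like, and `decode` is a hypothetical helper.

```python
import numpy as np

# assumed mapping: '-' followed by the ten digits (11 symbols, like machine_vocab)
symbols = "-0123456789"
toy_inv_vocab = dict(enumerate(symbols))

def decode(prediction, inv_vocab):
    """prediction: list of Ty arrays of shape (1, vocab_size) with per-step probabilities."""
    indices = np.argmax(prediction, axis=-1)   # shape (Ty, 1): most likely class per step
    return "".join(inv_vocab[int(i[0])] for i in indices)

# build a hand-made "prediction" that puts most of the mass on the target characters
target = "1979-05-03"
fake_prediction = []
for ch in target:
    probs = np.full((1, len(symbols)), 0.01)
    probs[0, symbols.index(ch)] = 0.9
    fake_prediction.append(probs)

print(decode(fake_prediction, toy_inv_vocab))  # 1979-05-03
```

Each output step is decoded independently by its own argmax, which is why the real model can get most characters right and still emit a wrong month, as in the `5 April 09` example.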

model.summary()

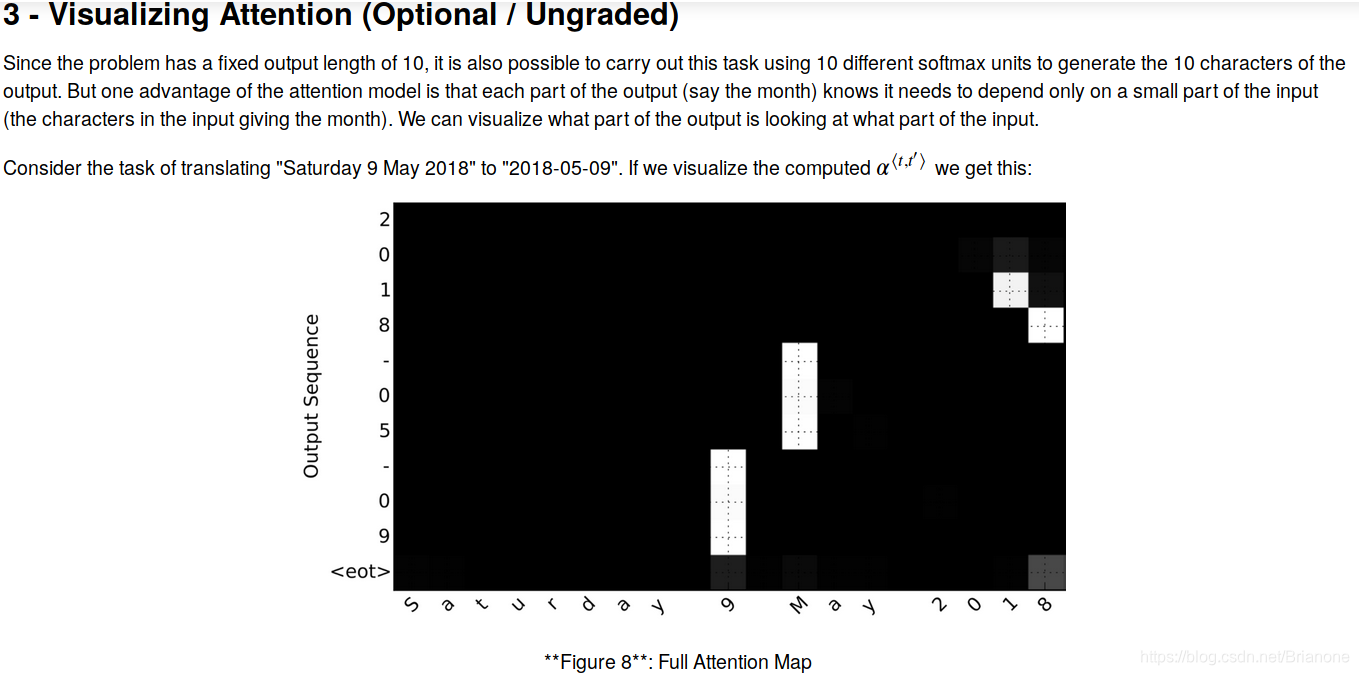

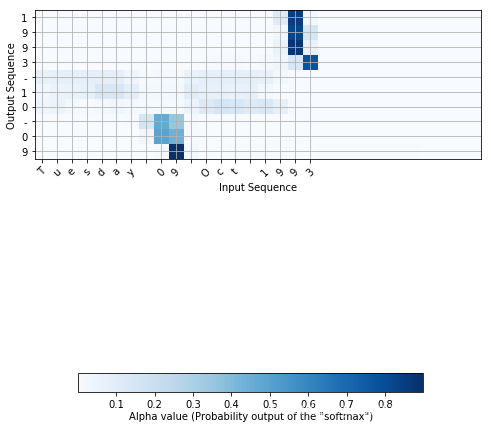

attention_map = plot_attention_map(model, human_vocab, inv_machine_vocab, "Tuesday 09 Oct 1993", num = 7, n_s = 64)
