Hadoop 3.0.0 GA was released on December 13, so let's try the latest version.

Release Notes:

Minimum required Java version increased from Java 7 to Java 8

Support for erasure coding in HDFS

……

Support for more than 2 NameNodes.

Default ports of multiple services have been changed; see the release notes for HDFS-9427 and HADOOP-12811 for a list of port changes.
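Since the minimum Java version is now 8, it is worth confirming the JDK before going further. Below is a minimal POSIX sh sketch. The functions `version_from_banner` and `java_major` are hypothetical helpers, not part of Hadoop. The sketch relies only on two facts: `java -version` prints a line such as `java version "1.8.0_144"` to stderr, and releases before Java 9 use the legacy `1.x` numbering.

```shell
# Hypothetical helpers (not part of Hadoop) for checking the Java version.

# Read a `java -version` banner on stdin and print the quoted version,
# e.g. 'java version "1.8.0_144"' -> 1.8.0_144
version_from_banner() {
  sed -n '1s/.*version "\([^"]*\)".*/\1/p'
}

# Print the major version: "1.8.0_144" -> 8, "11.0.2" -> 11.
java_major() {
  ver=$1
  case $ver in
    1.*) ver=${ver#1.} ;;  # Java 8 and earlier use the legacy "1.x" scheme
  esac
  echo "${ver%%[._]*}"     # drop everything from the first "." or "_" on
}

# Usage (requires a JDK on PATH):
#   v=$(java -version 2>&1 | version_from_banner)
#   [ "$(java_major "$v")" -ge 8 ] || echo "Hadoop 3 needs Java 8 or later" >&2
```

This only checks the minimum stated in the release notes above. It does not check which newer Java versions a given Hadoop release supports.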

Download:

wget http://mirrors.tuna.tsinghua.edu.cn/apache/hadoop/common/hadoop-3.0.0/hadoop-3.0.0.tar.gz

Extract:

tar xf hadoop-3.0.0.tar.gz

(1) Edit the configuration files

cd hadoop-3.0.0/etc/hadoop

core-site.xml

<property>
  <name>fs.defaultFS</name>
  <value>hdfs://master:9000</value>
</property>
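The snippet above shows only the `<property>` element. In the actual file it must sit inside the `<configuration>` root element, which the stock core-site.xml ships empty. The sketch below writes a complete core-site.xml, assuming you are fine with overwriting the stock file. `write_core_site` is a hypothetical helper name.

```shell
# Hypothetical helper: write a complete core-site.xml into a config directory.
# Arguments: $1 = config dir (e.g. hadoop-3.0.0/etc/hadoop), $2 = fs.defaultFS URI.
# Note: this overwrites any existing core-site.xml in that directory.
write_core_site() {
  conf_dir=$1
  fs_uri=$2
  cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>$fs_uri</value>
  </property>
</configuration>
EOF
}

# Usage, from the directory that contains hadoop-3.0.0:
#   write_core_site hadoop-3.0.0/etc/hadoop hdfs://master:9000
```

The same pattern (a heredoc wrapped in `<configuration>`) works for the other *-site.xml files below.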

hdfs-site.xml

This step requires creating the corresponding directories first:

mkdir -p /data/hd3/namenode /data/hd3/datanode

The contents are as follows:

<property>
  <name>dfs.replication</name>
  <value>2</value>
</property>
<property>
  <name>dfs.namenode.name.dir</name>
  <value>/data/hd3/namenode</value>
</property>
<property>
  <name>dfs.datanode.data.dir</name>
  <value>/data/hd3/datanode</value>
</property>

yarn-site.xml

<property>
  <name>yarn.resourcemanager.hostname</name>
  <value>master</value>
</property>
<property>
  <name>yarn.nodemanager.aux-services</name>
  <value>mapreduce_shuffle</value>
</property>
<property>
  <name>yarn.nodemanager.env-whitelist</name>
  <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
</property>
<property>
  <name>yarn.nodemanager.vmem-check-enabled</name>
  <value>false</value>
</property>
<property>
  <name>yarn.nodemanager.resource.memory-mb</name>
  <value>49152</value>
</property>
<property>
  <name>yarn.scheduler.maximum-allocation-mb</name>
  <value>49152</value>
</property>

mapred-site.xml

<property>
  <name>mapreduce.framework.name</name>
  <value>yarn</value>
</property>
<property>
  <name>yarn.nodemanager.aux-services</name>
  <value>mapreduce_shuffle</value>
</property>
<property>
  <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
  <value>org.apache.hadoop.mapred.ShuffleHandler</value>
</property>

The last two properties are NodeManager settings and normally belong in yarn-site.xml, where yarn.nodemanager.aux-services is already set above.
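The property that holds an auxiliary service's handler class is named after the service listed in `yarn.nodemanager.aux-services`, following the pattern `yarn.nodemanager.aux-services.<service>.class`. Because the service here is `mapreduce_shuffle` (with an underscore), the key is `yarn.nodemanager.aux-services.mapreduce_shuffle.class`. The sketch below derives the keys from a comma-separated service list, which is handy as a typo check. `aux_service_class_keys` is a hypothetical helper, and it assumes the list contains no spaces.

```shell
# Hypothetical helper: given the value of yarn.nodemanager.aux-services
# (a comma-separated list without spaces), print the matching
# "...<service>.class" property name for each service, one per line.
aux_service_class_keys() {
  printf '%s\n' "$1" | tr ',' '\n' | while IFS= read -r svc; do
    if [ -n "$svc" ]; then
      echo "yarn.nodemanager.aux-services.$svc.class"
    fi
  done
}

# Usage:
#   aux_service_class_keys mapreduce_shuffle
#   -> yarn.nodemanager.aux-services.mapreduce_shuffle.class
```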

Edit the slaves file. Note that in Hadoop 3 it has been renamed to workers:

slave1

slave2

In hadoop-env.sh, set:

JAVA_HOME=/home/cloud/jdk1.8.0_144

Edit the profile file:

sudo vim /etc/profile

添加

OK,至此配置全部完成,下面启动Hadoop。

如果不报错的话,应该看到如下信息:

在Slave机器上jps:

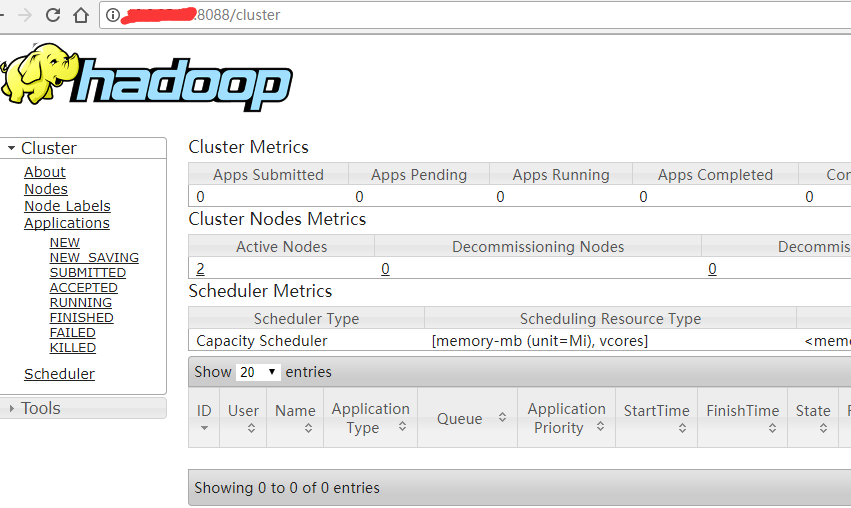

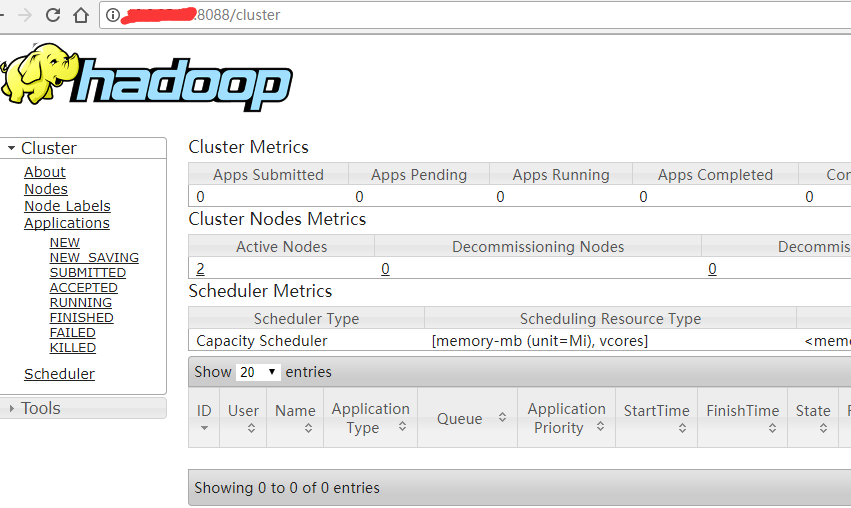

(三)Web端查看

访问 http://10.0.0.1:8088/

export HADOOP_PREFIX=/home/cloud/hadoop-3.0.0

export HADOOP_HDFS_HOME=/home/cloud/hadoop-3.0.0

export HADOOP_CONF_DIR=/home/cloud/hadoop-3.0.0/etc/hadoopsource /etc/profile

(二)启动hadoop

老规矩,首次启动需要format namenode。

hdfs namenode -formatstart-dfs.shstart-yarn.sh如果不报错的话,应该看到如下信息:

在Master机器上通过JPS命令查看:

$ jps

6993 SecondaryNameNode

7715 NodeManager

9524 Jps

7371 ResourceManager

6492 NameNode

6669 DataNode在Slave机器上jps:

$ jps

21360 DataNode

30233 Jps

21643 NodeManager(三)Web端查看

Visit http://10.0.0.1:8088/ (the YARN ResourceManager web UI):

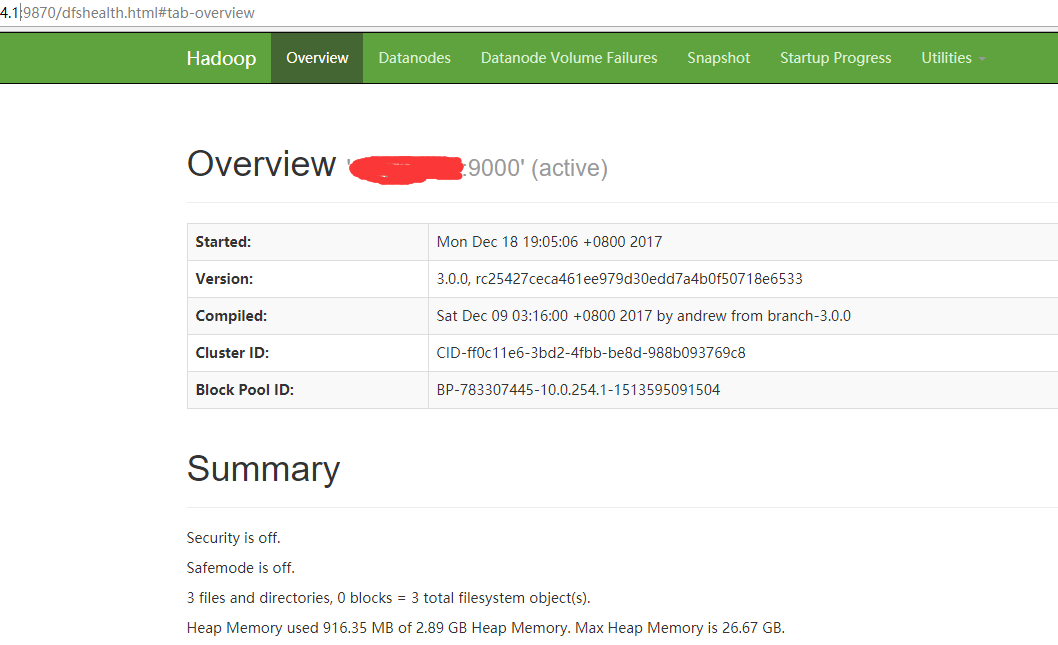

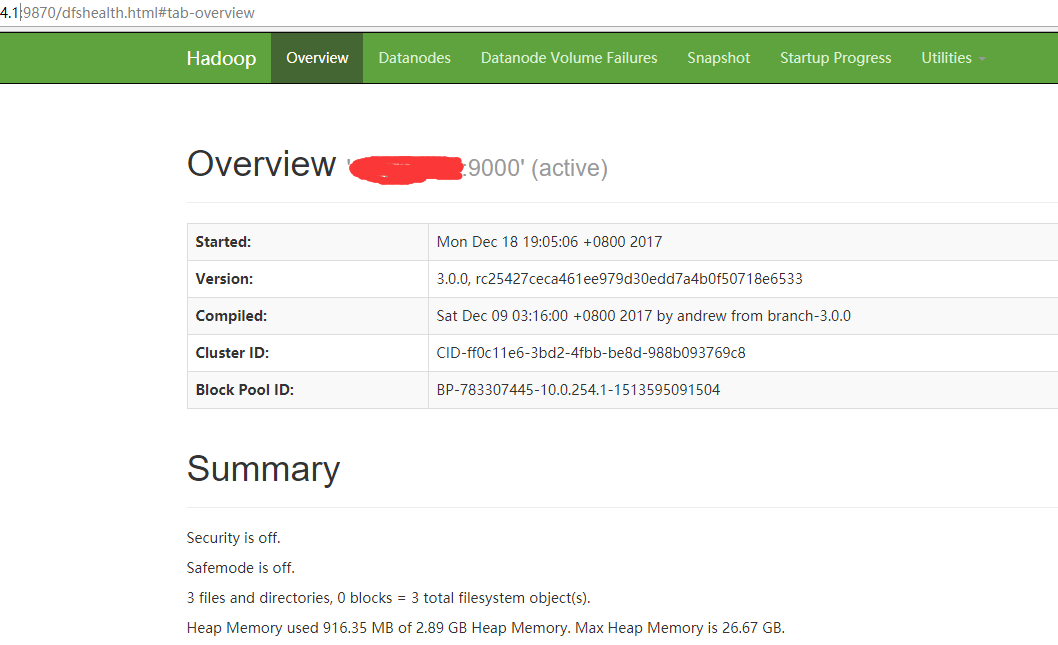

Visit http://10.0.0.1:9870 for the NameNode web UI. Note that the port is now 9870, no longer 50070.
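The move from 50070 to 9870 is one of several HDFS default-port changes that Hadoop 3 made (HDFS-9427) to get out of the Linux ephemeral port range. The YARN ResourceManager UI stays on 8088. Below is a small lookup sketch for the HDFS defaults, based on the Hadoop 3 hdfs-default.xml values. `new_hdfs_port` is a hypothetical helper, and it does not cover ports you have overridden in hdfs-site.xml.

```shell
# Hypothetical helper: map a Hadoop 2.x default HDFS port to the Hadoop 3.x
# default that replaced it. Returns non-zero for ports not listed here.
new_hdfs_port() {
  case $1 in
    50070) echo 9870 ;;  # NameNode HTTP (web UI)
    50470) echo 9871 ;;  # NameNode HTTPS
    50090) echo 9868 ;;  # SecondaryNameNode HTTP
    50091) echo 9869 ;;  # SecondaryNameNode HTTPS
    50010) echo 9866 ;;  # DataNode data transfer
    50075) echo 9864 ;;  # DataNode HTTP
    50475) echo 9865 ;;  # DataNode HTTPS
    50020) echo 9867 ;;  # DataNode IPC
    *) return 1 ;;
  esac
}

# Usage: print the HTTP status code of the NameNode UI (needs curl):
#   curl -s -o /dev/null -w '%{http_code}\n' "http://10.0.0.1:$(new_hdfs_port 50070)/"
```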

(4) OK, all done
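To repeat the jps check from step (2) later, for example after a restart, you can script it instead of reading the list by eye. The sketch below assumes jps prints one `<pid> <name>` pair per line, as in the output above. `missing_daemons` is a hypothetical helper name.

```shell
# Hypothetical helper: read `jps` output on stdin and print each expected
# daemon name that does not appear; returns non-zero if any is missing.
missing_daemons() {
  out=$(cat)
  status=0
  for d in "$@"; do
    # Compare the second column exactly, so "NameNode" does not match
    # "SecondaryNameNode".
    if ! printf '%s\n' "$out" | awk -v d="$d" '$2 == d { found = 1 } END { exit !found }'; then
      echo "$d"
      status=1
    fi
  done
  return $status
}

# Usage on a slave:  jps | missing_daemons DataNode NodeManager
# Usage on master:   jps | missing_daemons NameNode SecondaryNameNode ResourceManager
```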
