Python Web Scraping with requests

The requests library simplifies making network requests in Python. Install it first with pip install requests. The Chinese-language requests manual is a good introductory resource.

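Writing a crawler requires some understanding of HTML structure. A crawler that matches raw HTML with regular expressions also stops working whenever the page structure changes, so if the code below no longer works, check whether the structure of the target page has changed.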
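
To make that failure mode easier to diagnose, here is a minimal sketch of a defensive lookup. When a pattern stops matching, `re.search` returns `None`, and calling `.group(1)` on it raises an unhelpful `AttributeError`. The helper `extract_field`, the pattern `TITLE_PATTERN`, and the markup in `SAMPLE_BLOCK` are all hypothetical: the markup is only modeled on what the patterns in this post expect, and the real page may differ.

```python
import re

# Hypothetical markup, modeled on what the patterns in this post expect.
# The real page may look different.
SAMPLE_BLOCK = '''<li id="course_1">
  <h2 class="lesson-info-h2"><a href="/course/1.html">Python Basics</a></h2>
  <p>  An introduction to Python.  </p>
  <em>2 hours 30 minutes</em>
  <em>Beginner</em>
  <em class="learn-number">1234</em>
</li>'''

# Hypothetical pattern for the course title.
TITLE_PATTERN = r'<h2[^>]*>\s*<a[^>]*>(.*?)</a>\s*</h2>'


def extract_field(pattern, text, field):
    """Return group(1) of the first match, or raise a descriptive error."""
    match = re.search(pattern, text, re.S)
    if match is None:
        # re.search returns None when nothing matches, which is the usual
        # symptom of a changed page structure.
        raise ValueError(
            'pattern for %r no longer matches; '
            'has the page structure changed?' % field
        )
    return match.group(1).strip()


title = extract_field(TITLE_PATTERN, SAMPLE_BLOCK, 'title')
print(title)

# Simulate a redesign in which the title moves from <h2> to <h3>.
CHANGED_BLOCK = SAMPLE_BLOCK.replace('h2', 'h3')
try:
    extract_field(TITLE_PATTERN, CHANGED_BLOCK, 'title')
except ValueError as err:
    print(err)
```

Raising a named error per field shows which pattern needs updating, instead of a bare `'NoneType' object has no attribute 'group'`.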

Target page: the Jikexueyuan (极客学院) video library page

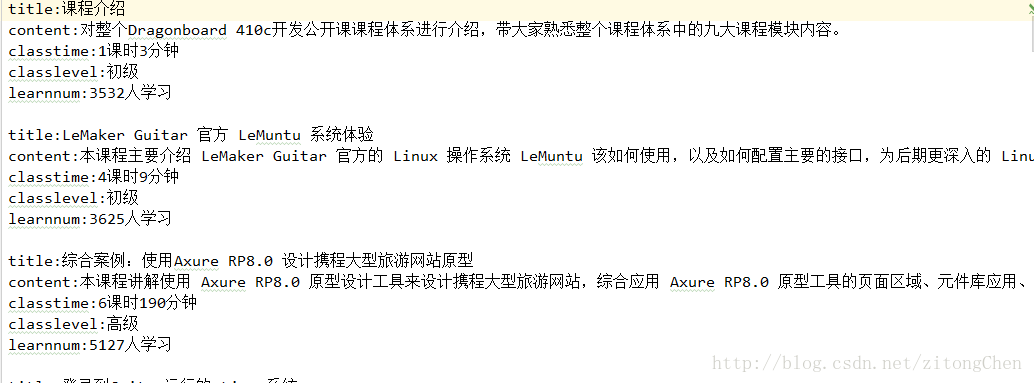

Crawl result:

The complete script:

    # -*- coding: utf-8 -*-
    '''
    1. Fetch the whole page.
    2. Extract every course block from the page.
    3. Use regular expressions to pull out the fields we want.
    '''
    import re

    import requests


    class spider(object):
        def __init__(self):
            print('Starting crawl...')

        # Fetch the source of a page
        def getsource(self, url):
            # requests has no default timeout, so set one explicitly
            html = requests.get(url, timeout=10)
            html.encoding = 'utf-8'
            return html.text

        # Build the list of page URLs by changing the page number in the link
        def changepage(self, url, total_page):
            now_page = int(re.search(r'pageNum=(\d+)', url).group(1))  # current page number
            page_group = []
            for i in range(now_page, total_page + 1):
                # The fourth positional argument of re.sub is count, not flags,
                # so re.S must not be passed there
                link = re.sub(r'pageNum=\d+', 'pageNum=%s' % i, url)
                page_group.append(link)
            return page_group

        # Extract every course block
        def geteveryclass(self, source):
            everyclass = re.findall('(<li id=".*?</li>)', source, re.S)
            return everyclass

        # Pull the fields we want out of one course block
        def getinfo(self, eachclass):
            info = {}
            info['title'] = re.search('.*>(.*?)</a></h2>', eachclass, re.S).group(1)
            info['content'] = re.search('.*>(.*?)</p>', eachclass, re.S).group(1).strip()
            timeandlevel = re.findall('<em>(.*?)</em>', eachclass, re.S)
            # Remove the whitespace inside the duration string
            info['classtime'] = ''.join(timeandlevel[0].split())
            info['classlevel'] = timeandlevel[1]
            info['learnnum'] = re.search('"learn-number">(.*?)</em>', eachclass, re.S).group(1)
            return info

        # Append the collected records to info.txt
        def saveinfo(self, classinfo):
            with open('info.txt', 'a', encoding='utf-8') as f:
                for each in classinfo:
                    f.write('title:' + each['title'] + '\n')
                    f.write('content:' + each['content'] + '\n')
                    f.write('classtime:' + each['classtime'] + '\n')
                    f.write('classlevel:' + each['classlevel'] + '\n')
                    f.write('learnnum:' + each['learnnum'] + '\n\n')


    if __name__ == '__main__':
        classinfo = []
        url = 'http://www.jikexueyuan.com/course/?pageNum=2'
        jikespider = spider()
        all_links = jikespider.changepage(url, 20)
        print(all_links)
        for link in all_links:
            print('Processing page: ' + link)
            html = jikespider.getsource(link)
            everyclass = jikespider.geteveryclass(html)
            for each in everyclass:
                info = jikespider.getinfo(each)
                classinfo.append(info)
        jikespider.saveinfo(classinfo)
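
The page links can also be generated without a regular expression. The sketch below is an alternative to `changepage`, not the method used in this post: it rewrites the `pageNum` query parameter with the standard library's `urllib.parse`. The function name `page_urls` and its `param` argument are made up for illustration, and falling back to page 1 when the parameter is missing is an assumption of this sketch (`changepage` raises an error in that case).

```python
from urllib.parse import parse_qs, urlencode, urlsplit, urlunsplit


def page_urls(url, total_page, param='pageNum'):
    """Return the URLs from the page number found in `url` up to `total_page`."""
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    # Assumption: start from page 1 when the parameter is absent.
    start = int(query.get(param, ['1'])[0])
    links = []
    for page in range(start, total_page + 1):
        query[param] = [str(page)]
        new_query = urlencode(query, doseq=True)
        links.append(urlunsplit(parts._replace(query=new_query)))
    return links


for link in page_urls('http://www.jikexueyuan.com/course/?pageNum=2', 4):
    print(link)
```

Because the query string is parsed rather than pattern-matched, any other parameters in the URL are carried over unchanged.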
