Running the Grounded-Segment-Anything demo with SAM-HQ produced the following error:

/opt/conda/lib/python3.10/site-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at /opt/conda/conda-bld/pytorch_1670525552843/work/aten/src/ATen/native/TensorShape.cpp:3190.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

final text_encoder_type: bert-base-uncased

_IncompatibleKeys(missing_keys=[], unexpected_keys=['label_enc.weight', 'bert.embeddings.position_ids'])

/opt/conda/lib/python3.10/site-packages/transformers/modeling_utils.py:942: FutureWarning: The `device` argument is deprecated and will be removed in v5 of Transformers.

warnings.warn(

/opt/conda/lib/python3.10/site-packages/torch/utils/checkpoint.py:31: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

warnings.warn("None of the inputs have requires_grad=True. Gradients will be None")

Traceback (most recent call last):

File "/home/appuser/Grounded-Segment-Anything/grounded_sam_demo.py", line 201, in <module>

predictor = SamPredictor(sam_hq_model_registry[sam_version](checkpoint=sam_hq_checkpoint).to(device))

File "/home/appuser/Grounded-Segment-Anything/segment_anything/segment_anything/build_sam_hq.py", line 15, in build_sam_hq_vit_h

return _build_sam(

File "/home/appuser/Grounded-Segment-Anything/segment_anything/segment_anything/build_sam_hq.py", line 107, in _build_sam

info = sam.load_state_dict(state_dict, strict=False)

File "/opt/conda/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1671, in load_state_dict

raise RuntimeError('Error(s) in loading state_dict for {}:\n\t{}'.format(

RuntimeError: Error(s) in loading state_dict for Sam:

size mismatch for image_encoder.neck.0.weight: copying a param with shape torch.Size([256, 320, 1, 1]) from checkpoint, the shape in current model is torch.Size([256, 1280, 1, 1]).

size mismatch for mask_decoder.compress_vit_feat.0.weight: copying a param with shape torch.Size([160, 256, 2, 2]) from checkpoint, the shape in current model is torch.Size([1280, 256, 2, 2]).

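The last lines report a size mismatch: the tensors in the checkpoint have shapes [256, 320, 1, 1] and [160, 256, 2, 2], while the model being built expects [256, 1280, 1, 1] and [1280, 256, 2, 2].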

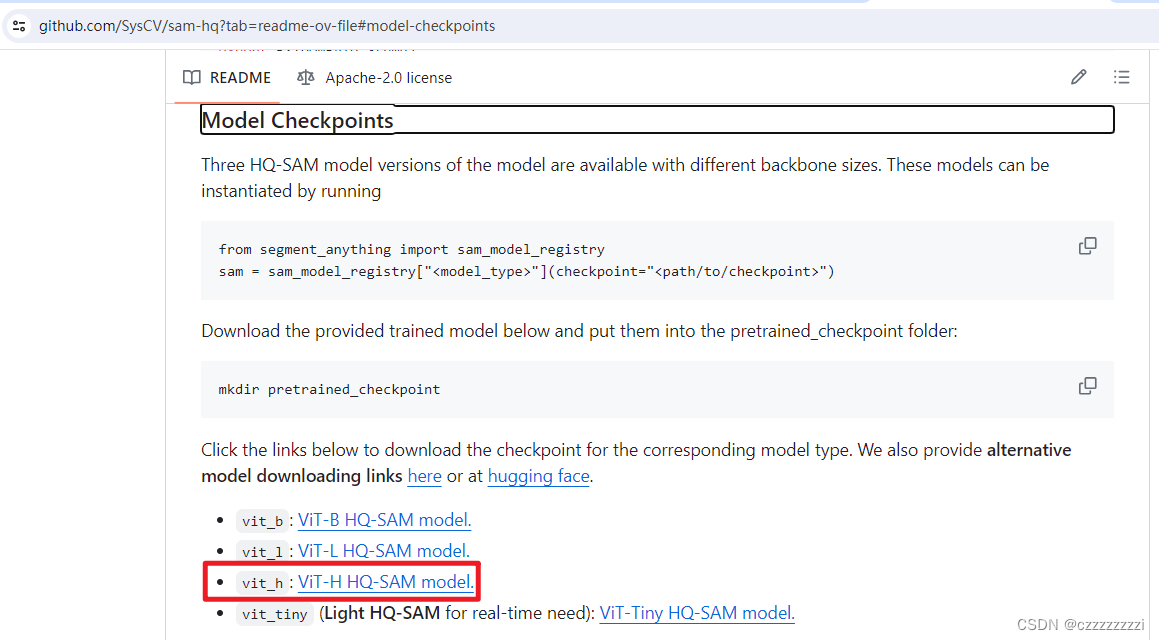

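Cause: the wrong HQ-SAM checkpoint was used. It does not match the model that the script builds (the traceback goes through build_sam_hq_vit_h).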
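To make the mismatch easier to read, here is a minimal sketch that parses the two "size mismatch" lines from the error above and guesses which variant the checkpoint and the model each belong to. The helper names (`parse_mismatches`, `guess_variants`) and the width-to-variant table are my own assumptions for illustration, not part of the project. The widths 768, 1024 and 1280 are the usual ViT-B/L/H sizes, and 320 for the tiny variant is inferred from this error message.

```python
import re

# Pattern for one "size mismatch" line as printed by load_state_dict.
MISMATCH_RE = re.compile(
    r"size mismatch for (?P<name>[\w.]+): copying a param with shape "
    r"torch\.Size\(\[(?P<ckpt>[\d, ]+)\]\) from checkpoint, "
    r"the shape in current model is torch\.Size\(\[(?P<model>[\d, ]+)\]\)"
)

# Assumed mapping from the image encoder's output width to a SAM variant.
WIDTH_TO_VARIANT = {320: "vit_tiny", 768: "vit_b", 1024: "vit_l", 1280: "vit_h"}


def parse_mismatches(error_text):
    """Return (param_name, checkpoint_shape, model_shape) for each mismatch line."""
    def to_shape(s):
        return tuple(int(x) for x in s.split(","))

    return [
        (m["name"], to_shape(m["ckpt"]), to_shape(m["model"]))
        for m in MISMATCH_RE.finditer(error_text)
    ]


def guess_variants(error_text, key="image_encoder.neck.0.weight"):
    """Guess (checkpoint_variant, model_variant) from the neck conv's input channels."""
    for name, ckpt, model in parse_mismatches(error_text):
        if name == key:
            # Conv weights are laid out as [out_channels, in_channels, kH, kW].
            return WIDTH_TO_VARIANT.get(ckpt[1]), WIDTH_TO_VARIANT.get(model[1])
    return None, None


ERROR_TEXT = """
size mismatch for image_encoder.neck.0.weight: copying a param with shape torch.Size([256, 320, 1, 1]) from checkpoint, the shape in current model is torch.Size([256, 1280, 1, 1]).
size mismatch for mask_decoder.compress_vit_feat.0.weight: copying a param with shape torch.Size([160, 256, 2, 2]) from checkpoint, the shape in current model is torch.Size([1280, 256, 2, 2]).
"""

if __name__ == "__main__":
    print(guess_variants(ERROR_TEXT))  # ('vit_tiny', 'vit_h')
```

Under these assumptions, the error reads as "a tiny checkpoint is being loaded into a vit_h model", which matches what happened here.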

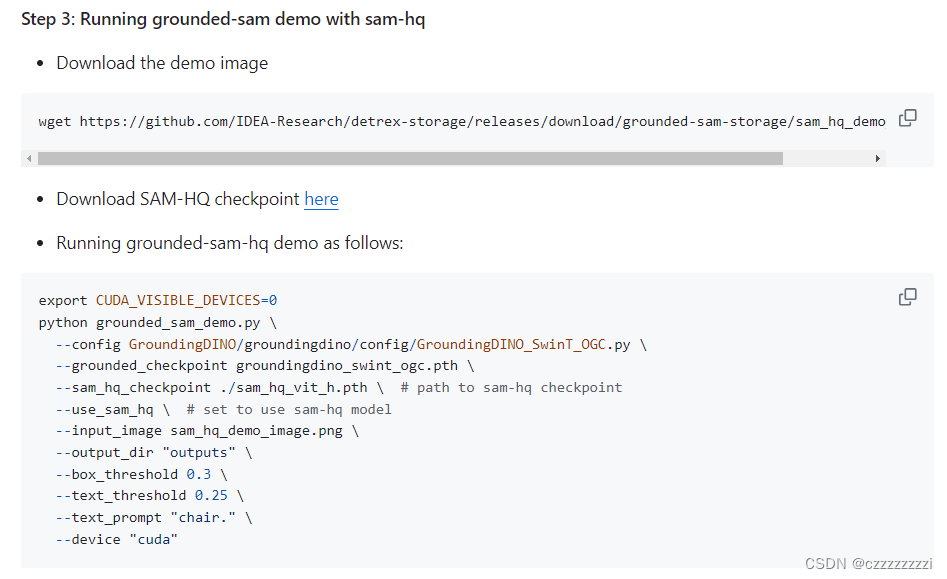

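On the HQ-SAM page, take care to download the correct model. Because sam_hq_vit_h is so large, I downloaded the tiny checkpoint instead. I assumed it would be enough to change the following line in the run script to point to sam_hq_vit_tiny, but the error persisted.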

--sam_hq_checkpoint ./sam_hq_vit_h.pth \ # path to sam-hq checkpoint

After downloading the vit_h model and placing it in the project directory, the demo ran successfully 😊

root@b90c7258b1d1:/home/appuser/Grounded-Segment-Anything# python3 grounded_sam_demo.py \

> --config GroundingDINO/groundingdino/config/GroundingDINO_SwinT_OGC.py \

> --grounded_checkpoint groundingdino_swint_ogc.pth \

> --sam_hq_checkpoint ./sam_hq_vit_h.pth \

> --use_sam_hq \

> --input_image sam_hq_demo_image.png \

> --output_dir "out" \

> --box_threshold 0.3 \

> --text_threshold 0.25 \

> --text_prompt "chair." \

> --device "cuda"

/opt/conda/lib/python3.10/site-packages/torch/functional.py:504: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at /opt/conda/conda-bld/pytorch_1670525552843/work/aten/src/ATen/native/TensorShape.cpp:3190.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

final text_encoder_type: bert-base-uncased

_IncompatibleKeys(missing_keys=[], unexpected_keys=['label_enc.weight', 'bert.embeddings.position_ids'])

/opt/conda/lib/python3.10/site-packages/transformers/modeling_utils.py:942: FutureWarning: The `device` argument is deprecated and will be removed in v5 of Transformers.

warnings.warn(

/opt/conda/lib/python3.10/site-packages/torch/utils/checkpoint.py:31: UserWarning: None of the inputs have requires_grad=True. Gradients will be None

warnings.warn("None of the inputs have requires_grad=True. Gradients will be None")

<All keys matched successfully>

root@b90c7258b1d1:/home/appuser/Grounded-Segment-Anything# ls

CITATION.cff chatbot.py grounding_dino_demo.py

Dockerfile cog.yaml groundingdino_swint_ogc.pth

EfficientSAM gradio_app.py out

GroundingDINO grounded-sam-osx outputs

LICENSE grounded_sam.ipynb playground

Makefile grounded_sam_3d_box.ipynb predict.py

README.md grounded_sam_colab_demo.ipynb recognize-anything

VISAM grounded_sam_demo.py requirements.txt

assets grounded_sam_inpainting_demo.py sam_hq_demo_image.png

automatic_label_demo.py grounded_sam_osx_demo.py sam_hq_vit_h.pth

automatic_label_ram_demo.py grounded_sam_simple_demo.py sam_hq_vit_tiny.pth

automatic_label_simple_demo.py grounded_sam_visam.py sam_vit_h_4b8939.pth

automatic_label_tag2text_demo.py grounded_sam_whisper_demo.py segment_anything

bert-base-uncased grounded_sam_whisper_inpainting_demo.py voxelnext_3d_box

root@b90c7258b1d1:/home/appuser/Grounded-Segment-Anything# cd out

root@b90c7258b1d1:/home/appuser/Grounded-Segment-Anything/out# ls

grounded_sam_output.jpg mask.jpg mask.json raw_image.jpg
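The traceback shows the model being built with `sam_hq_model_registry[sam_version](checkpoint=sam_hq_checkpoint)`, so the architecture is chosen by `sam_version` and not by the checkpoint path. That is presumably why changing only the path to the tiny checkpoint still failed. Below is a rough sketch of a pre-flight check that compares the two before loading. `variant_from_checkpoint` and `check_pairing` are hypothetical helpers, not functions from Grounded-Segment-Anything, and they assume the `sam_hq_<variant>.pth` naming seen in this post. Whether `vit_tiny` is a valid key in this repo's registry is not something the post confirms.

```python
import re
from pathlib import Path

# Hypothetical helper code, not part of Grounded-Segment-Anything.
# Assumes HQ-SAM checkpoints are named "sam_hq_<variant>.pth", as with the
# sam_hq_vit_h.pth and sam_hq_vit_tiny.pth files listed above.
CHECKPOINT_RE = re.compile(r"^sam_hq_(vit_[a-z]+)\.pth$")


def variant_from_checkpoint(path):
    """Return the variant encoded in an HQ-SAM checkpoint filename, or None."""
    match = CHECKPOINT_RE.match(Path(path).name)
    return match.group(1) if match else None


def check_pairing(sam_version, checkpoint_path):
    """Raise ValueError when the requested version and the checkpoint name disagree."""
    variant = variant_from_checkpoint(checkpoint_path)
    if variant is not None and variant != sam_version:
        raise ValueError(
            f"checkpoint {checkpoint_path!r} looks like {variant!r}, "
            f"but the script would build {sam_version!r}"
        )
    # None means the filename did not follow the assumed naming scheme.
    return variant
```

With these helpers, `check_pairing("vit_h", "./sam_hq_vit_h.pth")` returns `"vit_h"`, while `check_pairing("vit_h", "./sam_hq_vit_tiny.pth")` raises a `ValueError` before any weights are loaded.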

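This post describes a shape-mismatch error raised when SamPredictor loads a pretrained HQ-SAM model in the GitHub project Grounded-Segment-Anything: the downloaded checkpoint (sam_hq_vit_tiny) did not match the architecture being built (vit_h). Make sure the HQ-SAM checkpoint you download matches the model version the script builds. Here, downloading sam_hq_vit_h.pth resolved the problem.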


https://github.com/IDEA-Research/Grounded-Segment-Anything?tab=readme-ov-file

