Environment setup

Java JDK 1.8, Hadoop 3.1.2, Spark 2.4.3

cd /home/hadoop

sudo chown -R hadoop:hadoop ./spark-2.4.3

cp ./spark-2.4.3/conf/spark-env.sh.template ./spark-2.4.3/conf/spark-env.sh

Append the following line to the end of spark-env.sh:

export SPARK_DIST_CLASSPATH=$(/opt/hadoop-3.1.2/bin/hadoop classpath)

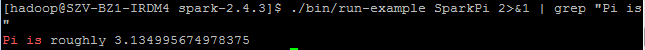

Running a Spark example

Change into the Spark directory and run the following command:

./bin/run-example SparkPi 2>&1 |grep "Pi is"

Output:

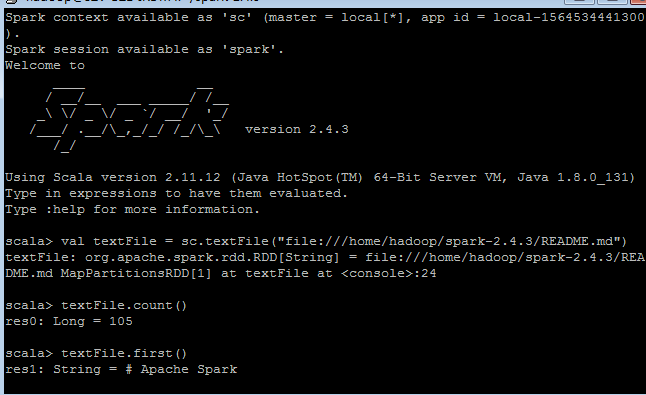

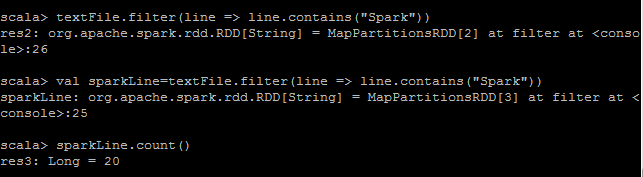

The interactive Spark shell

./bin/spark-shell

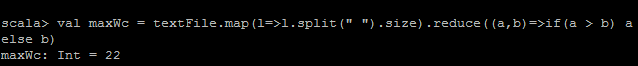

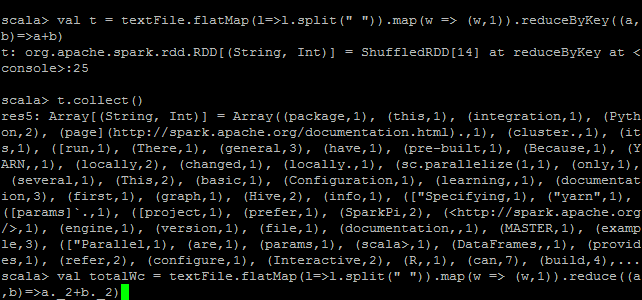

Find how many words the line with the most words contains

Count the number of distinct words in the file

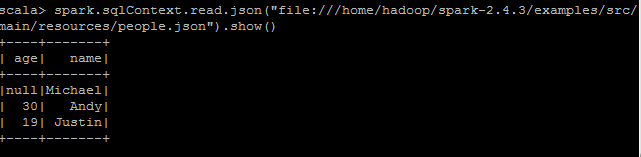

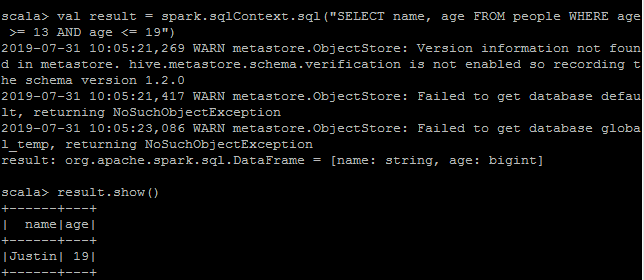
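The two computations above can be sketched as follows. This is a minimal sketch, not a transcription of the screenshots: the three sample lines are hypothetical stand-ins for a file loaded with sc.textFile, and the names lines, maxWords and distinctWords are chosen here for illustration. Inside spark-shell, getOrCreate() returns the session the shell has already started.

```scala
import org.apache.spark.sql.SparkSession

// In spark-shell this reuses the existing session; elsewhere it starts a local one.
val spark = SparkSession.builder().appName("WordStats").master("local[2]").getOrCreate()
val sc = spark.sparkContext

// Hypothetical sample data standing in for sc.textFile(".../README.md")
val lines = sc.parallelize(Seq(
  "spark is fast",
  "spark runs on hadoop yarn and mesos",
  "hadoop is here"
))

// Map each line to its word count, then keep the largest count
val maxWords = lines.map(line => line.split(" ").length).reduce((a, b) => math.max(a, b))

// Split every line into words, drop duplicates, and count what is left
val distinctWords = lines.flatMap(line => line.split(" ")).distinct().count()

println(s"max words in a line: $maxWords, distinct words: $distinctWords")
```

To run it against a real file, replace the parallelize call with sc.textFile and a path that exists on your system.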

Spark SQL and DataFrame

In Spark 2.0 and later, the SQLContext is available from the SparkSession: spark.sqlContext.sql(...)

or

val sqlContext = new org.apache.spark.sql.SQLContext(sc)

https://stackoverflow.com/questions/42993521/apache-spark-error-not-found-value-sqlcontext

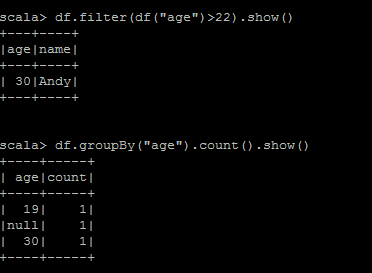

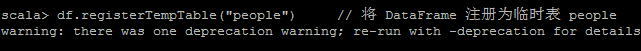

Or use a SQL statement:

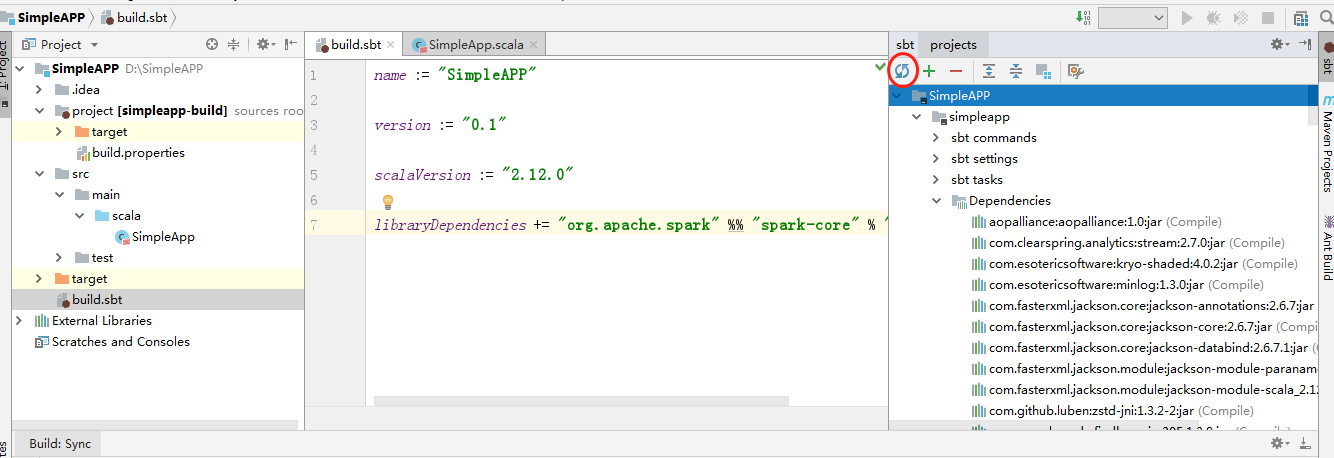
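As a rough illustration of the two styles, the sketch below runs the same query once through the DataFrame API and once as SQL on a temporary view. The sample rows, the view name people and the column names are assumptions made for this example; the screenshots above may use different data.

```scala
import org.apache.spark.sql.SparkSession

// In spark-shell this reuses the existing session.
val spark = SparkSession.builder().appName("SqlSketch").master("local[2]").getOrCreate()
import spark.implicits._ // enables .toDF on local collections and the $"col" syntax

// Hypothetical sample rows
val people = Seq(("Alice", 29), ("Bob", 35), ("Carol", 41)).toDF("name", "age")

// DataFrame API
val over30 = people.filter($"age" > 30).select("name")

// The same query as SQL, after registering the DataFrame as a temporary view
people.createOrReplaceTempView("people")
val over30Sql = spark.sql("SELECT name FROM people WHERE age > 30")

over30.show()
over30Sql.show()
```

spark.sqlContext.sql(...) accepts the same SQL string, so code written against the older SQLContext entry point can be kept with little change.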

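Developing a Spark application in IntelliJ IDEA

Installing the environment

Install the Scala plugin and sbt in IDEA; see the official documentation for instructions.

sbt is Scala's build tool, similar to Maven. After declaring the Scala and Spark versions in build.sbt, you can import the dependencies from the sbt panel in the upper-right corner of IDEA.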

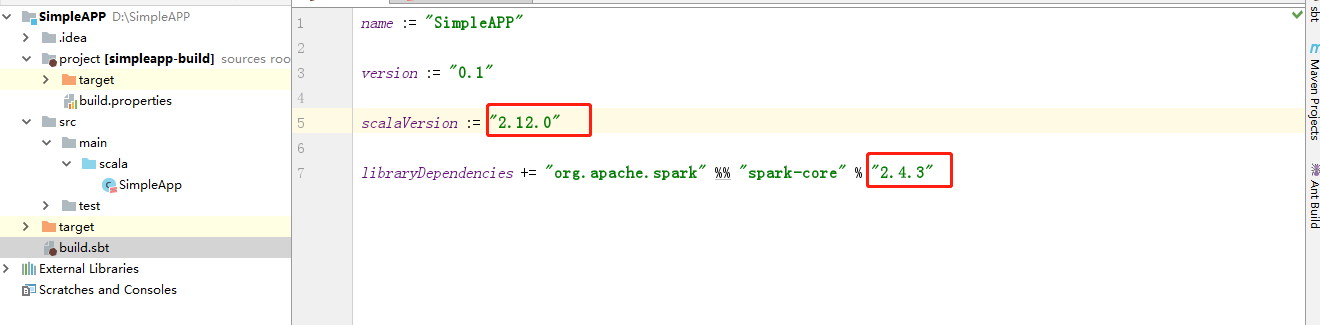

Which Scala version matches Spark 2.4.3

Spark runs on Java 8+, Python 2.7+/3.4+ and R 3.1+. For the Scala API, Spark 2.4.3 uses Scala 2.12. You will need to use a compatible Scala version (2.12.x).

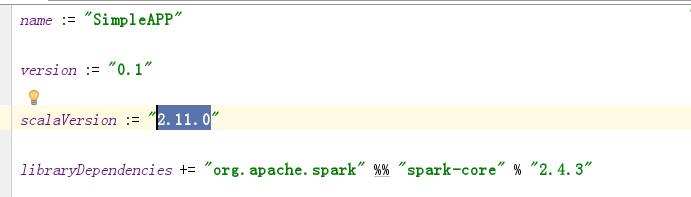

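The statement quoted above from the official site is wrong for the pre-built packages: Spark 2.4.3 is actually built against Scala 2.11 (among the 2.4.x releases, only 2.4.2 was pre-built with Scala 2.12).

See this question for reference.

So the build.sbt file looks like this: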

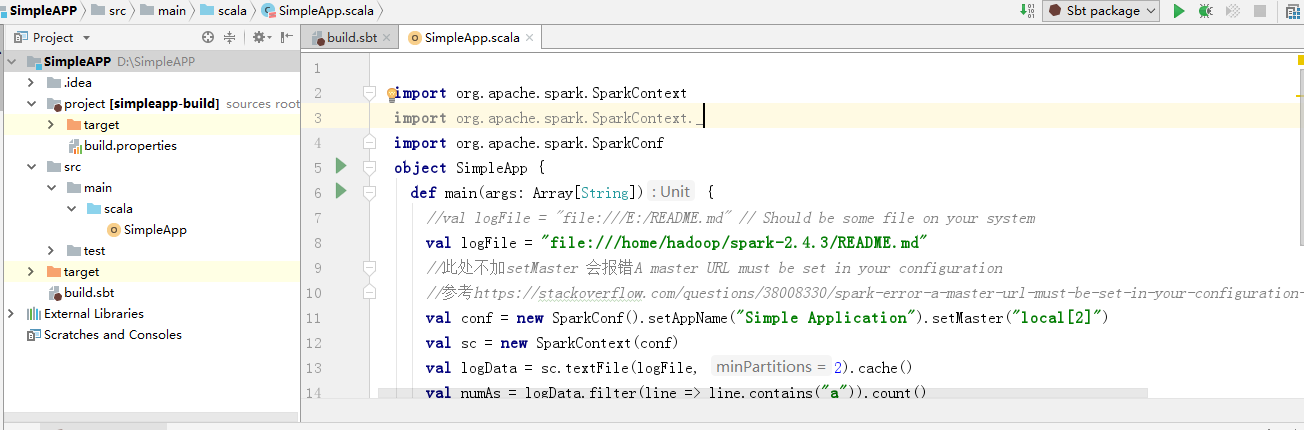
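For readers who cannot see the screenshot, a build.sbt along these lines should be close. It is a sketch under assumptions: the project name and version are placeholders, and 2.11.12 is picked here as one Scala 2.11.x release (the text later mentions 2.11.0; any 2.11.x is binary compatible).

```scala
// build.sbt: a minimal sketch; name and version are placeholders
name := "simple-app"
version := "0.1"

// Must be a 2.11.x release to match the pre-built Spark 2.4.3 packages
scalaVersion := "2.11.12"

// %% appends the Scala binary version, so this resolves to spark-core_2.11
libraryDependencies += "org.apache.spark" %% "spark-core" % "2.4.3"
```

If the application also uses DataFrames or SQL, a spark-sql dependency with the same version would be added in the same way.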

The Spark application

import org.apache.spark.SparkContext

import org.apache.spark.SparkContext._

import org.apache.spark.SparkConf

object SimpleApp {

def main(args: Array[String]): Unit = {

//val logFile = "file:///E:/README.md" // Should be some file on your system

val logFile = "file:///home/hadoop/spark-2.4.3/README.md"

// Without setMaster here, the run fails with "A master URL must be set in your configuration"

// See https://stackoverflow.com/questions/38008330/spark-error-a-master-url-must-be-set-in-your-configuration-when-submitting-a

val conf = new SparkConf().setAppName("Simple Application").setMaster("local[2]")

val sc = new SparkContext(conf)

val logData = sc.textFile(logFile, 2).cache()

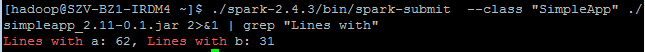

val numAs = logData.filter(line => line.contains("a")).count()

val numBs = logData.filter(line => line.contains("b")).count()

println("Lines with a: %s, Lines with b: %s".format(numAs, numBs))

}

}
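One caveat about the hard-coded setMaster("local[2]"): values set directly on SparkConf take precedence over the --master flag of spark-submit, so the application above always runs locally. A possible variant, shown here as a sketch rather than as the author's code, uses setIfMissing so that local[2] is only a fallback. The object name SimpleAppConfigurableMaster and the in-memory sample data are made up for this example.

```scala
import org.apache.spark.{SparkConf, SparkContext}

object SimpleAppConfigurableMaster {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("Simple Application")
      // Falls back to local[2] only when no master was supplied,
      // e.g. when running from the IDE instead of spark-submit --master ...
      .setIfMissing("spark.master", "local[2]")

    val sc = new SparkContext(conf)
    try {
      // Hypothetical in-memory data instead of README.md
      val data = sc.parallelize(Seq("a line", "b line", "another"))
      val numAs = data.filter(_.contains("a")).count()
      println(s"master = ${sc.master}, lines with a: $numAs")
    } finally {
      sc.stop() // release the context even if the job fails
    }
  }
}
```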

Directory layout of the application

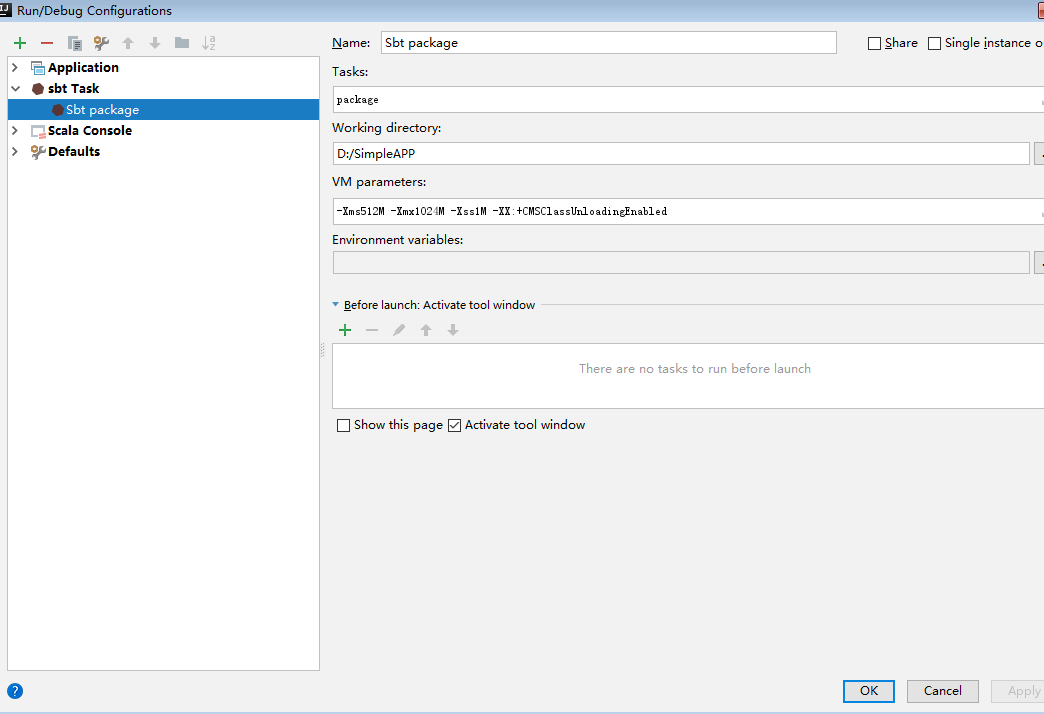

Configuring the sbt package command in IDEA

To compile only, change package to compile.

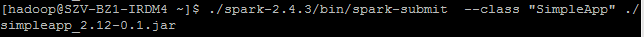

Running the program with spark-submit

Copy the jar produced by sbt package to the /home/hadoop directory on the CentOS machine and change its permissions:

chmod u+x SimpleApp

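Submit the job

Problems

- Caused by: java.lang.ClassNotFoundException: scala.runtime.LambdaDeserialize

scala.runtime.LambdaDeserialize only exists in Scala 2.12 and later, so this error means the jar was compiled with Scala 2.12 while Spark runs on Scala 2.11. Change the Scala version in build.sbt to one compatible with Spark; for Spark 2.4.3 it was changed to 2.11.0.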
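To confirm which Scala version a Spark installation actually runs on, something like the following can be pasted into spark-shell. It is a small sketch using the standard scala.util.Properties object; the value names are chosen here for illustration.

```scala
// Full version of the Scala library loaded in the running JVM
val scalaRuntimeVersion = scala.util.Properties.versionNumberString
println(s"Scala runtime version: $scalaRuntimeVersion")

// The binary version (first two components) is what must match
// the _2.11 / _2.12 suffix of the Spark artifacts in build.sbt
val scalaBinaryVersion = scalaRuntimeVersion.split('.').take(2).mkString(".")
println(s"Scala binary version: $scalaBinaryVersion")
```

The spark-shell startup banner reports the same information, so this is mainly useful from inside a submitted application.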

Result
