长短期记忆网络(LSTM,Long Short-Term Memory)

使用kears 搭建一个LSTM预测模型,使用2022年美国大学生数学建模大赛中C题中处理后的BTC比特币的数据进行数据训练和预测。

这篇博客包含两个预测,一种是使用前N天的数据预测后一天的数据,使用前N天的数据预测后N天的数据

使用前五十个数据进行预测后一天的数据。

总数据集:1826个数据

数据下载地址:需要的可以自行下载,很快

- 链接:https://pan.baidu.com/s/1TmQxLfzHiyOL3vEVcuWlgQ

- 提取码:wy0f

模型结构

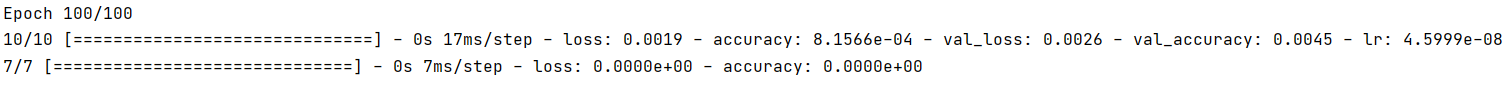

Model: "sequential"_________________________________________________________________Layer (type) Output Shape Param # =================================================================lstm (LSTM) (None, 50, 64) 16896 _________________________________________________________________lstm_1 (LSTM) (None, 50, 64) 33024 _________________________________________________________________lstm_2 (LSTM) (None, 32) 12416 _________________________________________________________________dropout (Dropout) (None, 32) 0 _________________________________________________________________dense (Dense) (None, 1) 33 =================================================================Total params: 62,369Trainable params: 62,369Non-trainable params: 0_________________________________________________________________训练100次:

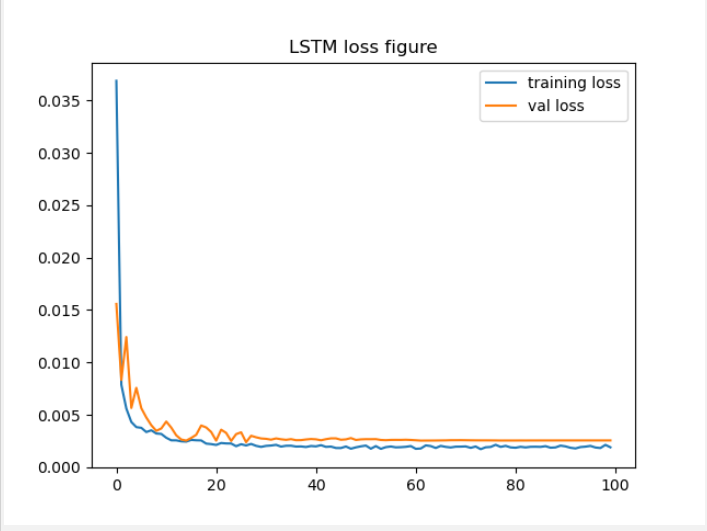

损失函数图像:

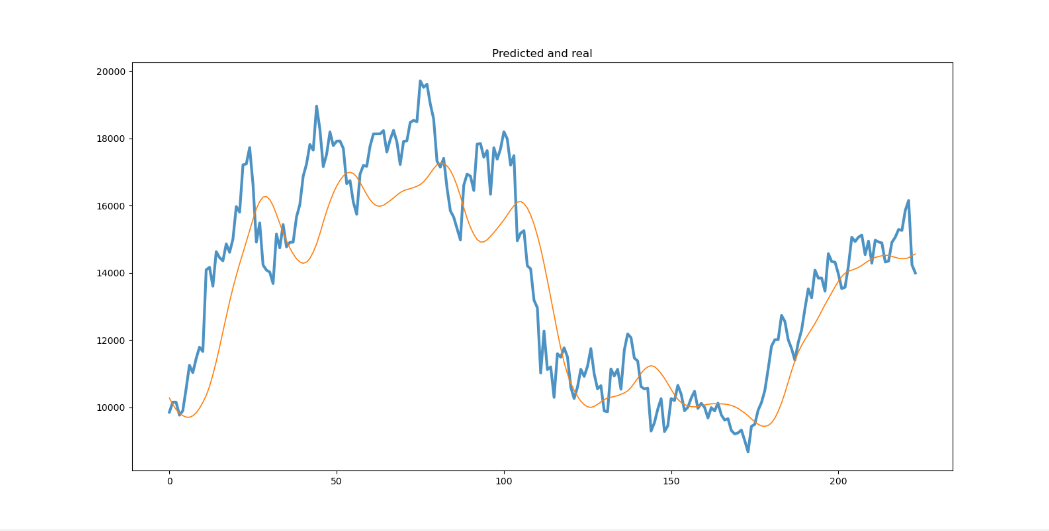

预测和真实值比较,可以看到效果并不是很好,这个需要自己调参进行变化

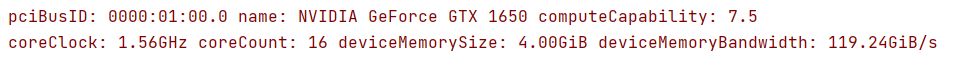

- 我的GPU加速时1650还挺快,7.5算力,训练时间可以接受

代码:

import numpy as npimport pandas as pdimport matplotlib.pyplot as pltfrom tensorflow import kerasfrom tensorflow.keras import layersfrom sklearn.preprocessing import MinMaxScaler# 数据处理部分# 读入数据data = pd.read_excel('BTCtest.xlsx')print(len(data))# 划分训练集与验证集data = data[['Value']]train = data[0:1277]valid = data[1278:1550]test = data[1551:]time_step = 50 # 输入序列长度# 归一化scaler = MinMaxScaler(feature_range=(0, 1))# datas 切片数据 time_step要输入的维度 pred 预测维度def scalerClass(datas,scaler,time_step,pred): x, y = [], [] scaled_data = scaler.fit_transform(datas) for i in range(time_step, len(datas) - pred): x.append(scaled_data[i - time_step:i]) y.append(scaled_data[i: i + pred]) # 把x_train转变为array数组 x, y = np.array(x), np.array(y).reshape(-1, 1) # reshape(-1,1)的意思时不知道分成多少行,但是是1列 return x,y# 训练集 验证集 测试集 切片x_train,y_train = scalerClass(train,scaler,time_step=time_step,pred=1)x_valid, y_valid = scalerClass(valid,scaler,time_step=time_step,pred=1)x_test, y_test = scalerClass(test,scaler,time_step=time_step,pred=1)# 建立神经网络模型model = keras.Sequential()model.add(layers.LSTM(64, return_sequences=True, input_shape=(x_train.shape[1:])))model.add(layers.LSTM(64, return_sequences=True))model.add(layers.LSTM(32))model.add(layers.Dropout(0.1))model.add(layers.Dense(1))# model.compile(optimizer = 优化器,loss = 损失函数, metrics = ["准确率”])# “adam" 或者 tf.keras.optimizers.Adam(lr = 学习率,decay = 学习率衰减率)# ”mse" 或者 tf.keras.losses.MeanSquaredError()model.compile(optimizer=keras.optimizers.Adam(), loss='mse',metrics=['accuracy'])# monitor:要监测的数量。# factor:学习速率降低的因素。new_lr = lr * factor# patience:没有提升的epoch数,之后学习率将降低。# verbose:int。0:安静,1:更新消息。# mode:{auto,min,max}之一。在min模式下,当监测量停止下降时,lr将减少;在max模式下,当监测数量停止增加时,它将减少;在auto模式下,从监测数量的名称自动推断方向。# min_delta:对于测量新的最优化的阀值,仅关注重大变化。# cooldown:在学习速率被降低之后,重新恢复正常操作之前等待的epoch数量。# min_lr:学习率的下限learning_rate= keras.callbacks.ReduceLROnPlateau(monitor='val_loss', patience=3, factor=0.7, min_lr=0.00000001)#显示模型结构model.summary()# 训练模型history = model.fit(x_train, y_train, batch_size = 128, epochs=100, validation_data=(x_valid, y_valid), callbacks=[learning_rate])# loss变化趋势可视化plt.title('LSTM loss figure')plt.plot(history.history['loss'],label='training loss')plt.plot(history.history['val_loss'], label='val loss')plt.legend(loc='upper right')plt.show()#### 预测结果分析&可视化 ##### 输入测试数据,输出预测结果y_pred = model.predict(x_test)# 输入数据和标签,输出损失和精确度model.evaluate(x_test)scaler.fit_transform(pd.DataFrame(valid['Value'].values))# 反归一化y_pred = scaler.inverse_transform(y_pred.reshape(-1,1)[:,0].reshape(1,-1)) #只取第一列y_test = scaler.inverse_transform(y_test.reshape(-1,1)[:,0].reshape(1,-1))# 预测效果可视化plt.title('Predicted and real')plt.figure(figsize=(16, 8))dict = { 'Predictions': y_pred[0], 'Value': y_test[0]}data_pd = pd.DataFrame(dict)plt.plot(data_pd[['Value']],linewidth=3,alpha=0.8)plt.plot(data_pd[['Predictions']],linewidth=1.2)#plt.savefig('lstm.png', dpi=600)plt.show()预测后几天的数据和后一天原理是一样的,因为预测的是5天的数据所以不能使用图像显示出来,只能取出预测五天的头一天的数据进行绘图。数据结构可以打印出来的,我没有反归一化,需要的时候再弄把

前五十天预测五天的代码:

# 调用库 import numpy as npimport pandas as pdimport matplotlib.pyplot as pltfrom tensorflow import kerasfrom tensorflow.keras import layersfrom sklearn.preprocessing import MinMaxScaler# 读入数据data = pd.read_excel('BTCtest.xlsx')time_step = 50 # 输入序列长度# 划分训练集与验证集data = data[['Value']]train = data[0:1277] #70%valid = data[1278:1550] #15%test = data[1551:] #15%# 归一化scaler = MinMaxScaler(feature_range=(0, 1))# 定义一个切片函数# datas 切片数据 time_step要输入的维度 pred 预测维度def scalerClass(datas,scaler,time_step,pred): x, y = [], [] scaled_data = scaler.fit_transform(datas) for i in range(time_step, len(datas) - pred): x.append(scaled_data[i - time_step:i]) y.append(scaled_data[i: i + pred]) # 把x_train转变为array数组 x, y = np.array(x), np.array(y).reshape(-1, 5) # reshape(-1,5)的意思时不知道分成多少行,但是是五列 return x,y# 训练集 验证集 测试集 切片x_train,y_train = scalerClass(train,scaler,time_step=time_step,pred=5)x_valid, y_valid = scalerClass(valid,scaler,time_step=time_step,pred=5)x_test, y_test = scalerClass(test,scaler,time_step=time_step,pred=5)# 建立网络模型model = keras.Sequential()model.add(layers.LSTM(64, return_sequences=True, input_shape=(x_train.shape[1:])))model.add(layers.LSTM(64, return_sequences=True))model.add(layers.LSTM(32) 上海党性培训 www.utibetganxun.com )model.add(layers.Dropout(0.1))model.add(layers.Dense(5))model.compile(optimizer=keras.optimizers.Adam(), loss='mse',metrics=['accuracy'])learning_rate_reduction = keras.callbacks.ReduceLROnPlateau(monitor='val_loss', patience=3, factor=0.7, min_lr=0.000000005)model.summary()history = model.fit(x_train, y_train, batch_size = 128, epochs=30, validation_data=(x_valid, y_valid), callbacks=[learning_rate_reduction])# loss变化趋势可视化plt.title('LSTM loss figure')plt.plot(history.history['loss'],label='training loss')plt.plot(history.history['val_loss'], label='val loss')plt.legend(loc='upper right')plt.show()#### 预测结果分析&可视化 ####y_pred = model.predict(x_test)model.evaluate(x_test)scaler.fit_transform(pd.DataFrame(valid['Value'].values))print(y_pred)print(y_test)# 预测效果可视化# 反归一化y_pred = scaler.inverse_transform(y_pred.reshape(-1,5)[:,0].reshape(1,-1)) #只取第一列y_test = scaler.inverse_transform(y_test.reshape(-1,5)[:,0].reshape(1,-1))plt.figure(figsize=(16, 8))plt.title('Predicted and real')dict_data = { 'Predictions': y_pred.reshape(1,-1)[0], 'Value': y_test[0]}data_pd = pd.DataFrame(dict_data)plt.plot(data_pd[['Value']],linewidth=3,alpha=0.8)plt.plot(data_pd[['Predictions']],linewidth=1.2)plt.savefig('lstm.png', dpi=600)plt.show()

408

408

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?