Solution Overview

This article uses ESXi to simulate four storage nodes. The Ceph version is ceph version 14.2.10 nautilus (stable). The operating system is BCLinux, and its release information is BigCloud Enterprise Linux release 8.2.2107 (Core).

Bcache Overview

bcache is a Linux kernel block-layer cache. It lets one or more fast disks (for example SSDs) act as a cache for one or more slower disks. bcache supports three cache policies:

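- writeback: write-back policy. All data is first written to the cache device, and the system later writes it back to the backing data disk.

- writethrough: write-through policy (the default). Data is written to the cache device and the backing data disk at the same time.

- writearound: writes bypass the cache and go directly to the backing disk.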
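As a minimal sketch, assuming the bcache device is `/dev/bcache0`, the cache mode can be inspected and changed through the kernel's sysfs file `cache_mode`. Reading that file lists every mode (including `none`, which the list above does not cover) with the active one in brackets. The helper names `active_cache_mode` and `set_cache_mode` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: read and change the bcache cache mode through sysfs.
# Assumption: the bcache device is bcache0; adjust the path for other devices.
set -euo pipefail

CACHE_MODE_FILE="/sys/block/bcache0/bcache/cache_mode"

# The sysfs file lists all modes and brackets the active one, e.g.
# "writethrough [writeback] writearound none"; print only the active mode.
active_cache_mode() {
  sed -n 's/.*\[\([a-z]*\)].*/\1/p'
}

# Switch the cache mode (needs root and an existing bcache device).
set_cache_mode() {
  local mode="$1"
  case "$mode" in
    writethrough|writeback|writearound) ;;
    *) echo "unsupported mode: $mode" >&2; return 1 ;;
  esac
  echo "$mode" > "$CACHE_MODE_FILE"
}

# Example usage on a real node:
#   active_cache_mode < "$CACHE_MODE_FILE"
#   set_cache_mode writeback
```

Only the three policies described above are accepted by `set_cache_mode`; anything else is rejected before the sysfs file is touched.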

Cluster Environment Planning

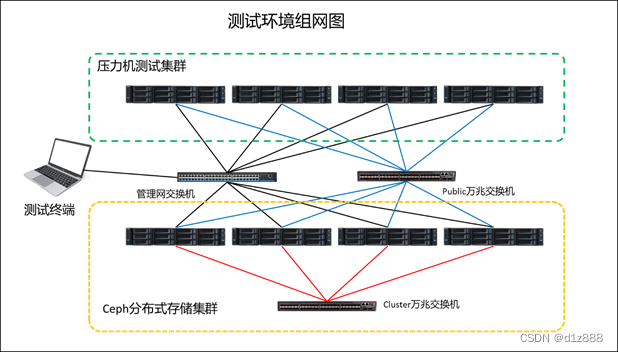

Physical Network Topology

Virtual machines are used here for the simulation: four VMs, each with 20 HDDs and four 1.8 TB NVMe devices. The physical network layout is as follows:

IP Address Plan

The IP addresses are planned as follows:

| Node | Gigabit network | public network | cluster network |
| --- | --- | --- | --- |
| node01 | 192.168.13.1 | 188.188.13.1 | 10.10.13.1 |
| node02 | 192.168.13.2 | 188.188.13.2 | 10.10.13.2 |
| node03 | 192.168.13.3 | 188.188.13.3 | 10.10.13.3 |
| node04 | 192.168.13.4 | 188.188.13.4 | 10.10.13.4 |
| client01 | 192.168.13.101 | 188.188.13.101 | |
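The public and cluster networks in this plan correspond to the `public_network` and `cluster_network` options in `ceph.conf`. The snippet below only prints a `[global]` fragment. The /24 netmasks are an assumption inferred from the addresses in the table, and `ceph_network_conf` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: emit the [global] network settings for ceph.conf from the IP plan.
# Assumption: both the public and cluster networks use a /24 netmask.
set -euo pipefail

PUBLIC_NET="188.188.13.0/24"   # public network: client and MON traffic
CLUSTER_NET="10.10.13.0/24"    # cluster network: OSD replication, recovery, heartbeat

ceph_network_conf() {
  cat <<EOF
[global]
public_network = ${PUBLIC_NET}
cluster_network = ${CLUSTER_NET}
EOF
}

ceph_network_conf
```

Keeping replication traffic on the separate cluster network leaves the public network free for client I/O.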

Disk Partitioning

For the Ceph deployment, each NVMe device is split into 15 partitions, and each group of 3 partitions serves one HDD.
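Splitting each NVMe device into 15 equal partitions can be sketched with `sgdisk`. This version only prints the commands so they can be reviewed first. The device name, the helper name `plan_nvme_partitions`, and the size value are assumptions. Note that 1.8 TB (decimal) is roughly 1676 GiB and sgdisk's `G` suffix means GiB, so 15 partitions of about 111 GiB each fit, while 120 GiB each would not.

```shell
#!/usr/bin/env bash
# Sketch: print (not run) sgdisk commands that split one NVMe device into
# 15 equal partitions. Assumptions: sgdisk (gdisk package) is installed and
# the caller supplies the device name and its usable size in GiB.
set -euo pipefail

PARTS_PER_NVME=15

plan_nvme_partitions() {
  local dev="$1" size_gib="$2"
  local part_gib=$(( size_gib / PARTS_PER_NVME ))   # round down so all 15 fit
  local i
  echo "sgdisk --zap-all ${dev}"                     # wipe old partition tables
  for (( i = 1; i <= PARTS_PER_NVME; i++ )); do
    # start 0 = let sgdisk pick its default start sector
    echo "sgdisk -n ${i}:0:+${part_gib}G ${dev}"
  done
}

# Example: a 1.8 TB (about 1676 GiB) device
plan_nvme_partitions /dev/nvme0n1 1676
```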

Each OSD service is built from one DB partition, one WAL partition, one bcache cache partition and one HDD.
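Putting the four pieces together, one possible per-OSD sequence is to create the bcache device with `make-bcache` (from bcache-tools) and then build a BlueStore OSD on it with `ceph-volume lvm create`, placing DB and WAL on the NVMe partitions. The sketch below only prints the commands. It assumes, hypothetically, HDDs `/dev/sdb` to `/dev/sdu`, NVMe devices `nvme0n1` to `nvme3n1`, the order DB, WAL, cache within each group of three partitions, and that `bcacheN` numbers follow creation order. Verify the real bcache device names on the node, and check that your ceph-volume version accepts a bcache device as `--data`, before running anything.

```shell
#!/usr/bin/env bash
# Sketch: print the commands that would build one OSD per HDD from a
# DB partition, a WAL partition and a bcache cache partition.
# Hypothetical assumptions: HDDs are /dev/sdb../dev/sdu, NVMe devices are
# nvme0n1..nvme3n1, in each group of 3 partitions p1=DB, p2=WAL,
# p3=bcache cache, and bcacheN numbering follows creation order.
set -euo pipefail

HDDS=( {b..u} )          # 20 HDD letters -> /dev/sdb .. /dev/sdu
OSDS_PER_NVME=5          # 15 partitions / 3 per OSD

plan_osd() {
  local idx="$1"
  local nvme=$(( idx / OSDS_PER_NVME ))
  local base=$(( (idx % OSDS_PER_NVME) * 3 ))
  local disk="/dev/sd${HDDS[idx]}"
  local part="/dev/nvme${nvme}n1p"
  # Create backing and cache devices in one step so they are attached.
  echo "make-bcache -B ${disk} -C ${part}$(( base + 3 ))"
  # BlueStore OSD on the bcache device, DB and WAL on NVMe partitions.
  echo "ceph-volume lvm create --bluestore --data /dev/bcache${idx} --block.db ${part}$(( base + 1 )) --block.wal ${part}$(( base + 2 ))"
}

for (( i = 0; i < ${#HDDS[@]}; i++ )); do
  plan_osd "$i"
done
```

Each HDD gets its own small cache set here, which matches the one-cache-partition-per-HDD layout described above.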

On physical servers, put all mechanical disks in JBOD mode. Here, in the virtual environment, each VM has four 1.8 TB NVMe devices and twenty 8 TB HDDs.

Storage Node Setup

Basic Environment Configuration

Configure Hostnames

Run this on node01-node04; node01 is used as the example:

```
[root@localhost ~]# hostnamectl set-hostname node01
```

Configure the hosts File

Configure the /etc/hosts file on node01-node04:

```
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.13.1 node01
192.168.13.2 node02
192.168.13.3 node03
192.168.13.4 node04
```
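To apply the same node entries on node01-node04 without duplicating lines when the step is repeated, a small idempotent helper can be used. This is a sketch: `add_host_entries` is a hypothetical name, and it appends only the four node entries listed in the hosts file above.

```shell
#!/usr/bin/env bash
# Sketch: append the storage-node entries to a hosts file, skipping lines
# that are already present, so it can be re-run safely on node01-node04.
# Assumption: the target file defaults to /etc/hosts; pass another path to test.
set -euo pipefail

ENTRIES=(
  "192.168.13.1 node01"
  "192.168.13.2 node02"
  "192.168.13.3 node03"
  "192.168.13.4 node04"
)

add_host_entries() {
  local file="${1:-/etc/hosts}" entry
  for entry in "${ENTRIES[@]}"; do
    # -x: whole-line match, -F: fixed string (dots are not regex wildcards)
    grep -qxF "$entry" "$file" || echo "$entry" >> "$file"
  done
}
```

Usage: run `add_host_entries` as root on each node (it defaults to `/etc/hosts`), or pass a scratch file path to try it out first.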

Configure the Firewall

Disable the firewall on all storage nodes. Run the following commands on every storage node, node01-node04.

[root@localhost ~]# systemctl
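BCLinux 8 belongs to the CentOS/RHEL 8 family, where the firewall service is normally `firewalld`. Assuming that, and assuming passwordless root SSH between the nodes (with names resolved through the hosts file configured above), the sketch below disables firewalld on all four storage nodes from one host. It defaults to a dry run (`DRY_RUN=1`) that only prints the commands; set `DRY_RUN=0` to execute them. `disable_firewall_all` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Sketch: stop and disable firewalld on every storage node from one host.
# Assumptions: firewalld is the firewall service and passwordless root SSH
# to node01-node04 is already set up. DRY_RUN=1 only prints the commands.
set -euo pipefail

NODES=(node01 node02 node03 node04)
DRY_RUN="${DRY_RUN:-1}"
FW_CMD='systemctl disable --now firewalld'   # stop now and on future boots

disable_firewall_all() {
  local node
  for node in "${NODES[@]}"; do
    if [[ "$DRY_RUN" == 1 ]]; then
      # Only show what would run on each node.
      echo "ssh root@${node} '${FW_CMD}'"
    else
      ssh "root@${node}" "$FW_CMD"
    fi
  done
}

disable_firewall_all
```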
