Environment: Windows 10 (the steps are essentially the same on Linux)

Download: https://mirrors.tuna.tsinghua.edu.cn/apache/kafka/ Note: download the binary release, not the src version. The source package cannot be started as-is.

Installation: the download is a .tgz archive, so there is nothing to install. Just extract it to a directory of your choice.

Startup:

1. Start the bundled ZooKeeper

```bat
bin\windows\zookeeper-server-start.bat config\zookeeper.properties
```

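Startup error: Kafka fails to start with "Could not find or load main class Files\Java\jdk1.8.0_144\lib\dt.jar;C:\Program".

The cause is the space in "C:\Program Files". The classpath is split at that space, so java treats `Files\...` as the main class name. The simplest fix is to install the JDK in a path that contains no spaces.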

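You can check in advance whether your environment is affected. The sketch below is a hypothetical helper, not part of Kafka. It looks for whitespace in `JAVA_HOME` and in each `CLASSPATH` entry, on the assumption that the JDK path reaches the Kafka scripts through those two environment variables on your machine.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical helper: reports JDK/classpath paths containing whitespace (assumption: JAVA_HOME and CLASSPATH are set). */
class JdkPathCheck {

    /** Returns true if the path contains any whitespace character. */
    static boolean hasWhitespace(String path) {
        return path != null && path.chars().anyMatch(Character::isWhitespace);
    }

    /** Returns every entry of a classpath-style string that contains whitespace. */
    static List<String> entriesWithWhitespace(String classpath, String separator) {
        List<String> bad = new ArrayList<>();
        if (classpath == null) {
            return bad;
        }
        // Pattern.quote makes ";" (Windows) or ":" (Linux) match literally
        for (String entry : classpath.split(Pattern.quote(separator))) {
            if (hasWhitespace(entry)) {
                bad.add(entry);
            }
        }
        return bad;
    }

    public static void main(String[] args) {
        String javaHome = System.getenv("JAVA_HOME");
        if (hasWhitespace(javaHome)) {
            System.out.println("JAVA_HOME contains a space: " + javaHome);
        }
        // File.pathSeparator is ";" on Windows and ":" on Linux
        for (String entry : entriesWithWhitespace(System.getenv("CLASSPATH"), File.pathSeparator)) {
            System.out.println("CLASSPATH entry contains a space: " + entry);
        }
    }
}
```

If either check prints something, move the JDK (or the offending classpath entry) to a path without spaces before starting ZooKeeper and Kafka.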
2. Start Kafka

```bat
bin\windows\kafka-server-start.bat config\server.properties
```

3. Create a topic named test

```bat
bin\windows\kafka-topics.bat --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test
```

Sample run:

```
D:\soft\kafka_2.11-1.1.0>bin\windows\kafka-topics.bat --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test
Created topic "test".
```

List the topics that have been created:

```bat
bin\windows\kafka-topics.bat --list --zookeeper localhost:2181
```

```
D:\soft\kafka_2.11-1.1.0>bin\windows\kafka-topics.bat --list --zookeeper localhost:2181
__consumer_offsets
test
```

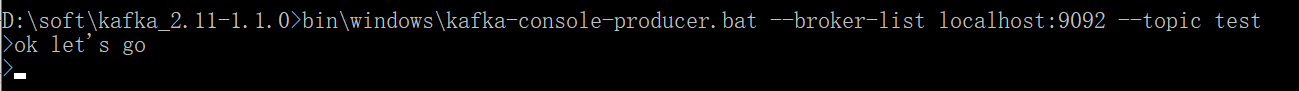

4. Start a console producer

```bat
bin\windows\kafka-console-producer.bat --broker-list localhost:9092 --topic test
```

Once it is running, type messages at the prompt:

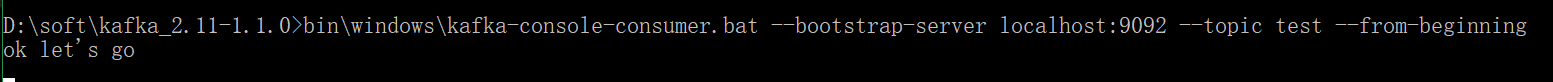

5. Start a console consumer

```bat
bin\windows\kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic test --from-beginning
```

Plain Java client demo

1. Add the Maven dependencies

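```xml
<!-- Kafka broker (server) artifact; the examples below only use kafka-clients -->
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka_2.12</artifactId>
    <version>1.0.0</version>
    <scope>provided</scope>
</dependency>
<!-- AdminClient, KafkaProducer and KafkaConsumer live here -->
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-clients</artifactId>
    <version>1.0.0</version>
</dependency>
<!-- Kafka Streams; not used by the examples below -->
<dependency>
    <groupId>org.apache.kafka</groupId>
    <artifactId>kafka-streams</artifactId>
    <version>1.0.0</version>
</dependency>
```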

2. Create the topic

```java
package com.my.admin.kafka;

import org.apache.kafka.clients.admin.AdminClient;
import org.apache.kafka.clients.admin.CreateTopicsResult;
import org.apache.kafka.clients.admin.NewTopic;

import java.util.ArrayList;
import java.util.List;
import java.util.Properties;
import java.util.concurrent.ExecutionException;

/**
 * @ClassName KfkTopic
 * @Description Plain Java implementation
 * @Author Administrator
 * @Date 2019/10/4 19:33
 * @Version 1.0
 */
public class KfkTopic {

    /**
     * Creates a Kafka topic with AdminClient.
     * @param args unused
     */
    public static void main(String[] args) {
        Properties props = new Properties();
        props.put("bootstrap.servers", "192.168.0.102:9092");

        // AdminClient is AutoCloseable, so try-with-resources releases its threads and sockets
        try (AdminClient adminClient = AdminClient.create(props)) {
            List<NewTopic> topics = new ArrayList<>();
            // topic name, number of partitions, replication factor
            topics.add(new NewTopic("topic-test", 1, (short) 1));

            CreateTopicsResult result = adminClient.createTopics(topics);
            // block until the broker has created the topic (or reported an error)
            result.all().get();
        } catch (InterruptedException | ExecutionException e) {
            // ExecutionException wraps broker-side errors, e.g. TopicExistsException on a second run
            e.printStackTrace();
        }
    }
}
```
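Creating a topic fails if the replication factor exceeds the number of brokers. That is why a single local broker needs `--replication-factor 1` or `(short) 1`. Kafka also limits topic names to ASCII letters, digits, `.`, `_` and `-`, with a maximum length of 249 characters. The class below is a hypothetical pre-flight check over the same three `NewTopic` arguments. It is not part of the Kafka API, and the broker count is an assumption you supply yourself.

```java
import java.util.Optional;
import java.util.regex.Pattern;

/** Hypothetical pre-flight check for new NewTopic(name, numPartitions, replicationFactor); not a Kafka API. */
class TopicSpecCheck {

    // Kafka topic names: ASCII letters, digits, '.', '_' and '-', at most 249 characters
    private static final Pattern LEGAL_NAME = Pattern.compile("[a-zA-Z0-9._-]+");
    private static final int MAX_NAME_LENGTH = 249;

    /** Returns a description of the first problem found, or empty if the spec looks valid. */
    static Optional<String> check(String name, int numPartitions, short replicationFactor, int brokerCount) {
        if (name == null || name.isEmpty()) {
            return Optional.of("topic name must not be empty");
        }
        if (name.equals(".") || name.equals("..")) {
            return Optional.of("topic name cannot be '.' or '..'");
        }
        if (name.length() > MAX_NAME_LENGTH || !LEGAL_NAME.matcher(name).matches()) {
            return Optional.of("illegal topic name: " + name);
        }
        if (numPartitions < 1) {
            return Optional.of("number of partitions must be at least 1");
        }
        if (replicationFactor < 1) {
            return Optional.of("replication factor must be at least 1");
        }
        // every replica of a partition must be stored on a different broker
        if (replicationFactor > brokerCount) {
            return Optional.of("replication factor " + replicationFactor
                    + " is larger than the number of available brokers (" + brokerCount + ")");
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        // the single local broker used in this walkthrough
        System.out.println(check("topic-test", 1, (short) 1, 1).orElse("ok"));
        System.out.println(check("topic-test", 1, (short) 3, 1).orElse("ok"));
    }
}
```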

3. Producer

```java
package com.my.admin.kafka;

import org.apache.kafka.clients.producer.KafkaProducer;
import org.apache.kafka.clients.producer.Producer;
import org.apache.kafka.clients.producer.ProducerRecord;

import java.util.Properties;

/**
 * @ClassName KfkProducer
 * @Description Plain Java implementation
 * @Author Administrator
 * @Date 2019/10/4 19:40
 * @Version 1.0
 */
public class KfkProducer {

    public static void main(String[] args) {
        Properties props = new Properties();
        props.put("bootstrap.servers", "192.168.0.102:9092");
        props.put("acks", "all");             // wait for all in-sync replicas to acknowledge
        props.put("retries", 0);              // do not retry failed sends
        props.put("batch.size", 16384);       // max batch size per partition, in bytes
        props.put("linger.ms", 1);            // wait up to 1 ms to fill a batch
        props.put("buffer.memory", 33554432); // 32 MB buffer for unsent records
        props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

        // try-with-resources calls close(), which flushes buffered records before the program exits
        try (Producer<String, String> producer = new KafkaProducer<>(props)) {
            for (int i = 0; i < 100; i++) {
                // topic, key, value
                producer.send(new ProducerRecord<>("topic-test", "I am key:" + i, Integer.toString(i)));
            }
        }
    }
}
```
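The producer above passes some settings as `Integer` values. `KafkaProducer` accepts these, but `java.util.Properties.getProperty` returns `null` for any value that is not a `String`, which is confusing if you log or inspect the configuration. The sketch below builds the same settings with `setProperty`, so every value is a `String`. The class and method names are hypothetical, and the comments restate what each setting means.

```java
import java.util.Properties;

/** Hypothetical helper: the KfkProducer settings, stored as Strings so getProperty() can read them back. */
class ProducerSettings {

    static Properties build(String bootstrapServers) {
        Properties props = new Properties();
        props.setProperty("bootstrap.servers", bootstrapServers);
        // a send succeeds only after all in-sync replicas have the record
        props.setProperty("acks", "all");
        // no automatic resend; failures are reported to the caller
        props.setProperty("retries", "0");
        // upper bound, in bytes, for one batch of records to a single partition
        props.setProperty("batch.size", "16384");
        // wait up to 1 ms for more records before sending a batch
        props.setProperty("linger.ms", "1");
        // 32 MiB of memory for records not yet sent to the broker
        props.setProperty("buffer.memory", "33554432");
        props.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        props.setProperty("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }

    public static void main(String[] args) {
        Properties withInteger = new Properties();
        withInteger.put("retries", 0);
        // getProperty ignores non-String values, so this prints null
        System.out.println(withInteger.getProperty("retries"));
        System.out.println(build("192.168.0.102:9092").getProperty("retries"));
    }
}
```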

4. Consumer

```java
package com.my.admin.kafka;

import org.apache.kafka.clients.consumer.ConsumerRebalanceListener;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.clients.consumer.ConsumerRecords;
import org.apache.kafka.clients.consumer.KafkaConsumer;
import org.apache.kafka.common.TopicPartition;

import java.util.Arrays;
import java.util.Collection;
import java.util.Properties;

/**
 * @ClassName KfkConsumer
 * @Description Plain Java implementation
 * @Author Administrator
 * @Date 2019/10/4 19:42
 * @Version 1.0
 */
public class KfkConsumer {

    public static void main(String[] args) {
        Properties props = new Properties();
        props.put("bootstrap.servers", "192.168.0.102:9092");
        props.put("group.id", "test");
        props.put("enable.auto.commit", "true");      // commit offsets automatically...
        props.put("auto.commit.interval.ms", "1000"); // ...once per second
        props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

        final KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
        consumer.subscribe(Arrays.asList("topic-test"), new ConsumerRebalanceListener() {
            @Override
            public void onPartitionsRevoked(Collection<TopicPartition> partitions) {
                // nothing to do before partitions are taken away
            }

            @Override
            public void onPartitionsAssigned(Collection<TopicPartition> partitions) {
                // move the offset back to the beginning so all existing records are read
                consumer.seekToBeginning(partitions);
            }
        });

        // poll forever; stop the program with Ctrl+C
        while (true) {
            // poll(long timeoutMs) is the kafka-clients 1.0.0 API; 2.0+ adds poll(Duration)
            ConsumerRecords<String, String> records = consumer.poll(100);
            for (ConsumerRecord<String, String> record : records) {
                System.out.printf("offset = %d, key = %s, value = %s%n", record.offset(), record.key(), record.value());
            }
        }
    }
}
```

Run order: create the topic first, then start the consumer, then run the producer, and watch the consumer's output.

References:

https://blog.csdn.net/woshixiazaizhe/article/details/80610432

https://www.cnblogs.com/hei12138/p/7805475.html

https://www.cnblogs.com/xuwujing/p/8371127.html

Spring Boot + Kafka demo

Add the Maven dependency

Spring Boot version: 2.1.8.RELEASE

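spring-kafka: use 2.2.5.RELEASE, because a mismatched version causes errors. Remove the dependencies from the plain Java demo above, otherwise the kafka-clients versions will conflict.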

```xml
<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
    <version>2.2.5.RELEASE</version>
</dependency>
```

Producer:

Create a Spring Boot project.

application.yml

```yaml
server:
  port: 8082
spring:
  application:
    name: kafka-producer
  kafka:
    producer:
      bootstrap-servers: 192.168.0.102:9092
```

The Java code (a single class):

```java
package com.yzht.kafka;

import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.support.SendResult;
import org.springframework.scheduling.annotation.EnableScheduling;
import org.springframework.scheduling.annotation.Scheduled;
import org.springframework.stereotype.Component;
import org.springframework.util.concurrent.ListenableFuture;

import java.util.UUID;

/**
 * Producer.
 * The {@code @EnableScheduling} annotation turns on scheduled tasks.
 */
@Component
@EnableScheduling
public class KafkaProducer {

    @Autowired
    private KafkaTemplate<String, String> kafkaTemplate;

    /**
     * Scheduled task: sends a random UUID to the app_log topic every second.
     */
    @Scheduled(cron = "0/1 * * * * ?")
    public void send() {
        String message = UUID.randomUUID().toString();
        ListenableFuture<SendResult<String, String>> future = kafkaTemplate.send("app_log", message);
        // the callbacks run asynchronously once the broker responds
        future.addCallback(
                result -> System.out.println("send - message sent: " + message),
                throwable -> System.out.println("message send failed: " + message));
    }
}
```
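Spring's `@Scheduled(cron = ...)` expects six fields, starting with seconds, whereas a Unix crontab line has five fields starting with minutes. `"0/1 * * * * ?"` therefore fires every second, and `?` means "no specific value" in the day-of-month or day-of-week field. The class below is a hypothetical helper, not a Spring API. It only labels the six fields, which makes it easier to read an expression or to spot one that has the wrong number of fields.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: labels the six fields of a Spring cron expression (no scheduling logic). */
class CronFields {

    private static final String[] NAMES = {
            "second", "minute", "hour", "day-of-month", "month", "day-of-week"};

    /** Maps each field name to its value; rejects expressions that do not have exactly six fields. */
    static Map<String, String> describe(String expression) {
        String[] parts = expression.trim().split("\\s+");
        if (parts.length != NAMES.length) {
            throw new IllegalArgumentException(
                    "expected 6 fields but got " + parts.length + ": " + expression);
        }
        // LinkedHashMap keeps the fields in cron order
        Map<String, String> fields = new LinkedHashMap<>();
        for (int i = 0; i < NAMES.length; i++) {
            fields.put(NAMES[i], parts[i]);
        }
        return fields;
    }

    public static void main(String[] args) {
        System.out.println(describe("0/1 * * * * ?"));
    }
}
```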

Start the project and check the log.

Consumer:

Create a second project.

application.yml

```yaml
server:
  port: 8081
spring:
  application:
    name: kafka-consumer
  kafka:
    consumer:
      enable-auto-commit: true
      group-id: applog
      auto-offset-reset: latest
      bootstrap-servers: 192.168.0.102:9092
```

The Java code (a single class):

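```java
package com.my.admin.kafka;

import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Component;

/**
 * Consumer.
 * The {@code @KafkaListener} annotation can specify the topics, partitions and consumer group.
 */
@Component
public class KafkaConsumer {

    // the consumer group comes from spring.kafka.consumer.group-id in application.yml
    @KafkaListener(topics = {"app_log"})
    public void receive(String message) {
        System.out.println("app_log - consumed message: " + message);
    }
}
```

Start it and check the log.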
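With `auto-offset-reset: latest`, a consumer group that has no committed offsets yet (such as `applog` on its first start) begins at the end of the log. Messages sent before it started are therefore not delivered. Once offsets have been committed (here by `enable-auto-commit: true`), a restart resumes from the committed position. The plain Java consumer leaves `auto.offset.reset` at its default of `latest` and forces a full re-read with `seekToBeginning` instead. The console consumer's `--from-beginning` corresponds to `earliest`. The sketch below is a hypothetical model of these documented rules, not Kafka's actual code, and its names are made up for illustration.

```java
import java.util.OptionalLong;

/** Hypothetical model of how a consumer chooses its starting offset for one partition. */
class OffsetResetModel {

    /** Mirrors the auto.offset.reset values earliest / latest / none. */
    enum Reset { EARLIEST, LATEST, NONE }

    static long startingOffset(OptionalLong committed, long logStartOffset, long logEndOffset, Reset policy) {
        if (committed.isPresent()) {
            long c = committed.getAsLong();
            // a valid committed offset for the group always wins
            if (c >= logStartOffset && c <= logEndOffset) {
                return c;
            }
            // an out-of-range committed offset falls through to the reset policy
        }
        switch (policy) {
            case EARLIEST:
                return logStartOffset; // read everything still in the log
            case LATEST:
                return logEndOffset;   // only records produced from now on
            default:
                throw new IllegalStateException("no valid committed offset and auto.offset.reset=none");
        }
    }

    public static void main(String[] args) {
        // a brand-new group on a partition holding offsets 0..99
        System.out.println(startingOffset(OptionalLong.empty(), 0, 100, Reset.LATEST));
        System.out.println(startingOffset(OptionalLong.empty(), 0, 100, Reset.EARLIEST));
    }
}
```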
