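Problem description:

The setup.py that ships with the original Fast R-CNN (rotate) code targets Linux, where it compiles as-is.

On Windows, setup.py has to be modified before it will build.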

Solution:

The approach comes first; the full code is attached at the end.

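1) Modify setup.py

- Extensions that do not need CUDA: search setup.py; any entry in ext_modules whose sources contain no .cu file does not need CUDA. Put these in a modified copy of the original setup.py named setup_new.py.

- Extensions that need CUDA: any entry whose sources include a .cu file, as in the excerpt below. Put these in a modified copy named setup_cuda.py.

ext_modules = [
    Extension('rotate_polygon_nms',
        sources=['rotate_polygon_nms_kernel.cu', 'rotate_polygon_nms.pyx']
        ……
]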
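The split described above can be sketched in a few lines. This is only an illustration of the rule "a .cu source means the extension goes into setup_cuda.py"; the helper names uses_cuda and split_extensions are hypothetical and do not appear in the original scripts.

```python
def uses_cuda(sources):
    """Return True if any source file of an extension is a CUDA (.cu) file."""
    return any(src.lower().endswith('.cu') for src in sources)


def split_extensions(extensions):
    """Split a {name: sources} mapping into (cpu_only, cuda) lists of names."""
    cpu_only, cuda = [], []
    for name, sources in extensions.items():
        (cuda if uses_cuda(sources) else cpu_only).append(name)
    return cpu_only, cuda


# Extension names and sources taken from the listings in this post.
example = {
    'cython_bbox': ['bbox.pyx'],
    'cython_nms': ['nms.pyx'],
    'rotate_polygon_nms': ['rotate_polygon_nms_kernel.cu', 'rotate_polygon_nms.pyx'],
}
cpu_only, cuda = split_extensions(example)
print('setup_new.py :', cpu_only)
print('setup_cuda.py:', cuda)
```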

2) Build the modified setup scripts

Change CMD to the directory that contains the modified setup scripts and run the following commands:

python setup_new.py install

python setup_cuda.py install

If the build reports "Microsoft Visual C++ 9.0 is required ...", enter the command that matches your installed Visual Studio version in CMD:

SET VS90COMNTOOLS=%VS110COMNTOOLS% (if VS2012 is installed)

SET VS90COMNTOOLS=%VS120COMNTOOLS% (if VS2013 is installed)
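The same aliasing can be sketched in Python, for example at the top of a setup script before setup() is called. This is a hedged sketch, not part of the original scripts: the function name alias_vs90_tools is hypothetical, preferring the newer toolset is an assumption, and a change made this way only affects the current Python process, not the CMD session.

```python
import os

# Variable names taken from the SET commands above; the order is an assumption.
CANDIDATES = ('VS120COMNTOOLS', 'VS110COMNTOOLS')


def alias_vs90_tools(environ, candidates=CANDIDATES):
    """Point VS90COMNTOOLS at the first toolset variable that is set.

    Returns the name of the variable that was used, or None if none was found.
    """
    for name in candidates:
        value = environ.get(name)
        if value:
            environ['VS90COMNTOOLS'] = value
            return name
    return None
```

Calling alias_vs90_tools(os.environ) mirrors the SET command for whichever of the two Visual Studio versions is installed.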

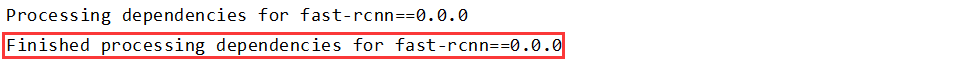

When "Finished processing ..." appears at the end, the build has succeeded.

If errors occur during the build, the following may help:

-

错误提示

'cl.exe' failed with exit status 2

【参考】:pip 安装模块时 cl.exe failed 可能的解决办法

如果都解决不了,可以尝试卸载VS -

错误提示

rbbox_overlaps_kernel.cu(156): error: expected a ";"

找到文件的对应位置,把 and 换成 &&

-

错误提示

error: don't know how to set runtime library search path for MSVC

删掉下面框起来的

-

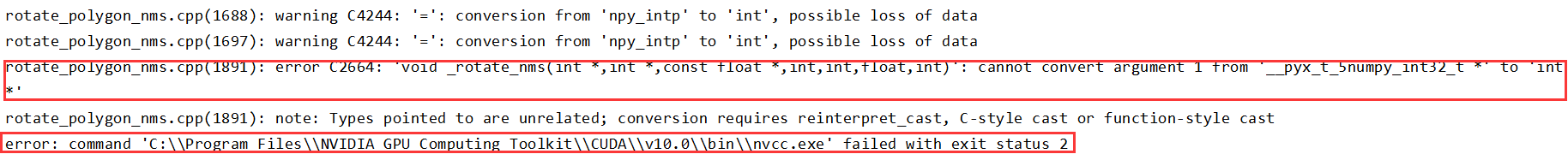

错误提示

error C2664: 'void _rotate_nms(int *,int *,const float *,int,int,float,int)': cannot convert argument 1 from '__pyx_t_5numpy_int32_t *' to 'int *'

错误提示nvcc fatal : No input files specified; use option --help for more information

【参考】:windows下编译tensorflow Faster RCNN的lib/Makefile(非常非常有帮助,感谢博主!)
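For the expected a ";" error in the list above, the manual edit replaces the C++ alternative token "and" with "&&". A minimal sketch of that substitution for a single source line follows; the function name replace_and_tokens is hypothetical, and the sketch only skips // comments, so string literals and /* */ comments would still need a manual check.

```python
import re

# Match "and" only as a whole token, so identifiers such as "operand" are untouched.
_AND_TOKEN = re.compile(r'\band\b')


def replace_and_tokens(line):
    """Replace the alternative token 'and' with '&&' outside of // comments."""
    code, sep, comment = line.partition('//')
    return _AND_TOKEN.sub('&&', code) + sep + comment


print(replace_and_tokens('if (a > 0 and b > 0) {  // this and that'))
```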

3) Makefile

Finally, with CMD still in the directory that contains the setup scripts, run the following commands:

python setup_new.py build_ext --inplace

python setup_cuda.py build_ext --inplace

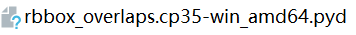

会生成类似下面这样的文件:
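One way to check what the in-place build produced is to list the compiled extension modules, which carry the .pyd suffix on Windows. This is a small sketch under that assumption; the function name built_extensions is hypothetical.

```python
import glob
import os


def built_extensions(directory='.'):
    """Return the sorted file names of compiled .pyd modules in a directory."""
    pattern = os.path.join(directory, '*.pyd')
    return sorted(os.path.basename(path) for path in glob.glob(pattern))
```

Running print(built_extensions()) in the directory of the setup scripts lists the modules that were built in place.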

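4) Delete cached files

As the Makefile indicates, once all builds have finished you can delete the build directory and other files generated during compilation.

5) Closing remarks

That completes compiling the setup.py (rotate) required by the tensorflow version of Fast R-CNN on Windows 10.

Now you can move on to debugging the code.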
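The cleanup step can be sketched as follows. Only the build directory is named in the text above; the function name clean_build and its parameters are hypothetical, and the function is deliberately not called here because it deletes files.

```python
import os
import shutil


def clean_build(root='.', names=('build',)):
    """Delete the given build directories under root; return the names removed."""
    removed = []
    for name in names:
        path = os.path.join(root, name)
        if os.path.isdir(path):
            shutil.rmtree(path)  # removes the directory and everything in it
            removed.append(name)
    return removed
```

Calling clean_build() from the directory of the setup scripts removes build if it exists and leaves the compiled modules produced by --inplace untouched.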

Appendix: code

setup_new.py

# --------------------------------------------------------
# Windows
# Fast R-CNN
# Copyright (c) 2015 Microsoft
# Licensed under The MIT License [see LICENSE for details]
# Written by Ross Girshick
# --------------------------------------------------------

import os
from os.path import join as pjoin
import numpy as np
from setuptools import setup
from distutils.extension import Extension
from Cython.Distutils import build_ext

# change for windows, by MrX
nvcc_bin = 'nvcc.exe'
lib_dir = 'lib/x64'

def find_in_path(name, path):
    "Find a file in a search path"
    # Adapted from http://code.activestate.com/recipes/52224-find-a-file-given-a-search-path/
    for dir in path.split(os.pathsep):
        binpath = pjoin(dir, name)
        if os.path.exists(binpath):
            return os.path.abspath(binpath)
    return None


def locate_cuda():
    """Locate the CUDA environment on the system

    Returns a dict with keys 'home', 'nvcc', 'include', and 'lib64'
    and values giving the absolute path to each directory.

    Starts by looking for the CUDA_PATH env variable. If not found, everything
    is based on finding 'nvcc' in the PATH.
    """
    # first check if the CUDA_PATH env variable is in use
    if 'CUDA_PATH' in os.environ:
        home = os.environ['CUDA_PATH']
        print("home = %s\n" % home)
        nvcc = pjoin(home, 'bin', nvcc_bin)
    else:
        # otherwise, search the PATH for NVCC
        default_path = pjoin(os.sep, 'usr', 'local', 'cuda', 'bin')
        nvcc = find_in_path(nvcc_bin, os.environ['PATH'] + os.pathsep + default_path)
        if nvcc is None:
            raise EnvironmentError('The nvcc binary could not be '
                'located in your $PATH. Either add it to your path, or set $CUDA_PATH')
        home = os.path.dirname(os.path.dirname(nvcc))
        print("home = %s, nvcc = %s\n" % (home, nvcc))

    cudaconfig = {'home': home, 'nvcc': nvcc,
                  'include': pjoin(home, 'include'),
                  'lib64': pjoin(home, lib_dir)}
    for k, v in cudaconfig.items():
        if not os.path.exists(v):
            raise EnvironmentError('The CUDA %s path could not be located in %s' % (k, v))

    return cudaconfig


CUDA = locate_cuda()

# Obtain the numpy include directory. This logic works across numpy versions.
try:
    numpy_include = np.get_include()
except AttributeError:
    numpy_include = np.get_numpy_include()

def customize_compiler_for_nvcc(self):
    """inject deep into distutils to customize how the dispatch
    to cl/nvcc works.

    If you subclass UnixCCompiler, it's not trivial to get your subclass
    injected in, and still have the right customizations (i.e.
    distutils.sysconfig.customize_compiler) run on it. So instead of going
    the OO route, I have this. Note, it's kind of like a weird functional
    subclassing going on."""

    # tell the compiler it can process .cu
    # self.src_extensions.append('.cu')

    # save references to the default compiler_so and _compile methods
    # default_compiler_so = self.spawn
    # default_compiler_so = self.rc
    super = self.compile

    # now redefine the compile method. This gets executed for each
    # object but distutils doesn't have the ability to change compilers
    # based on source extension: we add it.
    def compile(sources, output_dir=None, macros=None, include_dirs=None, debug=0, extra_preargs=None,
                extra_postargs=None, depends=None):
        postfix = os.path.splitext(sources[0])[1]

        if postfix == '.cu':
            # use the cuda for .cu files
            # self.set_executable('compiler_so', CUDA['nvcc'])
            # use only a subset of the extra_postargs, which are 1-1 translated
            # from the extra_compile_args in the Extension class
            postargs = extra_postargs['nvcc']
        else:
            postargs = extra_postargs['cl']

        return super(sources, output_dir, macros, include_dirs, debug, extra_preargs, postargs, depends)
        # reset the default compiler_so, which we might have changed for cuda
        # self.rc = default_compiler_so

    # inject our redefined compile method into the class
    self.compile = compile


# run the customize_compiler
class custom_build_ext(build_ext):
    def build_extensions(self):
        customize_compiler_for_nvcc(self.compiler)
        build_ext.build_extensions(self)

ext_modules = [
    # unix _compile: obj, src, ext, cc_args, extra_postargs, pp_opts
    Extension(
        "cython_bbox",
        ["bbox.pyx"],
        extra_compile_args={'cl': []},
        # extra_compile_args={'gcc': ["-Wno-cpp", "-Wno-unused-function"]},
        include_dirs = [numpy_include]
    ),
    Extension(
        "cython_nms",
        ["nms.pyx"],
        extra_compile_args={'cl': []},
        # extra_compile_args={'gcc': ["-Wno-cpp", "-Wno-unused-function"]},
        include_dirs = [numpy_include]
    )
]

setup(
    name='tf_faster_rcnn',
    ext_modules=ext_modules,
    # inject our custom trigger
    cmdclass={'build_ext': custom_build_ext},
)

setup_cuda.py

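[Note]: the PATH assignment on line 5 of the file must be adapted to your own machine:

# If the VS bin path has not been added to the environment variables
PATH = "C:/Program Files (x86)/Microsoft Visual Studio 14.0/VC/bin"

# If the environment variables already contain the VS bin path
PATH = os.environ.get('PATH')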
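Choosing between the two PATH settings above could also be automated. The following is a hedged sketch, not part of the original script: the function name compiler_search_path is hypothetical, and it simply keeps the environment PATH when cl.exe can be found on it and otherwise falls back to a hard-coded Visual Studio directory.

```python
import os
import shutil


def compiler_search_path(fallback, environ=None):
    """Return the PATH from environ if cl.exe is found on it, else the fallback."""
    environ = os.environ if environ is None else environ
    path = environ.get('PATH', '')
    if path and shutil.which('cl.exe', path=path):
        return path
    return fallback


# The fallback directory is the one used in the listing; adjust it to your install.
PATH = compiler_search_path("C:/Program Files (x86)/Microsoft Visual Studio 14.0/VC/bin")
```

The setup_cuda.py listing itself follows.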

import numpy as np
import os

# on Windows, we need the original PATH without Anaconda's compiler in it:
PATH = "C:/Program Files (x86)/Microsoft Visual Studio 14.0/VC/bin"

from distutils.spawn import spawn, find_executable
from setuptools import setup, find_packages, Extension
from setuptools.command.build_ext import build_ext
import sys
from os.path import join as pjoin

# change for windows, by MrX
nvcc_bin = 'nvcc.exe'
lib_dir = 'lib/x64'

# CUDA specific config
# nvcc is assumed to be in user's PATH
nvcc_compile_args = ['-O', '--ptxas-options=-v', '-arch=sm_35', '-c', '--compiler-options=-fPIC']
nvcc_compile_args = os.environ.get('NVCCFLAGS', '').split() + nvcc_compile_args
cuda_libs = ['cublas']

# Obtain the numpy include directory. This logic works across numpy versions.
try:
    numpy_include = np.get_include()
except AttributeError:
    numpy_include = np.get_numpy_include()

def find_in_path(name, path):
    "Find a file in a search path"
    # Adapted from
    # http://code.activestate.com/recipes/52224-find-a-file-given-a-search-path/
    for dir in path.split(os.pathsep):
        binpath = pjoin(dir, name)
        if os.path.exists(binpath):
            return os.path.abspath(binpath)
    return None


def locate_cuda():
    """Locate the CUDA environment on the system

    Returns a dict with keys 'home', 'nvcc', 'include', and 'lib64'
    and values giving the absolute path to each directory.

    Starts by looking for the CUDA_PATH env variable. If not found, everything
    is based on finding 'nvcc' in the PATH.
    """
    # first check if the CUDA_PATH env variable is in use
    if 'CUDA_PATH' in os.environ:
        home = os.environ['CUDA_PATH']
        print("home = %s\n" % home)
        nvcc = pjoin(home, 'bin', nvcc_bin)
    else:
        # otherwise, search the PATH for NVCC
        default_path = pjoin(os.sep, 'usr', 'local', 'cuda', 'bin')
        nvcc = find_in_path(nvcc_bin, os.environ['PATH'] + os.pathsep + default_path)
        if nvcc is None:
            raise EnvironmentError('The nvcc binary could not be '
                'located in your $PATH. Either add it to your path, or set $CUDA_PATH')
        home = os.path.dirname(os.path.dirname(nvcc))
        print("home = %s, nvcc = %s\n" % (home, nvcc))

    cudaconfig = {'home': home, 'nvcc': nvcc,
                  'include': pjoin(home, 'include'),
                  'lib64': pjoin(home, lib_dir)}
    for k, v in cudaconfig.items():
        if not os.path.exists(v):
            raise EnvironmentError('The CUDA %s path could not be located in %s' % (k, v))

    return cudaconfig


CUDA = locate_cuda()

class CUDA_build_ext(build_ext):
    """
    Custom build_ext command that compiles CUDA files.
    Note that all extension source files will be processed with this compiler.
    """
    def build_extensions(self):
        self.compiler.src_extensions.append('.cu')
        self.compiler.set_executable('compiler_so', 'nvcc')
        self.compiler.set_executable('linker_so', 'nvcc --shared')
        if hasattr(self.compiler, '_c_extensions'):
            self.compiler._c_extensions.append('.cu')  # needed for Windows
        self.compiler.spawn = self.spawn
        build_ext.build_extensions(self)

    def spawn(self, cmd, search_path=1, verbose=0, dry_run=0):
        """
        Perform any CUDA specific customizations before actually launching
        compile/link etc. commands.
        """
        if (sys.platform == 'darwin' and len(cmd) >= 2 and cmd[0] == 'nvcc' and
                cmd[1] == '--shared' and cmd.count('-arch') > 0):
            # Versions of distutils on OSX earlier than 2.7.9 inject
            # '-arch x86_64' which we need to strip while using nvcc for
            # linking
            while True:
                try:
                    index = cmd.index('-arch')
                    del cmd[index:index+2]
                except ValueError:
                    break
        elif self.compiler.compiler_type == 'msvc':
            # There are several things we need to do to change the commands
            # issued by MSVCCompiler into one that works with nvcc. In the end,
            # it might have been easier to write our own CCompiler class for
            # nvcc, as we're only interested in creating a shared library to
            # load with ctypes, not in creating an importable Python extension.
            # - First, we replace the cl.exe or link.exe call with an nvcc
            #   call. In case we're running Anaconda, we search cl.exe in the
            #   original search path we captured further above -- Anaconda
            #   inserts a MSVC version into PATH that is too old for nvcc.
            cmd[:1] = ['nvcc', '--compiler-bindir',
                       os.path.dirname(find_executable("cl.exe", PATH))
                       or cmd[0]]
            # - Secondly, we fix a bunch of command line arguments.
            for idx, c in enumerate(cmd):
                # create .dll instead of .pyd files
                #if '.pyd' in c: cmd[idx] = c = c.replace('.pyd', '.dll')  #20160601, by MrX
                # replace /c by -c
                if c == '/c': cmd[idx] = '-c'
                # replace /DLL by --shared
                elif c == '/DLL': cmd[idx] = '--shared'
                # remove --compiler-options=-fPIC
                elif '-fPIC' in c: del cmd[idx]
                # replace /Tc... by ...
                elif c.startswith('/Tc'): cmd[idx] = c[3:]
                # replace /Fo... by -o ...
                elif c.startswith('/Fo'): cmd[idx:idx+1] = ['-o', c[3:]]
                # replace /LIBPATH:... by -L...
                elif c.startswith('/LIBPATH:'): cmd[idx] = '-L' + c[9:]
                # replace /OUT:... by -o ...
                elif c.startswith('/OUT:'): cmd[idx:idx+1] = ['-o', c[5:]]
                # remove /EXPORT:initlibcudamat or /EXPORT:initlibcudalearn
                elif c.startswith('/EXPORT:'): del cmd[idx]
                # replace cublas.lib by -lcublas
                elif c == 'cublas.lib': cmd[idx] = '-lcublas'
                # fix ID=2 error
                elif 'ID=2' in c: cmd[idx] = c[:15]
                # replace /Tp by ..
                elif c.startswith('/Tp'): cmd[idx] = c[3:]
            # - Finally, we pass on all arguments starting with a '/' to the
            #   compiler or linker, and have nvcc handle all other arguments
            if '--shared' in cmd:
                pass_on = '--linker-options='
                # we only need MSVCRT for a .dll, remove CMT if it sneaks in:
                cmd.append('/NODEFAULTLIB:libcmt.lib')
            else:
                pass_on = '--compiler-options='
            cmd = ([c for c in cmd if c[0] != '/'] +
                   [pass_on + ','.join(c for c in cmd if c[0] == '/')])
            # For the future: Apart from the wrongly set PATH by Anaconda, it
            # would suffice to run the following for compilation on Windows:
            # nvcc -c -O -o <file>.obj <file>.cu
            # And the following for linking:
            # nvcc --shared -o <file>.dll <file1>.obj <file2>.obj -lcublas
            # This could be done by a NVCCCompiler class for all platforms.
        spawn(cmd, search_path, verbose, dry_run)

cudamat_ext = [Extension('rotate_polygon_nms',
                         sources=['rotate_polygon_nms_kernel.cu', 'rotate_polygon_nms.pyx'],
                         language='c++',
                         libraries=cuda_libs,
                         extra_compile_args=nvcc_compile_args,
                         include_dirs = [numpy_include, CUDA['include']])
               ]

setup(
    name='fast_rcnn',
    ext_modules=cudamat_ext,
    # inject our custom trigger
    cmdclass={'build_ext': CUDA_build_ext},
)
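The spawn method in setup_cuda.py rewrites MSVC-style arguments into nvcc-style ones, and it deletes items from cmd while enumerating it, which can skip the argument that follows a deleted one. The sketch below shows the same idea as a standalone function that builds a new list instead. It is an illustration covering only part of the rules in the listing (the cublas.lib and ID=2 cases are left out); the name msvc_to_nvcc is hypothetical and it is not a drop-in replacement.

```python
def msvc_to_nvcc(args):
    """Translate a list of MSVC-style arguments into nvcc-style ones."""
    out = []
    for arg in args:
        if arg == '/c':
            out.append('-c')                      # compile only
        elif arg == '/DLL':
            out.append('--shared')                # build a shared library
        elif '-fPIC' in arg or arg.startswith('/EXPORT:'):
            continue                              # dropped, as in the listing
        elif arg.startswith('/Tc') or arg.startswith('/Tp'):
            out.append(arg[3:])                   # plain source file name
        elif arg.startswith('/Fo'):
            out.extend(['-o', arg[3:]])           # object file output
        elif arg.startswith('/OUT:'):
            out.extend(['-o', arg[5:]])           # linker output
        elif arg.startswith('/LIBPATH:'):
            out.append('-L' + arg[9:])            # library search path
        else:
            out.append(arg)                       # everything else unchanged
    return out


print(msvc_to_nvcc(['/c', '/Tpkernel.cu', '/Fokernel.obj', '--compiler-options=-fPIC']))
```

Because the result is a fresh list, two consecutive arguments that both have to be dropped (for example two /EXPORT: entries) are both removed.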
