项目的需求需要爬虫某网的商品信息,自己通过Requests,BeautifulSoup等编写了一个spider,把抓取的数据存到数据库里面。

跑起来的感觉速度有点慢,尤其是进入详情页面抓取信息的时候,小白入门,也不知道应该咋个整,反正就是跟着学嘛。

网上的爬虫框架还是挺多的,现在打算学习spcrapy重新写。

下面是记录官方文档的一些学习notes.

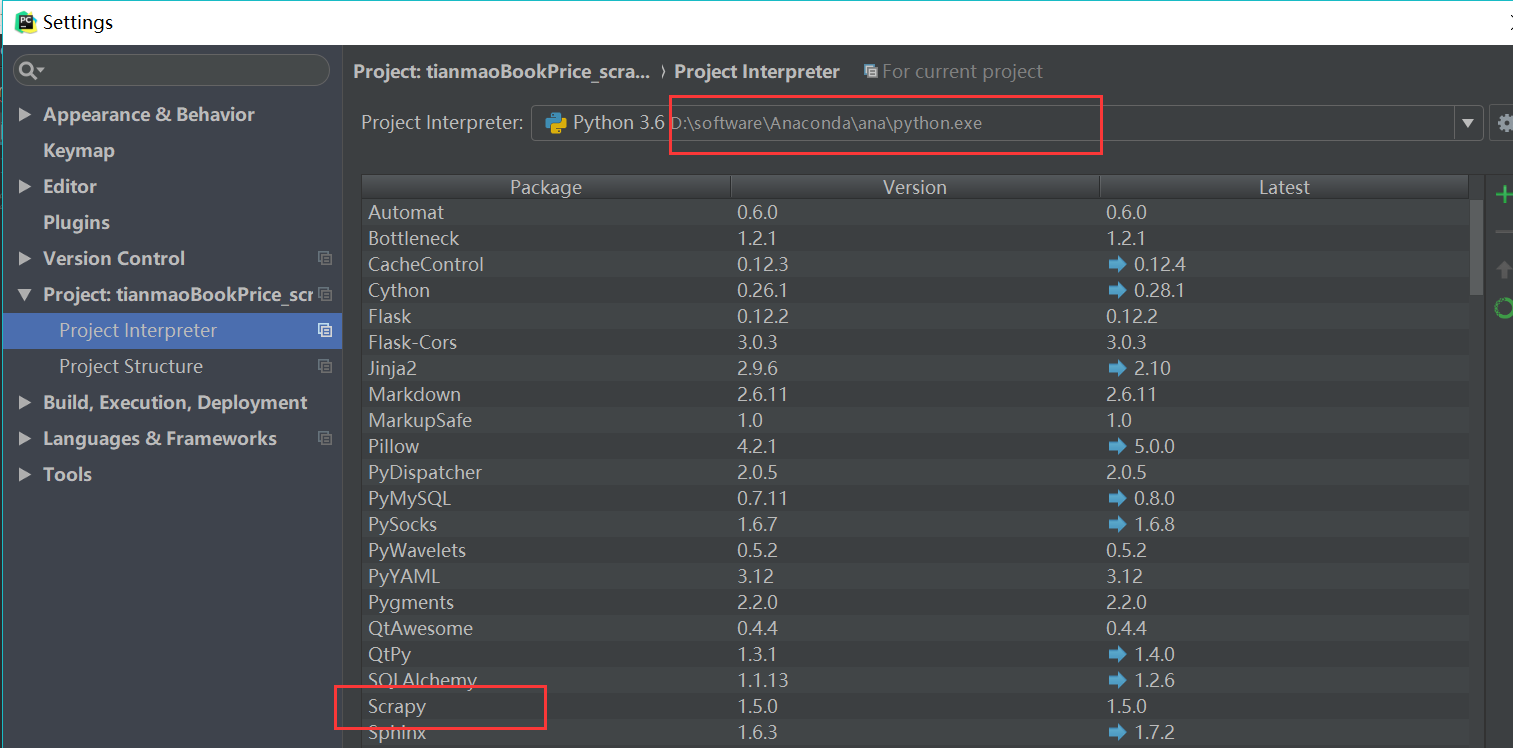

scrapy的环境是在anaconda里面搞得,所以子啊pycharm里面的 preject interpreter 选择anaconda下面的python.exe.

很多时候自己老是要忘记设置这个,会导致很多包都import不进来,,因为我很多包都是通过anaconda环境装的。

下面是给的第一个测试例子

1 class QuotesSpider(scrapy.Spider): 2 name = "quotes" 3 start_urls = [ 4 'http://quotes.toscrape.com/tag/humor/', 5 ] 6 7 def parse(self, response): 8 for quote in response.css('div.quote'): 9 yield { 10 'text': quote.css('span.text::text').extract_first(), 11 'author': quote.xpath('span/small/text()').extract_first(), 12 } 13 14 next_page = response.css('li.next a::attr("href")').extract_first() 15 if next_page is not None: 16 yield response.follow(next_page, self.parse)

在anaconda 的prompt里面输入命令

scrapy runspider quote_spider.py -o quote.json

注意要在文件所在的路径下面哦

运行成功后,会生成一个quote.json的文件

[

{"text": "\u201cThe person, be it gentleman or lady, who has not pleasure in a good novel, must be intolerably stupid.\u201d", "author": "Jane Austen"},

{"text": "\u201cA day without sunshine is like, you know, night.\u201d", "author": "Steve Martin"},

{"text": "\u201cAnyone who thinks sitting in church can make you a Christian must also think that sitting in a garage can make you a car.\u201d", "author": "Garrison Keillor"},

{"text": "\u201cBeauty is in the eye of the beholder and it may be necessary from time to time to give a stupid or misinformed beholder a black eye.\u201d", "author": "Jim Henson"},

{"text": "\u201cAll you need is love. But a little chocolate now and then doesn't hurt.\u201d", "author": "Charles M. Schulz"},

{"text": "\u201cRemember, we're madly in love, so it's all right to kiss me anytime you feel like it.\u201d", "author": "Suzanne Collins"},

{"text": "\u201cSome people never go crazy. What truly horrible lives they must lead.\u201d", "author": "Charles Bukowski"},

{"text": "\u201cThe trouble with having an open mind, of course, is that people will insist on coming along and trying to put things in it.\u201d", "author": "Terry Pratchett"},

{"text": "\u201cThink left and think right and think low and think high. Oh, the thinks you can think up if only you try!\u201d", "author": "Dr. Seuss"},

{"text": "\u201cThe reason I talk to myself is because I\u2019m the only one whose answers I accept.\u201d", "author": "George Carlin"},

{"text": "\u201cI am free of all prejudice. I hate everyone equally. \u201d", "author": "W.C. Fields"},

{"text": "\u201cA lady's imagination is very rapid; it jumps from admiration to love, from love to matrimony in a moment.\u201d", "author": "Jane Austen"}

]

当你执行scrapy runspider quote_spider.py -o quote.json这条命令的时候,Scrapy会在这个文件里面去look for Spider的定义,找到后用scrapy的crawler engine运行。

通过向start_urls 属性中定义的URL发送请求,并调用默认回调方法parse,将响应对象作为参数传递,从而开始爬网。在parse回调中,我们使用CSS Selector循环引用元素,产生一个带有提取的引用文本和作者的Python字典,查找指向下一页的链接,并使用与parse回调相同的方法安排另一个请求

220

220

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?