This walkthrough assumes the JDK is already installed.

1. Download Hadoop 1.2.1 from the official site

https://dist.apache.org/repos/dist/release/hadoop/common/

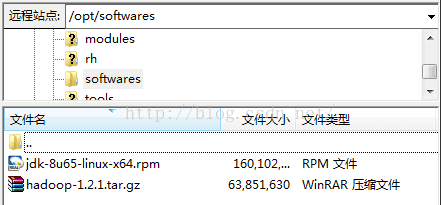

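2. On the virtual machine, run the following commands to create one directory for software packages and one for installed software

[root@centOS ~]# cd /opt

[root@centOS opt]# mkdir softwares

[root@centOS opt]# mkdir modules

3. Use FileZilla to upload the downloaded archive to /opt/softwares

4. Change to /opt/softwares and extract the archive into /opt/modules with the following command: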

[root@centOS softwares]# tar -zxvf hadoop-1.2.1.tar.gz -C /opt/modules/

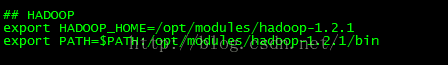
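The extraction step can be wrapped in a small function that stops early when the archive is missing. This is only a minimal sketch: the function name `extract_hadoop` and its two arguments are illustrative and not part of the original steps.

```shell
#!/bin/sh
# Hypothetical helper: extract a Hadoop tarball into a target directory.
# Usage: extract_hadoop /opt/softwares/hadoop-1.2.1.tar.gz /opt/modules
extract_hadoop() {
    tarball="$1"
    dest="$2"

    # Stop early if the archive was not uploaded where we expect it.
    if [ ! -f "$tarball" ]; then
        echo "archive not found: $tarball" >&2
        return 1
    fi

    # Create the target directory if needed, then unpack into it.
    mkdir -p "$dest" || return 1
    tar -zxf "$tarball" -C "$dest"
}
```

Dropping the `v` flag from `-zxvf` keeps the output quiet; add it back to list every extracted file.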

5. Configure the Hadoop environment variables. Open /etc/profile with the command below and add the following content:

[root@centOS ~]# vi /etc/profile

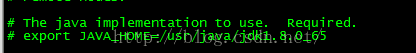
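The screenshot is not reproduced as text, so the exact lines are not known here. For the layout used in step 4, a typical pair of lines sets `HADOOP_HOME` and puts its `bin` directory on `PATH`. Both values below are assumptions inferred from the extraction path, and the helper name `add_hadoop_env` is hypothetical. The `$HADOOP_HOME is deprecated` warning printed in step 8 does suggest that this variable was set.

```shell
#!/bin/sh
# Hypothetical helper: append Hadoop variables to a profile file once.
# Usage: add_hadoop_env /etc/profile /opt/modules/hadoop-1.2.1
add_hadoop_env() {
    profile="$1"
    hadoop_home="$2"

    # Skip if an earlier run already added the export line.
    if grep -q '^export HADOOP_HOME=' "$profile" 2>/dev/null; then
        return 0
    fi

    # Single quotes keep $PATH and $HADOOP_HOME literal in the file,
    # so they are expanded at login time rather than now.
    {
        printf 'export HADOOP_HOME=%s\n' "$hadoop_home"
        printf '%s\n' 'export PATH=$PATH:$HADOOP_HOME/bin'
    } >> "$profile"
}
```

After editing the file, run `source /etc/profile` (or log in again) so the current shell picks up the new variables.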

6. Set the JDK path used by Hadoop. First change to the configuration directory, then open hadoop-env.sh:

[root@centOS /]# cd /opt/modules/hadoop-1.2.1/conf/

[root@centOS conf]# vi hadoop-env.sh

Find the JAVA_HOME line and set it to the JDK installation path
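The same edit can be scripted instead of done by hand in vi. The sketch below is one possible approach, not the method used in this walkthrough: it drops any active `export JAVA_HOME=` line and appends a fresh one, leaving commented-out lines untouched. The function name `set_java_home` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: make hadoop-env.sh export exactly one JAVA_HOME.
# Usage: set_java_home /opt/modules/hadoop-1.2.1/conf/hadoop-env.sh "$JAVA_HOME"
set_java_home() {
    env_file="$1"
    jdk_path="$2"

    [ -f "$env_file" ] || { echo "file not found: $env_file" >&2; return 1; }
    [ -n "$jdk_path" ] || { echo "empty JDK path" >&2; return 1; }

    tmp_file="$(mktemp)" || return 1

    # Keep every line except active (uncommented) JAVA_HOME exports.
    # grep exits non-zero when nothing is left, which is fine here.
    grep -v '^[[:space:]]*export[[:space:]][[:space:]]*JAVA_HOME=' "$env_file" > "$tmp_file" || true

    # Append the new setting, then write back in place to keep permissions.
    printf 'export JAVA_HOME=%s\n' "$jdk_path" >> "$tmp_file"
    cat "$tmp_file" > "$env_file"
    rm -f "$tmp_file"
}
```

Passing `"$JAVA_HOME"` from the current shell reuses whatever path the existing JDK setup already exports.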

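7. Create the data input directory /opt/data/input (the commands below are run from /opt/data, which must already exist) and copy the XML files from the conf directory into it

[root@centOS data]# mkdir input

[root@centOS data]# cp /opt/modules/hadoop-1.2.1/conf/*.xml /opt/data/input/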
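A minimal sketch of the same step as a reusable function, using `mkdir -p` so that missing parent directories are created along the way. The function name `prepare_input` and its arguments are illustrative only.

```shell
#!/bin/sh
# Hypothetical helper: copy Hadoop's XML config files into an input directory.
# Usage: prepare_input /opt/modules/hadoop-1.2.1/conf /opt/data/input
prepare_input() {
    conf_dir="$1"
    input_dir="$2"

    [ -d "$conf_dir" ] || { echo "conf directory not found: $conf_dir" >&2; return 1; }

    # -p creates missing parents (such as /opt/data) and is a no-op if present.
    mkdir -p "$input_dir" || return 1

    # Copy only the XML files; these become the job's input.
    cp "$conf_dir"/*.xml "$input_dir"/
}
```

The job log in step 8 reports 7 input paths, which is the number of XML files this copy placed in the input directory.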

8. Run the example program:

[root@centOS hadoop-1.2.1]# hadoop jar hadoop-examples-1.2.1.jar grep /opt/data/input/ /opt/data/output 'dfs[a-z.]+'

Warning: $HADOOP_HOME is deprecated.

15/11/25 22:24:47 INFO util.NativeCodeLoader: Loaded the native-hadoop library

15/11/25 22:24:48 WARN snappy.LoadSnappy: Snappy native library not loaded

15/11/25 22:24:48 INFO mapred.FileInputFormat: Total input paths to process : 7

15/11/25 22:24:50 INFO mapred.JobClient: Running job: job_local1920513106_0001

15/11/25 22:24:50 INFO mapred.LocalJobRunner: Waiting for map tasks

15/11/25 22:24:50 INFO mapred.LocalJobRunner: Starting task: attempt_local1920513106_0001_m_000000_0

15/11/25 22:24:52 INFO mapred.JobClient: map 0% reduce 0%

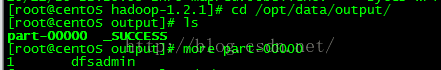

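If the output looks like the above and the job reports no errors, go to /opt/data/output; the result files there indicate that the run succeeded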
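The check can also be scripted. The sketch below assumes the usual output layout for this kind of job, namely a `_SUCCESS` marker plus one or more `part-*` files; that layout is an assumption, as the text does not list the files. The function name `check_output` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: check that a job output directory looks complete.
# Assumes the job writes a _SUCCESS marker and at least one part-* file.
# Usage: check_output /opt/data/output
check_output() {
    out_dir="$1"

    [ -d "$out_dir" ] || { echo "no output directory: $out_dir" >&2; return 1; }
    [ -f "$out_dir/_SUCCESS" ] || { echo "missing _SUCCESS marker" >&2; return 1; }

    # Print the contents of every part file; fail if there is none.
    found=1
    for part in "$out_dir"/part-*; do
        [ -f "$part" ] || continue
        cat "$part"
        found=0
    done
    return "$found"
}
```

Hadoop refuses to write into an output directory that already exists, so remove /opt/data/output before running the job a second time.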

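Common errors to watch for:

1. The regular user does not have sufficient permissions

2. The hostname is not configured

3. The JDK environment variables are configured incorrectly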
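The three pitfalls above can be checked before running the job. This is a rough sketch under stated assumptions: it reads the hostname item as "the machine's own hostname does not resolve", it assumes `getent` is available, and all function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical pre-flight checks for the three pitfalls listed above.

# 1. Permissions: the current user must be able to write to the directory.
check_writable() {
    [ -d "$1" ] && [ -w "$1" ]
}

# 2. Hostname: the machine's own name should resolve to an address.
#    Assumption: getent is installed, as on typical CentOS systems.
check_hostname() {
    getent hosts "$(hostname)" > /dev/null 2>&1
}

# 3. JDK: the given JAVA_HOME must contain an executable bin/java.
check_java_home() {
    [ -n "$1" ] && [ -x "$1/bin/java" ]
}

# Run all three and report; the return value is the number of failed checks.
preflight() {
    work_dir="$1"
    java_home="$2"
    failed=0
    check_writable "$work_dir"   || { echo "cannot write to $work_dir"; failed=$((failed + 1)); }
    check_hostname               || { echo "hostname does not resolve"; failed=$((failed + 1)); }
    check_java_home "$java_home" || { echo "no java under '$java_home'"; failed=$((failed + 1)); }
    return "$failed"
}
```

A typical call would be `preflight /opt/data "$JAVA_HOME"`, which prints one line per failed check and returns 0 only when all three pass.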
