In this section, we will fit an LSTM on the multivariate input data.


First, we must split the prepared dataset into train and test sets. To speed up training for this demonstration, we will fit the model on the first year of data only, then evaluate it on the remaining 4 years of data. If you have time, consider exploring the inverted version of this test harness, that is, training on the first 4 years and testing on the final year.

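As a minimal sketch of both harnesses, the snippet below splits a hypothetical array of hourly rows. The array holds placeholder numbers, not the real pollution data. Its row count (43799) is inferred from the shapes printed later in this section (8760 + 35039), and the 9 columns assume 8 inputs plus 1 target. The array is split first the way this section does it, then inverted.

```python
import numpy as np

# Placeholder data: 43799 hourly rows, 8 input columns + 1 target column
n_rows, n_cols = 8760 + 35039, 9
values = np.arange(n_rows * n_cols, dtype=float).reshape(n_rows, n_cols)

n_hours_per_year = 365 * 24  # 8760

# Harness used in this section: train on the first year, test on the rest
train, test = values[:n_hours_per_year], values[n_hours_per_year:]

# Inverted harness: train on everything before the final year, test on the final year
inv_train, inv_test = values[:-n_hours_per_year], values[-n_hours_per_year:]

print(train.shape, test.shape)          # (8760, 9) (35039, 9)
print(inv_train.shape, inv_test.shape)  # (35039, 9) (8760, 9)
```

Both splits slice by position rather than sampling at random, so the rows stay in chronological order and the test period never precedes the training period it is evaluated against.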

The example below splits the dataset into train and test sets, then splits the train and test sets into input and output variables. Finally, the inputs (X) are reshaped into the 3D format expected by LSTMs, namely [samples, timesteps, features].

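To make the [samples, timesteps, features] layout concrete, here is a minimal NumPy sketch on a small hypothetical 2D array (4 rows, 3 features). Reshaping it in the same way as the code below inserts a time-step axis of length 1, so each row becomes one sample containing a single time step.

```python
import numpy as np

# Hypothetical 2D block: 4 samples (rows) x 3 features (columns)
X2d = np.arange(12, dtype=float).reshape(4, 3)

# Insert a time-step axis of length 1: [samples, timesteps, features]
X3d = X2d.reshape((X2d.shape[0], 1, X2d.shape[1]))

print(X3d.shape)  # (4, 1, 3)
# Sample 2 holds one time step whose feature vector is the original row 2
print(X3d[2, 0])  # [6. 7. 8.]
```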

```python
# split into train and test sets
# (`reframed` is the framed dataset from the data-preparation step, not shown here)
values = reframed.values
n_train_hours = 365 * 24
train = values[:n_train_hours, :]
test = values[n_train_hours:, :]
# split into input and outputs (the last column is the target)
train_X, train_y = train[:, :-1], train[:, -1]
test_X, test_y = test[:, :-1], test[:, -1]
# reshape input to be 3D [samples, timesteps, features]
train_X = train_X.reshape((train_X.shape[0], 1, train_X.shape[1]))
test_X = test_X.reshape((test_X.shape[0], 1, test_X.shape[1]))
print(train_X.shape, train_y.shape, test_X.shape, test_y.shape)
```

Running this example prints the shape of the train and test input and output sets with about 9K hours of data for training and about 35K hours for testing.


```
(8760, 1, 8) (8760,) (35039, 1, 8) (35039,)
```

Now we can define and fit our LSTM model.


We will define the LSTM with 50 neurons in the first hidden layer and 1 neuron in the output layer for predicting pollution. The input shape will be 1 time step with 8 features.

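The size of this network can be worked out by hand. The sketch below is plain arithmetic, not a Keras call, and it assumes Keras' default LSTM settings (a single bias per gate). An LSTM layer has four gates, and each gate has an input kernel, a recurrent kernel and a bias. The Dense output layer has one weight per LSTM unit plus a bias.

```python
def lstm_params(units: int, n_features: int) -> int:
    # 4 gates (input, forget, cell candidate, output), each with
    # an input kernel (n_features x units), a recurrent kernel (units x units)
    # and a bias vector (units)
    return 4 * (units * (units + n_features) + units)


def dense_params(n_inputs: int, n_outputs: int) -> int:
    # weight matrix plus one bias per output neuron
    return n_inputs * n_outputs + n_outputs


lstm = lstm_params(units=50, n_features=8)      # 11800
dense = dense_params(n_inputs=50, n_outputs=1)  # 51
print(lstm, dense, lstm + dense)                # 11800 51 11851
```

The number of time steps does not appear in the formula because the same weights are reused at every step. Feeding the model more hours per sample therefore makes training slower but does not make the model larger.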

We will use the Mean Absolute Error (MAE) loss function and the efficient Adam version of stochastic gradient descent.

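As a from-scratch NumPy illustration of these two ideas, here is MAE and a single Adam update step. This is not Keras' implementation. The hyperparameter values are the defaults suggested in the original Adam paper, and Keras' own default epsilon differs slightly. The parameter and gradient values are arbitrary.

```python
import numpy as np


def mae(y_true, y_pred):
    # Mean Absolute Error: average of |y_true - y_pred|
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))


def adam_step(theta, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    # One Adam update for parameters theta at step t (t starts at 1)
    m = beta1 * m + (1 - beta1) * grad       # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad ** 2  # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)             # bias-corrected estimates
    v_hat = v / (1 - beta2 ** t)
    theta = theta - lr * m_hat / (np.sqrt(v_hat) + eps)
    return theta, m, v


print(mae([1.0, 2.0, 3.0], [1.0, 1.0, 5.0]))  # 1.0

theta = np.array([0.5, -0.5])
grad = np.array([2.0, -0.1])
theta, m, v = adam_step(theta, grad, np.zeros(2), np.zeros(2), t=1)
# On the first step each parameter moves by about lr, against the sign of its gradient
print(theta)
```

The per-parameter scaling by the running squared-gradient estimate is what makes Adam step sizes largely insensitive to the raw gradient magnitude. The two gradients above differ by a factor of 20, yet both parameters move by roughly the same amount.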

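The model will be fit for 50 training epochs with a batch size of 72. With hourly data, 72 samples cover 3 days. Note that the LSTM here is stateless. Keras starts every sample from a fresh internal state, so no state is carried from one sample to the next, let alone from one batch to the next. Because each sample contains only 1 time step, the network never sees more than one hour of history through its internal state. Giving each sample more time steps, so that the state becomes a function of several days of data, may therefore be helpful (try testing this).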


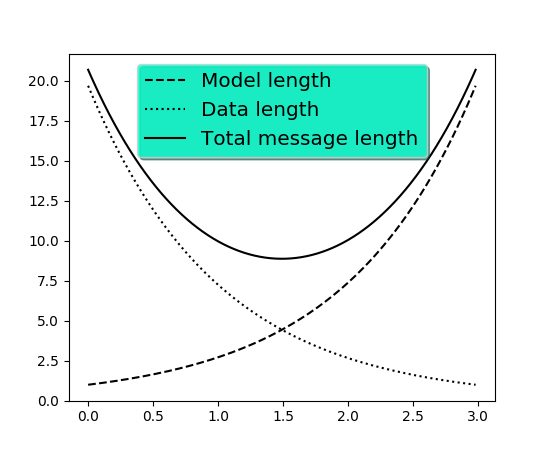
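One way to act on this suggestion is to give each sample several consecutive time steps. For example, a sample could span 72 hours (3 days) so the LSTM carries state across them. The helper `make_windows` below is hypothetical and not part of the original tutorial. It stacks `n_steps` consecutive rows of inputs into one sample and pairs that sample with the target one step after the window ends, producing the [samples, timesteps, features] layout. It is shown on a tiny synthetic array rather than the pollution data.

```python
import numpy as np


def make_windows(features, target, n_steps):
    """Hypothetical helper: build [samples, n_steps, n_features] inputs.

    Sample i uses rows i .. i+n_steps-1 of `features` and is paired with
    target[i + n_steps], the value one step after the window ends.
    """
    features = np.asarray(features)
    target = np.asarray(target)
    n_samples = len(features) - n_steps
    X = np.stack([features[i:i + n_steps] for i in range(n_samples)])
    y = target[n_steps:n_steps + n_samples]
    return X, y


# Tiny example: 10 hourly rows, 2 features; the target is simply feature 0
feats = np.arange(20, dtype=float).reshape(10, 2)
X, y = make_windows(feats, feats[:, 0], n_steps=3)
print(X.shape, y.shape)  # (7, 3, 2) (7,)

# With hourly data, n_steps=72 would give each sample 3 days of history
```

With windows like these, the LSTM's `input_shape` becomes `(n_steps, n_features)`. This is exactly what `(train_X.shape[1], train_X.shape[2])` evaluates to once `train_X` is built this way.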

Finally, we keep track of both the training and test loss during training by setting the validation_data argument in the fit() function. At the end of the run both the training and test loss are plotted.


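```python
from keras.models import Sequential
from keras.layers import Dense, LSTM
from matplotlib import pyplot

# design network: 50 LSTM units, 1 output neuron for pollution
model = Sequential()
model.add(LSTM(50, input_shape=(train_X.shape[1], train_X.shape[2])))
model.add(Dense(1))
model.compile(loss='mae', optimizer='adam')
# fit network; the test set is passed as validation_data so its loss is
# recorded each epoch, and shuffle=False keeps the batches in time order
history = model.fit(train_X, train_y, epochs=50, batch_size=72,
                    validation_data=(test_X, test_y), verbose=2, shuffle=False)
# plot history
pyplot.plot(history.history['loss'], label='train')
pyplot.plot(history.history['val_loss'], label='test')
pyplot.legend()
pyplot.show()
```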

`matplotlib.pyplot.legend(*args, **kwargs)`

Places a legend on the axes.

To make a legend for lines which already exist on the axes (via plot for instance), simply call this function with an iterable of strings, one for each legend item. For example:

```python
ax.plot([1, 2, 3])
ax.legend(['A simple line'])
```

However, in order to keep the “label” and the legend element instance together, it is preferable to specify the label either at artist creation, or by calling the set_label() method on the artist:

```python
line, = ax.plot([1, 2, 3], label='Inline label')
# Overwrite the label by calling the method.
line.set_label('Label via method')
ax.legend()
```

Specific lines can be excluded from the automatic legend element selection by defining a label starting with an underscore. This is default for all artists, so calling legend() without any arguments and without setting the labels manually will result in no legend being drawn.
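Here is a minimal sketch of the underscore rule, assuming a reasonably recent matplotlib. The Agg backend is selected so it runs without a display, and the labels `'train'` and `'_test'` are only illustrative. Only the line whose label does not start with an underscore gets a legend entry.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs headless
import matplotlib.pyplot as plt

fig, ax = plt.subplots()
ax.plot([1, 2, 3], label="train")   # shown in the legend
ax.plot([3, 2, 1], label="_test")   # leading underscore: excluded
ax.plot([2, 2, 2])                  # no label: the default label starts with "_", excluded
leg = ax.legend()
labels = [t.get_text() for t in leg.get_texts()]
print(labels)  # ['train']
plt.close(fig)
```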

For full control of which artists have a legend entry, it is possible to pass an iterable of legend artists followed by an iterable of legend labels respectively:

```python
legend((line1, line2, line3), ('label1', 'label2', 'label3'))
```
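Here is a minimal runnable version of the explicit form, again on the Agg backend. The lines and labels are placeholders. None of the lines is labeled at creation, yet every handle receives the label at the same position in the second iterable. `loc` and `ncol` are two of the parameters in the table that follows.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs headless
import matplotlib.pyplot as plt

fig, ax = plt.subplots()
line1, = ax.plot([1, 2, 3])
line2, = ax.plot([2, 2, 2])
line3, = ax.plot([3, 2, 1])

# Handles and labels are paired by position
leg = ax.legend((line1, line2, line3), ("label1", "label2", "label3"),
                loc="upper left", ncol=3)
labels = [t.get_text() for t in leg.get_texts()]
print(labels)  # ['label1', 'label2', 'label3']
plt.close(fig)
```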

Parameters:

| Parameter | Type |
|---|---|
| `loc` | int or string or pair of floats, default: ‘upper right’ |
| `bbox_to_anchor` | |
| `ncol` | integer |
| `prop` | None or |
| `fontsize` | int or float or {‘xx-small’, ‘x-small’, ‘small’, ‘medium’, ‘large’, ‘x-large’, ‘xx-large’} |
| `numpoints` | None or int |
| `scatterpoints` | None or int |
| `scatteryoffsets` | iterable of floats |
| `markerscale` | None or int or float |
| `markerfirst` | bool |
| `frameon` | None or bool |
| `fancybox` | None or bool |
| `shadow` | None or bool |
| `framealpha` | None or float |
| `facecolor` | None or “inherit” or a color spec |
| `edgecolor` | None or “inherit” or a color spec |
| `mode` | {“expand”, None} |
| `bbox_transform` | None or |
| `title` | str or None |
| `borderpad` | float or None |
| `labelspacing` | float or None |
| `handlelength` | float or None |
| `handletextpad` | float or None |
| `borderaxespad` | float or None |
| `columnspacing` | float or None |
| `handler_map` | dict or None |

Notes

Not all kinds of artist are supported by the legend command. See Legend guide for details.

