背景:

一个正常运行的2节点(172.16.23.170,172.16.23.171,主库为172.16.23.171 catdb)MGR单主集群需要新增节点

1.原2节点集群滚动修改(先修改从节点)

(1)stop group_replication;

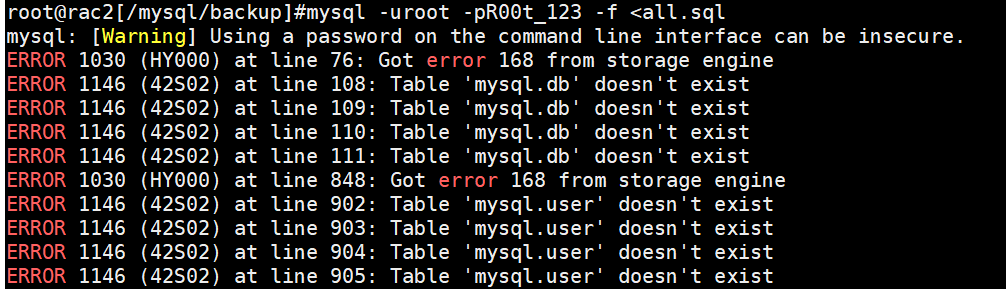

若不停,会报错

![]()

(2)group_replication_ip_whitelist

set @@global.group_replication_ip_whitelist='10.45.53.31,172.16.23.170,172.16.23.171';

(3)group_replication_group_seeds

set @@global.group_replication_group_seeds='rac2:33061,shard12:33061,catdb:33061';

(4)start group_replication;

select * from performance_schema.replication_group_members;

show variables like 'group%';

(5)修改两节点的/ect/my.cnf以防重启失效

group_replication_group_seeds="rac2:33061,shard12:33061,catdb:33061"

group_replication_ip_whitelist="10.45.53.31,172.16.23.170,172.16.23.171"

2.新节点mysql安装配置

(1)四节点配置/etc/hosts

#mgr

172.16.23.171 catdb

172.16.23.170 shard12

10.45.53.31 rac2 #新增节点

(2)安装同版本mysql

rpm -qa|grep maria|awk '{print "rpm -e ",$1,"--nodeps"}'|sh

mkdir /mysql

cd /mysql

mkdir bin_log

mkdir log

mkdir data

mkdir undo_tbls

mkdir relay_log

groupadd -g 512 mysql

useradd -u 512 -g 512 -d /mysql mysql

chown -R mysql.mysql /mysql

rpm -ivh mysql-commercial-common-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-libs-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-client-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-devel-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-embedded-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-embedded-devel-5.7.27-1.1.el6.x86_64.rpm

rpm -ivh mysql-commercial-server-5.7.27-1.1.el6.x86_64.rpm --nodeps

(3) 配置/etc/my.cnf

[client]

socket=/var/lib/mysql/mysql.sock

[mysqld]

disabled_storage_engines="MyISAM,BLACKHOLE,FEDERATED,ARCHIVE,MEMORY"

server_id=3

gtid_mode=ON

enforce_gtid_consistency=ON

master_info_repository=TABLE

relay_log_info_repository=TABLE

binlog_checksum=NONE

log_slave_updates=ON

log_bin=binlog

binlog_format=ROW

plugin_load_add='group_replication.so' ##保证group_replication自动安装

transaction_write_set_extraction=XXHASH64

group_replication_group_name="2fe0209a-11a4-11ea-8bb5-005056a024d1"

##group_name三主机需一致,可以先启动主节点得到其uuid,使用uuid,select uuid();

group_replication_start_on_boot=off

group_replication_local_address= "rac2:33061"

group_replication_group_seeds= "rac2:33061,shard12:33061,catdb:33061" ##最好使用hostname而不是ip

group_replication_ip_whitelist="10.45.53.30,172.16.23.170,172.16.23.171"

##官方文档没有配置,默认设为automatic,但是经过测试,不强制配whitelist无法将三节点关联

group_replication_bootstrap_group=off

group_replication_member_weight=30

auto_increment_increment=3

auto_increment_offset=3

port=3306

datadir=/mysql/data

socket=/var/lib/mysql/mysql.sock

log-error=/mysql/log/mysqld.log

log_timestamps=SYSTEM ##error_log,slow_log默认时间戳是UTC,加上system保证记录操作系统时间戳

pid-file=/var/run/mysqld/mysqld.pid

log-bin=/mysql/bin_log/mysql-binlog

relay_log=/mysql/relay_log/mysql-relaylog

innodb_undo_directory=/mysql/undo_tbls

innodb_undo_tablespaces=4

innodb_undo_log_truncate=on

slow_query_log_file=/mysql/log/slow.log

general_log_file=/mysql/log/general.log

transaction-isolation = READ-COMMITTED

character-set-server=utf8mb4

collation-server=utf8mb4_bin

lower_case_table_names

expire_logs_days=7

只需改四处,server_id和group_replication_local_address,auto_increment_offset和group_replication_member_weight

3.启动新增节点

(1) service mysqld start

(2) 用生成的root的密码来登录并修改root密码,为了不同步修改过程,可以暂时关了sql_log_bin

SET SQL_LOG_BIN=0;

alter user root@'localhost' identified by '123';

SET SQL_LOG_BIN=1;

(3) 新建复制用户

SET SQL_LOG_BIN=0;

CREATE USER rpl_user@'%' IDENTIFIED BY '123';

GRANT REPLICATION SLAVE ON *.* TO rpl_user@'%';

FLUSH PRIVILEGES;

SET SQL_LOG_BIN=1;

4.初始化从节点

(1)备份--为减少主库压力,可以从从库进行备份

mysqldump -uroot -p123 --single-transaction --master-data=2 --all-databases > all.sql

(2)恢复到新增节点

mysql -uroot -p123 -f <all.sql

此时有个报错,mysql.user和mysql.db没有覆盖创建成功

看了mysqld.log发现,新增节点无法创建引擎为MyISAM的系统表,是因为/etc/my.cnf中有一项

disabled_storage_engines="MyISAM,BLACKHOLE,FEDERATED,ARCHIVE,MEMORY"

故而无法创建MyISAM表,由于此参数是read-only的参数,在/etc/my.cnf中注释掉,重启mysqld,导入成功;然后去掉注释,重启mysqld,重新将disabled_storage_engines="MyISAM,BLACKHOLE,FEDERATED,ARCHIVE,MEMORY"

(3)配置group_replication_recovery复制通道

CHANGE MASTER TO MASTER_USER='rpl_user', MASTER_PASSWORD='123' FOR CHANNEL 'group_replication_recovery';

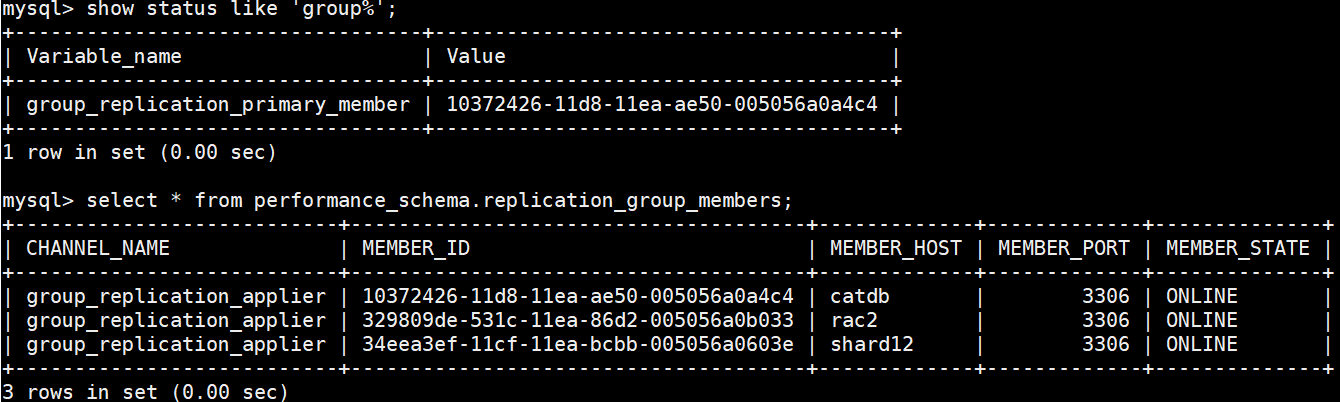

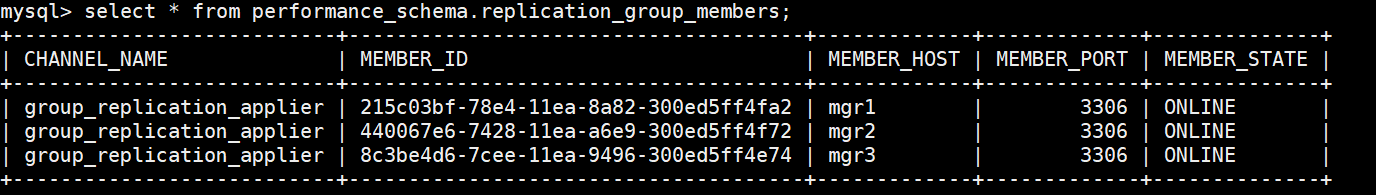

(4)start group_replication

![]()

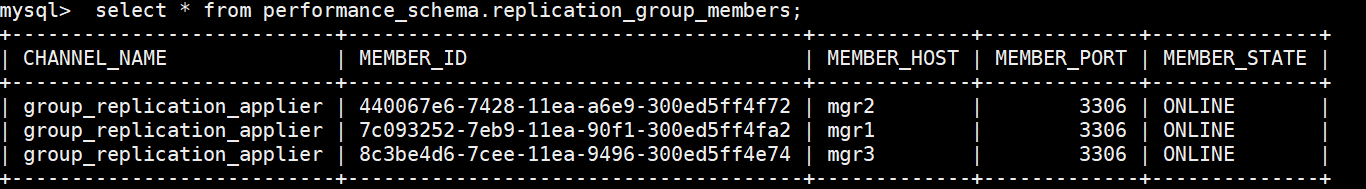

(5)检查新增节点是否加入并test复制是否成功

select * from performance_schema.replication_group_members;

5.其他

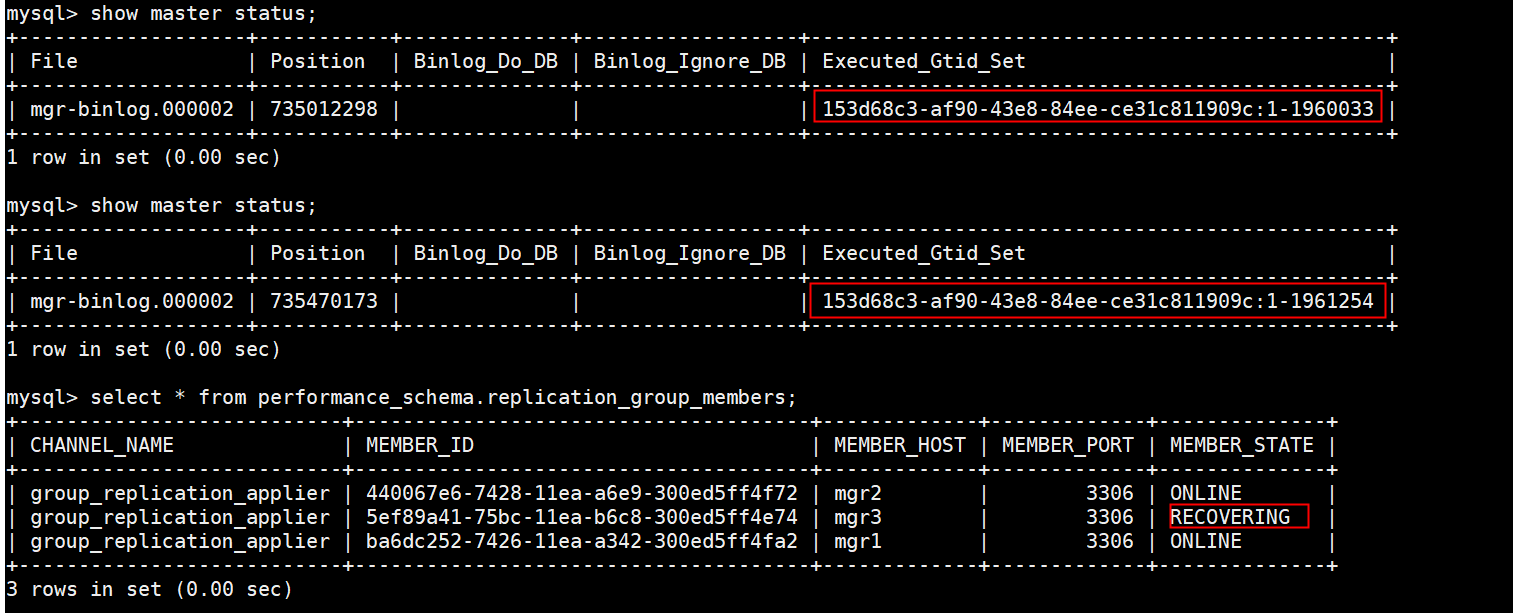

(1)binlog的默认保留时间是7天,由expire_logs_days控制,若自mgr创建起binlog全没删或者mgr创建没超过7天,可以跳过新增节点初始化(1),(2)步骤,直接执行(3),(4),(5),组复制会自动拉取binlog,过程中新增节点的Executed_Gtid_Set一直在更新。但是相对于其他方式,自动拉取的速度特别慢,所以不是很推荐用这种方式新增节点。

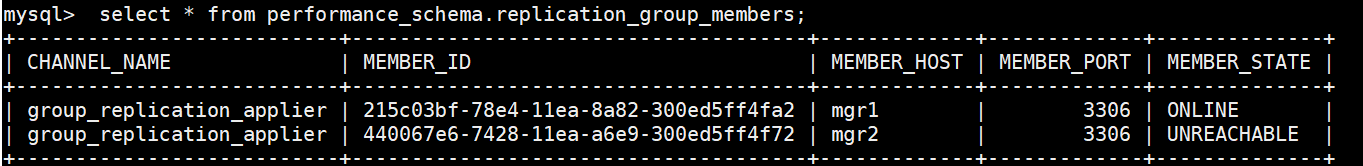

此过程中新增的节点状态为recovery,经所内环境测试,自动拉取有点慢,且新增节点online后,主节点有可能会出现下述情况:

此时,新增的节点UNREACHABLE,我是通过重启两个节点的组复制解决的

(2)mysqldump属于逻辑备份,恢复比物理备份要慢,相同大小的库逻辑备份比物理备份的恢复要慢很多。新增节点可以使用xtrabackup来备份恢复,步骤如下:

备份:

xtrabackup --defaults-file=/etc/my.cnf --user=root --password=123 --parallel=4 --target-dir=/backup/xtrabackup --backup --binlog-info=on --galera-info

cd /backup/xtrabackup

将备份scp到新增节主机上,执行恢复

xtrabackup --defaults-file=/etc/my.cnf --user=root --password=123 --target-dir=/backup/xtrabackup --prepare

xtrabackup --defaults-file=/etc/my.cnf --user=root --password=123 --target-dir=/backup/xtrabackup --copy-back

chown -R mysql.mysql /mysql

启动mysqld,service mysqld start

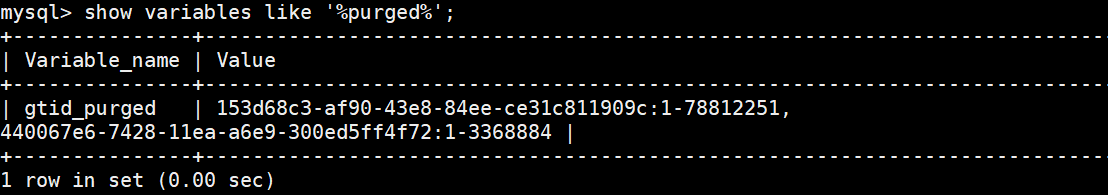

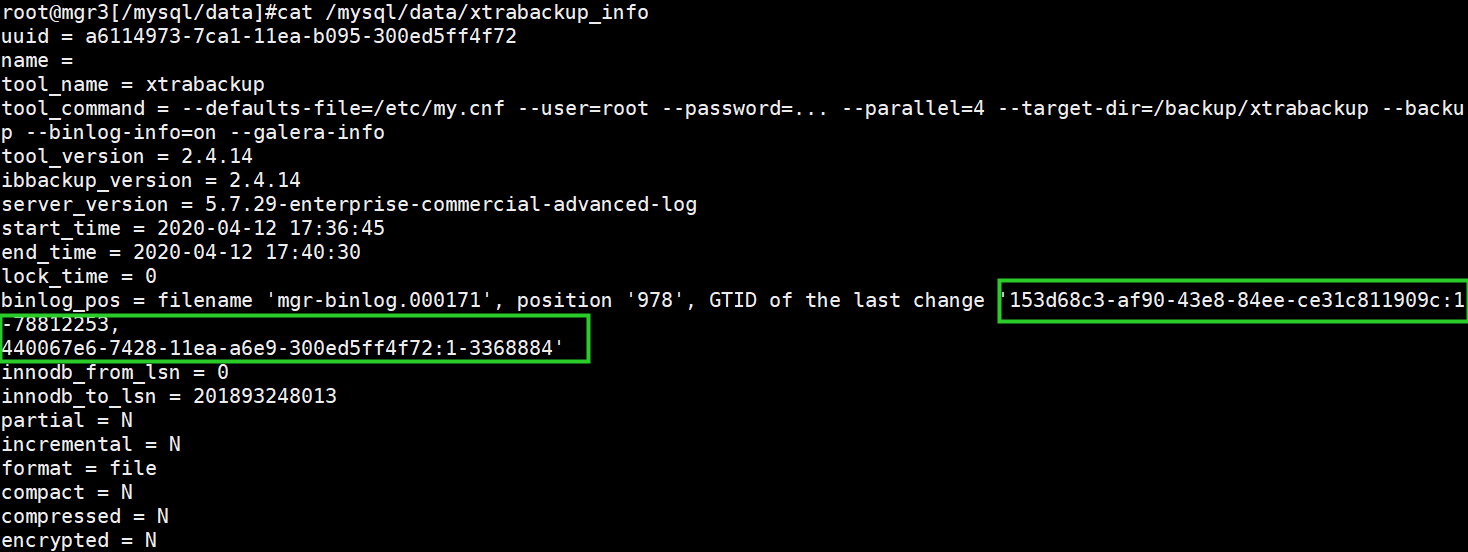

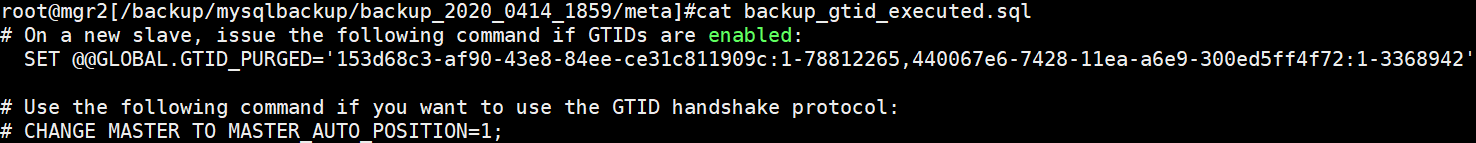

mgr的恢复主要是基于binlog和gtid,由于xtrabackup恢复的gtid_purged与实际不符,需要手动设gtid_purged

cat /mysql/data/xtrabackup_info

设gtid_purged之前先将gtid_executed置空:

reset master;

set GLOBAL gtid_purged='153d68c3-af90-43e8-84ee-ce31c811909c:1-78812253,440067e6-7428-11ea-a6e9-300ed5ff4f72:1-3368884'

start group_replication;

online说明状态正常:

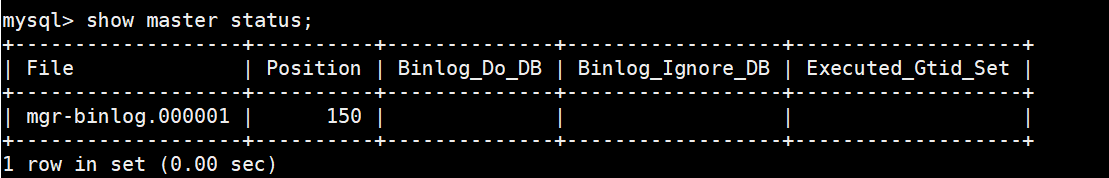

(3)mysqlbackup新增节点:相较于xtrabackup,mysql原生的备份工具,mysqlbackup对于mgr的新增节点更为友好(不需要手动设置gtid_purged),备份恢复的速度也快于xtrabackup

备份:

mysqlbackup --defaults-file=/etc/my.cnf --backup-image=my.mbi_`date +%Y_%m%d_%H%M` --backup-dir=/backup/mysqlbackup/backup_`date +%Y_%m%d_%H%M` --user=root -p123 --read-threads=3 --process-threads=8 --write-threads=3 --compress backup-to-image

可以看出来,在备份的时候生成了一个backup_gtid_executed.sql,应该是在恢复时自动执行的

将备份scp到新增节主机上,执行恢复

cd /backup/mysqlbackup/backup_2020_0414_1859

scp -r * mgr1:/backup/mysqlbackup

恢复:

mysqlbackup --defaults-file=/etc/my.cnf --backup-image=my.mbi_2020_0414_1859 --backup-dir=/backup/mysqlbackup/ --uncompress copy-back-and-apply-log --force

chown -R mysql.mysql /mysql

启动mysqld,service mysqld start

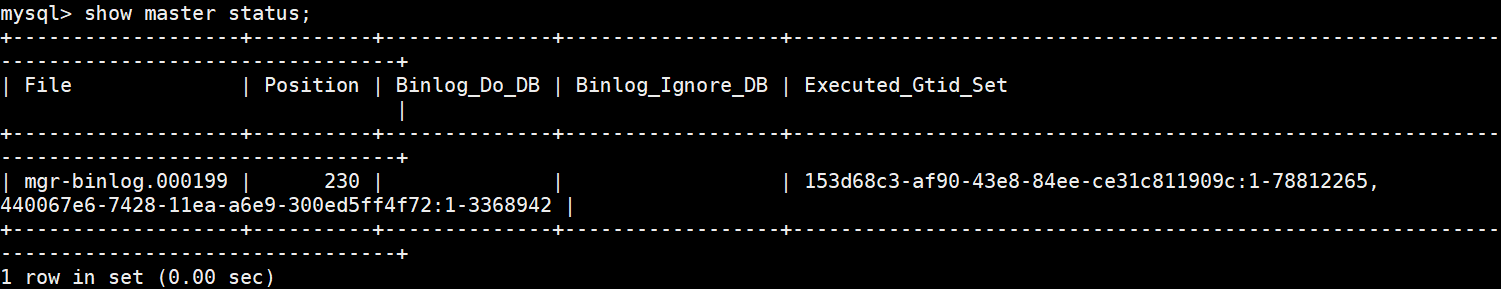

show master status;

可以看到GLOBAL.GTID_PURGED已经被修改正确

start group_replication;

online说明状态正常:

411

411

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?