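For details on setting up the hadoop environment, see the first post in the hadoop series (the hadoop configuration directly affects whether this program runs).

In addition, running a mapreduce program on windows requires hadoop.dll and winutils.exe, available from https://github.com/steveloughran/winutils

This example uses hadoop2.8.3. Copy the hadoop.dll and winutils.exe for that version into the bin directory of the local hadoop folder, and also copy hadoop.dll into C:\Windows\System32. The local hadoop needs no changes to the configuration under its etc directory, but the Windows environment variables HADOOP_HOME and path must be set and picked up by eclipse.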
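Before launching the job from eclipse, it can help to confirm that the two native files are actually where the setup above expects them. The following is only a minimal sketch: the class name `HadoopWindowsCheck` and the method `missingNativeFiles` are hypothetical helpers introduced for illustration, not part of hadoop. It assumes HADOOP_HOME points at the local hadoop folder.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper (not part of hadoop): checks the Windows prerequisites.
class HadoopWindowsCheck {

    /** Returns the names of the required files that are missing from hadoopHome/bin. */
    static List<String> missingNativeFiles(Path hadoopHome) {
        List<String> missing = new ArrayList<>();
        for (String name : new String[] {"hadoop.dll", "winutils.exe"}) {
            if (!Files.isRegularFile(hadoopHome.resolve("bin").resolve(name))) {
                missing.add(name);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        String home = System.getenv("HADOOP_HOME");
        if (home == null || home.isEmpty()) {
            System.out.println("HADOOP_HOME is not set");
            return;
        }
        List<String> missing = missingNativeFiles(Paths.get(home));
        System.out.println(missing.isEmpty()
                ? "bin directory contains hadoop.dll and winutils.exe"
                : "Missing from bin: " + missing);
    }
}
```

If eclipse was already open when the environment variables were changed, it most likely needs a restart before the new values are visible to programs launched from it.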

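Data preparation:

    [hadoop@yourname ~]$ hadoop dfs -mkdir /wordcount
    [hadoop@yourname ~]$ hadoop dfs -mkdir /wordcount/input
    [hadoop@yourname ~]$ hadoop dfs -copyFromLocal test.txt /wordcount/input/

For yourname, see the first post in the hadoop series. hadoop is the user name used to log in to the linux system, and ~ refers to the /home/hadoop directory. test.txt is located in /home/hadoop and is uploaded to the /wordcount/input/ directory in hdfs.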

test.txt

    test hadoop hello hadoop

WordCount.java

    package com.hadoop.test;

    import java.io.IOException;
    import java.util.StringTokenizer;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class WordCount {

        public static class WordCountMapper extends Mapper<Object, Text, Text, IntWritable> {

            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            // Emit (word, 1) for every whitespace-separated token in the line.
            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                StringTokenizer itr = new StringTokenizer(value.toString());
                while (itr.hasMoreTokens()) {
                    word.set(itr.nextToken());
                    context.write(word, one);
                }
            }
        }

        public static class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

            private IntWritable result = new IntWritable();

            // Sum all counts received for one word.
            @Override
            protected void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable value : values) {
                    sum += value.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception {
            String input = "hdfs://192.168.1.101:9000/wordcount/input";
            String output = "hdfs://192.168.1.101:9000/wordcount/output";

            Configuration conf = new Configuration();
            // These settings are required.
            conf.set("mapreduce.framework.name", "yarn");
            conf.set("yarn.resourcemanager.hostname", "192.168.1.101");
            conf.set("fs.defaultFS", "hdfs://192.168.1.101:9000/");
            conf.set("mapreduce.app-submission.cross-platform", "true");
            conf.set("mapreduce.jobhistory.address", "192.168.1.101:10020");

            Job job = Job.getInstance(conf);
            job.setJarByClass(WordCount.class);
            job.setJar("E:/wordcount.jar");
            job.setJobName("WordCount");

            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            job.setMapperClass(WordCountMapper.class);
            job.setCombinerClass(WordCountReducer.class);
            job.setReducerClass(WordCountReducer.class);

            FileInputFormat.addInputPath(job, new Path(input));
            FileOutputFormat.setOutputPath(job, new Path(output));

            // Exit with a non-zero status if the job fails.
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
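To see what the mapper and reducer compute without needing a cluster, the same logic can be imitated in plain Java. This is only an illustrative sketch under stated assumptions: `WordCountSketch` and its two methods are hypothetical names, there is no hadoop dependency, and the grouping that hadoop performs during the shuffle is approximated here with a sorted `TreeMap`.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.StringTokenizer;
import java.util.TreeMap;

// Hypothetical, hadoop-free imitation of the WordCount data flow.
class WordCountSketch {

    /** Map phase: emit one (word, 1) pair per token, as WordCountMapper does. */
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        StringTokenizer itr = new StringTokenizer(line);
        while (itr.hasMoreTokens()) {
            pairs.add(new AbstractMap.SimpleEntry<>(itr.nextToken(), 1));
        }
        return pairs;
    }

    /** Shuffle and reduce: group pairs by word (sorted) and sum the counts. */
    static Map<String, Integer> reduce(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            counts.merge(pair.getKey(), pair.getValue(), Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Same content as test.txt in this post.
        System.out.println(reduce(map("test hadoop hello hadoop")));
        // prints {hadoop=2, hello=1, test=1}
    }
}
```

Because summing is associative and commutative, the same reducer class can also serve as the combiner, which is what `job.setCombinerClass(WordCountReducer.class)` relies on.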

If conf.set("mapreduce.jobhistory.address", "192.168.1.101:10020"); is not configured, the following exception is thrown:

    java.io.IOException: java.net.ConnectException: Call From yourname/192.168.182.100 to 0.0.0.0:10020 failed on connection exception: java.net.ConnectException: Connection refused

If the settings below are not specified, the mapreduce job does not run on the remote linux machine (no run record appears at http://192.168.1.101:8088/cluster):

    conf.set("mapreduce.framework.name", "yarn");
    conf.set("yarn.resourcemanager.hostname", "192.168.1.101");
    conf.set("fs.defaultFS", "hdfs://192.168.1.101:9000/");
    conf.set("mapreduce.app-submission.cross-platform", "true"); // enable remote cross-platform submission
    conf.set("mapreduce.jobhistory.address", "192.168.1.101:10020");

job.setJar("E:/wordcount.jar"); sets the jar file. Before running the program, package the application and place the jar at that location, otherwise a java.io.FileNotFoundException is thrown.
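Since the same address appears in four of the five settings, one option is to derive them all from a single host string. The sketch below is an assumption-laden illustration rather than anything hadoop provides: `ClusterSettings.forHost` is a hypothetical helper, and the ports 9000 and 10020 are simply the ones used in this post.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper: builds the five settings from this post for one host.
class ClusterSettings {

    static Map<String, String> forHost(String host) {
        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("mapreduce.framework.name", "yarn");
        settings.put("yarn.resourcemanager.hostname", host);
        settings.put("fs.defaultFS", "hdfs://" + host + ":9000/");      // port as used in this post
        settings.put("mapreduce.app-submission.cross-platform", "true");
        settings.put("mapreduce.jobhistory.address", host + ":10020");  // port as used in this post
        return settings;
    }

    public static void main(String[] args) {
        for (Map.Entry<String, String> e : forHost("192.168.1.101").entrySet()) {
            System.out.println(e.getKey() + " = " + e.getValue());
        }
    }
}
```

In WordCount.main, each entry could then be applied with `conf.set(e.getKey(), e.getValue())` in place of the five separate calls.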

Running mapreduce: start the services from the sbin directory:

    start-dfs.sh
    start-yarn.sh
    mr-jobhistory-daemon.sh start historyserver

Then, in eclipse, right-click the class and choose Run on Hadoop.

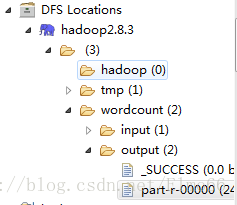

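View the results through DFS Locations:

In eclipse, double-click part-r-00000 to open the result (from the command line: hadoop dfs -cat /wordcount/output/part-r-00000):

    hadoop 2
    hello 1
    test 1

To run the job more than once, delete the output directory before each run (for example with hadoop dfs -rm -r /wordcount/output).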
