Overview

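A Flink streaming job runs continuously. It uses State, and every result depends on the previous State, so whenever the job is restarted it has to be given a CheckPoint or SavePoint path to restore from. The state backend is configured as RocksDBStateBackend with the checkpoint path hdfs:///flink/checkpoints/pack-download-streaming. The last few times the job ran into problems, it could be restored directly from the path of the most recent CheckPoint.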

This time the job crashed, and the Active HDFS NameNode had also switched from node 110 to node 107. The restore path I had originally written was hdfs://192.168.2.110:8020/flink/checkpoints/pack-download-streaming/6d2efb85e595f43d34e66245fb95e32a/chk-8181, so getting "Operation category READ is not supported in state standby" was understandable. But after changing the restore path to hdfs:///flink/checkpoints/pack-download-streaming/6d2efb85e595f43d34e66245fb95e32a/chk-8181, and even to hdfs://192.168.2.107:8020/flink/checkpoints/pack-download-streaming/6d2efb85e595f43d34e66245fb95e32a/chk-8181, the job still failed with exactly the same error. I even tried copying core-site.xml and hdfs-site.xml in, and that did not help either.
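The three restore paths above differ only in their authority part (the host:port after hdfs://). A minimal Python sketch to make that difference explicit; the helper name pinned_namenode is made up for illustration. A path with an empty authority, such as hdfs:///..., is resolved by the Hadoop client through fs.defaultFS in whatever configuration it finds, while a path with an IP is pinned to that one NameNode.

```python
from typing import Optional
from urllib.parse import urlparse


def pinned_namenode(uri: str) -> Optional[str]:
    """Return the host:port a URI names explicitly, or None if it has no authority.

    pinned_namenode is a hypothetical helper for illustration, not a Flink or Hadoop API.
    """
    # urlparse puts everything between "//" and the next "/" into netloc.
    return urlparse(uri).netloc or None


suffix = "/flink/checkpoints/pack-download-streaming/6d2efb85e595f43d34e66245fb95e32a/chk-8181"
for prefix in ("hdfs://192.168.2.110:8020", "hdfs://", "hdfs://192.168.2.107:8020"):
    print(prefix + suffix, "->", pinned_namenode(prefix + suffix))
```

Note that this only describes the path passed on the command line; it says nothing about paths stored inside the checkpoint itself.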

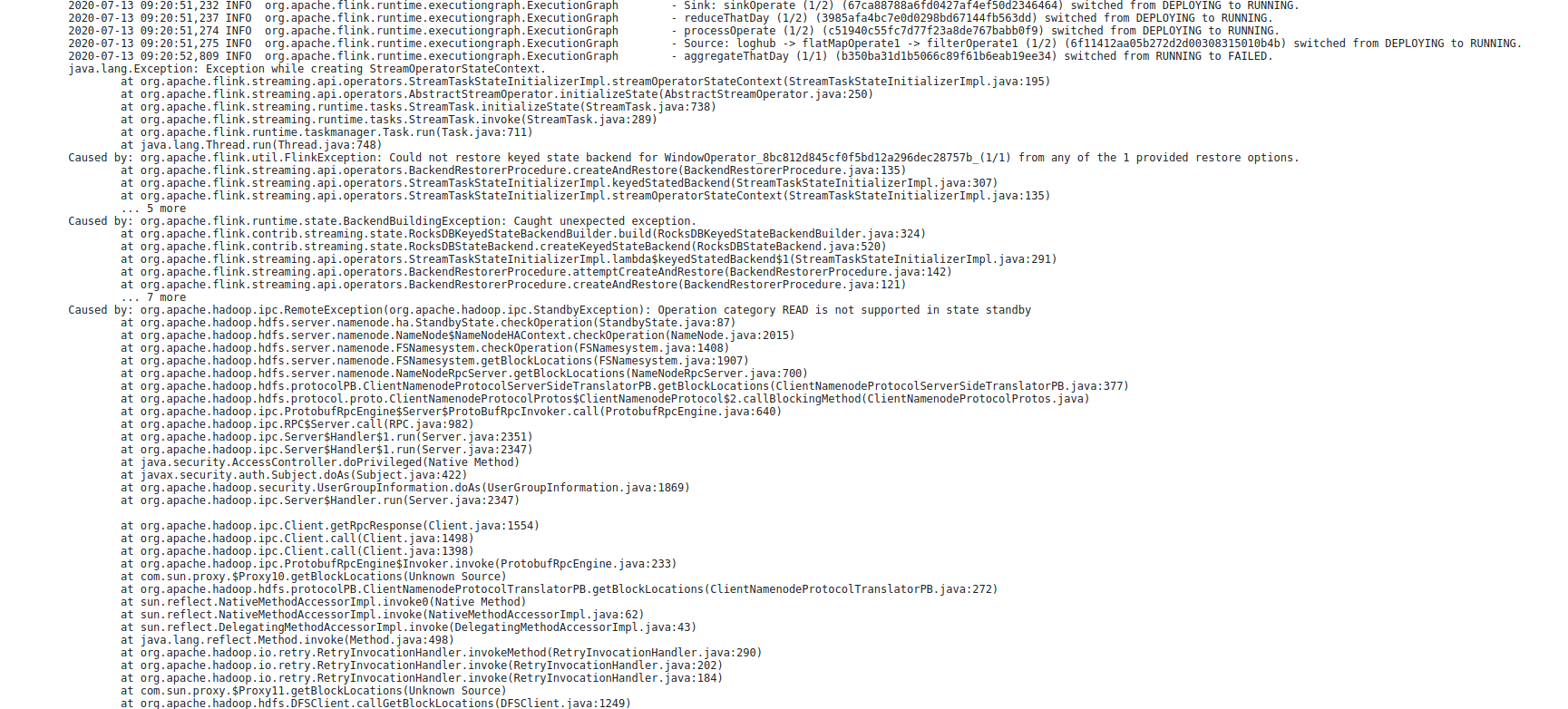

The error looks roughly like this:

java.lang.Exception: Exception while creating StreamOperatorStateContext.

at org.apache.flink.streaming.api.operators.StreamTaskStateInitializerImpl.streamOperatorStateContext(StreamTaskStateInitializerImpl.java:195)

at org.apache.flink.streaming.api.operators.AbstractStreamOperator.initializeState(AbstractStreamOperator.java:250)

at org.apache.flink.streaming.runtime.tasks.StreamTask.initializeState(StreamTask.java:738)

at org.apache.flink.streaming.runtime.tasks.StreamTask.invoke(StreamTask.java:289)

at org.apache.flink.runtime.taskmanager.Task.run(Task.java:711)

at java.lang.Thread.run(Thread.java:748)

Caused by: org.apache.flink.util.FlinkException: Could not restore keyed state backend for WindowOperator_8bc812d845cf0f5bd12a296dec28757b_(1/1) from any of the 1 provided restore options.

at org.apache.flink.streaming.api.operators.BackendRestorerProcedure.createAndRestore(BackendRestorerProcedure.java:135)

at org.apache.flink.streaming.api.operators.StreamTaskStateInitializerImpl.keyedStatedBackend(StreamTaskStateInitializerImpl.java:307)

at org.apache.flink.streaming.api.operators.StreamTaskStateInitializerImpl.streamOperatorStateContext(StreamTaskStateInitializerImpl.java:135)

... 5 more

Caused by: org.apache.flink.runtime.state.BackendBuildingException: Caught unexpected exception.

at org.apache.flink.contrib.streaming.state.RocksDBKeyedStateBackendBuilder.build(RocksDBKeyedStateBackendBuilder.java:324)

at org.apache.flink.contrib.streaming.state.RocksDBStateBackend.createKeyedStateBackend(RocksDBStateBackend.java:520)

at org.apache.flink.streaming.api.operators.StreamTaskStateInitializerImpl.lambda$keyedStatedBackend$1(StreamTaskStateInitializerImpl.java:291)

at org.apache.flink.streaming.api.operators.BackendRestorerProcedure.attemptCreateAndRestore(BackendRestorerProcedure.java:142)

at org.apache.flink.streaming.api.operators.BackendRestorerProcedure.createAndRestore(BackendRestorerProcedure.java:121)

... 7 more

Caused by: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.ipc.StandbyException): Operation category READ is not supported in state standby

at org.apache.hadoop.hdfs.server.namenode.ha.StandbyState.checkOperation(StandbyState.java:87)

at org.apache.hadoop.hdfs.server.namenode.NameNode$NameNodeHAContext.checkOperation(NameNode.java:2015)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOperation(FSNamesystem.java:1408)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getBlockLocations(FSNamesystem.java:1907)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.getBlockLocations(NameNodeRpcServer.java:700)


Solution

Option 1: switch the Active HDFS NameNode from 107 back to 110. That would certainly work, but... actually, there is no "but".

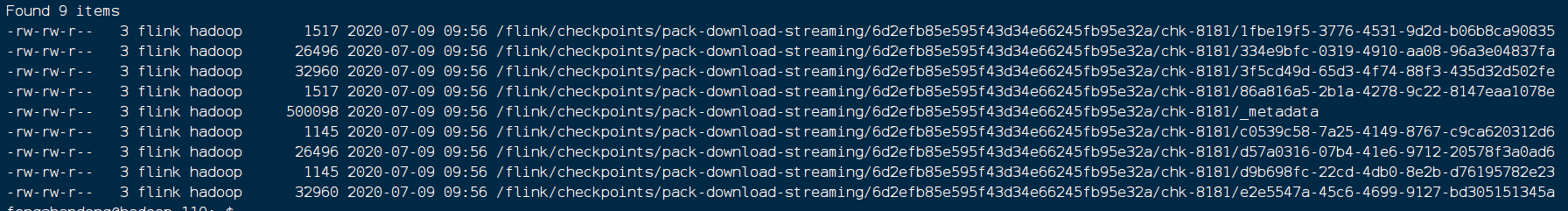

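Option 2: no matter how I pointed the restore path at 107, it did not work, so my guess was that the IP address was hard-coded somewhere inside the checkpoint, which would explain why the externally specified restore path made no difference. That sounded both plausible and a bit far-fetched, so I went to take a look at the CheckPoint's _metadata file.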

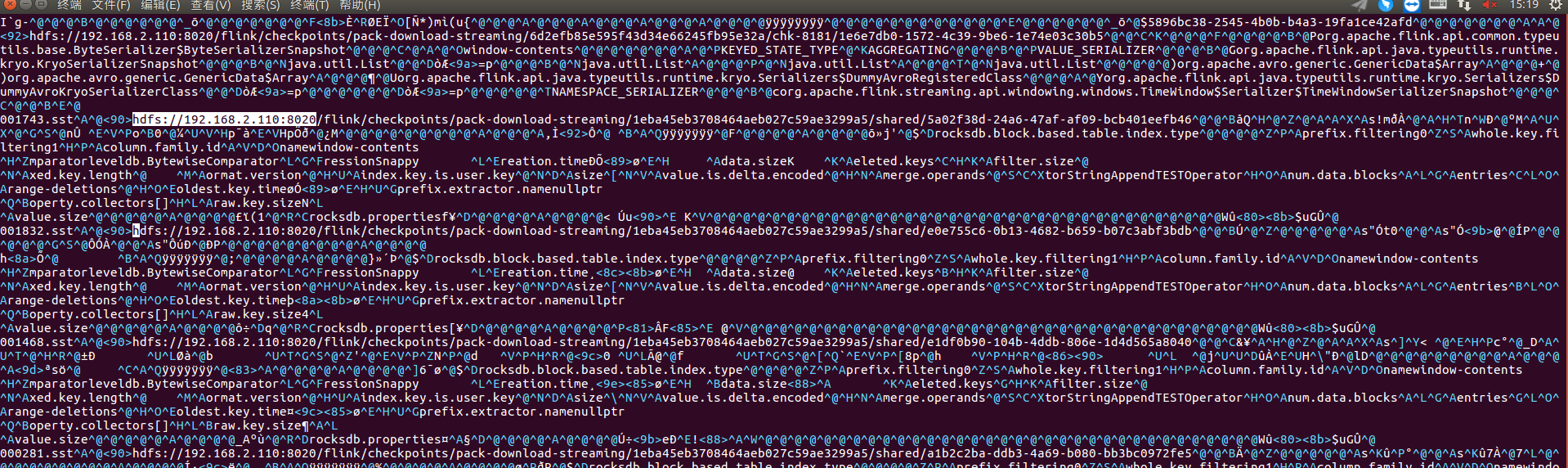

I downloaded it to my local machine and opened it:

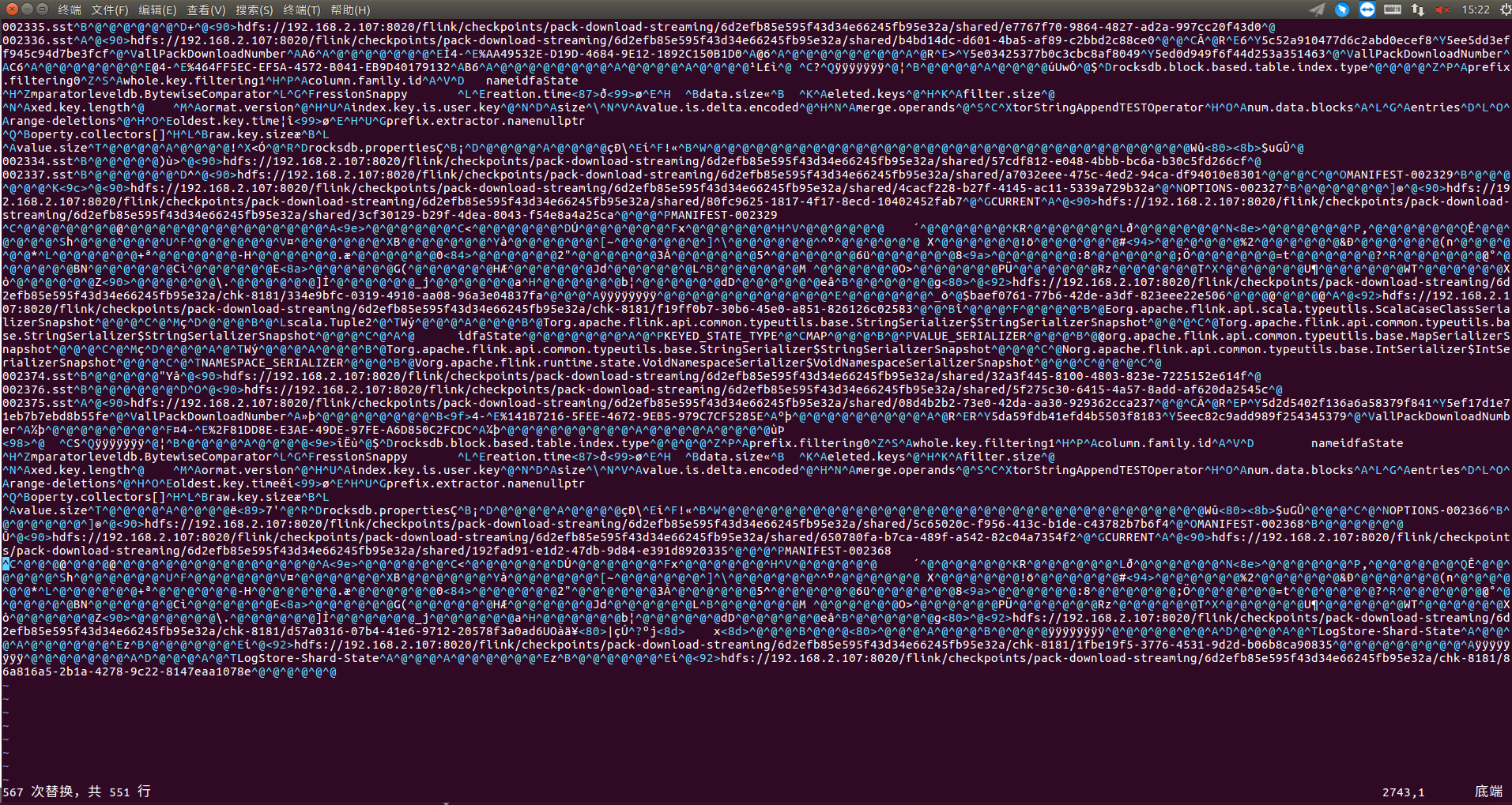
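Since _metadata is a binary file, a scripted scan is an alternative to reading it by eye. The sketch below is only an illustration: the function name embedded_authorities and the regular expression are my own, and it assumes the paths are stored as plain ASCII hdfs:// URIs, which is what the opened file suggests.

```python
import re

# Matches an hdfs:// URI with an explicit authority. The path part stops at
# the first byte that is not a printable, non-space ASCII character.
HDFS_URI = re.compile(rb"hdfs://[0-9A-Za-z.\-]+(?::\d+)?/[\x21-\x7e]*")


def embedded_authorities(blob: bytes) -> dict:
    """Count how often each host:port appears in hdfs:// URIs inside raw bytes.

    Hypothetical helper for illustration; it does not parse Flink's metadata format.
    """
    counts = {}
    for match in HDFS_URI.finditer(blob):
        # Drop the scheme, then keep everything up to the first slash.
        authority = match.group(0)[len(b"hdfs://"):].split(b"/", 1)[0].decode("ascii")
        counts[authority] = counts.get(authority, 0) + 1
    return counts
```

Calling it as embedded_authorities(open("_metadata", "rb").read()) on the downloaded file would list every NameNode address the checkpoint refers to, together with how many times it occurs.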

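I could not make sense of most of it, but the IP address was clearly visible, so I simply edited the metadata file with the vi/vim substitution command :%s/192.168.2.110/192.168.2.107/g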

The substitution succeeded.
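The same substitution can be scripted without opening the binary file in a text editor. This is a rough sketch, not a supported Flink procedure; rewrite_namenode is a made-up name. It insists that the old and new strings have the same byte length, on the assumption that the file stores each path behind a length prefix that a longer or shorter replacement would invalidate. Both 192.168.2.110 and 192.168.2.107 are 13 bytes, so the edit in this post satisfies that.

```python
from pathlib import Path


def rewrite_namenode(path, old: bytes, new: bytes) -> int:
    """Replace old with new in a binary file; return the number of replacements.

    Hypothetical helper for illustration, not part of Flink.
    """
    if len(old) != len(new):
        # Assumption: paths are length-prefixed, so the byte count must not change.
        raise ValueError("old and new must have the same byte length")
    file = Path(path)
    data = file.read_bytes()
    hits = data.count(old)
    if hits:
        # Keep an untouched copy next to the original before overwriting it.
        file.with_name(file.name + ".bak").write_bytes(data)
        file.write_bytes(data.replace(old, new))
    return hits
```

For the file in this post the call would be rewrite_namenode("chk-8181/_metadata", b"192.168.2.110", b"192.168.2.107"), run against the local copy before uploading it.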

After saving, I uploaded the 6d2efb85e595f43d34e66245fb95e32a folder to the data directory on HDFS and set the restore path to hdfs:///data/6d2efb85e595f43d34e66245fb95e32a/chk-8181
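The upload and restart can be written down as two commands. The sketch below only builds the argument lists and does not run anything; restore_commands and the jar name in the usage note are placeholders of mine, and the post does not say how the job is actually submitted, so any extra flink run options (for example a YARN target) would still need to be added.

```python
def restore_commands(local_dir: str, hdfs_parent: str, checkpoint: str, job_jar: str):
    """Build the upload and restart commands as argument lists.

    Hypothetical helper for illustration; job_jar stands in for the real job artifact.
    """
    # The folder name is the Flink job ID under which the checkpoint was written.
    job_id = local_dir.rstrip("/").split("/")[-1]
    # No authority in the URI, so the client resolves it through fs.defaultFS.
    restore_path = f"hdfs://{hdfs_parent.rstrip('/')}/{job_id}/{checkpoint}"
    upload = ["hdfs", "dfs", "-put", local_dir, hdfs_parent]
    # -s (--fromSavepoint) also accepts the path of a retained checkpoint.
    run = ["flink", "run", "-s", restore_path, job_jar]
    return upload, run
```

For example, restore_commands("./6d2efb85e595f43d34e66245fb95e32a", "/data", "chk-8181", "my-job.jar") yields an hdfs dfs -put command followed by a flink run -s command pointing at hdfs:///data/6d2efb85e595f43d34e66245fb95e32a/chk-8181. The lists can be passed to subprocess.run once they have been checked.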

After that the job started normally, although directly editing the metadata file still feels a bit odd to me.
