Core method

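The key to passing a function pointer to a CUDA kernel has three steps. First, store the address of each `__device__` function in a statically initialized `__device__` pointer variable. Next, copy that variable's value to the host with `cudaMemcpyFromSymbol`. Finally, pass the copied pointer to the kernel as an ordinary argument. Host code is not allowed to take the address of a `__device__` function directly, and if you try to work around this you will almost certainly run into all kinds of strange errors.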


Template function pointers

```cuda
#include <iostream>
#include <cuda_runtime.h>

// The alias template func_t needs C++11; the variable templates
// p_add_func / p_mul_func below need C++14. Older compilers report errors.
template <typename T>
using func_t = T (*)(T, T);

template <typename T>
__device__ T add_func(T x, T y)
{
    return x + y;
}

template <typename T>
__device__ T mul_func(T x, T y)
{
    return x * y;
}

// Required for passing function pointers as kernel arguments:
// static device-side pointers to the device functions
template <typename T>
__device__ func_t<T> p_add_func = add_func<T>;

template <typename T>
__device__ func_t<T> p_mul_func = mul_func<T>;

template <typename T>
__global__ void kernel(func_t<T> op, T * d_x, T * d_y, T * result)
{
    *result = (*op)(*d_x, *d_y);
}

template <typename T>
void test(T x, T y)
{
    func_t<T> h_add_func;
    func_t<T> h_mul_func;

    T * d_x, * d_y;
    cudaMalloc(&d_x, sizeof(T));
    cudaMalloc(&d_y, sizeof(T));
    cudaMemcpy(d_x, &x, sizeof(T), cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, &y, sizeof(T), cudaMemcpyHostToDevice);

    T result;
    T * d_result, * h_result;
    cudaMalloc(&d_result, sizeof(T));
    h_result = &result;

    // Copy the device function pointers to the host side
    cudaMemcpyFromSymbol(&h_add_func, p_add_func<T>, sizeof(func_t<T>));
    cudaMemcpyFromSymbol(&h_mul_func, p_mul_func<T>, sizeof(func_t<T>));

    kernel<T><<<1, 1>>>(h_add_func, d_x, d_y, d_result);
    cudaDeviceSynchronize();
    cudaMemcpy(h_result, d_result, sizeof(T), cudaMemcpyDeviceToHost);
    std::cout << "Sum: " << result << std::endl;

    kernel<T><<<1, 1>>>(h_mul_func, d_x, d_y, d_result);
    cudaDeviceSynchronize();
    cudaMemcpy(h_result, d_result, sizeof(T), cudaMemcpyDeviceToHost);
    std::cout << "Product: " << result << std::endl;

    // Release device memory
    cudaFree(d_x);
    cudaFree(d_y);
    cudaFree(d_result);
}

int main()
{
    std::cout << "Testing type int ..." << std::endl;
    test<int>(2, 10);

    std::cout << "Testing type float ..." << std::endl;
    test<float>(2.05f, 10.0f);

    std::cout << "Testing type double ..." << std::endl;
    test<double>(2.05, 10.0);
}
```

Tested with VS2019 + CUDA 10.1:

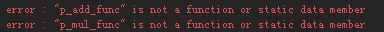
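The example above ignores the return codes of the CUDA runtime calls. That makes the "strange errors" mentioned at the start harder to track down, because a failed `cudaMemcpyFromSymbol` or a bad function pointer only shows up later as wrong output. The following is a minimal sketch of the same template pattern with every call checked. `CUDA_CHECK`, `fetch_device_fn` and `apply_on_device` are hypothetical names introduced here for illustration. They are not part of the original code or of the CUDA API. Like the original, the sketch assumes a C++14-capable toolchain for the variable template.

```cuda
#include <cassert>
#include <cstdio>
#include <cstdlib>
#include <cuda_runtime.h>

// Hypothetical helper macro: print the CUDA error and abort if a runtime call fails.
#define CUDA_CHECK(call)                                              \
    do {                                                              \
        cudaError_t err_ = (call);                                    \
        if (err_ != cudaSuccess) {                                    \
            std::fprintf(stderr, "%s:%d: %s\n", __FILE__, __LINE__,   \
                         cudaGetErrorString(err_));                   \
            std::exit(EXIT_FAILURE);                                  \
        }                                                             \
    } while (0)

template <typename T>
using func_t = T (*)(T, T);

template <typename T>
__device__ T add_func(T x, T y) { return x + y; }

// Variable template (C++14): one static device pointer per type T.
template <typename T>
__device__ func_t<T> p_add_func = add_func<T>;

template <typename T>
__global__ void kernel(func_t<T> op, const T* d_x, const T* d_y, T* result)
{
    *result = (*op)(*d_x, *d_y);
}

// Hypothetical helper: read a __device__ function-pointer variable into host memory.
template <typename F>
F fetch_device_fn(const F& symbol)
{
    F h_fn = nullptr;
    CUDA_CHECK(cudaMemcpyFromSymbol(&h_fn, symbol, sizeof(F)));
    return h_fn;
}

// Hypothetical helper: apply op to (x, y) on the device, checking every step.
template <typename T>
T apply_on_device(func_t<T> op, T x, T y)
{
    T *d_x, *d_y, *d_result;
    CUDA_CHECK(cudaMalloc(&d_x, sizeof(T)));
    CUDA_CHECK(cudaMalloc(&d_y, sizeof(T)));
    CUDA_CHECK(cudaMalloc(&d_result, sizeof(T)));
    CUDA_CHECK(cudaMemcpy(d_x, &x, sizeof(T), cudaMemcpyHostToDevice));
    CUDA_CHECK(cudaMemcpy(d_y, &y, sizeof(T), cudaMemcpyHostToDevice));

    kernel<T><<<1, 1>>>(op, d_x, d_y, d_result);
    CUDA_CHECK(cudaGetLastError());       // errors reported at launch time
    CUDA_CHECK(cudaDeviceSynchronize());  // errors raised while the kernel ran

    T result;
    CUDA_CHECK(cudaMemcpy(&result, d_result, sizeof(T), cudaMemcpyDeviceToHost));
    CUDA_CHECK(cudaFree(d_x));
    CUDA_CHECK(cudaFree(d_y));
    CUDA_CHECK(cudaFree(d_result));
    return result;
}
```

For example, `apply_on_device(fetch_device_fn(p_add_func<int>), 2, 10)` should return 12. Checking `cudaGetLastError()` right after the launch separates launch failures from errors that only appear once the kernel runs.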

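VS2013 + CUDA 6.5 reports errors for the same code. A likely cause is that `p_add_func<T>` and `p_mul_func<T>` are variable templates. Variable templates are a C++14 feature that neither VS2013 nor CUDA 6.5 supports.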
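The sketch below keeps templates but avoids variable templates, which are C++14. Device code is allowed to take the address of a `__device__` function, so a tiny kernel can write that address into device memory, and `cudaMemcpy` brings it back to the host. A class-template `typedef` stands in for the alias template. I have not tested this with VS2013 + CUDA 6.5, so treat it as an assumption. `func_ptr`, `op_kind`, `get_func_ptr`, `fetch_func_ptr` and `run` are hypothetical names introduced for illustration.

```cuda
#include <cassert>
#include <cuda_runtime.h>

// Class-template typedef instead of an alias template, for older compilers.
template <typename T>
struct func_ptr
{
    typedef T (*type)(T, T);
};

template <typename T>
__device__ T add_func(T x, T y) { return x + y; }

template <typename T>
__device__ T mul_func(T x, T y) { return x * y; }

// Hypothetical selector for the operation to fetch.
enum op_kind { OP_ADD, OP_MUL };

// Device code may take the address of a __device__ function;
// this kernel writes that address into device memory.
template <typename T>
__global__ void get_func_ptr(op_kind kind, typename func_ptr<T>::type* out)
{
    if (kind == OP_ADD)
        *out = &add_func<T>;
    else
        *out = &mul_func<T>;
}

// Hypothetical helper: obtain a device function pointer on the host.
template <typename T>
typename func_ptr<T>::type fetch_func_ptr(op_kind kind)
{
    typedef typename func_ptr<T>::type fp_t;
    fp_t* d_fp;
    fp_t h_fp = 0;
    cudaMalloc(&d_fp, sizeof(fp_t));
    get_func_ptr<T><<<1, 1>>>(kind, d_fp);
    // cudaMemcpy waits for the kernel above to finish before copying.
    cudaMemcpy(&h_fp, d_fp, sizeof(fp_t), cudaMemcpyDeviceToHost);
    cudaFree(d_fp);
    return h_fp;
}

template <typename T>
__global__ void kernel(typename func_ptr<T>::type op, const T* d_x, const T* d_y, T* result)
{
    *result = (*op)(*d_x, *d_y);
}

// Hypothetical helper: apply op to (x, y) on the device and return the result.
template <typename T>
T run(typename func_ptr<T>::type op, T x, T y)
{
    T *d_x, *d_y, *d_result;
    cudaMalloc(&d_x, sizeof(T));
    cudaMalloc(&d_y, sizeof(T));
    cudaMalloc(&d_result, sizeof(T));
    cudaMemcpy(d_x, &x, sizeof(T), cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, &y, sizeof(T), cudaMemcpyHostToDevice);
    kernel<T><<<1, 1>>>(op, d_x, d_y, d_result);
    T result;
    cudaMemcpy(&result, d_result, sizeof(T), cudaMemcpyDeviceToHost);
    cudaFree(d_x);
    cudaFree(d_y);
    cudaFree(d_result);
    return result;
}
```

Because the function pointer type appears in a non-deduced context, `T` has to be given explicitly. For example, `run<int>(fetch_func_ptr<int>(OP_MUL), 2, 10)` should return 20. This variant trades the `__device__` pointer variables for one extra kernel launch per fetched pointer.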

Without templates

As a first step, rewrite the example without templates:

```cuda
#include <iostream>
#include "cuda_runtime.h"
#include "device_launch_parameters.h"

typedef float (*func_t)(float, float);

__device__ float add_func(float x, float y)
{
    return x + y;
}

__device__ float mul_func(float x, float y)
{
    return x * y;
}

// Required for passing function pointers as kernel arguments:
// static device-side pointers to the device functions
__device__ func_t p_add_func = add_func;
__device__ func_t p_mul_func = mul_func;

__global__ void kernel(func_t op, float * d_x, float * d_y, float * result)
{
    *result = (*op)(*d_x, *d_y);
}

void test(float x, float y)
{
    func_t h_add_func;
    func_t h_mul_func;

    float * d_x, * d_y;
    cudaMalloc(&d_x, sizeof(float));
    cudaMalloc(&d_y, sizeof(float));
    cudaMemcpy(d_x, &x, sizeof(float), cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, &y, sizeof(float), cudaMemcpyHostToDevice);

    float result;
    float * d_result, * h_result;
    cudaMalloc(&d_result, sizeof(float));
    h_result = &result;

    // Copy the device function pointers to the host side
    cudaMemcpyFromSymbol(&h_add_func, p_add_func, sizeof(func_t));
    cudaMemcpyFromSymbol(&h_mul_func, p_mul_func, sizeof(func_t));

    kernel<<<1, 1>>>(h_add_func, d_x, d_y, d_result);
    cudaDeviceSynchronize();
    cudaMemcpy(h_result, d_result, sizeof(float), cudaMemcpyDeviceToHost);
    std::cout << "Sum: " << result << std::endl;

    kernel<<<1, 1>>>(h_mul_func, d_x, d_y, d_result);
    cudaDeviceSynchronize();
    cudaMemcpy(h_result, d_result, sizeof(float), cudaMemcpyDeviceToHost);
    std::cout << "Product: " << result << std::endl;

    // Release device memory
    cudaFree(d_x);
    cudaFree(d_y);
    cudaFree(d_result);
}

int main()
{
    // The non-template version handles float only
    std::cout << "Testing type float ..." << std::endl;
    test(2.05f, 10.0f);
}
```

The non-template version builds and runs with VS2013 + CUDA 6.5.

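The non-template version can be extended to several operations without one `__device__` variable per function. This is my own extension rather than something from the original. The idea is to keep all the device function pointers in one statically initialized `__device__` array and copy the whole table to the host with a single `cudaMemcpyFromSymbol` call. In this sketch, `d_ops`, `h_ops`, `load_ops` and `apply` are hypothetical names introduced for illustration.

```cuda
#include <cassert>
#include <cuda_runtime.h>

typedef float (*func_t)(float, float);

__device__ float add_func(float x, float y) { return x + y; }
__device__ float mul_func(float x, float y) { return x * y; }

// Static device-side table of function pointers (hypothetical name d_ops),
// initialized the same way as p_add_func / p_mul_func.
__device__ func_t d_ops[2] = { add_func, mul_func };

// Host-side copy of the table, filled by load_ops().
static func_t h_ops[2];

__global__ void kernel(func_t op, const float* d_x, const float* d_y, float* result)
{
    *result = (*op)(*d_x, *d_y);
}

// Copy the whole table to the host with a single cudaMemcpyFromSymbol call.
bool load_ops()
{
    return cudaMemcpyFromSymbol(h_ops, d_ops, sizeof(h_ops)) == cudaSuccess;
}

// Apply the operation stored at h_ops[index] to (x, y) on the device.
float apply(int index, float x, float y)
{
    float *d_x, *d_y, *d_result;
    cudaMalloc(&d_x, sizeof(float));
    cudaMalloc(&d_y, sizeof(float));
    cudaMalloc(&d_result, sizeof(float));
    cudaMemcpy(d_x, &x, sizeof(float), cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, &y, sizeof(float), cudaMemcpyHostToDevice);
    kernel<<<1, 1>>>(h_ops[index], d_x, d_y, d_result);
    float result = 0.0f;
    cudaMemcpy(&result, d_result, sizeof(float), cudaMemcpyDeviceToHost);
    cudaFree(d_x);
    cudaFree(d_y);
    cudaFree(d_result);
    return result;
}
```

After `load_ops()` succeeds, `apply(0, 2.0f, 10.0f)` should give 12 and `apply(1, 2.0f, 10.0f)` should give 20. Adding another operation then only requires one more entry in `d_ops`.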
