先把网页Down下来,看看图片包含在哪个标签里面

简单粗暴的识别方法,用'进行分割

var url = pageHtml.Split('\'');

int index = url[i].IndexOf(".jpeg");

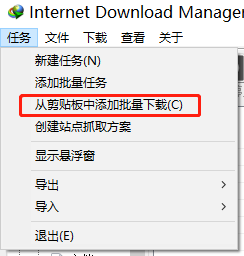

if(index == (url[i].Length - 5))得到的Url,然后拿着Url进行下载即可,有的网站有反扒,不让直接下载怎么办,上工具 internet download manager,复制全部的Url打开工具软件

复制Url后选择,从剪切板中添加批量下载

哇塞,好美

代码在下面

using RestSharp;

using RestSharp.Extensions;

using System;

using System.Collections.Generic;

using System.Drawing;

using System.IO;

using System.Linq;

using System.Net;

using System.Net.Http;

using System.Text;

using System.Threading.Tasks;

namespace PictureSpider

{

public class Program

{

public static void Main(string[] args)

{

try

{

WebClient webClient = new WebClient();

webClient.Credentials = CredentialCache.DefaultCredentials;

webClient.Headers.Add("Accept", "image/gif, image/x-xbitmap, image/jpeg, image/pjpeg, */*");

webClient.Headers.Add("Accept-Language", "zh-CN");

webClient.Headers.Add("Host", "");

webClient.Headers.Add("User-Agent", "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.2; SV1)");

webClient.Headers.Add("Content-Type", "application/x-www-form-urlencoded");

webClient.Credentials = CredentialCache.DefaultCredentials;//获取或设置用于向Internet资源的请求进行身份验证的网络凭据

Byte[] pageData = webClient.DownloadData("https://www.vmgirls.com/15298.html"); //从指定网站下载数据

string pageHtml = Encoding.Default.GetString(pageData); //如果获取网站页面采用的是GB2312,则使用这句

using (System.IO.StreamWriter file = new System.IO.StreamWriter(@"html.txt", true))

{

file.WriteLine(pageHtml);

}

//找到'进行分割

var url = pageHtml.Split('\'');

List<string> urls = new List<string>();

for(int i=0;i< url.Length;i++)

{

int index = url[i].IndexOf(".jpeg");

if(index == (url[i].Length - 5))

{

string pictrueUrl = "https:" + url[i];

urls.Add(pictrueUrl);

Console.WriteLine(pictrueUrl);

try

{

byte[] Bytes = webClient.DownloadData(pictrueUrl);

using (MemoryStream ms = new MemoryStream(Bytes))

{

Image outputImg = Image.FromStream(ms);

outputImg.Save($"{i.ToString()}.jpeg");

}

}

catch (Exception ex)

{

Console.WriteLine(ex.ToString());

}

}

}

using (System.IO.StreamWriter file = new System.IO.StreamWriter(@"url.txt", true))

{

for (int i = 0; i < urls.Count(); i++)

{

file.WriteLine(urls[i]);

}

}

}

catch (WebException webEx)

{

Console.WriteLine(webEx.Message.ToString());

}

Console.ReadLine();

}

}

}

735

735

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?