从零实现

# 导入包和模块

%matplotlib inline

import torch

import torch.nn as nn

import numpy as np

import sys

sys.path.append("..")

import d2lzh_pytorch as d2l

print(torch.__version__)

def dropout(X, drop_prob):

X = X.float()

assert 0 <= drop_prob <= 1

# drop_prob的值必须在0-1之间,和数据库中的断言一个意思

keep_prob = 1 -drop_prob # 保存元素概率

# 这种情况下把全部元素丢弃

if keep_prob == 0: #keep_prob=0等价于1-p=0

return torch.zeros_like(X)

mask = (torch.rand(X.shape) < keep_prob).float()

# torch.rand()均匀分布,小于号<判别,若真,返回1,否则返回0

return mask * X / keep_prob # 重新计算新的隐藏单元的公式实现

定义模型参数

num_inputs, num_outputs, num_hiddens1, num_hiddens2 = 784, 10, 256, 256

# 设定各层超参数

W1 = torch.tensor(np.random.normal(0, 0.01, size=(num_inputs, num_hiddens1)),

dtype=torch.float, requires_grad=True)

b1 = torch.zeros(num_hiddens1, requires_grad=True)

W2 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens1, num_hiddens2)),

dtype=torch.float, requires_grad=True)

b2 = torch.zeros(num_hiddens2, requires_grad=True)

W3 = torch.tensor(np.random.normal(0, 0.01, size=(num_hiddens2, num_outputs)),

dtype=torch.float, requires_grad=True)

b3 = torch.zeros(num_outputs, requires_grad=True)

params = [W1, b1, W2, b2, W3, b3]

定义模型

drop_prob1, drop_prob2 = 0.2, 0.5 # 设定丢弃概率

def net(X, is_training=True):

X = X.view(-1, num_inputs)

H1 = (torch.matmul(X, W1) + b1).relu()

if is_training: # 只在训练时使用丢弃法

H1 = dropout(H1, drop_prob1) # 在第一层隐藏层使用丢弃法

H2 = (torch.matmul(H1, W2) + b2).relu()

if is_training:

H2 = dropout(H2, drop_prob2) # 在第一层隐藏层使用丢弃法

return torch.matmul(H2, W3) + b3

训练和测试模型

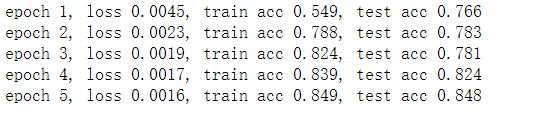

num_epochs, lr, batch_size = 5, 100.0, 256

# 设定训练周期,学习率和批量大小

loss = torch.nn.CrossEntropyLoss()

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs, batch_size, params, lr)

简洁实现

# 声明各层网络,Dropout层不在测试时发挥作用

net = nn.Sequential(d2l.FlattenLayer(),

nn.Linear(num_inputs, num_hiddens1),

nn.ReLU(),

nn.Dropout(drop_prob1),

nn.Linear(num_hiddens1, num_hiddens2),

nn.ReLU(),

nn.Dropout(drop_prob2),

nn.Linear(num_hiddens2, 10)

)

# 初始化参数

for param in net.parameters():

nn.init.normal_(param, mean=0, std=0.1)

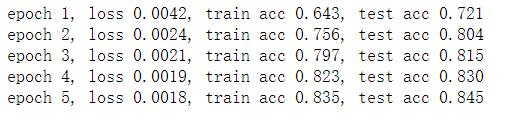

训练和测试模型

# 定义优化器

optimizer = torch.optim.SGD(net.parameters(), lr=0.5)

# 训练模型

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs,

batch_size,None, None, optimizer)

# 训练集准确率普遍高于测试集,但两者相差不大,符合实际情况

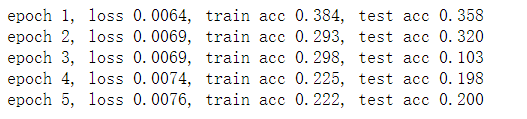

添加权重衰减系数 weight_decay

# 定义优化器

optimizer = torch.optim.SGD(net.parameters(), weight_decay=0.1, lr=0.5)

# 训练模型

d2l.train_ch3(net, train_iter, test_iter, loss, num_epochs,

batch_size,None, None, optimizer)

# 准确率急剧下降,说明丢弃法和权重衰减同时使用对模型复杂度降低过于巨大

470

470

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?