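Software package for this example: https://pan.baidu.com/s/1zABhjj2umontXe2CYBW_DQ

Extraction code: 1123 (if the link stops working, leave a comment below and I will update it promptly).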

(1) Compiling and packaging with sbt

1. Create the directories:

[hadoop@master ~]$ mkdir ./sparkapp

[hadoop@master ~]$ mkdir -p ./sparkapp/src/main/scala
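Because `mkdir -p` creates any missing parent directories, the second command alone is enough to build the whole tree. A minimal sketch, run in a scratch directory so it does not touch your home directory (the `demo_root` variable is a hypothetical name used only here):

```shell
# Scratch directory so the example leaves ~ alone (demo_root is a hypothetical name).
demo_root="$(mktemp -d)"

# -p creates sparkapp, src, main and scala in one go,
# and does nothing if they already exist.
mkdir -p "$demo_root/sparkapp/src/main/scala"

# List the directories that were created.
(cd "$demo_root" && find sparkapp -type d | sort)
```

Running both commands as in the tutorial is harmless; the first one is simply redundant.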

2. Edit SimpleApp.scala in the ~/sparkapp/src/main/scala directory:

/* SimpleApp.scala */
import org.apache.spark.SparkContext
import org.apache.spark.SparkContext._
import org.apache.spark.SparkConf

object SimpleApp {
  def main(args: Array[String]): Unit = {
    // Should be some file on your system
    val logFile = "file:///usr/local/spark/README.md"
    val conf = new SparkConf().setAppName("Simple Application")
    val sc = new SparkContext(conf)
    // Read the file with at least 2 partitions and cache it, since it is scanned twice
    val logData = sc.textFile(logFile, 2).cache()
    val numAs = logData.filter(line => line.contains("a")).count()
    val numBs = logData.filter(line => line.contains("b")).count()
    println("Lines with a: %s, Lines with b: %s".format(numAs, numBs))
    // Release the Spark resources once the counts are printed
    sc.stop()
  }
}

This program counts the lines in /usr/local/spark/README.md that contain "a" and the lines that contain "b", then prints both counts.
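As a sanity check that does not need Spark, `grep -c` counts matching lines, which is the same quantity the program computes. A small sketch against a sample file (the sample text is made up for illustration; point `grep` at /usr/local/spark/README.md to compare with the real run):

```shell
# Hypothetical sample file standing in for README.md.
sample="$(mktemp)"
printf '%s\n' 'apache spark' 'big data' 'hello' 'lab' > "$sample"

# grep -c prints the number of matching lines, not the number of matches;
# -F treats the pattern as a literal string, like String.contains in the Scala code.
num_as=$(grep -cF 'a' "$sample")
num_bs=$(grep -cF 'b' "$sample")

echo "Lines with a: $num_as, Lines with b: $num_bs"
```

For this sample the script prints `Lines with a: 3, Lines with b: 2`.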

3. Compile and package

cd ~

vim ./sparkapp/simple.sbt

File contents:

name := "Simple Project"

version := "1.0"

scalaVersion := "2.11.8"

libraryDependencies += "org.apache.spark" %% "spark-core" % "2.1.0"

Check the application's file structure:

cd ~/sparkapp/

find .
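The listing should show simple.sbt at the project root and SimpleApp.scala under src/main/scala. The same check can be scripted; the sketch below builds a throwaway copy of the layout and tests it (`demo_app` and `check_layout` are hypothetical names, not part of sbt):

```shell
# Scratch project mirroring the layout built in steps 1-3 (demo_app is a hypothetical name).
demo_app="$(mktemp -d)/sparkapp"
mkdir -p "$demo_app/src/main/scala"
touch "$demo_app/simple.sbt" "$demo_app/src/main/scala/SimpleApp.scala"

# check_layout is a hypothetical helper: it succeeds only if both files
# this tutorial relies on are where they should be.
check_layout() {
  [ -f "$1/simple.sbt" ] && [ -f "$1/src/main/scala/SimpleApp.scala" ]
}

if check_layout "$demo_app"; then
  layout_status="ok"
else
  layout_status="missing files"
fi
echo "$layout_status"
```

On the real project, `check_layout ~/sparkapp` would do the same job before packaging.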

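Package the application (stay online: sbt downloads the required dependencies automatically, which takes a while the first time; later builds are much faster):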

cd ~/sparkapp

/usr/local/sbt/sbt package
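The jar that `sbt package` produces takes its name from simple.sbt: the project name is lowercased with spaces turned into hyphens, followed by the Scala binary version and the project version. A simplified sketch of that naming rule (it covers only the lowercase and space handling needed for this project, not every case sbt handles):

```shell
# Values copied from simple.sbt.
name="Simple Project"
version="1.0"
scala_version="2.11.8"

# Simplified name normalization: lowercase, spaces become hyphens.
normalized=$(printf '%s' "$name" | tr '[:upper:]' '[:lower:]' | tr ' ' '-')

# The Scala binary version is the first two components (2.11.8 -> 2.11).
scala_binary=$(printf '%s' "$scala_version" | cut -d. -f1,2)

jar="target/scala-${scala_binary}/${normalized}_${scala_binary}-${version}.jar"
echo "$jar"
```

This prints `target/scala-2.11/simple-project_2.11-1.0.jar`, the path relative to ~/sparkapp that is passed to spark-submit.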

4. Run the program with spark-submit

[hadoop@master ~]$ /usr/local/spark/bin/spark-submit \

> --class "SimpleApp" \

> ~/sparkapp/target/scala-2.11/simple-project_2.11-1.0.jar 2>&1 | grep "Lines with a:"

The result is shown in the figure below:

(2) Compiling and packaging the Scala program with Maven

1. Create the directories

[hadoop@master ~]$ mkdir ./sparkapp2

[hadoop@master ~]$ mkdir -p ./sparkapp2/src/main/scala

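2. Edit the files

Copy SimpleApp.scala from part (1) into ~/sparkapp2/src/main/scala unchanged, then create pom.xml:

[hadoop@master ~]$ vim ./sparkapp2/pom.xml

<project>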

<groupId>edu.berkeley</groupId>

<artifactId>simple-project</artifactId>

<modelVersion>4.0.0</modelVersion>

<name>Simple Project</name>

<packaging>jar</packaging>

<version>1.0</version>

<repositories>

<repository>

<id>Akka repository</id>

<url>http://repo.akka.io/releases</url>

</repository>

</repositories>

<dependencies>

<dependency> <!-- Spark dependency -->

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.1.0</version>

</dependency>

</dependencies>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<plugins>

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<executions>

<execution>

<goals>

<goal>compile</goal>

</goals>

</execution>

</executions>

<configuration>

<scalaVersion>2.11.8</scalaVersion>

<args>

<arg>-target:jvm-1.5</arg>

</args>

</configuration>

</plugin>

</plugins>

</build>

</project>
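By default Maven names the jar `<artifactId>-<version>.jar` and places it under target/, which is where the path used in step 5 comes from. The sketch below pulls both values out of a trimmed copy of the pom with `sed`; it is a quick illustration that relies on the project's own elements appearing first in the file, not a general XML parser:

```shell
# Trimmed copy of the pom.xml above, written to a scratch file.
pom="$(mktemp)"
cat > "$pom" <<'EOF'
<project>
<groupId>edu.berkeley</groupId>
<artifactId>simple-project</artifactId>
<modelVersion>4.0.0</modelVersion>
<packaging>jar</packaging>
<version>1.0</version>
</project>
EOF

# Take the first <artifactId> and <version>; in this pom they belong to the project itself.
artifact_id=$(sed -n 's:.*<artifactId>\(.*\)</artifactId>.*:\1:p' "$pom" | head -n 1)
version=$(sed -n 's:.*<version>\(.*\)</version>.*:\1:p' "$pom" | head -n 1)

maven_jar="target/${artifact_id}-${version}.jar"
echo "$maven_jar"
```

This prints `target/simple-project-1.0.jar`. Unlike the sbt build, the name carries no Scala version suffix.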

3. Check the application's file structure

cd sparkapp2

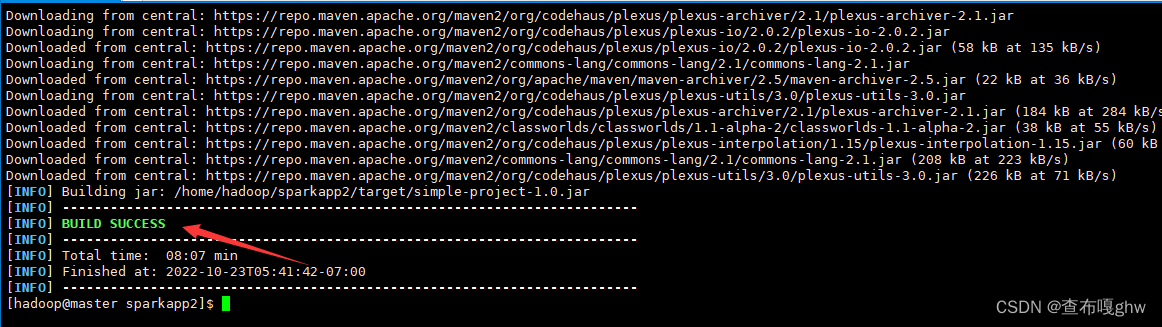

find .4.打包jar包(时间很长)

cd ~/sparkapp2

/usr/local/maven/bin/mvn package

Packaging complete (see the figure below).

5. Run the program with spark-submit

[hadoop@master sparkapp2]$ /usr/local/spark/bin/spark-submit \

> --class "SimpleApp" \

> ~/sparkapp2/target/simple-project-1.0.jar 2>&1 | grep "Lines with a:"
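Both spark-submit commands end with `2>&1 | grep "Lines with a:"`. With Spark's default logging configuration the log messages go to stderr while `println` writes to stdout, so `2>&1` merges the two streams and `grep` keeps only the result line. A minimal sketch of the same pattern, using a hypothetical `fake_job` function in place of spark-submit:

```shell
# fake_job is a hypothetical stand-in for spark-submit:
# log noise goes to stderr, the result goes to stdout.
fake_job() {
  echo "INFO SparkContext: Running Spark" >&2
  echo "Lines with a: 3, Lines with b: 2"
  echo "INFO SparkContext: Stopped" >&2
}

# 2>&1 sends stderr into the pipe as well, so grep sees every line
# and passes through only the one that carries the result.
result=$(fake_job 2>&1 | grep "Lines with a:")
echo "$result"
```

Without `2>&1`, the pipe would carry only stdout and the log lines would still be printed to the terminal around the result.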
