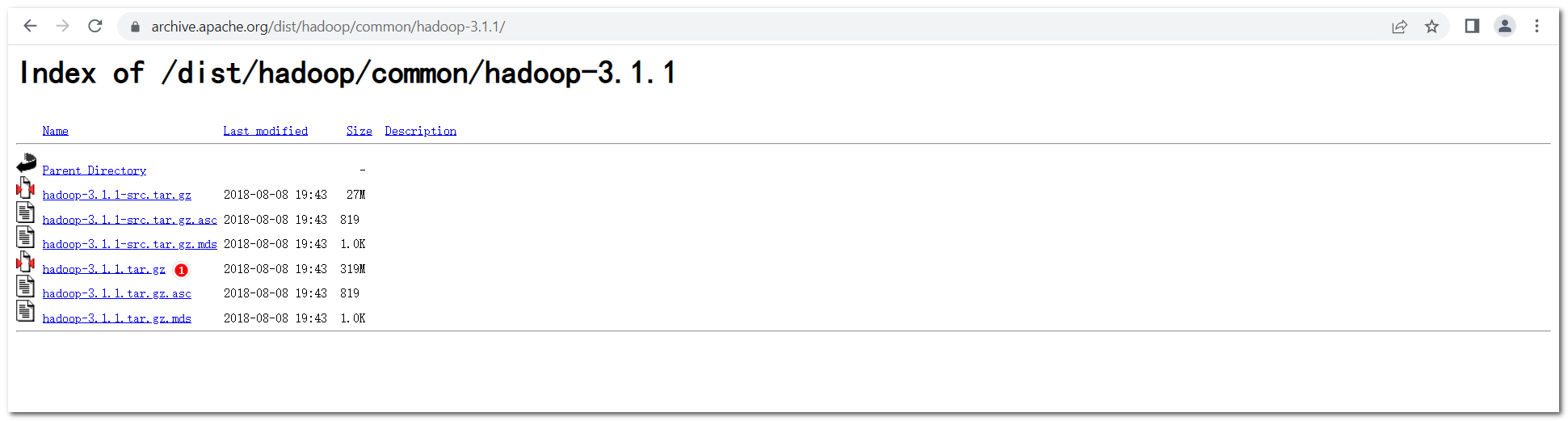

Download link

Official download: https://archive.apache.org/dist/hadoop/common/hadoop-3.1.1/hadoop-3.1.1.tar.gz

Installation steps

① Download

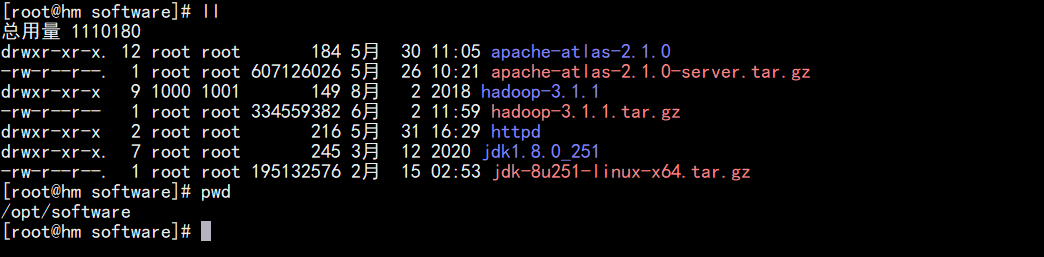

② Extract

tar -zxvf hadoop-3.1.1.tar.gz
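The HADOOP_HOME set in step ③ is /opt/software/hadoop-3.1.1, so the archive has to end up below /opt/software. A minimal sketch of that step, assuming a tar that supports -C; the helper name extract_into is hypothetical and not part of Hadoop:

```shell
#!/usr/bin/env bash
# extract_into ARCHIVE TARGET_DIR
# Unpacks a .tar.gz archive below TARGET_DIR, creating the directory if needed.
extract_into() {
  local archive="$1" target="$2"
  mkdir -p "$target" || return 1
  tar -zxf "$archive" -C "$target"
}

# Example with the paths used in this article:
# extract_into hadoop-3.1.1.tar.gz /opt/software
```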

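③ Environment variables

In the /etc/profile.d directory, create a file named rills-atlas.sh with the following content, then run source /etc/profile (or log in again) so that the variables take effect: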

### 1. SET HADOOP ENVIRONMENT ###

HADOOP_HOME=/opt/software/hadoop-3.1.1

PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export HADOOP_HOME PATH
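Before moving on, it can help to confirm that the current shell actually picked up the profile script. The following is a small sketch; path_has and check_hadoop_env are hypothetical helper names introduced only for illustration:

```shell
#!/usr/bin/env bash
# path_has DIR [PATH_STRING]
# Succeeds if DIR is one of the colon-separated entries of PATH_STRING
# (defaults to the current $PATH).
path_has() {
  local dir="$1" path="${2:-$PATH}"
  case ":$path:" in
    *":$dir:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# check_hadoop_env
# Reports whether HADOOP_HOME is set and its bin and sbin directories are on PATH.
check_hadoop_env() {
  if [ -z "${HADOOP_HOME:-}" ]; then
    echo "HADOOP_HOME is not set"
    return 1
  fi
  if ! path_has "$HADOOP_HOME/bin" || ! path_has "$HADOOP_HOME/sbin"; then
    echo "PATH is missing \$HADOOP_HOME/bin or \$HADOOP_HOME/sbin"
    return 1
  fi
  echo "ok: HADOOP_HOME=$HADOOP_HOME"
}
```

Calling check_hadoop_env in a fresh login shell should print the ok line; if it does not, the profile script was probably not sourced.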

④ Verify

[root@hm output]# hadoop version

Hadoop 3.1.1

Source code repository https://github.com/apache/hadoop -r 2b9a8c1d3a2caf1e733d57f346af3ff0d5ba529c

Compiled by leftnoteasy on 2018-08-02T04:26Z

Compiled with protoc 2.5.0

From source with checksum f76ac55e5b5ff0382a9f7df36a3ca5a0

This command was run using /opt/software/hadoop-3.1.1/share/hadoop/common/hadoop-common-3.1.1.jar

[root@hm output]#

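⑤ Test

Run the commands below; if the job completes and output/part-r-00000 contains the matches, the installation works. Note that the ll listing was captured after the test had already run, which is why input and output appear in it; the job fails if the output directory already exists when it starts.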

[root@hm hadoop-3.1.1]# ll

total 180

drwxr-xr-x 2 1000 1001 183 Aug 2 2018 bin

drwxr-xr-x 3 1000 1001 20 Aug 2 2018 etc

drwxr-xr-x 2 1000 1001 106 Aug 2 2018 include

drwxr-xr-x 2 root root 4096 Jun 2 14:35 input

drwxr-xr-x 3 1000 1001 20 Aug 2 2018 lib

drwxr-xr-x 4 1000 1001 288 Aug 2 2018 libexec

-rw-r--r-- 1 1000 1001 147144 Jul 29 2018 LICENSE.txt

-rw-r--r-- 1 1000 1001 21867 Jul 29 2018 NOTICE.txt

drwxr-xr-x 2 root root 88 Jun 2 14:37 output

-rw-r--r-- 1 1000 1001 1366 Jul 29 2018 README.txt

drwxr-xr-x 3 1000 1001 4096 Aug 2 2018 sbin

drwxr-xr-x 4 1000 1001 31 Aug 2 2018 share

[root@hm hadoop-3.1.1]# pwd

/opt/software/hadoop-3.1.1

[root@hm hadoop-3.1.1]# mkdir input

[root@hm hadoop-3.1.1]# cp etc/hadoop/*.xml input

[root@hm hadoop-3.1.1]# cp etc/hadoop/*.properties input

[root@hm hadoop-3.1.1]# bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.1.jar grep input output 'dfs[a-z.]+'

[root@hm hadoop-3.1.1]# cd output/

[root@hm output]# ll

total 4

-rw-r--r-- 1 root root 180 Jun 2 14:37 part-r-00000

-rw-r--r-- 1 root root 0 Jun 2 14:36 _SUCCESS

[root@hm output]# cat part-r-00000

3 dfs.server.namenode.

3 dfs.logger

2 dfs.audit.logger

2 dfs.audit.log.maxfilesize

2 dfs.audit.log.maxbackupindex

1 dfsadmin

1 dfs.namenode.servicerpc

1 dfs.namenode.rpc

1 dfs.log

[root@hm output]#
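The example job counts every match of the regular expression dfs[a-z.]+ in the files under input. The same counts can be reproduced with standard Unix tools as a cross-check. This is only a sketch: count_matches is a hypothetical helper, and the order of entries with equal counts may differ from the order Hadoop writes.

```shell
#!/usr/bin/env bash
# count_matches DIR REGEX
# Prints "<count> <match>" for every distinct regex match found in the files
# directly under DIR, most frequent first.
count_matches() {
  local dir="$1" regex="$2"
  grep -ohE -- "$regex" "$dir"/* 2>/dev/null \
    | sort | uniq -c | sort -k1,1nr -k2 \
    | awk '{ print $1, $2 }'
}

# Example, run from /opt/software/hadoop-3.1.1:
# count_matches input 'dfs[a-z.]+'
```

If the counts agree with part-r-00000, the MapReduce job read and processed all input files.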

⑥ Passwordless SSH

[root@hm hadoop-3.1.1]# ssh localhost

# Enter the password

[root@hm ~]# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

[root@hm ~]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

[root@hm ~]# chmod 0600 ~/.ssh/authorized_keys

# Press Ctrl+D to log out, then test again

[root@hm hadoop-3.1.1]# ssh localhost

# No password is required this time
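Running the cat ... >> authorized_keys command more than once appends the same key again each time. A sketch of an idempotent variant follows; authorize_key is a hypothetical helper, and it assumes the public key file holds a single line, as ssh-keygen produces:

```shell
#!/usr/bin/env bash
# authorize_key PUBKEY_FILE AUTH_FILE
# Appends the public key to AUTH_FILE only if that exact line is not there yet,
# then restricts the file to its owner.
authorize_key() {
  local pub="$1" auth="$2"
  mkdir -p "$(dirname "$auth")" || return 1
  touch "$auth" || return 1
  if ! grep -qxF -- "$(cat "$pub")" "$auth"; then
    cat "$pub" >> "$auth"
  fi
  chmod 600 "$auth"
}

# Example with the files used above:
# authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
```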

⑦ Configuration

// 1. Edit the core-site.xml configuration file

[root@hm hadoop-3.1.1]# vim /opt/software/hadoop-3.1.1/etc/hadoop/core-site.xml

// Add the following

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost:9000</value>

</property>

</configuration>

// 2. Edit the hdfs-site.xml configuration file

[root@hm hadoop-3.1.1]# vim /opt/software/hadoop-3.1.1/etc/hadoop/hdfs-site.xml

// Add the following

<configuration>

<property>

<name>dfs.namenode.name.dir</name>

<value>/usr/local/hadoop/data/nameNode</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/usr/local/hadoop/data/dataNode</value>

</property>

</configuration>
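To double-check what was written to the site files, a property value can be read back from the shell. The sketch below is a line-oriented helper, not a real XML parser: get_property is a hypothetical name, and it assumes each name and value element sits on a single line, as in the snippets above.

```shell
#!/usr/bin/env bash
# get_property FILE NAME
# Prints the first <value>...</value> that appears at or after the line
# containing <name>NAME</name> in a Hadoop *-site.xml file.
get_property() {
  local file="$1" name="$2"
  awk -v want="<name>$name</name>" '
    index($0, want) { found = 1 }
    found && match($0, /<value>[^<]*<\/value>/) {
      # Strip the 7-character opening tag and the 8-character closing tag.
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }
  ' "$file"
}

# Example with the file edited above:
# get_property /opt/software/hadoop-3.1.1/etc/hadoop/hdfs-site.xml dfs.datanode.data.dir
```

Once the environment from step ③ is active, hdfs getconf -confKey fs.defaultFS should report the effective value as Hadoop itself resolves it.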

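// 3. In start-dfs.sh and stop-dfs.sh, add the following parameters (Hadoop 3.x warns that HADOOP_SECURE_DN_USER has been replaced by HDFS_DATANODE_SECURE_USER):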

HDFS_DATANODE_USER=root

HADOOP_SECURE_DN_USER=hdfs

HDFS_NAMENODE_USER=root

HDFS_SECONDARYNAMENODE_USER=root

// 4. In start-yarn.sh and stop-yarn.sh, add the following parameters:

YARN_RESOURCEMANAGER_USER=root

HADOOP_SECURE_DN_USER=yarn

YARN_NODEMANAGER_USER=root
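Editing four scripts by hand is easy to get wrong, so the insertion can also be scripted. The following sketch places the assignments directly after the first line (the shebang) of a script and skips any that are already present. add_user_vars is a hypothetical helper; try it on a copy of the script first.

```shell
#!/usr/bin/env bash
# add_user_vars FILE VAR=VALUE...
# Inserts each VAR=VALUE line after the first line of FILE, in the order given,
# skipping assignments that already appear in the file.
add_user_vars() {
  local file="$1"; shift
  local missing tmp line
  missing=$(mktemp) || return 1
  tmp=$(mktemp) || return 1
  for line in "$@"; do
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$missing"
  done
  if [ -s "$missing" ]; then
    # Rebuild the file as: first line, new assignments, remaining lines.
    # Writing back with cat keeps the original file's permissions.
    { head -n 1 "$file"; cat "$missing"; tail -n +2 "$file"; } > "$tmp" \
      && cat "$tmp" > "$file"
  fi
  rm -f "$missing" "$tmp"
}

# Example for one of the YARN scripts:
# add_user_vars sbin/start-yarn.sh YARN_RESOURCEMANAGER_USER=root YARN_NODEMANAGER_USER=root
```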

⑧ Format the NameNode

[root@hm hadoop-3.1.1]# bin/hdfs namenode -format

⑨ Start

[root@hm hadoop-3.1.1]# sbin/start-dfs.sh

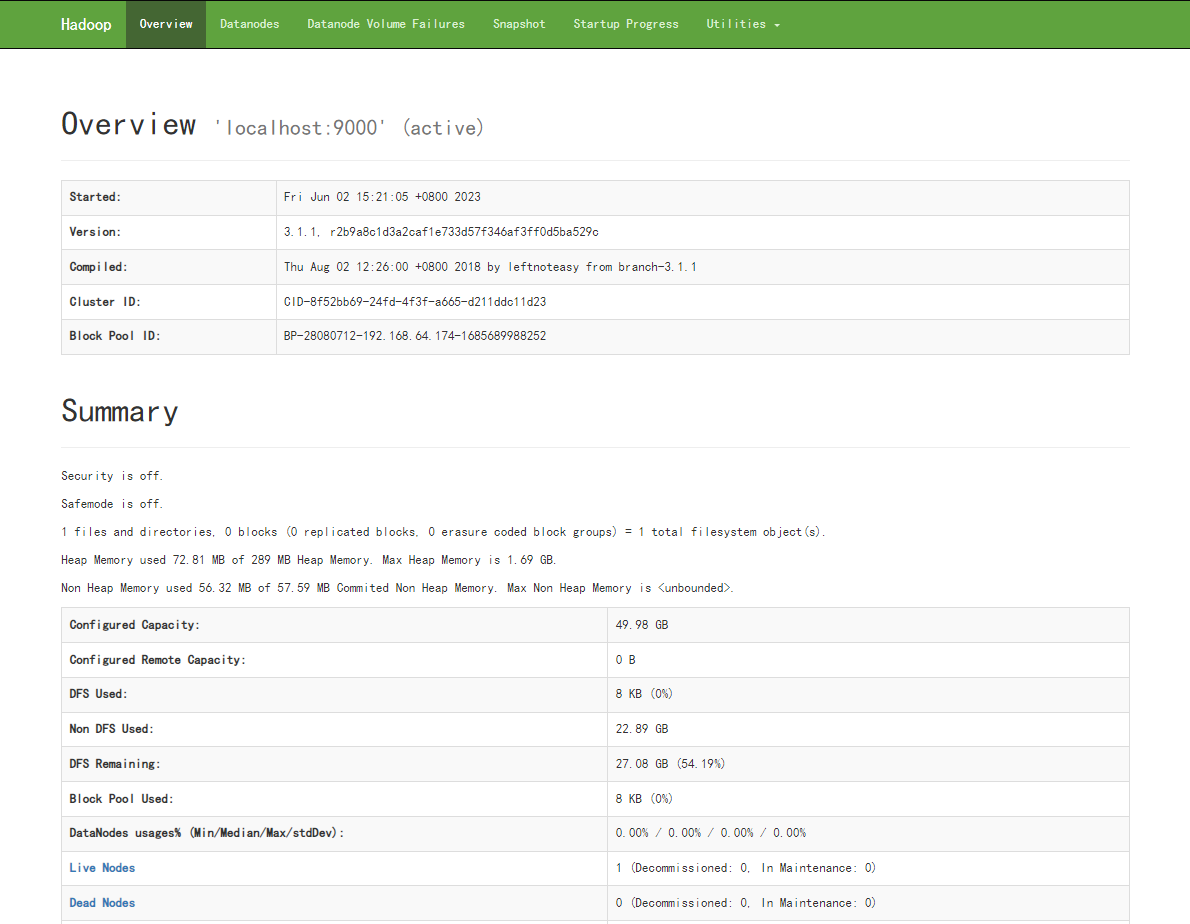
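On a single node, start-dfs.sh is expected to bring up a NameNode, a DataNode, and a SecondaryNameNode. One way to confirm this is to inspect the output of the JDK's jps tool. The sketch below reads that output on standard input; missing_daemons is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# missing_daemons
# Reads `jps` output ("<pid> <name>" per line) on stdin and prints every
# expected HDFS daemon that is not listed. Prints nothing if all are running.
missing_daemons() {
  local listing name
  listing=$(cat)
  for name in NameNode DataNode SecondaryNameNode; do
    # Compare the whole second column so NameNode does not match SecondaryNameNode.
    printf '%s\n' "$listing" \
      | awk -v n="$name" '$2 == n { ok = 1 } END { exit !ok }' \
      || echo "$name"
  done
}

# Usage:
# jps | missing_daemons
```

If a daemon is reported as missing, its log file under the logs directory of the Hadoop installation is the first place to look.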

⑩ Inspect

Web UI: http://192.168.64.174:9870/

Configuration: http://192.168.64.174:9870/conf

Logs: http://192.168.64.174:9870/logs/

JMX: http://192.168.64.174:9870/jmx

JMX:http://192.168.64.174:9870/jmx
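The same endpoints can be probed from the command line, which is handy on a server without a browser. A minimal sketch, assuming curl is installed; namenode_urls and probe_namenode are hypothetical helpers, and the default port 9870 is the one used in the URLs above:

```shell
#!/usr/bin/env bash
# namenode_urls HOST [PORT]
# Prints the NameNode web endpoints listed above, one per line.
namenode_urls() {
  local host="$1" port="${2:-9870}" path
  for path in / /conf /logs/ /jmx; do
    printf 'http://%s:%s%s\n' "$host" "$port" "$path"
  done
}

# probe_namenode HOST [PORT]
# Requests each endpoint with curl and reports which ones answer successfully.
probe_namenode() {
  local url
  namenode_urls "$@" | while read -r url; do
    if curl -fsS -o /dev/null --max-time 5 "$url"; then
      echo "ok   $url"
    else
      echo "FAIL $url"
    fi
  done
}

# Example with the address used in this article:
# probe_namenode 192.168.64.174
```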

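This article walks through downloading and extracting Hadoop 3.1.1, setting the environment variables, verifying the version, running a test job, configuring passwordless SSH, editing the core and HDFS site configuration, formatting the NameNode, starting the services, and checking their status.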
