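In this post I walk through a task that comes up often in big-data environments: using MapReduce to merge small HDFS files into one large file.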

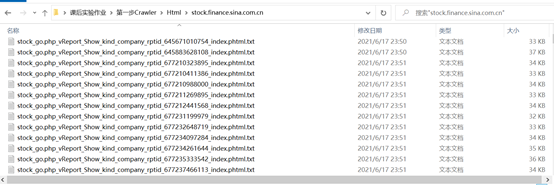

First, here are the small files:

We need to merge the contents of all these small files into a single large file.

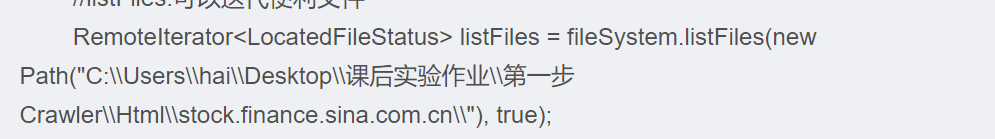

To use the job on your own data, just change the input path.

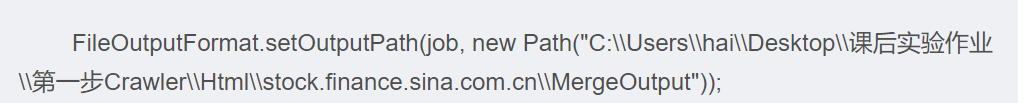

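Then point the output path at a directory of your choice. MapReduce treats the output path as a directory and writes its result files into it.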
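Since only these two paths change between runs, one option is to read them from the command line instead of editing the source. The following is a minimal sketch in plain Java, not part of the original job: the class `JobPaths`, the method `fromArgs`, and the default paths in `main` are all hypothetical placeholders.

```java
/** Hypothetical holder for the job's two paths; not part of the original code. */
final class JobPaths {
    final String input;
    final String output;

    private JobPaths(String input, String output) {
        this.input = input;
        this.output = output;
    }

    /** Uses args[0] and args[1] when present, otherwise the given defaults. */
    static JobPaths fromArgs(String[] args, String defaultInput, String defaultOutput) {
        String in = args.length > 0 ? args[0] : defaultInput;
        String out = args.length > 1 ? args[1] : defaultOutput;
        if (in.equals(out)) {
            // Writing the result on top of the input would be a mistake
            throw new IllegalArgumentException("input and output paths must differ");
        }
        return new JobPaths(in, out);
    }

    public static void main(String[] args) {
        // Placeholder defaults, chosen only for illustration
        JobPaths paths = fromArgs(args, "/data/small-files", "/data/merged");
        System.out.println(paths.input + " -> " + paths.output);
    }
}
```

The resulting strings could then be wrapped in Hadoop `Path` objects when the job is configured.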

Here is the code:

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.LocatedFileStatus;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.fs.RemoteIterator;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.FileSplit;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    import java.io.IOException;

    /**
     * @Author 海龟
     * @Date 2021/6/18 9:07
     * @Desc Merge small files into one large file
     */
    public class MergeFileJob {

        // Only files smaller than 2 MB are treated as "small" and merged
        private static final long MAX_FILE_SIZE = 2L * 1024 * 1024;

        public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
            Configuration conf = new Configuration();
            // Create the job
            Job job = Job.getInstance(conf, "my small merge big file");
            // Class used to locate the jar that contains the job
            job.setJarByClass(MergeFileJob.class);

            // Mapper and Reducer classes
            job.setMapperClass(MergeSmallFileMapper.class);
            job.setReducerClass(MergeSmallFileReduce.class);

            // Map output types
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(Text.class);
            // Job (reduce) output types
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);

            // Input directory: change this to your own path
            Path inputDir = new Path("C:\\Users\\hai\\Desktop\\课后实验作业\\第一步Crawler\\Html\\stock.finance.sina.com.cn\\");
            // Output directory: change this too, e.g. to hdfs://192.168.11.10:9000/MergeOutput on a cluster
            Path outputDir = new Path("C:\\Users\\hai\\Desktop\\课后实验作业\\第一步Crawler\\Html\\stock.finance.sina.com.cn\\MergeOutput");

            FileSystem fileSystem = FileSystem.get(conf);
            // listFiles walks the directory tree recursively and returns files only
            RemoteIterator<LocatedFileStatus> listFiles = fileSystem.listFiles(inputDir, true);
            while (listFiles.hasNext()) {
                LocatedFileStatus fileStatus = listFiles.next();
                Path filePath = fileStatus.getPath();
                // Check size and format: small .txt files only
                if (fileStatus.isFile()
                        && fileStatus.getLen() < MAX_FILE_SIZE
                        && filePath.getName().endsWith(".txt")) {
                    // Register the file as job input
                    FileInputFormat.addInputPath(job, filePath);
                }
            }

            // Delete the output directory if it already exists, otherwise the job refuses to start.
            // java.io.File cannot handle hdfs:// URIs, so go through the Hadoop FileSystem API.
            FileSystem outputFs = outputDir.getFileSystem(conf);
            if (outputFs.exists(outputDir)) {
                outputFs.delete(outputDir, true);
            }
            FileOutputFormat.setOutputPath(job, outputDir);

            boolean status = job.waitForCompletion(true);
            if (status) {
                System.out.println("Files merged successfully!");
            } else {
                System.out.println("File merge failed.");
            }
        }

        public static class MergeSmallFileMapper extends Mapper<LongWritable, Text, Text, Text> {
            @Override
            protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
                // Emit the file name as key and the current line as value
                // 1. Get the name of the file this split belongs to
                FileSplit inputSplit = (FileSplit) context.getInputSplit();
                String fileName = inputSplit.getPath().getName();
                // 2. Write (file name, line)
                context.write(new Text(fileName), value);
            }
        }

        public static class MergeSmallFileReduce extends Reducer<Text, Text, Text, Text> {
            /**
             * @param key     file name
             * @param values  all lines of that file
             * @param context job context used to emit the result
             * @throws IOException
             * @throws InterruptedException
             */
            @Override
            protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
                // Concatenate all lines of the file, each followed by a comma
                StringBuilder merged = new StringBuilder();
                for (Text value : values) {
                    merged.append(value.toString()).append(",");
                }
                // Emit one record per file: (file name, merged content)
                context.write(key, new Text(merged.toString()));
            }
        }
    }
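The size-and-format check in the driver can be isolated so it is easy to reason about on its own. The sketch below uses no Hadoop classes and assumes the intended limit is 2 MB (2 * 1024 * 1024 bytes) and that the format test is a suffix match on `.txt`. The class `SmallFileFilter` and its method name are hypothetical.

```java
/** Hypothetical helper that mirrors the driver's filter; not part of the original job. */
final class SmallFileFilter {

    /** Assumed size limit: 2 MB. */
    static final long MAX_BYTES = 2L * 1024 * 1024;

    /** Returns true when the file is strictly below the limit and ends with ".txt". */
    static boolean isSmallTextFile(String fileName, long lengthInBytes) {
        return lengthInBytes < MAX_BYTES && fileName.endsWith(".txt");
    }

    public static void main(String[] args) {
        System.out.println(isSmallTextFile("a.txt", 1024));            // true
        System.out.println(isSmallTextFile("a.txt", 2L * 1024 * 1024)); // false: not below the limit
        System.out.println(isSmallTextFile("a.txt.bak", 10));          // false: wrong extension
    }
}
```

A suffix match is stricter than a substring match: a name such as `a.txt.bak` contains `.txt` but is not a text file by extension.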

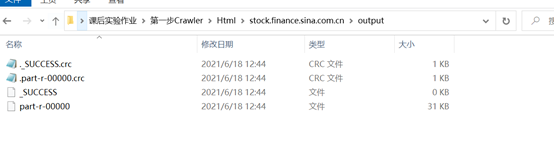

The run produces the following result:

Once the job succeeds, right-click the part-r-00000 file and open it with Notepad to view the merged content.
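To get a feel for what part-r-00000 contains, the job's data flow can be imitated in plain Java. This is only a sketch under stated assumptions: it involves no Hadoop classes, the class `MergeSimulation` and the sample file names are made up, and it assumes the default text output, which writes each record as key, a tab, then value.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Plain-Java imitation of the job's data flow; hypothetical, no Hadoop involved. */
final class MergeSimulation {

    /** "Map" and "shuffle": tag every line with its file name and group by that name. */
    static Map<String, List<String>> groupByFile(Map<String, List<String>> files) {
        Map<String, List<String>> grouped = new TreeMap<>(); // keys arrive at the reducer sorted
        for (Map.Entry<String, List<String>> file : files.entrySet()) {
            for (String line : file.getValue()) {
                grouped.computeIfAbsent(file.getKey(), k -> new ArrayList<>()).add(line);
            }
        }
        return grouped;
    }

    /** "Reduce": one output line per file, file name + TAB + lines each followed by a comma. */
    static List<String> reduce(Map<String, List<String>> grouped) {
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, List<String>> entry : grouped.entrySet()) {
            StringBuilder merged = new StringBuilder();
            for (String line : entry.getValue()) {
                merged.append(line).append(",");
            }
            out.add(entry.getKey() + "\t" + merged);
        }
        return out;
    }

    public static void main(String[] args) {
        Map<String, List<String>> files = new TreeMap<>();
        files.put("b.txt", Arrays.asList("x"));
        files.put("a.txt", Arrays.asList("hello", "world"));
        // Prints one line per input file: name, tab, comma-joined content
        reduce(groupByFile(files)).forEach(System.out::println);
    }
}
```

Each input file ends up as exactly one line in the output. Note that this simulation keeps lines in their original order, whereas, as far as I know, a real MapReduce job makes no promise about the order of values within a key unless a secondary sort is configured.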

If this helped, feel free to like and bookmark the post!
