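Deploying K8S with kubeadm

Ideally, complete the CentOS initialization and tuning steps I published earlier before starting this installation.

Download location for the required packages:

Link: https://pan.baidu.com/s/1Cb1Kkuqe1eXpaEqa-QqRuA

Extraction code: 091e

1. Environment preparation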

| Operating system | IP | Hostname | Components |
|---|---|---|---|
| CentOS 7 | 192.168.200.101 | k8s-master | kubeadm, kubelet, kubectl, docker-ce |
| CentOS 7 | 192.168.200.102 | k8s-slave1 | kubeadm, kubelet, kubectl, docker-ce |
| CentOS 7 | 192.168.200.103 | k8s-slave2 | kubeadm, kubelet, kubectl, docker-ce |

Note: the recommended configuration for every host is at least 4 CPU cores and at least 4 GB of memory.

2. Prepare the pre-downloaded packages

// Same steps on all three hosts

1. Create a directory

mkdir -p /data/software && cd /data/software

2. Copy the pre-downloaded archive into the directory and extract it

tar -xvf 10_kubeadmin_install.tar.bz2

// Copy the extracted directory to the other hosts

[root@localhost software]# scp -r kubeadmin_install/ root@192.168.200.102:/data/software/

[root@localhost software]# scp -r kubeadmin_install/ root@192.168.200.103:/data/software/

3. Install the packages in 01_update_kernel

cd kubeadmin_install/

rpm -ivh --force --nodeps /data/software/kubeadmin_install/01_update_kernel/*

or: yum localinstall -y /data/software/kubeadmin_install/01_update_kernel/*

4. Upgrade to kernel-ml

rpm -ivh --force --nodeps /data/software/kubeadmin_install/02_kernel-ml/*

or: yum localinstall -y /data/software/kubeadmin_install/02_kernel-ml/*

5. List all kernels available on the system

[root@localhost software]# awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

0 : CentOS Linux (6.2.1-1.el7.elrepo.x86_64) 7 (Core)

1 : CentOS Linux (3.10.0-1160.83.1.el7.x86_64) 7 (Core)

2 : CentOS Linux (3.10.0-1127.el7.x86_64) 7 (Core)

3 : CentOS Linux (0-rescue-420c3da34b1846a5809b16a1b5290cc2) 7 (Core)
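Reading the index off this list by eye is easy to get wrong when several kernels are installed. As a minimal sketch, the same awk parsing can return the index of the first entry whose title contains a given string. The function name `kernel_index` and the `GRUB_CFG` override are assumptions made for this example; they are not part of grub2.

```shell
# Print the index of the first top-level grub menu entry whose title
# contains the given string. GRUB_CFG is a hypothetical override so the
# function can be tried on a copy of the configuration file.
kernel_index() {
    local pattern="$1" cfg="${GRUB_CFG:-/etc/grub2.cfg}"
    awk -F\' -v pat="$pattern" '
        $1 == "menuentry " {
            # i + 0 forces a numeric 0 for the very first entry
            if (index($2, pat)) { print i + 0; exit }
            i++
        }' "$cfg"
}
```

With the list above, `kernel_index elrepo` would print the index of the elrepo kernel, which could then be passed to grub2-set-default. Nothing is printed when no entry matches.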

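6. Set the default kernel; the 0 is the index of the desired kernel in the list above

[root@localhost ~]# grub2-set-default 0

# Generate the grub configuration file

[root@localhost ~]# grub2-mkconfig -o /boot/grub2/grub.cfg

# Reboot so that the new kernel takes effect

[root@localhost ~]# reboot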

7. Remove the old kernels

[root@localhost ~]# rpm -qa |grep kernel

# Remove the RPM packages of the old kernels; the exact package names depend on the output of the command above

[root@localhost ~]# yum remove kernel-3.10.0-1127.el7.x86_64 \

> kernel-tools-libs-3.10.0-1127.el7.x86_64 \

> kernel-tools-3.10.0-1127.el7.x86_64 \

> kernel-tools-3.10.0-1160.83.1.el7.x86_64

or: yum -y remove kernel-3.10.0-1127.el7.x86_64 kernel-tools-libs-3.10.0-1127.el7.x86_64 kernel-tools-3.10.0-1127.el7.x86_64 kernel-tools-3.10.0-1160.83.1.el7.x86_64

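8. No host may obtain its IP address via DHCP; configure static IPs. Set a hostname on every host and bind it in /etc/hosts; each host needs a different name

// Run each command on the matching host only

hostnamectl set-hostname k8s-master && bash

hostnamectl set-hostname k8s-slave1 && bash

hostnamectl set-hostname k8s-slave2 && bash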

[root@k8s-master ~]# cat << EOF >> /etc/hosts

> 192.168.200.101 k8s-master

> 192.168.200.102 k8s-slave1

> 192.168.200.103 k8s-slave2

> EOF

scp /etc/hosts k8s-slave1:/etc/

scp /etc/hosts k8s-slave2:/etc/
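Because the heredoc above appends with `>>`, running it a second time duplicates the entries. Below is a minimal sketch of an append that first checks whether the name is already present. `add_host_entry` is a hypothetical helper, and its optional third argument exists only so it can be tried on a scratch file instead of /etc/hosts.

```shell
# Append "ip name" to a hosts-style file unless the name is already listed.
add_host_entry() {
    local ip="$1" name="$2" file="${3:-/etc/hosts}"
    # Match the name as a whole field: preceded by whitespace and
    # followed by whitespace or the end of the line.
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Example calls (commented out so that sourcing this block changes nothing):
# add_host_entry 192.168.200.101 k8s-master
# add_host_entry 192.168.200.102 k8s-slave1
# add_host_entry 192.168.200.103 k8s-slave2
```

The check only looks at the name, so an existing entry with a different IP is left untouched rather than corrected.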

9. Disable SELinux

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

10. Disable the firewall

[root@localhost ~]# iptables -F

[root@localhost ~]# systemctl disable --now firewalld

[root@localhost ~]# systemctl disable --now dnsmasq

[root@localhost ~]# systemctl disable --now NetworkManager

11. Disable the swap partition on all hosts

[root@k8s-master ~]# swapoff -a

[root@k8s-master ~]# sed -i '/swap/s/^/#/' /etc/fstab

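Why disable swap?

With swap enabled, data is exchanged between memory and disk, which performs poorly and slows programs down. Some software is designed not to run with swap at all: since v1.8, kubelet by default refuses to start while swap is enabled.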
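The sed command above prepends `#` to every line containing "swap", including lines that are already commented, so repeated runs stack `#` characters. A minimal sketch of a variant that skips commented lines follows; it assumes GNU sed (as shipped with CentOS), and `disable_swap_entries` is a hypothetical name. The optional argument lets it be tried on a copy of the file.

```shell
# Comment out active swap entries in an fstab-style file. Lines that already
# start with '#' are skipped, so running the function again changes nothing.
disable_swap_entries() {
    local fstab="${1:-/etc/fstab}"
    sed -i -E '/^[[:space:]]*#/!{/[[:space:]]swap[[:space:]]/s/^/#/;}' "$fstab"
}
```

The pattern requires "swap" to appear as a whitespace-delimited field, so a device path such as /dev/mapper/centos-swap alone does not trigger a match.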

12. Synchronize the time on all hosts

[root@k8s-master ~]# yum -y install ntpdate

[root@k8s-master ~]# ntpdate time2.aliyun.com

[root@k8s-master ~]# hwclock --systohc

[root@localhost ~]# crontab -e

*/5 * * * * /usr/sbin/ntpdate time2.aliyun.com

[root@k8s-master ~]# timedatectl set-timezone Asia/Shanghai

13. Configure limits on all nodes

[root@k8s-master ~]# ulimit -SHn 65535

[root@k8s-master ~]# vim /etc/security/limits.conf # append the following lines at the end

* soft nofile 65536

* hard nofile 131072

* soft nproc 65535

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

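14. Set up passwordless SSH

// Generate the key and copy it from the same host; repeat on the other hosts if they also need passwordless access

[root@k8s-master ~]# ssh-keygen -t rsa

[root@k8s-master ~]# ssh-copy-id k8s-master

[root@k8s-master ~]# ssh-copy-id k8s-slave1

[root@k8s-master ~]# ssh-copy-id k8s-slave2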

15. On all hosts, pass bridged IPv4 traffic to the iptables chains

[root@k8s-master ~]# cat << EOF >> /etc/sysctl.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

[root@k8s-master ~]# modprobe br_netfilter

[root@k8s-master ~]# modprobe overlay

[root@k8s-master ~]# sysctl -p

16. Load the kernel modules on all hosts

[root@k8s-master ~]# tee /etc/modules-load.d/ipvs.conf <<'EOF'

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

nf_conntrack

ip_tables

ip_set

ipt_rpfilter

ipt_REJECT

ipip

EOF

[root@k8s-master ~]# systemctl enable --now systemd-modules-load.service

[root@k8s-master ~]# lsmod |grep -e ip_vs -e nf_conntrack # may print nothing at this point; the modules show up after a reboot
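Grepping lsmod shows what is loaded but not what is missing. As a rough sketch, the list in /etc/modules-load.d/ipvs.conf can be compared against /proc/modules. `missing_modules` is a hypothetical helper, and the second argument is only there so the comparison can be run against a saved listing.

```shell
# Print every module named in a modules-load.d style file that does not
# appear in a /proc/modules style listing (first column = module name).
missing_modules() {
    local wanted="$1" loaded="${2:-/proc/modules}"
    awk 'FILENAME == ARGV[1] { have[$1] = 1; next }
         NF && $1 !~ /^#/ && !($1 in have) { print $1 }' "$loaded" "$wanted"
}

# Example call (commented out; the file only exists after step 16):
# missing_modules /etc/modules-load.d/ipvs.conf
```

Modules compiled directly into the kernel never appear in /proc/modules, so a name reported here is not necessarily a problem.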

17. Enable the kernel parameters a k8s cluster requires; configure them on all nodes

[root@k8s-master ~]# cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

user.max_user_namespaces=28633

fs.may_detach_mounts = 1

net.ipv4.conf.all.route_localnet = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

[root@k8s-master ~]# sysctl --system
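In a parameter file this long it is easy to list the same key twice, in which case the later line silently wins. The following is a minimal sketch for spotting repeated keys; `duplicate_sysctl_keys` is a hypothetical helper name.

```shell
# Print each key that is assigned more than once in a sysctl-style file.
duplicate_sysctl_keys() {
    awk -F= '
        /^[[:space:]]*(#|;|$)/ { next }        # skip comments and blank lines
        {
            key = $1
            gsub(/[[:space:]]/, "", key)       # "a.b = 1" and "a.b=1" share a key
            if (seen[key]++ == 1) print key    # report on the second occurrence only
        }' "$1"
}

# Example call (commented out; the file only exists after the step above):
# duplicate_sysctl_keys /etc/sysctl.d/k8s.conf
```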

18. After the kernel settings are in place on all nodes, reboot the servers and confirm the modules are still loaded afterwards

[root@k8s-master ~]# reboot

[root@k8s-master ~]# lsmod | grep --color=auto -e ip_vs -e nf_conntrack

19. Configure log storage on all hosts

Since CentOS 7 the init system is systemd, so two logging systems run side by side: rsyslogd (the default) and systemd-journald. systemd-journald is the better choice, so we make it the primary one and keep a single place where logs are stored.

[root@k8s-master ~]# mkdir /var/log/journal

[root@k8s-master ~]# mkdir /etc/systemd/journald.conf.d

[root@k8s-master ~]# cat >/etc/systemd/journald.conf.d/99-prophet.conf <<EOF

[Journal]

# Persist logs to disk

Storage=persistent

# Compress archived logs

Compress=yes

SyncIntervalSec=5m

RateLimitInterval=30s

RateLimitBurst=1000

# Maximum disk usage: 10G

SystemMaxUse=10G

# Maximum size of a single log file: 200M

SystemMaxFileSize=200M

# Keep logs for 2 weeks

MaxRetentionSec=2week

# Do not forward logs to syslog

ForwardToSyslog=no

EOF

[root@k8s-master ~]# systemctl restart systemd-journald

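3. Deploy the Docker environment (install on all three hosts)

Go to the directory extracted from the pre-downloaded archive and install Docker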

[root@k8s-master kubeadmin_install]# rpm -ivh --force --nodeps /data/software/kubeadmin_install/03_docker_need_rpm_1/*

[root@k8s-master kubeadmin_install]# rpm -ivh --force --nodeps /data/software/kubeadmin_install/04_docker_need_rpm_2/*

[root@k8s-master kubeadmin_install]# rpm -ivh --force --nodeps /data/software/kubeadmin_install/05_docker_package/*

4. Start Docker

1. Start Docker and enable it at boot

[root@k8s-master kubeadmin_install]# systemctl --now enable docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service

2. Configure registry mirrors

[root@k8s-master ~]# cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": [

"https://nyakyfun.mirror.aliyuncs.com",

"https://registry.docker-cn.com",

"http://hub-mirror.c.163.com",

"https://docker.mirrors.ustc.edu.cn"

],

"exec-opts": ["native.cgroupdriver=systemd"],

"max-concurrent-downloads": 10,

"max-concurrent-uploads": 5,

"log-driver": "json-file",

"log-opts": {

"max-size": "300m",

"max-file": "2"

},

"live-restore": true,

"storage-driver": "overlay2"

}

EOF

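max-concurrent-downloads # number of concurrent download threads

max-concurrent-uploads # number of concurrent upload threads

log-opts # limits applied to Docker's container logs

live-restore # running containers are not affected when the Docker service restarts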
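A stray or missing comma in daemon.json keeps Docker from starting, so it can help to check the syntax before the restart. The sketch below assumes a Python interpreter is installed and uses its standard json.tool module; `validate_json` is a hypothetical helper name.

```shell
# Return 0 when the file parses as JSON, 1 when it does not,
# and 2 when no Python interpreter is available.
validate_json() {
    local file="$1" py
    py="$(command -v python3 || command -v python)" || return 2
    "$py" -m json.tool "$file" > /dev/null 2>&1 || return 1
}

# Example call (commented out; the file is created in the step above):
# validate_json /etc/docker/daemon.json && echo "daemon.json parses"
```

This checks syntax only; it does not know which keys Docker accepts.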

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl restart docker

[root@k8s-master ~]# docker version

5. Deploy the Kubernetes cluster

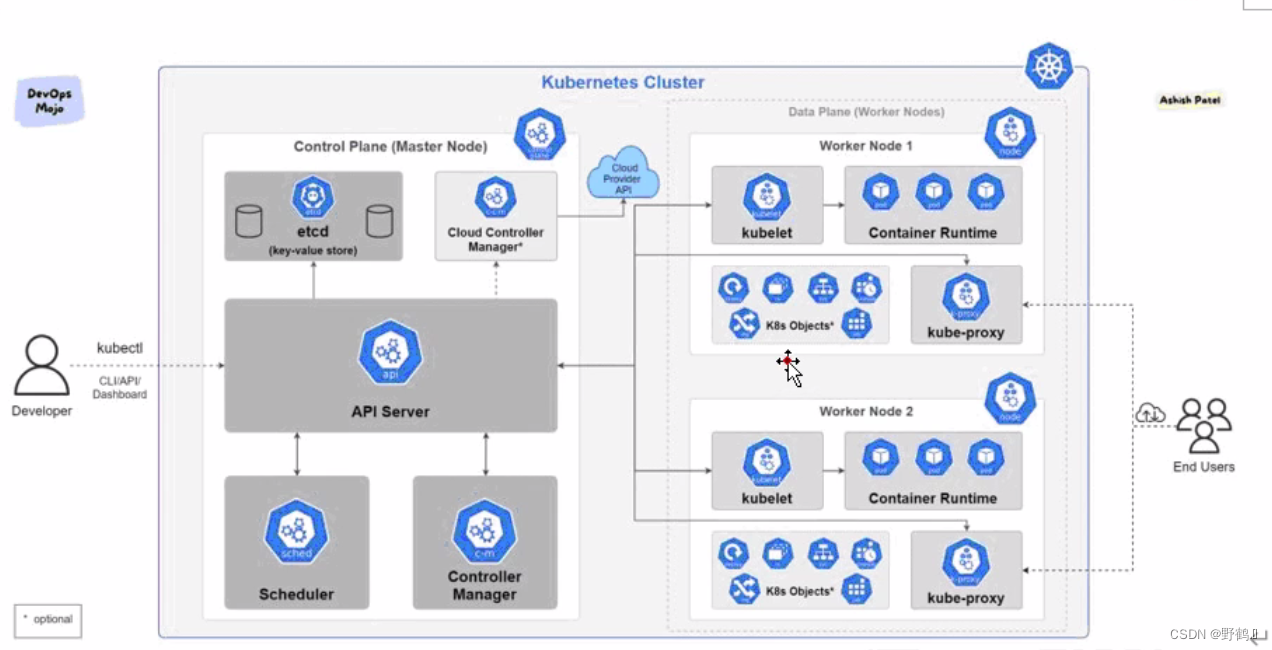

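1. Components

All hosts need the following three components installed

kubeadm: the installation tool; it bootstraps the cluster so that the components run as containers

kubectl: the command-line client for the K8S API

kubelet: the agent that runs on each node and starts the containers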

2. Install kubeadm

[root@k8s-master kubeadmin_install]# cp -ar /data/software/kubeadmin_install/yum_repo/k8s_kubeadm_repo/ /etc/yum.repos.d/

[root@k8s-master kubeadmin_install]# yum -y localinstall /data/software/kubeadmin_install/06_kubeadm_1.23.7_package/*

[root@k8s-master kubeadmin_install]# systemctl enable --now kubelet

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

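Right after installation, kubelet cannot be started successfully with systemctl start kubelet; it only starts once the node has joined a cluster or has been initialized as the master.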

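3. kubeadm commands

Besides writing the default configuration to a file, the kubeadm config command provides several other commonly used functions:

kubeadm config view: shows the configuration values of the current cluster.

kubeadm config print join-defaults: prints the default parameter file for kubeadm join.

kubeadm config images list: lists the required images.

kubeadm config images pull: pulls the images to the local host.

kubeadm config upload from-flags: generates a ConfigMap from command-line flags.

Note: view and upload from-flags come from older kubeadm releases and may no longer exist in the 1.23 packages installed here; kubeadm config --help lists the subcommands that are actually available.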

4. Customize init-config.yaml (master node)

[root@k8s-master ~]# vim /data/software/kubeadmin_install/kubeadm_config/init-config.yaml

apiVersion: kubeadm.k8s.io/v1beta3
bootstrapTokens:
- groups:
  - system:bootstrappers:kubeadm:default-node-token
  token: abcdef.0123456789abcdef
  ttl: 24h0m0s
  usages:
  - signing
  - authentication
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.200.101   # IP address of the master node
  bindPort: 6443
nodeRegistration:
  criSocket: /var/run/dockershim.sock
  imagePullPolicy: IfNotPresent
  name: k8s-master   # if a domain name is used, make sure it resolves; otherwise use the IP address directly
  taints: null
---
apiServer:
  timeoutForControlPlane: 4m0s
apiVersion: kubeadm.k8s.io/v1beta3
certificatesDir: /etc/kubernetes/pki
clusterName: kubernetes
controllerManager: {}
dns: {}
etcd:
  local:
    dataDir: /var/lib/etcd
imageRepository: registry.aliyuncs.com/google_containers   # changed to a mirror inside China
kind: ClusterConfiguration
kubernetesVersion: 1.23.0
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: 10.244.0.0/16   # added: the Pod subnet
scheduler: {}

[root@k8s-master kubeadmin_install]# kubeadm config images list --config /data/software/
