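The previous article, "A Brief Look at How the Server and Client Obtain the Service Manager Interface in Binder, Android's Inter-Process Communication (IPC) Mechanism", described how the Server role in Android's Binder IPC mechanism obtains the Service Manager remote interface, that is, how the defaultServiceManager function is implemented. Once the Server has the Service Manager remote interface, it adds its own Service to Service Manager, starts itself up, and waits for Client requests. This article walks through the source code to show how the Server startup process works.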

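This article uses a concrete example to explain how a Server starts up in the Binder mechanism. Android provides multimedia playback, and it provides it in the form of a service. Here we analyze the implementation of MediaPlayerService to understand how the Media Server starts.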

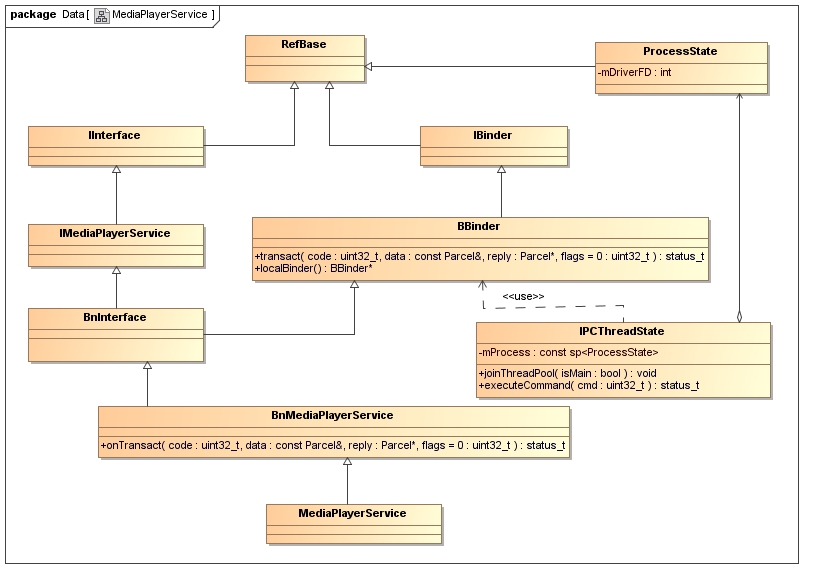

First, take a look at the class diagram of MediaPlayerService, which will make the rest of the discussion easier to follow.

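Our main subject, MediaPlayerService, inherits from the BnMediaPlayerService class. Readers familiar with the Binder mechanism will know that BnMediaPlayerService is a Binder Native class, used to handle Client requests. BnMediaPlayerService inherits from BnInterface<IMediaPlayerService>. BnInterface is a template class defined in frameworks/base/include/binder/IInterface.h: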

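```cpp
template<typename INTERFACE>
class BnInterface : public INTERFACE, public BBinder
{
public:
    virtual sp<IInterface>      queryLocalInterface(const String16& _descriptor);
    virtual const String16&     getInterfaceDescriptor() const;

protected:
    virtual IBinder*            onAsBinder();
};
```

In fact, BnMediaPlayerService does not receive Client requests directly. IPCThreadState receives them, and IPCThreadState in turn relies on the ProcessState class to interact with the Binder driver. For the relationship between IPCThreadState and ProcessState, see the previous article, "A Brief Look at How the Server and Client Obtain the Service Manager Interface in Binder, Android's Inter-Process Communication (IPC) Mechanism"; it is also described further below. After IPCThreadState receives a Client request, it calls the transact function of the BBinder class with the relevant arguments. BBinder::transact eventually calls the onTransact function of BnMediaPlayerService, and that is where the Client request is actually handled.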
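The dispatch chain described above (IPCThreadState calls transact, which forwards to onTransact in the concrete Bn class) can be illustrated with a minimal sketch. Every class and function name below (ToyBBinder, IToyService, ToyBnInterface, ToyBnService, ToyService, dispatch) is hypothetical and exists only for illustration; the real Android classes carry reference counting, Parcel arguments and more.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Hypothetical stand-in for BBinder: transact() is the public entry point
// and forwards to the virtual onTransact() hook.
class ToyBBinder {
public:
    virtual ~ToyBBinder() = default;
    int transact(uint32_t code, const std::string& data, std::string* reply) {
        return onTransact(code, data, reply);
    }
protected:
    virtual int onTransact(uint32_t code, const std::string& data,
                           std::string* reply) = 0;
};

// Hypothetical stand-in for an interface class such as IMediaPlayerService.
class IToyService {
public:
    virtual ~IToyService() = default;
    virtual std::string echo(const std::string& s) = 0;
};

// Same shape as BnInterface<INTERFACE>: inherits the interface and the binder base.
template <typename INTERFACE>
class ToyBnInterface : public INTERFACE, public ToyBBinder {};

// Plays the role of BnMediaPlayerService: decodes the request code.
class ToyBnService : public ToyBnInterface<IToyService> {
public:
    enum : uint32_t { ECHO = 1 };
protected:
    int onTransact(uint32_t code, const std::string& data,
                   std::string* reply) override {
        if (code == ECHO) {
            if (reply) *reply = echo(data);
            return 0;
        }
        return -1;  // unknown request code
    }
};

// Plays the role of MediaPlayerService: the actual implementation.
class ToyService : public ToyBnService {
public:
    std::string echo(const std::string& s) override { return "echo:" + s; }
};

// Plays the role of IPCThreadState: hands a decoded request to transact().
inline std::string dispatch(ToyBBinder& target, uint32_t code,
                            const std::string& data) {
    std::string reply;
    const int status = target.transact(code, data, &reply);
    return status == 0 ? reply : "error";
}
```

Calling `dispatch(service, ToyBnService::ECHO, "hi")` on a `ToyService` instance travels through `transact` and `onTransact` before reaching `echo`, which is the same layering the article attributes to BBinder and BnMediaPlayerService.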

With the class structure of MediaPlayerService in mind, we can move on to the main topic of this article.

First, let's see how MediaPlayerService is started. The code that starts it is in frameworks/base/media/mediaserver/main_mediaserver.cpp:

```cpp
int main(int argc, char** argv)
{
    sp<ProcessState> proc(ProcessState::self());
    sp<IServiceManager> sm = defaultServiceManager();
    LOGI("ServiceManager: %p", sm.get());
    AudioFlinger::instantiate();
    MediaPlayerService::instantiate();
    CameraService::instantiate();
    AudioPolicyService::instantiate();
    ProcessState::self()->startThreadPool();
    IPCThreadState::self()->joinThreadPool();
}
```

Start with the following statement:

```cpp
sp<ProcessState> proc(ProcessState::self());
```

ProcessState::self is implemented as follows:

```cpp
sp<ProcessState> ProcessState::self()
{
    if (gProcess != NULL) return gProcess;

    AutoMutex _l(gProcessMutex);
    if (gProcess == NULL) gProcess = new ProcessState;
    return gProcess;
}
```

It uses these two global variables:

```cpp
Mutex gProcessMutex;
sp<ProcessState> gProcess;
```

The ProcessState constructor looks like this:

```cpp
ProcessState::ProcessState()
    : mDriverFD(open_driver())
    , mVMStart(MAP_FAILED)
    , mManagesContexts(false)
    , mBinderContextCheckFunc(NULL)
    , mBinderContextUserData(NULL)
    , mThreadPoolStarted(false)
    , mThreadPoolSeq(1)
{
    if (mDriverFD >= 0) {
        // XXX Ideally, there should be a specific define for whether we
        // have mmap (or whether we could possibly have the kernel module
        // available).
#if !defined(HAVE_WIN32_IPC)
        // mmap the binder, providing a chunk of virtual address space to receive transactions.
        mVMStart = mmap(0, BINDER_VM_SIZE, PROT_READ, MAP_PRIVATE | MAP_NORESERVE, mDriverFD, 0);
        if (mVMStart == MAP_FAILED) {
            // *sigh*
            LOGE("Using /dev/binder failed: unable to mmap transaction memory.\n");
            close(mDriverFD);
            mDriverFD = -1;
        }
#else
        mDriverFD = -1;
#endif
    }
    if (mDriverFD < 0) {
        // Need to run without the driver, starting our own thread pool.
    }
}
```

First look at the implementation of open_driver, which is also located in frameworks/base/libs/binder/ProcessState.cpp:

```cpp
static int open_driver()
{
    if (gSingleProcess) {
        return -1;
    }

    int fd = open("/dev/binder", O_RDWR);
    if (fd >= 0) {
        fcntl(fd, F_SETFD, FD_CLOEXEC);
        int vers;
#if defined(HAVE_ANDROID_OS)
        status_t result = ioctl(fd, BINDER_VERSION, &vers);
#else
        status_t result = -1;
        errno = EPERM;
#endif
        if (result == -1) {
            LOGE("Binder ioctl to obtain version failed: %s", strerror(errno));
            close(fd);
            fd = -1;
        }
        if (result != 0 || vers != BINDER_CURRENT_PROTOCOL_VERSION) {
            LOGE("Binder driver protocol does not match user space protocol!");
            close(fd);
            fd = -1;
        }
#if defined(HAVE_ANDROID_OS)
        size_t maxThreads = 15;
        result = ioctl(fd, BINDER_SET_MAX_THREADS, &maxThreads);
        if (result == -1) {
            LOGE("Binder ioctl to set max threads failed: %s", strerror(errno));
        }
#endif
    } else {
        LOGW("Opening '/dev/binder' failed: %s\n", strerror(errno));
    }
    return fd;
}
```

For how open is implemented inside the Binder driver, see the earlier article "A Brief Look at How Service Manager Becomes the Daemon of Binder, Android's Inter-Process Communication (IPC) Mechanism"; it is not repeated here. After the /dev/binder device file is opened, the Binder driver creates a struct binder_proc instance for the MediaPlayerService process to maintain that process's context information.

Let's look at what happens when the ioctl file operation executes the BINDER_VERSION command:

```cpp
status_t result = ioctl(fd, BINDER_VERSION, &vers);
```

This call ends up in the binder_ioctl function of the Binder driver. We only look at the logic related to BINDER_VERSION:

```c
static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)
{
    int ret;
    struct binder_proc *proc = filp->private_data;
    struct binder_thread *thread;
    unsigned int size = _IOC_SIZE(cmd);
    void __user *ubuf = (void __user *)arg;

    /*printk(KERN_INFO "binder_ioctl: %d:%d %x %lx\n", proc->pid, current->pid, cmd, arg);*/

    ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);
    if (ret)
        return ret;

    mutex_lock(&binder_lock);
    thread = binder_get_thread(proc);
    if (thread == NULL) {
        ret = -ENOMEM;
        goto err;
    }

    switch (cmd) {
    ......
    case BINDER_VERSION:
        if (size != sizeof(struct binder_version)) {
            ret = -EINVAL;
            goto err;
        }
        if (put_user(BINDER_CURRENT_PROTOCOL_VERSION, &((struct binder_version *)ubuf)->protocol_version)) {
            ret = -EINVAL;
            goto err;
        }
        break;
    ......
    }
    ret = 0;
err:
    ......
    return ret;
}
```

This is simple: it writes BINDER_CURRENT_PROTOCOL_VERSION into the user buffer that the arg parameter points to, then returns. BINDER_CURRENT_PROTOCOL_VERSION is a macro defined in kernel/common/drivers/staging/android/binder.h:

```c
/* This is the current protocol version. */
#define BINDER_CURRENT_PROTOCOL_VERSION 7

/* Use with BINDER_VERSION, driver fills in fields. */
struct binder_version {
    /* driver protocol version -- increment with incompatible change */
    signed long protocol_version;
};
```

One important point: this is the first time the process enters binder_ioctl after opening the /dev/binder device file. The call to binder_get_thread therefore creates a struct binder_thread for the current thread to maintain the thread's context information. For details, see "A Brief Look at How Service Manager Becomes the Daemon of Binder, Android's Inter-Process Communication (IPC) Mechanism".

Next, let's look at what happens when the ioctl file operation executes the BINDER_SET_MAX_THREADS command:

```cpp
result = ioctl(fd, BINDER_SET_MAX_THREADS, &maxThreads);
```

This call ends up in the binder_ioctl function of the Binder driver. We only look at the logic related to BINDER_SET_MAX_THREADS:

```c
static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)
{
    int ret;
    struct binder_proc *proc = filp->private_data;
    struct binder_thread *thread;
    unsigned int size = _IOC_SIZE(cmd);
    void __user *ubuf = (void __user *)arg;

    /*printk(KERN_INFO "binder_ioctl: %d:%d %x %lx\n", proc->pid, current->pid, cmd, arg);*/

    ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);
    if (ret)
        return ret;

    mutex_lock(&binder_lock);
    thread = binder_get_thread(proc);
    if (thread == NULL) {
        ret = -ENOMEM;
        goto err;
    }

    switch (cmd) {
    ......
    case BINDER_SET_MAX_THREADS:
        if (copy_from_user(&proc->max_threads, ubuf, sizeof(proc->max_threads))) {
            ret = -EINVAL;
            goto err;
        }
        break;
    ......
    }
    ret = 0;
err:
    ......
    return ret;
}
```

Here the driver simply copies the value passed from user space into proc->max_threads. Back in the ProcessState constructor, mmap is then used to map the /dev/binder device file into memory. That function is also covered in detail in "A Brief Look at How Service Manager Becomes the Daemon of Binder, Android's Inter-Process Communication (IPC) Mechanism", so it is not repeated here. The BINDER_VM_SIZE macro is defined in ProcessState.cpp:

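```cpp
#define BINDER_VM_SIZE ((1*1024*1024) - (4096 *2))
```

With that, the process-wide unique ProcessState variable gProcess has been created. Back in the main function in frameworks/base/media/mediaserver/main_mediaserver.cpp, the next step is to call defaultServiceManager to obtain the Service Manager remote interface. This is described in detail in the previous article, "A Brief Look at How the Server and Client Obtain the Service Manager Interface in Binder, Android's Inter-Process Communication (IPC) Mechanism", which readers can refer back to.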
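As a quick check of the arithmetic in BINDER_VM_SIZE, the sketch below evaluates the same expression at compile time. The names kBinderVmSize, kAssumedPageSize and binderVmPages are made up for this illustration, and the 4096-byte page size is an assumption taken from the constant that appears in the macro itself.

```cpp
#include <cassert>
#include <cstddef>

// Same expression as the BINDER_VM_SIZE macro quoted in the article.
constexpr std::size_t kBinderVmSize = (1 * 1024 * 1024) - (4096 * 2);

// Assumption for illustration only: pages are 4096 bytes, matching the
// constant used inside the macro.
constexpr std::size_t kAssumedPageSize = 4096;

// Number of assumed-size pages covered by the mapping.
constexpr std::size_t binderVmPages() {
    return kBinderVmSize / kAssumedPageSize;
}

// 1 MiB (1048576 bytes) minus 8 KiB (8192 bytes).
static_assert(kBinderVmSize == 1040384, "1 MiB minus 8 KiB");
static_assert(kBinderVmSize % kAssumedPageSize == 0, "whole number of pages");
```

Under that assumption the mapping requested by mmap is 1 MiB less two pages, that is, 254 pages.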

Next comes MediaPlayerService::instantiate, which adds MediaPlayerService to Service Manager. It is defined in frameworks/base/media/libmediaplayerservice/MediaPlayerService.cpp:

```cpp
void MediaPlayerService::instantiate() {
    defaultServiceManager()->addService(
            String16("media.player"), new MediaPlayerService());
}
```

As explained in the previous article, "A Brief Look at How the Server and Client Obtain the Service Manager Interface in Binder, Android's Inter-Process Communication (IPC) Mechanism", defaultServiceManager actually returns an instance of BpServiceManager. So let's look at the implementation of BpServiceManager::addService, in frameworks/base/libs/binder/IServiceManager.cpp:

```cpp
class BpServiceManager : public BpInterface<IServiceManager>
{
public:
    BpServiceManager(const sp<IBinder>& impl)
        : BpInterface<IServiceManager>(impl)
    {
    }

    ......

    virtual status_t addService(const String16& name, const sp<IBinder>& service)
    {
        Parcel data, reply;
        data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());
        data.writeString16(name);
        data.writeStrongBinder(service);
        status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);
        return err == NO_ERROR ? reply.readExceptionCode() : err;
    }

    ......
};
```

First look at this call:

```cpp
data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());
```

Parcel::writeInterfaceToken is implemented as follows:

```cpp
// Write RPC headers.  (previously just the interface token)
status_t Parcel::writeInterfaceToken(const String16& interface)
{
    writeInt32(IPCThreadState::self()->getStrictModePolicy() |
               STRICT_MODE_PENALTY_GATHER);
    // currently the interface identification token is just its name as a string
    return writeString16(interface);
}
```

Then look at the next call:

```cpp
data.writeString16(name);
```

Moving on:

```cpp
data.writeStrongBinder(service);
```

Parcel::writeStrongBinder is implemented as follows:

```cpp
status_t Parcel::writeStrongBinder(const sp<IBinder>& val)
{
    return flatten_binder(ProcessState::self(), val, this);
}
```

The flattened form of a Binder object is described by struct flat_binder_object:

```c
/*
 * This is the flattened representation of a Binder object for transfer
 * between processes.  The 'offsets' supplied as part of a binder transaction
 * contains offsets into the data where these structures occur.  The Binder
 * driver takes care of re-writing the structure type and data as it moves
 * between processes.
 */
struct flat_binder_object {
    /* 8 bytes for large_flat_header. */
    unsigned long       type;
    unsigned long       flags;

    /* 8 bytes of data. */
    union {
        void            *binder;    /* local object */
        signed long     handle;     /* remote object */
    };

    /* extra data associated with local object */
    void                *cookie;
};
```

Now let's step into the flatten_binder function:

```cpp
status_t flatten_binder(const sp<ProcessState>& proc,
    const sp<IBinder>& binder, Parcel* out)
{
    flat_binder_object obj;

    obj.flags = 0x7f | FLAT_BINDER_FLAG_ACCEPTS_FDS;
    if (binder != NULL) {
        IBinder *local = binder->localBinder();
        if (!local) {
            BpBinder *proxy = binder->remoteBinder();
            if (proxy == NULL) {
                LOGE("null proxy");
            }
            const int32_t handle = proxy ? proxy->handle() : 0;
            obj.type = BINDER_TYPE_HANDLE;
            obj.handle = handle;
            obj.cookie = NULL;
        } else {
            obj.type = BINDER_TYPE_BINDER;
            obj.binder = local->getWeakRefs();
            obj.cookie = local;
        }
    } else {
        obj.type = BINDER_TYPE_BINDER;
        obj.binder = NULL;
        obj.cookie = NULL;
    }

    return finish_flatten_binder(binder, obj, out);
}
```

The function first initializes the flags field:

```cpp
obj.flags = 0x7f | FLAT_BINDER_FLAG_ACCEPTS_FDS;
```

The binder passed in is the MediaPlayerService instance created with new in MediaPlayerService::instantiate, so it is not NULL. And because MediaPlayerService inherits from BBinder, it is a local Binder entity, so binder->localBinder returns a BBinder pointer that is certainly not NULL. The following statements are therefore executed:

```cpp
obj.type = BINDER_TYPE_BINDER;
obj.binder = local->getWeakRefs();
obj.cookie = local;
```

The function then calls finish_flatten_binder to write this flat_binder_object into the Parcel:

```cpp
inline static status_t finish_flatten_binder(
    const sp<IBinder>& binder, const flat_binder_object& flat, Parcel* out)
{
    return out->writeObject(flat, false);
}
```

Parcel::writeObject is implemented as follows:

```cpp
status_t Parcel::writeObject(const flat_binder_object& val, bool nullMetaData)
{
    const bool enoughData = (mDataPos+sizeof(val)) <= mDataCapacity;
    const bool enoughObjects = mObjectsSize < mObjectsCapacity;
    if (enoughData && enoughObjects) {
restart_write:
        *reinterpret_cast<flat_binder_object*>(mData+mDataPos) = val;

        // Need to write meta-data?
        if (nullMetaData || val.binder != NULL) {
            mObjects[mObjectsSize] = mDataPos;
            acquire_object(ProcessState::self(), val, this);
            mObjectsSize++;
        }

        // remember if it's a file descriptor
        if (val.type == BINDER_TYPE_FD) {
            mHasFds = mFdsKnown = true;
        }

        return finishWrite(sizeof(flat_binder_object));
    }

    if (!enoughData) {
        const status_t err = growData(sizeof(val));
        if (err != NO_ERROR) return err;
    }
    if (!enoughObjects) {
        size_t newSize = ((mObjectsSize+2)*3)/2;
        size_t* objects = (size_t*)realloc(mObjects, newSize*sizeof(size_t));
        if (objects == NULL) return NO_MEMORY;
        mObjects = objects;
        mObjectsCapacity = newSize;
    }

    goto restart_write;
}
```

Besides writing the flat_binder_object into the Parcel, this function also records the offset of the object within the Parcel:

```cpp
mObjects[mObjectsSize] = mDataPos;
```

Back in BpServiceManager::addService, the following statement is called next:

```cpp
status_t err = remote()->transact(ADD_SERVICE_TRANSACTION, data, &reply);
```

This enters BpBinder::transact:

```cpp
status_t BpBinder::transact(
    uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)
{
    // Once a binder has died, it will never come back to life.
    if (mAlive) {
        status_t status = IPCThreadState::self()->transact(
            mHandle, code, data, reply, flags);
        if (status == DEAD_OBJECT) mAlive = 0;
        return status;
    }

    return DEAD_OBJECT;
}
```

Next we enter IPCThreadState::transact to see what it does:

```cpp
status_t IPCThreadState::transact(int32_t handle,
                                  uint32_t code, const Parcel& data,
                                  Parcel* reply, uint32_t flags)
{
    status_t err = data.errorCheck();

    flags |= TF_ACCEPT_FDS;

    IF_LOG_TRANSACTIONS() {
        TextOutput::Bundle _b(alog);
        alog << "BC_TRANSACTION thr " << (void*)pthread_self() << " / hand "
            << handle << " / code " << TypeCode(code) << ": "
            << indent << data << dedent << endl;
    }

    if (err == NO_ERROR) {
        LOG_ONEWAY(">>>> SEND from pid %d uid %d %s", getpid(), getuid(),
            (flags & TF_ONE_WAY) == 0 ? "READ REPLY" : "ONE WAY");
        err = writeTransactionData(BC_TRANSACTION, flags, handle, code, data, NULL);
    }

    if (err != NO_ERROR) {
        if (reply) reply->setError(err);
        return (mLastError = err);
    }

    if ((flags & TF_ONE_WAY) == 0) {
        #if 0
        if (code == 4) { // relayout
            LOGI(">>>>>> CALLING transaction 4");
        } else {
            LOGI(">>>>>> CALLING transaction %d", code);
        }
        #endif
        if (reply) {
            err = waitForResponse(reply);
        } else {
            Parcel fakeReply;
            err = waitForResponse(&fakeReply);
        }
        #if 0
        if (code == 4) { // relayout
            LOGI("<<<<<< RETURNING transaction 4");
        } else {
            LOGI("<<<<<< RETURNING transaction %d", code);
        }
        #endif

        IF_LOG_TRANSACTIONS() {
            TextOutput::Bundle _b(alog);
            alog << "BR_REPLY thr " << (void*)pthread_self() << " / hand "
                << handle << ": ";
            if (reply) alog << indent << *reply << dedent << endl;
            else alog << "(none requested)" << endl;
        }
    } else {
        err = waitForResponse(NULL, NULL);
    }

    return err;
}
```

The function first calls writeTransactionData to prepare a struct binder_transaction_data, which will shortly be passed to the Binder driver. The definition of struct binder_transaction_data is described in detail in "A Brief Look at How Service Manager Becomes the Daemon of Binder, Android's Inter-Process Communication (IPC) Mechanism", which is worth rereading. For convenience, the definition is listed again here:

```c
struct binder_transaction_data {
    /* The first two are only used for bcTRANSACTION and brTRANSACTION,
     * identifying the target and contents of the transaction.
     */
    union {
        size_t  handle; /* target descriptor of command transaction */
        void    *ptr;   /* target descriptor of return transaction */
    } target;
    void        *cookie;    /* target object cookie */
    unsigned int    code;   /* transaction command */

    /* General information about the transaction. */
    unsigned int    flags;
    pid_t       sender_pid;
    uid_t       sender_euid;
    size_t      data_size;  /* number of bytes of data */
    size_t      offsets_size;   /* number of bytes of offsets */

    /* If this transaction is inline, the data immediately
     * follows here; otherwise, it ends with a pointer to
     * the data buffer.
     */
    union {
        struct {
            /* transaction data */
            const void  *buffer;
            /* offsets from buffer to flat_binder_object structs */
            const void  *offsets;
        } ptr;
        uint8_t buf[8];
    } data;
};
```

writeTransactionData is implemented as follows:

```cpp
status_t IPCThreadState::writeTransactionData(int32_t cmd, uint32_t binderFlags,
    int32_t handle, uint32_t code, const Parcel& data, status_t* statusBuffer)
{
    binder_transaction_data tr;

    tr.target.handle = handle;
    tr.code = code;
    tr.flags = binderFlags;

    const status_t err = data.errorCheck();
    if (err == NO_ERROR) {
        tr.data_size = data.ipcDataSize();
        tr.data.ptr.buffer = data.ipcData();
        tr.offsets_size = data.ipcObjectsCount()*sizeof(size_t);
        tr.data.ptr.offsets = data.ipcObjects();
    } else if (statusBuffer) {
        tr.flags |= TF_STATUS_CODE;
        *statusBuffer = err;
        tr.data_size = sizeof(status_t);
        tr.data.ptr.buffer = statusBuffer;
        tr.offsets_size = 0;
        tr.data.ptr.offsets = NULL;
    } else {
        return (mLastError = err);
    }

    mOut.writeInt32(cmd);
    mOut.write(&tr, sizeof(tr));

    return NO_ERROR;
}
```

Note that cmd here is BC_TRANSACTION. The function is simple: in this scenario it executes the following statements to initialize the local variable tr:

```cpp
tr.data_size = data.ipcDataSize();
tr.data.ptr.buffer = data.ipcData();
tr.offsets_size = data.ipcObjectsCount()*sizeof(size_t);
tr.data.ptr.offsets = data.ipcObjects();
```

Recalling the earlier steps, the content that tr.data.ptr.buffer points to is what the following calls wrote into the Parcel:

```cpp
writeInt32(IPCThreadState::self()->getStrictModePolicy() | STRICT_MODE_PENALTY_GATHER);
writeString16("android.os.IServiceManager");
writeString16("media.player");
writeStrongBinder(new MediaPlayerService());
```

Back in IPCThreadState::transact, (flags & TF_ONE_WAY) == 0 is true and reply is not NULL, so execution takes the waitForResponse(reply) path. Let's look at the implementation of waitForResponse:

```cpp
status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)
{
    int32_t cmd;
    int32_t err;

    while (1) {
        if ((err=talkWithDriver()) < NO_ERROR) break;
        err = mIn.errorCheck();
        if (err < NO_ERROR) break;
        if (mIn.dataAvail() == 0) continue;

        cmd = mIn.readInt32();

        IF_LOG_COMMANDS() {
            alog << "Processing waitForResponse Command: "
                << getReturnString(cmd) << endl;
        }

        switch (cmd) {
        case BR_TRANSACTION_COMPLETE:
            if (!reply && !acquireResult) goto finish;
            break;

        case BR_DEAD_REPLY:
            err = DEAD_OBJECT;
            goto finish;

        case BR_FAILED_REPLY:
            err = FAILED_TRANSACTION;
            goto finish;

        case BR_ACQUIRE_RESULT:
            {
                LOG_ASSERT(acquireResult != NULL, "Unexpected brACQUIRE_RESULT");
                const int32_t result = mIn.readInt32();
                if (!acquireResult) continue;
                *acquireResult = result ? NO_ERROR : INVALID_OPERATION;
            }
            goto finish;

        case BR_REPLY:
            {
                binder_transaction_data tr;
                err = mIn.read(&tr, sizeof(tr));
                LOG_ASSERT(err == NO_ERROR, "Not enough command data for brREPLY");
                if (err != NO_ERROR) goto finish;

                if (reply) {
                    if ((tr.flags & TF_STATUS_CODE) == 0) {
                        reply->ipcSetDataReference(
                            reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                            tr.data_size,
                            reinterpret_cast<const size_t*>(tr.data.ptr.offsets),
                            tr.offsets_size/sizeof(size_t),
                            freeBuffer, this);
                    } else {
                        err = *static_cast<const status_t*>(tr.data.ptr.buffer);
                        freeBuffer(NULL,
                            reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                            tr.data_size,
                            reinterpret_cast<const size_t*>(tr.data.ptr.offsets),
                            tr.offsets_size/sizeof(size_t), this);
                    }
                } else {
                    freeBuffer(NULL,
                        reinterpret_cast<const uint8_t*>(tr.data.ptr.buffer),
                        tr.data_size,
                        reinterpret_cast<const size_t*>(tr.data.ptr.offsets),
                        tr.offsets_size/sizeof(size_t), this);
                    continue;
                }
            }
            goto finish;

        default:
            err = executeCommand(cmd);
            if (err != NO_ERROR) goto finish;
            break;
        }
    }

finish:
    if (err != NO_ERROR) {
        if (acquireResult) *acquireResult = err;
        if (reply) reply->setError(err);
        mLastError = err;
    }

    return err;
}
```

The function is long, but its main job is to call talkWithDriver to interact with the Binder driver:

```cpp
status_t IPCThreadState::talkWithDriver(bool doReceive)
{
    LOG_ASSERT(mProcess->mDriverFD >= 0, "Binder driver is not opened");

    binder_write_read bwr;

    // Is the read buffer empty?
    const bool needRead = mIn.dataPosition() >= mIn.dataSize();

    // We don't want to write anything if we are still reading
    // from data left in the input buffer and the caller
    // has requested to read the next data.
    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

    bwr.write_size = outAvail;
    bwr.write_buffer = (long unsigned int)mOut.data();

    // This is what we'll read.
    if (doReceive && needRead) {
        bwr.read_size = mIn.dataCapacity();
        bwr.read_buffer = (long unsigned int)mIn.data();
    } else {
        bwr.read_size = 0;
    }

    IF_LOG_COMMANDS() {
        TextOutput::Bundle _b(alog);
        if (outAvail != 0) {
            alog << "Sending commands to driver: " << indent;
            const void* cmds = (const void*)bwr.write_buffer;
            const void* end = ((const uint8_t*)cmds)+bwr.write_size;
            alog << HexDump(cmds, bwr.write_size) << endl;
            while (cmds < end) cmds = printCommand(alog, cmds);
            alog << dedent;
        }
        alog << "Size of receive buffer: " << bwr.read_size
            << ", needRead: " << needRead << ", doReceive: " << doReceive << endl;
    }

    // Return immediately if there is nothing to do.
    if ((bwr.write_size == 0) && (bwr.read_size == 0)) return NO_ERROR;

    bwr.write_consumed = 0;
    bwr.read_consumed = 0;
    status_t err;
    do {
        IF_LOG_COMMANDS() {
            alog << "About to read/write, write size = " << mOut.dataSize() << endl;
        }
#if defined(HAVE_ANDROID_OS)
        if (ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) >= 0)
            err = NO_ERROR;
        else
            err = -errno;
#else
        err = INVALID_OPERATION;
#endif
        IF_LOG_COMMANDS() {
            alog << "Finished read/write, write size = " << mOut.dataSize() << endl;
        }
    } while (err == -EINTR);

    IF_LOG_COMMANDS() {
        alog << "Our err: " << (void*)err << ", write consumed: "
            << bwr.write_consumed << " (of " << mOut.dataSize()
            << "), read consumed: " << bwr.read_consumed << endl;
    }

    if (err >= NO_ERROR) {
        if (bwr.write_consumed > 0) {
            if (bwr.write_consumed < (ssize_t)mOut.dataSize())
                mOut.remove(0, bwr.write_consumed);
            else
                mOut.setDataSize(0);
        }
        if (bwr.read_consumed > 0) {
            mIn.setDataSize(bwr.read_consumed);
            mIn.setDataPosition(0);
        }
        IF_LOG_COMMANDS() {
            TextOutput::Bundle _b(alog);
            alog << "Remaining data size: " << mOut.dataSize() << endl;
            alog << "Received commands from driver: " << indent;
            const void* cmds = mIn.data();
            const void* end = mIn.data() + mIn.dataSize();
            alog << HexDump(cmds, mIn.dataSize()) << endl;
            while (cmds < end) cmds = printReturnCommand(alog, cmds);
            alog << dedent;
        }
        return NO_ERROR;
    }

    return err;
}
```

Finally, ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr) takes us into the binder_ioctl function of the Binder driver. We only look at the logic for cmd equal to BINDER_WRITE_READ:

```c
static long binder_ioctl(struct file *filp, unsigned int cmd, unsigned long arg)
{
    int ret;
    struct binder_proc *proc = filp->private_data;
    struct binder_thread *thread;
    unsigned int size = _IOC_SIZE(cmd);
    void __user *ubuf = (void __user *)arg;

    /*printk(KERN_INFO "binder_ioctl: %d:%d %x %lx\n", proc->pid, current->pid, cmd, arg);*/

    ret = wait_event_interruptible(binder_user_error_wait, binder_stop_on_user_error < 2);
    if (ret)
        return ret;

    mutex_lock(&binder_lock);
    thread = binder_get_thread(proc);
    if (thread == NULL) {
        ret = -ENOMEM;
        goto err;
    }

    switch (cmd) {
    case BINDER_WRITE_READ: {
        struct binder_write_read bwr;
        if (size != sizeof(struct binder_write_read)) {
            ret = -EINVAL;
            goto err;
        }
        if (copy_from_user(&bwr, ubuf, sizeof(bwr))) {
            ret = -EFAULT;
            goto err;
        }
        if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)
            printk(KERN_INFO "binder: %d:%d write %ld at %08lx, read %ld at %08lx\n",
                proc->pid, thread->pid, bwr.write_size, bwr.write_buffer, bwr.read_size, bwr.read_buffer);
        if (bwr.write_size > 0) {
            ret = binder_thread_write(proc, thread, (void __user *)bwr.write_buffer, bwr.write_size, &bwr.write_consumed);
            if (ret < 0) {
                bwr.read_consumed = 0;
                if (copy_to_user(ubuf, &bwr, sizeof(bwr)))
                    ret = -EFAULT;
                goto err;
            }
        }
        if (bwr.read_size > 0) {
            ret = binder_thread_read(proc, thread, (void __user *)bwr.read_buffer, bwr.read_size, &bwr.read_consumed, filp->f_flags & O_NONBLOCK);
            if (!list_empty(&proc->todo))
                wake_up_interruptible(&proc->wait);
            if (ret < 0) {
                if (copy_to_user(ubuf, &bwr, sizeof(bwr)))
                    ret = -EFAULT;
                goto err;
            }
        }
        if (binder_debug_mask & BINDER_DEBUG_READ_WRITE)
            printk(KERN_INFO "binder: %d:%d wrote %ld of %ld, read return %ld of %ld\n",
                proc->pid, thread->pid, bwr.write_consumed, bwr.write_size, bwr.read_consumed, bwr.read_size);
        if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {
            ret = -EFAULT;
            goto err;
        }
        break;
    }
    ......
    }
    ret = 0;
err:
    ......
    return ret;
}
```

The function first copies the argument passed from user space into the local variable bwr. Here bwr.write_size > 0 is true, so binder_thread_write is called. We only look at the BC_TRANSACTION logic:

```c
int
binder_thread_write(struct binder_proc *proc, struct binder_thread *thread,
                    void __user *buffer, int size, signed long *consumed)
{
    uint32_t cmd;
    void __user *ptr = buffer + *consumed;
    void __user *end = buffer + size;

    while (ptr < end && thread->return_error == BR_OK) {
        if (get_user(cmd, (uint32_t __user *)ptr))
            return -EFAULT;
        ptr += sizeof(uint32_t);
        if (_IOC_NR(cmd) < ARRAY_SIZE(binder_stats.bc)) {
            binder_stats.bc[_IOC_NR(cmd)]++;
            proc->stats.bc[_IOC_NR(cmd)]++;
            thread->stats.bc[_IOC_NR(cmd)]++;
        }
        switch (cmd) {
        .....
        case BC_TRANSACTION:
        case BC_REPLY: {
            struct binder_transaction_data tr;

            if (copy_from_user(&tr, ptr, sizeof(tr)))
                return -EFAULT;
            ptr += sizeof(tr);
            binder_transaction(proc, thread, &tr, cmd == BC_REPLY);
            break;
        }
        ......
        }
        *consumed = ptr - buffer;
    }
    return 0;
}
```

It copies the transaction arguments from user space into the local variable tr and then calls binder_transaction for further processing. Unrelated code is omitted below:

```c
static void
binder_transaction(struct binder_proc *proc, struct binder_thread *thread,
                   struct binder_transaction_data *tr, int reply)
{
    struct binder_transaction *t;
    struct binder_work *tcomplete;
    size_t *offp, *off_end;
    struct binder_proc *target_proc;
    struct binder_thread *target_thread = NULL;
    struct binder_node *target_node = NULL;
    struct list_head *target_list;
    wait_queue_head_t *target_wait;
    struct binder_transaction *in_reply_to = NULL;
    struct binder_transaction_log_entry *e;
    uint32_t return_error;

    ......

    if (reply) {
        ......
    } else {
        if (tr->target.handle) {
            ......
        } else {
            target_node = binder_context_mgr_node;
            if (target_node == NULL) {
                return_error = BR_DEAD_REPLY;
                goto err_no_context_mgr_node;
            }
        }
        ......
        target_proc = target_node->proc;
        if (target_proc == NULL) {
            return_error = BR_DEAD_REPLY;
            goto err_dead_binder;
        }
        ......
    }
    if (target_thread) {
        ......
    } else {
        target_list = &target_proc->todo;
        target_wait = &target_proc->wait;
    }

    ......

    /* TODO: reuse incoming transaction for reply */
    t = kzalloc(sizeof(*t), GFP_KERNEL);
    if (t == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_alloc_t_failed;
    }
    ......

    tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL);
    if (tcomplete == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_alloc_tcomplete_failed;
    }

    ......

    if (!reply && !(tr->flags & TF_ONE_WAY))
        t->from = thread;
    else
        t->from = NULL;
    t->sender_euid = proc->tsk->cred->euid;
    t->to_proc = target_proc;
    t->to_thread = target_thread;
    t->code = tr->code;
    t->flags = tr->flags;
    t->priority = task_nice(current);
    t->buffer = binder_alloc_buf(target_proc, tr->data_size,
        tr->offsets_size, !reply && (t->flags & TF_ONE_WAY));
    if (t->buffer == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_binder_alloc_buf_failed;
    }
    t->buffer->allow_user_free = 0;
    t->buffer->debug_id = t->debug_id;
    t->buffer->transaction = t;
    t->buffer->target_node = target_node;
    if (target_node)
        binder_inc_node(target_node, 1, 0, NULL);

    offp = (size_t *)(t->buffer->data + ALIGN(tr->data_size, sizeof(void *)));

    if (copy_from_user(t->buffer->data, tr->data.ptr.buffer, tr->data_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
    if (copy_from_user(offp, tr->data.ptr.offsets, tr->offsets_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
    ......

    off_end = (void *)offp + tr->offsets_size;
    for (; offp < off_end; offp++) {
        struct flat_binder_object *fp;
        ......
        fp = (struct flat_binder_object *)(t->buffer->data + *offp);
        switch (fp->type) {
        case BINDER_TYPE_BINDER:
        case BINDER_TYPE_WEAK_BINDER: {
            struct binder_ref *ref;
            struct binder_node *node = binder_get_node(proc, fp->binder);
            if (node == NULL) {
                node = binder_new_node(proc, fp->binder, fp->cookie);
                if (node == NULL) {
                    return_error = BR_FAILED_REPLY;
                    goto err_binder_new_node_failed;
                }
                node->min_priority = fp->flags & FLAT_BINDER_FLAG_PRIORITY_MASK;
                node->accept_fds = !!(fp->flags & FLAT_BINDER_FLAG_ACCEPTS_FDS);
            }
            if (fp->cookie != node->cookie) {
                ......
                goto err_binder_get_ref_for_node_failed;
            }
            ref = binder_get_ref_for_node(target_proc, node);
            if (ref == NULL) {
                return_error = BR_FAILED_REPLY;
                goto err_binder_get_ref_for_node_failed;
            }
            if (fp->type == BINDER_TYPE_BINDER)
                fp->type = BINDER_TYPE_HANDLE;
            else
                fp->type = BINDER_TYPE_WEAK_HANDLE;
            fp->handle = ref->desc;
            binder_inc_ref(ref, fp->type == BINDER_TYPE_HANDLE, &thread->todo);
            ......
        } break;
        ......
        }
    }

    if (reply) {
        ......
    } else if (!(t->flags & TF_ONE_WAY)) {
        BUG_ON(t->buffer->async_transaction != 0);
        t->need_reply = 1;
        t->from_parent = thread->transaction_stack;
        thread->transaction_stack = t;
    } else {
        ......
    }
    t->work.type = BINDER_WORK_TRANSACTION;
    list_add_tail(&t->work.entry, target_list);
    tcomplete->type = BINDER_WORK_TRANSACTION_COMPLETE;
    list_add_tail(&tcomplete->entry, &thread->todo);
    if (target_wait)
        wake_up_interruptible(target_wait);
    return;
    ......
}
```

In this scenario the target is Service Manager, so the target variables are set as follows:

```c
target_node = binder_context_mgr_node;
target_proc = target_node->proc;
target_list = &target_proc->todo;
target_wait = &target_proc->wait;
```

Next, a pending transaction t and a pending completion work item tcomplete are allocated and initialized:

```c
    /* TODO: reuse incoming transaction for reply */
    t = kzalloc(sizeof(*t), GFP_KERNEL);
    if (t == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_alloc_t_failed;
    }
    ......

    tcomplete = kzalloc(sizeof(*tcomplete), GFP_KERNEL);
    if (tcomplete == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_alloc_tcomplete_failed;
    }

    ......

    if (!reply && !(tr->flags & TF_ONE_WAY))
        t->from = thread;
    else
        t->from = NULL;
    t->sender_euid = proc->tsk->cred->euid;
    t->to_proc = target_proc;
    t->to_thread = target_thread;
    t->code = tr->code;
    t->flags = tr->flags;
    t->priority = task_nice(current);
    t->buffer = binder_alloc_buf(target_proc, tr->data_size,
        tr->offsets_size, !reply && (t->flags & TF_ONE_WAY));
    if (t->buffer == NULL) {
        return_error = BR_FAILED_REPLY;
        goto err_binder_alloc_buf_failed;
    }
    t->buffer->allow_user_free = 0;
    t->buffer->debug_id = t->debug_id;
    t->buffer->transaction = t;
    t->buffer->target_node = target_node;
    if (target_node)
        binder_inc_node(target_node, 1, 0, NULL);

    offp = (size_t *)(t->buffer->data + ALIGN(tr->data_size, sizeof(void *)));

    if (copy_from_user(t->buffer->data, tr->data.ptr.buffer, tr->data_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
    if (copy_from_user(offp, tr->data.ptr.offsets, tr->offsets_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
```

Note that the buffer is allocated from target_proc, and the transaction data and offsets are then copied from user space into it:

```c
    t->buffer = binder_alloc_buf(target_proc, tr->data_size,
        tr->offsets_size, !reply && (t->flags & TF_ONE_WAY));

    if (copy_from_user(t->buffer->data, tr->data.ptr.buffer, tr->data_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
    if (copy_from_user(offp, tr->data.ptr.offsets, tr->offsets_size)) {
        ......
        return_error = BR_FAILED_REPLY;
        goto err_copy_data_failed;
    }
```

Since the target node is about to be used, its reference count is incremented:

```c
    if (target_node)
        binder_inc_node(target_node, 1, 0, NULL);
```

Then the Binder object carried in the data is processed:

```c
        switch (fp->type) {
        case BINDER_TYPE_BINDER:
        case BINDER_TYPE_WEAK_BINDER: {
            struct binder_ref *ref;
            struct binder_node *node = binder_get_node(proc, fp->binder);
            if (node == NULL) {
                node = binder_new_node(proc, fp->binder, fp->cookie);
                if (node == NULL) {
                    return_error = BR_FAILED_REPLY;
                    goto err_binder_new_node_failed;
                }
                node->min_priority = fp->flags & FLAT_BINDER_FLAG_PRIORITY_MASK;
                node->accept_fds = !!(fp->flags & FLAT_BINDER_FLAG_ACCEPTS_FDS);
            }
            if (fp->cookie != node->cookie) {
                ......
                goto err_binder_get_ref_for_node_failed;
            }
            ref = binder_get_ref_for_node(target_proc, node);
            if (ref == NULL) {
                return_error = BR_FAILED_REPLY;
                goto err_binder_get_ref_for_node_failed;
            }
            if (fp->type == BINDER_TYPE_BINDER)
                fp->type = BINDER_TYPE_HANDLE;
            else
                fp->type = BINDER_TYPE_WEAK_HANDLE;
            fp->handle = ref->desc;
            binder_inc_ref(ref, fp->type == BINDER_TYPE_HANDLE, &thread->todo);
            ......
        } break;
```

The Binder entity MediaPlayerService is now being handed to target_proc, that is, to Service Manager, to manage. In other words, Service Manager needs to reference MediaPlayerService. So binder_get_ref_for_node creates a reference to MediaPlayerService, and binder_inc_ref increments the reference count so that the reference is not destroyed while it is still in use. Note that at this point the flat_binder_object in t->buffer has changed: its type is now BINDER_TYPE_HANDLE and its handle is ref->desc. This is because the flat_binder_object is ultimately delivered to Service Manager, and Service Manager can only refer to this Binder entity through a handle value.
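The rewrite step described above (walk the offsets array, find each flattened object in the copied buffer, and replace a local entity with a handle that is valid in the target process) can be modelled in user space. The sketch below is a toy model only: ToyFlatObject, ToyRefTable, appendObject, translateObjects and readObject are hypothetical names, the type constants are placeholders rather than the driver's real values, and the handle numbering (starting at 1, with 0 left for Service Manager) is a simplifying assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <map>
#include <vector>

// Placeholder type tags; the real values come from the binder driver header.
enum ToyType : uint32_t { TOY_TYPE_BINDER = 1, TOY_TYPE_HANDLE = 2 };

// Simplified stand-in for flat_binder_object.
struct ToyFlatObject {
    uint32_t type;
    uintptr_t binder_or_handle;  // local address before translation, handle after
    uintptr_t cookie;
};

// Per-target-process table mapping a node to its handle (like ref->desc).
class ToyRefTable {
public:
    uint32_t refFor(uintptr_t node) {
        auto it = refs_.find(node);
        if (it != refs_.end()) return it->second;  // reuse an existing reference
        const uint32_t desc = next_++;
        refs_[node] = desc;
        return desc;
    }
private:
    std::map<uintptr_t, uint32_t> refs_;
    uint32_t next_ = 1;  // assumption: 0 is left for Service Manager
};

// Like Parcel::writeObject: append the object and remember where it landed.
inline void appendObject(std::vector<uint8_t>& buffer,
                         std::vector<std::size_t>& offsets,
                         const ToyFlatObject& obj) {
    offsets.push_back(buffer.size());
    const uint8_t* p = reinterpret_cast<const uint8_t*>(&obj);
    buffer.insert(buffer.end(), p, p + sizeof(obj));
}

inline ToyFlatObject readObject(const std::vector<uint8_t>& buffer,
                                std::size_t offset) {
    ToyFlatObject obj;
    std::memcpy(&obj, buffer.data() + offset, sizeof(obj));
    return obj;
}

// Like the loop in binder_transaction: rewrite every local entity in place.
inline void translateObjects(std::vector<uint8_t>& buffer,
                             const std::vector<std::size_t>& offsets,
                             ToyRefTable& targetRefs) {
    for (std::size_t off : offsets) {
        ToyFlatObject obj = readObject(buffer, off);
        if (obj.type == TOY_TYPE_BINDER) {
            obj.type = TOY_TYPE_HANDLE;
            obj.binder_or_handle = targetRefs.refFor(obj.binder_or_handle);
            std::memcpy(buffer.data() + off, &obj, sizeof(obj));
        }
    }
}
```

The offsets array is what lets the translation step find objects without parsing the rest of the payload, which is why Parcel::writeObject records mDataPos for each object it writes.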

Finally, the pending transaction is added to the target_list list:

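```c
list_add_tail(&t->work.entry, target_list);
```

The pending completion work item is added to the todo list of the current thread:

```c
list_add_tail(&tcomplete->entry, &thread->todo);
```

Then the target process, Service Manager, is woken up if it is waiting:

```c
if (target_wait)
    wake_up_interruptible(target_wait);
```

For now we set aside what happens after Service Manager is woken up and continue with the MediaPlayerService startup process. We will come back to Service Manager afterwards.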

Back in binder_ioctl, bwr.read_size > 0 is true, so execution enters binder_thread_read:

```c
static int
binder_thread_read(struct binder_proc *proc, struct binder_thread *thread,
                   void __user *buffer, int size, signed long *consumed, int non_block)
{
    void __user *ptr = buffer + *consumed;
    void __user *end = buffer + size;

    int ret = 0;
    int wait_for_proc_work;

    if (*consumed == 0) {
        if (put_user(BR_NOOP, (uint32_t __user *)ptr))
            return -EFAULT;
        ptr += sizeof(uint32_t);
    }

retry:
    wait_for_proc_work = thread->transaction_stack == NULL && list_empty(&thread->todo);

    .......

    if (wait_for_proc_work) {
        .......
    } else {
        if (non_block) {
            if (!binder_has_thread_work(thread))
                ret = -EAGAIN;
        } else
            ret = wait_event_interruptible(thread->wait, binder_has_thread_work(thread));
    }

    ......

    while (1) {
        uint32_t cmd;
        struct binder_transaction_data tr;
        struct binder_work *w;
        struct binder_transaction *t = NULL;

        if (!list_empty(&thread->todo))
            w = list_first_entry(&thread->todo, struct binder_work, entry);
        else if (!list_empty(&proc->todo) && wait_for_proc_work)
            w = list_first_entry(&proc->todo, struct binder_work, entry);
        else {
            if (ptr - buffer == 4 && !(thread->looper & BINDER_LOOPER_STATE_NEED_RETURN)) /* no data added */
                goto retry;
            break;
        }

        if (end - ptr < sizeof(tr) + 4)
            break;

        switch (w->type) {
        ......
        case BINDER_WORK_TRANSACTION_COMPLETE: {
            cmd = BR_TRANSACTION_COMPLETE;
            if (put_user(cmd, (uint32_t __user *)ptr))
                return -EFAULT;
            ptr += sizeof(uint32_t);

            binder_stat_br(proc, thread, cmd);
            if (binder_debug_mask & BINDER_DEBUG_TRANSACTION_COMPLETE)
                printk(KERN_INFO "binder: %d:%d BR_TRANSACTION_COMPLETE\n",
                    proc->pid, thread->pid);

            list_del(&w->entry);
            kfree(w);
            binder_stats.obj_deleted[BINDER_STAT_TRANSACTION_COMPLETE]++;
        } break;
        ......
        }

        if (!t)
            continue;

        ......
    }

done:
    ......
    return 0;
}
```

Here neither thread->transaction_stack nor thread->todo is empty, so wait_for_proc_work is false. Because thread->todo is not empty, binder_has_thread_work returns true, so although the thread calls wait_event_interruptible, it does not go to sleep and simply continues executing.
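The decision made at the retry label can be written out as a small predicate. The sketch below is a toy model with hypothetical names (ToyThreadState, WaitQueue, waitForProcWork, queueToWaitOn); it only mirrors the wait_for_proc_work expression quoted above and ignores the other conditions that the real driver checks.

```cpp
#include <cassert>
#include <cstddef>

// Toy snapshot of the per-thread state that binder_thread_read inspects.
struct ToyThreadState {
    bool hasTransactionStack;  // thread->transaction_stack != NULL
    std::size_t todoCount;     // number of work items on thread->todo
};

enum class WaitQueue { Thread, Process };

// Mirrors: thread->transaction_stack == NULL && list_empty(&thread->todo)
inline bool waitForProcWork(const ToyThreadState& t) {
    return !t.hasTransactionStack && t.todoCount == 0;
}

// A thread with its own pending state waits on its own queue; an idle
// thread waits on the process-wide queue.
inline WaitQueue queueToWaitOn(const ToyThreadState& t) {
    return waitForProcWork(t) ? WaitQueue::Process : WaitQueue::Thread;
}
```

For the MediaPlayerService thread at this point, `ToyThreadState{true, 1}` (a transaction on the stack plus the tcomplete item) selects the thread queue, while an idle thread modelled as `ToyThreadState{false, 0}` selects the process queue.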

Because thread->todo is not empty, the following statements are executed:

    if (!list_empty(&thread->todo))
        w = list_first_entry(&thread->todo, struct binder_work, entry);

    switch (w->type) {
    ......
    case BINDER_WORK_TRANSACTION_COMPLETE: {
        cmd = BR_TRANSACTION_COMPLETE;
        if (put_user(cmd, (uint32_t __user *)ptr))
            return -EFAULT;
        ptr += sizeof(uint32_t);

        ......
        list_del(&w->entry);
        kfree(w);
    } break;
    ......
    }

Before binder_ioctl returns to user space, it copies the consumption counts back to user space:

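    if (copy_to_user(ubuf, &bwr, sizeof(bwr))) {
        ret = -EFAULT;
        goto err;
    }

Back in user space, in IPCThreadState::talkWithDriver, the result is handled as follows:

    if (err >= NO_ERROR) {
        if (bwr.write_consumed > 0) {
            if (bwr.write_consumed < (ssize_t)mOut.dataSize())
                mOut.remove(0, bwr.write_consumed);
            else
                mOut.setDataSize(0);
        }
        if (bwr.read_consumed > 0) {
            mIn.setDataSize(bwr.read_consumed);
            mIn.setDataPosition(0);
        }
        ......
    }

First, the data in mOut is cleared:

    mOut.setDataSize(0);

Then the size of the data just read is recorded in mIn and the read position is reset:

    mIn.setDataSize(bwr.read_consumed);
    mIn.setDataPosition(0);

Control then returns to IPCThreadState::waitForResponse, which reads the first command from mIn. It is BR_NOOP, which requires no action, so talkWithDriver is called again. This time, after the following statement is executed: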

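    const bool needRead = mIn.dataPosition() >= mIn.dataSize();

needRead is false, because mIn still holds the unread BR_TRANSACTION_COMPLETE command. Then, after the following statement is executed: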

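    const size_t outAvail = (!doReceive || needRead) ? mOut.dataSize() : 0;

outAvail is 0, so both bwr.write_size and bwr.read_size are 0 and talkWithDriver returns without calling into the driver. IPCThreadState::waitForResponse then reads the next command from mIn, which is BR_TRANSACTION_COMPLETE:

    switch (cmd) {
    case BR_TRANSACTION_COMPLETE:
        if (!reply && !acquireResult) goto finish;
        break;
    ......
    }

Because reply is not NULL in this call, the loop in waitForResponse does not finish and talkWithDriver is entered once more. This time needRead is true while outAvail is still 0, so bwr.read_size is nonzero and bwr.write_size is 0. The thread therefore calls: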

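    ioctl(mProcess->mDriverFD, BINDER_WRITE_READ, &bwr)

This enters binder_ioctl in the Binder driver again. Because bwr.write_size is 0 and bwr.read_size is nonzero, execution goes straight to binder_thread_read. Now thread->transaction_stack is still non-NULL but thread->todo is empty, so the thread goes to sleep in:

    wait_event_interruptible(thread->wait, binder_has_thread_work(thread));

and waits for Service Manager to wake it up.

Now we can return to the wake-up of Service Manager, picking up where the earlier article 浅谈Service Manager成为Android进程间通信(IPC)机制Binder守护进程之路 left off. At that point, Service Manager was sleeping inside binder_thread_read, in a call to wait_event_interruptible_exclusive. Once it is woken up by the MediaPlayerService process as described above, it continues executing binder_thread_read: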

    static int
    binder_thread_read(struct binder_proc *proc, struct binder_thread *thread,
                       void __user *buffer, int size, signed long *consumed, int non_block)
    {
        void __user *ptr = buffer + *consumed;
        void __user *end = buffer + size;

        int ret = 0;
        int wait_for_proc_work;

        if (*consumed == 0) {
            if (put_user(BR_NOOP, (uint32_t __user *)ptr))
                return -EFAULT;
            ptr += sizeof(uint32_t);
        }

    retry:
        wait_for_proc_work = thread->transaction_stack == NULL && list_empty(&thread->todo);

        ......

        if (wait_for_proc_work) {
            ......
            if (non_block) {
                if (!binder_has_proc_work(proc, thread))
                    ret = -EAGAIN;
            } else
                ret = wait_event_interruptible_exclusive(proc->wait, binder_has_proc_work(proc, thread));
        } else {
            ......
        }

        ......

        while (1) {
            uint32_t cmd;
            struct binder_transaction_data tr;
            struct binder_work *w;
            struct binder_transaction *t = NULL;

            if (!list_empty(&thread->todo))
                w = list_first_entry(&thread->todo, struct binder_work, entry);
            else if (!list_empty(&proc->todo) && wait_for_proc_work)
                w = list_first_entry(&proc->todo, struct binder_work, entry);
            else {
                if (ptr - buffer == 4 && !(thread->looper & BINDER_LOOPER_STATE_NEED_RETURN)) /* no data added */
                    goto retry;
                break;
            }

            if (end - ptr < sizeof(tr) + 4)
                break;

            switch (w->type) {
            case BINDER_WORK_TRANSACTION: {
                t = container_of(w, struct binder_transaction, work);
            } break;
            ......
            }

            if (!t)
                continue;

            BUG_ON(t->buffer == NULL);
            if (t->buffer->target_node) {
                struct binder_node *target_node = t->buffer->target_node;
                tr.target.ptr = target_node->ptr;
                tr.cookie = target_node->cookie;
                ......
                cmd = BR_TRANSACTION;
            } else {
                ......
            }
            tr.code = t->code;
            tr.flags = t->flags;
            tr.sender_euid = t->sender_euid;

            if (t->from) {
                struct task_struct *sender = t->from->proc->tsk;
                tr.sender_pid = task_tgid_nr_ns(sender, current->nsproxy->pid_ns);
            } else {
                tr.sender_pid = 0;
            }

            tr.data_size = t->buffer->data_size;
            tr.offsets_size = t->buffer->offsets_size;
            tr.data.ptr.buffer = (void *)t->buffer->data + proc->user_buffer_offset;
            tr.data.ptr.offsets = tr.data.ptr.buffer + ALIGN(t->buffer->data_size, sizeof(void *));

            if (put_user(cmd, (uint32_t __user *)ptr))
                return -EFAULT;
            ptr += sizeof(uint32_t);
            if (copy_to_user(ptr, &tr, sizeof(tr)))
                return -EFAULT;
            ptr += sizeof(tr);

            ......

            list_del(&t->work.entry);
            t->buffer->allow_user_free = 1;
            if (cmd == BR_TRANSACTION && !(t->flags & TF_ONE_WAY)) {
                t->to_parent = thread->transaction_stack;
                t->to_thread = thread;
                thread->transaction_stack = t;
            } else {
                t->buffer->transaction = NULL;
                kfree(t);
                binder_stats.obj_deleted[BINDER_STAT_TRANSACTION]++;
            }
            break;
        }

    done:
        ......
        return 0;
    }

After Service Manager is woken up, it enters the while loop and starts processing the transaction. Here wait_for_proc_work equals 1 and proc->todo is not empty, so the first work item is taken from the proc->todo list:

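    w = list_first_entry(&proc->todo, struct binder_work, entry);

The work item is of type BINDER_WORK_TRANSACTION, so the transaction is obtained from it:

    t = container_of(w, struct binder_transaction, work);

Next, the data in transaction t is copied into the local variable tr, a struct binder_transaction_data:

    if (t->buffer->target_node) {
        struct binder_node *target_node = t->buffer->target_node;
        tr.target.ptr = target_node->ptr;
        tr.cookie = target_node->cookie;
        ......
        cmd = BR_TRANSACTION;
    } else {
        ......
    }
    tr.code = t->code;
    tr.flags = t->flags;
    tr.sender_euid = t->sender_euid;

    if (t->from) {
        struct task_struct *sender = t->from->proc->tsk;
        tr.sender_pid = task_tgid_nr_ns(sender, current->nsproxy->pid_ns);
    } else {
        tr.sender_pid = 0;
    }

    tr.data_size = t->buffer->data_size;
    tr.offsets_size = t->buffer->offsets_size;
    tr.data.ptr.buffer = (void *)t->buffer->data + proc->user_buffer_offset;
    tr.data.ptr.offsets = tr.data.ptr.buffer + ALIGN(t->buffer->data_size, sizeof(void *));

The last two assignments deserve special attention:

    tr.data.ptr.buffer = (void *)t->buffer->data + proc->user_buffer_offset;
    tr.data.ptr.offsets = tr.data.ptr.buffer + ALIGN(t->buffer->data_size, sizeof(void *));

t->buffer->data is a kernel-space address. Adding proc->user_buffer_offset converts it into the user-space address at which the same buffer is visible to the Service Manager process, so the payload itself does not have to be copied again.

Next, the contents of tr are copied into the buffer passed in by the user; the pointer ptr points into this user buffer: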

    if (put_user(cmd, (uint32_t __user *)ptr))
        return -EFAULT;
    ptr += sizeof(uint32_t);
    if (copy_to_user(ptr, &tr, sizeof(tr)))
        return -EFAULT;
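The pointer arithmetic used when filling in tr.data.ptr.buffer and tr.data.ptr.offsets can be illustrated with plain integers. The sketch below is a user-space approximation only: `alignUp`, `UserView` and `toUserView` are hypothetical names, addresses are modelled as `std::uintptr_t`, and the offset is assumed to be a signed difference between the user-space and kernel-space addresses of the same buffer.

```cpp
#include <cstddef>
#include <cstdint>

// Round size up to a multiple of align (align must be a power of two),
// in the spirit of the kernel's ALIGN() macro.
constexpr std::uintptr_t alignUp(std::uintptr_t size, std::uintptr_t align) {
    return (size + align - 1) & ~(align - 1);
}

// Hypothetical result type: the two addresses handed to user space.
struct UserView {
    std::uintptr_t buffer;   // models tr.data.ptr.buffer
    std::uintptr_t offsets;  // models tr.data.ptr.offsets
};

// kernelData models t->buffer->data, userBufferOffset models proc->user_buffer_offset,
// dataSize models t->buffer->data_size.
inline UserView toUserView(std::uintptr_t kernelData,
                           std::ptrdiff_t userBufferOffset,
                           std::size_t dataSize) {
    UserView v;
    // The same buffer seen from user space: kernel address shifted by a fixed offset.
    v.buffer = kernelData + static_cast<std::uintptr_t>(userBufferOffset);
    // The offsets array starts right after the payload, rounded up to pointer size.
    v.offsets = v.buffer + alignUp(dataSize, sizeof(void*));
    return v;
}
```

For example, `toUserView(0x5000, -0x1000, 10)` yields a buffer address of `0x4000`, with the offsets array starting at `0x4000` plus 10 rounded up to the pointer size. The point of the design is that only the small tr descriptor is copied with copy_to_user, while the payload is reached through the translated address.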
