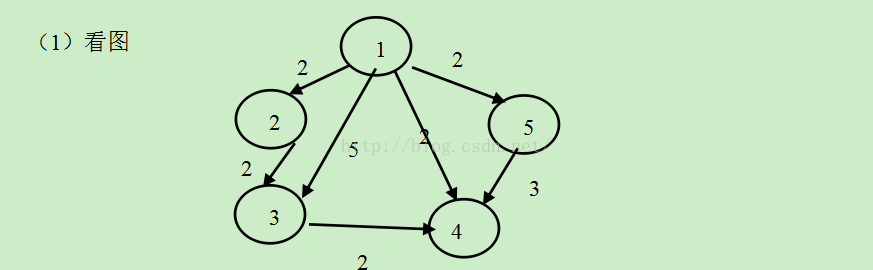

edge.txt (边数据)

1 2 2

1 3 5

1 4 1

2 3 2

3 4 2

4 5 3

5 1 2

vertex.txt(顶点数据)

1 2

1 3

1 4

2 3

3 4

4 6

5 1

package main.scala.com.spark.demo.com.spark.graphx

import java.io.PrintWriter

import java.io.File

import org.apache.spark.graphx.{VertexRDD, Edge, Graph}

import org.apache.spark.rdd.RDD

import org.apache.spark.{SparkConf, SparkContext}

import scala.collection.immutable.HashSet

/**

* Created by root on 15-10-18.

*/

object MultiPointshortestPathFinal {

def main(args: Array[String]) {

val conf = new SparkConf().setAppName("shortestpath").setMaster("local")

val sc = new SparkContext(conf)

val edgeFile:RDD[String] = sc.textFile("hdfs://127.0.0.1:9000/data01/edge.txt")

val vertexFile:RDD[String] = sc.textFile("hdfs://127.0.0.1:9000/data01/vertex.txt")

//edge

val edge = edgeFile.map { e =>

val fields = e.split(" ")

Edge(fields(0).toLong,fields(1).toLong,fields(2).toLong)

}

//vertex

val vertex = vertexFile.map{e=>

val fields = e.split(" ")

(fields(0).toLong,fields(1))

}

val graph = Graph(vertex,edge,"").persist()

// println(graph.edges.collect.mkString("\n"))

//用pregel计算最短路径

// Initialize the graph such that all vertices except the root have distance infinity.

//初始化各节点到原点的距离

val vset = scala.collection.mutable.Set(1,2,3,4,5);

// var verticesArr = VertexRDD[10]

for(sid <- vset){

val sourceId = sid

val initialGraph = graph.mapVertices((id, _) => if (id == sourceId) 0.0 else Double.PositiveInfinity)

//begin

val sssp = initialGraph.pregel(Double.PositiveInfinity)(

// Vertex Program,节点处理消息的函数,dist为原节点属性(Double),newDist为消息类型(Double)

(id, dist, newDist) => math.min(dist, newDist),

// Send Message,发送消息函数,返回结果为(目标节点id,消息(即最短距离))

triplet => {

if (triplet.srcAttr + triplet.attr < triplet.dstAttr) {

Iterator((triplet.dstId, triplet.srcAttr + triplet.attr))

} else {

Iterator.empty

}

},

//Merge Message,对消息进行合并的操作,类似于Hadoop中的combiner

(a, b) => math.min(a, b)

)//over

println(sssp.vertices.collect.mkString("\n"))

sssp.vertices.saveAsTextFile("hdfs://127.0.0.1:9000/data01/short1"+sid+".txt")

println("vertexInfo")

println(vertex.collect.mkString("\n"))

println("sid==>>"+sid)

}

}

}

val edgeFile:RDD[String] = sc.textFile("hdfs://127.0.0.1:9000/data01/edge.txt")

val vertexFile:RDD[String] = sc.textFile("hdfs://127.0.0.1:9000/data01/vertex.txt")

//edge

val edge = edgeFile.map { e =>

val fields = e.split(" ")

Edge(fields(0).toLong,fields(1).toLong,fields(2).toLong)

}

//vertex

val vertex = vertexFile.map{e=>

val fields = e.split(" ")

(fields(0).toLong,fields(1))

}

val graph = Graph(vertex,edge,"").persist()

// println(graph.edges.collect.mkString("\n"))

//用pregel计算最短路径

// Initialize the graph such that all vertices except the root have distance infinity.

//初始化各节点到原点的距离

val vset = scala.collection.mutable.Set(1,2,3,4,5);

// var verticesArr = VertexRDD[10]

for(sid <- vset){

val sourceId = sid

val initialGraph = graph.mapVertices((id, _) => if (id == sourceId) 0.0 else Double.PositiveInfinity)

//begin

val sssp = initialGraph.pregel(Double.PositiveInfinity)(

// Vertex Program,节点处理消息的函数,dist为原节点属性(Double),newDist为消息类型(Double)

(id, dist, newDist) => math.min(dist, newDist),

// Send Message,发送消息函数,返回结果为(目标节点id,消息(即最短距离))

triplet => {

if (triplet.srcAttr + triplet.attr < triplet.dstAttr) {

Iterator((triplet.dstId, triplet.srcAttr + triplet.attr))

} else {

Iterator.empty

}

},

//Merge Message,对消息进行合并的操作,类似于Hadoop中的combiner

本文介绍如何使用Spark处理图数据,通过边数据(edge.txt)和顶点数据(vertex.txt),计算图中每个顶点之间的最短路径。数据包括各个顶点的连接关系及其权重,例如:1到2的权重为2,1到3的权重为5等。

本文介绍如何使用Spark处理图数据,通过边数据(edge.txt)和顶点数据(vertex.txt),计算图中每个顶点之间的最短路径。数据包括各个顶点的连接关系及其权重,例如:1到2的权重为2,1到3的权重为5等。

855

855

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?