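Neural network code built on machine learning libraries is already very compact, but to the user it is a black box. How does it actually work inside? The principle is simple, yet writing it from scratch is not something you can knock out quickly. Fortunately, an expert has open-sourced an implementation, and a single class is enough to make everything clear.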

backprop.h

//
// Fully connected multilayered feed-forward
// artificial neural network using the
// backpropagation algorithm for training.
//

#ifndef backprop_h

#define backprop_h

#include <assert.h>

#include <iostream>

#include <stdio.h>

#include <math.h>

using namespace std;

// Backpropagation (BP) network

class CBackProp{

// output of each neuron

double **out;

// delta error value for each neuron

double **delta;

// vector of weights for each neuron

double ***weight;

// no of layers in net

// including input layer

int numl;

// vector of numl elements for size

// of each layer

int *lsize;

// learning rate

double beta;

// momentum parameter

double alpha;

// storage for the weight change made
// in the previous training step

double ***prevDwt;

// squashing (activation) function

double sigmoid(double in);

public:

~CBackProp();

// initializes and allocates memory

CBackProp(int nl,int *sz,double b,double a);

// backpropagates error for one set of inputs

void bpgt(double *in,double *tgt);

// feeds activations forward for one set of inputs

void ffwd(double *in);

// returns half the sum of squared errors of the output layer
// (named mse, but not averaged over the outputs)

double mse(double *tgt) const;

// returns the i'th output of the net

double Out(int i) const;

};

#endif
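To make the `weight[i][j][k]` layout concrete: for each neuron `j` in layer `i >= 1`, the constructor in backprop.cpp allocates one weight per neuron in layer `i-1` plus one extra slot for the bias. The following is a minimal sketch, not part of the original source, that counts the trainable parameters for a given list of layer sizes. The function name `countParameters` is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper (not in the original source): counts the weights
// and biases of a fully connected net whose layer sizes are given in
// lsize, with lsize[0] being the input layer.
std::size_t countParameters(const std::vector<int>& lsize)
{
    assert(!lsize.empty());
    std::size_t total = 0;
    // Layer 0 only holds the inputs, so it has no weights of its own.
    for (std::size_t i = 1; i < lsize.size(); ++i) {
        // Each neuron in layer i has lsize[i-1] weights plus one bias.
        total += static_cast<std::size_t>(lsize[i]) * (lsize[i - 1] + 1);
    }
    return total;
}
```

For the layer sizes `{3,3,2,1}` used in main.cpp, this gives 3*4 + 2*4 + 1*3 = 23 parameters.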

backprop.cpp

#include "backprop.h"

#include <time.h>

#include <stdlib.h>

// initializes and allocates memory on heap

CBackProp::CBackProp(int nl,int *sz,double b,double a)

: beta(b)

, alpha(a)

{

int i=0;

// set number of layers and their sizes

numl = nl;

lsize = new int[numl];

for(i=0;i<numl;i++)

{

lsize[i] = sz[i];

}

// allocate memory for output of each neuron

out = new double*[numl];

for( i=0;i<numl;i++)

{

out[i] = new double[lsize[i]];

}

// allocate memory for delta

delta = new double*[numl];

for(i=1;i<numl;i++)

{

delta[i] = new double[lsize[i]];

}

// allocate memory for weights

weight = new double**[numl];

for(i=1;i<numl;i++)

{

weight[i] = new double*[lsize[i]];

}

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

weight[i][j] = new double[lsize[i-1]+1];

}

}

// allocate memory for previous weights

prevDwt = new double**[numl];

for(i=1;i<numl;i++)

{

prevDwt[i] = new double*[lsize[i]];

}

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

prevDwt[i][j] = new double[lsize[i-1]+1];

}

}

// seed and assign random weights

srand((unsigned)(time(NULL)));

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

for(int k=0;k<lsize[i-1]+1;k++)

{

weight[i][j][k] = (double)(rand())/(RAND_MAX/2.0) - 1; // uniform in [-1, 1]

}

}

}

// initialize previous weights to 0 for first iteration

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

for(int k=0;k<lsize[i-1]+1;k++)

{

prevDwt[i][j][k] = (double)0.0;

}

}

}

// Note that the following variables are unused:
//
// delta[0]
// weight[0]
// prevDwt[0]
//
// This is intentional, to keep the layer numbering consistent.
// For a net with n layers, the input layer is the 0th layer, the
// first hidden layer is the 1st layer, and the (n-1)th layer is the
// output layer. The 0th layer just stores the inputs, so there are
// no delta or weight values corresponding to it.

}

CBackProp::~CBackProp()

{

int i=0;

// free out

for(i=0;i<numl;i++)

{

delete[] out[i];

}

delete[] out;

// free delta

for(i=1;i<numl;i++)

{

delete[] delta[i];

}

delete[] delta;

// free weight

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

delete[] weight[i][j];

}

}

for(i=1;i<numl;i++)

{

delete[] weight[i];

}

delete[] weight;

// free prevDwt

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

delete[] prevDwt[i][j];

}

}

for(i=1;i<numl;i++)

{

delete[] prevDwt[i];

}

delete[] prevDwt;

// free layer info

delete[] lsize;

}

// sigmoid activation function

double CBackProp::sigmoid(double in)

{

return (double)(1/(1+exp(-in)));

}

// half the sum of squared errors over the output layer

double CBackProp::mse(double *tgt) const

{

double mse=0;

for(int i=0;i<lsize[numl-1];i++)

{

mse += (tgt[i]-out[numl-1][i])*(tgt[i]-out[numl-1][i]);

}

return mse/2;

}

// returns the i'th output of the net

double CBackProp::Out(int i) const

{

return out[numl-1][i];

}

// feed one set of inputs forward through the net

void CBackProp::ffwd(double *in)

{

double sum;

int i=0;

// assign content to input layer

for(i=0;i<lsize[0];i++)

{

out[0][i]=in[i]; // out[i][j] is the output of the j'th neuron in the i'th layer

}

// assign output (activation) value
// to each neuron using the sigmoid function

for(i=1;i<numl;i++)

{// For each layer

for(int j=0;j<lsize[i];j++)

{// For each neuron in current layer

sum = 0.0;

for(int k=0;k<lsize[i-1];k++)

{// For input from each neuron in the preceding layer

sum += out[i-1][k]*weight[i][j][k]; // Apply weight to inputs and add to sum

}

sum += weight[i][j][lsize[i-1]]; // Apply bias

out[i][j] = sigmoid(sum); // Apply sigmoid function

}

}

}

// backpropagate errors from the output
// layer back to the first hidden layer

void CBackProp::bpgt(double *in,double *tgt)

{

double sum;

int i=0;

// update output values for each neuron

ffwd(in);

// find delta for output layer

for(i=0;i<lsize[numl-1];i++)

{

delta[numl-1][i] = out[numl-1][i]*

(1-out[numl-1][i])*(tgt[i]-out[numl-1][i]);

}

// find delta for hidden layers

for(i=numl-2;i>0;i--)

{

for(int j=0;j<lsize[i];j++)

{

sum = 0.0;

for(int k=0;k<lsize[i+1];k++)

{

sum += delta[i+1][k]*weight[i+1][k][j];

}

delta[i][j] = out[i][j]*(1-out[i][j])*sum;

}

}

// apply momentum ( does nothing if alpha=0 )

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

for(int k=0;k<lsize[i-1];k++)

{

weight[i][j][k] += alpha*prevDwt[i][j][k];

}

weight[i][j][lsize[i-1]] += alpha*prevDwt[i][j][lsize[i-1]];

}

}

// adjust weights using steepest descent

for(i=1;i<numl;i++)

{

for(int j=0;j<lsize[i];j++)

{

for(int k=0;k<lsize[i-1];k++)

{

prevDwt[i][j][k] = beta*delta[i][j]*out[i-1][k];

weight[i][j][k] += prevDwt[i][j][k];

}

prevDwt[i][j][lsize[i-1]] = beta*delta[i][j];

weight[i][j][lsize[i-1]] += prevDwt[i][j][lsize[i-1]];

}

}

}
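The output-layer delta in `bpgt`, `out*(1-out)*(tgt-out)`, is the negative derivative of the per-sample error `E = (tgt-out)^2 / 2` (the quantity `mse` returns) with respect to the neuron's weighted input `sum`. It follows from the sigmoid identity `s'(x) = s(x)(1-s(x))`. The sketch below, which is not part of the original source, checks this numerically for a single neuron. The names `halfSquaredError`, `analyticDelta`, and `numericDelta` are hypothetical.

```cpp
#include <cassert>
#include <cmath>

// Same squashing function as CBackProp::sigmoid.
double sigmoid(double x)
{
    return 1.0 / (1.0 + std::exp(-x));
}

// Per-sample error for one output neuron: E = (tgt - out)^2 / 2,
// where out = sigmoid(sum).
double halfSquaredError(double sum, double tgt)
{
    double out = sigmoid(sum);
    return 0.5 * (tgt - out) * (tgt - out);
}

// Delta as computed in bpgt for an output neuron.
double analyticDelta(double sum, double tgt)
{
    double out = sigmoid(sum);
    return out * (1.0 - out) * (tgt - out);
}

// Central-difference estimate of -dE/dsum (hypothetical helper).
double numericDelta(double sum, double tgt, double h)
{
    assert(h > 0.0);
    return -(halfSquaredError(sum + h, tgt) - halfSquaredError(sum - h, tgt)) / (2.0 * h);
}
```

Because delta is the negative gradient, the update `weight += beta*delta*out[i-1][k]` moves each weight downhill on `E`.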

main.cpp

#include "backprop.h"

// NeuralNet.cpp : Defines the entry point for the console application.

//

// XOR example

int main(int argc, char* argv[])

{

// prepare XOR training data; the last column is the target output

double data[][4] = {

0, 0, 0, 0,

0, 0, 1, 1,

0, 1, 0, 1,

0, 1, 1, 0,

1, 0, 0, 1,

1, 0, 1, 0,

1, 1, 0, 0,

1, 1, 1, 1 };

// prepare test data

double testData[][3]={

0, 0, 0, //0

0, 0, 1, //1

0, 1, 0, //1

0, 1, 1, //0

1, 0, 0, //1

1, 0, 1, //0

1, 1, 0, //0

1, 1, 1 };//1

// defining a net with 4 layers having 3, 3, 2, and 1 neurons respectively;
// the first layer is the input layer, i.e. simply a holder for the input parameters,
// and has to be the same size as the number of input parameters, in our example 3

int numLayers = 4, lSz[4] = {3,3,2,1}; // number of layers, neurons per layer

// Learning rate - beta
// momentum - alpha
// Threshold - Thresh (target mse value; training stops once it is achieved)

double beta = 0.3, alpha = 0.1, Thresh = 0.00001;

// maximum no of iterations during training

long num_iter = 2000000;

// Creating the net

CBackProp *bp = new CBackProp(numLayers, lSz, beta, alpha);

long i=0;

cout<< endl << "Now training the network...." << endl;

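for (i=0; i<num_iter; i++) // training iterations, one sample each

{

bp->bpgt(data[i%8], &data[i%8][3]); // backpropagate and adjust the weights

if( bp->mse(&data[i%8][3]) < Thresh ) // error on the current sample is below the threshold, stop training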

{

cout << endl << "Network Trained. Threshold value achieved in " << i << " iterations." << endl;

cout << "MSE: " << bp->mse(&data[i%8][3])

<< endl << endl;

break;

}

if ( i%(num_iter/10) == 0 ) // report progress every num_iter/10 iterations

{

cout<< endl << "MSE: " << bp->mse(&data[i%8][3])

<< "... Training..." << endl;

}

}

if ( i == num_iter )

{

cout << endl << i << " iterations completed..."

<< "MSE: " << bp->mse(&data[(i-1)%8][3]) << endl;

}

cout<< "Now using the trained network to make predictions on test data...." << endl << endl;

for ( i = 0 ; i < 8 ; i++ )

{

bp->ffwd(testData[i]); // predict on the test data

cout << testData[i][0]<< " " << testData[i][1]<< " " << testData[i][2]<< " " << bp->Out(0) << endl;

}

delete bp; // release the network allocated with new above

return 0;

}
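Two details of main.cpp above are worth making explicit. The "XOR" target in the last column of `data` is the parity of the three input bits. The stopping test looks only at the sample that was just presented, not at all eight patterns. The sketch below, which is not part of the original program, regenerates the truth table and measures the worst per-pattern error for any predictor. `parity3`, `worstError`, and the `Predictor` callback type are hypothetical names, and the callback stands in for a call to `ffwd` followed by `Out(0)`.

```cpp
#include <cassert>
#include <functional>

// Hypothetical helper: XOR of three bits, i.e. 1 when an odd number are set.
int parity3(int a, int b, int c)
{
    assert((a == 0 || a == 1) && (b == 0 || b == 1) && (c == 0 || c == 1));
    return a ^ b ^ c;
}

// Hypothetical callback type: maps three inputs to the net's single output.
typedef std::function<double(const double*)> Predictor;

// Largest per-pattern error (tgt - out)^2 / 2 over all eight input patterns.
double worstError(const Predictor& predict)
{
    double worst = 0.0;
    for (int p = 0; p < 8; ++p) {
        // Unpack the pattern index into three bits, most significant first.
        int a = (p >> 2) & 1, b = (p >> 1) & 1, c = p & 1;
        double in[3] = { double(a), double(b), double(c) };
        double tgt = parity3(a, b, c);
        double out = predict(in);
        double err = 0.5 * (tgt - out) * (tgt - out);
        if (err > worst) worst = err;
    }
    return worst;
}
```

With the class from this article, one option would be a lambda that copies the inputs into a local array, calls `bp->ffwd(...)`, and returns `bp->Out(0)`. The copy is needed because `ffwd` takes a non-const `double*`. Comparing `worstError` against `Thresh` would then test all eight patterns instead of only the most recent one.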

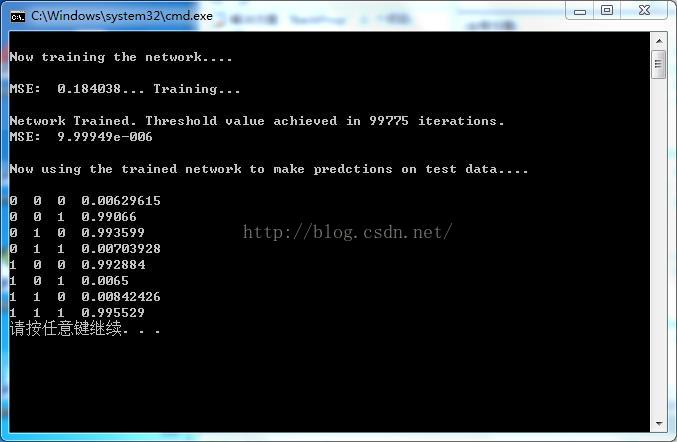

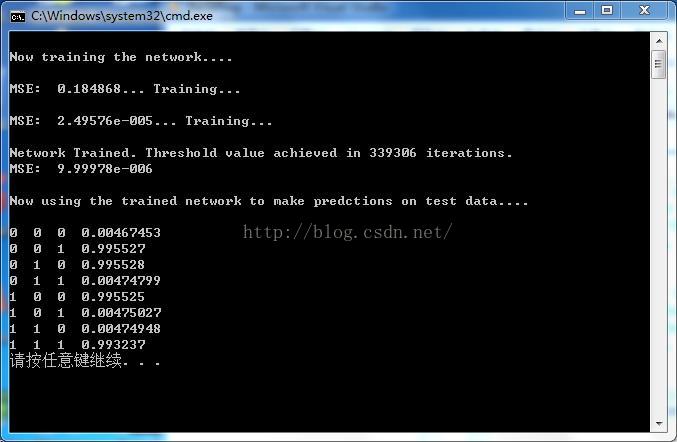

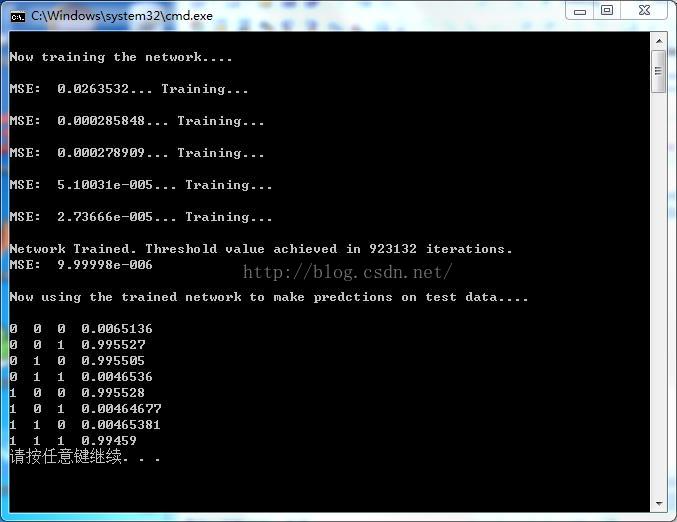

The results are good:

The back propagation algorithm:

https://www.zhihu.com/question/27239198#answer-31377317
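For reference, the update rules that `bpgt` implements can be written out as follows. This is a summary read off the code above, with notation chosen here for illustration: $o^{(l)}_j$ is `out[l][j]`, $w^{(l)}_{jk}$ is `weight[l][j][k]`, $t_j$ is `tgt[j]`, $\beta$ is the learning rate, $\alpha$ is the momentum, $L$ is the output layer `numl-1`, and $n$ counts calls to `bpgt`.

$$
\begin{aligned}
\delta^{(L)}_j &= o^{(L)}_j\,\bigl(1-o^{(L)}_j\bigr)\,\bigl(t_j - o^{(L)}_j\bigr) \\
\delta^{(l)}_j &= o^{(l)}_j\,\bigl(1-o^{(l)}_j\bigr)\sum_k \delta^{(l+1)}_k\, w^{(l+1)}_{kj}, \qquad 0 < l < L \\
g^{(l)}_{jk}(n) &= \beta\,\delta^{(l)}_j\,o^{(l-1)}_k \\
w^{(l)}_{jk} &\leftarrow w^{(l)}_{jk} + \alpha\, g^{(l)}_{jk}(n-1) + g^{(l)}_{jk}(n)
\end{aligned}
$$

The bias is handled as one more weight whose input is fixed at 1. `prevDwt` stores only the gradient term $g$, so the momentum term reuses the previous gradient step rather than the full previous weight change. This differs slightly from the usual textbook momentum rule.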
