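Introduction

This article describes how to write the data crawled from "Elasticsearch: The Definitive Guide" into Elasticsearch (ES).

The complete code is on GitHub; the link is given near the end of this article.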

Maven dependency

The data is written with the Java High Level REST Client. Add the following Maven dependency:

    <dependency>
        <groupId>org.elasticsearch.client</groupId>
        <artifactId>elasticsearch-rest-high-level-client</artifactId>
        <version>6.8.3</version>
    </dependency>

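Creating the client

    import org.apache.http.HttpHost;
    import org.apache.http.auth.AuthScope;
    import org.apache.http.auth.UsernamePasswordCredentials;
    import org.apache.http.client.CredentialsProvider;
    import org.apache.http.impl.client.BasicCredentialsProvider;
    import org.elasticsearch.client.RestClient;
    import org.elasticsearch.client.RestClientBuilder;
    import org.elasticsearch.client.RestHighLevelClient;

    /**
     * Get the client
     */
    private static RestHighLevelClient getClient() {
        final CredentialsProvider credentialsProvider = new BasicCredentialsProvider();
        // Set the user name and password; "search" is an account created specifically for accessing es_doc
        credentialsProvider.setCredentials(AuthScope.ANY,
                new UsernamePasswordCredentials("search", "123456"));
        // Set the ES host, port, and authentication method
        RestClientBuilder builder = RestClient.builder(
                new HttpHost("192.168.1.14", 9200))
                .setHttpClientConfigCallback(httpClientBuilder -> httpClientBuilder
                        .setDefaultCredentialsProvider(credentialsProvider));
        return new RestHighLevelClient(builder);
    }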
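
For context, assuming the cluster is secured with HTTP Basic authentication, the credentials registered in getClient() end up in an `Authorization` header built from the user name and password. The sketch below reproduces that header with the JDK only. `BasicAuthSketch` and `basicAuthHeader` are hypothetical names used for illustration and are not part of the original code.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper, not part of the original project: shows what an
// HTTP Basic "Authorization" header looks like for a user/password pair.
class BasicAuthSketch {

    // Builds "Basic " + base64("user:password"), the Basic scheme of RFC 7617.
    static String basicAuthHeader(String user, String password) {
        String pair = user + ":" + password;
        String encoded = Base64.getEncoder()
                .encodeToString(pair.getBytes(StandardCharsets.UTF_8));
        return "Basic " + encoded;
    }

    public static void main(String[] args) {
        // Same demo credentials as in getClient().
        System.out.println("Authorization: " + basicAuthHeader("search", "123456"));
    }
}
```

Base64 is an encoding, not encryption, so anyone who can read the request can recover the password; outside a local test setup the connection would normally use HTTPS.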

Building the write requests

    /**
     * Index name
     */
    private static final String INDEX_NAME = "es_doc";

    /**
     * Document type; always "_doc" (fixed convention)
     */
    private static final String DOC_TYPE = "_doc";

    String dir = "D:/es-doc/权威指南";
    // Collect the crawled files
    @SuppressWarnings("unchecked")
    Collection<File> collection = FileUtils.listFiles(new File(dir), TrueFileFilter.TRUE, TrueFileFilter.TRUE);
    // Requests waiting to be written to ES
    List<IndexRequest> requests = new ArrayList<>();
    collection.forEach(file -> {
        // try-with-resources closes the stream; the original left it open.
        // Assumption: the crawled JSON files are UTF-8 encoded (adjust the charset if not).
        try (InputStream in = new FileInputStream(file)) {
            String content = IOUtils.toString(in, StandardCharsets.UTF_8);
            EsPage esPage = JSON.parseObject(content, EsPage.class);
            log.debug("file:{},esPage:{}", file.getPath(), esPage.getTitle());
            // Add the page to the pending list only when both title and content are non-blank
            if (!StringUtils.isAnyBlank(esPage.getContent(), esPage.getTitle())) {
                // Set the file name and the write time
                esPage.setFileName(file.getName());
                esPage.setToEsDate(EsDateUtil.getEsDateStringNow());
                // The file name is used as the document ID
                IndexRequest indexRequest = new IndexRequest(INDEX_NAME, DOC_TYPE, esPage.getFileName())
                        .source(JSON.toJSONString(esPage), XContentType.JSON);
                requests.add(indexRequest);
            }
        } catch (IOException e) {
            log.error("Failed to parse file into an EsPage object", e);
        }
    });
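
The filter in the loop above keeps a page only when both its title and its content contain non-whitespace text. Here is a JDK-only sketch of that rule, assuming it should behave like commons-lang3 `StringUtils.isAnyBlank` for these two arguments. `PageFilterSketch`, `isBlank`, and `isIndexable` are hypothetical names, not taken from the original code.

```java
// Hypothetical illustration of the "title and content must both be
// non-blank" rule; not part of the original project.
class PageFilterSketch {

    // True when the string is null, empty, or consists of whitespace only.
    static boolean isBlank(String s) {
        return s == null || s.chars().allMatch(Character::isWhitespace);
    }

    // A page qualifies for indexing only if neither field is blank.
    static boolean isIndexable(String title, String content) {
        return !isBlank(title) && !isBlank(content);
    }

    public static void main(String[] args) {
        System.out.println(isIndexable("Getting started", "Some text")); // true
        System.out.println(isIndexable("Getting started", "   "));       // false
        System.out.println(isIndexable(null, "Some text"));              // false
    }
}
```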

Write methods

Two ways of writing are shown:

- Bulk batch write

- For-loop write

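Bulk batch write

    import java.util.List;
    import org.elasticsearch.action.bulk.BulkRequest;
    import org.elasticsearch.action.bulk.BulkResponse;
    import org.elasticsearch.action.index.IndexRequest;
    import org.elasticsearch.client.RequestOptions;
    import org.elasticsearch.client.RestHighLevelClient;

    /**
     * Write in a batch using bulk
     *
     * @param requests list of IndexRequest objects to write
     */
    private static void writeBulkBatch(List<IndexRequest> requests) {
        PerformanceMonitor.begin("writeBulkBatch", false);
        RestHighLevelClient client = null;
        try {
            client = getClient();
            // Collect every IndexRequest into a single bulk request
            BulkRequest bulkRequest = new BulkRequest();
            requests.forEach(bulkRequest::add);
            BulkResponse responses = client.bulk(bulkRequest, RequestOptions.DEFAULT);
            log.info("items written: {}, has failures: {}, failure message: {}", responses.getItems().length, responses.hasFailures(), responses.buildFailureMessage());
        } catch (Exception e) {
            log.error("Exception in writeBulkBatch", e);
        } finally {
            PerformanceMonitor.end();
            closeClient(client);
        }
    }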
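
writeBulkBatch sends every request in a single bulk call, which is fine for the few hundred documents in this article. For a much larger list, one common approach is to split it into fixed-size chunks and send one bulk request per chunk. The sketch below shows only the chunking step, using the JDK alone. `BatchSketch`, `partition`, and the chunk size of 100 are illustrative assumptions, not part of the original code.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of the original project.
class BatchSketch {

    // Splits a list into consecutive chunks of at most batchSize elements.
    static <T> List<List<T>> partition(List<T> items, int batchSize) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        List<List<T>> batches = new ArrayList<>();
        for (int from = 0; from < items.size(); from += batchSize) {
            int to = Math.min(from + batchSize, items.size());
            // Copy the view so each batch is independent of the source list.
            batches.add(new ArrayList<>(items.subList(from, to)));
        }
        return batches;
    }

    public static void main(String[] args) {
        List<Integer> ids = new ArrayList<>();
        for (int i = 0; i < 335; i++) {
            ids.add(i);
        }
        List<List<Integer>> batches = partition(ids, 100);
        // 335 items in chunks of 100 give 4 batches, the last holding 35 items.
        System.out.println(batches.size() + " batches, last size "
                + batches.get(batches.size() - 1).size());
    }
}
```

Each chunk would then be added to its own BulkRequest and sent with client.bulk(...), in the same way as in writeBulkBatch.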

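For-loop write

    import java.util.List;
    import org.elasticsearch.action.index.IndexRequest;
    import org.elasticsearch.action.index.IndexResponse;
    import org.elasticsearch.client.RequestOptions;
    import org.elasticsearch.client.RestHighLevelClient;

    /**
     * Write in a batch using a for loop
     *
     * @param requests list of IndexRequest objects to write
     */
    public static void writeForBatch(List<IndexRequest> requests) {
        PerformanceMonitor.begin("writeForBatch", false);
        RestHighLevelClient client = null;
        try {
            client = getClient();
            // One index call (one HTTP request) per document
            for (IndexRequest request : requests) {
                IndexResponse indexResponse = client.index(request, RequestOptions.DEFAULT);
                log.info("indexResponse:{}", indexResponse);
            }
        } catch (Exception e) {
            // The original message named writeBulkBatch here, a copy-paste slip
            log.error("Exception in writeForBatch", e);
        } finally {
            PerformanceMonitor.end();
            closeClient(client);
        }
    }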
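
The practical difference between the two methods is the number of requests sent: the bulk version carries all documents in one request, while the loop sends one request per document. The toy model below only counts calls against a fake in-memory sender. `RoundTripSketch` and `CountingSender` are hypothetical and involve no Elasticsearch client, so the sketch says nothing about real timings.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model, not part of the original project: counts requests only.
class RoundTripSketch {

    // Fake sender that records how many requests and documents it received.
    static class CountingSender {
        int requests = 0;
        int documents = 0;

        // Stands in for client.index(...): one document per request.
        void sendOne(String doc) {
            requests++;
            documents++;
        }

        // Stands in for client.bulk(...): many documents in one request.
        void sendBulk(List<String> docs) {
            requests++;
            documents += docs.size();
        }
    }

    // Mirrors writeForBatch: one call per document.
    static CountingSender writeWithLoop(List<String> docs) {
        CountingSender sender = new CountingSender();
        for (String doc : docs) {
            sender.sendOne(doc);
        }
        return sender;
    }

    // Mirrors writeBulkBatch: a single call carrying every document.
    static CountingSender writeWithBulk(List<String> docs) {
        CountingSender sender = new CountingSender();
        sender.sendBulk(docs);
        return sender;
    }

    public static void main(String[] args) {
        List<String> docs = new ArrayList<>();
        for (int i = 0; i < 335; i++) {
            docs.add("page-" + i);
        }
        System.out.println("loop requests: " + writeWithLoop(docs).requests); // 335
        System.out.println("bulk requests: " + writeWithBulk(docs).requests); // 1
    }
}
```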

Complete code

https://github.com/huanqingdong/search-engine-demo/blob/master/crawling-data/src/main/java/app/WriteDataToEs.java

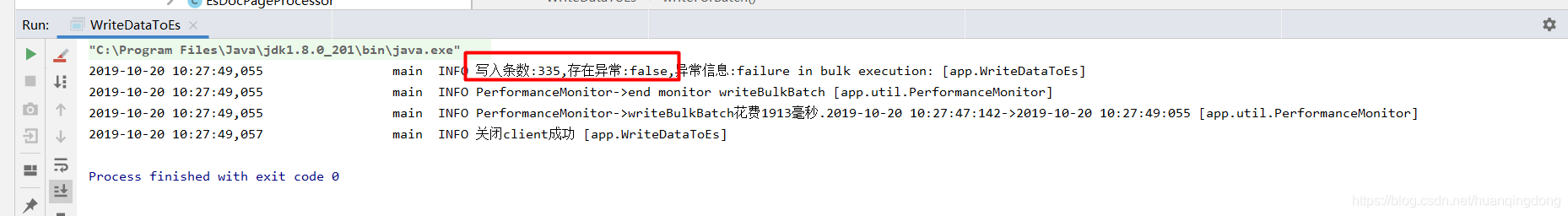

Screenshots of the write results

Writing with the bulk method

A total of 335 documents were written.

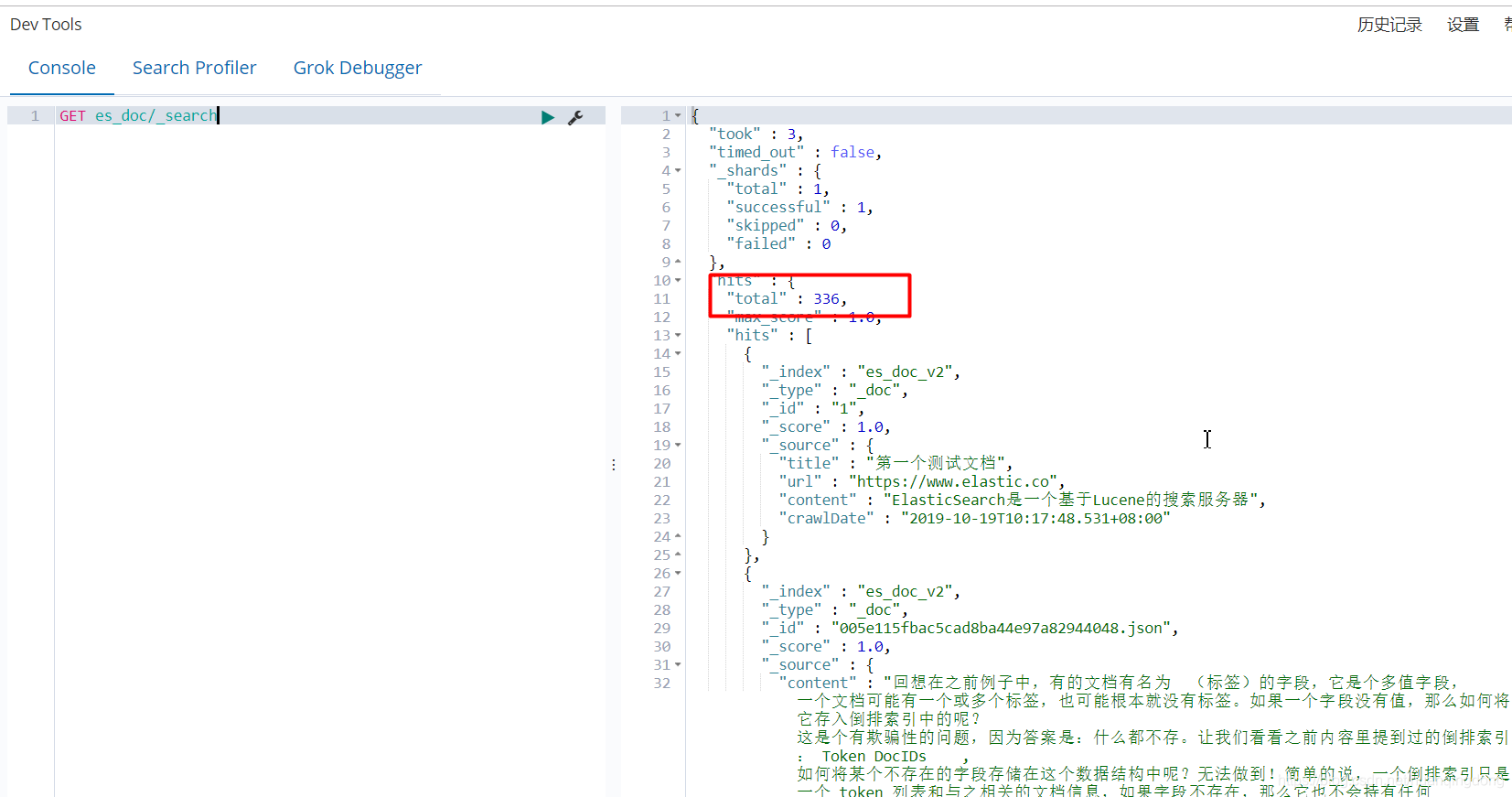

Querying the data in Kibana

The query returns 336 documents: the 335 written by bulk plus 1 test document. The write is now complete.
