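1. Overview

Redis provides a range of commonly used data structures, including string, hash, list, set, sorted set, and bitmap.

Flink does not ship an official Redis connector, but the Apache Bahir project provides a Flink-Redis connector.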

2. Usage

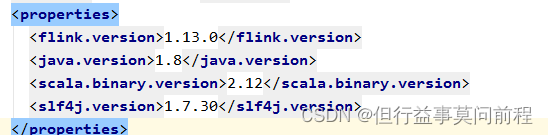

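2.1 Adding the dependency

The Flink version on the server conflicts with the `flink-streaming-java` dependency that the connector pulls in, so that dependency is excluded with `<exclusions>`.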

```xml
<dependency>
    <groupId>org.apache.bahir</groupId>
    <artifactId>flink-connector-redis_2.11</artifactId>
    <version>1.0</version>
    <exclusions>
        <exclusion>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_2.11</artifactId>
        </exclusion>
    </exclusions>
</dependency>
```

2.2 RedisSink

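The connector provides a `RedisSink`, which extends the abstract class `RichSinkFunction`. The `RedisSink` constructor takes two arguments. The first is a `FlinkJedisConfigBase`, the Jedis connection configuration. The second is a `RedisMapper`, an interface that describes how each record is converted into the command, key, and value written to Redis.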
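To make the mapper's role concrete, here is a minimal in-memory sketch of what happens when every record is written with `HSET` into one hash. It does not use the connector or a real Redis server: `HsetSketch` and its nested `HashMapper` interface are hypothetical stand-ins for `RedisSink` and `RedisMapper`, and the sample users and URLs are made up.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// In-memory model of repeated HSET calls; not the connector's real classes.
class HsetSketch {

    // Hypothetical stand-in for RedisMapper when the command is HSET.
    interface HashMapper<T> {
        String hashName();         // the additional key, e.g. "events"
        String fieldOf(T record);  // role of getKeyFromData
        String valueOf(T record);  // role of getValueFromData
    }

    // hash name -> (field -> value)
    private final Map<String, Map<String, String>> store = new LinkedHashMap<>();

    // Equivalent of: HSET <hashName> <field> <value>
    <T> void write(T record, HashMapper<T> mapper) {
        store.computeIfAbsent(mapper.hashName(), k -> new LinkedHashMap<>())
             .put(mapper.fieldOf(record), mapper.valueOf(record));
    }

    // Returns the current fields of one hash (empty if it does not exist).
    Map<String, String> hash(String name) {
        return store.getOrDefault(name, new LinkedHashMap<>());
    }

    public static void main(String[] args) {
        HashMapper<String[]> mapper = new HashMapper<String[]>() {
            public String hashName() { return "events"; }
            public String fieldOf(String[] r) { return r[0]; }
            public String valueOf(String[] r) { return r[1]; }
        };
        HsetSketch redis = new HsetSketch();
        redis.write(new String[] {"Mary", "./home"}, mapper);
        redis.write(new String[] {"Bob", "./cart"}, mapper);
        // Same field again: the earlier value for Mary is overwritten.
        redis.write(new String[] {"Mary", "./prod?id=1"}, mapper);
        System.out.println(redis.hash("events"));
    }
}
```

The point of the sketch is the overwrite behavior: with the user as the hash field, the hash keeps only the most recent URL per user rather than a history of clicks.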

2.3 Code

The POJO class

```java
import java.sql.Timestamp;

// Click event POJO: public fields plus a no-arg constructor,
// so Flink can treat it as a POJO type.
public class Event {
    public String user;
    public String url;
    public long timestamp;

    public Event() {
    }

    public Event(String user, String url, long timestamp) {
        this.user = user;
        this.url = url;
        this.timestamp = timestamp;
    }

    public String getUser() {
        return user;
    }

    public void setUser(String user) {
        this.user = user;
    }

    public String getUrl() {
        return url;
    }

    public void setUrl(String url) {
        this.url = url;
    }

    public long getTimestamp() {
        return timestamp;
    }

    public void setTimestamp(long timestamp) {
        this.timestamp = timestamp;
    }

    @Override
    public String toString() {
        // Render the epoch-millisecond timestamp in a readable form
        return "Event{" +
                "user='" + user + '\'' +
                ", url='" + url + '\'' +
                ", timestamp=" + new Timestamp(timestamp) +
                '}';
    }
}
```

(1) Read data from Kafka

(2) Transform it

(3) Write it to Redis

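```java
import java.util.Properties;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.serialization.SimpleStringSchema;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;
import org.apache.flink.streaming.connectors.redis.RedisSink;
import org.apache.flink.streaming.connectors.redis.common.config.FlinkJedisPoolConfig;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommand;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommandDescription;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisMapper;
import org.apache.flink.util.Collector;

public class SinkToRedis {
    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env =
                StreamExecutionEnvironment.getExecutionEnvironment();

        // (1) Read data from Kafka
        Properties properties = new Properties();
        properties.setProperty("bootstrap.servers",
                "192.168.42.102:9092,192.168.42.103:9092,192.168.42.104:9092");
        properties.setProperty("group.id", "consumer-group");
        properties.setProperty("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        properties.setProperty("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        properties.setProperty("auto.offset.reset", "latest");

        DataStreamSource<String> stream = env.addSource(
                new FlinkKafkaConsumer<String>("clicks", new SimpleStringSchema(), properties));

        // (2) Transform: parse each "user,url,timestamp" line into an Event
        SingleOutputStreamOperator<Event> flatMap =
                stream.flatMap(new FlatMapFunction<String, Event>() {
                    @Override
                    public void flatMap(String s, Collector<Event> collector) throws Exception {
                        String[] split = s.split(",");
                        collector.collect(
                                new Event(split[0], split[1], Long.parseLong(split[2])));
                    }
                });

        // (3) Write to Redis
        FlinkJedisPoolConfig config = new FlinkJedisPoolConfig.Builder()
                .setHost("192.168.0.23")
                .setPassword("123456")
                .setDatabase(15)
                .build();

        flatMap.addSink(new RedisSink<>(config, new RedisMapper<Event>() {
            @Override
            public RedisCommandDescription getCommandDescription() {
                // HSET into the hash named "events"
                return new RedisCommandDescription(RedisCommand.HSET, "events");
            }

            @Override
            public String getKeyFromData(Event data) {
                // Hash field: the user
                return data.user;
            }

            @Override
            public String getValueFromData(Event data) {
                // Hash value: the URL
                return data.url;
            }
        }));

        env.execute();
    }
}
```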
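The `flatMap` step above assumes every Kafka record is a well-formed `user,url,timestamp` line; a record with a missing field or a non-numeric timestamp would throw and fail the job. Below is a minimal sketch of a more defensive parser that skips such records instead. `ClickLineParser` and its nested `Click` holder are hypothetical names used only for illustration, and the sample lines are made up.

```java
import java.util.Optional;

// Defensive parsing of "user,url,timestamp" lines; malformed lines yield Optional.empty().
class ClickLineParser {

    // Minimal value holder standing in for the Event POJO.
    static final class Click {
        final String user;
        final String url;
        final long timestamp;

        Click(String user, String url, long timestamp) {
            this.user = user;
            this.url = url;
            this.timestamp = timestamp;
        }
    }

    static Optional<Click> parse(String line) {
        if (line == null) {
            return Optional.empty();
        }
        String[] parts = line.split(",");
        // Exactly three fields are expected; URLs containing commas are rejected too.
        if (parts.length != 3) {
            return Optional.empty();
        }
        try {
            long ts = Long.parseLong(parts[2].trim());
            return Optional.of(new Click(parts[0].trim(), parts[1].trim(), ts));
        } catch (NumberFormatException e) {
            // Timestamp is not a valid long: skip the record.
            return Optional.empty();
        }
    }

    public static void main(String[] args) {
        System.out.println(parse("Mary,./home,1000").isPresent());
        System.out.println(parse("Mary,./home").isPresent());
        System.out.println(parse("Mary,./home,abc").isPresent());
    }
}
```

Inside a `FlatMapFunction`, the same idea would amount to calling `collector.collect(...)` only when the parse succeeds, so bad input is dropped rather than crashing the pipeline.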

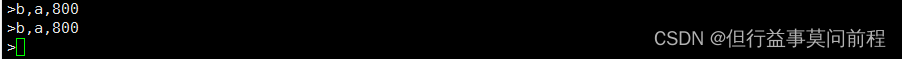

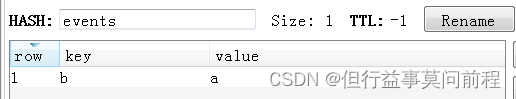

Result: test records are sent to the `clicks` topic with the Kafka console producer:

```shell
./kafka-console-producer.sh --broker-list 192.168.42.102:9092,192.168.42.103:9092,192.168.42.104:9092 --topic clicks
```
